This page shows you how to deploy a simple example gRPC service with the Extensible Service Proxy (ESP) in a Docker container in Compute Engine.

This page uses the Python version of the bookstore-grpc sample. See the What's next section for gRPC samples in other languages.

For an overview of Cloud Endpoints, see About Endpoints and Endpoints architecture.

Objectives

Use the following high-level task list as you work through the tutorial. All tasks are required to successfully send requests to the API.

- Set up a Google Cloud project, and download required software. See Before you begin.

- Create a Compute Engine VM instance. See Creating a Compute Engine instance.

- Copy and configure files from the bookstore-grpc sample. See Configuring Endpoints.

- Deploy the Endpoints configuration to create an Endpoints service. See Deploying the Endpoints configuration.

- Deploy the API and ESP on the Compute Engine VM. See Deploying the API backend.

- Send a request to the API. See Sending a request to the API.

- Avoid incurring charges to your Google Cloud account. See Clean up.

Costs

In this document, you use the following billable components of Google Cloud:

To generate a cost estimate based on your projected usage, use the pricing calculator.

When you finish the tasks that are described in this document, you can avoid continued billing by deleting the resources that you created. For more information, see Clean up.

Before you begin

- Sign in to your Google Cloud account. If you're new to Google Cloud, create an account to evaluate how our products perform in real-world scenarios. New customers also get $300 in free credits to run, test, and deploy workloads.

- In the Google Cloud console, on the project selector page, select or create a Google Cloud project.

Roles required to select or create a project

- Select a project: Selecting a project doesn't require a specific IAM role. You can select any project that you've been granted a role on.

- Create a project: To create a project, you need the Project Creator role (roles/resourcemanager.projectCreator), which contains the resourcemanager.projects.create permission. Learn how to grant roles.

- Verify that billing is enabled for your Google Cloud project.

- Make a note of the project ID because it's needed later.

- Install and initialize the Google Cloud CLI.

- Update the gcloud CLI and install the Endpoints components:

gcloud components update

- Make sure that the Google Cloud CLI (gcloud) is authorized to access your data and services on Google Cloud:

gcloud auth login

- Set the default project to your project ID.

gcloud config set project YOUR_PROJECT_ID

Replace YOUR_PROJECT_ID with your project ID. If you have other Google Cloud projects, and you want to use gcloud to manage them, see Managing gcloud CLI Configurations.

- Follow the steps in the gRPC Python quickstart to install gRPC and the gRPC tools.

Creating a Compute Engine instance

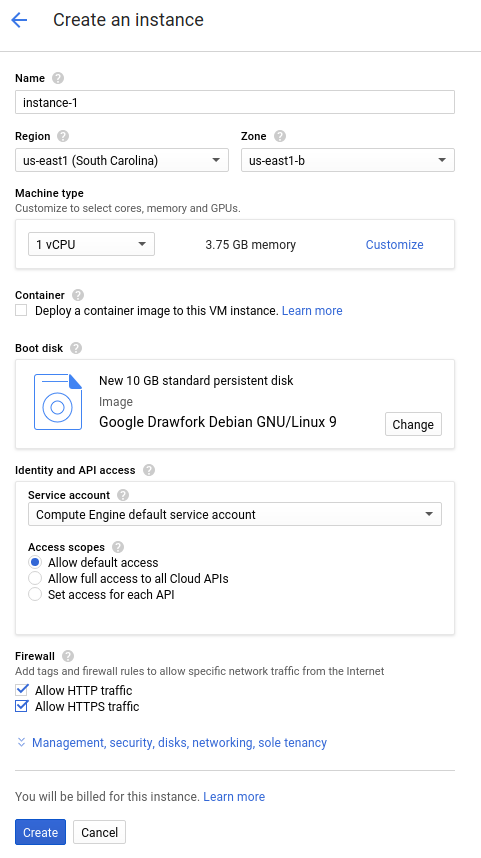

To create a Compute Engine instance:

- In the Google Cloud console, go to the Create an instance page.

- In the Firewall section, select Allow HTTP traffic and Allow HTTPS traffic.

- To create the VM, click Create.

Allow a short time for the instance to start up. Once ready, it is listed on the VM Instances page with a green status icon.

- Make sure that you can connect to your VM instance.

  - In the list of virtual machine instances, click SSH in the row of the instance that you want to connect to.

  - You can now use the terminal to run Linux commands on your Debian instance.

  - Enter exit to disconnect from the instance.

- Make a note of the instance name, zone, and external IP address because they are needed later.

Configuring Endpoints

Clone the bookstore-grpc sample repository from GitHub.

To configure Endpoints:

- Create a self-contained protobuf descriptor file from your service .proto file:

  - Save a copy of bookstore.proto from the example repository. This file defines the Bookstore service's API.

  - Create the following directory:

    mkdir generated_pb2

  - Create the descriptor file, api_descriptor.pb, by using the protoc protocol buffers compiler. Run the following command in the directory where you saved bookstore.proto:

    python -m grpc_tools.protoc \
        --include_imports \
        --include_source_info \
        --proto_path=. \
        --descriptor_set_out=api_descriptor.pb \
        --python_out=generated_pb2 \
        --grpc_python_out=generated_pb2 \
        bookstore.proto

    In the preceding command, --proto_path is set to the current working directory. In your gRPC build environment, if you use a different directory for .proto input files, change --proto_path so the compiler searches the directory where you saved bookstore.proto.

- Create a gRPC API configuration YAML file:

  - Save a copy of the api_config.yaml file. This file defines the gRPC API configuration for the Bookstore service.

  - Replace MY_PROJECT_ID in your api_config.yaml file with your Google Cloud project ID. For example:

    #
    # Name of the service configuration.
    #
    name: bookstore.endpoints.example-project-12345.cloud.goog

    Note that the apis.name field value in this file exactly matches the fully-qualified API name from the .proto file; otherwise deployment won't work. The Bookstore service is defined in bookstore.proto inside package endpoints.examples.bookstore. Its fully-qualified API name is endpoints.examples.bookstore.Bookstore, just as it appears in the api_config.yaml file.

    apis:
      - name: endpoints.examples.bookstore.Bookstore

See Configuring Endpoints for more information.
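Because a mismatch between apis.name and the fully-qualified API name makes the deployment fail, it can help to compare the two before deploying. The following is a minimal sketch, not part of the sample repository: it uses simple regular expressions rather than a real protobuf or YAML parser, and the helper names (fully_qualified_services, api_names, mismatched_apis) are hypothetical.

```python
import re

# Matches the `package` statement and each `service` declaration in a .proto file.
PACKAGE = re.compile(r"^\s*package\s+([\w.]+)\s*;", re.MULTILINE)
SERVICE = re.compile(r"^\s*service\s+(\w+)\s*\{", re.MULTILINE)


def fully_qualified_services(proto_text):
    """Return the fully-qualified name of every service declared in proto_text."""
    match = PACKAGE.search(proto_text)
    package = match.group(1) if match else ""
    names = SERVICE.findall(proto_text)
    return [f"{package}.{name}" if package else name for name in names]


def api_names(config_text):
    """Collect the `name:` values listed under the top-level `apis:` key.

    This is a line scanner for the simple layout used in api_config.yaml,
    not a general YAML parser.
    """
    names = []
    in_apis = False
    for line in config_text.splitlines():
        stripped = line.strip()
        if not stripped or stripped.startswith("#"):
            continue
        if re.match(r"^apis\s*:", line):
            in_apis = True
            continue
        if in_apis:
            item = re.match(r"^\s*-\s*name\s*:\s*(\S+)", line)
            if item:
                names.append(item.group(1))
            elif not line[0].isspace() and not line.startswith("-"):
                # A new top-level key ends the apis section.
                in_apis = False
    return names


def mismatched_apis(proto_text, config_text):
    """Return the apis.name values that no service in the .proto file matches."""
    declared = set(fully_qualified_services(proto_text))
    return [name for name in api_names(config_text) if name not in declared]
```

An empty list from mismatched_apis means every apis.name entry corresponds to a service declared in the .proto text that was passed in.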

Deploying the Endpoints configuration

To deploy the Endpoints configuration, you use the gcloud endpoints services deploy command. This command uses Service Management to create a managed service.

- Make sure you are in the directory where the api_descriptor.pb and api_config.yaml files are located.

- Confirm that the default project that the gcloud command-line tool is currently using is the Google Cloud project that you want to deploy the Endpoints configuration to. Validate the project ID returned from the following command to make sure that the service doesn't get created in the wrong project.

gcloud config list project

If you need to change the default project, run the following command:

gcloud config set project YOUR_PROJECT_ID

- Deploy the proto descriptor file and the configuration file by using the Google Cloud CLI:

gcloud endpoints services deploy api_descriptor.pb api_config.yaml

As it is creating and configuring the service, Service Management outputs information to the terminal. When the deployment completes, a message similar to the following is displayed:

Service Configuration [CONFIG_ID] uploaded for service [bookstore.endpoints.example-project.cloud.goog]

CONFIG_ID is the unique Endpoints service configuration ID created by the deployment. For example:

Service Configuration [2017-02-13r0] uploaded for service [bookstore.endpoints.example-project.cloud.goog]

In the previous example, 2017-02-13r0 is the service configuration ID and bookstore.endpoints.example-project.cloud.goog is the service name. The service configuration ID consists of a date stamp followed by a revision number. If you deploy the Endpoints configuration again on the same day, the revision number is incremented in the service configuration ID.
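If you script the deployment, the configuration ID and service name can be pulled out of that final message. The sketch below assumes the message has exactly the shape quoted above and that the ID is a YYYY-MM-DD date stamp followed by "r" and a revision number; the function names are hypothetical.

```python
import re
from datetime import date

# Shape of the message printed when the deployment completes, as quoted above.
DEPLOY_MESSAGE = re.compile(
    r"Service Configuration \[(?P<config_id>[^\]]+)\] "
    r"uploaded for service \[(?P<service>[^\]]+)\]"
)
# Assumed ID layout: date stamp, the letter "r", then the revision number.
CONFIG_ID = re.compile(r"(?P<y>\d{4})-(?P<m>\d{2})-(?P<d>\d{2})r(?P<rev>\d+)")


def parse_deploy_message(line):
    """Return (config_id, service_name), or None if the line is not the message."""
    match = DEPLOY_MESSAGE.search(line)
    if match is None:
        return None
    return match.group("config_id"), match.group("service")


def split_config_id(config_id):
    """Split a configuration ID into its date stamp and revision number."""
    match = CONFIG_ID.fullmatch(config_id)
    if match is None:
        raise ValueError(f"unexpected configuration ID: {config_id!r}")
    stamp = date(int(match.group("y")), int(match.group("m")), int(match.group("d")))
    return stamp, int(match.group("rev"))
```

For the example message, parse_deploy_message yields the ID 2017-02-13r0, which split_config_id separates into the date 2017-02-13 and revision 0.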

Checking required services

At a minimum, Endpoints and ESP require the following Google services to be enabled:

| Name | Title |
|---|---|
| servicemanagement.googleapis.com | Service Management API |
| servicecontrol.googleapis.com | Service Control API |

In most cases, the gcloud endpoints services deploy command enables these required services. However, the gcloud command completes successfully but doesn't enable the required services in the following circumstances:

- You used a third-party application such as Terraform, and you didn't include these services.

- You deployed the Endpoints configuration to an existing Google Cloud project in which these services were explicitly disabled.

Use the following command to confirm that the required services are enabled:

gcloud services list

If you do not see the required services listed, enable them:

gcloud services enable servicemanagement.googleapis.com

gcloud services enable servicecontrol.googleapis.com

Also enable your Endpoints service:

gcloud services enable ENDPOINTS_SERVICE_NAME

To determine the ENDPOINTS_SERVICE_NAME, you can do either of the following:

- After deploying the Endpoints configuration, go to the Endpoints page in the Cloud console. The possible ENDPOINTS_SERVICE_NAME values are shown under the Service name column.

- For OpenAPI, the ENDPOINTS_SERVICE_NAME is what you specified in the host field of your OpenAPI spec. For gRPC, the ENDPOINTS_SERVICE_NAME is what you specified in the name field of your gRPC Endpoints configuration.

For more information about the gcloud commands, see gcloud services.
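The check described in this section can be automated by scanning the text that gcloud services list prints. This is a rough sketch under one assumption: the service name is the first whitespace-separated token on each row of the listing. The helper names are hypothetical, and the commands it returns are the same gcloud services enable commands shown above.

```python
# The two services that Endpoints and ESP require, from the table above.
REQUIRED_SERVICES = (
    "servicemanagement.googleapis.com",
    "servicecontrol.googleapis.com",
)


def missing_services(listing, endpoints_service_name=None):
    """Return the required services that do not appear in the listing text.

    Assumes the service name is the first token of each row. Pass
    endpoints_service_name to also check your own Endpoints service.
    """
    enabled = {line.split()[0] for line in listing.splitlines() if line.split()}
    required = list(REQUIRED_SERVICES)
    if endpoints_service_name:
        required.append(endpoints_service_name)
    return [name for name in required if name not in enabled]


def enable_commands(missing):
    """Build one `gcloud services enable` argument list per missing service."""
    return [["gcloud", "services", "enable", name] for name in missing]
```

Each argument list returned by enable_commands can be passed to subprocess.run on a machine where the gcloud CLI is installed and authorized.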

If you get an error message, see Troubleshooting Endpoints configuration deployment.

See Deploying the Endpoints configuration for additional information.

Deploying the API backend

So far you have deployed the API configuration to Service Management, but you haven't yet deployed the code that serves the API backend. This section walks you through getting Docker set up on your VM instance and running the API backend code and the ESP in a Docker container.

Install Docker on the VM instance

To install Docker on the VM instance:

- Set the zone for your project by running the command:

gcloud config set compute/zone YOUR_INSTANCE_ZONE

Replace YOUR_INSTANCE_ZONE with the zone where your instance is running.

- Connect to your instance by using the following command:

gcloud compute ssh INSTANCE_NAME

Replace INSTANCE_NAME with your VM instance name.

- See the Docker documentation to set up the Docker repository. Make sure to follow the steps that match the version and architecture of your VM instance:

  - Jessie or newer

  - x86_64 / amd64

Run the sample API and ESP in a Docker container

To run the sample gRPC service with ESP in a Docker container so that clients can use it:

- On the VM instance, create your own container network called esp_net.

sudo docker network create --driver bridge esp_net

- Run the sample Bookstore server that serves the sample API:

sudo docker run \
    --detach \
    --name=bookstore \
    --net=esp_net \
    gcr.io/endpointsv2/python-grpc-bookstore-server:1

- Run the pre-packaged ESP Docker container. In the ESP startup options, replace SERVICE_NAME with the name of your service. This is the same name that you configured in the name field in the api_config.yaml file. For example: bookstore.endpoints.example-project-12345.cloud.goog

sudo docker run \
    --detach \
    --name=esp \
    --publish=80:9000 \
    --net=esp_net \
    gcr.io/endpoints-release/endpoints-runtime:1 \
    --service=SERVICE_NAME \
    --rollout_strategy=managed \
    --http2_port=9000 \
    --backend=grpc://bookstore:8000

The --rollout_strategy=managed option configures ESP to use the latest deployed service configuration. When you specify this option, up to 5 minutes after you deploy a new service configuration, ESP detects the change and automatically begins using it. We recommend that you specify this option instead of a specific configuration ID for ESP to use. For more details on the ESP arguments, see ESP startup options.

If you have Transcoding enabled, make sure to configure a port for HTTP1.1 or SSL traffic.

If you get an error message, see Troubleshooting Endpoints on Compute Engine.
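If you start ESP from a script, building the command as an argument list makes it harder to leave the SERVICE_NAME placeholder in place by accident. The sketch below only reassembles the docker run command from this section; the function name and its parameters are hypothetical, and the defaults mirror the values used above.

```python
import shlex


def esp_run_command(service_name, backend="grpc://bookstore:8000",
                    host_port=80, esp_port=9000, network="esp_net"):
    """Build the `docker run` argument list for ESP shown in this section."""
    if not service_name or service_name == "SERVICE_NAME":
        raise ValueError("replace SERVICE_NAME with the name of your service")
    return [
        "sudo", "docker", "run",
        # Options before the image name are handled by Docker.
        "--detach",
        "--name=esp",
        f"--publish={host_port}:{esp_port}",
        f"--net={network}",
        "gcr.io/endpoints-release/endpoints-runtime:1",
        # Options after the image name are ESP startup options.
        f"--service={service_name}",
        "--rollout_strategy=managed",
        f"--http2_port={esp_port}",
        f"--backend={backend}",
    ]


if __name__ == "__main__":
    command = esp_run_command("bookstore.endpoints.example-project-12345.cloud.goog")
    # Print a copy-pasteable form of the command instead of running it.
    print(shlex.join(command))
```

The published host port maps to the same container port that --http2_port names, which is why both are derived from esp_port here.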

Sending a request to the API

If you’re sending the request from the same instance in which the Docker containers are running, you can replace SERVER_IP with localhost. Otherwise, replace SERVER_IP with the external IP address of the instance.

You can find the external IP address by running:

gcloud compute instances list
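To pick the address in a script rather than by reading the table, one option is to run the same command with --format=json and look up the instance by name. The sketch below assumes the JSON output exposes the external address as natIP inside networkInterfaces[].accessConfigs[]; the helper names are hypothetical, and the sample instance name and address in the usage note are made up.

```python
import json


def external_ip(instances_json, instance_name):
    """Return the external IP of instance_name, or None if it has none.

    instances_json is the text printed by
    `gcloud compute instances list --format=json`.
    """
    for instance in json.loads(instances_json):
        if instance.get("name") != instance_name:
            continue
        for interface in instance.get("networkInterfaces", []):
            for access in interface.get("accessConfigs", []):
                if access.get("natIP"):
                    return access["natIP"]
    return None


def server_host(instances_json, instance_name, on_same_instance=False):
    """Choose the value to use for SERVER_IP in the client command."""
    if on_same_instance:
        # Requests sent from the VM itself can use localhost.
        return "localhost"
    return external_ip(instances_json, instance_name)
```

For example, given a listing that contains an instance named my-instance with natIP 203.0.113.10, server_host returns that address, or localhost when on_same_instance is true.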

To send requests to the sample API, you can use a sample gRPC client written in Python.

Clone the git repo where the gRPC client code is hosted:

git clone https://github.com/GoogleCloudPlatform/python-docs-samples.git

Change your working directory:

cd python-docs-samples/endpoints/bookstore-grpc/

Install dependencies:

pip install virtualenv
virtualenv env
source env/bin/activate
python -m pip install -r requirements.txt

Send a request to the sample API:

python bookstore_client.py --host SERVER_IP --port 80

Look at the activity graphs for your API in the Endpoints > Services page.

Go to the Endpoints Services page

It may take a few moments for the request to be reflected in the graphs.

Look at the request logs for your API in the Logs Explorer page.

If you don't get a successful response, see Troubleshooting response errors.

You just deployed and tested an API in Endpoints!

Clean up

To avoid incurring charges to your Google Cloud account for the resources used in this tutorial, either delete the project that contains the resources, or keep the project and delete the individual resources.

- Delete the API:

gcloud endpoints services delete SERVICE_NAME

Replace SERVICE_NAME with the name of your service.

- In the Google Cloud console, go to the VM instances page.

- Select the checkbox for the instance that you want to delete.

- To delete the instance, click More actions, click Delete, and then follow the instructions.