Gestire la risposta di elaborazione

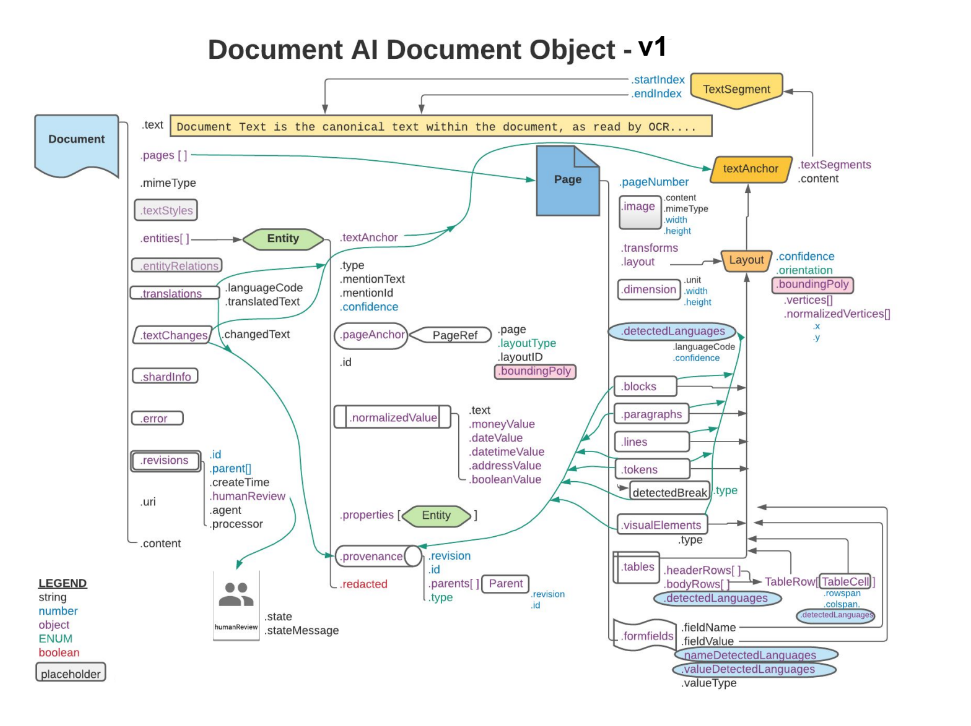

La risposta a una richiesta di elaborazione contiene un oggetto Document

che include tutte le informazioni note sul documento elaborato, incluse tutte

le informazioni strutturate che Document AI è stato in grado di estrarre.

Questa pagina spiega il layout dell'oggetto Document fornendo documenti di esempio,

e poi mappando gli aspetti dei risultati dell'OCR agli elementi specifici dell'oggetto JSON Document.

Fornisce inoltre esempi di codice delle librerie client e dell'SDK Document AI Toolbox.

Questi esempi di codice utilizzano l'elaborazione online, ma l'analisi dell'oggetto Document funziona allo stesso modo per l'elaborazione batch.

Utilizza un visualizzatore o un'utilità di modifica JSON progettata specificamente per espandere o comprimere gli elementi. La revisione del codice JSON non elaborato in un'utilità di testo normale non è efficiente.

Testo, layout e punteggi di qualità

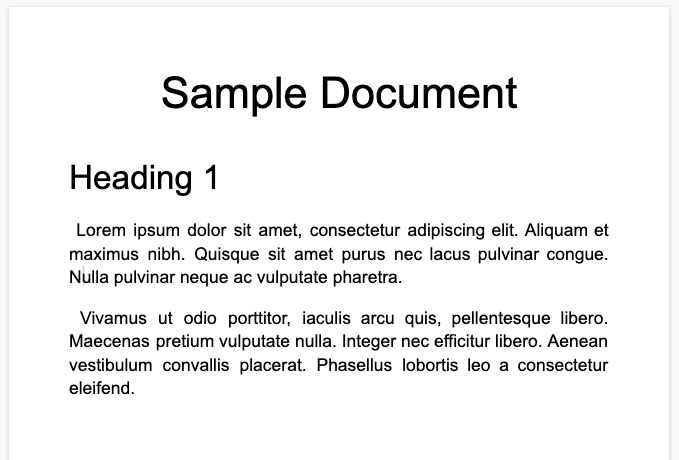

Ecco un documento di testo di esempio:

Ecco l'oggetto documento completo restituito dal processore Enterprise Document OCR:

Questo output OCR è sempre incluso anche nell'output del processore Document AI, poiché l'OCR viene eseguito dai processori. Utilizza i dati OCR esistenti, motivo per cui puoi inserire questi dati JSON utilizzando l'opzione Documento in linea nei processori Document AI.

image=None, # all our samples pass this var

mime_type="application/json",

inline_document=document_response # pass OCR output to CDE input - undocumented

Ecco alcuni dei campi importanti:

Testo non elaborato

Il campo text contiene il testo riconosciuto da Document AI.

Questo testo non contiene alcuna struttura di layout diversa da spazi, tabulazioni e feed di riga. Questo è l'unico campo che memorizza le informazioni di testo di un documento e funge da fonte attendibile del testo del documento. Altri campi possono fare riferimento a parti del campo di testo in base alla posizione (startIndex e endIndex).

{

text: "Sample Document\nHeading 1\nLorem ipsum dolor sit amet, ..."

}

Dimensioni pagina e lingue

Ogni page nell'oggetto documento corrisponde a una pagina fisica del documento di esempio. L'output JSON di esempio contiene una pagina perché è un'unica immagine PNG.

{

"pages:" [

{

"pageNumber": 1,

"dimension": {

"width": 679.0,

"height": 460.0,

"unit": "pixels"

},

}

]

}

- Il campo

pages[].detectedLanguages[]contiene le lingue trovate in una determinata pagina, insieme al punteggio di affidabilità.

{

"pages": [

{

"detectedLanguages": [

{

"confidence": 0.98009938,

"languageCode": "en"

},

{

"confidence": 0.01990064,

"languageCode": "und"

}

]

}

]

}

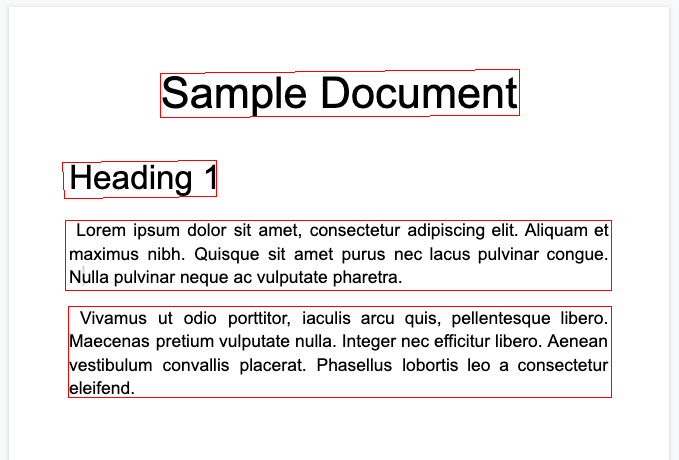

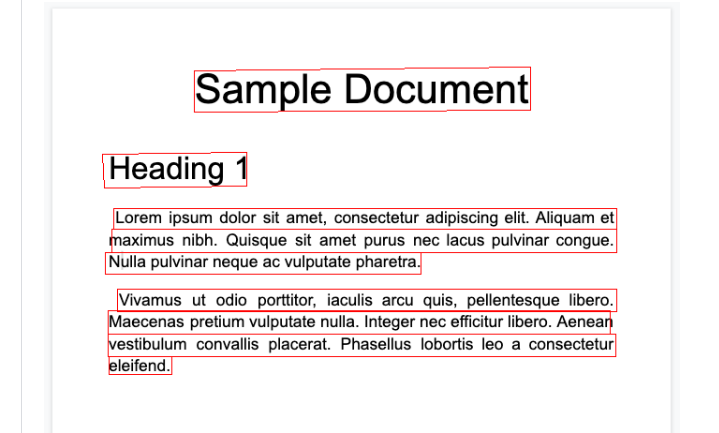

Dati OCR

L'OCR di Document AI rileva il testo con vari livelli di granularità o organizzazione nella pagina, ad esempio blocchi di testo, paragrafi, token e simboli (il livello di simbolo è facoltativo, se configurato per l'output dei dati a livello di simbolo). Si tratta di tutti i membri dell'oggetto pagina.

Ogni elemento ha un layout corrispondente che descrive la sua posizione e il suo testo. Anche gli elementi visivi non di testo (ad es. le caselle di controllo) si trovano a livello di pagina.

{

"pages": [

{

"paragraphs": [

{

"layout": {

"textAnchor": {

"textSegments": [

{

"endIndex": "16"

}

]

},

"confidence": 0.9939527,

"boundingPoly": {

"vertices": [ ... ],

"normalizedVertices": [ ... ]

},

"orientation": "PAGE_UP"

}

}

]

}

]

}

Il testo non elaborato viene fatto riferimento nell'oggetto textAnchor

che viene indicizzato nella stringa di testo principale con startIndex e endIndex.

Per

boundingPoly, l'origine(0,0)è l'angolo in alto a sinistra della pagina. I valori X positivi sono a destra e i valori Y positivi sono verso il basso.L'oggetto

verticesutilizza le stesse coordinate dell'immagine originale, mentrenormalizedVerticesrientrano nell'intervallo[0,1]. Esiste una matrice di trasformazione che indica le misure di correzione della distorsione e altri attributi della normalizzazione dell'immagine.

- Per disegnare il simbolo

boundingPoly, disegna segmenti di linea da un vertice all'altro. Quindi, chiudi il poligono tracciando un segmento di linea dall'ultimo vertice al primo. L'elemento orientation del layout indica se il testo è stato ruotato rispetto alla pagina.

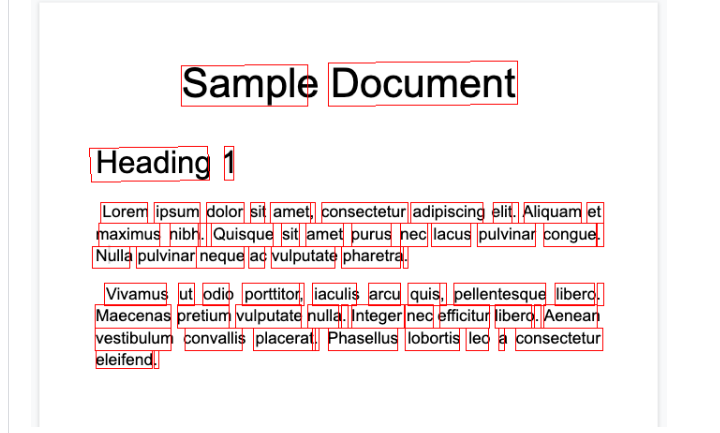

Per aiutarti a visualizzare la struttura del documento, le seguenti immagini disegnano i poligoni delimitanti per page.paragraphs, page.lines e page.tokens.

Paragrafi

Righe

Token

Blocchi

Il processore Enterprise Document OCR può eseguire la valutazione della qualità di un documento in base alla sua leggibilità.

- Devi impostare il campo

processOptions.ocrConfig.enableImageQualityScoressutrueper ottenere questi dati nella risposta dell'API.

Questa valutazione della qualità è un punteggio di qualità in [0, 1], dove 1 indica una qualità perfetta.

Il punteggio di qualità viene restituito nel campo Page.imageQualityScores.

Tutti i difetti rilevati sono elencati come quality/defect_* e ordinati in ordine decrescente in base al valore di confidenza.

Ecco un PDF troppo scuro e sfocato per essere letto comodamente:

Di seguito sono riportate le informazioni sulla qualità del documento restituite dal processore Enterprise Document OCR:

{

"pages": [

{

"imageQualityScores": {

"qualityScore": 0.7811847,

"detectedDefects": [

{

"type": "quality/defect_document_cutoff",

"confidence": 1.0

},

{

"type": "quality/defect_glare",

"confidence": 0.97849524

},

{

"type": "quality/defect_text_cutoff",

"confidence": 0.5

}

]

}

}

]

}

Esempi di codice

I seguenti esempi di codice mostrano come inviare una richiesta di elaborazione e poi leggere e stampare i campi nel terminale:

Java

Per ulteriori informazioni, consulta la documentazione di riferimento dell'API Document AI Java.

Per autenticarti a Document AI, configura le credenziali predefinite dell'applicazione. Per ulteriori informazioni, consulta Configurare l'autenticazione per un ambiente di sviluppo locale.

Node.js

Per ulteriori informazioni, consulta la documentazione di riferimento dell'API Document AI Node.js.

Per autenticarti a Document AI, configura le credenziali predefinite dell'applicazione. Per ulteriori informazioni, consulta Configurare l'autenticazione per un ambiente di sviluppo locale.

Python

Per ulteriori informazioni, consulta la documentazione di riferimento dell'API Document AI Python.

Per autenticarti a Document AI, configura le credenziali predefinite dell'applicazione. Per ulteriori informazioni, consulta Configurare l'autenticazione per un ambiente di sviluppo locale.

Moduli e tabelle

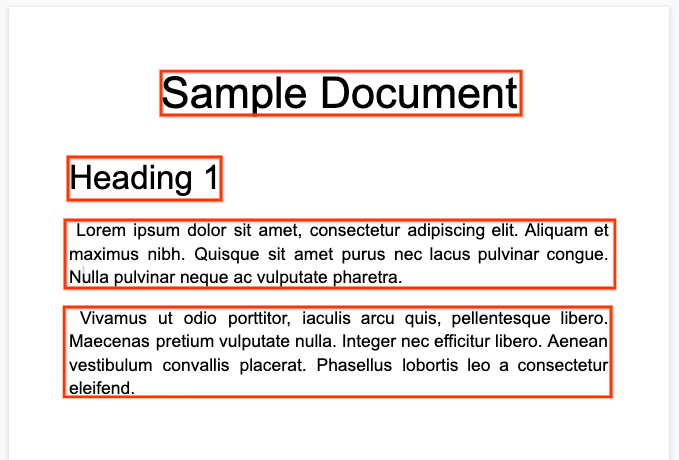

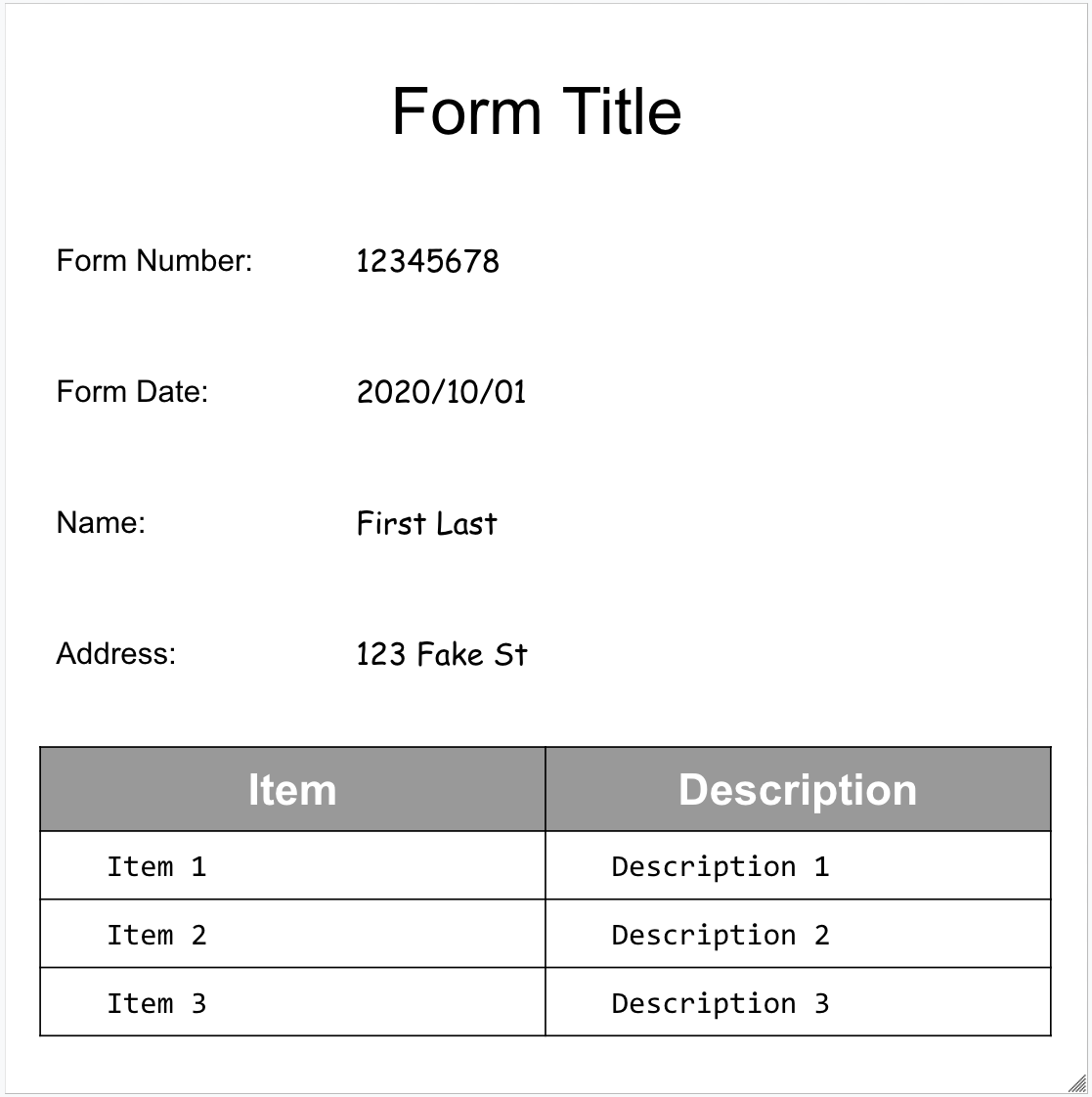

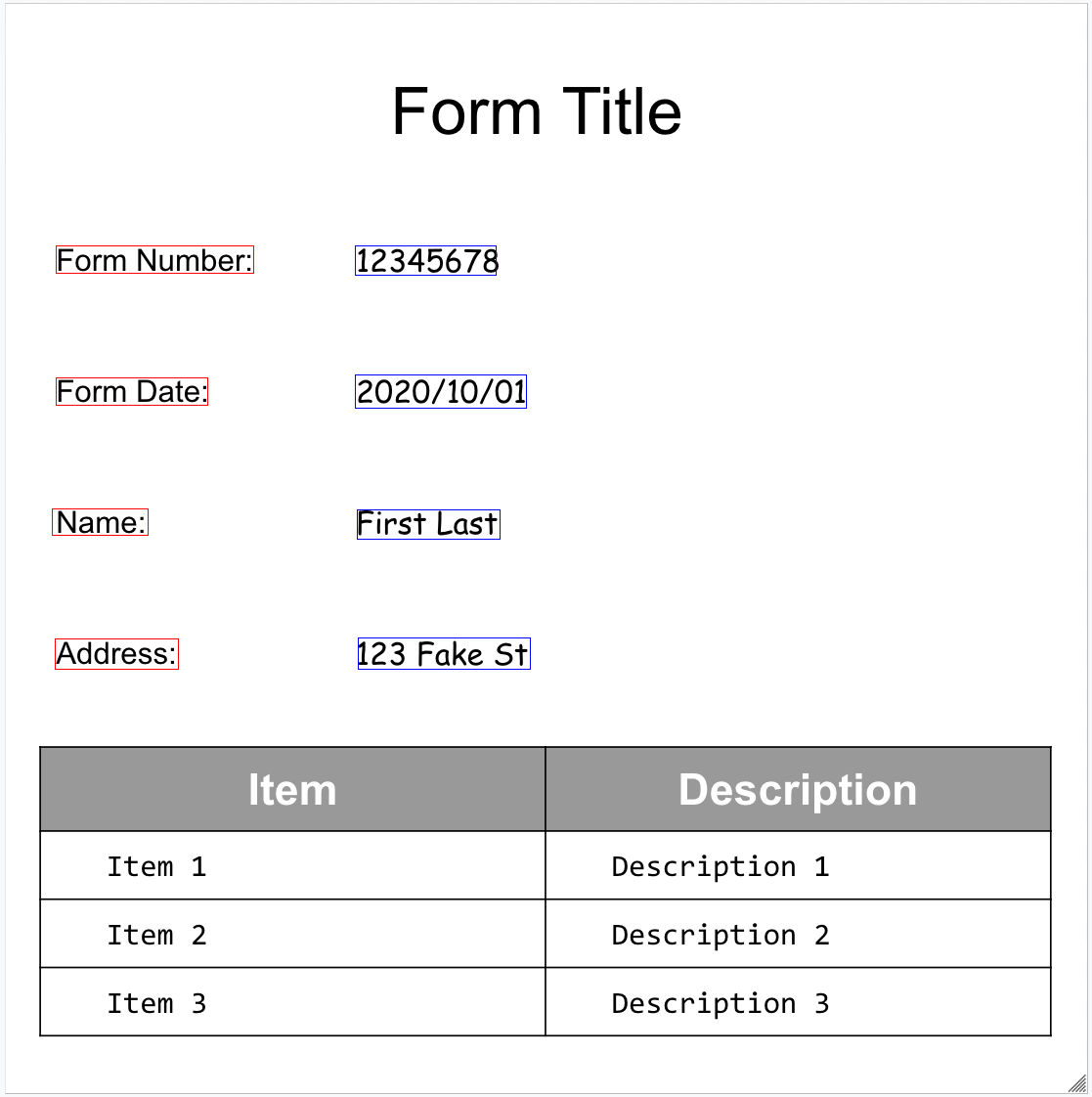

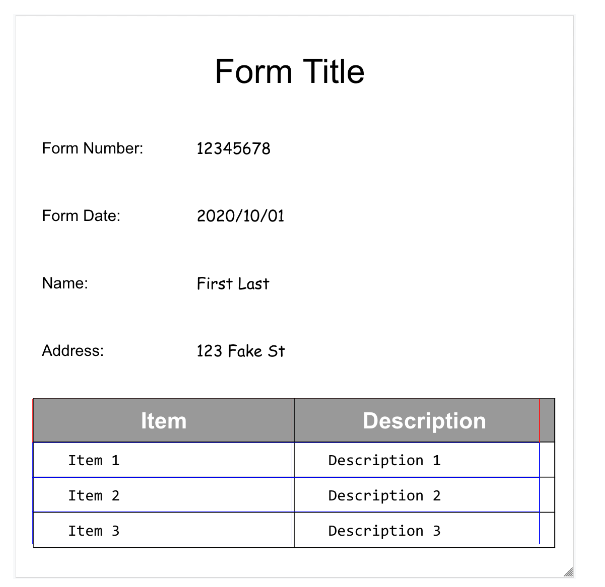

Ecco il nostro modulo di esempio:

Ecco l'oggetto documento completo restituito dal parser di moduli:

Ecco alcuni dei campi importanti:

L'analizzatore sintattico dei moduli è in grado di rilevare FormFields

nella pagina. Ogni campo del modulo ha un nome e un valore. Sono chiamate anche coppie chiave-valore (KVP). Tieni presente che le coppie chiave/valore sono diverse dalle entità (schema) in altri estrattori:

I nomi delle entità sono configurati. Le chiavi nei KVP sono letteralmente il testo della chiave nel documento.

{

"pages:" [

{

"formFields": [

{

"fieldName": { ... },

"fieldValue": { ... }

}

]

}

]

}

- Document AI può anche rilevare

Tablesnella pagina.

{

"pages:" [

{

"tables": [

{

"layout": { ... },

"headerRows": [

{

"cells": [

{

"layout": { ... },

"rowSpan": 1,

"colSpan": 1

},

{

"layout": { ... },

"rowSpan": 1,

"colSpan": 1

}

]

}

],

"bodyRows": [

{

"cells": [

{

"layout": { ... },

"rowSpan": 1,

"colSpan": 1

},

{

"layout": { ... },

"rowSpan": 1,

"colSpan": 1

}

]

}

]

}

]

}

]

}

L'estrazione delle tabelle all'interno di Form Parser riconosce solo tabelle semplici,

ovvero quelle senza celle che si estendono su righe o colonne. Pertanto, rowSpan e colSpan sono sempre 1.

A partire dalla versione del processore

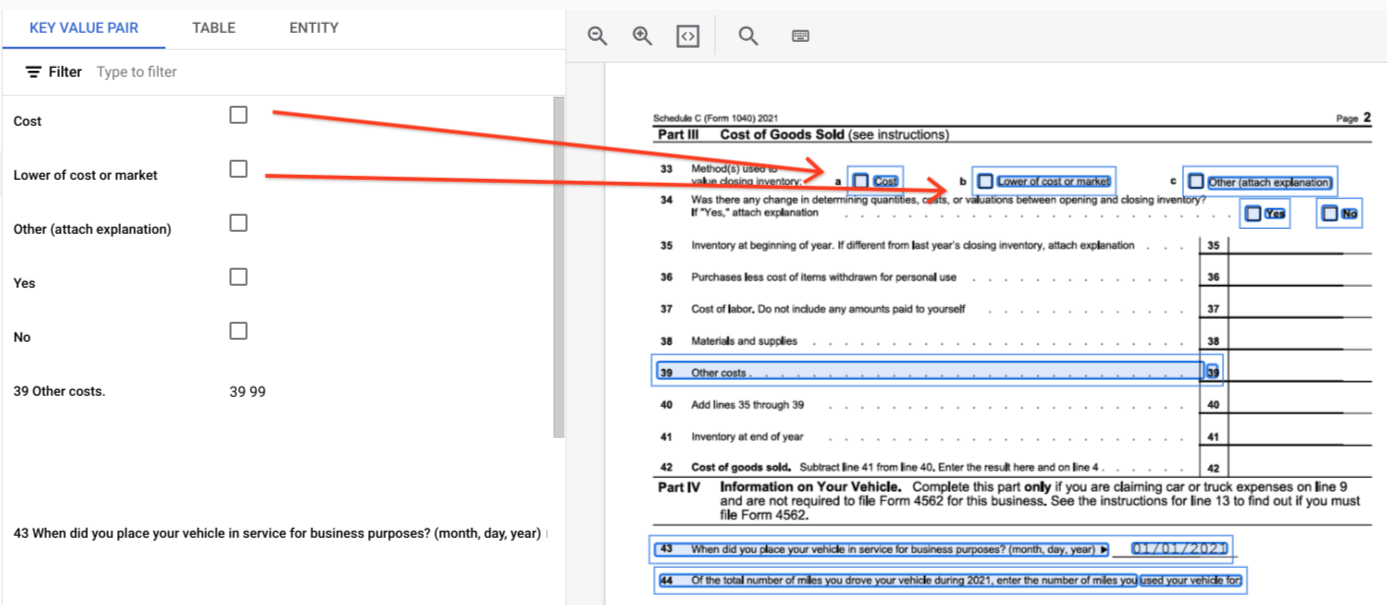

pretrained-form-parser-v2.0-2022-11-10, il parser di moduli può anche riconoscere entità generiche. Per ulteriori informazioni, consulta Parser dei moduli.Per aiutarti a visualizzare la struttura del documento, le seguenti immagini disegnano i poligoni delimitanti per

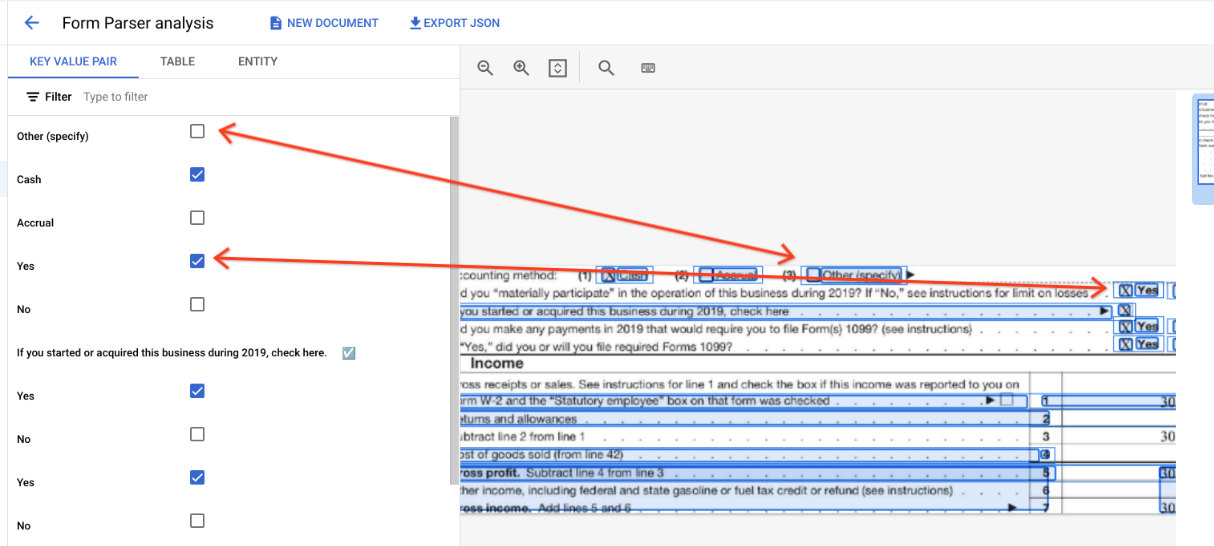

page.formFieldsepage.tables.Caselle di controllo nelle tabelle. Il parser di moduli è in grado di digitalizzare le caselle di controllo da immagini e PDF come coppie chiave-valore. Fornitura di un esempio di digitalizzazione delle caselle di controllo come coppia chiave-valore.

Al di fuori delle tabelle, le caselle di controllo sono rappresentate come elementi visivi all'interno di Form Parser. Evidenziare le caselle quadrate con i segni di spunta nell'interfaccia utente e il carattere Unicode ✓ nel JSON.

"pages:" [

{

"tables": [

{

"layout": { ... },

"headerRows": [

{

"cells": [

{

"layout": { ... },

"rowSpan": 1,

"colSpan": 1

},

{

"layout": { ... },

"rowSpan": 1,

"colSpan": 1

}

]

}

],

"bodyRows": [

{

"cells": [

{

"layout": { ... },

"rowSpan": 1,

"colSpan": 1

},

{

"layout": { ... },

"rowSpan": 1,

"colSpan": 1

}

]

}

]

}

]

}

]

}

Nelle tabelle, le caselle di controllo vengono visualizzate come caratteri Unicode come ✓ (selezionata) o ☐ (deselezionata).

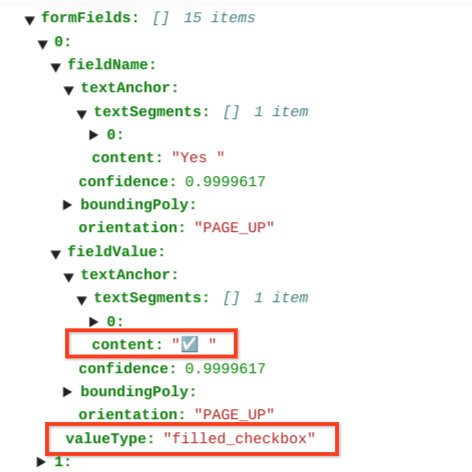

Le caselle di controllo selezionate hanno il valore filled_checkbox:

under pages > x > formFields > x > fieldValue > valueType.. Le caselle di controllo deselezionate hanno il valore unfilled_checkbox.

I campi dei contenuti mostrano il valore del contenuto della casella di controllo evidenziato ✓ nel percorso pages>formFields>x>fieldValue>textAnchor>content.

Per aiutarti a visualizzare la struttura del documento, le seguenti immagini disegnano i poligoni delimitanti per page.formFields e page.tables.

Campi modulo

Tabelle

Esempi di codice

I seguenti esempi di codice mostrano come inviare una richiesta di elaborazione e poi leggere e stampare i campi nel terminale:

Java

Per ulteriori informazioni, consulta la documentazione di riferimento dell'API Document AI Java.

Per autenticarti a Document AI, configura le credenziali predefinite dell'applicazione. Per ulteriori informazioni, consulta Configurare l'autenticazione per un ambiente di sviluppo locale.

Node.js

Per ulteriori informazioni, consulta la documentazione di riferimento dell'API Document AI Node.js.

Per autenticarti a Document AI, configura le credenziali predefinite dell'applicazione. Per ulteriori informazioni, consulta Configurare l'autenticazione per un ambiente di sviluppo locale.

Python

Per ulteriori informazioni, consulta la documentazione di riferimento dell'API Document AI Python.

Per autenticarti a Document AI, configura le credenziali predefinite dell'applicazione. Per ulteriori informazioni, consulta Configurare l'autenticazione per un ambiente di sviluppo locale.

Entità, entità nidificate e valori normalizzati

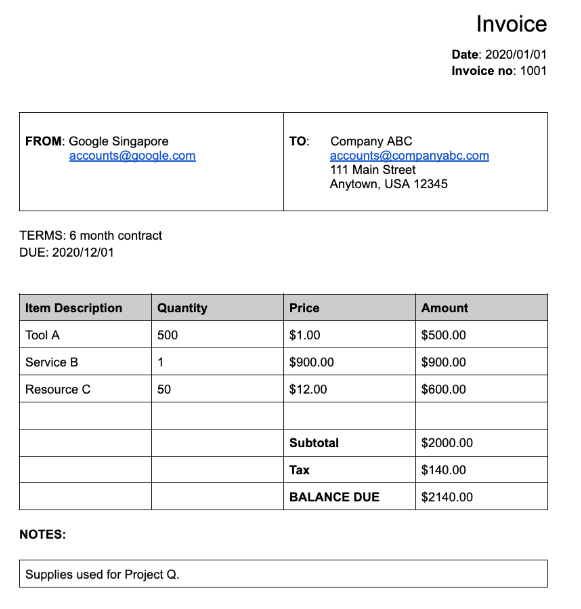

Molti dei processori specializzati estraggono dati strutturati basati su uno schema ben definito. Ad esempio, lo strumento di analisi delle fatture

rileva campi specifici come invoice_date e supplier_name. Ecco un'esempio di fattura:

Ecco l'oggetto documento completo restituito dal parser delle fatture:

Di seguito sono riportate alcune delle parti importanti dell'oggetto documento:

Campi rilevati:

Entitiescontiene i campi che il processore è stato in grado di rilevare, ad esempioinvoice_date:{ "entities": [ { "textAnchor": { "textSegments": [ { "startIndex": "14", "endIndex": "24" } ], "content": "2020/01/01" }, "type": "invoice_date", "confidence": 0.9938466, "pageAnchor": { ... }, "id": "2", "normalizedValue": { "text": "2020-01-01", "dateValue": { "year": 2020, "month": 1, "day": 1 } } } ] }Per alcuni campi, il processore normalizza anche il valore. In questo esempio, la data è stata normalizzata da

2020/01/01a2020-01-01.Normalizzazione: per molti campi supportati specifici, il processore normalizza anche il valore e restituisce un

entity. Il camponormalizedValueviene aggiunto al campo estratto non elaborato ottenuto tramite iltextAnchordi ogni entità. Pertanto, normalizza il testo letterale, spesso suddividendo il valore del testo in sottocampi. Ad esempio, una data come 1° settembre 2024 viene rappresentata come:

normalizedValue": {

"text": "2020-09-01",

"dateValue": {

"year": 2024,

"month": 9,

"day": 1

}

In questo esempio, la data è stata normalizzata da 01/01/2020 a 01/01/2020, un formato standardizzato per ridurre il post-trattamento e consentire la conversione al formato scelto.

Gli indirizzi sono spesso anche normalizzati, il che suddivide gli elementi dell'indirizzo in singoli campi. I numeri vengono normalizzati utilizzando un numero intero o con virgola mobile come normalizedValue.

- Arricchimento: alcuni processori e campi supportano anche l'arricchimento.

Ad esempio, il valore

supplier_nameoriginale nel documentoGoogle Singaporeè stato uniformato inGoogle Asia Pacific, Singaporein base a Enterprise Knowledge Graph. Inoltre, poiché l'Enterprise Knowledge Graph contiene informazioni su Google, Document AI deduconosupplier_addressanche se non era presente nel documento di esempio.

{

"entities": [

{

"textAnchor": {

"textSegments": [ ... ],

"content": "Google Singapore"

},

"type": "supplier_name",

"confidence": 0.39170802,

"pageAnchor": { ... },

"id": "12",

"normalizedValue": {

"text": "Google Asia Pacific, Singapore"

}

},

{

"type": "supplier_address",

"id": "17",

"normalizedValue": {

"text": "70 Pasir Panjang Rd #03-71 Mapletree Business City II Singapore 117371",

"addressValue": {

"regionCode": "SG",

"languageCode": "en-US",

"postalCode": "117371",

"addressLines": [

"70 Pasir Panjang Rd",

"#03-71 Mapletree Business City II"

]

}

}

}

]

}

Campi nidificati: lo schema (i campi) nidificato può essere creato dichiarando prima un'entità come principale e poi creando le entità secondarie sotto quella principale. La risposta all'analisi sintattica per l'elemento principale include i campi secondari nell'elemento

propertiesdel campo principale. Nell'esempio seguente,line_itemè un campo principale che ha due campi secondari:line_item/descriptioneline_item/quantity.{ "entities": [ { "textAnchor": { ... }, "type": "line_item", "confidence": 1.0, "pageAnchor": { ... }, "id": "19", "properties": [ { "textAnchor": { "textSegments": [ ... ], "content": "Tool A" }, "type": "line_item/description", "confidence": 0.3461604, "pageAnchor": { ... }, "id": "20" }, { "textAnchor": { "textSegments": [ ... ], "content": "500" }, "type": "line_item/quantity", "confidence": 0.8077843, "pageAnchor": { ... }, "id": "21", "normalizedValue": { "text": "500" } } ] } ] }

I seguenti analizzatori lo rispettano:

- Estrazione (estrattore personalizzato)

- Legacy

- Analizzatore sintattico estratto conto bancario

- Parser spese

- Analizzatore sintattico delle fatture

- Parser busta paga

- Analizzatore W2

Esempi di codice

I seguenti esempi di codice mostrano come inviare una richiesta di elaborazione e poi leggere e stampare i campi da un processore specializzato al terminale:

Java

Per ulteriori informazioni, consulta la documentazione di riferimento dell'API Document AI Java.

Per autenticarti a Document AI, configura le credenziali predefinite dell'applicazione. Per ulteriori informazioni, consulta Configurare l'autenticazione per un ambiente di sviluppo locale.

Node.js

Per ulteriori informazioni, consulta la documentazione di riferimento dell'API Document AI Node.js.

Per autenticarti a Document AI, configura le credenziali predefinite dell'applicazione. Per ulteriori informazioni, consulta Configurare l'autenticazione per un ambiente di sviluppo locale.

Python

Per ulteriori informazioni, consulta la documentazione di riferimento dell'API Document AI Python.

Per autenticarti a Document AI, configura le credenziali predefinite dell'applicazione. Per ulteriori informazioni, consulta Configurare l'autenticazione per un ambiente di sviluppo locale.

Estrattore di documenti personalizzato

Il processore Estrattore di documenti personalizzato può estrarre entità personalizzate dai documenti per i quali non è disponibile un processore preaddestrato. Questo può essere ottenuto addestrando un modello personalizzato o utilizzando i modelli di base di IA generativa per estrarre entità denominate senza alcun addestramento. Per saperne di più, consulta Creare un estrattore di documenti personalizzato nella console.

- Se addestri un modello personalizzato, il processore può essere utilizzato esattamente come un processore di estrazione di entità preaddestrato.

- Se utilizzi un modello di base, puoi creare una versione del processore per estrarre entità specifiche per ogni richiesta oppure puoi configurarlo su base singola richiesta.

Per informazioni sulla struttura di output, consulta Entità, entità nidificate e valori normalizzati.

Esempi di codice

Se utilizzi un modello personalizzato o hai creato una versione del processore utilizzando un modello di base, utilizza i campioni di codice per l'estrazione delle entità.

Il seguente esempio di codice mostra come configurare entità specifiche per un Estrattore di documenti personalizzato del modello di base su base di richiesta e stampare le entità estratte:

Python

Per ulteriori informazioni, consulta la documentazione di riferimento dell'API Document AI Python.

Per autenticarti a Document AI, configura le credenziali predefinite dell'applicazione. Per ulteriori informazioni, consulta Configurare l'autenticazione per un ambiente di sviluppo locale.

Riassunto

Il processore di riassunti utilizza i modelli di base dell'IA generativa per riassumere il testo estratto da un documento. La lunghezza e il formato della risposta possono essere personalizzati nei seguenti modi:

- Lunghezza

BRIEF: un breve riepilogo di una o due frasiMODERATE: un riepilogo di un paragrafoCOMPREHENSIVE: l'opzione più lunga disponibile

- Formato

Puoi creare una versione del processore per una lunghezza e un formato specifici oppure puoi configurarla in base alla richiesta.

Il testo riepilogativo viene visualizzato in Document.entities.normalizedValue.text. Puoi trovare un file JSON di output di esempio completo in Output del programma di elaborazione dei sample.

Per saperne di più, consulta Creare un riepilogatore di documenti nella console.

Esempi di codice

Il seguente esempio di codice mostra come configurare una lunghezza e un formato specifici in una richiesta di elaborazione e stampare il testo riepilogativo:

Python

Per ulteriori informazioni, consulta la documentazione di riferimento dell'API Document AI Python.

Per autenticarti a Document AI, configura le credenziali predefinite dell'applicazione. Per ulteriori informazioni, consulta Configurare l'autenticazione per un ambiente di sviluppo locale.

Suddivisione e classificazione

Ecco un PDF composito di 10 pagine contenente diversi tipi di documenti e moduli:

Ecco l'oggetto documento completo restituito dall'strumento per la divisione e la classificazione dei documenti creditizi:

Ogni documento rilevato dallo splitter è rappresentato da un

entity. Ad esempio:

{

"entities": [

{

"textAnchor": {

"textSegments": [

{

"startIndex": "13936",

"endIndex": "21108"

}

]

},

"type": "1040se_2020",

"confidence": 0.76257163,

"pageAnchor": {

"pageRefs": [

{

"page": "6"

},

{

"page": "7"

}

]

}

}

]

}

Entity.pageAnchorindica che questo documento è composto da 2 pagine. Tieni presente chepageRefs[].pageè basato su zero ed è l'indice nel campodocument.pages[].Entity.typespecifica che questo documento è un modulo 1040 Schedule SE. Per un elenco completo dei tipi di documenti che possono essere identificati, consulta Tipi di documenti identificati nella documentazione del processore.

Per ulteriori informazioni, consulta Comportamento degli splitter di documenti.

Esempi di codice

Gli elementi di suddivisione identificano i limiti di pagina, ma non suddividono effettivamente il documento di input. Puoi utilizzare Document AI Toolbox per suddividere fisicamente un file PDF utilizzando i confini di pagina. I seguenti esempi di codice stampano gli intervalli di pagine senza suddividere il PDF:

Java

Per ulteriori informazioni, consulta la documentazione di riferimento dell'API Document AI Java.

Per autenticarti a Document AI, configura le credenziali predefinite dell'applicazione. Per ulteriori informazioni, consulta Configurare l'autenticazione per un ambiente di sviluppo locale.

Node.js

Per ulteriori informazioni, consulta la documentazione di riferimento dell'API Document AI Node.js.

Per autenticarti a Document AI, configura le credenziali predefinite dell'applicazione. Per ulteriori informazioni, consulta Configurare l'autenticazione per un ambiente di sviluppo locale.

Python

Per ulteriori informazioni, consulta la documentazione di riferimento dell'API Document AI Python.

Per autenticarti a Document AI, configura le credenziali predefinite dell'applicazione. Per ulteriori informazioni, consulta Configurare l'autenticazione per un ambiente di sviluppo locale.

Document elaborato.

Python

Per ulteriori informazioni, consulta la documentazione di riferimento dell'API Document AI Python.

Per autenticarti a Document AI, configura le credenziali predefinite dell'applicazione. Per ulteriori informazioni, consulta Configurare l'autenticazione per un ambiente di sviluppo locale.

Document AI Workbench

Document AI Toolbox è un SDK per Python che fornisce funzioni di utilità per gestire, manipolare ed estrarre informazioni dalla risposta del documento.

Crea un oggetto documento "con wrapping" da una risposta del documento elaborata da file JSON in Cloud Storage, file JSON locali o output direttamente dal metodo process_document().

Può eseguire le seguenti azioni:

- Combina i file JSON

Documentframmentati dell'elaborazione collettiva in un unico documento "con wrapping". - Esportare i frammenti come

Documentunificato. -

Ottieni l'output

Documentda: - Accedi al testo di

Pages,Lines,Paragraphs,FormFieldseTablessenza gestire le informazioniLayout. - Cerca un

Pagescontenente una stringa target o che corrisponde a un'espressione regolare. - Cerca

FormFieldsper nome. - Cerca

Entitiesper tipo. - Converti

Tablesin un DataFrame Pandas o in un file CSV. - Inserisci

EntitieseFormFieldsin una tabella BigQuery. - Dividi un file PDF in base all'output di un'elaborazione di un separatore/classificatore.

- Estrai l'immagine

Entitiesdai riquadri di delimitazioneDocument. -

Converti

Documentsin e da formati di uso comune:- API Cloud Vision

AnnotateFileResponse - hOCR

- Formati di elaborazione di documenti di terze parti

- API Cloud Vision

- Crea batch di documenti da elaborare da una cartella Cloud Storage.

Esempi di codice

I seguenti esempi di codice mostrano come utilizzare Document AI Toolbox.