Dataplex Universal Catalog lets you define and measure the quality of the data in your BigQuery tables. You can automate the data scanning, validate data against defined rules, and log alerts if your data doesn't meet quality requirements. Auto data quality lets you manage data quality rules and deployments as code, improving the integrity of data production pipelines.

To scan data for anomalies, see Dataplex Universal Catalog data profile scan. The scan can generate data quality rules. You can also use predefined quality rules or build custom rules.

Dataplex Universal Catalog provides monitoring, troubleshooting, and Cloud Logging alerting that's integrated with auto data quality.

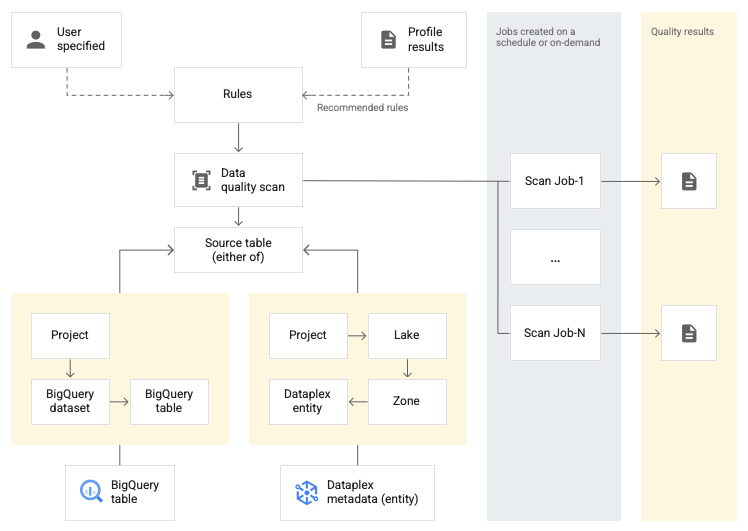

Conceptual model

A data scan is a Dataplex Universal Catalog job that samples data from

BigQuery and Cloud Storage and infers various types of

metadata. To measure the quality of a table using auto data quality, you create

a DataScan object of type data quality. The scan runs on only

one BigQuery table. The scan uses resources in a Google

tenant project, so you don't

need to set up your own infrastructure.

Creating and using a data quality scan consists of the following steps:

- Define data quality rules

- Configure rule execution

- Analyze data quality scan results

- Set up monitoring and alerting

- Troubleshoot data quality failures

Rule definition

Data quality rules associated with a data quality scan define data expectations. You can create data quality rules in the following ways:

- Use recommendations from Dataplex Universal Catalog data profiling

- Use the predefined rules

- Create custom SQL rules

Predefined rules

Dataplex Universal Catalog supports the following categories of predefined rules:

- Row-level

For row-level category rules, the expectation is applied against each data row. Each row independently passes or fails the condition. For example,

column_A_value < 1.Row-level checks require you to specify a passing threshold. When the percentage of rows passing the rule falls below the threshold value, the rule fails.

- Aggregate

For aggregate rules, the expectation is applied against a single value aggregated across the entire data. For example,

Avg(someCol) >= 10. To pass, the check must evaluate to the booleantrue. Aggregate rules don't provide an independent pass or fail count for each row.

For both of the rule categories, you can set the following parameters:

- The column to which the rule applies

- A dimension

The following table lists the supported row-level and aggregate rule types:

| Rule type (Name in Google Cloud console) |

Row-level or aggregate rule | Description | Supported column types | Rule-specific parameters |

|---|---|---|---|---|

RangeExpectation(Range Check) |

Row-level | Check if the value is between min and max. | All numeric, date, and timestamp type columns. | Required:

|

NonNullExpectation(Null check) |

Row-level | Validate that column values are not NULL. | All supported column types. | Required:

|

SetExpectation(Set check) |

Row-level | Check if the values in a column are one of the specified values in a set. | All supported column types, except Record and Struct. |

Required:

|

RegexExpectation(Regular expression check) |

Row-level | Check the values against a specified regular expression. | String | Required:

|

Uniqueness(Uniqueness Check) |

Aggregate | Check if all the values in a column are unique. | All supported column types, except Record and Struct. |

Required:

|

StatisticRangeExpectation(Statistic check) |

Aggregate | Check if the given statistical measure matches the range expectation. | All supported numeric column types. | Required:

|

Supported custom SQL rule types

SQL rules provide flexibility to expand the validation with custom logic. These rules come in the following types.

| Rule type | Row-level or aggregate rule | Description | Supported column types | Rule-specific parameters | Example |

|---|---|---|---|---|---|

| Row condition | Row-level | Specify an expectation for every row by defining a SQL

expression in a The expression can include a reference to another table, for example, to create referential integrity checks. |

All columns | Required:

|

grossWeight <= netWeight |

| Table condition (aggregate SQL expression) |

Aggregate | These rules are executed once per table. Provide a SQL expression that

evaluates to boolean The SQL expression can include a reference to another table using expression subqueries. |

All columns | Required:

|

Simple aggregate example: Using an expression subquery to compare values across a different table: |

| SQL assertion | Aggregate | An assertion rule uses a data quality query to find rows that fail one or more conditions specified in the query. Provide a SQL statement that is evaluated to return rows that match the invalid state. If the query returns any rows, the rule fails. Omit the trailing semicolon from the SQL statement. The SQL statement can include a reference to another table using expression subqueries. |

All columns | Required:

|

Simple aggregate example to make sure that Using an expression subquery to compare values across a different table: |

For example rules, see auto data quality sample rules.

For SQL functions supported, see GoogleSQL reference.

Dimensions

Dimensions let you aggregate the results of multiple data quality rules for monitoring and alerting. You must associate every data quality rule with a dimension. Dataplex Universal Catalog provides the following dimensions:

- Freshness

- Freshness measures when the data was last updated. Having this information can help you determine whether the data is recent enough to be useful.

- Volume

- Volume measures whether all of the expected data is present.

- Completeness

- Completeness assesses whether the data contains all of the information that's required for its intended purpose.

- Validity

- Validity evaluates whether the data conforms to predefined standards for

format, acceptable ranges, or other criteria. For example, if a valid date needs

to have the format

YYYY/mm/dd, then 08-12-2019 is invalid data. As another example, if a valid sales price for an item is between $10 and $20, then a sales price of $100 is invalid data. - Consistency

- Consistency refers to having the same values for data across multiple instances, such as tables and columns. Inconsistency in data arises when, for example, the revenue of a product differs when it is read from a sales database or a usage database.

- Accuracy

- Accuracy reflects the correctness of the data. Note that data that is valid isn't necessarily accurate. For example, a valid hair color might be brown, but if a person doesn't have brown hair, that is inaccurate data.

- Uniqueness

- Uniqueness measures whether the data is distinct with no duplicates.

Typed input in rules

All value parameters are passed as string values to the API. Dataplex Universal Catalog requires inputs to follow the BigQuery specified format.

Binary-typed parameters can be passed as a base64-encoded string.

| Type | Supported formats | Examples |

|---|---|---|

| Binary | Base64 encoded value | YXBwbGU= |

| Timestamp | YYYY-[M]M-[D]D[( |T)[H]H:[M]M:[S]S[.F]] [time_zone] OR YYYY-[M]M-[D]D[( |T)[H]H:[M]M:[S]S[.F]][time_zone_offset] |

2014-09-27 12:30:00.45-08 |

| Date | YYYY-M[M]-D[D] | 2014-09-27 |

| Time | [H]H:[M]M:[S]S[.DDDDDD] | 12:30:00.45 |

| DateTime | YYYY-[M]M-[D]D [[H]H:[M]M:[S]S[.DDDDDD]] | 2014-09-27 12:30:00.45 |

Data reference parameter

When you create a custom SQL rule, you can refer to a data source table and all

of its precondition filters by using the data reference parameter ${data()} in

the rule, instead of explicitly mentioning the source table and its filters.

Dataplex Universal Catalog interprets the parameter as a reference to the source

table and its filters. Examples of precondition filters include row filters,

sampling percents, and incremental filters.

For example, say that you have a data source table called

my_project_id.dim_dataset.dim_currency. You want to run an incremental data

quality scan that scans only on new daily data. A row filter that filters for

today's entries, transaction_timestamp >= current_date(), is applied on the

table.

A custom SQL rule to find rows with discount_pct for today looks like this:

discount_pct IN (SELECT discount_pct FROM my_project_id.dim_dataset.dim_currency WHERE transaction_timestamp >= current_date())

If you use the data reference parameter, you can simplify the rule. Replace the

mention of the table and its precondition filters with the ${data()}

parameter:

discount_pct IN (SELECT discount_pct FROM ${data()})

Dataplex Universal Catalog interprets the ${data()} parameter as a

reference to the data source table with today's entries,

my_project_id.dim_dataset.dim_currency WHERE transaction_timestamp >= current_date().

In this example, the data reference parameter refers only to the incremental

data.

The ${data()} parameter is case sensitive.

When you use an alias within a subquery to refer to columns in the source table,

either use the data reference parameter to refer to the source table, or omit

the table reference. Don't refer to the columns in the source table by using a

direct table reference in the WHERE clause.

Recommended:

Use the data reference parameter to refer to the source table:

discount_pct IN ( SELECT discount_pct FROM `my_project_id.dim_dataset.dim_currency` AS temp-table WHERE temp-table.transaction_timestamp = ${data()}.timestamp )Omit the table reference:

discount_pct IN ( SELECT discount_pct FROM `my_project_id.dim_dataset.dim_currency` AS temp-table WHERE temp-table.transaction_timestamp = timestamp )

Not recommended:

Don't use a direct table reference to refer to columns in the source table:

discount_pct IN ( SELECT discount_pct FROM `my_project_id.dim_dataset.dim_currency` AS temp-table WHERE temp-table.transaction_timestamp = `my_project_id.dim_dataset.dim_currency`.timestamp )

Rule execution

You can schedule data quality scans to run at a specific interval, or you can run a scan on demand.

When you run a data quality scan, Dataplex Universal Catalog creates a job. As part of the specification of a data quality scan, you can specify the scope of a job to be one of the following:

- Full Table

- Each job validates the entire table.

- Incremental

- Each job validates incremental data. To

determine increments, provide a

Date/Timestampcolumn in the table that can be used as a marker. Typically, this is the column on which the table is partitioned.

Filter data

You can filter data to be scanned for data quality by using a row filter. Creating a row filter lets you focus on data within a specific time period or specific segment, such as a certain region. Using filters can reduce the run time and cost. For example, you can filter out data with a timestamp before a certain date.

Sample data

You can specify a percentage of records from your data to sample for running a data quality scan. Creating data quality scans on a smaller sample of data can reduce the run time and the cost relative to querying the entire dataset.

Data quality scan results

The results of your data quality scans are available in Dataplex Universal Catalog and BigQuery. You can also review and analyze the scan results by using the following methods:

Export results to BigQuery

You can export the scan results to a BigQuery table for further analysis. To customize reporting, you can connect the BigQuery table data to a Looker dashboard. You can build an aggregated report by using the same results table across multiple scans.

Publish results as Dataplex Universal Catalog metadata

You can publish the data quality scan results as Dataplex Universal Catalog metadata. The latest results are saved to the Dataplex Universal Catalog entry that represents the source table, under the

data-quality-scorecardsystem aspect type. You can view the results on the source table's BigQuery and Dataplex Universal Catalog pages in the Google Cloud console, on the Data quality tab. You can also retrieve the results by using the API.For more information about Dataplex Universal Catalog metadata, see About metadata management in Dataplex Universal Catalog.

Review data quality scores

Each scan result provides data quality scores that indicate the percentage of rules that passed. The scores are reported at the overall job level, the column level (if the rule is evaluated against a column), and the dimension level. Use the data quality scores to normalize data quality across tables or columns, track trends, and identify data that doesn't meet quality requirements.

For more information, see View the data quality scan results.

Monitoring and alerting

You can monitor and get alerts about data quality scans by using the following methods:

Set alerts in Cloud Logging

You can monitor the data quality jobs using the

data_scananddata_quality_scan_rule_resultlogs in the Logs Explorer.For each data quality job, the

data_scanlog with thedata_scan_typefield set toDATA_QUALITYcontains the following information:- Data source used for the data scan.

- Job execution details, such as creation time, start time, end time, and job state.

- Result of the data quality job: pass or fail.

- Dimension level pass or fail.

Every succeeded job contains a

data_quality_scan_rule_resultlog with the following detailed information about each rule in that job:- Configuration information, such as rule name, rule type, evaluation type, and dimension.

- Result information, such as pass or failure, total row count, passing row count, null row count, and evaluated row count.

The information in the logs is available through the API and Google Cloud console. You can use this information to set up alerts. For more information, see Set alerts in Logging.

Send email notification reports

You can send email notification reports to alert people about the status and results of a data quality job. Notification reports are available for the following scenarios:

- The data quality score is lower than a specified target score

- The job failed

- The job finished

You configure notification reports when you create a data quality scan.

Troubleshoot data quality failures

When a rule fails, Dataplex Universal Catalog produces a query to get the failed records. Run this query to see the records that did not match your rule. For more information, see Troubleshoot a data quality failure.

Limitations

- Rule recommendations aren't supported in the gcloud CLI.

- The choice of dimensions is fixed to one of the predefined seven dimensions.

- The number of rules per data quality scan is limited to 1000.

- Data quality scores that are reported at the column level are supported only in the API.

Pricing

For more information about pricing, see Dataplex Universal Catalog pricing.

What's next?

- Learn how to use auto data quality.

- Learn about data profiling.

- Learn how to use data profiling.