
This page describes how to group tasks in your Airflow pipelines using the following design patterns:

- Group tasks in the DAG graph.

- Trigger child DAGs from a parent DAG.

- Group tasks with the `TaskGroup` operator.

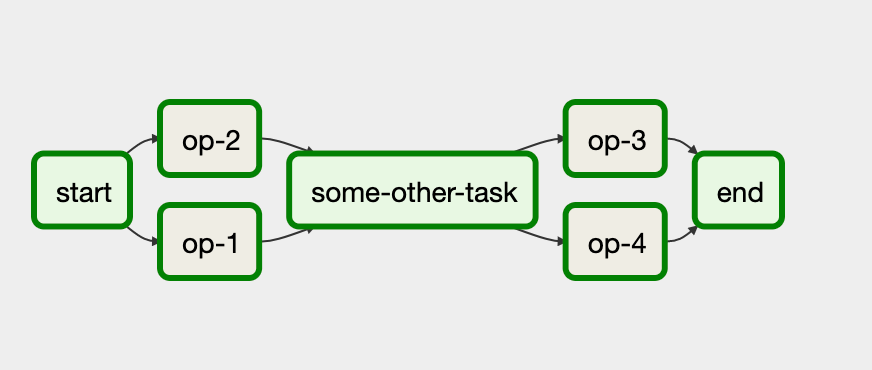

Group tasks in the DAG graph

To group tasks in certain phases of your pipeline, you can use relationships between the tasks in your DAG file.

Consider the following example:

In this workflow, the tasks `op-1` and `op-2` run after the initial task `start`. You achieve this by grouping the tasks with the statement `start >> [task_1, task_2]`, where `task_1` and `task_2` are the Python variables that hold the tasks `op-1` and `op-2`.

The following example provides a complete implementation of this DAG:

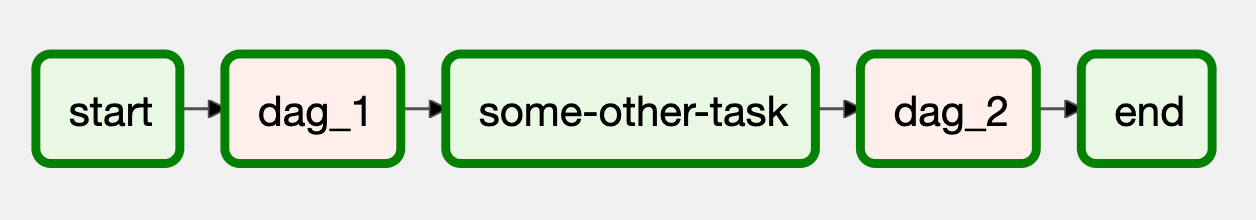

Trigger child DAGs from a parent DAG

You can trigger one DAG from another DAG with the `TriggerDagRunOperator`.

Consider the following example:

In this workflow, the blocks `dag_1` and `dag_2` each represent a series of tasks that are grouped together in a separate DAG in the Cloud Composer environment.

Implementing this workflow requires two separate DAG files. The controlling DAG file looks like this:

The implementation of the child DAG, which the controlling DAG triggers, looks like this:

For the workflow to run, you must upload both DAG files to your Cloud Composer environment.

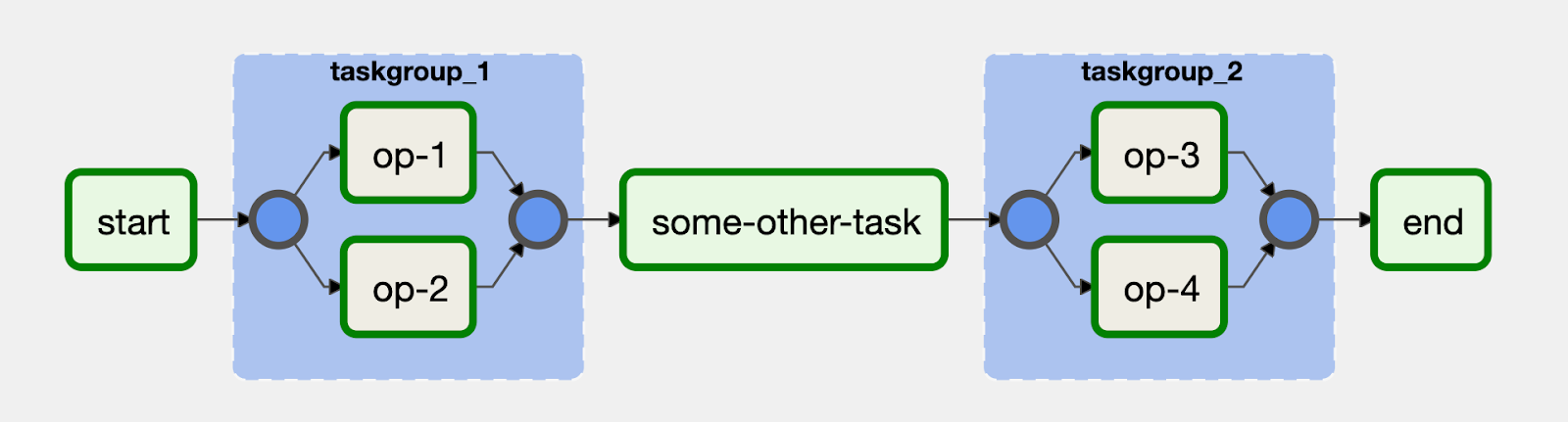

Group tasks with the TaskGroup operator

You can use the `TaskGroup` operator to group tasks together in your DAG. Tasks defined within a `TaskGroup` block are still part of the main DAG.

Consider the following example:

The tasks `op-1` and `op-2` are grouped together in a block with the ID `taskgroup_1`. An implementation of this workflow looks like this: