Take charge of your sensitive data with the Cloud Data Loss Prevention (DLP) API

Scott Ellis

Senior Product Manager

This week, we announced the general availability of the Cloud Data Loss Prevention (DLP) API, a Google Cloud security service that helps you discover, classify and redact sensitive data at rest and in real time.

When it comes to properly handling sensitive data, the first step is knowing where it exists in your data workloads. This not only helps enterprises more tightly secure their data, it’s a fundamental component of reducing risk in today’s regulatory environment, where the mismanagement of sensitive information can come with real costs.

The DLP API is a flexible and robust tool that helps identify sensitive data like credit card numbers, social security numbers, names and other forms of personally identifiable information (PII). Once you know where this data lives, the service gives you the option to de-identify that data using techniques like redaction, masking and tokenization. These features help protect sensitive data while allowing you to still use it for important business functions like running analytics and customer support operations. On top of that, the DLP API is designed to plug into virtually any workload—whether in the cloud or on-prem—so that you can easily stream in data and take advantage of our inspection and de-identification capabilities.

In light of data privacy regulations like GDPR, it’s important to have tools that can help you uncover and secure personal data. The DLP API is also built to work with your sensitive workloads and is supported by Google Cloud’s security and compliance standards. For example, it’s a covered product under our Cloud HIPAA Business Associate Agreement (BAA), which means you can use it alongside our healthcare solutions to help secure PII.

To illustrate how easy it is to plug DLP into your workloads, we’re introducing a new tutorial that uses the DLP API and Cloud Functions to help you automate the classification of data that’s uploaded to Cloud Storage. This function uses DLP findings to determine what action to take on sensitive files, such as moving them to a restricted bucket to help prevent accidental exposure.

In short, the DLP API is a useful tool for managing sensitive data—and you can take it for a spin today for up to 1 GB at no charge. Now, let’s take a deeper look at its capabilities and features.

Identify sensitive data with flexible predefined and custom detectors

Backed by a variety of techniques including machine learning, pattern matching, mathematical checksums and context analysis, the DLP API provides over 70 predefined detectors (or “infotypes”) for sensitive data like PII and GCP service account credentials. You can also define your own custom types using:

- Dictionaries — find new types or augment the predefined infotypes

- Regex patterns — find your own patterns and define a default likelihood score

- Detection rules — enhance your custom dictionaries and regex patterns with rules that can boost or reduce the likelihood score based on nearby context or indicator hotwords like “banking,” “taxpayer,” and “passport.”
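
To make the interaction between a regex pattern, a default likelihood and a hotword rule more concrete, here is a minimal local sketch in Python. It does not call the DLP API and is not how the service is implemented; the account-number pattern, the 30-character window and the one-step boost are all assumptions chosen for illustration.

```python
import re

# Ordered likelihood levels, used here only to illustrate a likelihood score.
LIKELIHOODS = ["VERY_UNLIKELY", "UNLIKELY", "POSSIBLE", "LIKELY", "VERY_LIKELY"]

# Hypothetical custom detector: any standalone 9-digit number.
ACCOUNT_PATTERN = re.compile(r"\b\d{9}\b")
DEFAULT_LIKELIHOOD = "POSSIBLE"

# Hypothetical detection rule: boost a match when a hotword appears just before it.
HOTWORDS = re.compile(r"\b(banking|taxpayer|passport)\b", re.IGNORECASE)
WINDOW_BEFORE = 30  # characters of context inspected before each match (assumed)


def find_accounts(text):
    """Return (match, likelihood) pairs, boosting matches that follow a hotword."""
    findings = []
    for m in ACCOUNT_PATTERN.finditer(text):
        context = text[max(0, m.start() - WINDOW_BEFORE):m.start()]
        level = LIKELIHOODS.index(DEFAULT_LIKELIHOOD)
        if HOTWORDS.search(context):
            # Raise the likelihood by one step, capped at the highest level.
            level = min(level + 1, len(LIKELIHOODS) - 1)
        findings.append((m.group(), LIKELIHOODS[level]))
    return findings
```

In this sketch, a 9-digit number that follows “Taxpayer ID:” is reported one level higher than the same pattern appearing after unrelated text such as an order number.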

Stream data from virtually anywhere

Are you building a customer support chat app and want to make sure you don’t inadvertently collect sensitive data? Do you manage data that’s on-prem or stored on another cloud provider? The DLP API “content” mode allows you to stream data from virtually anywhere. This is a useful feature for working with large batches to classify or dynamically de-identify data in real time. With content mode, you can scan data before it’s stored or displayed, and control what data is streamed where.

Native discovery for Google Cloud storage products

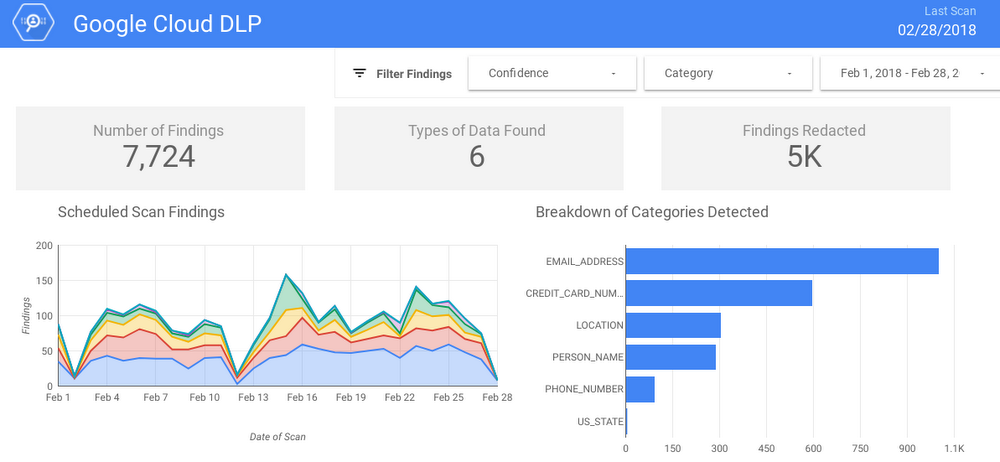

The DLP API has native support for data classification in Cloud Storage, Cloud Datastore and BigQuery. Just point the API at your Cloud Storage bucket or BigQuery table, and we handle the rest. The API supports:

- Periodic scans — trigger a scan job to run daily or weekly

- Notifications — launch jobs and receive Cloud Pub/Sub notifications when they finish; this is great for serverless workloads using Cloud Functions

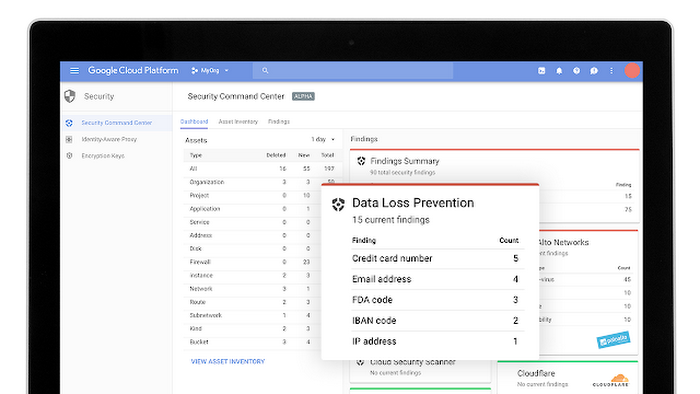

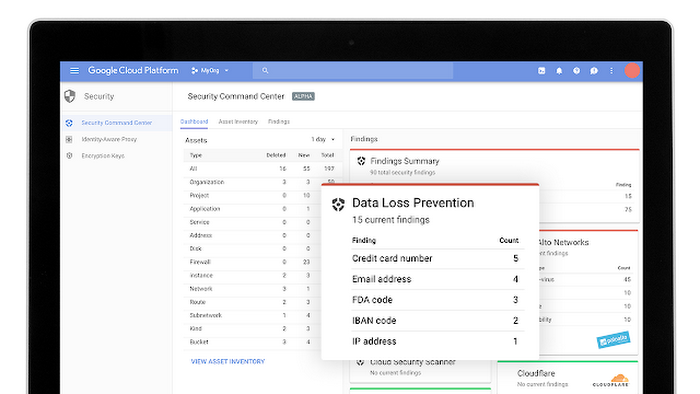

- Integration with Cloud Security Command Center (alpha)

- SQL data analysis — write the results of your DLP scan into the BigQuery dataset of your choice, then use the power of SQL to analyze your findings. You can build custom reports in Google Data Studio or export the data to your preferred data visualization or analysis system.

Redact data from free text and structured data at the same time

With the DLP API, you can stream unstructured free text, use our powerful classification engine to find different sensitive elements and then redact them according to your needs. You can also stream in tabular text and redact it based on the record types or column names. Or do both at the same time, while keeping integrity and consistency across your data. For example, a social security number that appears in both a comment field and a structured column generates the same token or hash in both places.

Extend beyond redaction with a full suite of de-identification tools

From simple redaction to more advanced format-preserving tokenization, the DLP API offers a variety of techniques to help you redact sensitive elements from your data while preserving its utility. Below are a few supported techniques:

| Transformation type | Description |
| --- | --- |
| Replacement | Replaces each input value with infoType name or a user customized value |
| Redaction | Redacts a value by removing it |
| Mask or partial mask | Masks a string either fully or partially by replacing a given number of characters with a specified fixed character |
| Pseudonymization with cryptographic hash | Replaces input values with a string generated using a given data encryption key |
| Pseudonymization with format preserving token | Replaces an input value with a “token,” or surrogate value, of the same length using format-preserving encryption (FPE) with the FFX mode of operation |
| Bucket values | Masks input values by replacing them with “buckets,” or ranges within which the input value falls |
| Extract time data | Extracts or preserves a portion of dates or timestamps |
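
As a rough illustration of three of these transformation types (partial masking, bucketing and keyed-hash pseudonymization), the following Python sketch applies them locally. It is not the DLP API and does not reproduce its output formats: the function names and parameters are hypothetical, and HMAC-SHA256 is used here only as a stand-in for "a string generated using a given key".

```python
import hashlib
import hmac


def mask(value, keep_last=4, mask_char="#"):
    """Partially mask a string, leaving the last `keep_last` characters visible."""
    if keep_last <= 0:
        return mask_char * len(value)
    hidden = max(len(value) - keep_last, 0)
    return mask_char * hidden + value[hidden:]


def bucket(value, width=10):
    """Replace a non-negative integer with the range ("bucket") it falls into."""
    low = (value // width) * width
    return f"{low}-{low + width - 1}"


def pseudonymize(value, key):
    """Replace a value with a keyed hash (HMAC-SHA256), truncated to 16 hex chars."""
    digest = hmac.new(key, value.encode("utf-8"), hashlib.sha256).hexdigest()
    return digest[:16]
```

For example, `mask("4111111111111111")` returns `"############1111"` and `bucket(37)` returns `"30-39"`. Because `pseudonymize` is deterministic for a given key, the same social security number yields the same surrogate whether it was found in free text or in a structured column, which is the consistency property described earlier. Unlike format-preserving encryption, this stand-in does not keep the length or character set of the input.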

The Cloud DLP API can also handle standard bitmap images such as JPEGs and PNGs. Using optical character recognition (OCR) technology, the DLP API analyzes the text in images to return findings or generate a new image with the sensitive findings blocked out.

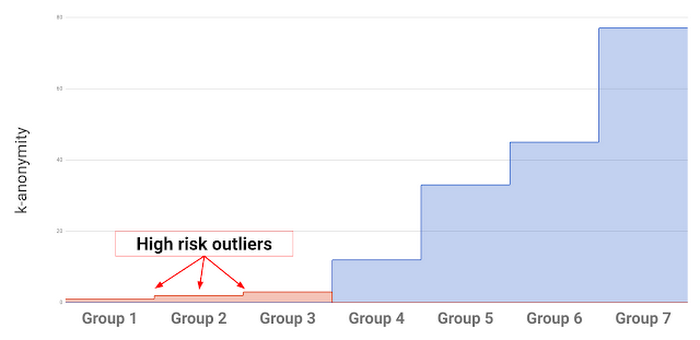

Measure re-identification risk with k-anonymity and l-diversity

Not all sensitive data is immediately obvious like a social security number or credit card number. Sometimes you have data where only certain values or combinations of values identify an individual. For example, a field containing an employee’s job title doesn’t identify most employees, but it does single out individuals with unique job titles like “CEO,” where only one employee holds the title. Combined with other fields such as company, age or zip code, such a value can lead you to a single, identifiable individual. To help you better understand these kinds of quasi-identifiers, the DLP API provides a set of statistical risk analysis metrics. For example, risk metrics such as k-anonymity can help identify these outlier groups and give you valuable insights into how you might want to further de-identify your data, perhaps by removing rows or bucketing fields.
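
The idea behind k-anonymity can be sketched in a few lines of Python: group rows by their quasi-identifier values and take the size of the smallest group as k. This is a simplified local calculation on made-up sample data, not the DLP API's risk analysis job, and the column names are hypothetical.

```python
from collections import Counter


def k_anonymity(rows, quasi_identifiers):
    """Return (k, group_sizes), where k is the size of the smallest group of
    rows that share the same quasi-identifier values. Expects at least one row."""
    groups = Counter(tuple(row[q] for q in quasi_identifiers) for row in rows)
    return min(groups.values()), groups


# Made-up sample data for illustration only.
employees = [
    {"title": "Engineer", "age_range": "30-39", "zip": "94043"},
    {"title": "Engineer", "age_range": "30-39", "zip": "94043"},
    {"title": "Engineer", "age_range": "30-39", "zip": "94043"},
    {"title": "CEO", "age_range": "50-59", "zip": "94043"},
]

k, groups = k_anonymity(employees, ["title", "age_range", "zip"])
# Groups of size 1 point at a single, potentially identifiable individual.
outliers = [values for values, size in groups.items() if size == 1]
```

Here `k` is 1 because the “CEO” row is unique, so `outliers` contains only that combination. Removing that row, or generalizing the title field, would raise k to 3 for this sample.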

Integrate the DLP API into your workloads across the cloud ecosystem

The DLP API is built to be flexible and scalable, and includes several features to help you integrate it into your workloads, wherever they may be.

- DLP templates — Templates allow you to configure and persist how you inspect your data and define how you want to transform it. You can then simply reference the template in your API calls and workloads, allowing you to easily update templates without having to redeploy new API calls or code.

- Triggers — Triggers allow you to set up jobs to scan your data on a periodic basis, for example, daily, weekly or monthly.

- Actions — When a large scan job is done, you can configure the DLP API to send a notification with Cloud Pub/Sub. This is a great way to build a robust system that plays well within a serverless, event-driven ecosystem.

Sensitive data is everywhere, but the DLP API can help make sure it doesn’t go anywhere it’s not supposed to. Watch this space for future blog posts that show you how to use the DLP API for specific use cases.