Bridging the gap between data and insights

Sudhir Hasbe

Sr. Director of Product Management, Google Cloud

We’re constantly working with our Google Cloud analytics customers—from analytics customers like GO-JEK and Ocado or Fortune 500 companies like Home Depot and HSBC—to understand their needs. And much of what we've learned has had a direct impact on our data analytics platform.

Today, we want to share a number of updates that will make data analytics easier and more accessible to all businesses. Our goal is to help you focus on data analysis instead of infrastructure management, give you the freedom to orchestrate workloads across clouds, use machine-learning in a way that's integrated with your data analytics operations, and take advantage of open source data processing innovation.

Here’s what we’re announcing:

- BigQuery ML, available now in beta

- BigQuery Clustering, in beta

- BigQuery GIS, in public alpha

- A Sheets data connector for BigQuery, in beta

- Data Studio Explorer, in beta

- Cloud Composer, now generally available

- Customer Managed Encryption Keys for Dataproc (generally available for BigQuery, and beta for Compute Engine and Cloud Storage)

- Streaming analytics updates, including Dataflow Streaming Engine and Python Streaming, in beta, and Dataflow Shuffle for batch data, now generally available

- Dataproc Autoscaling and Dataproc Custom Packages, in alpha

Introducing BigQuery ML: Bringing machine learning closer to your data

All businesses generate data, but only some adopt machine learning to truly understand it. And there are many reasons why. Data analysts, proficient in SQL, don’t always have proficiency in programming languages like R or Python, or a deep understanding of feature engineering, model selection, and hypertuning processes. Hiring a team of data scientists to build predictive analytics solutions can be prohibitively expensive. And moving data to and from an enterprise data warehouse can be complex, time-consuming, and costly.

Today we’re announcing BigQuery ML to address these challenges. With BigQuery ML, data scientists and data analysts can build and deploy machine learning models on massive, structured or semi-structured datasets directly inside BigQuery using simple SQL statements. This means they can perform predictive analytics like forecasting sales and creating customer segments right at the source, where they already store their data. And all of this is possible within a fraction of the time associated with traditional ML systems.

Many of our customers say they’re excited by the workflows they’re starting to run on BigQuery ML.

“BigQuery ML empowers our data analysts and statisticians to perform predictive analytics. It expands our workforce to come up with new and innovative ideas when developing machine learning models,” says Ayin Vala, Chief Data Scientist, Foundation for Precision Medicine (FPM). “In our organization, it is now the fastest way to build an ML model, and the fastest way to run it on our large datasets.” (You can find a video on FPM’s use of BigQuery ML here.)

"We've been using BigQuery to analyze multiple data sources, including subscription data, customer service data, browsing data, newsletter usage,” says Naveed Ahmad, Sr Director Data Engineering & Machine Learning, Hearst. “With BigQuery ML, we are able to quickly build and use machine learning models for our customer and content optimization. What would have taken months is now taking days, as it doesn't require learning complex machine learning concepts or setting up multiple tools."

We’ll offer a deeper look at BigQuery ML, and what that can mean for businesses, in the coming weeks. In the meantime, you can learn more on our website, or give BigQuery ML, currently in beta, a spin today.

Enhancing BigQuery to help you get more from your data

Offering expanded functionality through familiar tools can help data scientists and data analysts do more with data. Here are a few new enhancements we’re bringing to BigQuery.

BigQuery clustering is now in beta

Whether analyzing ad impressions, IoT device data, gaming events, or point-of-sale transactions, BigQuery users expect fast analytics on large datasets. And now users can create clustered tables in BigQuery as an additional, easy layer of data optimization. Defining clustering keys on high-cardinality fields dramatically accelerates query performance, makes queries cheaper, and improves query efficiency for a broad range of queries.

In clustered tables, rows with similar cluster keys are bunched together, so that BigQuery can query the data more efficiently and charge only for the data that BigQuery scanned, rather than the entire table or partition.

Clustering will roll out to all BigQuery users over the course of the next several days.

New geospatial data types and functions with BigQuery GIS

Geospatial data is a critical element of modern IoT, telematics, retail, and manufacturing workflows. Accordingly, we partnered with the Google Earth Engine team on BigQuery GIS (geographic information systems) to integrate geospatial data types and functions as first class citizens within BigQuery. Our implementation, currently in alpha, uses the S2 library, which now has over a billion users through products such as Google Earth Engine and Google Maps.

Our new functions and data types follow the SQL/MM Spatial standard and will be familiar to PostGIS users and anyone already doing geospatial analysis in SQL. This makes workload migrations to BigQuery easier. We also support WKT and GeoJSON, so getting data in and out to your other GIS tools will be easy.

Another benefit from the Earth Engine partnership is our collaboration on a lightweight visualization tool called BigQuery Geo Viz. This is a companion app designed for BigQuery users that want to plot and style their geospatial query results on a map.

Using the BigQuery Geo Viz view and the New York Citibike Public Dataset, we can quickly map bike availability and station capacity across the city.

BigQuery GIS and BigQuery Geo Viz are in public alpha now. To request access to both, please fill out this form. We’ll whitelist your GCP projects and send you the BigQuery GIS documentation.

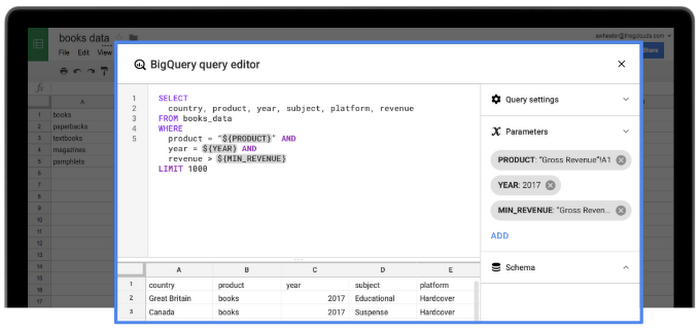

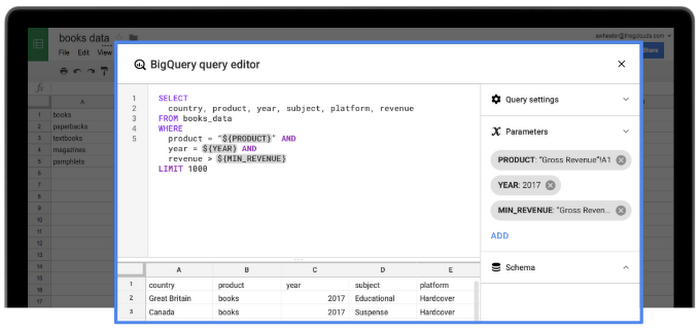

Google Sheets data connector for BigQuery

To help customers access data insights without leaving their familiar, spreadsheet-based workflows, we’re introducing the Sheets data connector for BigQuery, in beta. With it, you can directly access and refresh data in BigQuery from Sheets. You can also take advantage of Sheets tools like Explore to generate suggested charts and pivot tables, easily get the answers you need by asking questions in plain English, and collaborate more seamlessly with your business partners.

You can learn more here.

Visually explore BigQuery data in Google Data Studio

Because seeing the bigger picture is critical to finding insights and honing your analyses, we’re introducing an even deeper integration between BigQuery and Google Data Studio, which enables users to rapidly visualize their query results. In one click from BigQuery’s new UI, users can access the Data Studio Explorer space and immediately start evaluating their queried data. From there, you can create a report and join it with virtually any other data, or start collaborating on a dashboard in real time.

Upgraded capabilities to make real-time insights easier

For many businesses, the ability to collect, analyze and act upon data in real time presents powerful advantages. Google Cloud’s stream analytics offering, comprised of Cloud Pub/Sub and Cloud Dataflow, delivers a serverless experience that scales seamlessly based on your business’s demands. A range of customers use Google Cloud’s stream analytics solution to drive business value. For example, Brightcove creates over seven billion analytics events per day to better understand videos and users. Traveloka powers fraud detection and personalization as it offers customers over 200k travel routes and over 40 payment options. And Wix generates real-time dashboards for nearly 90 million users as a key business differentiator.

We’re continuously working to make our stream analytics solution easier to use and more efficient. With that, we’ve extended the solution in the following ways:

- Dataflow Streaming Engine (beta) gives streaming customers more responsive autoscaling on fewer resources, with improved supportability, by separating compute and state storage, and introducing a streaming shuffle in the middle.

- Dataflow Shuffle (GA) helps customers run faster batch jobs and scale to hundreds of terabytes through a distributed in-memory shuffle engine that connects compute and state storage.

- Python Streaming (beta) delivers the capability to author stream processing jobs in Apache Beam via Python, one of the most popular programming languages, opening our stream analytics service to a broader set of users.

Learn more about our stream analytics solution and customer stories here.

Embracing our commitment to open source data projects

Much of the broader data analytics community have hard-earned skills tied to the Apache Hadoop and Apache Spark open source projects. Cloud Dataproc enables users to apply their expertise in these projects while taking advantage of an infrastructure that provides true elasticity through per-second billing on ephemeral clusters that can spin up within 90 seconds.

We’ve introduced new features to allow users to get even more utility from their Hadoop and Spark distributions through Dataproc:

- Dataproc Autoscaling (alpha) gives users Hadoop and Spark clusters that scale automatically based on the resource requirements of submitted jobs, delivering a serverless experience.

- Dataproc Custom Packages (alpha) allows customers to deploy a selection of top-level Apache components quickly through a checkbox-like selection experience within Dataproc.

- Customer Managed Encryption Keys give Dataproc customers the ability to create, use, and revoke key encryption for BigQuery (GA), Compute Engine (beta), and Cloud Storage (beta).

- Hortonworks support for GCP yields the ability to run HDP and HDF on GCP with Cloud Storage serving as the data lake. This gives customers the freedom to run the distribution that their employees are most familiar with.

- Managed Apache Kafka from Confluent provides GCP users with a managed version to the popular open source messaging framework.

Earlier this year, we announced the beta release of Cloud Composer, a fully managed workflow orchestration service that lets you author, schedule, and monitor pipelines within Google Cloud, and across other public clouds or on-premises data centers. Based on the open source Apache Airflow project, Cloud Composer further demonstrates our commitment to open source software, and our commitment to helping our customers distribute their workloads across as many clouds as they need—even our competitors.

Cloud Composer is now generally available, and is already handling roughly 5 million tasks per month.

Looking forward, equipped with insights

Whether your aim is to solve global social problems with data, or simply forecast the next quarter, we want to help. From extending BigQuery’s abilities with new features like machine learning, to deepening our support for real-time, streaming analytics, to enhancing Data Studio to meet business intelligence demand, our goal is to give you the tools you need.

We’ll be sharing more in-depth information on all of these announcements in the coming weeks. In the meantime, you can learn more about data analytics solutions on Google Cloud on our website.