A solution for implementing industrial predictive maintenance: Part III

Prashant Dhingra

Machine Learning Lead, Advanced Solutions Lab, Google Cloud

Predictive maintenance helps businesses ensure that their equipment will last longer, and reduces downtime in manufacturing facilities by making production systems more reliable. In previous installments of this series (part I and part II), we established some reasons your business might wish to deploy predictive systems, and explained the sensor and data types typically fed into a predictive system. In this third and final post of this blog series, we’ll explain how we assemble a full predictive maintenance reference solution from Google Cloud Platform (GCP) products, including Cloud IoT Core and Cloud IoT Edge, big data and data processing tools like BigQuery and Cloud Dataflow, and machine learning platforms like Cloud ML Engine.

A predictive maintenance reference architecture

To demonstrate how to design and build a predictive maintenance solution on GCP, including the various architectural components and machine learning (ML) model components, we’ll use the example of a company that owns and maintains oil rigs. It plans to replace its “react and repair” practices with a “predictive maintenance” model using data collected by equipment suppliers about the usage and health status of their products.

The company decides to use a cloud-based predictive maintenance solution to analyze real-time sensor data from oil rigs, plus a pre-trained machine learning model to predict the remaining life of each machine and when that machine will fail. With such a model, the company can even avoid unscheduled downtime.

Figure 1: our overall reference architecture

The elements shown in this architecture and scenario are as follows:

Cloud IoT Core helps securely connect, manage, and ingest data from oil rigs around the world.

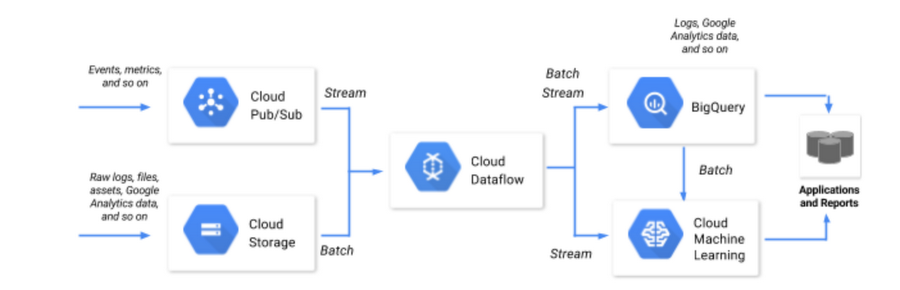

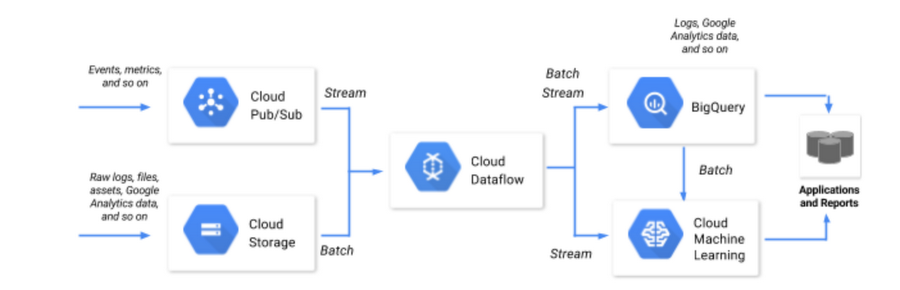

Cloud Pub/Sub ingests streaming event data and delivers the data to Cloud Dataflow.

Cloud Dataflow (a managed service) transforms the data and stores it in BigQuery.

BigQuery provides a serverless data warehouse environment to store and analyze the data, providing the architecture’s big data solution. You can run analytics on both batch and streaming data in BigQuery.

App Engine hosts the system’s visualization engine.

Cloud ML Engine permits you to both train and serve the machine learning model.

Cloud IoT Edge extends Google Cloud’s powerful data processing and machine learning to edge devices, such as robotic arms, wind turbines, and oil rigs, so they can act on the data from their sensors in real time and predict outcomes locally.

Training the predictive maintenance model with ML

With those elements in place, let’s look at how you can build your own predictive maintenance model on GCP.

In our architecture, the ML model predicts remaining life of a specific machine (a pump, perhaps) on the oil rig. It first trains on historical sensor data stored in BigQuery. As real-time data is streamed to the system, the model then predicts the remaining life of the machine.
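To make the training target concrete, here is a minimal sketch of how a remaining-life label could be derived from historical readings. It assumes each machine's history runs until failure, so the last observed cycle marks the failure point. The field names (`machine_id`, `cycle`, `vibration`) and the sample values are hypothetical and exist only for illustration; they are not part of the reference solution.

```python
from collections import defaultdict


def add_rul_labels(readings):
    """Attach a remaining-useful-life (RUL) label to each historical reading.

    Assumption: every machine's history is run-to-failure, so the highest
    cycle number seen for a machine is treated as its failure cycle.
    """
    failure_cycle = defaultdict(int)
    for reading in readings:
        machine = reading["machine_id"]
        failure_cycle[machine] = max(failure_cycle[machine], reading["cycle"])

    # RUL = cycles left between this reading and the machine's failure.
    return [
        dict(reading, rul=failure_cycle[reading["machine_id"]] - reading["cycle"])
        for reading in readings
    ]


# Made-up sample rows standing in for historical data queried from BigQuery.
history = [
    {"machine_id": "pump-1", "cycle": 1, "vibration": 0.21},
    {"machine_id": "pump-1", "cycle": 2, "vibration": 0.35},
    {"machine_id": "pump-1", "cycle": 3, "vibration": 0.80},
    {"machine_id": "pump-2", "cycle": 1, "vibration": 0.19},
    {"machine_id": "pump-2", "cycle": 2, "vibration": 0.77},
]
labeled = add_rul_labels(history)
```

A regression model would then be trained to predict `rul` from the sensor columns. If your history includes machines that have not failed yet, this simple labeling does not apply to them as written.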

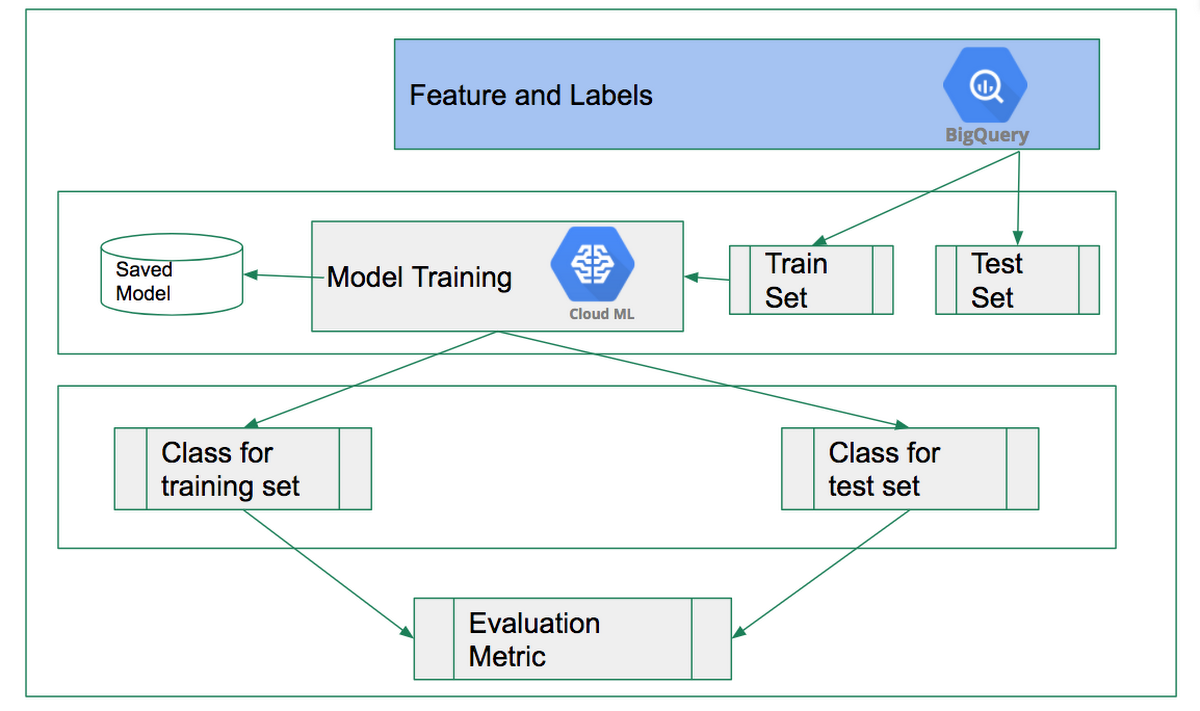

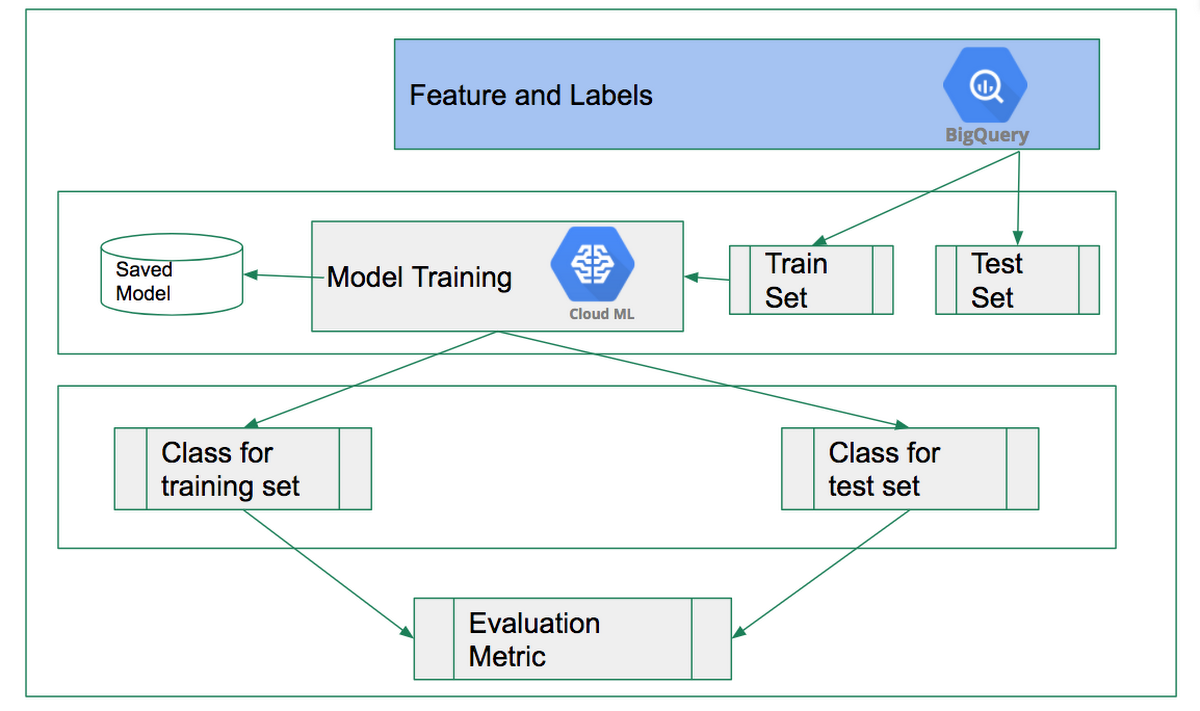

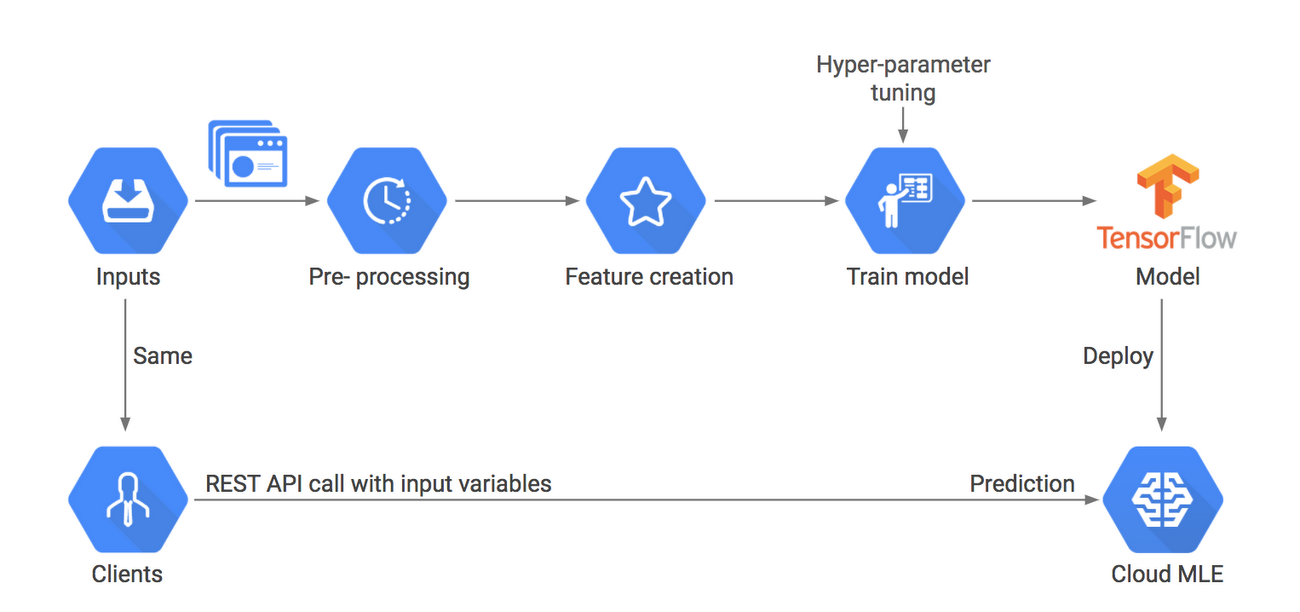

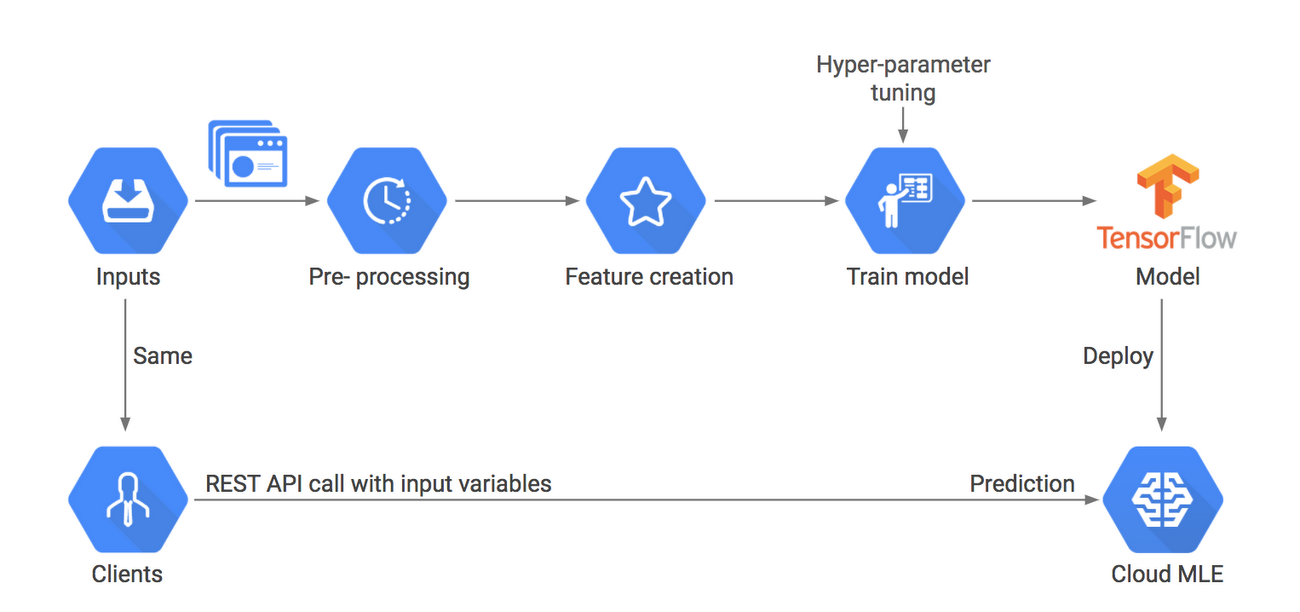

The diagrams below show the architecture and ML model presented in the demo above. For real-time data ingestion (online prediction), transformations are carried out before predictions are made. The preprocessing component should be shared between training and prediction so that the model receives identically transformed features in both phases, which helps preserve accuracy.

Figure 2: our reference architecture as it pertains to model training

Figure 3: model training and prediction reference architecture

An important component of this architecture is Cloud Dataflow, which can apply the same set of transformations to both historical data (for training) and streaming data (for predictions). Since Cloud Dataflow can stream into BigQuery, up-to-date data is available to applications and reports for agile decision making.
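The idea of one set of transformations serving both paths can be sketched in plain Python. This is not Cloud Dataflow code; it only illustrates the principle that the batch (training) path and the streaming (prediction) path call the same function. The sensor names and value ranges are assumptions made up for the example.

```python
import json

# Hypothetical sensor ranges used for min-max scaling (assumed values).
SENSOR_RANGES = {
    "temperature_c": (0.0, 150.0),
    "pressure_kpa": (0.0, 500.0),
}


def preprocess(record):
    """Scale each sensor value to [0, 1], clamping out-of-range readings."""
    features = {}
    for name, (low, high) in SENSOR_RANGES.items():
        scaled = (float(record[name]) - low) / (high - low)
        features[name] = min(1.0, max(0.0, scaled))
    return features


def training_features(historical_rows):
    """Batch path: transform rows read from the historical store."""
    return [preprocess(row) for row in historical_rows]


def serving_features(message_bytes):
    """Streaming path: decode one JSON message, then apply the same transform."""
    return preprocess(json.loads(message_bytes.decode("utf-8")))


batch = training_features([{"temperature_c": 75.0, "pressure_kpa": 250.0}])
online = serving_features(b'{"temperature_c": 75.0, "pressure_kpa": 250.0}')
```

Because both paths go through `preprocess`, the same raw reading yields the same features whether it arrives as a historical row or as a live message.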

Machine learning at the edge

Putting data in the cloud lets you take advantage of more complex processing and richer machine learning models, but in many scenarios a prediction is useful for only a few seconds after the data is generated. Credit card fraud detection, self-driving cars, and factory machinery all require almost instantaneous inference. In other scenarios, such as translation, speech, and IoT, inference at the edge delivers a more responsive user experience. There are also cases where network connectivity is inconsistent, as with airplanes and ships, and others where it is more cost-effective to make predictions at the edge than to send data over the network.

Edge computing—putting data processing power at the edge of a network instead of centralizing that processing power in a cloud or in a data warehouse—is a powerful concept, especially when it comes to IoT. With edge computing, you can process IoT data closer to the point of its creation, rather than sending it over long distances.

In the case of our oil rig, Cloud IoT Edge extends Google Cloud’s powerful data processing and machine learning to billions of edge devices such as robotic arms, wind turbines, and oil rigs, so they can act on the data from their sensors in real time and predict outcomes locally.

Edge computing, in particular, brings five potential advantages to manufacturing:

1. Faster response time: Avoiding a round trip to the cloud reduces latency and enables faster responses. This can help prevent critical machine operations from breaking down or hazardous incidents from taking place.

2. Reliable operations with intermittent connectivity: Remote assets such as oil wells, farm pumps, solar farms, or windmills often have unreliable internet connectivity, which makes monitoring difficult. Edge devices' ability to store and process data locally helps prevent data loss or operational failure when connectivity is limited.

3. Security and compliance: Because edge computing processes data close to where it is generated, you can eliminate a lot of excess data transfer between your devices and the cloud. It's possible to filter sensitive information locally and transmit only the information needed for model building to the cloud. This lets users build a security and compliance framework suited to enterprise security and audits.

4. Cost-effective solutions: One of the practical concerns around IoT is the upfront cost of network bandwidth, data storage, and computational power. With edge computing, you can perform a lot of data transformations locally, which allows businesses to decide which services to deploy or scale in the cloud.

5. The Edge TPU: In addition to Cloud IoT, GCP provides Edge TPU, an IoT-focused chip for the edge environment, plus Cloud IoT Edge, a software stack that extends Google Cloud’s AI capability to gateways and connected devices. Together, Edge TPU and Cloud IoT Edge allow you to build and train machine learning models in the cloud, then run them on Cloud IoT Edge devices while using an Edge TPU hardware accelerator.
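The local storage behavior described in item 2 can be sketched as a simple store-and-forward buffer. This is an illustrative pattern only, not Cloud IoT Edge's API: the `EdgeBuffer` class, the `send` callback, and the `fake_send` stand-in are all hypothetical names introduced for this example.

```python
from collections import deque


class EdgeBuffer:
    """Hold readings locally and forward them, in order, when the link is up."""

    def __init__(self, send, max_items=1000):
        # `send` is any callable that returns True when delivery succeeded.
        self._send = send
        # Bounded queue: once full, the oldest reading is dropped, so size
        # `max_items` for the longest outage you expect to ride out.
        self._pending = deque(maxlen=max_items)

    def record(self, reading):
        """Store a new reading, then try to deliver everything queued."""
        self._pending.append(reading)
        self.flush()

    def flush(self):
        """Send queued readings oldest-first; stop at the first failure."""
        while self._pending:
            if not self._send(self._pending[0]):
                break
            self._pending.popleft()

    def pending_count(self):
        return len(self._pending)


# Simulated uplink: starts offline, then comes back.
link = {"up": False}
delivered = []


def fake_send(reading):
    if link["up"]:
        delivered.append(reading)
        return True
    return False


buffer = EdgeBuffer(fake_send)
buffer.record({"seq": 1})  # link down: kept locally
buffer.record({"seq": 2})  # link down: kept locally
link["up"] = True
buffer.record({"seq": 3})  # link restored: backlog and new reading are sent
```

Readings taken while the link is down stay on the device and are delivered in their original order once connectivity returns. A production device would also persist the queue to disk so that a restart does not lose the backlog.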

Today’s manufacturing facilities, aircraft engines, and oil rigs are becoming complex industrial IoT systems with thousands of components, each of which must operate correctly for optimum performance. Performing predictive maintenance on those systems can provide significant cost savings and improve reliability. With new technologies such as IoT, big data, machine learning, and edge computing, you gain crucial visibility into production processes, and start to transform the world of machinery into one that is connected, integrated, and smart. To learn more, check out our IoT platform page.