This page shows how to configure the Seesaw load balancer for a GKE on-prem cluster.

GKE on-prem clusters can run with one of two load balancing modes: integrated or manual. To use the Seesaw load balancer, you must use manual load balancing mode.

Steps common to all manual load balancing configurations

Before you configure your Seesaw load balancer, perform the following steps, which are common to any manual load balancing configuration:

Reserving IP addresses and a VIP for Seesaw VMs

Reserve two IP addresses for a pair of Seesaw VMs. Also reserve a single VIP for the pair of Seesaw VMs. All three of these addresses must be on the same VLAN as your cluster nodes.
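A VLAN cannot be verified from the addresses alone. As a rough check, you can confirm that the three reserved addresses share your node CIDR prefix. The following is a minimal sketch that assumes your nodes use a single IPv4 subnet. The helper names ip_to_int and same_subnet are hypothetical, and the input is not validated.

```bash
#!/usr/bin/env bash
# Sketch (hypothetical helpers): check that addresses share an IPv4 prefix.
# Same subnet is only a practical proxy for "same VLAN"; it proves nothing
# about VLAN membership.

# ip_to_int converts a dotted-quad IPv4 address to a 32-bit integer.
ip_to_int() {
  local IFS=.
  local -a o
  read -r -a o <<< "$1"
  echo $(( (o[0] << 24) | (o[1] << 16) | (o[2] << 8) | o[3] ))
}

# same_subnet PREFIX_LEN ADDR1 ADDR2 [ADDR3 ...]
# Returns 0 if every address shares its first PREFIX_LEN bits with ADDR1.
same_subnet() {
  local prefix=$1; shift
  local mask=$(( (0xFFFFFFFF << (32 - prefix)) & 0xFFFFFFFF ))
  local first addr
  first=$(( $(ip_to_int "$1") & mask ))
  for addr in "$@"; do
    if (( ($(ip_to_int "$addr") & mask) != first )); then
      echo "not in the same /$prefix subnet: $addr" >&2
      return 1
    fi
  done
}
```

For example, `same_subnet 25 203.0.113.2 203.0.113.3 203.0.113.4` succeeds, because all three addresses fall in 203.0.113.0/25.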

Creating Seesaw VMs

Create two clean VMs in your vSphere environment to run a Seesaw active-passive pair. Ubuntu 18.04 with Linux kernel 4.15 is recommended for the Seesaw VMs, although Seesaw also works with other OS versions.

The two VMs must meet the following requirements:

- Each VM has a NIC named ens192. This NIC is configured with the IP address of the VM.
- Each VM has a NIC named ens224. This NIC does not need an IP address.
- The VMs are on the same VLAN as your cluster nodes.
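To check the NIC names on a VM, you could use a small helper like the following sketch. has_seesaw_nics is a hypothetical name. It reads interface names, one per line, on stdin and checks for ens192 and ens224. It does not check IP configuration or port group settings.

```bash
#!/usr/bin/env bash
# Sketch (hypothetical helper): verify that the NICs this page expects exist.
# Reads interface names on stdin, for example from `ls /sys/class/net`.
has_seesaw_nics() {
  local names missing=0 nic
  names=$(cat)
  for nic in ens192 ens224; do
    # -x: match the whole line, so "ens1920" does not count as "ens192".
    if ! grep -qx "$nic" <<< "$names"; then
      echo "missing NIC: $nic" >&2
      missing=1
    fi
  done
  return "$missing"
}
```

Usage on a Seesaw VM: `ls /sys/class/net | has_seesaw_nics`.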

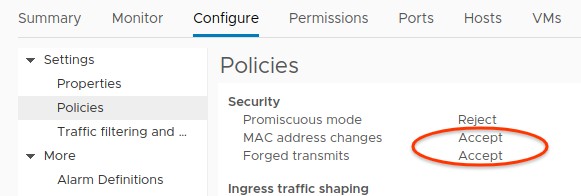

The port group of ens224 must allow MAC address changes and Forged transmits. This is because the Seesaw VMs use the Virtual Router Redundancy Protocol (VRRP), which means Seesaw must be able to configure the MAC addresses of network interfaces. In particular, Seesaw must be able to set the MAC address to a VRRP MAC address of the form 00-00-5E-00-01-[VRID], where [VRID] is a virtual router identifier for the Seesaw pair.

You can configure this in the vSphere user interface in the policy settings of the port group.

Allowing MAC address changes and Forged transmits
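In the VRRP MAC address, the last octet is the VRID written in hexadecimal. For example, VRID 128 becomes 00-00-5E-00-01-80. The following sketch derives the address from a VRID, which can help when you inspect interfaces or switch tables. vrrp_mac is a hypothetical helper name.

```bash
#!/usr/bin/env bash
# Sketch (hypothetical helper): print the VRRP virtual MAC for a VRID.
vrrp_mac() {
  # Accept only decimal digits so the arithmetic below is safe.
  if ! [[ $1 =~ ^[0-9]+$ ]]; then
    echo "VRID must be a decimal integer: $1" >&2
    return 1
  fi
  local vrid=$((10#$1))   # force base 10, so "08" is not read as octal
  # VRRP virtual router identifiers range from 1 to 255.
  if (( vrid < 1 || vrid > 255 )); then
    echo "VRID must be from 1 to 255: $1" >&2
    return 1
  fi
  # The VRID is the last octet of the virtual MAC, in two-digit hex.
  printf '00-00-5E-00-01-%02X\n' "$vrid"
}
```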

Compiling Seesaw

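To build the Seesaw binaries, run the following script on a machine that has Go, git, and make installed. The script installs packages with apt, so it assumes a Debian-based distribution such as Ubuntu: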

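```bash
#!/bin/bash
set -e

# Build output goes into ./seesaw_files; GOPATH is a temporary workspace inside it.
DEST=$PWD/seesaw_files
export GOPATH=$DEST/seesaw
SEESAW=$GOPATH/src/github.com/google/seesaw

mkdir -p "$GOPATH"
cd "$GOPATH"

# Netlink development libraries needed to build Seesaw.
sudo apt -y install libnl-3-dev libnl-genl-3-dev

git clone https://github.com/google/seesaw.git "$SEESAW"
cd "$SEESAW"
GO111MODULE=on GOOS=linux GOARCH=amd64 make install

# Collect the binaries and the watchdog configuration and unit files.
cp -r "$GOPATH/bin" "$DEST/"
mkdir -p "$DEST/cfg"
cp etc/seesaw/watchdog.cfg etc/systemd/system/seesaw_watchdog.service "$DEST/cfg/"

# Remove the temporary build workspace.
sudo rm -rf "$GOPATH"
```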

This generates a seesaw_files directory that holds the Seesaw binaries and configuration files. To see the files, run the tree command:

```bash
tree seesaw_files
```

The output shows the generated directories and files:

```
seesaw_files/
├── bin
│   ├── seesaw_cli
│   ├── seesaw_ecu
│   ├── seesaw_engine
│   ├── seesaw_ha
│   ├── seesaw_healthcheck
│   ├── seesaw_ncc
│   └── seesaw_watchdog
└── cfg
    ├── seesaw_watchdog.service
    └── watchdog.cfg

2 directories, 9 files
```
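If tree is not installed, or you want a scriptable check before copying the directory to the VMs, the following sketch checks for the nine files in the tree output above. check_seesaw_files is a hypothetical helper name. It only checks that the files exist, not that the binaries work.

```bash
#!/usr/bin/env bash
# Sketch (hypothetical helper): confirm the build produced the expected files.
check_seesaw_files() {
  local dir=${1:-seesaw_files} missing=0 f
  local -a expected=(
    bin/seesaw_cli bin/seesaw_ecu bin/seesaw_engine bin/seesaw_ha
    bin/seesaw_healthcheck bin/seesaw_ncc bin/seesaw_watchdog
    cfg/seesaw_watchdog.service cfg/watchdog.cfg
  )
  for f in "${expected[@]}"; do
    if [[ ! -f $dir/$f ]]; then
      echo "missing: $dir/$f" >&2
      missing=1
    fi
  done
  return "$missing"
}
```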

Exporting environment variables

Before you configure your Seesaw VMs, export the following environment variables on each of them:

- NODE_IP: The IP address of this Seesaw VM.
- PEER_IP: The IP address of the other Seesaw VM.
- NODE_NETMASK: The prefix length of the CIDR range for your cluster nodes.
- VIP: The VIP that you reserved for the Seesaw pair of VMs.
- VRID: The virtual router identifier that you want to use for the Seesaw pair. VRRP allows values from 1 to 255.

Here is an example:

```bash
export NODE_IP=203.0.113.2
export PEER_IP=203.0.113.3
export NODE_NETMASK=25
export VIP=203.0.113.4
export VRID=128
```
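A typo in these variables ends up silently in the generated configuration files. The following sketch runs a few basic checks first. check_seesaw_env is a hypothetical helper name. The checks are simple and do not fully parse IPv4 addresses.

```bash
#!/usr/bin/env bash
# Sketch (hypothetical helper): sanity-check the Seesaw environment variables.
check_seesaw_env() {
  local missing=0 v
  for v in NODE_IP PEER_IP NODE_NETMASK VIP VRID; do
    # ${!v} is bash indirect expansion: the value of the variable named by $v.
    if [[ -z ${!v:-} ]]; then
      echo "$v is not set" >&2
      missing=1
    fi
  done
  (( missing == 0 )) || return 1

  # The two VMs and the VIP are three separately reserved addresses.
  if [[ $NODE_IP == "$PEER_IP" || $VIP == "$NODE_IP" || $VIP == "$PEER_IP" ]]; then
    echo "NODE_IP, PEER_IP, and VIP must be three different addresses" >&2
    return 1
  fi
  if ! [[ $NODE_NETMASK =~ ^[0-9]+$ ]] || (( 10#$NODE_NETMASK > 32 )); then
    echo "NODE_NETMASK must be a prefix length from 0 to 32: $NODE_NETMASK" >&2
    return 1
  fi
  if ! [[ $VRID =~ ^[0-9]+$ ]] || (( 10#$VRID < 1 || 10#$VRID > 255 )); then
    echo "VRID must be an integer from 1 to 255: $VRID" >&2
    return 1
  fi
}
```

On the second VM of the example pair, NODE_IP and PEER_IP are swapped (NODE_IP=203.0.113.3, PEER_IP=203.0.113.2). NODE_NETMASK, VIP, and VRID are the same on both VMs.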

Configuring Seesaw VMs

Copy the seesaw_files directory to the two Seesaw VMs.

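On each Seesaw VM, run the following script from the directory that contains seesaw_files, because the script reads its files from ./seesaw_files. The script installs packages and writes under /etc, so run it as root. Run it in a shell where the environment variables above are exported. sudo resets the environment by default, so use a root shell, or sudo -E if your sudoers policy allows it.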

```bash
#!/bin/bash
# execute_seesaw.sh
# Run as root, with NODE_IP, PEER_IP, NODE_NETMASK, VIP, and VRID exported.
set -e

# Fail early if a required variable is missing, instead of writing
# empty values into the Seesaw configuration files below.
: "${NODE_IP:?}" "${PEER_IP:?}" "${NODE_NETMASK:?}" "${VIP:?}" "${VRID:?}"

# Tools
apt -y install ipvsadm libnl-3-dev libnl-genl-3-dev

# Kernel modules: load them now and on every boot
modprobe ip_vs
modprobe nf_conntrack_ipv4
modprobe dummy numdummies=1
echo "ip_vs" > /etc/modules-load.d/ip_vs.conf
echo "nf_conntrack_ipv4" > /etc/modules-load.d/nf_conntrack_ipv4.conf
echo "dummy" > /etc/modules-load.d/dummy.conf
echo "options dummy numdummies=1" > /etc/modprobe.d/dummy.conf

# Dummy interface (numdummies=1 may already have created dummy0)
ip link add dummy0 type dummy || true
cat > /etc/systemd/network/10-dummy0.netdev <<EOF
[NetDev]
Name=dummy0
Kind=dummy
EOF

# Install the binaries and the watchdog configuration
DIR=./seesaw_files
mkdir -p /var/log/seesaw
mkdir -p /etc/seesaw
mkdir -p /usr/local/seesaw
cp $DIR/bin/* /usr/local/seesaw/
chmod a+x /usr/local/seesaw/*
cp $DIR/cfg/watchdog.cfg /etc/seesaw

# Seesaw node configuration
cat > /etc/seesaw/seesaw.cfg <<EOF
[cluster]
anycast_enabled = false
name = bundled-seesaw-onprem
node_ipv4 = $NODE_IP
peer_ipv4 = $PEER_IP
vip_ipv4 = $VIP
vrid = $VRID

[config_server]
primary = 127.0.0.1

[interface]
node = ens192
lb = ens224
EOF

# Cluster definition: the Seesaw VIP and both Seesaw nodes
cat > /etc/seesaw/cluster.pb <<EOF
seesaw_vip: <
  fqdn: "seesaw-vip."
  ipv4: "$VIP/$NODE_NETMASK"
  status: PRODUCTION
>
node: <
  fqdn: "node.$NODE_IP."
  ipv4: "$NODE_IP/$NODE_NETMASK"
>
node: <
  fqdn: "node.$PEER_IP."
  ipv4: "$PEER_IP/$NODE_NETMASK"
>
EOF

# Install the watchdog systemd unit
cp $DIR/cfg/seesaw_watchdog.service /etc/systemd/system
systemctl --system daemon-reload
```
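An empty value in /etc/seesaw/seesaw.cfg usually means that an environment variable was not set in the shell that ran the configuration script above. The following sketch checks that the [cluster] address keys have non-empty values. check_seesaw_cfg is a hypothetical helper name. It assumes the simple "key = value" layout that the script writes.

```bash
#!/usr/bin/env bash
# Sketch (hypothetical helper): check that seesaw.cfg has non-empty values
# for the keys filled in from environment variables.
check_seesaw_cfg() {
  local cfg=${1:-/etc/seesaw/seesaw.cfg} ok=0 key
  for key in node_ipv4 peer_ipv4 vip_ipv4 vrid; do
    # Expect a line such as "node_ipv4 = 203.0.113.2" with a non-empty value.
    if ! grep -Eq "^[[:space:]]*${key}[[:space:]]*=[[:space:]]*[^[:space:]]+" "$cfg"; then
      echo "missing or empty: $key in $cfg" >&2
      ok=1
    fi
  done
  return "$ok"
}
```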

Reconfiguring Seesaw

The following configuration specifies IP addresses for your cluster nodes. It also specifies VIPs for your control planes and ingress controllers. As written, the configuration specifies these addresses:

- 100.115.222.143 is the VIP for the admin control plane.
- 100.115.222.144 is the VIP for the admin cluster ingress controller.
- 100.115.222.146 is the VIP for the user control plane.
- 100.115.222.145 is the VIP for the user cluster ingress controller.
- The admin cluster node addresses are 100.115.222.163, 100.115.222.164, 100.115.222.165, and 100.115.222.166.
- The user cluster node addresses are 100.115.222.167, 100.115.222.168, and 100.115.222.169.

Notice that the user cluster control plane is implemented as a Service in the admin cluster. As a result, the node IP addresses under user-1-control-plane are the addresses of the admin cluster nodes.

Edit the configuration so that it contains the VIPs and IP addresses that you have chosen for your admin and user clusters. Then, on each Seesaw VM, append the configuration to /etc/seesaw/cluster.pb:

```
vserver: <
  name: "admin-control-plane"
  entry_address: <
    fqdn: "admin-control-plane"
    ipv4: "100.115.222.143/32"
  >
  rp: "cloudysanfrancisco@gmail.com"
  vserver_entry: <
    protocol: TCP
    port: 443
    scheduler: WLC
    mode: DSR
    healthcheck: <
      type: HTTP
      port: 10256
      mode: PLAIN
      proxy: false
      tls_verify: false
      send: "/healthz"
    >
  >
  backend: <
    host: <
      fqdn: "admin-1"
      ipv4: "100.115.222.163/32"
    >
  >
  backend: <
    host: <
      fqdn: "admin-2"
      ipv4: "100.115.222.164/32"
    >
  >
  backend: <
    host: <
      fqdn: "admin-3"
      ipv4: "100.115.222.165/32"
    >
  >
  backend: <
    host: <
      fqdn: "admin-4"
      ipv4: "100.115.222.166/32"
    >
  >
>
vserver: <
  name: "admin-ingress-controller"
  entry_address: <
    fqdn: "admin-ingress-controller"
    ipv4: "100.115.222.144/32"
  >
  rp: "cloudysanfrancisco@gmail.com"
  vserver_entry: <
    protocol: TCP
    port: 80
    scheduler: WLC
    mode: DSR
    healthcheck: <
      type: HTTP
      port: 10256
      mode: PLAIN
      proxy: false
      tls_verify: false
      send: "/healthz"
    >
  >
  vserver_entry: <
    protocol: TCP
    port: 443
    scheduler: WLC
    mode: DSR
    healthcheck: <
      type: HTTP
      port: 10256
      mode: PLAIN
      proxy: false
      tls_verify: false
      send: "/healthz"
    >
  >
  backend: <
    host: <
      fqdn: "admin-1"
      ipv4: "100.115.222.163/32"
    >
  >
  backend: <
    host: <
      fqdn: "admin-2"
      ipv4: "100.115.222.164/32"
    >
  >
  backend: <
    host: <
      fqdn: "admin-3"
      ipv4: "100.115.222.165/32"
    >
  >
  backend: <
    host: <
      fqdn: "admin-4"
      ipv4: "100.115.222.166/32"
    >
  >
>
vserver: <
  name: "user-1-control-plane"
  entry_address: <
    fqdn: "user-1-control-plane"
    ipv4: "100.115.222.146/32"
  >
  rp: "cloudysanfrancisco@gmail.com"
  vserver_entry: <
    protocol: TCP
    port: 443
    scheduler: WLC
    mode: DSR
    healthcheck: <
      type: HTTP
      port: 10256
      mode: PLAIN
      proxy: false
      tls_verify: false
      send: "/healthz"
    >
  >
  backend: <
    host: <
      fqdn: "admin-1"
      ipv4: "100.115.222.163/32"
    >
  >
  backend: <
    host: <
      fqdn: "admin-2"
      ipv4: "100.115.222.164/32"
    >
  >
  backend: <
    host: <
      fqdn: "admin-3"
      ipv4: "100.115.222.165/32"
    >
  >
  backend: <
    host: <
      fqdn: "admin-4"
      ipv4: "100.115.222.166/32"
    >
  >
>
vserver: <
  name: "user-1-ingress-controller"
  entry_address: <
    fqdn: "user-1-ingress-controller"
    ipv4: "100.115.222.145/32"
  >
  rp: "cloudysanfrancisco@gmail.com"
  vserver_entry: <
    protocol: TCP
    port: 80
    scheduler: WLC
    mode: DSR
    healthcheck: <
      type: HTTP
      port: 10256
      mode: PLAIN
      proxy: false
      tls_verify: false
      send: "/healthz"
    >
  >
  vserver_entry: <
    protocol: TCP
    port: 443
    scheduler: WLC
    mode: DSR
    healthcheck: <
      type: HTTP
      port: 10256
      mode: PLAIN
      proxy: false
      tls_verify: false
      send: "/healthz"
    >
  >
  backend: <
    host: <
      fqdn: "user-1"
      ipv4: "100.115.222.167/32"
    >
  >
  backend: <
    host: <
      fqdn: "user-2"
      ipv4: "100.115.222.168/32"
    >
  >
  backend: <
    host: <
      fqdn: "user-3"
      ipv4: "100.115.222.169/32"
    >
  >
>
```
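Most of the configuration above is repeated backend stanzas. Instead of editing each one by hand, you could generate them from a list of node addresses with a small helper like the following sketch. seesaw_backends is a hypothetical name. It follows the admin-N / user-N fqdn naming used in the example. Its output starts at column 0, which the protobuf text format accepts, and you paste it inside a vserver block after its vserver_entry fields.

```bash
#!/usr/bin/env bash
# Sketch (hypothetical helper): print backend stanzas for node addresses.
# Usage: seesaw_backends PREFIX IP [IP ...]; fqdn becomes PREFIX-1, PREFIX-2, ...
seesaw_backends() {
  local prefix=$1; shift
  local i=1 ip
  for ip in "$@"; do
    printf 'backend: <\n  host: <\n    fqdn: "%s-%d"\n    ipv4: "%s/32"\n  >\n>\n' \
      "$prefix" "$i" "$ip"
    i=$((i + 1))
  done
}
```

For example, `seesaw_backends user 100.115.222.167 100.115.222.168 100.115.222.169` prints the three backends of user-1-ingress-controller.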

Starting the Seesaw service

On each Seesaw VM, run the following command:

```bash
systemctl --now enable seesaw_watchdog.service
```

Seesaw is now running on both of the Seesaw VMs. You can check logs under /var/log/seesaw/.

Modifying the cluster configuration file

Before you create a cluster, you generate a cluster configuration file. Fill in the cluster configuration file as described in Modify the configuration file.

In particular, set lbmode to "Manual". Also, fill in the manuallbspec and vips fields under both admincluster and usercluster. Here's an example:

```yaml
admincluster:
  manuallbspec:
    ingresshttpnodeport: 32527
    ingresshttpsnodeport: 30139
    controlplanenodeport: 30968
  vips:
    controlplanevip: "100.115.222.143"
    ingressvip: "100.115.222.144"
usercluster:
  manuallbspec:
    ingresshttpnodeport: 30243
    ingresshttpsnodeport: 30879
    controlplanenodeport: 30562
  vips:
    controlplanevip: "100.115.222.146"
    ingressvip: "100.115.222.145"
lbmode: "Manual"
```
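The VIPs in this file must match the entry addresses of the vservers in /etc/seesaw/cluster.pb. The following sketch is a rough cross-check. check_vips_in_cluster_pb is a hypothetical helper name. It only matches the literal form ipv4: "VIP/32" used on this page, so it would also accept an address that appears only as a backend.

```bash
#!/usr/bin/env bash
# Sketch (hypothetical helper): check that each VIP appears in cluster.pb.
# Usage: check_vips_in_cluster_pb FILE VIP [VIP ...]
check_vips_in_cluster_pb() {
  local pb=$1; shift
  local ok=0 vip
  for vip in "$@"; do
    # -F: match the string literally, so the dots are not regex wildcards.
    if ! grep -Fq "ipv4: \"$vip/32\"" "$pb"; then
      echo "VIP $vip not found in $pb" >&2
      ok=1
    fi
  done
  return "$ok"
}
```

For the example on this page, run `check_vips_in_cluster_pb /etc/seesaw/cluster.pb 100.115.222.143 100.115.222.144 100.115.222.146 100.115.222.145` on each Seesaw VM.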

Known issues

Currently, gkectl's load balancer validation doesn't support Seesaw and might return errors. If you are installing or upgrading clusters that use Seesaw, pass in the --skip-validation-load-balancer flag.

For more information, refer to Troubleshooting.