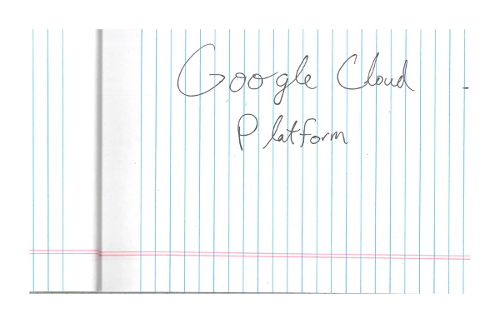

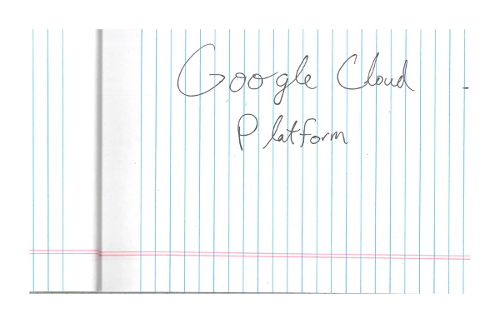

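Detect handwriting with optical character recognition (OCR)

The Vision API can detect and extract text from images:

DOCUMENT_TEXT_DETECTION extracts text from an image (or file) and is optimized for dense text and documents. The JSON response includes page, block, paragraph, word, and break information.

One specific use of DOCUMENT_TEXT_DETECTION is to detect handwriting in an image.

Try it for yourself

If you're new to Google Cloud, create an account to evaluate how the Cloud Vision API performs in real-world scenarios. New customers also get $300 in free credits to run, test, and deploy workloads.

Document text detection requests

Set up your Google Cloud project and authentication

If you have not created a Google Cloud project, do so now by following these steps: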

- Sign in to your Google Cloud account. If you're new to Google Cloud, create an account to evaluate how our products perform in real-world scenarios. New customers also get $300 in free credits to run, test, and deploy workloads.

- In the Google Cloud console, on the project selector page, select or create a Google Cloud project.

  Roles required to select or create a project:

  - Select a project: Selecting a project doesn't require a specific IAM role; you can select any project that you've been granted a role on.

  - Create a project: To create a project, you need the Project Creator role (roles/resourcemanager.projectCreator), which contains the resourcemanager.projects.create permission. Learn how to grant roles.

- Verify that billing is enabled for your Google Cloud project.

- Enable the Vision API.

  Roles required to enable APIs: To enable APIs, you need the Service Usage Admin IAM role (roles/serviceusage.serviceUsageAdmin), which contains the serviceusage.services.enable permission. Learn how to grant roles.

- Install the Google Cloud CLI.

- If you're using an external identity provider (IdP), you must first sign in to the gcloud CLI with your federated identity.

- To initialize the gcloud CLI, run the following command:

    gcloud init

The REST requests on this page use the following placeholder values:

- BASE64_ENCODED_IMAGE: The base64 representation (ASCII string) of your binary image data. This string should look similar to the following string:

    /9j/4QAYRXhpZgAA...9tAVx/zDQDlGxn//2Q==

- CLOUD_STORAGE_IMAGE_URI: The path to a valid image file in a Cloud Storage bucket. You must at least have read privileges to the file. Example:

    gs://cloud-samples-data/vision/handwriting_image.png

- PROJECT_ID: Your Google Cloud project ID.

- REGION_ID: One of the valid regional location identifiers:

  - us: USA country only
  - eu: The European Union

The regional endpoints for the European Union are:

- https://eu-vision.googleapis.com/v1/projects/PROJECT_ID/locations/eu/images:annotate

- https://eu-vision.googleapis.com/v1/projects/PROJECT_ID/locations/eu/images:asyncBatchAnnotate

- https://eu-vision.googleapis.com/v1/projects/PROJECT_ID/locations/eu/files:annotate

- https://eu-vision.googleapis.com/v1/projects/PROJECT_ID/locations/eu/files:asyncBatchAnnotate

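Detect document text in a local image

You can use the Vision API to perform feature detection on a local image file.

For REST requests, send the contents of the image file as a base64-encoded string in the body of your request.

For gcloud and client library requests, specify the path to a local image in your request.

REST

Before using any of the request data, replace the placeholders BASE64_ENCODED_IMAGE and PROJECT_ID with your own values.

HTTP method and URL:

    POST https://vision.googleapis.com/v1/images:annotate

Request JSON body:

    {
      "requests": [
        {
          "image": {
            "content": "BASE64_ENCODED_IMAGE"
          },
          "features": [
            {
              "type": "DOCUMENT_TEXT_DETECTION"
            }
          ]
        }
      ]
    }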
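The request body above can also be generated rather than written by hand. The following is a minimal Python sketch, using only the standard library, that base64-encodes image bytes and builds the same JSON structure. The function names `build_local_image_request` and `write_request_file` are illustrative and not part of any Google library.

```python
import base64
import json


def build_local_image_request(image_bytes: bytes) -> dict:
    """Return an images:annotate request body for raw image bytes."""
    # The REST API expects the image content as base64-encoded ASCII text.
    encoded = base64.b64encode(image_bytes).decode("ascii")
    return {
        "requests": [
            {
                "image": {"content": encoded},
                "features": [{"type": "DOCUMENT_TEXT_DETECTION"}],
            }
        ]
    }


def write_request_file(image_path: str, out_path: str = "request.json") -> None:
    """Read a local image and save the request body as a JSON file."""
    with open(image_path, "rb") as image_file:
        body = build_local_image_request(image_file.read())
    with open(out_path, "w", encoding="utf-8") as out_file:
        json.dump(body, out_file)


# In-memory demonstration; real input would be the bytes of an image file.
demo_body = build_local_image_request(b"hello")
print(json.dumps(demo_body, indent=2))
```

Calling `write_request_file("/path/to/image.png")` would produce a request.json file suitable for the curl and PowerShell commands on this page.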

To send your request, choose one of these options:

curl

Save the request body in a file named request.json, and execute the following command:

    curl -X POST \
      -H "Authorization: Bearer $(gcloud auth print-access-token)" \
      -H "x-goog-user-project: PROJECT_ID" \
      -H "Content-Type: application/json; charset=utf-8" \
      -d @request.json \
      "https://vision.googleapis.com/v1/images:annotate"

PowerShell

Save the request body in a file named request.json, and execute the following command:

    $cred = gcloud auth print-access-token
    $headers = @{ "Authorization" = "Bearer $cred"; "x-goog-user-project" = "PROJECT_ID" }

    Invoke-WebRequest `
      -Method POST `
      -Headers $headers `
      -ContentType: "application/json; charset=utf-8" `
      -InFile request.json `
      -Uri "https://vision.googleapis.com/v1/images:annotate" | Select-Object -Expand Content

If the request is successful, the server returns a 200 OK HTTP status code and the response in JSON format.
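For readers who prefer to send the request from a script, the following sketch mirrors the curl command with Python's standard `urllib.request` module. It is an illustration under stated assumptions, not an official client: the helper names are hypothetical, and the access token is assumed to have been obtained separately (for example with `gcloud auth print-access-token`).

```python
import json
import urllib.request

ANNOTATE_URL = "https://vision.googleapis.com/v1/images:annotate"


def build_annotate_request(
    body: dict, access_token: str, project_id: str
) -> urllib.request.Request:
    """Prepare (but do not send) the POST request shown in the curl command."""
    return urllib.request.Request(
        ANNOTATE_URL,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {access_token}",
            "x-goog-user-project": project_id,
            "Content-Type": "application/json; charset=utf-8",
        },
        method="POST",
    )


def send(request: urllib.request.Request) -> dict:
    """Send the request and decode the JSON response (needs network access)."""
    with urllib.request.urlopen(request) as response:
        return json.load(response)


# Placeholder values only; nothing is sent over the network here.
demo = build_annotate_request({"requests": []}, "ACCESS_TOKEN", "PROJECT_ID")
print(demo.get_method(), demo.full_url)
```

Separating request construction from sending keeps the first step easy to inspect without credentials or network access.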

Response (abbreviated; additional textAnnotations entries and words are omitted):

    {
      "responses": [
        {
          "textAnnotations": [
            {
              "locale": "en",
              "description": "O Google Cloud Platform\n",
              "boundingPoly": {
                "vertices": [
                  { "x": 14, "y": 11 },
                  { "x": 279, "y": 11 },
                  { "x": 279, "y": 37 },
                  { "x": 14, "y": 37 }
                ]
              }
            }
          ],
          "fullTextAnnotation": {
            "pages": [
              {
                "property": {
                  "detectedLanguages": [{ "languageCode": "en" }]
                },
                "width": 281,
                "height": 44,
                "blocks": [
                  {
                    "property": {
                      "detectedLanguages": [{ "languageCode": "en" }]
                    },
                    "boundingBox": {
                      "vertices": [
                        { "x": 14, "y": 11 },
                        { "x": 279, "y": 11 },
                        { "x": 279, "y": 37 },
                        { "x": 14, "y": 37 }
                      ]
                    },
                    "paragraphs": [
                      {
                        "property": {
                          "detectedLanguages": [{ "languageCode": "en" }]
                        },
                        "boundingBox": {
                          "vertices": [
                            { "x": 14, "y": 11 },
                            { "x": 279, "y": 11 },
                            { "x": 279, "y": 37 },
                            { "x": 14, "y": 37 }
                          ]
                        },
                        "words": [
                          {
                            "property": {
                              "detectedLanguages": [{ "languageCode": "en" }]
                            },
                            "boundingBox": {
                              "vertices": [
                                { "x": 14, "y": 11 },
                                { "x": 23, "y": 11 },
                                { "x": 23, "y": 37 },
                                { "x": 14, "y": 37 }
                              ]
                            },
                            "symbols": [
                              {
                                "property": {
                                  "detectedLanguages": [{ "languageCode": "en" }],
                                  "detectedBreak": { "type": "SPACE" }
                                },
                                "boundingBox": {
                                  "vertices": [
                                    { "x": 14, "y": 11 },
                                    { "x": 23, "y": 11 },
                                    { "x": 23, "y": 37 },
                                    { "x": 14, "y": 37 }
                                  ]
                                },
                                "text": "O"
                              }
                            ]
                          }
                        ]
                      }
                    ],
                    "blockType": "TEXT"
                  }
                ]
              }
            ],
            "text": "Google Cloud Platform\n"
          }
        }
      ]
    }
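The response nests symbols inside words, paragraphs, blocks, and pages, and each symbol may carry a detectedBreak that marks the whitespace following it. The sketch below walks that hierarchy in a decoded JSON response and rebuilds the text. The mapping from break types to characters is an assumption made for illustration (other break types, such as HYPHEN, are ignored), and `rebuild_text` is a hypothetical helper name.

```python
# Assumed mapping from break type to the character appended after a symbol.
BREAK_TEXT = {
    "SPACE": " ",
    "SURE_SPACE": " ",
    "EOL_SURE_SPACE": "\n",
    "LINE_BREAK": "\n",
}


def rebuild_text(response: dict) -> str:
    """Concatenate symbol texts of one response entry, honoring detected breaks."""
    parts = []
    annotation = response.get("fullTextAnnotation", {})
    for page in annotation.get("pages", []):
        for block in page.get("blocks", []):
            for paragraph in block.get("paragraphs", []):
                for word in paragraph.get("words", []):
                    for symbol in word.get("symbols", []):
                        parts.append(symbol.get("text", ""))
                        detected = symbol.get("property", {}).get("detectedBreak", {})
                        parts.append(BREAK_TEXT.get(detected.get("type"), ""))
    return "".join(parts)


def _symbol(text, break_type=None):
    """Build a minimal symbol dict for the demonstration below."""
    symbol = {"text": text}
    if break_type:
        symbol["property"] = {"detectedBreak": {"type": break_type}}
    return symbol


sample = {"fullTextAnnotation": {"pages": [{"blocks": [{"paragraphs": [{"words": [
    {"symbols": [_symbol("H"), _symbol("i", "SPACE")]},
    {"symbols": [_symbol("y"), _symbol("o"), _symbol("u", "LINE_BREAK")]},
]}]}]}]}}

print(repr(rebuild_text(sample)))  # 'Hi you\n'
```

In practice the `fullTextAnnotation.text` field already contains the full text; walking the hierarchy is mainly useful when per-word positions or confidence values are needed alongside it.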

Go

Before trying this sample, follow the Go setup instructions in the Vision quickstart using client libraries. For more information, see the Vision Go API reference documentation.

To authenticate to Vision, set up Application Default Credentials. For more information, see Set up authentication for a local development environment.

    import (
        "context"
        "fmt"
        "io"
        "os"
        "strings"

        vision "cloud.google.com/go/vision/apiv1"
    )

    // detectDocumentText gets the full document text from the Vision API for an image at the given file path.
    func detectDocumentText(w io.Writer, file string) error {
        ctx := context.Background()

        client, err := vision.NewImageAnnotatorClient(ctx)
        if err != nil {
            return err
        }
        // Release the client's resources when the function returns.
        defer client.Close()

        f, err := os.Open(file)
        if err != nil {
            return err
        }
        defer f.Close()

        image, err := vision.NewImageFromReader(f)
        if err != nil {
            return err
        }
        annotation, err := client.DetectDocumentText(ctx, image, nil)
        if err != nil {
            return err
        }

        if annotation == nil {
            fmt.Fprintln(w, "No text found.")
        } else {
            fmt.Fprintln(w, "Document Text:")
            fmt.Fprintf(w, "%q\n", annotation.Text)

            fmt.Fprintln(w, "Pages:")
            for _, page := range annotation.Pages {
                fmt.Fprintf(w, "\tConfidence: %f, Width: %d, Height: %d\n", page.Confidence, page.Width, page.Height)
                fmt.Fprintln(w, "\tBlocks:")
                for _, block := range page.Blocks {
                    fmt.Fprintf(w, "\t\tConfidence: %f, Block type: %v\n", block.Confidence, block.BlockType)
                    fmt.Fprintln(w, "\t\tParagraphs:")
                    for _, paragraph := range block.Paragraphs {
                        fmt.Fprintf(w, "\t\t\tConfidence: %f\n", paragraph.Confidence)
                        fmt.Fprintln(w, "\t\t\tWords:")
                        for _, word := range paragraph.Words {
                            // Join the symbols of a word into a single string.
                            symbols := make([]string, len(word.Symbols))
                            for i, s := range word.Symbols {
                                symbols[i] = s.Text
                            }
                            wordText := strings.Join(symbols, "")
                            fmt.Fprintf(w, "\t\t\t\tConfidence: %f, Symbols: %s\n", word.Confidence, wordText)
                        }
                    }
                }
            }
        }

        return nil
    }

Java

Before trying this sample, follow the Java setup instructions in the Vision API quickstart using client libraries. For more information, see the Vision API Java reference documentation.

    import com.google.cloud.vision.v1.AnnotateImageRequest;
    import com.google.cloud.vision.v1.AnnotateImageResponse;
    import com.google.cloud.vision.v1.BatchAnnotateImagesResponse;
    import com.google.cloud.vision.v1.Block;
    import com.google.cloud.vision.v1.Feature;
    import com.google.cloud.vision.v1.Feature.Type;
    import com.google.cloud.vision.v1.Image;
    import com.google.cloud.vision.v1.ImageAnnotatorClient;
    import com.google.cloud.vision.v1.Page;
    import com.google.cloud.vision.v1.Paragraph;
    import com.google.cloud.vision.v1.Symbol;
    import com.google.cloud.vision.v1.TextAnnotation;
    import com.google.cloud.vision.v1.Word;
    import com.google.protobuf.ByteString;
    import java.io.FileInputStream;
    import java.io.IOException;
    import java.util.ArrayList;
    import java.util.List;

    public static void detectDocumentText(String filePath) throws IOException {
      List<AnnotateImageRequest> requests = new ArrayList<>();

      // Read the image bytes and close the file stream afterwards.
      ByteString imgBytes;
      try (FileInputStream in = new FileInputStream(filePath)) {
        imgBytes = ByteString.readFrom(in);
      }

      Image img = Image.newBuilder().setContent(imgBytes).build();
      Feature feat = Feature.newBuilder().setType(Type.DOCUMENT_TEXT_DETECTION).build();
      AnnotateImageRequest request =
          AnnotateImageRequest.newBuilder().addFeatures(feat).setImage(img).build();
      requests.add(request);

      // Initialize client that will be used to send requests. This client only needs to be created
      // once, and can be reused for multiple requests. The try-with-resources statement closes the
      // client automatically and cleans up any remaining background resources.
      try (ImageAnnotatorClient client = ImageAnnotatorClient.create()) {
        BatchAnnotateImagesResponse response = client.batchAnnotateImages(requests);
        List<AnnotateImageResponse> responses = response.getResponsesList();

        for (AnnotateImageResponse res : responses) {
          if (res.hasError()) {
            System.out.format("Error: %s%n", res.getError().getMessage());
            return;
          }

          // For full list of available annotations, see http://g.co/cloud/vision/docs
          TextAnnotation annotation = res.getFullTextAnnotation();
          for (Page page : annotation.getPagesList()) {
            String pageText = "";
            for (Block block : page.getBlocksList()) {
              String blockText = "";
              for (Paragraph para : block.getParagraphsList()) {
                String paraText = "";
                for (Word word : para.getWordsList()) {
                  String wordText = "";
                  for (Symbol symbol : word.getSymbolsList()) {
                    wordText = wordText + symbol.getText();
                    System.out.format(
                        "Symbol text: %s (confidence: %f)%n",
                        symbol.getText(), symbol.getConfidence());
                  }
                  System.out.format(
                      "Word text: %s (confidence: %f)%n%n", wordText, word.getConfidence());
                  paraText = String.format("%s %s", paraText, wordText);
                }
                // Output Example using Paragraph:
                System.out.format("%nParagraph:%n%s%n", paraText);
                System.out.format("Paragraph Confidence: %f%n", para.getConfidence());
                blockText = blockText + paraText;
              }
              pageText = pageText + blockText;
            }
          }
          System.out.format("%nComplete annotation:%n");
          System.out.println(annotation.getText());
        }
      }
    }

Node.js

Before trying this sample, follow the Node.js setup instructions in the Vision quickstart using client libraries. For more information, see the Vision Node.js API reference documentation.

To authenticate to Vision, set up Application Default Credentials. For more information, see Set up authentication for a local development environment.

    // Imports the Google Cloud client library
    const vision = require('@google-cloud/vision');

    // Creates a client
    const client = new vision.ImageAnnotatorClient();

    /**
     * Reads a local image as a text document and prints the detected text.
     * @param {string} fileName Local image file, e.g. /path/to/image.png
     */
    async function detectDocumentText(fileName) {
      const [result] = await client.documentTextDetection(fileName);
      const fullTextAnnotation = result.fullTextAnnotation;
      console.log(`Full text: ${fullTextAnnotation.text}`);
      fullTextAnnotation.pages.forEach(page => {
        page.blocks.forEach(block => {
          console.log(`Block confidence: ${block.confidence}`);
          block.paragraphs.forEach(paragraph => {
            console.log(`Paragraph confidence: ${paragraph.confidence}`);
            paragraph.words.forEach(word => {
              const wordText = word.symbols.map(s => s.text).join('');
              console.log(`Word text: ${wordText}`);
              console.log(`Word confidence: ${word.confidence}`);
              word.symbols.forEach(symbol => {
                console.log(`Symbol text: ${symbol.text}`);
                console.log(`Symbol confidence: ${symbol.confidence}`);
              });
            });
          });
        });
      });
    }

    // TODO(developer): Uncomment the following line and set the path before running the sample.
    // detectDocumentText('/path/to/image.png').catch(console.error);

Python

Before trying this sample, follow the Python setup instructions in the Vision quickstart using client libraries. For more information, see the Vision Python API reference documentation.

To authenticate to Vision, set up Application Default Credentials. For more information, see Set up authentication for a local development environment.

    def detect_document(path):
        """Detects document features in an image."""
        from google.cloud import vision

        client = vision.ImageAnnotatorClient()

        with open(path, "rb") as image_file:
            content = image_file.read()

        image = vision.Image(content=content)

        response = client.document_text_detection(image=image)

        # Check for an API error before reading the annotations.
        if response.error.message:
            raise Exception(
                f"{response.error.message}\nFor more info on error messages, check: "
                "https://cloud.google.com/apis/design/errors"
            )

        for page in response.full_text_annotation.pages:
            for block in page.blocks:
                print(f"\nBlock confidence: {block.confidence}\n")

                for paragraph in block.paragraphs:
                    print(f"Paragraph confidence: {paragraph.confidence}")

                    for word in paragraph.words:
                        word_text = "".join(symbol.text for symbol in word.symbols)
                        print(f"Word text: {word_text} (confidence: {word.confidence})")

                        for symbol in word.symbols:
                            print(f"\tSymbol: {symbol.text} (confidence: {symbol.confidence})")

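Other languages

C#: Follow the C# setup instructions on the client libraries page, and then visit the Vision reference documentation for .NET.

PHP: Follow the PHP setup instructions on the client libraries page, and then visit the Vision reference documentation for PHP.

Ruby: Follow the Ruby setup instructions on the client libraries page, and then visit the Vision reference documentation for Ruby.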

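Detect document text in a remote image

You can use the Vision API to perform feature detection on a remote image file that is located in Cloud Storage or on the web. To send a remote file request, specify the file's web URL or Cloud Storage URI in the request body.

REST

Before using any of the request data, replace the placeholders CLOUD_STORAGE_IMAGE_URI and PROJECT_ID with your own values.

HTTP method and URL:

    POST https://vision.googleapis.com/v1/images:annotate

Request JSON body:

    {
      "requests": [
        {
          "image": {
            "source": {
              "imageUri": "CLOUD_STORAGE_IMAGE_URI"
            }
          },
          "features": [
            {
              "type": "DOCUMENT_TEXT_DETECTION"
            }
          ]
        }
      ]
    }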
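As a small illustration of the remote request body, the following Python sketch builds it from an image URI and rejects URIs that are neither Cloud Storage paths nor web URLs. The scheme check is an assumption added for this example, not something the API documentation prescribes, and `build_remote_image_request` is a hypothetical helper name.

```python
from urllib.parse import urlparse

# Schemes accepted by this sketch: Cloud Storage URIs and web URLs.
ALLOWED_SCHEMES = {"gs", "http", "https"}


def build_remote_image_request(image_uri: str) -> dict:
    """Return an images:annotate request body that points at a remote image."""
    scheme = urlparse(image_uri).scheme
    if scheme not in ALLOWED_SCHEMES:
        raise ValueError(f"unsupported image URI scheme: {scheme!r}")
    return {
        "requests": [
            {
                "image": {"source": {"imageUri": image_uri}},
                "features": [{"type": "DOCUMENT_TEXT_DETECTION"}],
            }
        ]
    }


sample_uri = "gs://cloud-samples-data/vision/handwriting_image.png"
print(build_remote_image_request(sample_uri))
```

Validating the URI locally gives an early, readable error before any request is sent.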

To send your request, choose one of these options:

curl

Save the request body in a file named request.json, and execute the following command:

    curl -X POST \
      -H "Authorization: Bearer $(gcloud auth print-access-token)" \
      -H "x-goog-user-project: PROJECT_ID" \
      -H "Content-Type: application/json; charset=utf-8" \
      -d @request.json \
      "https://vision.googleapis.com/v1/images:annotate"

PowerShell

Save the request body in a file named request.json, and execute the following command:

    $cred = gcloud auth print-access-token
    $headers = @{ "Authorization" = "Bearer $cred"; "x-goog-user-project" = "PROJECT_ID" }

    Invoke-WebRequest `
      -Method POST `
      -Headers $headers `
      -ContentType: "application/json; charset=utf-8" `
      -InFile request.json `
      -Uri "https://vision.googleapis.com/v1/images:annotate" | Select-Object -Expand Content

If the request is successful, the server returns a 200 OK HTTP status code and the response in JSON format.

Response: the JSON response has the same structure as the sample response shown earlier for the local image request.

Go

Before trying this sample, follow the Go setup instructions in the Vision quickstart using client libraries. For more information, see the Vision Go API reference documentation.

To authenticate to Vision, set up Application Default Credentials. For more information, see Set up authentication for a local development environment.

    import (
        "context"
        "fmt"
        "io"
        "strings"

        vision "cloud.google.com/go/vision/apiv1"
    )

    // detectDocumentTextURI gets the full document text from the Vision API for an image at the given URI.
    func detectDocumentTextURI(w io.Writer, file string) error {
        ctx := context.Background()

        client, err := vision.NewImageAnnotatorClient(ctx)
        if err != nil {
            return err
        }
        // Release the client's resources when the function returns.
        defer client.Close()

        image := vision.NewImageFromURI(file)
        annotation, err := client.DetectDocumentText(ctx, image, nil)
        if err != nil {
            return err
        }

        if annotation == nil {
            fmt.Fprintln(w, "No text found.")
        } else {
            fmt.Fprintln(w, "Document Text:")
            fmt.Fprintf(w, "%q\n", annotation.Text)

            fmt.Fprintln(w, "Pages:")
            for _, page := range annotation.Pages {
                fmt.Fprintf(w, "\tConfidence: %f, Width: %d, Height: %d\n", page.Confidence, page.Width, page.Height)
                fmt.Fprintln(w, "\tBlocks:")
                for _, block := range page.Blocks {
                    fmt.Fprintf(w, "\t\tConfidence: %f, Block type: %v\n", block.Confidence, block.BlockType)
                    fmt.Fprintln(w, "\t\tParagraphs:")
                    for _, paragraph := range block.Paragraphs {
                        fmt.Fprintf(w, "\t\t\tConfidence: %f\n", paragraph.Confidence)
                        fmt.Fprintln(w, "\t\t\tWords:")
                        for _, word := range paragraph.Words {
                            // Join the symbols of a word into a single string.
                            symbols := make([]string, len(word.Symbols))
                            for i, s := range word.Symbols {
                                symbols[i] = s.Text
                            }
                            wordText := strings.Join(symbols, "")
                            fmt.Fprintf(w, "\t\t\t\tConfidence: %f, Symbols: %s\n", word.Confidence, wordText)
                        }
                    }
                }
            }
        }

        return nil
    }

Java

Before trying this sample, follow the Java setup instructions in the Vision API quickstart using client libraries. For more information, see the Vision API Java reference documentation.

    import com.google.cloud.vision.v1.AnnotateImageRequest;
    import com.google.cloud.vision.v1.AnnotateImageResponse;
    import com.google.cloud.vision.v1.BatchAnnotateImagesResponse;
    import com.google.cloud.vision.v1.Block;
    import com.google.cloud.vision.v1.Feature;
    import com.google.cloud.vision.v1.Feature.Type;
    import com.google.cloud.vision.v1.Image;
    import com.google.cloud.vision.v1.ImageAnnotatorClient;
    import com.google.cloud.vision.v1.ImageSource;
    import com.google.cloud.vision.v1.Page;
    import com.google.cloud.vision.v1.Paragraph;
    import com.google.cloud.vision.v1.Symbol;
    import com.google.cloud.vision.v1.TextAnnotation;
    import com.google.cloud.vision.v1.Word;
    import java.io.IOException;
    import java.util.ArrayList;
    import java.util.List;

    public static void detectDocumentTextGcs(String gcsPath) throws IOException {
      List<AnnotateImageRequest> requests = new ArrayList<>();

      ImageSource imgSource = ImageSource.newBuilder().setGcsImageUri(gcsPath).build();
      Image img = Image.newBuilder().setSource(imgSource).build();
      Feature feat = Feature.newBuilder().setType(Type.DOCUMENT_TEXT_DETECTION).build();
      AnnotateImageRequest request =
          AnnotateImageRequest.newBuilder().addFeatures(feat).setImage(img).build();
      requests.add(request);

      // Initialize client that will be used to send requests. This client only needs to be created
      // once, and can be reused for multiple requests. The try-with-resources statement closes the
      // client automatically and cleans up any remaining background resources.
      try (ImageAnnotatorClient client = ImageAnnotatorClient.create()) {
        BatchAnnotateImagesResponse response = client.batchAnnotateImages(requests);
        List<AnnotateImageResponse> responses = response.getResponsesList();

        for (AnnotateImageResponse res : responses) {
          if (res.hasError()) {
            System.out.format("Error: %s%n", res.getError().getMessage());
            return;
          }

          // For full list of available annotations, see http://g.co/cloud/vision/docs
          TextAnnotation annotation = res.getFullTextAnnotation();
          for (Page page : annotation.getPagesList()) {
            String pageText = "";
            for (Block block : page.getBlocksList()) {
              String blockText = "";
              for (Paragraph para : block.getParagraphsList()) {
                String paraText = "";
                for (Word word : para.getWordsList()) {
                  String wordText = "";
                  for (Symbol symbol : word.getSymbolsList()) {
                    wordText = wordText + symbol.getText();
                    System.out.format(
                        "Symbol text: %s (confidence: %f)%n",
                        symbol.getText(), symbol.getConfidence());
                  }
                  System.out.format(
                      "Word text: %s (confidence: %f)%n%n", wordText, word.getConfidence());
                  paraText = String.format("%s %s", paraText, wordText);
                }
                // Output Example using Paragraph:
                System.out.format("%nParagraph:%n%s%n", paraText);
                System.out.format("Paragraph Confidence: %f%n", para.getConfidence());
                blockText = blockText + paraText;
              }
              pageText = pageText + blockText;
            }
          }
          System.out.format("%nComplete annotation:%n");
          System.out.println(annotation.getText());
        }
      }
    }

Node.js

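Before trying this sample, follow the Node.js setup instructions in the Vision quickstart using client libraries. For more information, see the Vision Node.js API reference documentation.

To authenticate to Vision, set up Application Default Credentials. For more information, see Set up authentication for a local development environment.

    // Imports the Google Cloud client libraries
    const vision = require('@google-cloud/vision');

    // Creates a client
    const client = new vision.ImageAnnotatorClient();

    /**
     * Reads a remote image as a text document and prints the detected text.
     * @param {string} bucketName Bucket where the file resides, e.g. my-bucket
     * @param {string} fileName Path to file within bucket, e.g. path/to/image.png
     */
    async function detectDocumentTextGCS(bucketName, fileName) {
      const [result] = await client.documentTextDetection(
        `gs://${bucketName}/${fileName}`
      );
      const fullTextAnnotation = result.fullTextAnnotation;
      console.log(fullTextAnnotation.text);
    }

    // TODO(developer): Uncomment the following line and set the values before running the sample.
    // detectDocumentTextGCS('my-bucket', 'path/to/image.png').catch(console.error);

Python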

Before trying this sample, follow the Python setup instructions in the Vision quickstart using client libraries. For more information, see the Vision Python API reference documentation.

To authenticate to Vision, set up Application Default Credentials. For more information, see Set up authentication for a local development environment.

    def detect_document_uri(uri):
        """Detects document features in the file located in Google Cloud
        Storage."""
        from google.cloud import vision

        client = vision.ImageAnnotatorClient()
        image = vision.Image()
        image.source.image_uri = uri

        response = client.document_text_detection(image=image)

        # Check for an API error before reading the annotations.
        if response.error.message:
            raise Exception(
                f"{response.error.message}\nFor more info on error messages, check: "
                "https://cloud.google.com/apis/design/errors"
            )

        for page in response.full_text_annotation.pages:
            for block in page.blocks:
                print(f"\nBlock confidence: {block.confidence}\n")

                for paragraph in block.paragraphs:
                    print(f"Paragraph confidence: {paragraph.confidence}")

                    for word in paragraph.words:
                        word_text = "".join(symbol.text for symbol in word.symbols)
                        print(f"Word text: {word_text} (confidence: {word.confidence})")

                        for symbol in word.symbols:
                            print(f"\tSymbol: {symbol.text} (confidence: {symbol.confidence})")

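gcloud

To perform handwriting detection, use the gcloud ml vision detect-document command as shown in the following example:

    gcloud ml vision detect-document gs://cloud-samples-data/vision/handwriting_image.png

Other languages

C#: Follow the C# setup instructions on the client libraries page, and then visit the Vision reference documentation for .NET.

PHP: Follow the PHP setup instructions on the client libraries page, and then visit the Vision reference documentation for PHP.

Ruby: Follow the Ruby setup instructions on the client libraries page, and then visit the Vision reference documentation for Ruby.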

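Specify the language (optional)

Both types of OCR requests support one or more languageHints that specify the language of any text in the image. However, an empty value usually yields the best results, because omitting a value enables automatic language detection. For languages based on the Latin alphabet, setting languageHints is not needed. In rare cases, when the language of the text in the image is known, setting this hint helps get better results (although a wrong hint can be a significant hindrance). Text detection returns an error if one or more of the specified languages is not a supported language.

If you choose to provide a language hint, modify the body of your request (request.json file) to provide the string of one of the supported languages in the imageContext.languageHints field, as shown in the following sample:

    {
      "requests": [
        {
          "image": {
            "source": {
              "imageUri": "IMAGE_URL"
            }
          },
          "features": [
            {
              "type": "DOCUMENT_TEXT_DETECTION"
            }
          ],
          "imageContext": {
            "languageHints": ["en-t-i0-handwrit"]
          }
        }
      ]
    }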
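Because an empty hint list is usually the better default, a script that builds request bodies might add imageContext only when hints are actually supplied. The following Python sketch shows one way to do that on a plain dictionary; `with_language_hints` is a hypothetical helper, and the hint value is the one from the sample above.

```python
import copy
import json


def with_language_hints(body: dict, hints: list) -> dict:
    """Return a copy of the request body with languageHints on every request.

    When `hints` is empty the body is copied unchanged, which leaves
    automatic language detection enabled.
    """
    updated = copy.deepcopy(body)
    if not hints:
        return updated
    for request in updated.get("requests", []):
        request.setdefault("imageContext", {})["languageHints"] = list(hints)
    return updated


base_body = {
    "requests": [
        {
            "image": {"source": {"imageUri": "IMAGE_URL"}},
            "features": [{"type": "DOCUMENT_TEXT_DETECTION"}],
        }
    ]
}

print(json.dumps(with_language_hints(base_body, ["en-t-i0-handwrit"]), indent=2))
```

Working on a deep copy keeps the original body reusable for a second attempt without hints if the hinted request returns an error.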

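Multi-regional support

You can now specify continent-level data storage and OCR processing. The following regions are currently supported: us (USA country only) and eu (the European Union).

Locations

Cloud Vision offers you some control over where the resources for your project are stored and processed. In particular, you can configure Cloud Vision to store and process your data only in the European Union.

By default, Cloud Vision stores and processes resources in a Global location, which means that Cloud Vision doesn't guarantee that your resources remain within a particular location or region. If you choose the European Union location, Google stores and processes your data only in the European Union. You and your users can access the data from any location.

Setting the location using the API

The Vision API supports a global API endpoint (vision.googleapis.com) and two region-based endpoints: a European Union endpoint (eu-vision.googleapis.com) and a United States endpoint (us-vision.googleapis.com). Use these endpoints for region-specific processing. For example, to store and process your data in the European Union only, use the URI eu-vision.googleapis.com in place of vision.googleapis.com for your REST API calls, as in https://eu-vision.googleapis.com/v1/projects/PROJECT_ID/locations/eu/images:annotate.

To store and process your data in the United States only, use the US endpoint (us-vision.googleapis.com) with the preceding methods.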
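The regional URL follows the pattern https://REGION_ID-vision.googleapis.com/v1/projects/PROJECT_ID/locations/REGION_ID/images:annotate used on this page. As a minimal sketch, the Python helper below fills in that pattern and rejects region IDs other than the two listed here; the function name `regional_annotate_url` is illustrative.

```python
# Region IDs listed on this page as supported.
SUPPORTED_REGIONS = {"us", "eu"}


def regional_annotate_url(project_id: str, region_id: str) -> str:
    """Build the region-specific images:annotate URL for a project."""
    if region_id not in SUPPORTED_REGIONS:
        raise ValueError(f"unsupported region: {region_id!r}")
    return (
        f"https://{region_id}-vision.googleapis.com/v1/"
        f"projects/{project_id}/locations/{region_id}/images:annotate"
    )


print(regional_annotate_url("PROJECT_ID", "eu"))
```

Note that the region appears twice in the URL, once in the host name and once in the resource path, so deriving both from a single argument avoids mismatches.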

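Setting the location using the client libraries

The Vision API client libraries access the global API endpoint (vision.googleapis.com) by default. To store and process your data in the European Union only, you need to explicitly set the endpoint (eu-vision.googleapis.com). The following code samples show how to configure this setting.

REST

Before using any of the request data, replace the placeholders REGION_ID, CLOUD_STORAGE_IMAGE_URI, and PROJECT_ID with your own values.

HTTP method and URL:

    POST https://REGION_ID-vision.googleapis.com/v1/projects/PROJECT_ID/locations/REGION_ID/images:annotate

Request JSON body:

    {
      "requests": [
        {
          "image": {
            "source": {
              "imageUri": "CLOUD_STORAGE_IMAGE_URI"
            }
          },
          "features": [
            {
              "type": "DOCUMENT_TEXT_DETECTION"
            }
          ]
        }
      ]
    }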

To send your request, choose one of these options:

curl

Save the request body in a file named request.json, and execute the following command:

    curl -X POST \
      -H "Authorization: Bearer $(gcloud auth print-access-token)" \
      -H "x-goog-user-project: PROJECT_ID" \
      -H "Content-Type: application/json; charset=utf-8" \
      -d @request.json \
      "https://REGION_ID-vision.googleapis.com/v1/projects/PROJECT_ID/locations/REGION_ID/images:annotate"

PowerShell

Save the request body in a file named request.json, and execute the following command:

    $cred = gcloud auth print-access-token
    $headers = @{ "Authorization" = "Bearer $cred"; "x-goog-user-project" = "PROJECT_ID" }

    Invoke-WebRequest `
      -Method POST `
      -Headers $headers `
      -ContentType: "application/json; charset=utf-8" `
      -InFile request.json `
      -Uri "https://REGION_ID-vision.googleapis.com/v1/projects/PROJECT_ID/locations/REGION_ID/images:annotate" | Select-Object -Expand Content

If the request is successful, the server returns a 200 OK HTTP status code and the response in JSON format.

Response: the JSON response has the same structure as the sample response shown earlier for the local image request.

Go

Before trying this sample, follow the Go setup instructions in the Vision quickstart using client libraries. For more information, see the Vision Go API reference documentation.

To authenticate to Vision, set up Application Default Credentials. For more information, see Set up authentication for a local development environment.

    import (
        "context"
        "fmt"

        vision "cloud.google.com/go/vision/apiv1"
        "google.golang.org/api/option"
    )

    // setEndpoint changes your endpoint.
    func setEndpoint(endpoint string) error {
        // endpoint := "eu-vision.googleapis.com:443"

        ctx := context.Background()
        client, err := vision.NewImageAnnotatorClient(ctx, option.WithEndpoint(endpoint))
        if err != nil {
            return fmt.Errorf("NewImageAnnotatorClient: %w", err)
        }
        defer client.Close()

        return nil
    }

Java

Before trying this sample, follow the Java setup instructions in the Vision API quickstart using client libraries. For more information, see the Vision API Java reference documentation.

    ImageAnnotatorSettings settings =
        ImageAnnotatorSettings.newBuilder().setEndpoint("eu-vision.googleapis.com:443").build();

    // Initialize client that will be used to send requests. This client only needs to be created
    // once, and can be reused for multiple requests. After completing all of your requests, call
    // the "close" method on the client to safely clean up any remaining background resources.
    ImageAnnotatorClient client = ImageAnnotatorClient.create(settings);

Node.js

Before trying this sample, follow the Node.js setup instructions in the Vision quickstart using client libraries. For more information, see the Vision Node.js API reference documentation.

To authenticate to Vision, set up Application Default Credentials. For more information, see Set up authentication for a local development environment.

    // Imports the Google Cloud client library
    const vision = require('@google-cloud/vision');

    async function setEndpoint() {
      // Specifies the location of the api endpoint
      const clientOptions = {apiEndpoint: 'eu-vision.googleapis.com'};

      // Creates a client
      const client = new vision.ImageAnnotatorClient(clientOptions);

      // Performs text detection on the image file
      const [result] = await client.textDetection('./resources/wakeupcat.jpg');
      const labels = result.textAnnotations;
      console.log('Text:');
      labels.forEach(label => console.log(label.description));
    }
    setEndpoint();

Python

Before trying this sample, follow the Python setup instructions in the Vision quickstart using client libraries. For more information, see the Vision Python API reference documentation.

To authenticate to Vision, set up Application Default Credentials. For more information, see Set up authentication for a local development environment.

    from google.cloud import vision

    client_options = {"api_endpoint": "eu-vision.googleapis.com"}

    client = vision.ImageAnnotatorClient(client_options=client_options)

Try it

To try text detection and document text detection, send the following request body. It uses the sample image gs://cloud-samples-data/vision/handwriting_image.png; you can specify your own image in its place.

Request body:

    {
      "requests": [
        {
          "features": [
            {
              "type": "DOCUMENT_TEXT_DETECTION"
            }
          ],
          "image": {
            "source": {
              "imageUri": "gs://cloud-samples-data/vision/handwriting_image.png"
            }
          }
        }
      ]
    }