Evaluating models

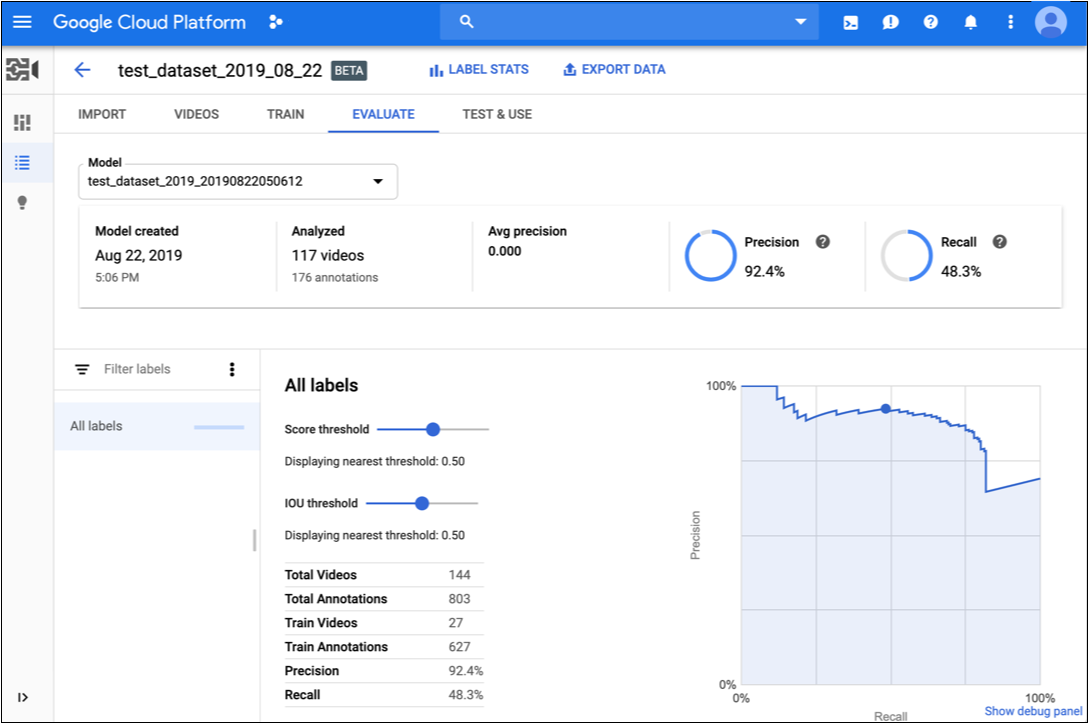

After training a model, AutoML Video Intelligence Object Tracking uses items from the TEST set to evaluate the quality and accuracy of the new model.

AutoML Video Intelligence Object Tracking provides an aggregate set of evaluation metrics indicating how well the model performs overall, as well as evaluation metrics for each category label, indicating how well the model performs for that label.

IoU: Intersection over Union, a metric used in object tracking to measure the overlap between a predicted and an actual bounding box for an object instance in a video frame. It is the area of the intersection of the two boxes divided by the area of their union, so it ranges from 0 (no overlap) to 1 (identical boxes). The closer the predicted bounding box is to the actual bounding box, the greater the intersection and the higher the IoU value.
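As an illustration of the IoU definition, here is a minimal Python sketch for two axis-aligned boxes. The `Box` type and its corner-coordinate convention `(x_min, y_min, x_max, y_max)` are assumptions made for this example; they are not AutoML's own bounding-box representation.

```python
from typing import NamedTuple


class Box(NamedTuple):
    """Hypothetical axis-aligned box given by corner coordinates."""
    x_min: float
    y_min: float
    x_max: float
    y_max: float

    def area(self) -> float:
        # Clamp at zero so an empty (non-overlapping) box has no area.
        return max(0.0, self.x_max - self.x_min) * max(0.0, self.y_max - self.y_min)


def iou(predicted: Box, actual: Box) -> float:
    """Intersection area divided by union area, in the range [0, 1]."""
    # The overlapping rectangle; it is empty when the boxes do not touch.
    overlap = Box(
        max(predicted.x_min, actual.x_min),
        max(predicted.y_min, actual.y_min),
        min(predicted.x_max, actual.x_max),
        min(predicted.y_max, actual.y_max),
    )
    intersection = overlap.area()
    union = predicted.area() + actual.area() - intersection
    return intersection / union if union > 0 else 0.0


# Two 2x2 boxes offset by one unit share a 1x1 square: IoU = 1 / 7.
print(iou(Box(0, 0, 2, 2), Box(1, 1, 3, 3)))
```

Subtracting the intersection once when forming the union avoids counting the shared area twice.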

AuPRC: Area under the precision/recall curve, also referred to as "average precision." Generally between 0.5 and 1.0. Higher values indicate more accurate models.
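To show what "area under the precision/recall curve" means numerically, here is a minimal sketch that takes `(recall, precision)` points, for example one per confidence threshold, and sums the step-wise area sum_k (R_k - R_{k-1}) * P_k. The function name is hypothetical, and this is only one common way to compute average precision; the exact interpolation AutoML uses for its reported AuPRC is not described here, so treat this as an illustration of the idea rather than a reproduction of the reported value.

```python
def average_precision(points: list[tuple[float, float]]) -> float:
    """Step-wise area under a precision/recall curve.

    `points` holds (recall, precision) pairs. They are sorted by recall, and each
    step contributes (recall gained since the previous point) * precision.
    """
    ap = 0.0
    prev_recall = 0.0
    for recall, precision in sorted(points):
        ap += (recall - prev_recall) * precision
        prev_recall = recall
    return ap


curve = [(0.2, 1.0), (0.5, 0.8), (1.0, 0.6)]
print(average_precision(curve))  # 0.2*1.0 + 0.3*0.8 + 0.5*0.6, about 0.74
```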

The Confidence threshold curves show how different confidence thresholds affect precision, recall, and the true and false positive rates. Read about the relationship between precision and recall.
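To make the threshold trade-off concrete, the following sketch computes precision and recall at a single confidence threshold. It assumes each prediction has already been matched against the ground truth (for example, counted as a true positive when its IoU with an actual box exceeds some cutoff); the `Prediction` type and its fields are hypothetical. It covers precision and recall only, and returning 1.0 precision when no prediction survives the threshold is a convention chosen here, not an AutoML rule.

```python
from dataclasses import dataclass


@dataclass
class Prediction:
    confidence: float        # model score for a predicted bounding box
    is_true_positive: bool   # True if it matched an actual box (assumed precomputed)


def precision_recall_at(predictions: list[Prediction],
                        num_ground_truth: int,
                        threshold: float) -> tuple[float, float]:
    """Precision and recall when only predictions scoring >= threshold are kept."""
    kept = [p for p in predictions if p.confidence >= threshold]
    tp = sum(p.is_true_positive for p in kept)  # matched predictions
    fp = len(kept) - tp                         # unmatched predictions
    precision = tp / (tp + fp) if kept else 1.0
    recall = tp / num_ground_truth if num_ground_truth else 0.0
    return precision, recall


predictions = [
    Prediction(0.9, True), Prediction(0.8, True), Prediction(0.6, False),
    Prediction(0.4, True), Prediction(0.3, False),
]
for t in (0.7, 0.35):
    print(t, precision_recall_at(predictions, num_ground_truth=4, threshold=t))
```

Lowering the threshold from 0.7 to 0.35 in this made-up data raises recall from 0.5 to 0.75 while precision drops from 1.0 to 0.75, which is the trade-off the curves visualize.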

Use this data to evaluate your model's readiness. Low AuPRC scores, or low precision and recall scores, can indicate that your model needs additional training data or has inconsistent labels. A very high AuPRC score and perfect precision and recall can indicate that the data is too easy and that the model may not generalize well.

Get model evaluation values

Web UI

- Open the Models page in the AutoML Video Object Tracking UI.

- Click the row for the model you want to evaluate.

- Click the Evaluate tab.

If training has completed for the model, AutoML Video Object Tracking shows its evaluation metrics.

- To view metrics for a specific label, select the label name from the list of labels in the lower part of the page.

REST

Before using any of the request data, make the following replacements:

- model-id: the identifier for your model.

- project-number: the number of your project.

- location-id: the Cloud region where annotation should take place. Supported cloud regions are: us-east1, us-west1, europe-west1, asia-east1. If no region is specified, a region will be determined based on video file location.

HTTP method and URL:

GET https://automl.googleapis.com/v1beta1/projects/project-number/locations/location-id/models/model-id/modelEvaluations

To send your request, choose one of these options:

curl

Execute the following command:

```shell
curl -X GET \
  -H "Authorization: Bearer $(gcloud auth print-access-token)" \
  -H "x-goog-user-project: project-number" \
  "https://automl.googleapis.com/v1beta1/projects/project-number/locations/location-id/models/model-id/modelEvaluations"
```

PowerShell

Execute the following command:

```powershell
$cred = gcloud auth print-access-token
$headers = @{ "Authorization" = "Bearer $cred"; "x-goog-user-project" = "project-number" }

Invoke-WebRequest `
  -Method GET `
  -Headers $headers `
  -Uri "https://automl.googleapis.com/v1beta1/projects/project-number/locations/location-id/models/model-id/modelEvaluations" | Select-Object -Expand Content
```


Java

To authenticate to AutoML Video Object Tracking, set up Application Default Credentials. For more information, see Set up authentication for a local development environment.

Node.js

To authenticate to AutoML Video Object Tracking, set up Application Default Credentials. For more information, see Set up authentication for a local development environment.

Python

To authenticate to AutoML Video Object Tracking, set up Application Default Credentials. For more information, see Set up authentication for a local development environment.
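If you prefer not to install a client library, here is a minimal sketch that sends the same REST request using only Python's standard library. It assumes Python 3.9+ and the gcloud CLI on your PATH, and it uses a `gcloud auth print-access-token` token (as the curl example does) rather than Application Default Credentials. The function names are hypothetical. The URL follows the `projects.locations.models.modelEvaluations.list` pattern in the v1beta1 REST reference, and the headers match the curl example.

```python
import json
import subprocess
import urllib.request

API_ROOT = "https://automl.googleapis.com/v1beta1"


def build_list_evaluations_request(project_number: str, location_id: str,
                                   model_id: str, access_token: str) -> urllib.request.Request:
    """Build (but do not send) the GET request that lists a model's evaluations."""
    url = (f"{API_ROOT}/projects/{project_number}/locations/{location_id}"
           f"/models/{model_id}/modelEvaluations")
    headers = {
        "Authorization": f"Bearer {access_token}",
        "x-goog-user-project": project_number,  # bill/quota to this project
    }
    return urllib.request.Request(url, headers=headers, method="GET")


def list_model_evaluations(project_number: str, location_id: str, model_id: str) -> dict:
    """Get a token from gcloud, send the request, and return the parsed JSON body."""
    token = subprocess.run(
        ["gcloud", "auth", "print-access-token"],
        capture_output=True, text=True, check=True,
    ).stdout.strip()
    request = build_list_evaluations_request(project_number, location_id, model_id, token)
    with urllib.request.urlopen(request) as response:
        return json.load(response)
```

Keeping request construction separate from sending makes the URL and headers easy to inspect without network access. To run it, call `list_model_evaluations("project-number", "location-id", "model-id")` with your own values.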

Iterate on your model

If you're not happy with the quality levels, you can go back to earlier steps to improve the quality:

- You might need to add different types of videos, such as wider-angle shots, higher or lower resolutions, or different points of view.

- Consider removing labels altogether if you don't have enough training videos for them.

- Remember that machines can't read your label names; to them, a label is just a random string of letters. If you have one label that says "door" and another that says "door_with_knob", the machine has no way of figuring out the nuance other than through the videos you provide.

- Augment your data with more examples of true positives and negatives. Especially important examples are the ones that are close to the decision boundary.
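One way to find examples close to the decision boundary is to rank predictions by how near their confidence is to the threshold you plan to use, then review those videos or collect similar footage. This is a minimal sketch; the `(item, score)` pairs, the 0.5 threshold, and the 0.1 margin are hypothetical choices for illustration, not AutoML defaults.

```python
def near_boundary(scored_items: list[tuple[str, float]],
                  threshold: float = 0.5,
                  margin: float = 0.1) -> list[tuple[str, float]]:
    """Return (item, score) pairs within `margin` of `threshold`, closest first."""
    close = [(item, score) for item, score in scored_items
             if abs(score - threshold) <= margin]
    return sorted(close, key=lambda pair: abs(pair[1] - threshold))


# Hypothetical frame identifiers with model confidence scores.
items = [
    ("clip_a/frame_12", 0.93),
    ("clip_b/frame_40", 0.55),
    ("clip_c/frame_7", 0.47),
    ("clip_d/frame_3", 0.12),
]
print(near_boundary(items))  # clip_c/frame_7 first, then clip_b/frame_40
```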

Once you've made changes, train and evaluate a new model until you reach a high enough quality level.