
This page describes how to group tasks in your Airflow pipelines using the following design patterns:

- Grouping tasks in the DAG graph.

- Triggering child DAGs from a parent DAG.

- Grouping tasks with the TaskGroup operator.

Group tasks in the DAG graph

To group tasks in certain phases of your pipeline, you can use relationships between the tasks in your DAG file.

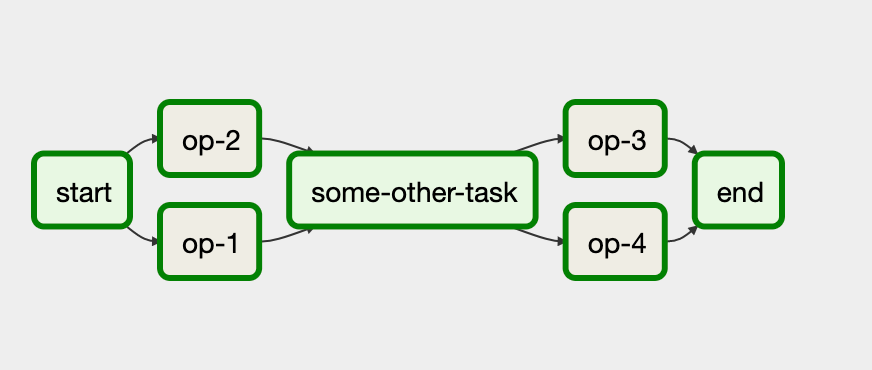

Consider the following example:

In this workflow, tasks op-1 and op-2 run together after the initial task start. You can achieve this by grouping the tasks with the statement start >> [task_1, task_2], where task_1 and task_2 are the Python variables that hold tasks op-1 and op-2.

The following example provides a complete implementation of this DAG:

Trigger child DAGs from a parent DAG

You can trigger one DAG from another DAG with the TriggerDagRunOperator operator.

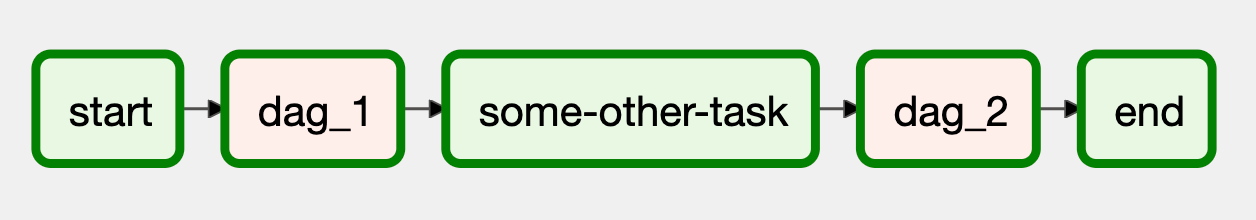

Consider the following example:

In this workflow, the blocks dag_1 and dag_2 represent series of tasks that are grouped together in a separate DAG in the Cloud Composer environment.

The implementation of this workflow requires two separate DAG files. The controlling DAG file looks like the following:

The implementation of the child DAG, which is triggered by the controlling DAG, looks like the following:

You must upload both DAG files to your Cloud Composer environment for the DAG to work.

Group tasks with the TaskGroup operator

You can use the TaskGroup operator to group tasks together in your DAG. Tasks defined within a TaskGroup block are still part of the main DAG.

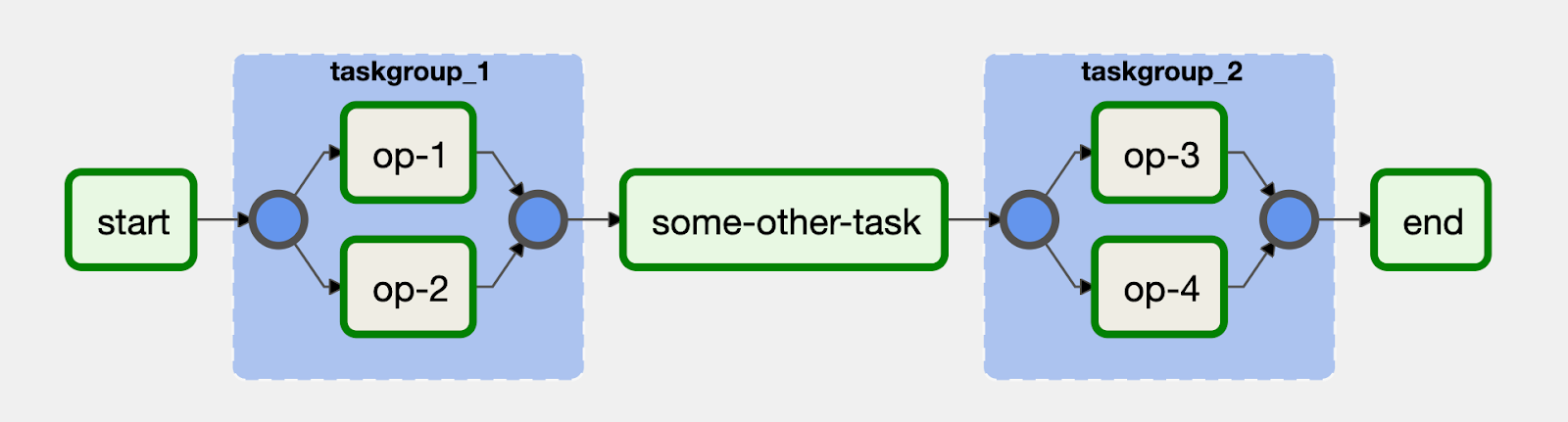

Consider the following example:

Tasks op-1 and op-2 are grouped together in a block with ID taskgroup_1. An implementation of this workflow looks like the following code: