Cloud Composer 3 | Cloud Composer 2 | Cloud Composer 1

This page describes how to group tasks in Airflow pipelines using the following design patterns:

- Grouping tasks in the DAG graph.

- Triggering child DAGs from a parent DAG.

- Grouping tasks with TaskGroup.

Group tasks in the DAG graph

To group tasks into specific stages of your pipeline, you can use the dependencies between tasks in your DAG file.

Consider the following example:

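In this workflow, tasks op-1 and op-2 both run after the initial task start completes, and they can run in parallel. You can achieve this by grouping the tasks in a list with the statement start >> [task_1, task_2], where task_1 and task_2 are the variables that hold the op-1 and op-2 tasks.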

The following example provides a complete implementation of this DAG:


Trigger child DAGs from a parent DAG

You can trigger one DAG from another DAG with TriggerDagRunOperator.

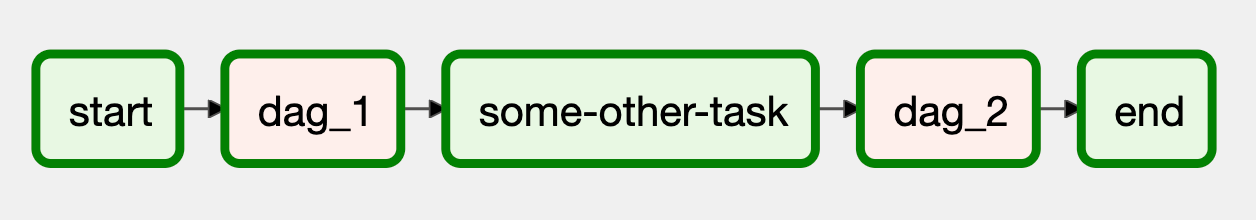

Consider the following example:

In this workflow, the dag_1 and dag_2 blocks each represent a series of tasks grouped into a separate DAG in the Cloud Composer environment.

Implementing this workflow requires two separate DAG files. The controller DAG file looks like the following:


The implementation of the child DAG, which the controller DAG triggers, looks like the following:


For the workflow to run correctly, you must upload both DAG files to your Cloud Composer environment.

Group tasks with TaskGroup

This approach works only in Airflow 2. You can use TaskGroup to group tasks in your DAG. Tasks defined inside a TaskGroup block are still part of the main DAG; they are not moved into a separate DAG.

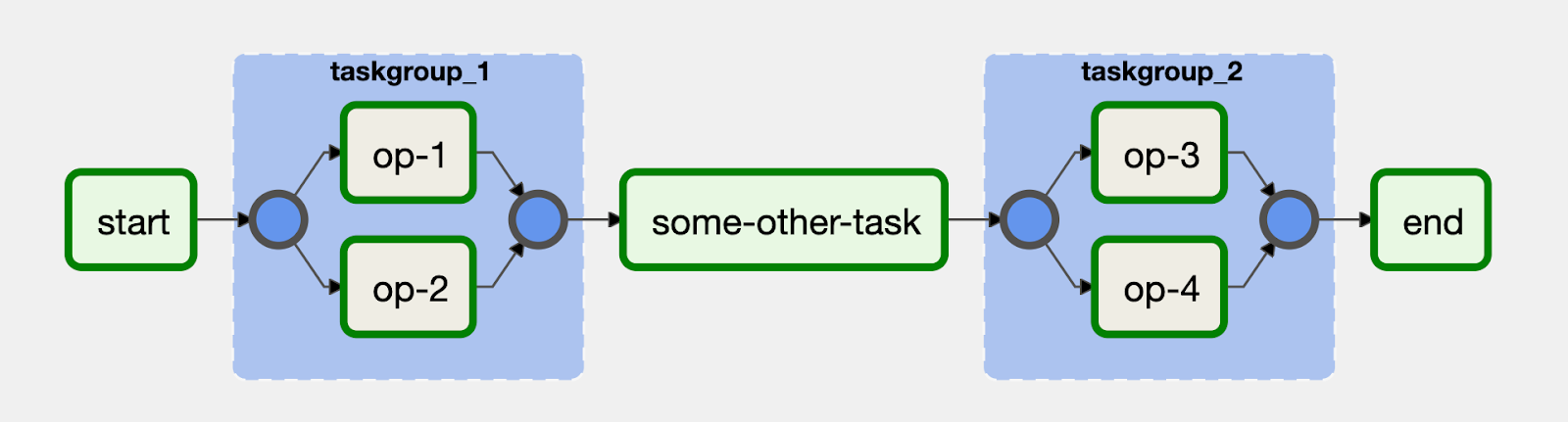

Consider the following example:

Tasks op-1 and op-2 are grouped into a block with the ID taskgroup_1. An implementation of this workflow looks like the following code: