Scaling data access to 10Tbps (yes, terabits) with Lustre

Dean Hildebrand

Technical Director, Office of the CTO, Google Cloud

Ilias Katsardis

Sr. Product Manager, Cluster Director, Google Cloud

Data growth is a massive challenge for all organizations, but just as important is ensuring access to the data doesn’t become a bottleneck. For high performance computing (HPC) and ML/AI applications, reducing time to insight is key, and so finding the right storage solution that can support low latency, high bandwidth data access at an affordable price is critical. Today, we are excited to announce that Google Cloud, working with its partners NAG and DDN, demonstrated the highest performing Lustre file system on the IO500 ranking of the fastest HPC storage systems.

About the IO500 list

IO500 is an HPC storage benchmark that seeks to capture the full picture of an HPC storage system by calculating a score based upon a wide range of storage characteristics. The IO500 website captures all of the technical details of each submission and allows users to understand the strengths and weaknesses of each storage system. For example, it is easy to see if a specific storage system excels at bandwidth, metadata operations, small files, or all of the above. This gives IT organizations realistic performance expectations and helps administrators select their next HPC storage system.
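To make the idea of a single combined score concrete, here is a minimal sketch of how an IO500-style score can be computed. It assumes, as we understand the published IO500 rules, that the overall score is the geometric mean of a bandwidth score (in GiB/s) and a metadata score (in kIOPS), each of which is itself a geometric mean over its individual test phases. The function names and the sample numbers are hypothetical and are not taken from any submission.

```python
import math


def geometric_mean(values):
    """Geometric mean of positive numbers, computed in log space."""
    if not values or any(v <= 0 for v in values):
        raise ValueError("geometric mean needs at least one positive value")
    return math.exp(sum(math.log(v) for v in values) / len(values))


def io500_style_score(bandwidth_gib_s, metadata_kiops):
    """Combine per-phase results into (bandwidth, metadata, overall) scores.

    Assumption: overall = sqrt(bandwidth_score * metadata_score), where each
    sub-score is the geometric mean of its phases.
    """
    bw = geometric_mean(bandwidth_gib_s)
    md = geometric_mean(metadata_kiops)
    return bw, md, math.sqrt(bw * md)


# Illustrative inputs only; these are NOT real benchmark results.
bw, md, total = io500_style_score(
    bandwidth_gib_s=[4.0, 1.0, 16.0, 4.0],
    metadata_kiops=[100.0, 400.0],
)
print(f"bandwidth={bw:.2f} GiB/s  metadata={md:.2f} kIOPS  score={total:.2f}")
```

Because a geometric mean is pulled down sharply by any weak phase, a system cannot reach a high score on bandwidth alone; it also has to do well on metadata and small-file tests.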

At Google Cloud, we appreciate the openness of the IO500, since all configurations it evaluates are readily available to all organizations. Even though most users don’t need to deploy at extreme scale, by looking through the details of the Google Cloud submissions, users can see what is possible and feel confident that we can meet their needs over the long haul. Google Cloud first participated in 2019 in an earlier collaboration with DDN, and at that time the goal was to demonstrate the capabilities of a Lustre system using Persistent Disk Standard (HDD) and Persistent Disk SSD. Lustre on Persistent Disk is a great choice for a long-term persistent storage system where data must be stored safely.

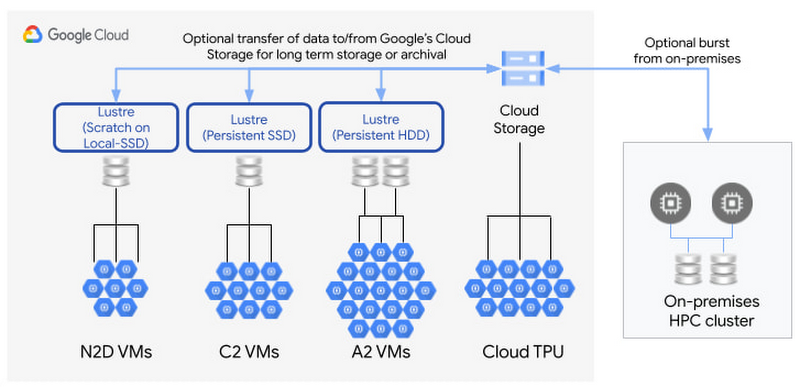

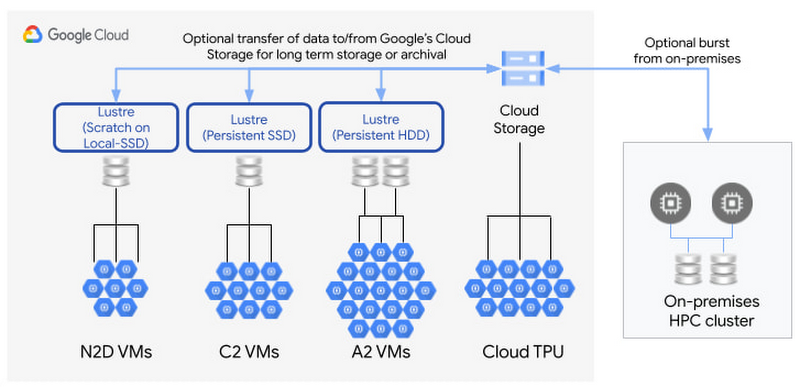

Since 2019, Google Cloud has released numerous new HPC capabilities such as 100 Gbps networking, larger and faster Local SSD volumes, and pre-tuned HPC VM Images. Working with our partners at NAG, who provided Cloud HPC integration and benchmarking expertise, along with DDN’s storage specialists, we decided to resubmit to the IO500 this year to demonstrate how these new capabilities can help users deploy an extreme scale scratch storage system. When using scratch storage, the goal is to go fast for the entire runtime of an application, but initial data must first be copied into the system and the final results stored persistently elsewhere. For example, all data can start in Cloud Storage, be transferred into the Lustre storage system for the run of a job, and then the results can be copied back to Cloud Storage.
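The stage-in, run, stage-out cycle described above can be sketched as a small Python helper. This is only an illustration under stated assumptions: the bucket name, the Lustre mount point `/mnt/lustre`, and the `./simulate` job command are hypothetical, and the copies use the `gcloud storage cp --recursive` command. The script defaults to a dry run that only prints the commands.

```python
import shlex
import subprocess


def build_stage_plan(input_uri, scratch_dir, job_cmd, results_uri):
    """Return the three commands of a stage-in / run / stage-out cycle."""
    return [
        # 1. Copy the initial data from Cloud Storage into Lustre scratch.
        ["gcloud", "storage", "cp", "--recursive", input_uri, f"{scratch_dir}/input"],
        # 2. Run the job against the fast scratch file system.
        list(job_cmd),
        # 3. Copy the final results back to persistent Cloud Storage.
        ["gcloud", "storage", "cp", "--recursive", f"{scratch_dir}/output", results_uri],
    ]


def run_plan(plan, dry_run=True):
    """Print each command; execute it only when dry_run is False."""
    for cmd in plan:
        print(shlex.join(cmd))
        if not dry_run:
            subprocess.run(cmd, check=True)  # stop at the first failing step


# Hypothetical names throughout: bucket, mount point, and job binary.
scratch = "/mnt/lustre/job-001"
plan = build_stage_plan(
    input_uri="gs://example-bucket/dataset",
    scratch_dir=scratch,
    job_cmd=["./simulate", "--in", f"{scratch}/input", "--out", f"{scratch}/output"],
    results_uri="gs://example-bucket/results/job-001",
)
run_plan(plan)  # dry run: prints the three commands without executing them
```

Keeping the plan as plain data makes it easy to review or hand to a job scheduler before anything is copied, which matters because a scratch file system is not where the only copy of the results should live.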

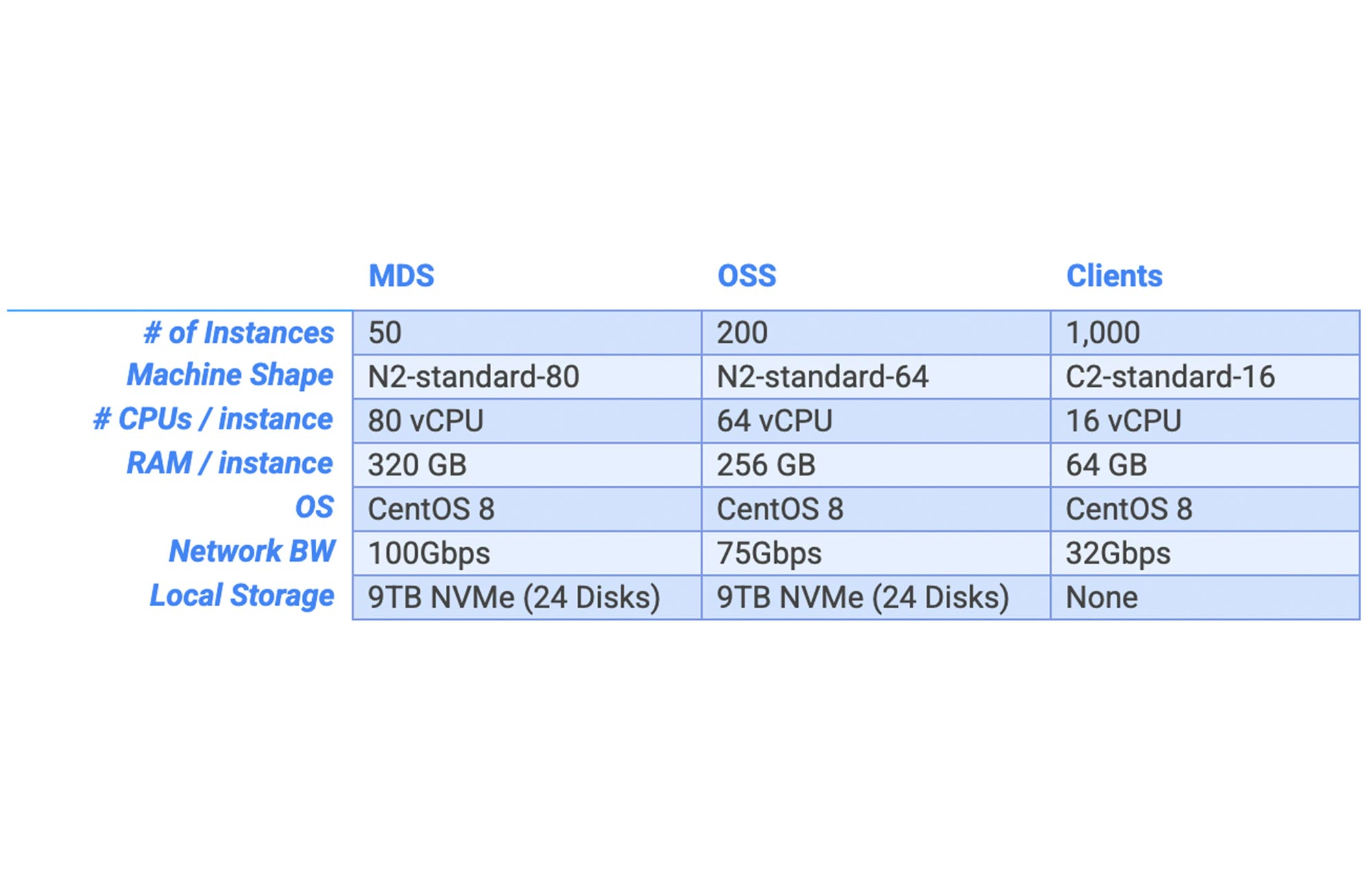

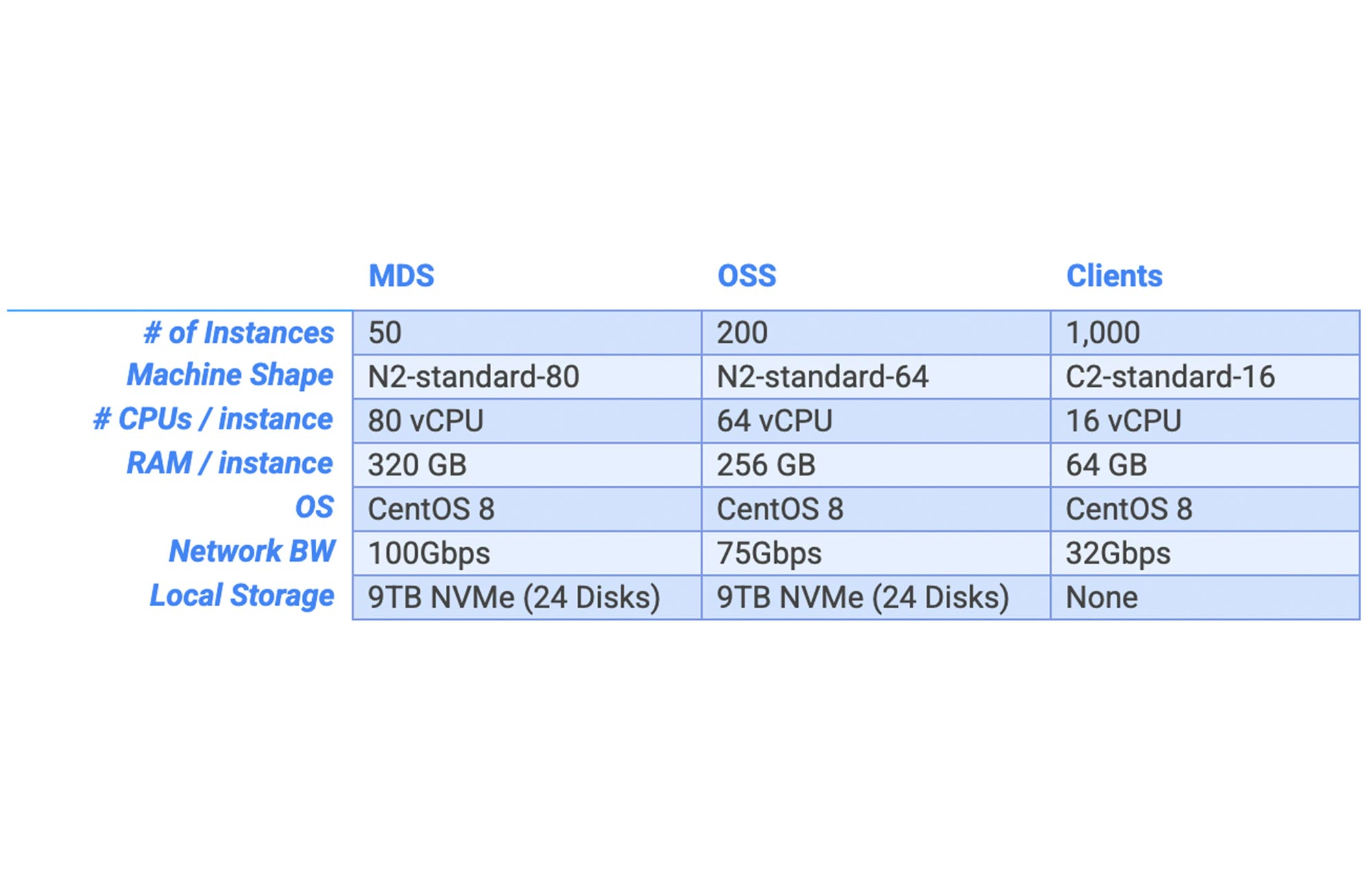

We’re proud to report that our latest submission ranked 8th and is currently the highest-ranked Lustre storage system on the list, quite a feat considering Lustre is one of the most widely deployed HPC file systems in the world. Our submission deployed a 1.8PB Lustre file system on 200 N2 VM instances with Local-SSD for the storage servers (Lustre OSSs), 50 N2 VM instances with Local-SSD for the metadata servers (Lustre MDSs), and 1,000 C2 VM instances using the HPC Image for the compute nodes (Lustre clients). The storage servers used 75Gbps networking (to match the read bandwidth of the SSD).
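A quick back-of-the-envelope calculation shows how the figures quoted above relate to the 10Tbps in the title. The sketch below uses only numbers from this post and assumes decimal units (1 PB = 1,000 TB, 1 Tbps = 1,000 Gbps). The aggregate NIC figure is a theoretical ceiling on storage-server network bandwidth, not a measured result; the variable names are ours.

```python
# Figures quoted in the post.
OSS_COUNT = 200          # storage servers (Lustre OSSs)
OSS_NIC_GBPS = 75        # network bandwidth per storage server
CAPACITY_TB = 1800       # 1.8 PB file system, in decimal TB
CLIENT_COUNT = 1000      # compute nodes (Lustre clients)
HEADLINE_TBPS = 10       # figure from the title

# Ceiling on aggregate storage-server network bandwidth.
aggregate_tbps = OSS_COUNT * OSS_NIC_GBPS / 1000   # terabits per second
aggregate_tb_per_s = aggregate_tbps / 8            # terabytes per second

# Local SSD capacity each storage server contributes.
capacity_per_oss_tb = CAPACITY_TB / OSS_COUNT

# Average share of the headline bandwidth per client.
per_client_gbps = HEADLINE_TBPS * 1000 / CLIENT_COUNT

print(f"aggregate OSS network ceiling: {aggregate_tbps} Tbps "
      f"({aggregate_tb_per_s} TB/s)")
print(f"capacity per storage server:   {capacity_per_oss_tb} TB")
print(f"headline bandwidth per client: {per_client_gbps} Gbps")
```

Under these assumptions the storage servers offer a 15 Tbps network ceiling, so a 10Tbps result uses roughly two thirds of it, and each of the 1,000 clients needs to sustain about 10 Gbps on average.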

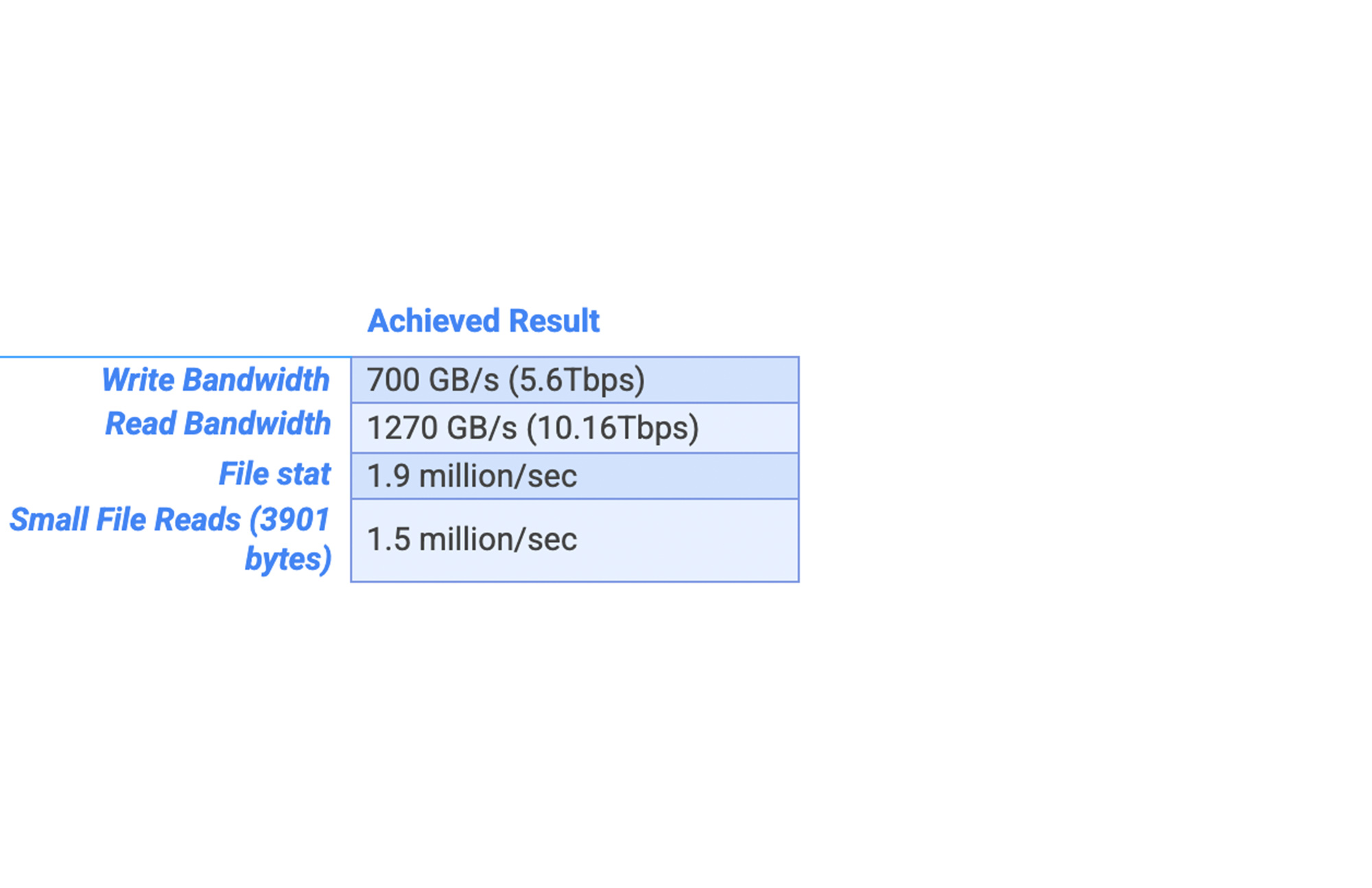

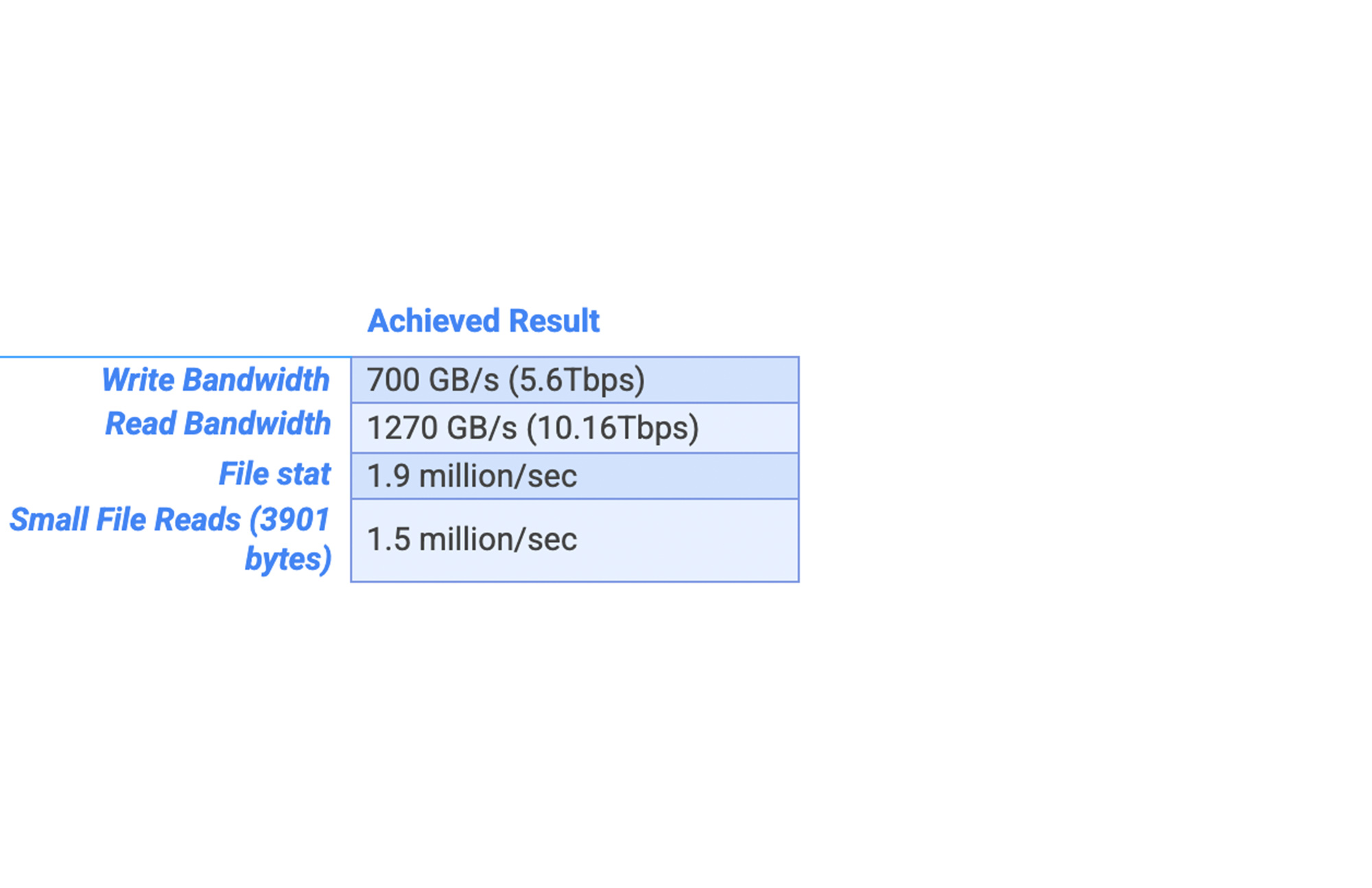

All of the performance details are on the IO500 website, but some key results include:

On read bandwidth, this is a 12x improvement over our submission in 2019 using Persistent Disk, which really demonstrates the potential for deploying scratch file systems in the cloud.

Further, Lustre on Google Cloud is one of only three systems demonstrating greater than 1TB/s, indicating that even high bandwidth (and not just IOPS) remains challenging for many HPC storage deployments.

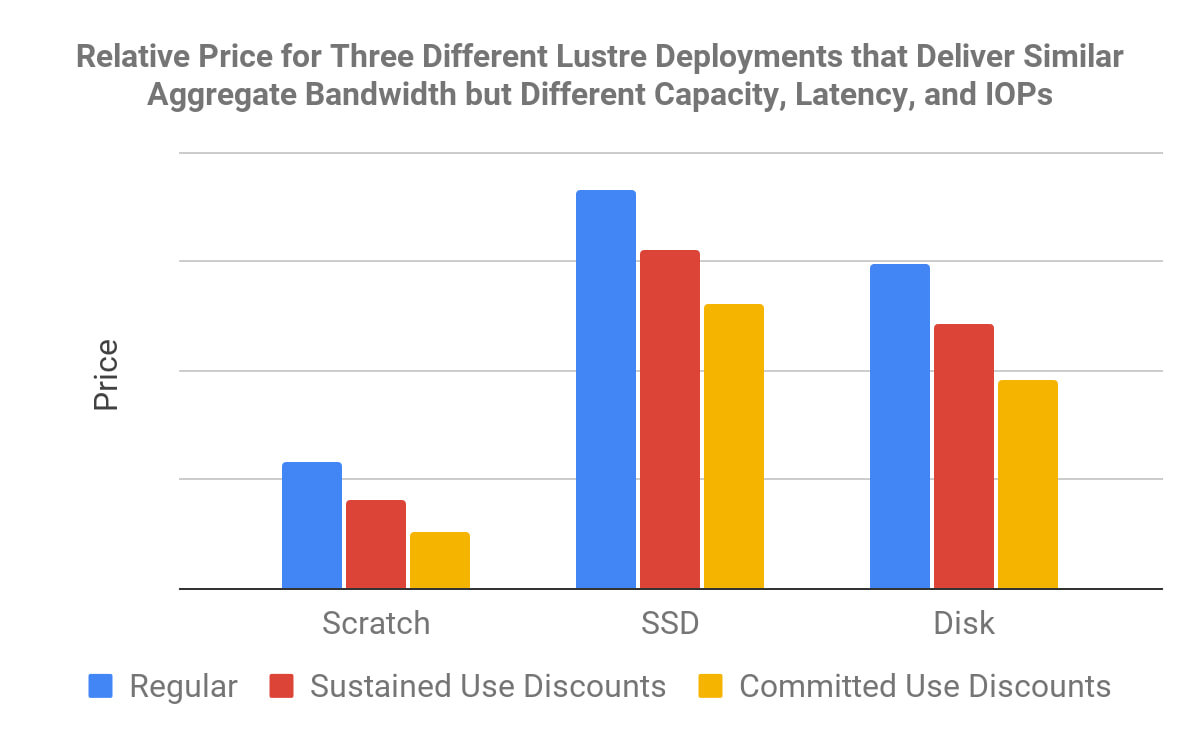

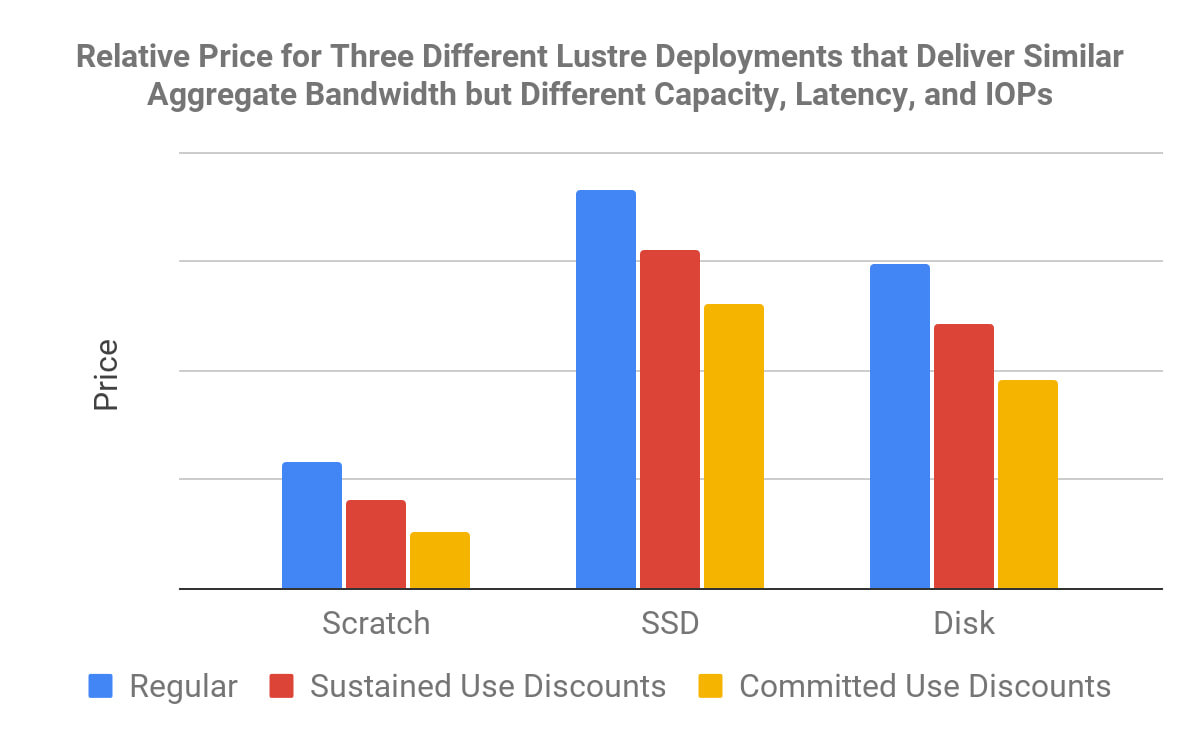

Each of our three IO500 submissions demonstrates that while it is possible to build a single monolithic extremely-fast Lustre file system on Google Cloud, it can be more cost effective to tailor the compute/networking/storage deployment aspects to the needs of your application. For example, different levels of price/performance can be achieved by leveraging the range of Persistent Disk options for long lasting persistent deployments and optimizing the ratio of Local-SSD capacity to the number of vCPUs for relatively short lived deployments that really need to go fast.

Real-world applications

Full automation of Lustre on Google Cloud enables HPC workloads such as data-intensive ML training using Compute Engine A2 instances, highly available and high bandwidth SAS Grid analytics, and other HPC applications that need either high-bandwidth access to large files or low latency access to millions to billions of small files.

HPC in the cloud is growing faster than the HPC industry overall, which makes sense when you think about how easy it is to spin up very large compute and storage clusters in the cloud in mere minutes. On-premises HPC deployments typically take many months to plan, procure hardware and software, install and configure the infrastructure, and optimize the application. By contrast, Google has demonstrated the highest performing Lustre deployment on the IO500 list, and it took only a few minutes and a few keystrokes to deploy. Whether for born-in-the-cloud HPC applications, full migration of HPC applications to the cloud, hybrid and burst deployments, or simply for POC evaluations to improve on-prem supercomputers, the elasticity, pay-per-use pricing, and lower maintenance cost of HPC in the cloud offer many benefits.

When deploying both new and existing high performance applications to the cloud, there are a number of decisions to consider, including rightsizing VMs, deployment and scheduling tools, monitoring frameworks and much more. One of the most significant decisions is the storage architecture, as there are many great options in Google Cloud. When high performance storage is done right, it's magical, flowing data seamlessly to compute nodes at an astounding speed. But when HPC storage is done wrong, it can limit time to insight (and even grind all progress to a halt), cause many management headaches, and be unnecessarily expensive.

Parallel file systems such as Lustre fill a critical need for many HPC applications on Google Cloud by balancing many of the benefits of both Cloud Storage and cloud-based NAS solutions such as Filestore. Cloud Storage effortlessly scales beyond petabytes of data at very high bandwidth and at a very low cost per byte, but it requires the use of Cloud Storage APIs and/or libraries, incurs extra operation charges, and has higher latency relative to on-prem storage systems. NAS filers like Filestore include robust enterprise security and data management features, have very low latency, and carry no per-operation charges, all while supporting NFS/SMB so that applications can move seamlessly from laptops and on-premises systems to the cloud. But users must be mindful of the lack of parallel I/O (which can constrain maximum performance), relatively low capacity limits (currently up to 100TB per volume), and the relatively high cost as compared to Cloud Storage.

DDN EXAScaler on Google Cloud, an enterprise version of Lustre by the Lustre open-source maintainers at DDN, delivers a balance of both Cloud Storage and NAS filer storage. EXAScaler enables full POSIX-based file APIs and low-latency access, but also scales to petabytes. As shown by our performance results on the IO500, DDN EXAScaler on Google Cloud can scale to TB/s of bandwidth and millions of metadata operations per second. Because the features are balanced, the price also ends up being balanced and typically falls somewhere between the cost of using Cloud Storage and the cost of using NAS (although this is highly dependent on the type of storage).
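The trade-offs in the last two paragraphs can be condensed into a rough first-pass rule of thumb. The sketch below is a deliberate simplification that encodes only the criteria mentioned in this post (POSIX access, parallel I/O, and the 100TB per-volume Filestore limit quoted above, which may have changed since); the function name and its parameters are hypothetical, and real decisions should also weigh cost, latency, and data management needs.

```python
FILESTORE_MAX_TB = 100  # per-volume limit quoted in the post; may be outdated


def suggest_storage(needs_posix, capacity_tb, needs_parallel_io):
    """Return a first-guess storage option based on the post's criteria."""
    if not needs_posix:
        # The application can use Cloud Storage APIs or libraries directly.
        return "Cloud Storage"
    if needs_parallel_io or capacity_tb > FILESTORE_MAX_TB:
        # POSIX access plus parallel I/O or petabyte-scale capacity.
        return "Lustre (DDN EXAScaler)"
    # POSIX access with modest capacity and no parallel I/O requirement.
    return "Filestore"


# Illustrative workloads, not real customer cases.
print(suggest_storage(needs_posix=False, capacity_tb=5000, needs_parallel_io=False))
print(suggest_storage(needs_posix=True, capacity_tb=20, needs_parallel_io=False))
print(suggest_storage(needs_posix=True, capacity_tb=1800, needs_parallel_io=True))
```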

We would like to thank the Lustre experts at DDN and NAG for their incredibly valuable insights in tuning and tailoring Google Cloud and Lustre for the IO500. An easy way to get started with DDN’s EXAScaler on Google Cloud is through the Marketplace console; more advanced users can get more control by using the Terraform deployment scripts. There’s also an easy-to-follow demo, and if you still need guidance, HPC experts here at Google as well as at NAG and DDN are here to help.