Running workloads on dedicated hardware just got better

David Cheng

Senior Product Manager

Kevan Miller

Staff Software Engineer

At Google Cloud, we repeatedly hear how important flexibility, openness, and choice are for your cloud migration and modernization journey. For enterprise customers that require dedicated hardware due to requirements such as performance isolation (for gaming), physical separation (for finance or healthcare), or license compliance (Windows workloads), we've improved the flexibility of our sole-tenant nodes to better meet your isolation, security, and compliance needs.

Sole-tenant nodes already let you mix, match, and right-size different VM shapes on each node, take advantage of live migration for maintenance events, and auto-schedule your instances onto a specific node, node group, or group of nodes using node affinity labels. Today, we are excited to announce the availability of three new features on sole-tenant nodes:

Live migration within a fixed node pool for bring your own license (BYOL) (beta)

Node group autoscaler (beta)

Migrate between sole- and multi-tenant nodes (GA)

These new features make it easier and more cost-effective to deploy, manage, and run workloads on dedicated Google Cloud hardware.

More refined maintenance controls for Windows BYOL

There are several ways to license Windows workloads to run on Google Cloud: you can purchase on-demand licenses, use License Mobility for Microsoft applications, or bring existing eligible server-bound licenses onto sole-tenant nodes. Sole-tenant nodes let you launch your instances onto physical Compute Engine servers that are dedicated exclusively to your workloads to comply with dedicated hardware requirements. At the same time, sole-tenant nodes also provide visibility into the underlying host hardware and support your license reporting through integration with BigQuery.

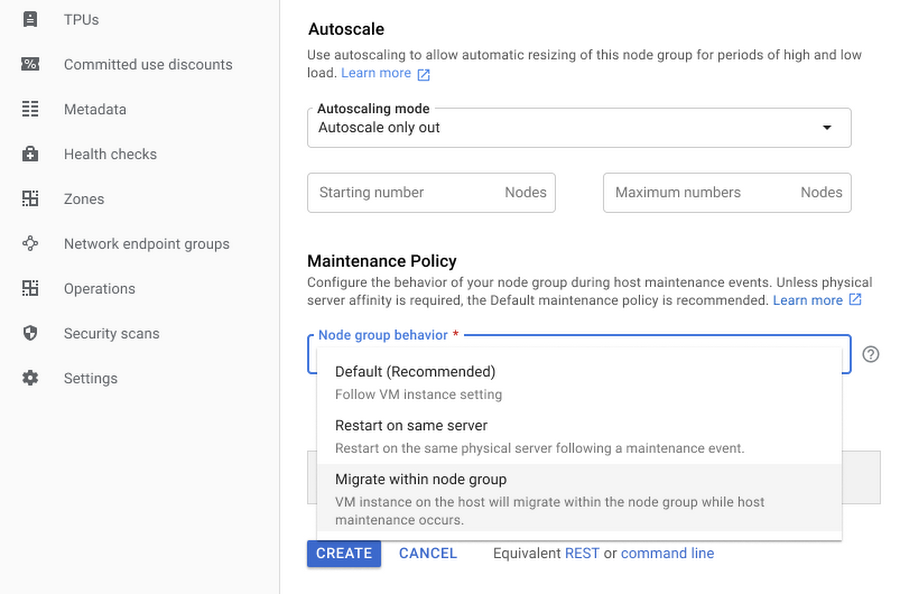

Now, sole-tenant nodes give you more control over your dedicated machines with a new node group maintenance policy. This setting specifies how the instances on your sole-tenant node group behave during host maintenance events. The new 'Migrate Within Node Group' setting is designed for per-core or per-processor licenses. It lets us install the latest kernel and security updates transparently and without VM downtime. At the same time, it keeps the number of unique physical cores your instances use to a minimum, so you avoid additional licensing costs.

Node groups configured with this setting live migrate instances within a fixed pool of sole-tenant nodes (dedicated servers) during host maintenance events. Because migrations are limited to that fixed pool of hosts, you can move your virtual machines dynamically between servers that are already licensed. This avoids license pollution, where licensed workloads land on servers you have not licensed. It also helps us keep you running on the newest kernel updates for better performance and security, and it enables continuous uptime through automatic migrations. Your server-bound bring-your-own-license workloads can now strike a better balance between licensing cost, workload uptime, and platform security.

In addition to the 'Migrate Within Node Group' setting, you can configure your node group with one of two other settings. The 'Default' setting moves instances to a new host during maintenance events and is recommended for workloads without server affinity requirements. The 'Restart In Place' setting terminates the instances and restarts them on the same physical server after host maintenance events.
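To make the difference between the three settings concrete, here is a minimal toy model, assuming a group of two dedicated hosts and ignoring per-host capacity. The class, enum, and host names are hypothetical illustrations, not a Compute Engine API. The model tracks every host a VM has ever run on, which is a rough proxy for how many servers would need licensing.

```python
from dataclasses import dataclass, field
from enum import Enum


class MaintenancePolicy(Enum):
    # Hypothetical identifiers for the three settings described above.
    DEFAULT = "default"
    MIGRATE_WITHIN_NODE_GROUP = "migrate-within-node-group"
    RESTART_IN_PLACE = "restart-in-place"


@dataclass
class NodeGroup:
    """Toy model of a node group; not how Compute Engine implements maintenance."""

    policy: MaintenancePolicy
    nodes: list[str]             # fixed pool of dedicated hosts (assumes at least 2)
    placement: dict[str, str]    # VM name -> host it currently runs on
    hosts_ever_used: set[str] = field(default_factory=set)
    next_new_host: int = 0

    def __post_init__(self) -> None:
        self.hosts_ever_used.update(self.placement.values())

    def maintain(self, host: str) -> list[str]:
        """Simulate a maintenance event on `host`; return the VMs that were restarted."""
        restarted = []
        for vm, current in list(self.placement.items()):
            if current != host:
                continue
            if self.policy is MaintenancePolicy.RESTART_IN_PLACE:
                # Terminated, then restarted on the same physical server.
                restarted.append(vm)
            elif self.policy is MaintenancePolicy.MIGRATE_WITHIN_NODE_GROUP:
                # Live migrate to another host already in the fixed pool
                # (toy simplification: ignores whether that host has room).
                self.placement[vm] = next(n for n in self.nodes if n != host)
            else:
                # DEFAULT: live migrate to a brand-new physical host.
                self.next_new_host += 1
                self.placement[vm] = f"new-host-{self.next_new_host}"
        self.hosts_ever_used.update(self.placement.values())
        return restarted


if __name__ == "__main__":
    vms = {"vm-a": "host-1", "vm-b": "host-2"}
    for policy in MaintenancePolicy:
        group = NodeGroup(policy, ["host-1", "host-2"], dict(vms))
        restarted = group.maintain("host-1")
        print(policy.value, sorted(group.hosts_ever_used), restarted)
```

In this model, only the default policy adds a host (`new-host-1`) to the set of servers the VM has touched. 'Restart In Place' keeps the host set fixed but restarts the VM, and 'Migrate Within Node Group' keeps the host set fixed with no restart.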

For more information on node group maintenance policies, visit the bring your own license documentation.

Node group autoscaler

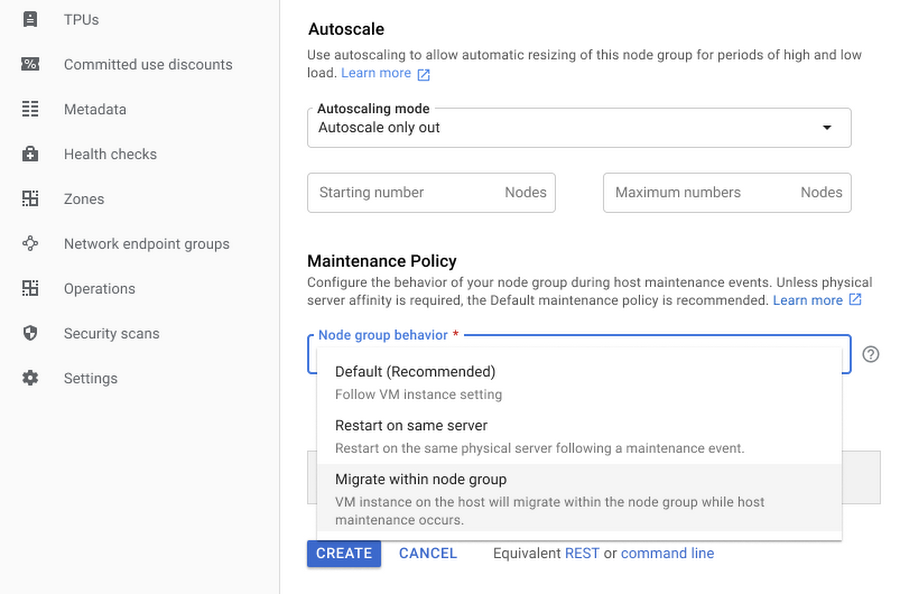

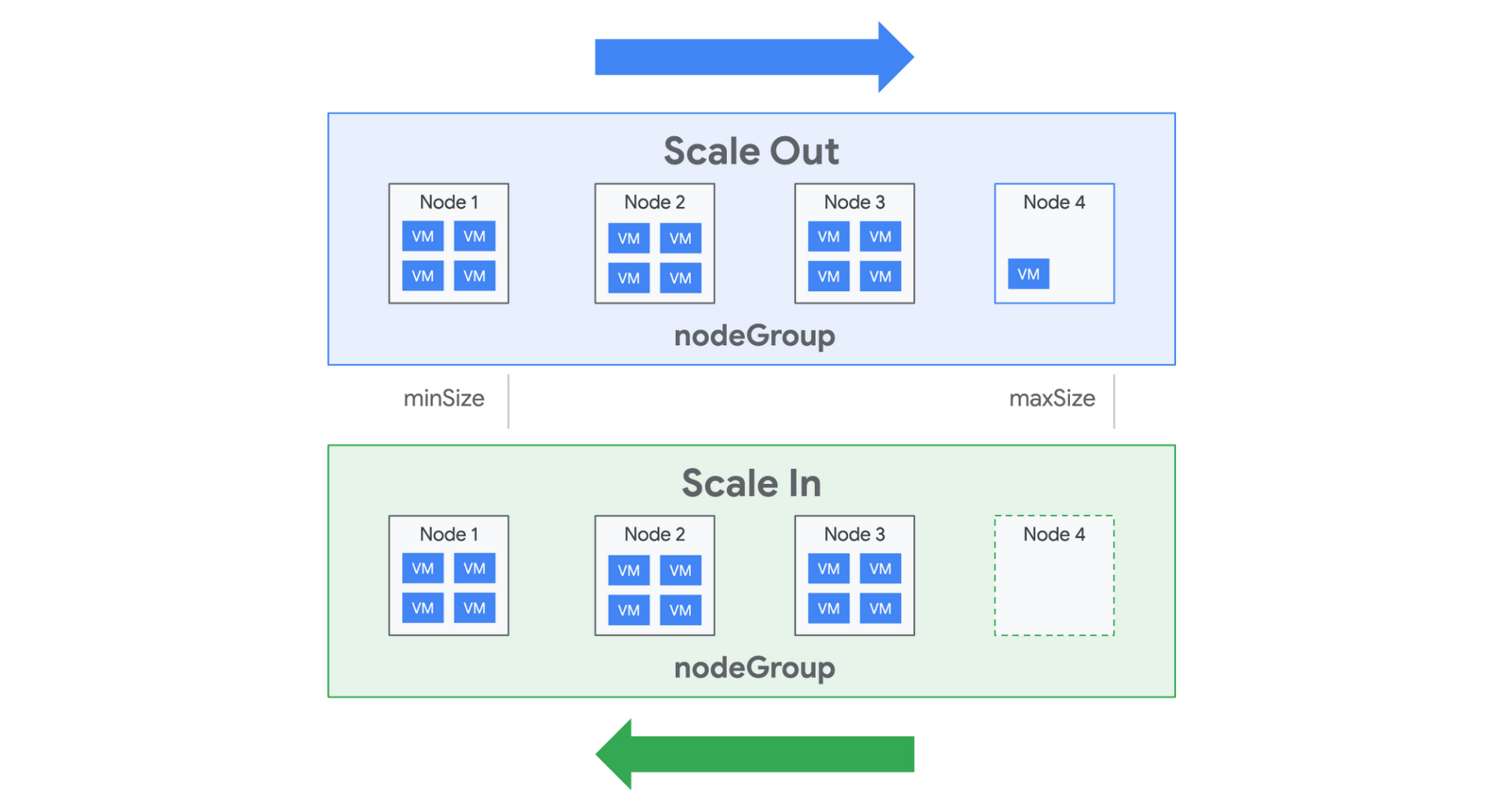

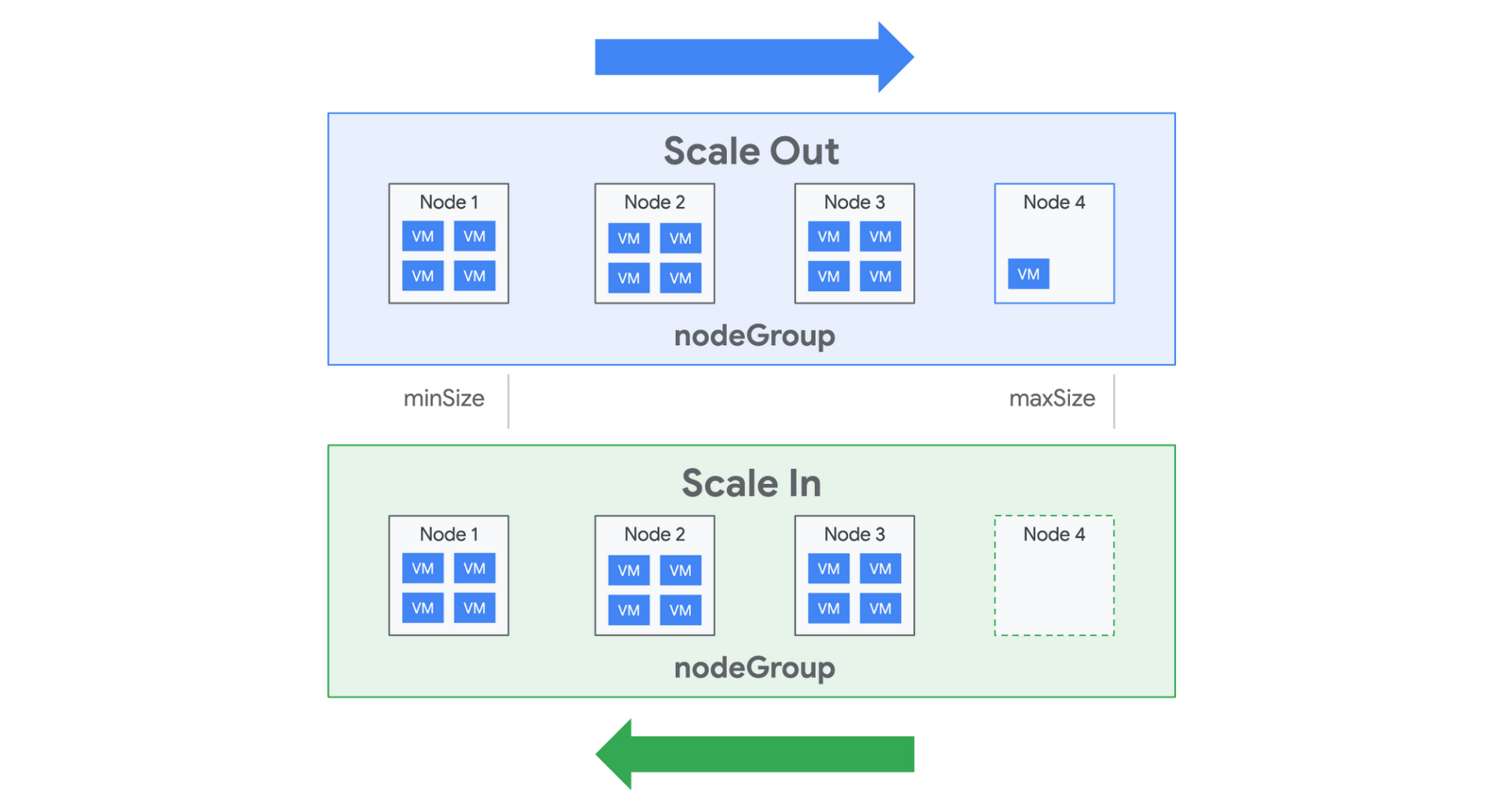

If you have dynamic capacity requirements, autoscaler for sole-tenant node groups automatically manages your pool of sole-tenant nodes, allowing you to scale your workloads without worrying about independently scaling your node group. Autoscaler for sole-tenant node groups increases the size of your node group when there is insufficient capacity to accommodate a new instance, and automatically decreases the size of a node group when it detects the presence of an empty node. This reduces scheduling overhead, increases resource utilization, and drives down your infrastructure costs.

The autoscaler lets you set minimum and maximum boundaries for your node group size, and it scales behind the scenes to accommodate your changing workload. For additional flexibility, autoscaling also supports a scale-out (increase-only) mode for monotonically increasing workloads or for workloads whose licenses are tied to physical cores or processors.
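The scaling behavior described above can be sketched as a toy model. It assumes each node has a fixed vCPU capacity and that VMs are placed on the first node with room. The mode names and the class are hypothetical, and this is not the actual autoscaler algorithm. The model shows the two triggers (insufficient capacity and an empty node), the min/max bounds, and why scale-out-only mode never gives nodes back.

```python
from dataclasses import dataclass, field
from enum import Enum


class AutoscalerMode(Enum):
    # Hypothetical names for the modes described above.
    OFF = "off"
    ON = "on"                          # scale out and scale in
    ONLY_SCALE_OUT = "only-scale-out"  # increase-only


@dataclass
class NodeGroupAutoscaler:
    """Toy model of a sole-tenant node group autoscaler (not the real algorithm)."""

    min_nodes: int
    max_nodes: int
    vcpus_per_node: int
    mode: AutoscalerMode = AutoscalerMode.ON
    nodes: list[dict[str, int]] = field(default_factory=list)  # VM name -> vCPUs

    def __post_init__(self) -> None:
        # The group starts at its minimum size.
        while len(self.nodes) < self.min_nodes:
            self.nodes.append({})

    def _free(self, node: dict[str, int]) -> int:
        return self.vcpus_per_node - sum(node.values())

    def create_vm(self, name: str, vcpus: int) -> bool:
        """Place a VM on the first node with room; scale out if none has room."""
        for node in self.nodes:
            if self._free(node) >= vcpus:
                node[name] = vcpus
                return True
        # Insufficient capacity: add a node, but never beyond max_nodes.
        can_grow = self.mode is not AutoscalerMode.OFF and len(self.nodes) < self.max_nodes
        if can_grow and vcpus <= self.vcpus_per_node:
            self.nodes.append({name: vcpus})
            return True
        return False  # no room and the group cannot grow

    def delete_vm(self, name: str) -> None:
        for node in self.nodes:
            node.pop(name, None)
        self._scale_in()

    def _scale_in(self) -> None:
        """Remove empty nodes down to min_nodes; only in ON mode, never in scale-out-only."""
        if self.mode is not AutoscalerMode.ON:
            return
        for empty in [n for n in self.nodes if not n]:
            if len(self.nodes) <= self.min_nodes:
                break
            self.nodes.remove(empty)
```

With `min_nodes=1`, `max_nodes=2`, and 8 vCPUs per node, a second 6-vCPU VM triggers a scale-out, and a third is refused at the maximum. Deleting a VM that leaves a node empty shrinks the group again, unless the mode is `ONLY_SCALE_OUT`.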

Migrating into sole tenancy

Finally, if you need additional agility for your workloads, you can now move instances into, between, and out of sole-tenant nodes. This allows you to achieve hardware isolation for existing VM instances based on your changing security, compliance, or performance isolation needs. You might want to move an instance into a sole-tenant node for special events like a big online shopping day, game launch, or any moment that requires peak performance and the highest level of control. The example below illustrates the steps for migrating an instance onto a sole-tenant node:

For details on rescheduling your instances onto dedicated hardware, see the documentation.
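Placement onto sole-tenant nodes is driven by the node affinity labels mentioned earlier. The minimal sketch below builds a node affinity list that targets a node group by name. It assumes the well-known label key `compute.googleapis.com/node-group-name` and the `IN`/`NOT_IN` operators described in the Compute Engine sole-tenancy documentation. The group name `my-node-group` and both helper functions are hypothetical. As we understand the rescheduling documentation, the usual flow is to stop the VM, apply the new affinity with `gcloud compute instances set-scheduling` (for example through `--node-affinity-file`), and then start the VM again. Confirm the exact steps in the documentation before relying on them.

```python
import json

# Well-known node affinity label key for targeting a node group by name
# (assumed from the sole-tenancy docs; verify against current documentation).
NODE_GROUP_LABEL = "compute.googleapis.com/node-group-name"


def node_affinity(group_names: list[str], operator: str = "IN") -> list[dict]:
    """Build a node affinity list that targets (IN) or avoids (NOT_IN) node groups."""
    if operator not in ("IN", "NOT_IN"):
        raise ValueError(f"unsupported operator: {operator}")
    return [{"key": NODE_GROUP_LABEL, "operator": operator, "values": list(group_names)}]


def write_affinity_file(path: str, group_names: list[str]) -> None:
    """Write the affinity list as JSON, e.g. for use as a node affinity file."""
    with open(path, "w", encoding="utf-8") as f:
        json.dump(node_affinity(group_names), f, indent=2)


if __name__ == "__main__":
    # "my-node-group" is a hypothetical node group name.
    print(json.dumps(node_affinity(["my-node-group"]), indent=2))
```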

Pricing and availability

Pricing for sole-tenant nodes remains simple: pay only for the nodes you use on a per-second basis, with a one-minute minimum charge. Sustained use discounts automatically apply, as do any new or existing committed use discounts. Visit the pricing page to learn more about sole-tenant nodes, as well as the regional availability page to find out if they are available in your region.
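As a worked example of per-second billing with a one-minute minimum, the sketch below computes the undiscounted cost of a single node's run. The hourly rate is a made-up placeholder, not a real sole-tenant price. The sketch deliberately leaves out sustained use and committed use discounts, because their rates are not given here.

```python
from decimal import Decimal

MINIMUM_SECONDS = 60  # one-minute minimum charge, as described above


def billable_seconds(runtime_seconds: int) -> int:
    """Per-second billing with a one-minute minimum (runtime must be positive)."""
    if runtime_seconds <= 0:
        raise ValueError("runtime must be positive")
    return max(runtime_seconds, MINIMUM_SECONDS)


def node_cost(runtime_seconds: int, hourly_rate: Decimal) -> Decimal:
    """Undiscounted cost of one node; hourly_rate is a hypothetical placeholder."""
    return hourly_rate * Decimal(billable_seconds(runtime_seconds)) / Decimal(3600)


if __name__ == "__main__":
    rate = Decimal("3.60")  # hypothetical rate: 3.60 per node-hour
    for seconds in (30, 90, 3600):
        print(seconds, billable_seconds(seconds), node_cost(seconds, rate))
```

At this placeholder rate, a 30-second run is billed as 60 seconds, a 90-second run as 90 seconds, and a full hour at the hourly rate.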