This page explains how to use a Docker container to host your Elastic Stack installation, and automatically send Security Command Center findings, assets, audit logs, and security sources to Elastic Stack. It also describes how to manage the exported data.

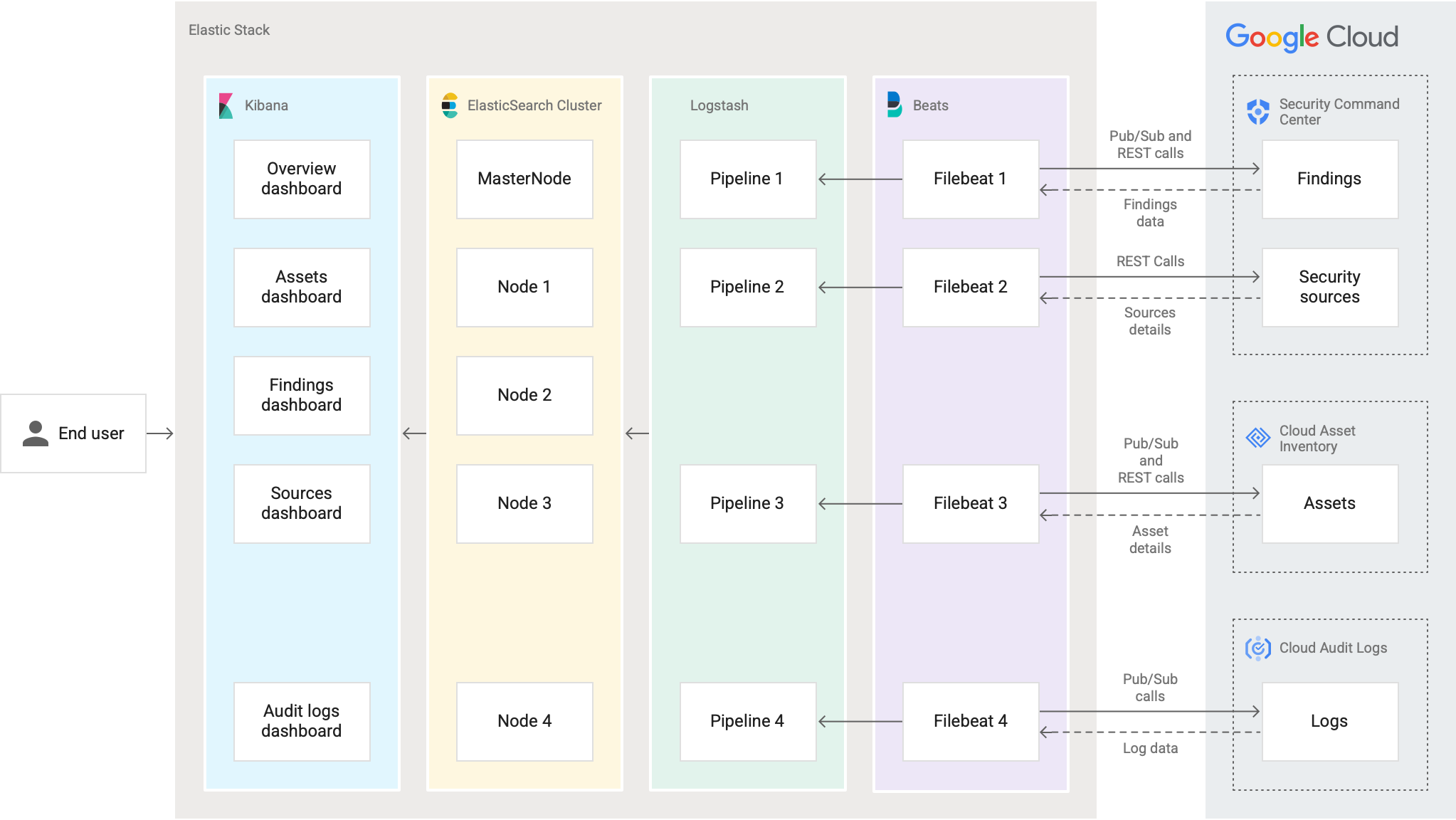

Docker is a platform for managing applications in containers. Elastic Stack is a security information and event management (SIEM) platform that ingests data from one or more sources and lets security teams manage responses to incidents and perform real-time analytics. The Elastic Stack configuration discussed in this guide includes four components:

- Filebeat: a lightweight agent installed on edge hosts, such as virtual machines (VMs), that can be configured to collect and forward data

- Logstash: a transformation service that ingests data, maps it into required fields, and forwards the results to Elasticsearch

- Elasticsearch: a search database engine that stores data

- Kibana: powers dashboards that let you visualize and analyze data

In this guide, you set up Docker, ensure that the required Security Command Center and Google Cloud services are properly configured, and use a custom module to send findings, assets, audit logs, and security sources to Elastic Stack.

The following figure illustrates the data path when using Elastic Stack with Security Command Center.

Configure authentication and authorization

Before connecting to Elastic Stack, you need to create an Identity and Access Management (IAM) service account in each Google Cloud organization that you want to connect and grant the account both the organization-level and project-level IAM roles that Elastic Stack needs.

Create a service account and grant IAM roles

The following steps use the Google Cloud console. For other methods, see the links at the end of this section.

Complete these steps for each Google Cloud organization that you want to import Security Command Center data from.

- In the same project in which you create your Pub/Sub topics, use the Service Accounts page in the Google Cloud console to create a service account. For instructions, see Creating and managing service accounts.

Grant the service account the following roles:

- Pub/Sub Admin (roles/pubsub.admin)

- Cloud Asset Owner (roles/cloudasset.owner)

Copy the name of the service account that you just created.

Use the project selector in the Google Cloud console to switch to the organization level.

Open the IAM page for the organization.

On the IAM page, click Grant access. The grant access panel opens.

In the Grant access panel, complete the following steps:

- In the Add principals section in the New principals field, paste the name of the service account.

In the Assign roles section, use the Role field to grant the following IAM roles to the service account:

- Security Center Admin Editor (roles/securitycenter.adminEditor)

- Security Center Notification Configurations Editor (roles/securitycenter.notificationConfigEditor)

- Organization Viewer (roles/resourcemanager.organizationViewer)

- Cloud Asset Viewer (roles/cloudasset.viewer)

- Logs Configuration Writer (roles/logging.configWriter)

Click Save. The service account appears on the Permissions tab of the IAM page under View by principals.

By inheritance, the service account also becomes a principal in all child projects of the organization. The roles that are applicable at the project level are listed as inherited roles.
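As an alternative to the console steps above, the same role grants could be scripted with the gcloud CLI. The following is a minimal sketch that only builds the command lines so that they can be reviewed before anything is run. The helper name `iam_binding_commands` is hypothetical, and the project ID, organization ID, and service account email are placeholders that you supply.

```python
"""Build (but do not run) gcloud commands for the role grants described above."""

# Roles granted in the project that contains the Pub/Sub topics.
PROJECT_ROLES = ["roles/pubsub.admin", "roles/cloudasset.owner"]

# Roles granted at the organization level.
ORG_ROLES = [
    "roles/securitycenter.adminEditor",
    "roles/securitycenter.notificationConfigEditor",
    "roles/resourcemanager.organizationViewer",
    "roles/cloudasset.viewer",
    "roles/logging.configWriter",
]


def iam_binding_commands(project_id, org_id, sa_email):
    """Return one gcloud argument list per role binding (hypothetical helper)."""
    member = f"--member=serviceAccount:{sa_email}"
    commands = []
    for role in PROJECT_ROLES:
        commands.append(
            ["gcloud", "projects", "add-iam-policy-binding", project_id,
             member, f"--role={role}"]
        )
    for role in ORG_ROLES:
        commands.append(
            ["gcloud", "organizations", "add-iam-policy-binding", org_id,
             member, f"--role={role}"]
        )
    return commands


if __name__ == "__main__":
    # Placeholder values; replace them before using the printed commands.
    for cmd in iam_binding_commands(
        "PROJECT_ID", "ORGANIZATION_ID",
        "SA_NAME@PROJECT_ID.iam.gserviceaccount.com",
    ):
        print(" ".join(cmd))
```

Printing the commands rather than executing them keeps the sketch safe to run anywhere; each list can be passed to `subprocess.run` once the values have been checked.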

For more information about creating service accounts and granting roles, see the following topics:

Provide the credentials to Elastic Stack

Depending on where you are hosting Elastic Stack, how you provide the IAM credentials to Elastic Stack differs.

If you are hosting the Docker container in Google Cloud, consider the following:

Add the service account to the VM that will host your Kubernetes nodes. If you are using multiple Google Cloud organizations, add this service account to the other organizations and grant it the IAM roles that are described in steps 5 to 7 of Create a service account and grant IAM roles.

If you deploy the Docker container in a service perimeter, create the ingress and egress rules. For instructions, see Granting perimeter access in VPC Service Controls.

If you are hosting the Docker container in your on-premises environment, create a service account key for each Google Cloud organization. You need the service account keys in JSON format to complete this guide.

To learn about best practices for storing your service account keys securely, see Best practices for managing service account keys.

If you are installing the Docker container in another cloud, configure workload identity federation and download the credentials configuration files. If you are using multiple Google Cloud organizations, add this service account to the other organizations and grant it the IAM roles that are described in steps 5 to 7 of Create a service account and grant IAM roles.

Configure notifications

Complete these steps for each Google Cloud organization that you want to import Security Command Center data from.

Set up finding notifications as follows:

- Enable the Security Command Center API.

- Create a filter to export findings and assets.

- Create four Pub/Sub topics: one each for findings, resources, audit logs, and assets. The NotificationConfig must use the Pub/Sub topic that you create for findings.

You will need your organization ID, project ID, and Pub/Sub topic names from this task to configure Elastic Stack.

Enable the Cloud Asset API for your project.
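The API and topic setup in this section could also be prepared from the command line. The sketch below builds the gcloud commands for enabling the two APIs and creating the four Pub/Sub topics without running them. The helper name `setup_commands`, the `scc-elastic` topic prefix, and the resulting topic names are assumptions for illustration; use whatever topic names suit your project, and note them for the Elastic Stack configuration.

```python
"""Build (but do not run) gcloud commands for the API and topic setup above."""

# Service names of the Security Command Center API and the Cloud Asset API.
APIS = ["securitycenter.googleapis.com", "cloudasset.googleapis.com"]

# One topic for each kind of data listed in this section.
DATA_KINDS = ["findings", "resources", "audit-logs", "assets"]


def setup_commands(project_id, topic_prefix="scc-elastic"):
    """Return gcloud argument lists; the topic naming scheme is hypothetical."""
    commands = [
        ["gcloud", "services", "enable", *APIS, f"--project={project_id}"]
    ]
    for kind in DATA_KINDS:
        commands.append(
            ["gcloud", "pubsub", "topics", "create",
             f"{topic_prefix}-{kind}", f"--project={project_id}"]
        )
    return commands


if __name__ == "__main__":
    for cmd in setup_commands("PROJECT_ID"):
        print(" ".join(cmd))
```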

Install the Docker and Elasticsearch components

Follow these steps to install the Docker and Elasticsearch components in your environment.

Install Docker Engine and Docker Compose

You can install Docker for use on-premises or with a cloud provider. To get started, complete the following guides in Docker's product documentation:

Install Elasticsearch and Kibana

The Docker image that you installed in Install Docker includes Logstash and Filebeat. If you don't already have Elasticsearch and Kibana installed, use the following guides to install the applications:

You need the following information from those tasks to complete this guide:

- Elastic Stack: host, port, certificate, username, and password

- Kibana: host, port, certificate, username, and password

Download the GoApp module

This section explains how to download the GoApp module, a Go program maintained by Security Command Center. The module automates the process of scheduling Security Command Center API calls and regularly retrieves Security Command Center data for use in Elastic Stack.

To install GoApp, do the following:

In a terminal window, install wget, a free software utility used to retrieve content from web servers.

For Ubuntu and Debian distributions, run the following:

    apt-get install wget

For RHEL, CentOS, and Fedora distributions, run the following:

    yum install wget

Install unzip, a free software utility used to extract the contents of ZIP files.

For Ubuntu and Debian distributions, run the following:

    apt-get install unzip

For RHEL, CentOS, and Fedora distributions, run the following:

    yum install unzip

Create a directory for the GoogleSCCElasticIntegration installation package:

    mkdir GoogleSCCElasticIntegration

Download the GoogleSCCElasticIntegration installation package:

    wget -c https://storage.googleapis.com/security-center-elastic-stack/GoogleSCCElasticIntegration-Installation.zip

Extract the contents of the GoogleSCCElasticIntegration installation package into the GoogleSCCElasticIntegration directory:

    unzip GoogleSCCElasticIntegration-Installation.zip -d GoogleSCCElasticIntegration

Create a working directory to store and run GoApp module components:

    mkdir WORKING_DIRECTORY

Replace WORKING_DIRECTORY with the directory name.

Navigate to the GoogleSCCElasticIntegration installation directory:

    cd ROOT_DIRECTORY/GoogleSCCElasticIntegration/

Replace ROOT_DIRECTORY with the path to the directory that contains the GoogleSCCElasticIntegration directory.

Move install, config.yml, dashboards.ndjson, and the templates folder (with the filebeat.tmpl, logstash.tmpl, and docker.tmpl files) into your working directory.

    mv install/install install/config.yml install/templates/docker.tmpl install/templates/filebeat.tmpl install/templates/logstash.tmpl install/dashboards.ndjson WORKING_DIRECTORY

Replace WORKING_DIRECTORY with the path to your working directory.
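Before continuing, it can help to confirm that the working directory holds everything the installer expects. The following sketch is one way to do that check. The helper name `missing_files` is hypothetical, and because the template files may end up either directly in the working directory or in a templates subfolder, the sketch accepts both locations as an assumption.

```python
"""Report which GoApp installation files are missing from a working directory."""
from pathlib import Path

# Files expected directly in the working directory.
TOP_LEVEL = ["install", "config.yml", "dashboards.ndjson"]

# Template files, accepted either at the top level or under templates/.
TEMPLATES = ["filebeat.tmpl", "logstash.tmpl", "docker.tmpl"]


def missing_files(working_dir):
    """Return the names of expected files that cannot be found (hypothetical helper)."""
    root = Path(working_dir)
    missing = [name for name in TOP_LEVEL if not (root / name).is_file()]
    for name in TEMPLATES:
        in_root = (root / name).is_file()
        in_templates = (root / "templates" / name).is_file()
        if not (in_root or in_templates):
            missing.append(name)
    return missing


if __name__ == "__main__":
    # Checks the current directory; pass your working directory path instead.
    absent = missing_files(".")
    print("All files present" if not absent else f"Missing: {', '.join(absent)}")
```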

Install the Docker container

To set up the Docker container, you download and install a preformatted image from Google Cloud that contains Logstash and Filebeat. For information about the Docker image, go to the Artifact Registry repository in the Google Cloud console.

During installation, you configure the GoApp module with Security Command Center and Elastic Stack credentials.

Navigate to your working directory:

    cd /WORKING_DIRECTORY

Replace WORKING_DIRECTORY with the path to your working directory.

Verify that the following files appear in your working directory:

    ├── config.yml
    ├── install
    ├── dashboards.ndjson
    ├── templates
        ├── filebeat.tmpl
        ├── docker.tmpl
        ├── logstash.tmpl

In a text editor, open the config.yml file and add requested variables. If a variable isn't required, you can leave it blank.

| Variable | Description | Required |
| --- | --- | --- |
| elasticsearch | The section for your Elasticsearch configuration. | Required |
| host | The IP address of your Elastic Stack host. | Required |
| password | Your Elasticsearch password. | Optional |
| port | The port for your Elastic Stack host. | Required |
| username | Your Elasticsearch username. | Optional |
| cacert | The certificate for the Elasticsearch server (for example, path/to/cacert/elasticsearch.cer). | Optional |
| http_proxy | A link with the username, password, IP address, and port for your proxy host (for example, http://USER:PASSWORD@PROXY_IP:PROXY_PORT). | Optional |
| kibana | The section for your Kibana configuration. | Required |
| host | The IP address or hostname to which the Kibana server will bind. | Required |
| password | Your Kibana password. | Optional |
| port | The port for the Kibana server. | Required |
| username | Your Kibana username. | Optional |
| cacert | The certificate for the Kibana server (for example, path/to/cacert/kibana.cer). | Optional |
| cron | The section for your cron configuration. | Optional |
| asset | The section for your asset cron configuration (for example, 0 */45 * * * *). | Optional |
| source | The section for your source cron configuration (for example, 0 */45 * * * *). For more information, see Cron expression generator. | Optional |
| organizations | The section for your Google Cloud organization configuration. To add multiple Google Cloud organizations, copy everything from - id: to subscription_name under resource. | Required |
| id | Your organization ID. | Required |
| client_credential_path | One of: the path to your JSON file, if you are using service account keys; the credential configuration file, if you are using Workload Identity Federation; or nothing, if this is the Google Cloud organization that you are installing the Docker container in. | Optional, depending on your environment |
| update | Whether you are upgrading from a previous version, either n for no or y for yes. | Optional |
| project | The section for your project ID. | Required |
| id | The ID for the project that contains the Pub/Sub topic. | Required |
| auditlog | The section for the Pub/Sub topic and subscription for audit logs. | Optional |
| topic_name | The name of the Pub/Sub topic for audit logs. | Optional |
| subscription_name | The name of the Pub/Sub subscription for audit logs. | Optional |
| findings | The section for the Pub/Sub topic and subscription for findings. | Optional |
| topic_name | The name of the Pub/Sub topic for findings. | Optional |
| start_date | The optional date to start migrating findings, for example, 2021-04-01T12:00:00+05:30. | Optional |
| subscription_name | The name of the Pub/Sub subscription for findings. | Optional |
| asset | The section for the asset configuration. | Optional |
| iampolicy | The section for the Pub/Sub topic and subscription for IAM policies. | Optional |
| topic_name | The name of the Pub/Sub topic for IAM policies. | Optional |
| subscription_name | The name of the Pub/Sub subscription for IAM policies. | Optional |
| resource | The section for the Pub/Sub topic and subscription for resources. | Optional |
| topic_name | The name of the Pub/Sub topic for resources. | Optional |
| subscription_name | The name of the Pub/Sub subscription for resources. | Optional |

Example config.yml file

The following example shows a config.yml file that includes two Google Cloud organizations.

    elasticsearch:
      host: 127.0.0.1
      password: changeme
      port: 9200
      username: elastic
      cacert: path/to/cacert/elasticsearch.cer
      http_proxy: http://user:password@proxyip:proxyport
    kibana:
      host: 127.0.0.1
      password: changeme
      port: 5601
      username: elastic
      cacert: path/to/cacert/kibana.cer
    cron:
      asset: 0 */45 * * * *
      source: 0 */45 * * * *
    organizations:
      - id: 12345678910
        client_credential_path:
        update:
        project:
          id: project-id-12345
        auditlog:
          topic_name: auditlog.topic_name
          subscription_name: auditlog.subscription_name
        findings:
          topic_name: findings.topic_name
          start_date: 2021-05-01T12:00:00+05:30
          subscription_name: findings.subscription_name
        asset:
          iampolicy:
            topic_name: iampolicy.topic_name
            subscription_name: iampolicy.subscription_name
          resource:
            topic_name: resource.topic_name
            subscription_name: resource.subscription_name
      - id: 12345678911
        client_credential_path:
        update:
        project:
          id: project-id-12346
        auditlog:
          topic_name: auditlog2.topic_name
          subscription_name: auditlog2.subscription_name
        findings:
          topic_name: findings2.topic_name
          start_date: 2021-05-01T12:00:00+05:30
          subscription_name: findings1.subscription_name
        asset:
          iampolicy:
            topic_name: iampolicy2.topic_name
            subscription_name: iampolicy2.subscription_name
          resource:
            topic_name: resource2.topic_name
            subscription_name: resource2.subscription_name

Run the following commands to install the Docker image and configure the GoApp module.

    chmod +x install
    ./install

The GoApp module downloads the Docker image, installs the image, and sets up the container.

When the process is finished, copy the email address of the WriterIdentity service account from the installation output.

    docker exec googlescc_elk ls
    docker exec googlescc_elk cat Sink_}}HashId{{

Your working directory should have the following structure:

    ├── config.yml
    ├── dashboards.ndjson
    ├── docker-compose.yml
    ├── install
    ├── templates
        ├── filebeat.tmpl
        ├── logstash.tmpl
        ├── docker.tmpl
    └── main
        ├── client_secret.json
        ├── filebeat
        │   └── config
        │       └── filebeat.yml
        ├── GoApp
        │   └── .env
        └── logstash
            └── pipeline
                └── logstash.conf
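A quick pre-flight check of config.yml can catch blank required values before the installer runs. The sketch below applies the Required column of the variable table to a configuration that has already been parsed into a Python dictionary (for example, with a YAML library such as PyYAML, which is not imported here). The helper name `missing_required` is hypothetical, and the nesting it assumes (project as a child of each organization entry) is inferred from the table and example, not taken from the GoApp source.

```python
"""Check a parsed config.yml dictionary for blank required values."""


def missing_required(config):
    """Return dotted paths of required values that are absent or blank."""
    missing = []

    def need(mapping, key, path):
        # Treat a missing section, a missing key, None, and "" as blank.
        value = (mapping or {}).get(key)
        if value is None or value == "":
            missing.append(path)

    # host and port are marked Required for both sections.
    for section in ("elasticsearch", "kibana"):
        body = config.get(section) or {}
        for key in ("host", "port"):
            need(body, key, f"{section}.{key}")

    # At least one organization, each with an id and a project id.
    orgs = config.get("organizations") or []
    if not orgs:
        missing.append("organizations")
    for index, org in enumerate(orgs):
        need(org, "id", f"organizations[{index}].id")
        need(org.get("project"), "id", f"organizations[{index}].project.id")
    return missing


if __name__ == "__main__":
    example = {
        "elasticsearch": {"host": "127.0.0.1", "port": 9200},
        "kibana": {"host": "127.0.0.1", "port": 5601},
        "organizations": [{"id": 12345678910, "project": {"id": "project-id-12345"}}],
    }
    print(missing_required(example))
```

Optional values such as passwords and topic names are deliberately ignored, since the table allows them to be left blank.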

Update permissions for audit logs

To update permissions so that audit logs can flow to your SIEM:

Navigate to the Pub/Sub topics page.

Select the project that includes your Pub/Sub topics.

Select the Pub/Sub topic that you created for audit logs.

In Permissions, add the WriterIdentity service account (that you copied in step 4 of the installation procedure) as a new principal and assign it the Pub/Sub Publisher role. The audit log policy is updated.
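The permission change above could also be made with the gcloud CLI. The sketch below builds the corresponding command without running it. The helper name `publisher_binding_command` is hypothetical, the project ID and topic name are placeholders, and the handling of the `serviceAccount:` prefix is an assumption to cover both a bare email address and an identity that already carries the prefix.

```python
"""Build (but do not run) the gcloud command that grants Pub/Sub Publisher."""


def publisher_binding_command(project_id, topic, writer_identity):
    """Return a gcloud argument list for the audit log topic (hypothetical helper)."""
    # Add the member type prefix only when the copied value lacks it.
    if writer_identity.startswith("serviceAccount:"):
        member = writer_identity
    else:
        member = f"serviceAccount:{writer_identity}"
    return [
        "gcloud", "pubsub", "topics", "add-iam-policy-binding", topic,
        f"--project={project_id}",
        f"--member={member}",
        "--role=roles/pubsub.publisher",
    ]


if __name__ == "__main__":
    # Placeholder values; replace them with your project, topic, and identity.
    print(" ".join(publisher_binding_command(
        "PROJECT_ID", "AUDIT_LOG_TOPIC", "WRITER_IDENTITY_EMAIL")))
```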

The Docker and Elastic Stack configurations are complete. You can now set up Kibana.

View Docker logs

Open a terminal, and run the following command to see your container information, including container IDs. Note the ID for the container where Elastic Stack is installed.

    docker container ls

To open a shell in the running container and view its logs, run the following commands:

    docker exec -it CONTAINER_ID /bin/bash
    cat go.log

Replace CONTAINER_ID with the ID of the container where Elastic Stack is installed.

Set up Kibana

Complete these steps when you are installing the Docker container for the first time.

Open kibana.yml in a text editor.

    sudo vim KIBANA_DIRECTORY/config/kibana.yml

Replace KIBANA_DIRECTORY with the path to your Kibana installation folder.

Update the following variables:

- server.port: the port to use for Kibana's backend server; default is 5601

- server.host: the IP address or hostname to which the Kibana server will bind

- elasticsearch.hosts: the IP address and port of the Elasticsearch instance to use for queries

- server.maxPayloadBytes: the maximum payload size in bytes for incoming server requests; default is 1,048,576

- url_drilldown.enabled: a Boolean value that controls the ability to navigate from Kibana dashboards to internal or external URLs; default is true

The completed configuration resembles the following:

    server.port: PORT
    server.host: "HOST"
    elasticsearch.hosts: ["http://ELASTIC_IP_ADDRESS:ELASTIC_PORT"]
    server.maxPayloadBytes: 5242880
    url_drilldown.enabled: true
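To make the relationship between the placeholders and the final values concrete, the following sketch renders the five settings from plain inputs. The helper name `render_kibana_settings` and its parameters are hypothetical. It also shows where 5242880 comes from: 5 MiB expressed in bytes, compared with the 1,048,576-byte (1 MiB) default.

```python
"""Render the kibana.yml settings described above from plain values."""


def render_kibana_settings(port, host, es_host, es_port, max_payload_mib=5):
    """Return the settings as text (hypothetical helper, not a Kibana API)."""
    lines = [
        f"server.port: {port}",
        f'server.host: "{host}"',
        f'elasticsearch.hosts: ["http://{es_host}:{es_port}"]',
        # Convert MiB to bytes: 5 * 1024 * 1024 = 5242880.
        f"server.maxPayloadBytes: {max_payload_mib * 1024 * 1024}",
        "url_drilldown.enabled: true",
    ]
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    # Example values only; use the host and ports of your own deployment.
    print(render_kibana_settings(5601, "0.0.0.0", "127.0.0.1", 9200), end="")
```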

Import Kibana dashboards

- Open the Kibana application.

- In the navigation menu, go to Stack Management, and then click Saved Objects.

- Click Import, navigate to the working directory and select dashboards.ndjson. The dashboards are imported and index patterns are created.

Upgrade the Docker container

If you deployed a previous version of the GoApp module, you can upgrade to a newer version.

When you upgrade the Docker container to a newer version, you can keep your existing service account setup, Pub/Sub topics, and Elasticsearch components.

If you are upgrading from an integration that didn't use a Docker container, see Upgrade to the latest release.

If you are upgrading from v1, complete these actions:

Add the Logs Configuration Writer (roles/logging.configWriter) role to the service account.

Create a Pub/Sub topic for your audit logs.

If you are installing the Docker container in another cloud, configure workload identity federation and download the credentials configuration file.

Optionally, to avoid issues when importing the new dashboards, remove the existing dashboards from Kibana:

- Open the Kibana application.

- In the navigation menu, go to Stack Management, and then click Saved Objects.

- Search for Google SCC.

- Select all the dashboards that you want to remove.

- Click Delete.

Remove the existing Docker container:

Open a terminal and stop the container:

    docker stop CONTAINER_ID

Replace CONTAINER_ID with the ID of the container where Elastic Stack is installed.

Remove the Docker container:

    docker rm CONTAINER_ID

If necessary, add -f before the container ID to remove the container forcefully.

Complete steps 1 through 7 in Download the GoApp module.

Move the existing config.env file from your previous installation into the \update directory.

If necessary, give executable permission to run ./update:

    chmod +x ./update
    ./update

Run ./update to convert config.env to config.yml.

Verify that the config.yml file includes your existing configuration. If not, re-run ./update.

To support multiple Google Cloud organizations, add another organization configuration to the config.yml file.

Move the config.yml file into your working directory, where the install file is located.

Complete the steps in Install the Docker container.

Complete the steps in Update permissions for audit logs.

Import the new dashboards, as described in Import Kibana dashboards. This step will overwrite your existing Kibana dashboards.

View and edit Kibana dashboards

You can use custom dashboards in Elastic Stack to visualize and analyze your findings, assets, and security sources. The dashboards display critical findings and help your security team prioritize fixes.

Overview dashboard

The Overview dashboard contains a series of charts that displays the total number of findings in your Google Cloud organizations by severity level, category, and state. Findings are compiled from Security Command Center's built-in services, such as Security Health Analytics, Web Security Scanner, Event Threat Detection, and Container Threat Detection, and any integrated services you enable.

To filter content by criteria such as misconfigurations or vulnerabilities, you can select the Finding class.

Additional charts show which categories, projects, and assets are generating the most findings.

Assets dashboard

The Assets dashboard displays tables that show your Google Cloud assets. The tables show asset owners, asset counts by resource type and projects, and your most recently added and updated assets.

You can filter asset data by organization, asset name, asset type, and parents, and quickly drill down to findings for specific assets. If you click an asset name, you are redirected to Security Command Center's Assets page in the Google Cloud console and shown details for the selected asset.

Audit logs dashboard

The Audit logs dashboard displays a series of charts and tables that show audit log information. The audit logs that are included in the dashboard are the administrator activity, data access, system events, and policy denied audit logs. The table includes the time, severity, log type, log name, service name, resource name, and resource type.

You can filter the data by organization, source (such as a project), severity, log type, and resource type.

Findings dashboard

The Findings dashboard includes charts showing your most recent findings. The charts provide information about the number of findings, their severity, category, and state. You can also view active findings over time, and which projects or resources have the most findings.

You can filter the data by organization and finding class.

If you click a finding name, you are redirected to Security Command Center's Findings page in the Google Cloud console and shown details for the selected finding.

Sources dashboard

The Sources dashboard shows the total number of findings and security sources, the number of findings by source name, and a table of all your security sources. Table columns include name, display name, and description.

Add columns

- Navigate to a dashboard.

- Click Edit, and then click Edit visualization.

- Under Add sub-bucket, select Split rows.

- In the list, select Terms aggregation.

- In the Descending drop-down menu, select ascending or descending. In the Size field, enter the maximum number of rows for the table.

- Select the column you want to add and click Update.

- Save the changes.

Hide or remove columns

- Navigate to the dashboard.

- Click Edit.

- To hide a column, next to the column name, click the visibility, or eye, icon.

- To remove a column, next to the column name, click the X or delete icon.

Uninstall the integration with Elasticsearch

Complete the following sections to remove the integration between Security Command Center and Elasticsearch.

Remove dashboards, indexes, and index patterns

Remove dashboards when you want to uninstall this solution.

Navigate to the dashboards.

Search for Google SCC and select all the dashboards.

Click Delete dashboards.

Navigate to Stack Management > Index Management.

Close the following indexes:

- gccassets

- gccfindings

- gccsources

- gccauditlogs

Navigate to Stack Management > Index Patterns.

Close the following patterns:

- gccassets

- gccfindings

- gccsources

- gccauditlogs
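Closing the four indexes listed above can also be done through the Elasticsearch REST API, which exposes a close operation at `POST /<index>/_close`. The sketch below only constructs the requests so that the URLs can be inspected; it sends nothing. The helper name `close_index_requests` and the base URL are assumptions, and authentication and TLS settings are left out because they depend on your deployment.

```python
"""Build (but do not send) Elasticsearch close-index requests."""
from urllib.request import Request

# Indexes created by the integration, as listed above.
INDEXES = ["gccassets", "gccfindings", "gccsources", "gccauditlogs"]


def close_index_requests(base_url):
    """Return one POST request per index (hypothetical helper)."""
    base = base_url.rstrip("/")
    return [Request(f"{base}/{name}/_close", method="POST") for name in INDEXES]


if __name__ == "__main__":
    # Placeholder address; replace it with your Elasticsearch host and port.
    for request in close_index_requests("http://127.0.0.1:9200"):
        print(request.get_method(), request.full_url)
```

Each request could be sent with `urllib.request.urlopen` after credentials are added.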

Uninstall Docker

Delete the NotificationConfig for Pub/Sub. To find the name of the NotificationConfig, run:

    docker exec googlescc_elk ls
    docker exec googlescc_elk cat NotificationConf_}}HashId{{

Remove Pub/Sub feeds for assets, findings, IAM policies, and audit logs. To find the names for the feeds, run:

    docker exec googlescc_elk ls
    docker exec googlescc_elk cat Feed_}}HashId{{

Remove the sink for the audit logs. To find the name for the sink, run:

    docker exec googlescc_elk ls
    docker exec googlescc_elk cat Sink_}}HashId{{

To see your container information, including container IDs, open the terminal and run the following command:

    docker container ls

Stop the container:

    docker stop CONTAINER_ID

Replace CONTAINER_ID with the ID of the container where Elastic Stack is installed.

Remove the Docker container:

    docker rm CONTAINER_ID

If necessary, add -f before the container ID to remove the container forcefully.

Remove the Docker image:

    docker rmi us.gcr.io/security-center-gcr-host/googlescc_elk_v3:latest

Delete the working directory and the docker-compose.yml file:

    rm -rf ./main docker-compose.yml

What's next

Learn more about setting up finding notifications in Security Command Center.

Read about filtering finding notifications in Security Command Center.