
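This page describes the architecture of Cloud Composer environments.

Environment architecture configurations

Cloud Composer 3 environments have a single configuration that does not depend on the networking type.

Customer and tenant projects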

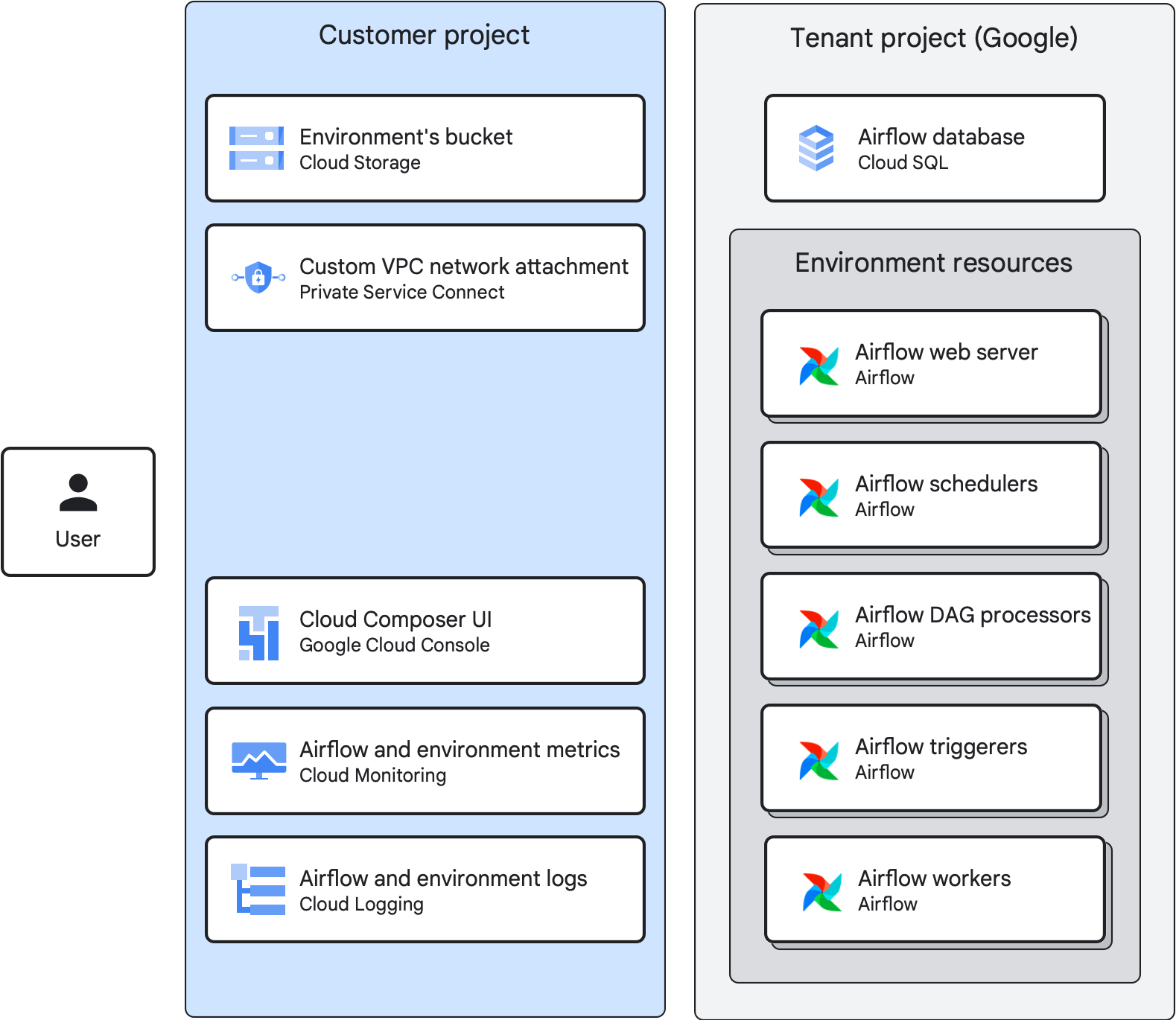

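When you create an environment, Cloud Composer distributes the environment's resources between a tenant project and a customer project:

- The customer project is the Google Cloud project where you create your environments. You can create more than one environment in a single customer project.

- The tenant project is a Google-managed project that belongs to the Google.com organization. It provides unified access control and an additional layer of data security for your environment. Each Cloud Composer environment has its own tenant project.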

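Environment components

A Cloud Composer environment consists of environment components.

An environment component is an element of the managed Airflow infrastructure that runs on Google Cloud as part of your environment. Each environment component runs in either the tenant project or the customer project of your environment.

Environment's bucket

The environment's bucket is a Cloud Storage bucket that stores DAGs, plugins, data dependencies, and Airflow logs. The bucket is located in the customer project.

When you upload DAG files to the /dags folder in the environment's bucket, Cloud Composer synchronizes the DAGs to the Airflow components of your environment.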
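As a minimal sketch of the upload target described above, the helper below builds the Cloud Storage URI that a local DAG file would have in the /dags folder. The function name, the bucket name, and the restriction to single-file `.py` DAGs are assumptions made for illustration; the actual upload would be done with a Cloud Storage client or CLI of your choice.

```python
from pathlib import PurePosixPath


def dag_object_uri(bucket_name: str, local_path: str) -> str:
    """Return the gs:// URI a local DAG file would have in the /dags folder.

    Hypothetical helper: it only computes the destination and uploads nothing.
    """
    # Keep only the file name; the local directory layout is dropped.
    file_name = PurePosixPath(local_path).name
    # Assumption for this sketch: DAGs are single Python files.
    if not file_name.endswith(".py"):
        raise ValueError(f"expected a Python DAG file, got {file_name!r}")
    return f"gs://{bucket_name}/dags/{file_name}"


# Hypothetical bucket name, for illustration only.
print(dag_object_uri("example-environment-bucket", "workflows/daily_report.py"))
```

Once a file is present at that location, the synchronization to the Airflow components is handled by Cloud Composer, not by the uploader.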

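Airflow web server

The Airflow web server runs the Airflow UI of your environment.

Cloud Composer provides access to the interface based on user identities and the IAM policy bindings defined for those users.

Airflow database

The Airflow database is a Cloud SQL instance that runs in the tenant project of your environment. It hosts the Airflow metadata database.

To protect sensitive connection and workflow information, Cloud Composer allows database access only to the service account of your environment.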

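Other Airflow components

Other Airflow components that run in your environment are:

- Airflow schedulers parse DAG definition files, schedule DAG runs based on the schedule interval, and queue tasks for execution by Airflow workers.

- Airflow triggerers asynchronously monitor all deferred tasks in your environment. You can use deferrable operators in your DAGs if the number of triggerers in your environment is greater than zero.

- Airflow DAG processors process DAG files and turn them into DAG objects. In Cloud Composer 3, DAG processors run as separate environment components.

- Airflow workers execute tasks that are scheduled by Airflow schedulers. The number of workers in your environment changes dynamically between the configured minimum and maximum, depending on the number of tasks in the queue.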
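The worker scaling behavior in the last item can be pictured as clamping a demand estimate between the configured minimum and maximum. The sketch below is only an illustration of that idea, not Cloud Composer's actual autoscaling algorithm; the function name and the `tasks_per_worker` parameter are assumptions introduced here.

```python
import math


def target_worker_count(queued_tasks: int, tasks_per_worker: int,
                        min_workers: int, max_workers: int) -> int:
    """Estimate a worker count, bounded by the configured limits.

    Illustrative only: the real autoscaler may weigh other signals.
    """
    if tasks_per_worker <= 0:
        raise ValueError("tasks_per_worker must be positive")
    if min_workers > max_workers:
        raise ValueError("min_workers must not exceed max_workers")
    # Workers needed if each one handles tasks_per_worker tasks at a time.
    needed = math.ceil(queued_tasks / tasks_per_worker)
    # Never go below the minimum or above the maximum.
    return max(min_workers, min(needed, max_workers))


for queued in (0, 30, 500):
    print(queued, target_worker_count(queued, tasks_per_worker=12,
                                      min_workers=2, max_workers=6))
```

With an empty queue the result stays at the minimum, and with a very long queue it stops at the maximum, which matches the bounded behavior described above.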

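Cloud Composer 3 environment architecture

In Cloud Composer 3 environments:

- The tenant project hosts the Cloud SQL instance that contains the Airflow database.

- All Airflow resources run in the tenant project.

- The customer project hosts the environment's bucket.

- A custom VPC network attachment in the customer project can be used to attach the environment to a custom VPC network. You can use an existing attachment, or let Cloud Composer create one automatically on demand. You can also detach an environment from a VPC network.

- The Google Cloud console, Monitoring, and Logging in the customer project let you manage the environment, DAGs, and DAG runs, and access the environment's metrics and logs. You can also use the Airflow UI, the Google Cloud CLI, the Cloud Composer API, and Terraform for the same purposes.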

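In highly resilient Cloud Composer 3 environments:

The environment's Cloud SQL instance is configured for high availability (it is a regional instance). Within a regional instance, the configuration is made up of a primary instance and a standby instance.

Your environment runs the following Airflow components in separate zones:

- Two Airflow schedulers

- Two web servers

- At least two DAG processors (up to 10 in total)

- If triggerers are used, at least two triggerers (up to 10 in total)

The minimum number of workers is set to two, and the environment's cluster distributes worker instances between zones. If a zonal outage occurs, the affected worker instances are rescheduled in a different zone.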
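The zonal distribution and rescheduling of workers described above can be sketched as follows. This is a simplified model under stated assumptions: round-robin placement, a least-loaded rule for rescheduling, and the zone names are all hypothetical, and the real cluster scheduler may behave differently.

```python
def place_workers(worker_count: int, zones: list[str]) -> list[str]:
    """Assign each worker a zone in round-robin order."""
    if not zones:
        raise ValueError("at least one zone is required")
    return [zones[i % len(zones)] for i in range(worker_count)]


def reschedule_after_outage(placement: list[str], failed_zone: str,
                            zones: list[str]) -> list[str]:
    """Move workers out of failed_zone into the least-loaded healthy zone."""
    healthy = [z for z in zones if z != failed_zone]
    if not healthy:
        raise ValueError("no healthy zone left to reschedule into")
    # Count the workers already running in each healthy zone.
    load = {z: 0 for z in healthy}
    for zone in placement:
        if zone != failed_zone:
            load[zone] += 1
    result = []
    for zone in placement:
        if zone == failed_zone:
            # Pick the healthy zone that currently has the fewest workers.
            zone = min(healthy, key=lambda h: load[h])
            load[zone] += 1
        result.append(zone)
    return result


zones = ["zone-a", "zone-b", "zone-c"]  # hypothetical zone names
before = place_workers(4, zones)
after = reschedule_after_outage(before, "zone-a", zones)
print(before)
print(after)
```

The total number of workers is unchanged after the outage; only their zones differ.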

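Integration with Cloud Logging and Cloud Monitoring

Cloud Composer integrates with Cloud Logging and Cloud Monitoring of your Google Cloud project, so that you have a central place to view Airflow and DAG logs.

Cloud Monitoring collects and ingests metrics, events, and metadata from Cloud Composer to generate insights through dashboards and charts.

Because of the streaming nature of Cloud Logging, you can view logs emitted by Airflow components immediately, instead of waiting for Airflow logs to appear in the Cloud Storage bucket of your environment.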
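To narrow the streamed logs to one Airflow component, a query in the Logs Explorer might look like the string built below. This is a sketch under assumptions: it assumes the monitored resource type is `cloud_composer_environment` with `environment_name` and `location` labels, and that component logs use IDs such as `airflow-scheduler`. The environment name and location are hypothetical, so verify the field names against the entries in your own project.

```python
def composer_log_filter(environment_name: str, location: str,
                        component_log: str) -> str:
    """Build a Cloud Logging query string for one Airflow component.

    The resource type, label names, and log ID are assumptions of this
    sketch; check them against real log entries before relying on them.
    """
    clauses = [
        'resource.type="cloud_composer_environment"',
        f'resource.labels.environment_name="{environment_name}"',
        f'resource.labels.location="{location}"',
        f'log_id("{component_log}")',
    ]
    # Clauses are combined so that every condition must match.
    return " AND ".join(clauses)


# Hypothetical environment name and location.
print(composer_log_filter("example-environment", "us-central1",
                          "airflow-scheduler"))
```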

To limit the number of logs in your Google Cloud project, you can stop ingesting all logs. Do not disable Logging itself.