Local SSDs + VMs = love at first (tera)byte

Todd Rafacz

Senior Software Engineer

Rahul Venkatraj

Product Manager

Data storage is the foundation for all kinds of enterprises and their workloads. For most of these workloads, Google Cloud’s standard object, block, and file storage products offer the necessary performance. But for companies doing compute-intensive work, like analytics for ecommerce websites or rendering for games and visual effects, compute performance can have a big impact on the bottom line. A sluggish website, or slow processing that causes missed deadlines, just can’t happen. To make sure your workloads get the performance and low latency they need, start with your storage. The fastest storage available is local solid state drives, or Local SSDs.

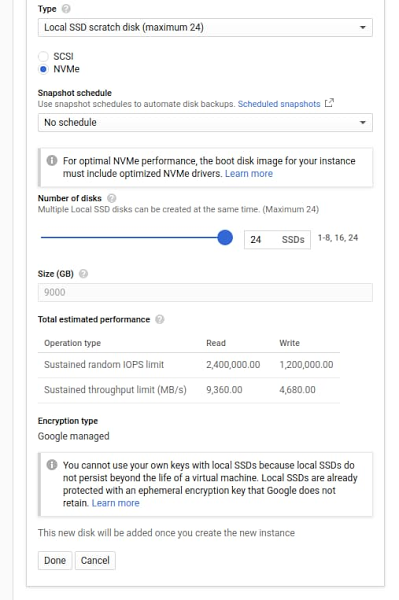

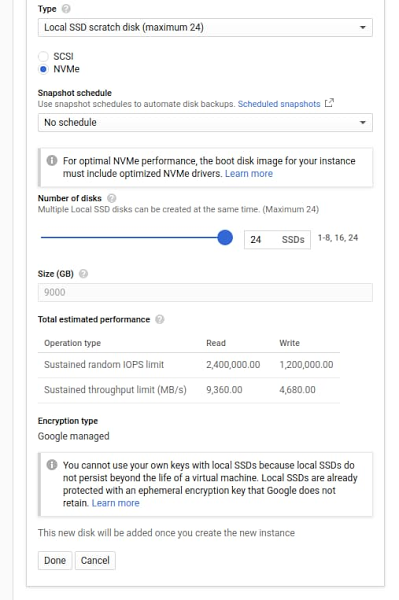

With that in mind, we’re announcing that you can now attach 6TB and 9TB Local SSDs to your Compute Engine virtual machines. Per-VM throughput and IOPS with these new sizes are up to 3.5 times those of our current 3TB offering. That means you need fewer instances to meet your performance goals, which often reduces costs. If you’re already using Local SSDs, you can access these larger sizes with the same APIs you use today.
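To make the "fewer instances" point concrete, here is a small back-of-the-envelope sketch. The per-VM IOPS for the 3TB baseline and the cluster-wide IOPS target are hypothetical numbers chosen for illustration, not published figures. The only number taken from this announcement is the "up to 3.5x" improvement, so the result is a best case.

```python
import math


def instances_needed(target_iops: float, per_vm_iops: float) -> int:
    """Smallest whole number of VMs whose combined IOPS meets the target."""
    if per_vm_iops <= 0:
        raise ValueError("per_vm_iops must be positive")
    return math.ceil(target_iops / per_vm_iops)


# Hypothetical per-VM IOPS for a VM using the current 3TB Local SSD offering.
# Replace with the published figure for your VM shape and disk interface.
BASELINE_3TB_IOPS = 500_000

# From the announcement: the new sizes deliver *up to* 3.5x the per-VM IOPS.
UP_TO_SPEEDUP = 3.5

# Hypothetical aggregate IOPS goal for a whole cluster.
target = 10_000_000

before = instances_needed(target, BASELINE_3TB_IOPS)
after = instances_needed(target, BASELINE_3TB_IOPS * UP_TO_SPEEDUP)
print(f"VMs needed with the 3TB offering: {before}")
print(f"VMs needed with the new sizes (best case): {after}")
```

With these made-up inputs the count drops from 20 VMs to 6. Rounding up with `math.ceil` matters because a fractional VM isn't an option; substitute the published performance numbers for your configuration to get a realistic estimate.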

How Local SSDs work

Local SSDs are high-performance devices that are physically attached to the server that hosts your VM instances. This physical coupling gives the VM the lowest latency and highest throughput. These local disks are always encrypted, are not replicated, and serve as temporary block storage. Local SSDs are typically used as high-performance scratch disks, as caches, or as the high-I/O hot tier in distributed data stores and analytics stacks.

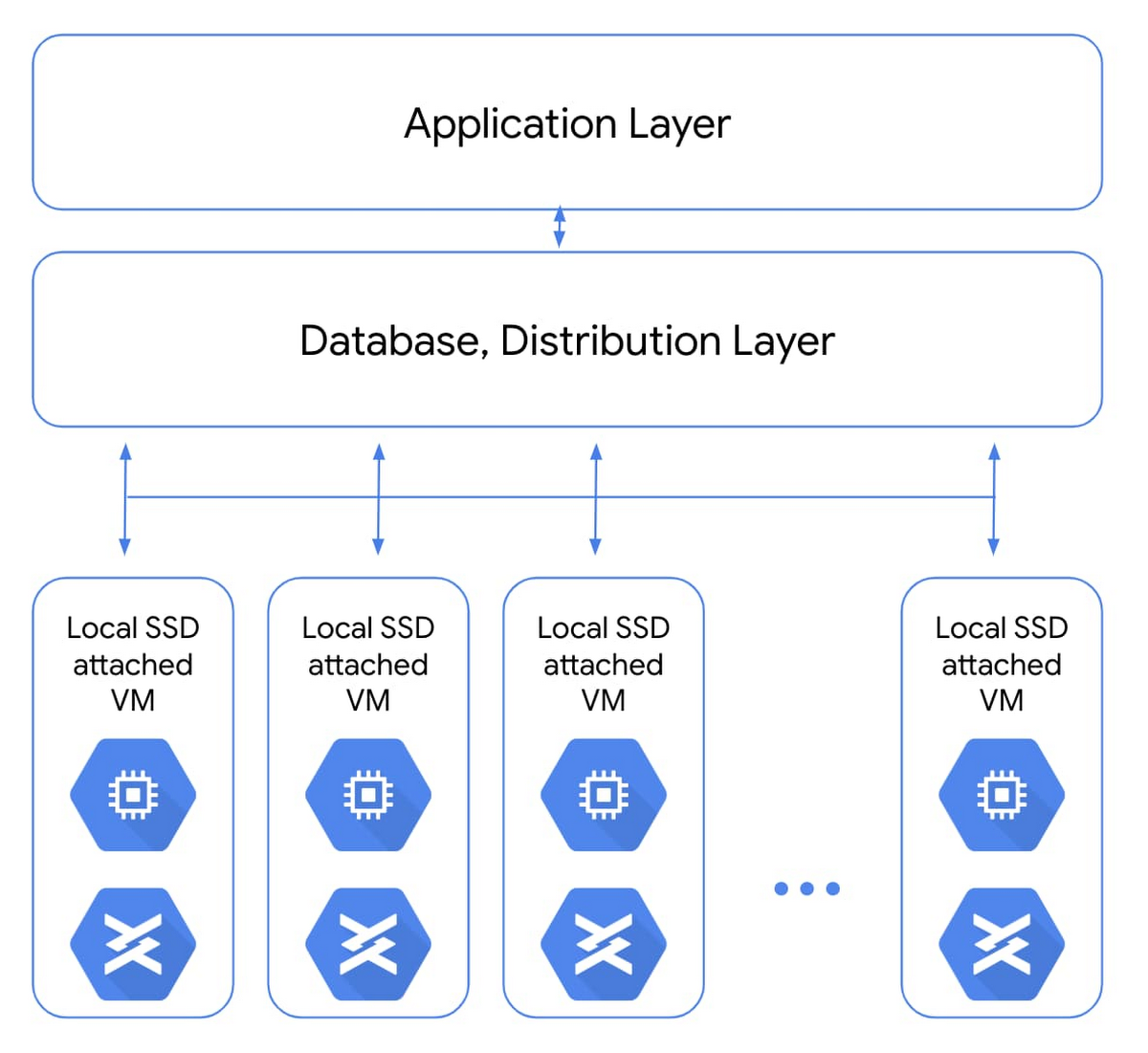

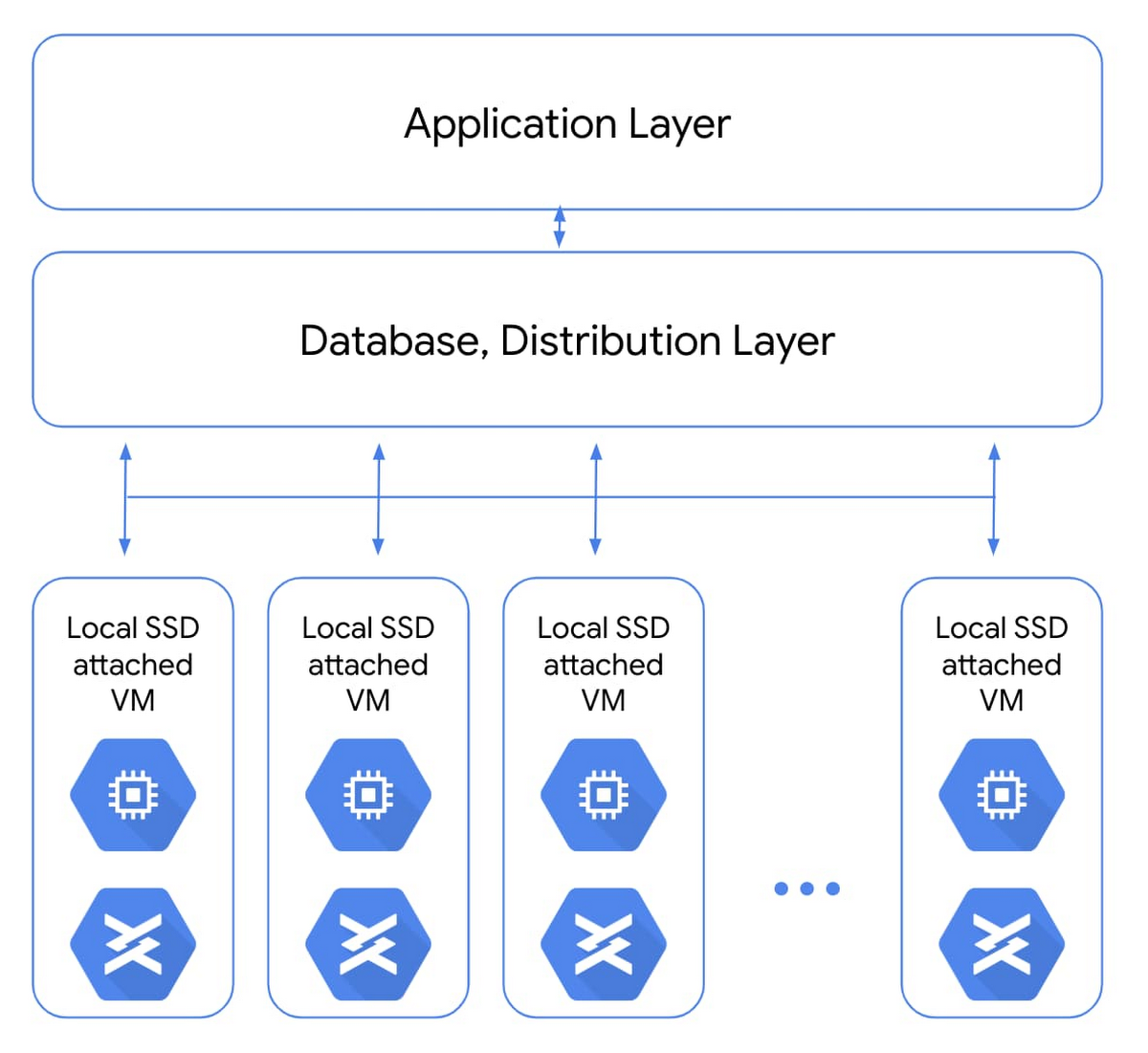

A common use case for Local SSDs is flash-optimized databases that have distribution and replication built into the layers above storage. For apps like real-time fraud detection or ad exchanges, only Local SSDs can deliver the necessary sub-millisecond latency combined with very high input/output operations per second (IOPS).

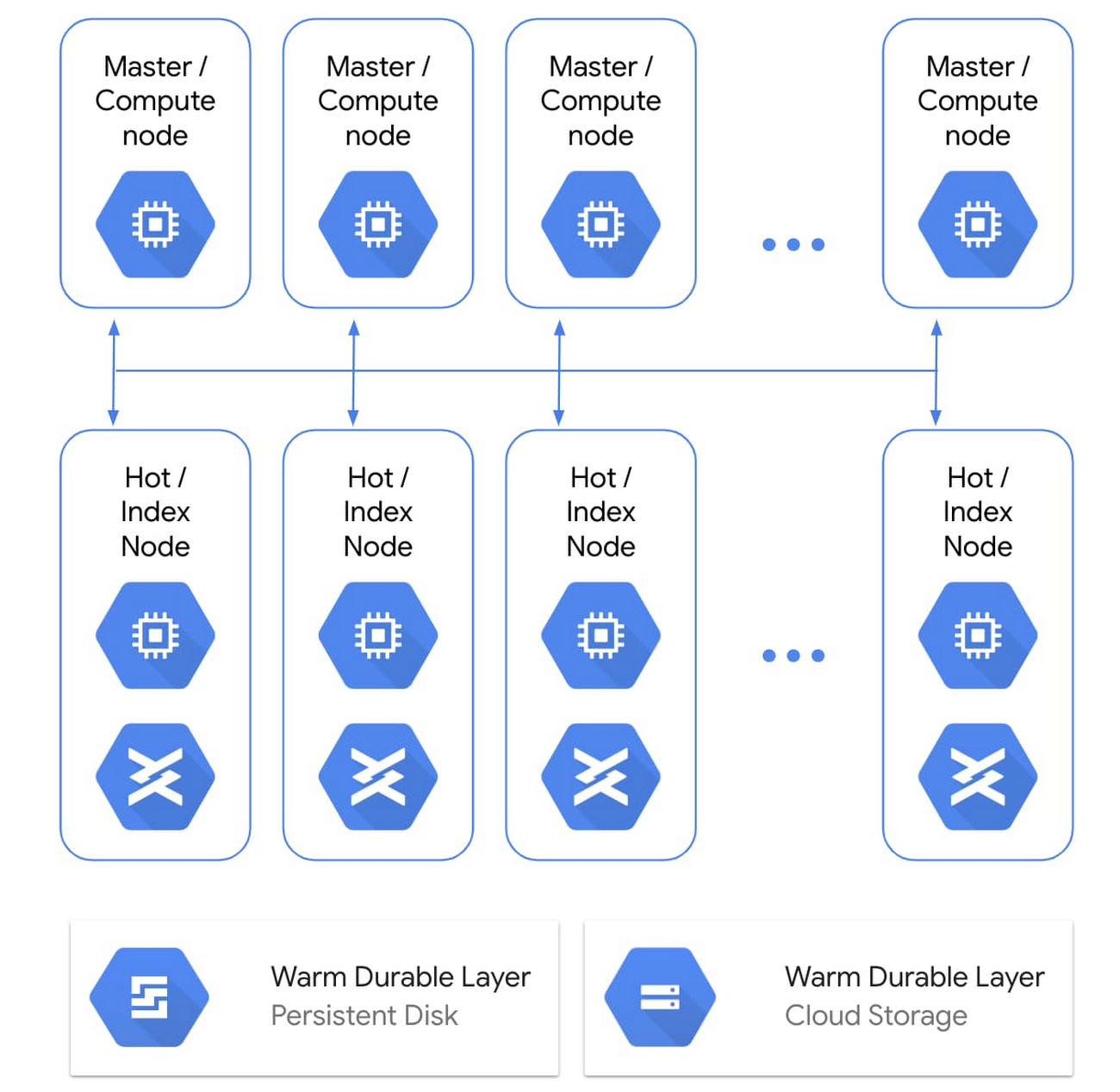

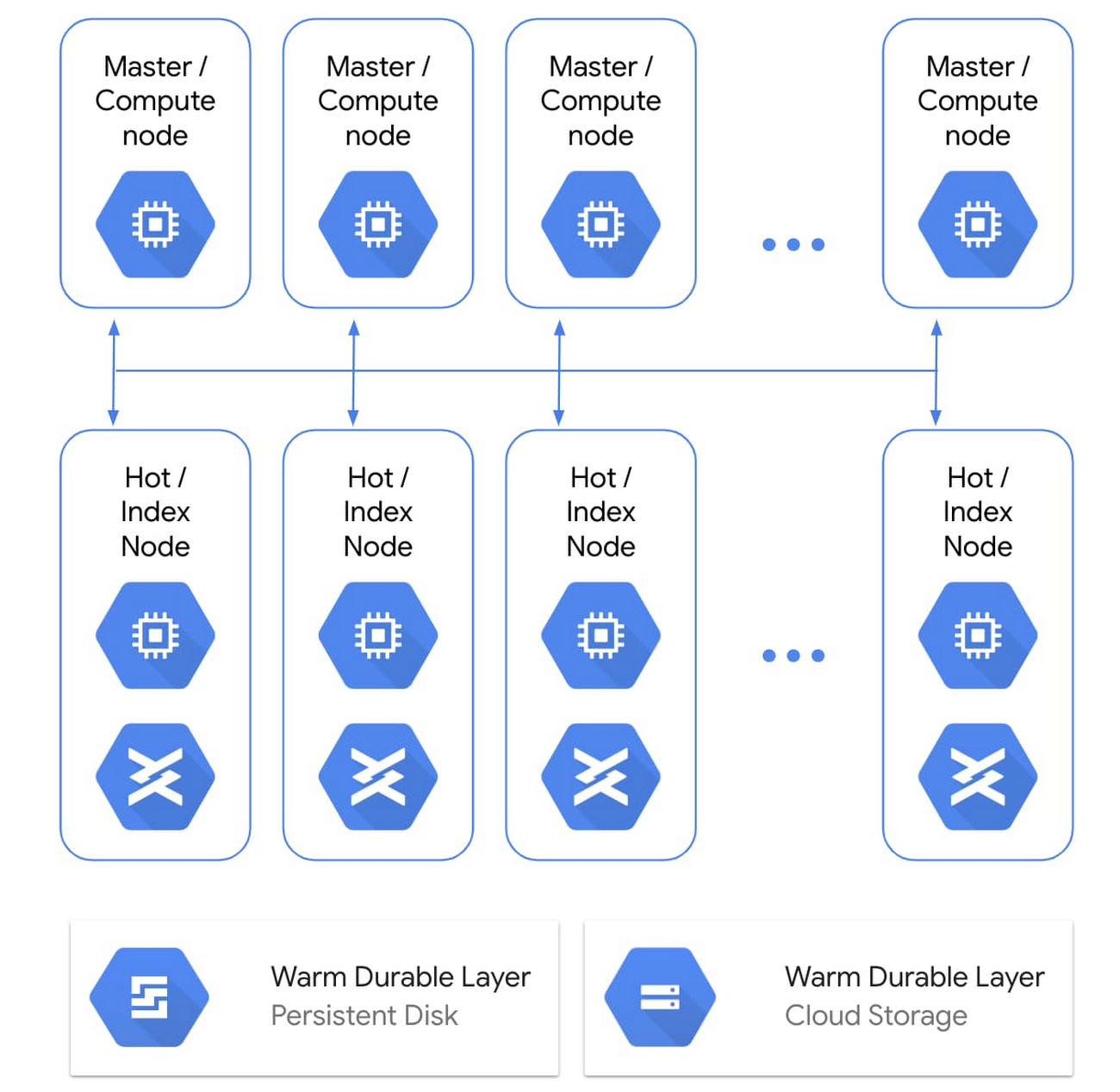

Another common use for Local SSDs is as a hot storage tier (typically for caching and indexing) within tiered storage for performance-sensitive analytics stacks. For example, Google Cloud customer Mixpanel caches hundreds of terabytes of data on Local SSDs on Compute Engine to maintain sub-second query speeds for its data analytics platform.

When customers choose larger, faster Local SSDs, performance is the goal. The new sizes combine higher performance with unique flexibility in how they attach to VMs, which translates to a highly compelling price per IOPS and price per unit of throughput for locally attached storage.
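Because both new sizes keep the current per-GB pricing, cost scales with capacity, so the price-per-IOPS improvement can be worked out from two ratios. The sketch below assumes the "up to 3.5x" per-VM IOPS figure applies to the 9TB size relative to the 3TB offering; the function name `relative_price_per_iops` is hypothetical. The same arithmetic applies to price per unit of throughput.

```python
from fractions import Fraction


def relative_price_per_iops(capacity_ratio, iops_ratio) -> Fraction:
    """Price per IOPS of the larger disk relative to the smaller one.

    With flat per-GB pricing, total price scales with capacity, so
    (price per IOPS ratio) = (capacity ratio) / (IOPS ratio).
    """
    return Fraction(capacity_ratio) / Fraction(iops_ratio)


# 9TB is 3x the capacity of the 3TB offering; per-VM IOPS are *up to* 3.5x
# higher (assumed here to be the 9TB figure). Fractions keep the math exact.
ratio = relative_price_per_iops(Fraction(9, 3), Fraction("3.5"))
print(ratio, float(ratio))
```

The ratio works out to 6/7, or roughly 14% lower price per IOPS in the best case. If the actual speedup for a given VM shape is below 3x, the price per IOPS would not improve at all, so it's worth plugging in the published numbers for your configuration.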

These new SSDs bring new capabilities and can help reduce the total cost of ownership (TCO) for distributed workloads. For example, a SaaS provider doing real-time analytics on a flash-optimized database cluster can now get better performance and lower TCO. And highly transactional, performance-sensitive workloads like analytics or media rendering can now reach millions of IOPS on a wide variety of VMs.

Here’s a look at how to attach a 9TB Local SSD to a Compute Engine instance.
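A minimal sketch of one way to do this from the command line, assuming the standard `gcloud` CLI. Each Local SSD partition on Compute Engine is 375 GB, so 9TB corresponds to 24 partitions (6TB to 16, and the current 3TB to 8), and the repeatable `--local-ssd` flag attaches one partition per occurrence. The Python below only builds and prints the command rather than running it. The helper names, the instance name `my-ssd-vm`, the zone, and the `n1-standard-32` machine type are hypothetical placeholders. Check the Local SSD documentation for which machine types accept 24 partitions, and whether the `gcloud beta compute instances create` track is required while N1 support is in beta.

```python
import shlex

# Each Local SSD partition on Compute Engine is 375 GB.
PARTITION_GB = 375


def local_ssd_partitions(total_tb: int) -> int:
    """Number of 375 GB partitions that add up to total_tb (decimal TB)."""
    total_gb = total_tb * 1000
    if total_gb % PARTITION_GB:
        raise ValueError(f"{total_tb}TB is not a multiple of {PARTITION_GB} GB")
    return total_gb // PARTITION_GB


def create_command(name, zone, machine_type, total_tb, interface="nvme"):
    """Build (but do not run) a gcloud command attaching total_tb of Local SSD.

    Hypothetical helper for illustration only.
    """
    cmd = ["gcloud", "compute", "instances", "create", name,
           f"--zone={zone}", f"--machine-type={machine_type}"]
    # --local-ssd is repeatable: each occurrence attaches one 375 GB partition.
    cmd += [f"--local-ssd=interface={interface}"] * local_ssd_partitions(total_tb)
    return cmd


# Hypothetical name, zone, and machine type; adjust for your project.
cmd = create_command("my-ssd-vm", "us-central1-a", "n1-standard-32", 9)
print(shlex.join(cmd))
```

Building the argument list first, rather than pasting 24 copies of the flag by hand, makes it easy to switch between the 3TB, 6TB, and 9TB sizes by changing a single argument. `shlex.join` requires Python 3.8 or later.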

Users tell us they value the flexibility of Local SSDs, such as the ability to attach them to a wide range of custom VM shapes rather than being tied to a specific VM shape for each SSD size. This flexibility extends to the new 6TB and 9TB Local SSDs as well.

Both the 6TB and 9TB sizes keep the current per-GB pricing. Visit our pricing page to see the specific pricing in your region. You can attach 6TB and 9TB Local SSDs to N1 VMs today (in beta), and support for N2 VMs is coming shortly. For more details, check out our documentation for Local SSDs. If you have questions or feedback, check out the Getting Help page.