Traffic Director takes application networking beyond Google Cloud

Stewart Reichling

Product Manager, Traffic Director

Chao Xin

Software Engineer, Traffic Director

Whether you run services in Google Cloud, on-premises, in other clouds, or all of the above, the fundamental challenges of application networking remain the same: How do you get traffic to these services? And how do these services talk to each other?

Traffic Director is a fully managed control plane for service mesh and load balancing. We built Traffic Director with the vision that it could solve these challenges, no matter where your services live. Today, we're delivering on another part of that vision so that we can better support your multi-environment needs.

With Traffic Director, you can now send traffic to services and gateways that are hosted outside of Google Cloud. Traffic is routed privately, over Cloud Interconnect or Cloud VPN, according to the rules that you configure in Traffic Director.

This capability enables services in your VPC network to interoperate more seamlessly with services in other environments. It also enables you to build advanced solutions based on Google Cloud's portfolio of networking products, such as Cloud Armor protection for your private on-prem services. Whether you're only running workloads in Google Cloud, or have advanced multi-environment needs, Traffic Director is a versatile tool in your networking toolkit.

Introducing Hybrid Connectivity NEGs

This is all made possible through support for Hybrid Connectivity Network Endpoint Groups (NEGs), which are now generally available. Think of a Hybrid Connectivity NEG as a collection of IP addresses and ports ("endpoints") that clients can use to reach your application.

You probably already use NEGs when configuring Traffic Director with GKE-based services. Hybrid Connectivity NEGs are special because they don't need to resolve to a destination within Google Cloud. Instead, they can resolve to a destination outside of your VPC network (like a gateway in an on-prem data center or an application on another public cloud).

If you're sending traffic from Google Cloud to another environment, that traffic travels over hybrid connectivity (for example, Cloud Interconnect or Cloud VPN). This allows you to have workloads in another environment and reach them securely from Google Cloud, without having to make those workloads accessible via the public internet.

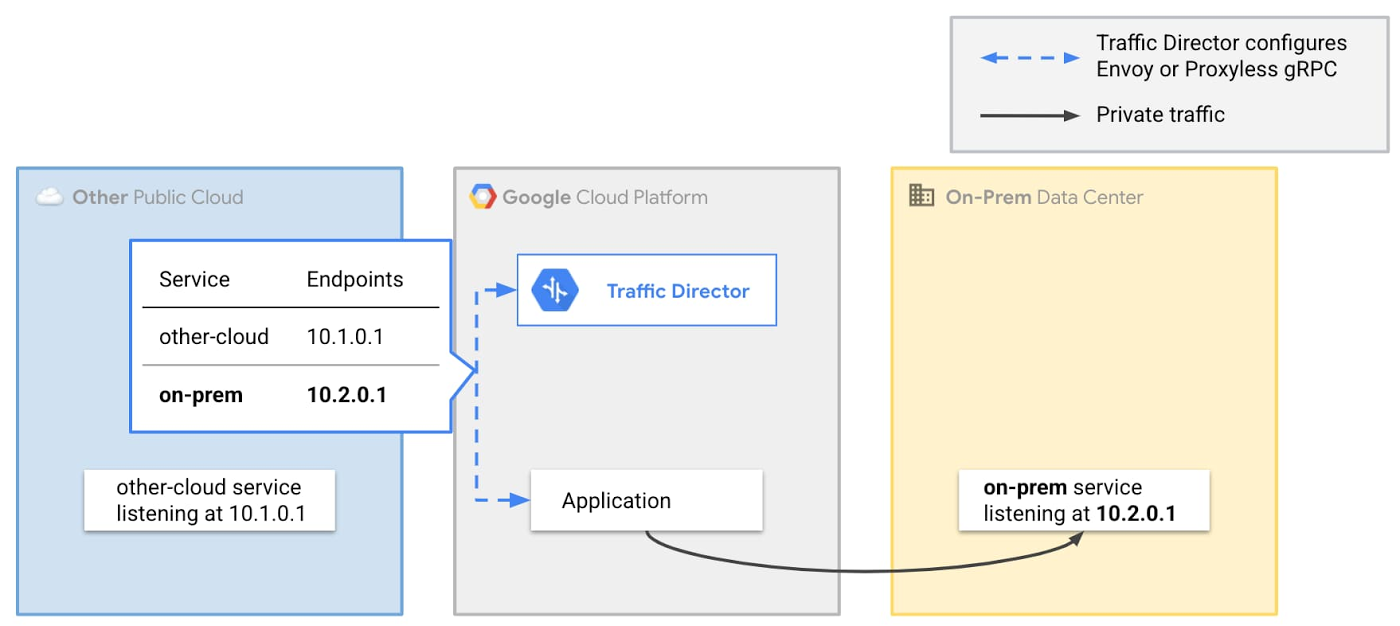

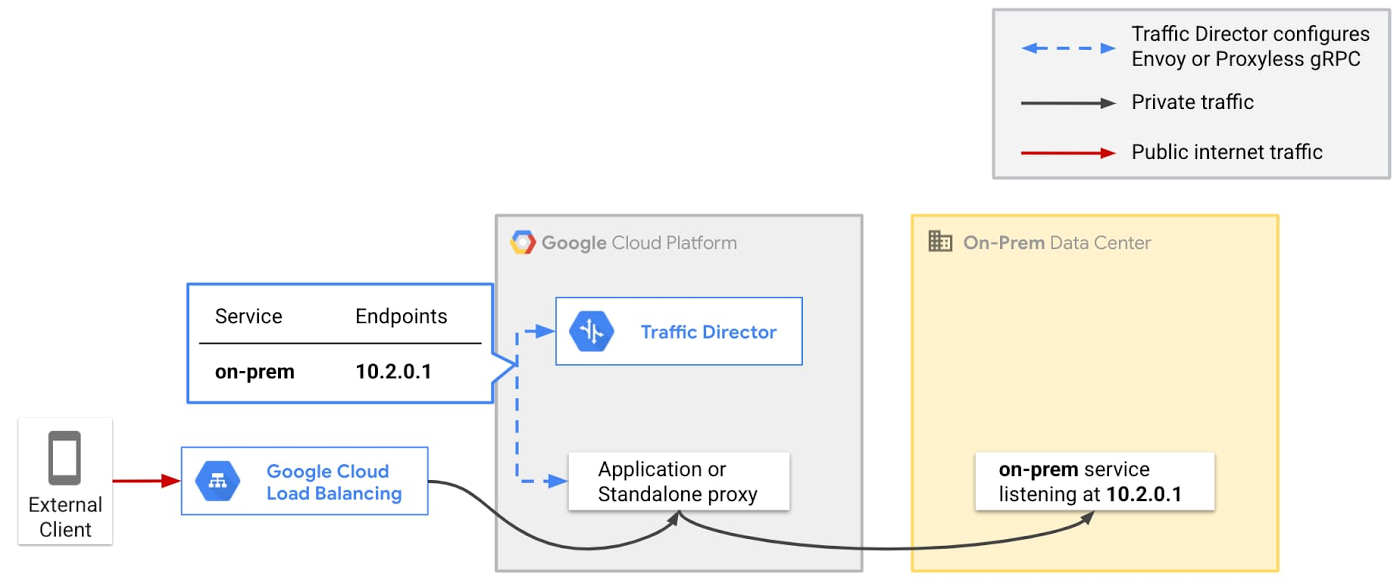

Here's a simple example of how you might use Hybrid Connectivity NEGs with Traffic Director:

Imagine you have a virtual machine (VM) running on-prem, and that VM can be reached from your VPC network via Cloud VPN or Cloud Interconnect at 10.0.0.1 on port 80.

In Traffic Director, you create a service called `on-prem-service` and add a Hybrid Connectivity NEG with an endpoint with IP address 10.0.0.1 and port 80.

Traffic Director then sends that information to its clients (for example, Envoy sidecar proxies running alongside your applications). Thus, when your application sends a request to `on-prem-service`, the Traffic Director client inspects the request and directs it to `10.0.0.1:80`.
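The three steps above can be sketched as a toy model in Python. This is not Traffic Director's API: the `ControlPlane`, `HybridNeg`, and `MeshClient` classes and the NEG name `on-prem-neg` are hypothetical, invented only to show how a service name ends up mapped to `10.0.0.1:80`.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass(frozen=True)
class Endpoint:
    ip: str
    port: int


@dataclass
class HybridNeg:
    """Hypothetical model of a Hybrid Connectivity NEG: a named list of endpoints."""
    name: str
    endpoints: List[Endpoint] = field(default_factory=list)


class ControlPlane:
    """Toy stand-in for Traffic Director; not its real API."""

    def __init__(self) -> None:
        self._services: Dict[str, HybridNeg] = {}

    def create_service(self, service: str, neg: HybridNeg) -> None:
        self._services[service] = neg

    def snapshot(self) -> Dict[str, List[Endpoint]]:
        # The configuration a client (for example, an Envoy sidecar) would receive.
        return {name: list(neg.endpoints) for name, neg in self._services.items()}


class MeshClient:
    """Toy client that rewrites a service name to a concrete endpoint."""

    def __init__(self, config: Dict[str, List[Endpoint]]) -> None:
        self._config = config

    def resolve(self, service: str) -> str:
        endpoints = self._config.get(service)
        if not endpoints:
            raise LookupError(f"no endpoints known for {service!r}")
        target = endpoints[0]  # a single endpoint in this example
        return f"{target.ip}:{target.port}"


neg = HybridNeg("on-prem-neg", [Endpoint("10.0.0.1", 80)])  # NEG name is made up
control_plane = ControlPlane()
control_plane.create_service("on-prem-service", neg)
client = MeshClient(control_plane.snapshot())
print(client.resolve("on-prem-service"))  # prints 10.0.0.1:80
```

The point of the sketch is the separation of concerns: the application only names `on-prem-service`, while the address and port live in configuration that the control plane distributes.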

What can I do with it?

With this feature, you can now build powerful solutions that involve existing Traffic Director capabilities as well as Cloud Load Balancing's global network edge services. Here are a few examples:

Route mesh traffic to on-prem or another cloud

The simplest use case for this feature is plain old traffic routing: you want to get traffic from one environment to another.

In the above example, when your application sends a request to the `on-prem` service, the Traffic Director client inspects the outbound request and updates its destination. The destination gets set to an endpoint associated with the `on-prem` service (in this case, `10.2.0.1`). The request then travels over VPN or Interconnect to its intended destination.

If you need to add more endpoints, you just add them to your service by updating Traffic Director. You don't need to make any changes to your application code.
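As a rough illustration of why the application stays untouched, here is a minimal Python sketch. The `ServiceEndpoints` class, the second address `10.2.0.2`, port 80, and the round-robin selection are all assumptions made for the example; the actual load-balancing behavior depends on how you configure Traffic Director.

```python
from typing import Dict, List, Tuple

Endpoint = Tuple[str, int]


class ServiceEndpoints:
    """Toy endpoint table, standing in for configuration held by the mesh client."""

    def __init__(self) -> None:
        self._endpoints: Dict[str, List[Endpoint]] = {}
        self._next: Dict[str, int] = {}

    def add_endpoint(self, service: str, ip: str, port: int) -> None:
        self._endpoints.setdefault(service, []).append((ip, port))
        self._next.setdefault(service, 0)

    def pick(self, service: str) -> Endpoint:
        # Round-robin is an assumption chosen to keep the example simple.
        endpoints = self._endpoints[service]
        index = self._next[service] % len(endpoints)
        self._next[service] += 1
        return endpoints[index]


def send_request(mesh: ServiceEndpoints) -> Endpoint:
    # "Application code": it only ever names the service, never an address.
    return mesh.pick("on-prem")


mesh = ServiceEndpoints()
mesh.add_endpoint("on-prem", "10.2.0.1", 80)  # port 80 is assumed
first = send_request(mesh)

# Later, an operator adds a second (hypothetical) endpoint.
mesh.add_endpoint("on-prem", "10.2.0.2", 80)
later = [send_request(mesh) for _ in range(2)]
```

`send_request` is identical before and after the second endpoint is added; only the endpoint table changed.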

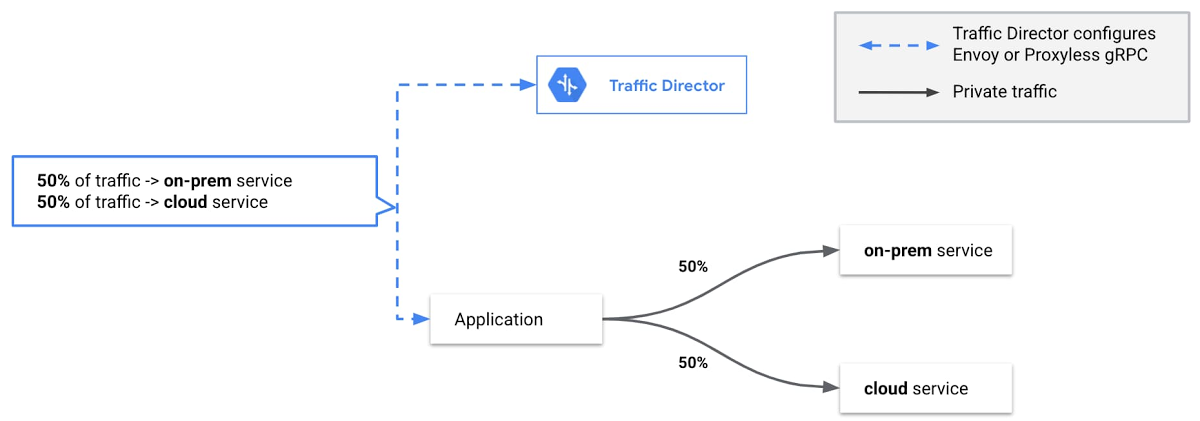

Migrate a service between environments

Being able to send traffic privately to an endpoint outside of Google Cloud is powerful. But things get even more interesting when you combine this with Traffic Director capabilities like weight-based traffic splitting.

The above example extends the previous pattern, but instead of configuring Traffic Director to send all traffic to the `on-prem` service, you configure Traffic Director to split traffic across two services using weight-based traffic splitting.

Traffic splitting allows you to start by sending 0% of traffic to the `cloud` service and 100% to the `on-prem` service. You can then gradually increase the proportion of traffic sent to the `cloud` service. Eventually, you send 100% of traffic to the `cloud` service and you can retire the `on-prem` service.
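The mechanics of a weight-based migration can be sketched in a few lines of Python. This is a simulation, not Traffic Director configuration: `pick_service`, `migration_plan`, and the 25-point step size are hypothetical, and real rollouts would choose steps based on observed health of the `cloud` service.

```python
import random
from typing import Dict, Iterator


def pick_service(weights: Dict[str, int], rng: random.Random) -> str:
    """Choose a service for one request, in proportion to its weight."""
    if sum(weights.values()) <= 0:
        raise ValueError("at least one weight must be positive")
    names = list(weights)
    return rng.choices(names, weights=[weights[n] for n in names], k=1)[0]


def migration_plan(step: int) -> Iterator[Dict[str, int]]:
    """Yield weight settings that move traffic from on-prem to cloud."""
    if step <= 0:
        raise ValueError("step must be positive")
    cloud = 0
    while cloud < 100:
        yield {"on-prem": 100 - cloud, "cloud": cloud}
        cloud = min(100, cloud + step)
    yield {"on-prem": 0, "cloud": 100}


rng = random.Random(0)  # seeded so the simulation is repeatable
for weights in migration_plan(step=25):
    sample = [pick_service(weights, rng) for _ in range(1000)]
    print(weights, "-> cloud share:", sample.count("cloud") / len(sample))
```

At the first step every simulated request goes to `on-prem`, and at the last step every request goes to `cloud`; the intermediate steps land near the configured proportions.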

Use Google Cloud's edge services with workloads in other environments

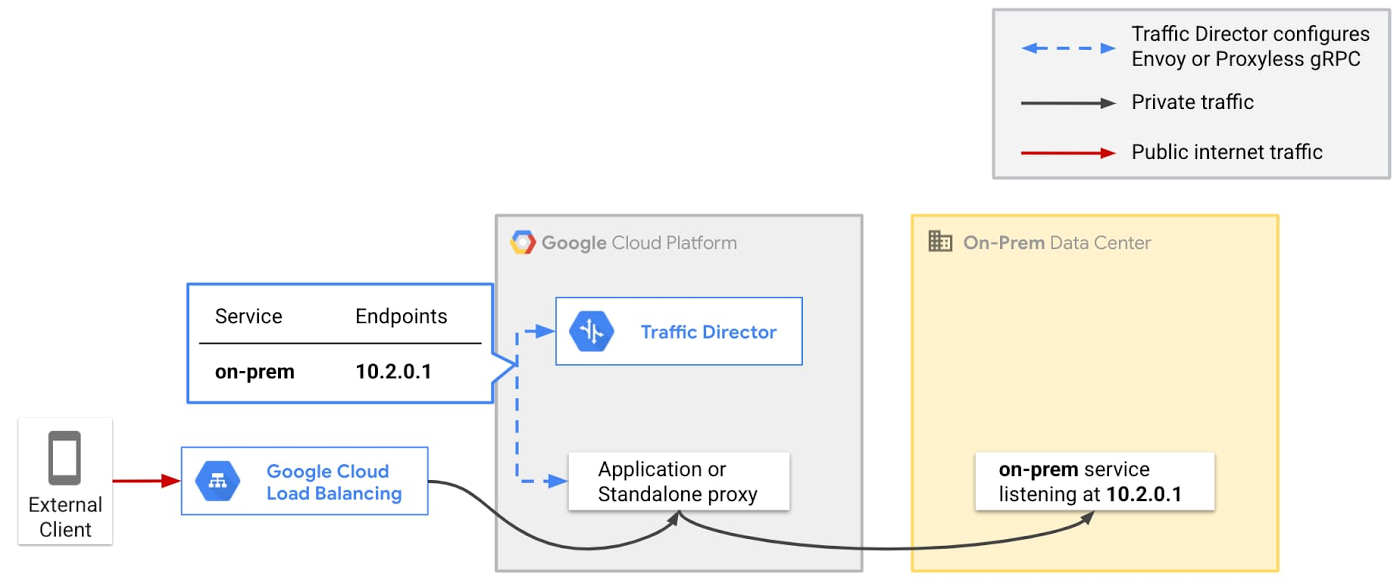

Finally, you can combine this functionality with Cloud Load Balancing to bring global edge capabilities to workloads that are outside of your VPC network. Cloud Load Balancing offers a wide range of network edge services, such as globally distributed ingress, Cloud Armor for DDoS protection, and Cloud CDN.

You can now use these with Traffic Director to support workloads that are not exposed to the public internet: if you have Cloud VPN or Cloud Interconnect between Google Cloud and another environment, your traffic will travel via that private route. We previously posted about how you can use Cloud Armor with on-prem and cloud workloads that can be reached via the public internet. In the approach described below, workloads in other environments don't need to be publicly reachable.

In this example, traffic from clients travels over the public internet and enters Google Cloud's network via a Cloud Load Balancer such as our global external HTTP(S) load balancer. When traffic reaches the load balancer, you can apply network edge services such as Cloud Armor DDoS protection or Identity-Aware Proxy user authentication.

After you've secured your ingress path, the traffic makes a brief stop in Google Cloud, where an application or standalone proxy (configured by Traffic Director) forwards it across Cloud VPN or Cloud Interconnect to your on-prem service.
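The two stages of this path (an edge check, then private forwarding) can be sketched as follows. This is a conceptual toy, not how Cloud Armor or the proxy is implemented: the function names, the deny-list policy, the documentation-range client addresses, and the reuse of `10.0.0.1:80` from the earlier example are all assumptions.

```python
import ipaddress
from typing import List


def edge_allows(client_ip: str, denied_ranges: List[str]) -> bool:
    """Stage 1: an edge policy check, modeled here as a simple IP deny list."""
    address = ipaddress.ip_address(client_ip)
    return not any(address in ipaddress.ip_network(r) for r in denied_ranges)


def handle_request(client_ip: str, denied_ranges: List[str], private_endpoint: str) -> str:
    """Stage 2: forward allowed traffic to the private on-prem endpoint."""
    if not edge_allows(client_ip, denied_ranges):
        return "403 blocked at edge"
    # In the real path, a proxy configured by Traffic Director would forward
    # the request over Cloud VPN or Cloud Interconnect at this point.
    return f"forwarded to {private_endpoint} over private connectivity"


denied = ["203.0.113.0/24"]  # example range reserved for documentation
print(handle_request("203.0.113.7", denied, "10.0.0.1:80"))
print(handle_request("198.51.100.5", denied, "10.0.0.1:80"))
```

The on-prem endpoint only ever sees traffic that passed the edge check, and it is addressed by a private IP, so it never needs a public address.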

Get started today

With Traffic Director, we've been focused on enterprise needs since day one, and we're excited about the class of problems that Hybrid Connectivity NEGs solve for enterprises that operate beyond Google Cloud.

Get started today with Traffic Director, Google Cloud Load Balancing and Cloud Armor to set up a secure global ingress solution for private multi-environment workloads. This is just a first step on our journey to delivering on Traffic Director's mission of being a truly universal application networking solution. More to come!