Google Cloud networking in depth: How Traffic Director provides global load balancing for open service mesh

Anna Berenberg

Engineering Fellow, Google Cloud

Arunkumar Jayaraman

Software Engineer, Google

At Next ’19 last week, we announced Traffic Director for service mesh, bringing global traffic management to your VM and container services. We also gave you a glimpse of Traffic Director’s capabilities in our blog. Today, we’ll take it a step further with a deep dive into its features and benefits.

Traffic Director for service mesh

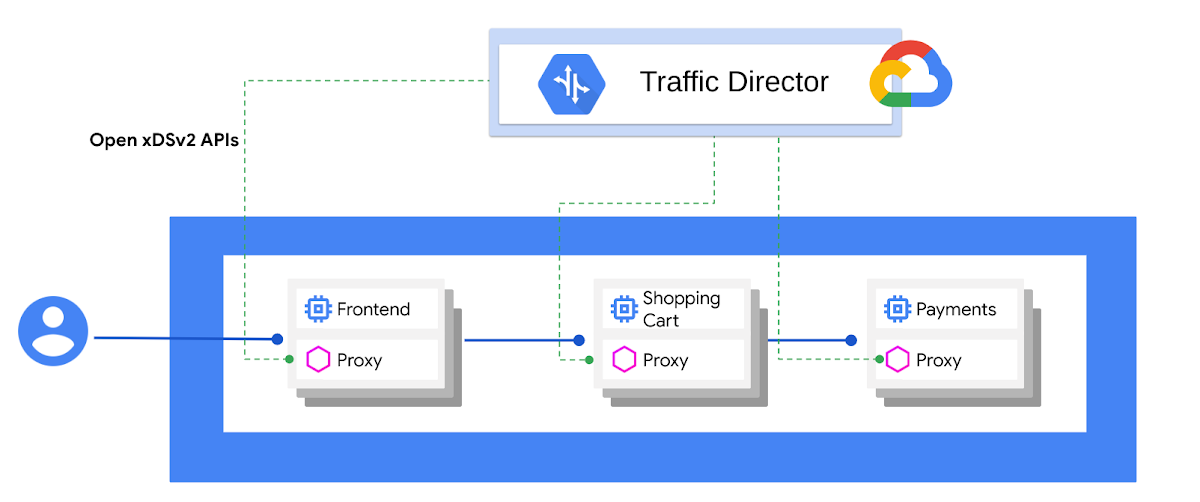

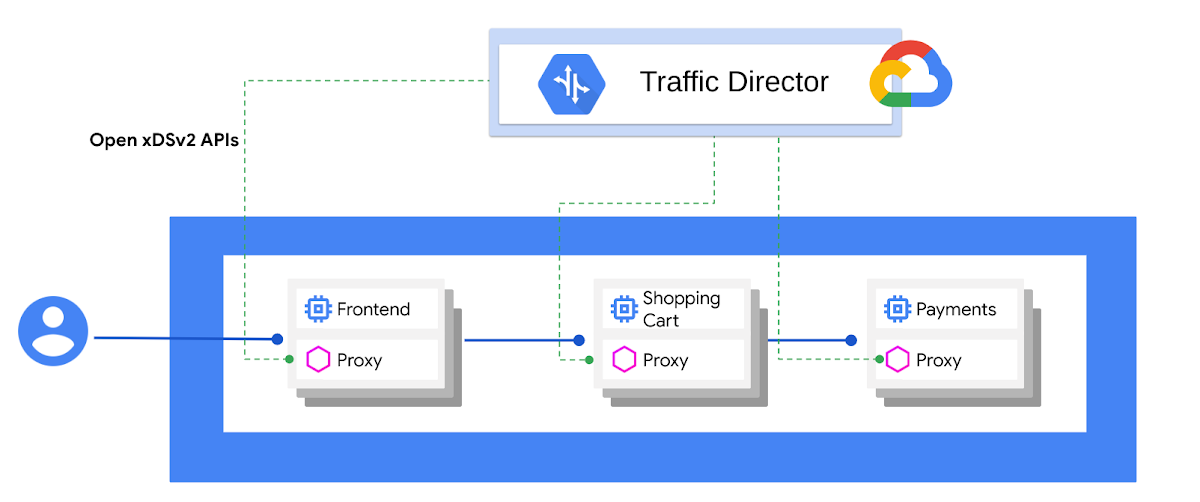

At its core, a service mesh provides a foundation for independent microservices that are written in different languages and maintained by separate teams. A service mesh helps to decouple development from operations. Developers no longer need to write and maintain policies and networking code inside their applications. Instead, these responsibilities move into service proxies, such as Envoy, and into a service-mesh control plane that provisions and dynamically manages the proxies.

“Traffic Director makes it easier to bring the benefits of service mesh and Envoy to production environments,” said Matt Klein, creator of Envoy Proxy.

Traffic Director is Google Cloud’s fully managed traffic control plane for service mesh. Traffic Director works out of the box for both VMs and containers. It uses the open source xDS APIs to communicate with the service proxies in the data plane, ensuring that you’re never locked into a proprietary interface.

Traffic Director capabilities

Global load balancing

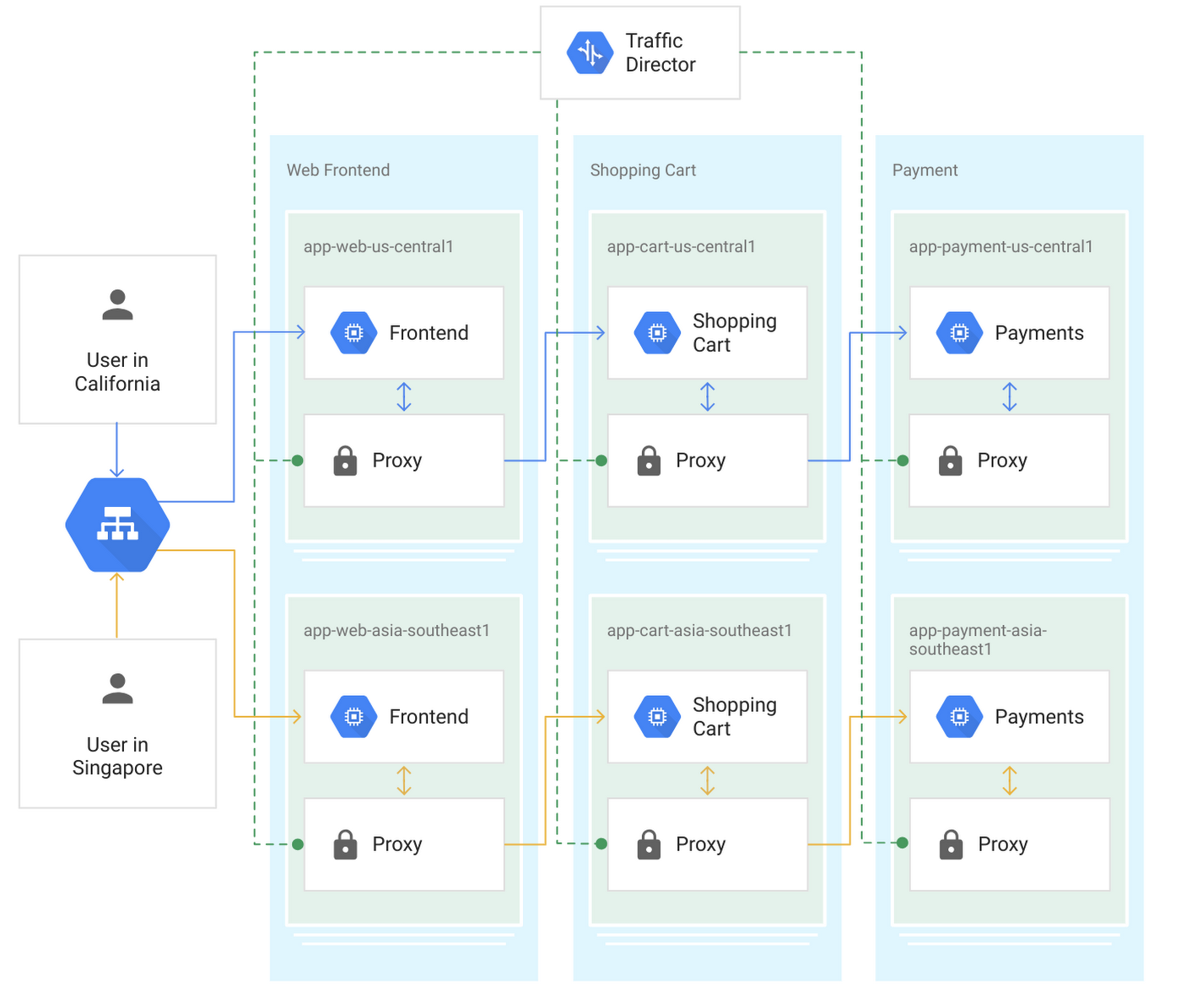

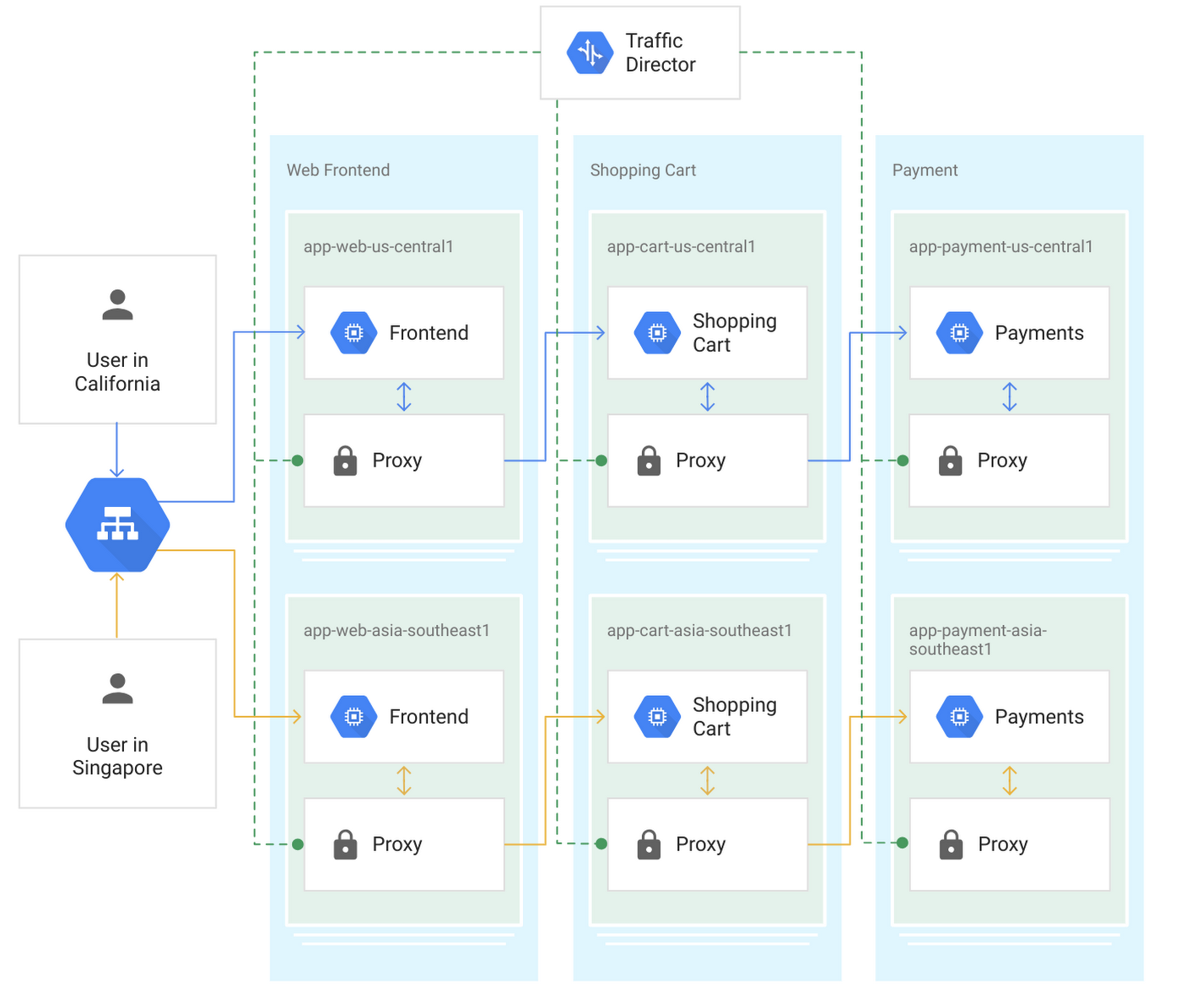

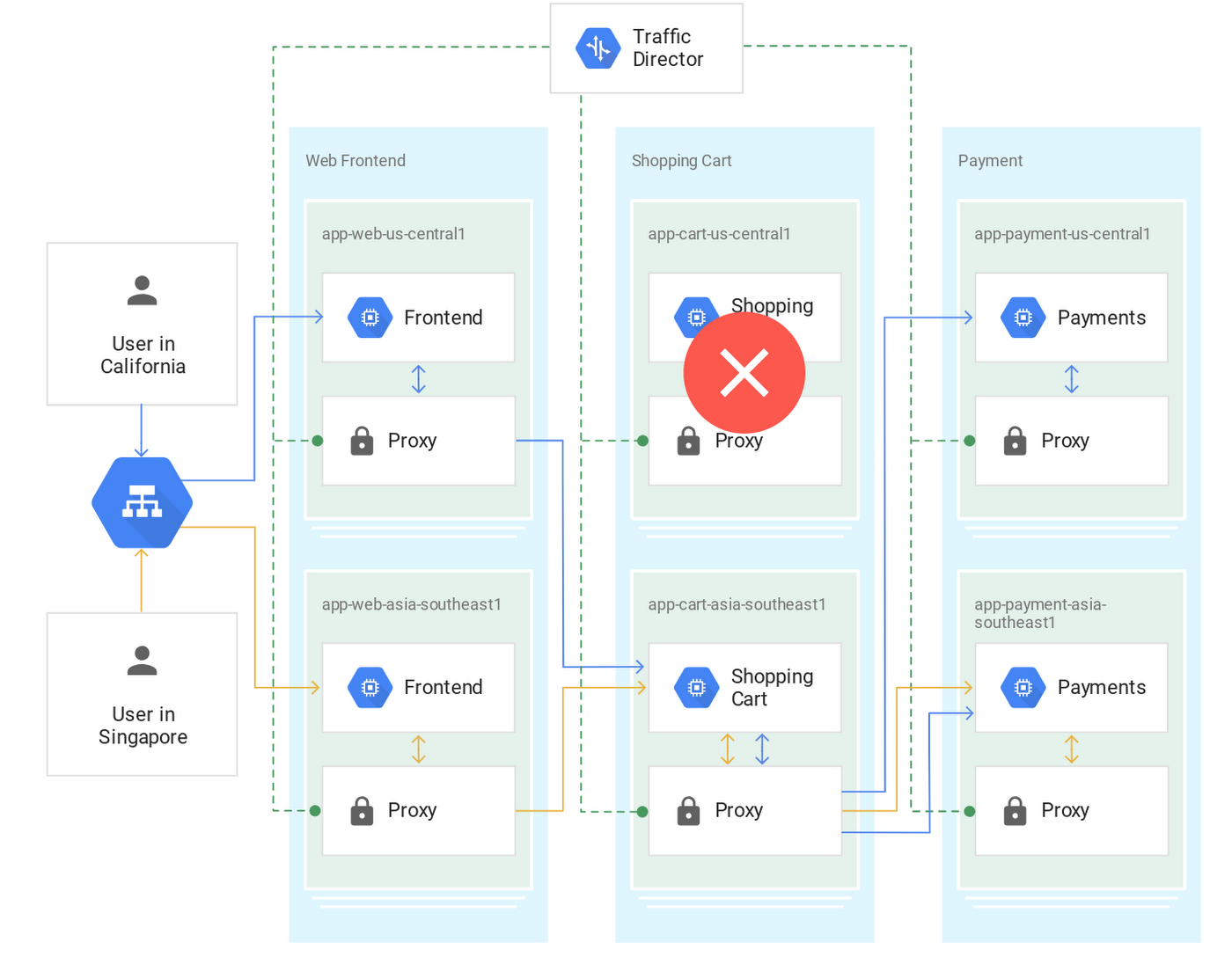

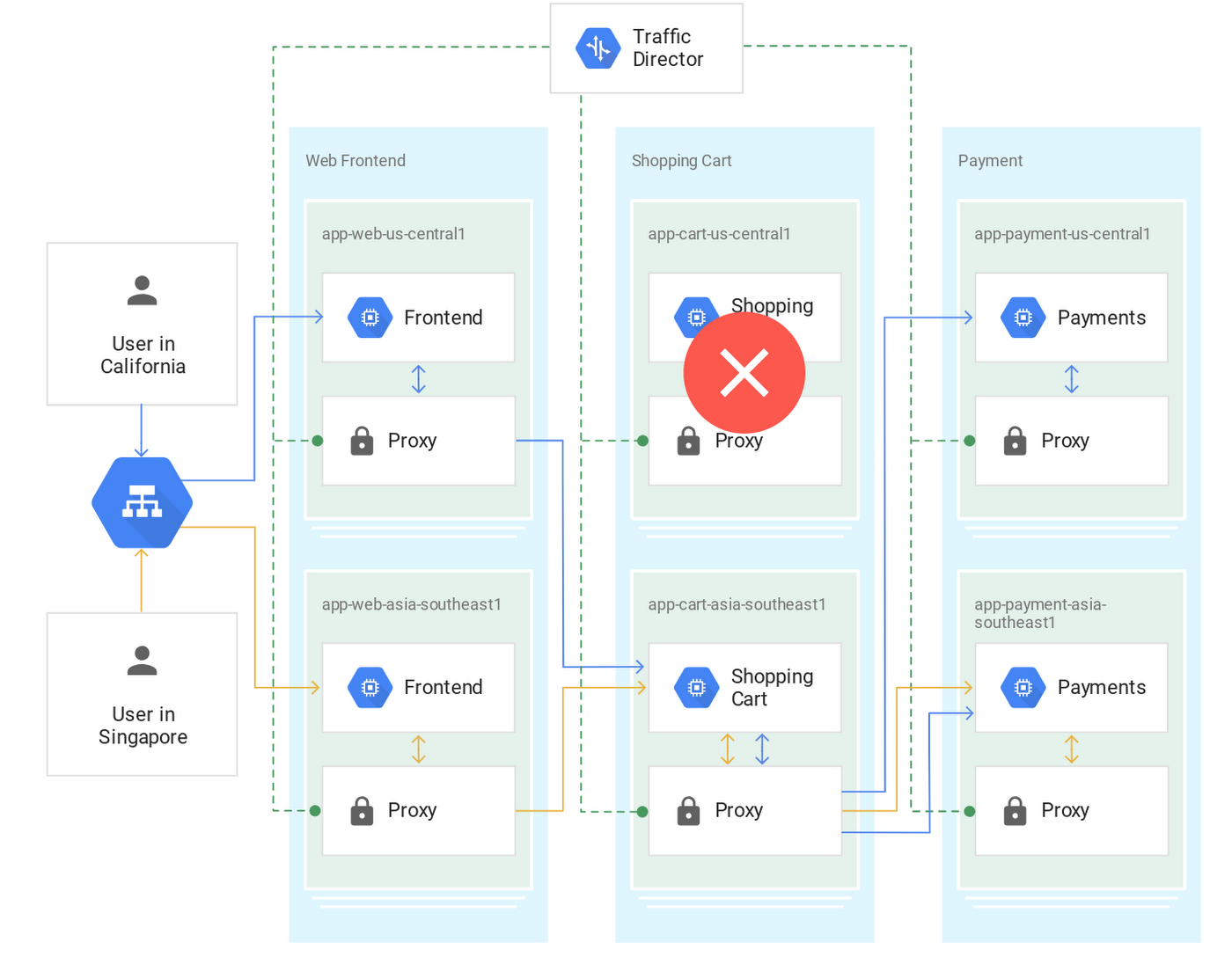

Many of you use Google’s global load balancing for your internet-facing services. Traffic Director brings global load balancing to internal microservices in a service mesh. With global load balancing, you can provision your service instances in Google Cloud Platform (GCP) regions around the world. Traffic Director provides intelligence to the clients to send traffic to the closest service instance with available capacity. This optimizes global traffic distribution between services that originate traffic and those that consume it, with the shortest round-trip time (RTT) per request.

If the instances closest to the client service are down or overloaded, Traffic Director provides intelligence to seamlessly shift the traffic to a healthy instance in the next closest region.
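To make the selection logic concrete, here is a minimal sketch of "closest healthy region with available capacity, otherwise overflow to the next closest." It is an illustration only, not Traffic Director's actual algorithm: the `RegionBackend` type, the `pick_region` function, and all RTT, capacity, and load numbers are hypothetical.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class RegionBackend:
    """Hypothetical view of one region's service instances, as seen by a client."""
    region: str
    rtt_ms: float        # assumed round-trip time from the client to this region
    healthy: bool        # aggregated health of the region's instances
    capacity_rps: float  # requests per second the region can absorb
    load_rps: float      # requests per second it is currently serving


def pick_region(backends: List[RegionBackend], demand_rps: float) -> Optional[str]:
    """Return the closest healthy region with room for demand_rps, else None."""
    for backend in sorted(backends, key=lambda b: b.rtt_ms):
        spare = backend.capacity_rps - backend.load_rps
        if backend.healthy and spare >= demand_rps:
            return backend.region
    return None


backends = [
    # The closest region is healthy but already at capacity.
    RegionBackend("us-central1", rtt_ms=5, healthy=True, capacity_rps=100, load_rps=100),
    RegionBackend("us-east1", rtt_ms=30, healthy=True, capacity_rps=100, load_rps=20),
    RegionBackend("europe-west1", rtt_ms=110, healthy=True, capacity_rps=100, load_rps=10),
]

print(pick_region(backends, demand_rps=10))  # us-east1
```

Because `us-central1` has no spare capacity in this example, the request overflows to `us-east1`, the next closest region, rather than to the more distant `europe-west1`.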

Centralized health-checking

Large service meshes can generate a large amount of health-checking traffic, since every sidecar proxy has to health-check all the service instances in the service mesh. As the mesh grows, having every client proxy health-check every server instance creates an n² health-checking problem, which can become an obstacle to growing and scaling your deployments.

Traffic Director solves this by centralizing health-checks, where a globally distributed, resilient system monitors all service instances. Traffic Director then distributes the aggregated health-check results to all the proxies in the worldwide mesh using the EDS API.
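A bit of arithmetic shows why this matters. The sketch below compares the number of probe relationships when every client proxy checks every instance with the number when a small, fixed pool of checkers does the work. The function names and the pool size of 3 are assumptions made for illustration; the size of Traffic Director's real health-checking system is not stated here.

```python
def mesh_probe_streams(clients: int, instances: int) -> int:
    """Every client proxy health-checks every service instance."""
    return clients * instances


def centralized_probe_streams(instances: int, checkers: int = 3) -> int:
    """A fixed pool of checkers probes each instance (checkers=3 is an assumed value)."""
    return checkers * instances


for n in (10, 100, 1000):
    # With n client proxies and n instances, the mesh case grows as n squared.
    print(n, mesh_probe_streams(n, n), centralized_probe_streams(n))
```

With 1,000 proxies and 1,000 instances, the all-to-all approach needs 1,000,000 probe relationships, while the centralized approach in this sketch needs 3,000 and grows linearly with the number of instances.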

Load-based autoscaling

Traffic Director enables autoscaling based on the load signal that proxies report to it. Traffic Director notifies the Compute Engine autoscaler of any changes in traffic and lets the autoscaler grow to the required size in one shot (instead of in repeated steps, as other autoscalers do), decreasing the time it takes for the autoscaler to react to traffic spikes.

While the Compute Engine autoscaler is ramping up capacity where it’s needed, Traffic Director temporarily redirects traffic to other available instances—even in other regions as needed. Once the autoscaler grows enough capacity for the workload to sustain the spike, Traffic Director moves traffic back to the closest zone and region, once again optimizing traffic distribution to minimize per-request RTT.
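The difference between one-shot and stepwise scaling can be sketched in a few lines. This is a simplified model, not the Compute Engine autoscaler's implementation: `one_shot_target`, `stepwise_rounds`, the per-instance capacity, and the 50% growth limit per round are all hypothetical.

```python
import math


def one_shot_target(load_rps: float, per_instance_rps: float) -> int:
    """Instances needed to serve load_rps, computed directly from the load signal."""
    return max(1, math.ceil(load_rps / per_instance_rps))


def stepwise_rounds(current: int, target: int, max_growth: float = 0.5) -> int:
    """Rounds needed if each round may add at most max_growth * current instances.

    The growth limit is a hypothetical policy used only for contrast.
    """
    rounds = 0
    while current < target:
        step = max(1, math.floor(current * max_growth))
        current = min(target, current + step)
        rounds += 1
    return rounds


target = one_shot_target(load_rps=1000, per_instance_rps=50)
print(target)                      # 20 instances, reached in a single resize
print(stepwise_rounds(4, target))  # 5 rounds: 4 -> 6 -> 9 -> 13 -> 19 -> 20
```

In this model, a group of 4 instances facing a spike that needs 20 would take five evaluation rounds to catch up under the stepwise policy, whereas a load-based signal lets the target size be requested at once.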

Built-in resiliency

Since Traffic Director is a fully managed service from GCP, you don’t have to worry about its uptime, lifecycle management, scalability, or availability. Traffic Director infrastructure is globally distributed and resilient, and uses the same battle-tested systems that serve Google’s user-facing services. Traffic Director will offer a 99.99% service level agreement (SLA) when it is generally available (GA).

Traffic control capabilities

Traffic Director lets you control traffic without having to modify the application code itself.

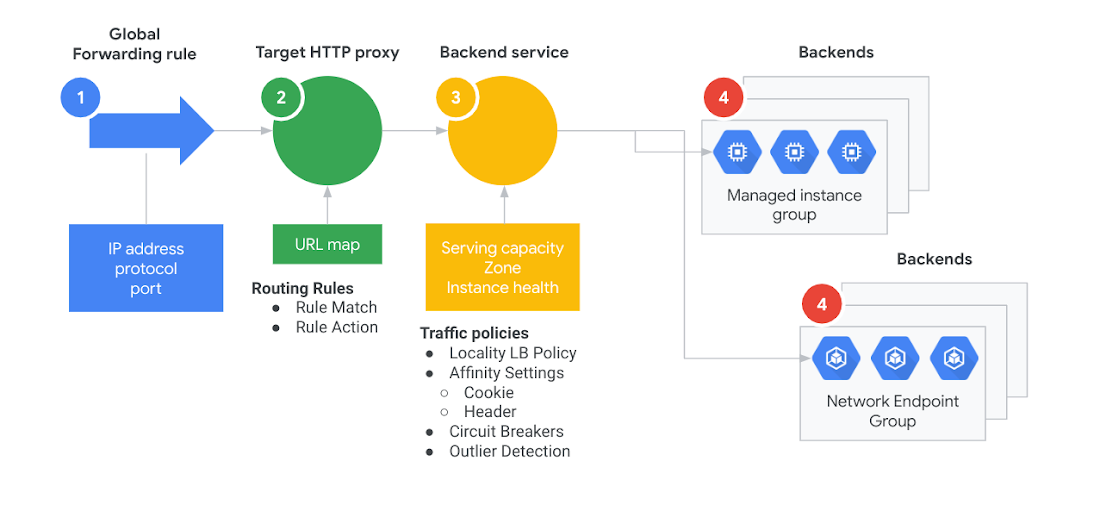

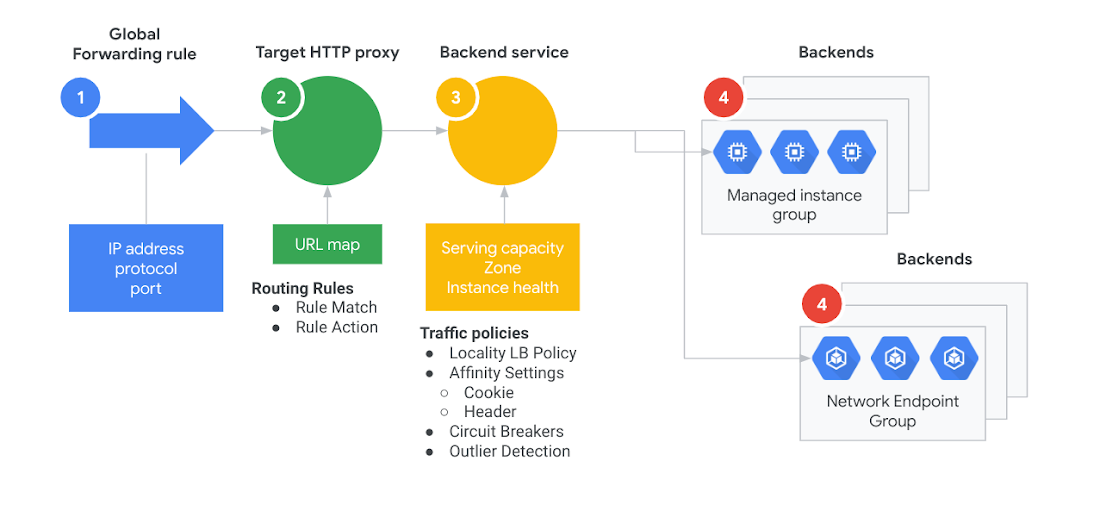

You can create custom traffic control rules and policies by specifying:

HTTP match rules: Specify parameters including host, path and headers to match in an incoming request.

HTTP actions: Actions to be performed on the request after a match. These include redirects, rewrites, header transforms, mirroring, fault injection and more.

Per-service traffic policies: These specify load-balancing algorithms, circuit-breaking parameters, and other service-centric configurations.

Configuration filtering: Capability to push configuration to a subset of clients.

Using the above routing rules and traffic policies, you get sophisticated traffic control capabilities without the typical toil.
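To illustrate how match rules and actions fit together, here is a minimal sketch of first-match routing on host, path, and headers. The `Request` and `RouteRule` types, the `route` function, and the service names are hypothetical and do not reflect Traffic Director's configuration schema.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class Request:
    host: str
    path: str
    headers: Dict[str, str] = field(default_factory=dict)


@dataclass
class RouteRule:
    """Hypothetical match rule: every field that is set must match the request."""
    action: str                       # e.g. the name of the service to route to
    host: Optional[str] = None
    path_prefix: Optional[str] = None
    headers: Dict[str, str] = field(default_factory=dict)

    def matches(self, req: Request) -> bool:
        if self.host is not None and req.host != self.host:
            return False
        if self.path_prefix is not None and not req.path.startswith(self.path_prefix):
            return False
        return all(req.headers.get(k) == v for k, v in self.headers.items())


def route(rules: List[RouteRule], req: Request, default: str = "default-service") -> str:
    """Return the action of the first matching rule, or the default."""
    for rule in rules:
        if rule.matches(req):
            return rule.action
    return default


rules = [
    RouteRule(action="cart-canary", path_prefix="/cart", headers={"x-user-group": "beta"}),
    RouteRule(action="cart", path_prefix="/cart"),
]

beta = Request("shop.example.com", "/cart/items", {"x-user-group": "beta"})
regular = Request("shop.example.com", "/cart/items")
print(route(rules, beta), route(rules, regular))  # cart-canary cart
```

Rule order matters in this sketch: the more specific header-based rule is listed first so that it takes precedence over the general `/cart` rule.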

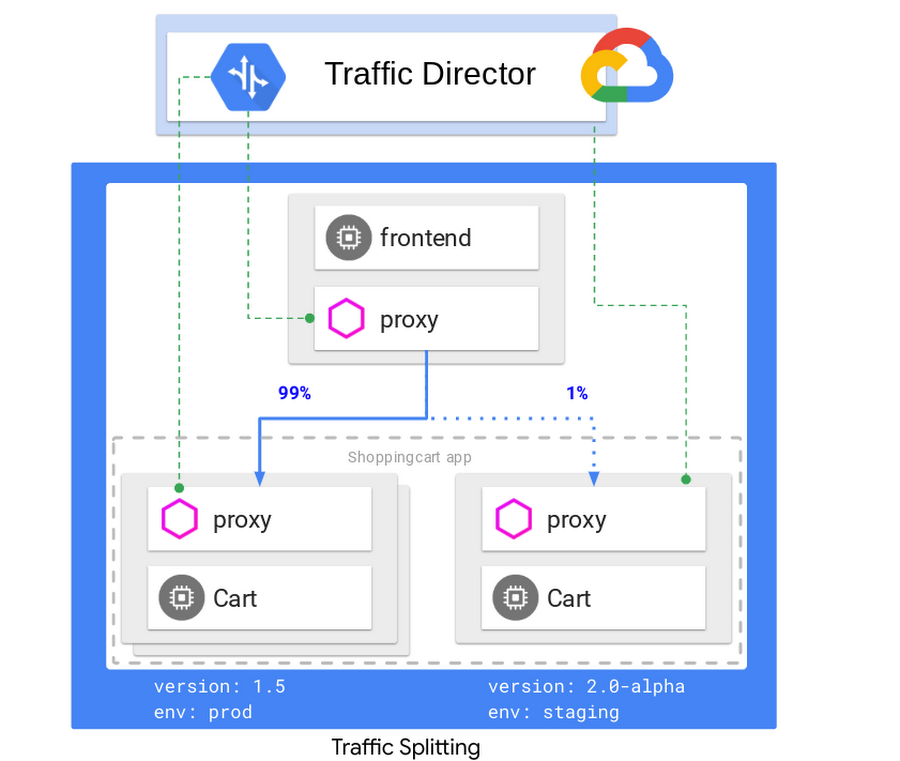

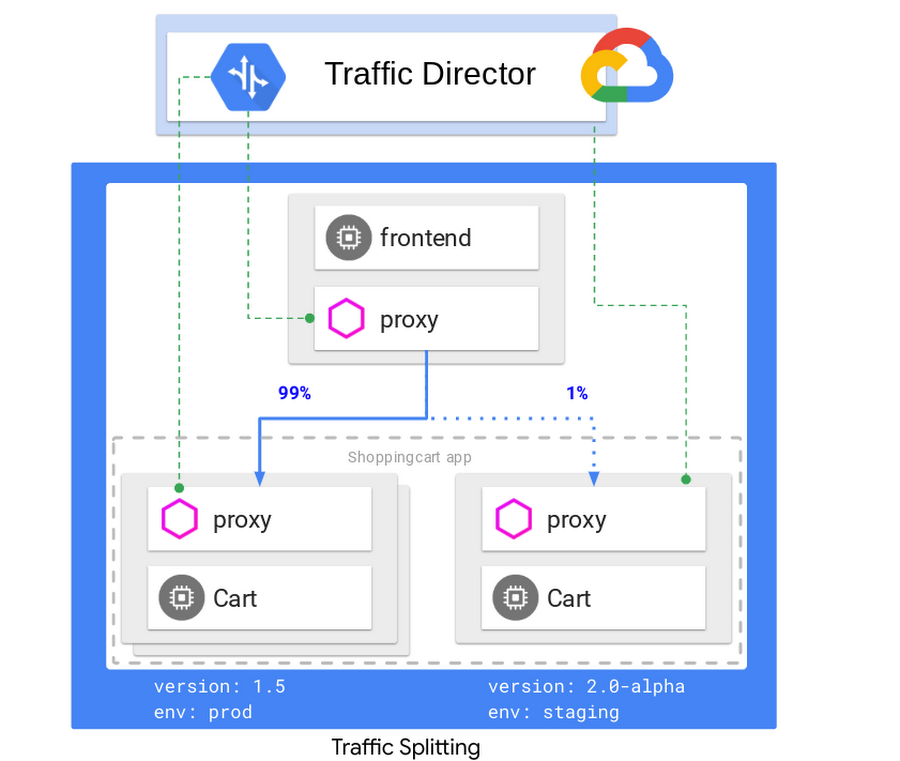

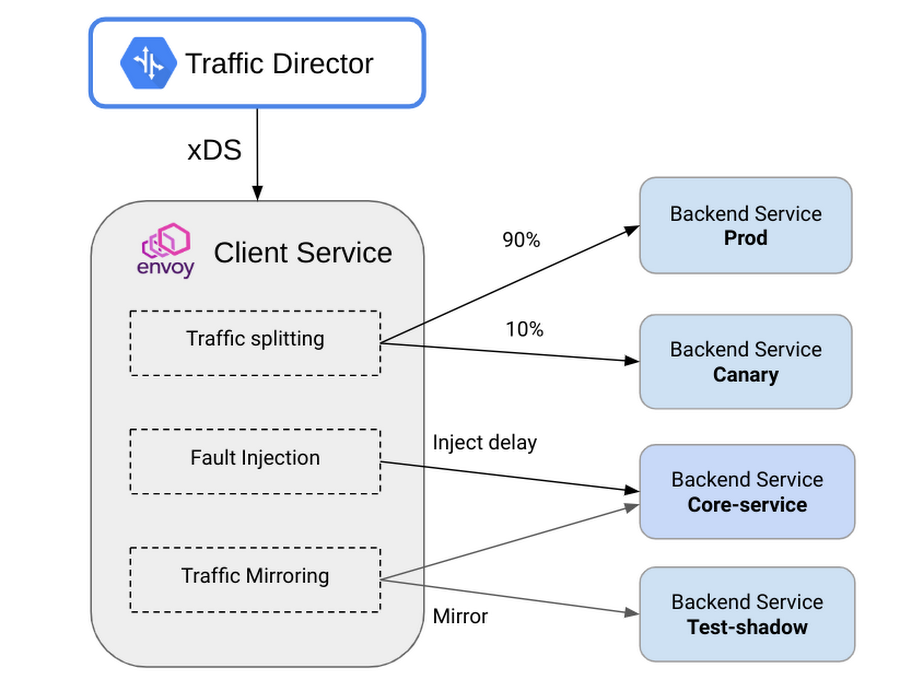

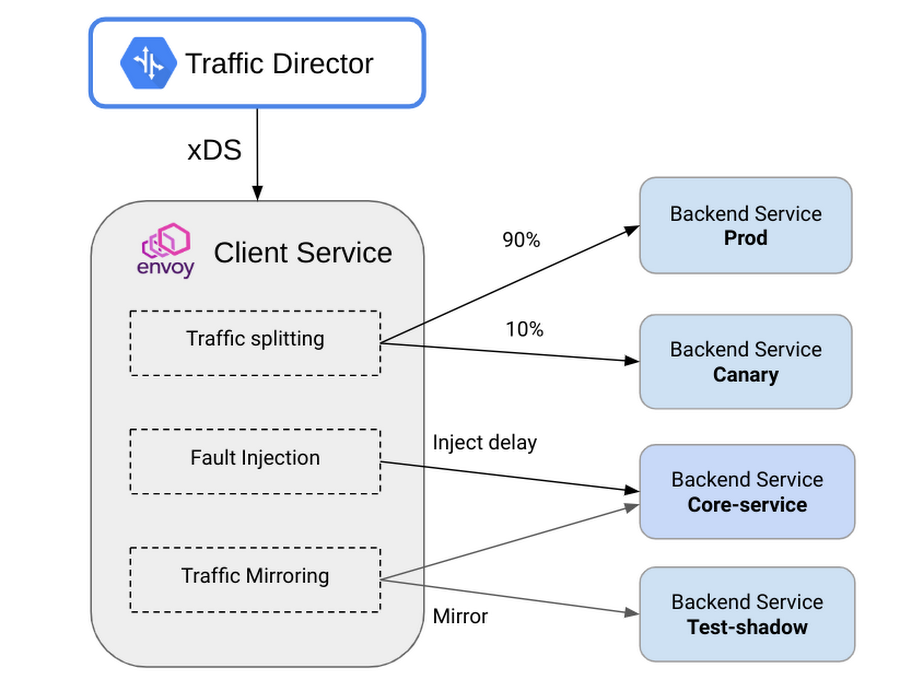

Let’s look at an example of Traffic Director’s traffic control capabilities: traffic splitting. Traffic Director lets you easily configure scenarios such as rolling out a new version of a service, say a shopping cart, and gradually ramping up the traffic routed to it.
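As a rough illustration of weight-based traffic splitting, the sketch below sends about 10% of requests to a new version of the shopping cart service. The `pick_version` function, the service names `cart-v1` and `cart-v2`, and the 90/10 weights are hypothetical; in a real mesh, the proxies apply the weights that the control plane pushes to them.

```python
import random
from collections import Counter
from typing import Dict


def pick_version(weights: Dict[str, int], rng: random.Random) -> str:
    """Choose a service version with probability proportional to its weight."""
    versions = list(weights)
    return rng.choices(versions, weights=[weights[v] for v in versions], k=1)[0]


rng = random.Random(42)                  # seeded so the run is repeatable
split = {"cart-v1": 90, "cart-v2": 10}   # hypothetical 90/10 rollout
counts = Counter(pick_version(split, rng) for _ in range(10_000))
print(counts)
```

Ramping up the rollout is then a matter of changing the weights, for example to 50/50 and finally 0/100, with no change to the application code.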

You can also configure traffic steering to direct traffic based on HTTP headers, fault injection for testing resiliency of your service, mirroring so you can send a copy of the production traffic to a shadow service, and more.

You can get access to these features by signing up for the traffic control alpha.

Consistent traffic management for VM and container services

Traffic Director allows you to seamlessly deploy and manage heterogeneous deployments composed of container and VM services. The instances for each service can span multiple regions.

With Traffic Director, VM endpoints are configured using managed instance groups, and container endpoints are configured as standalone network endpoint groups. As described above, an open source service proxy like Envoy is injected into each of these instances. The rest of the data model and policies remain the same for both containers and VMs, as shown below:

This model provides consistency when it comes to service deployment, and the ability to globally load balance, seamlessly, across VMs and container instances in a service.

Try Traffic Director today

Learn more about Traffic Director online and watch the Next ’19 talks on Traffic Director and Service Mesh Networking. We’d love your feedback on Traffic Director and any new features you’d like to see—you can reach us at gcp-networking@google.com.