Cloud Machine Learning Perception services updates: Cloud Video Intelligence enters beta and Cloud Vision gets new features

Ram Ramanathan

Product Manager, Google Cloud Platform

As a follow-up to our machine learning announcements from Google Cloud Next in March 2017, today we’re excited to announce enhancements to our Cloud Machine Learning Perception services: Cloud Video Intelligence API is graduating to public beta, and we’ve released some important updates to Cloud Vision API.

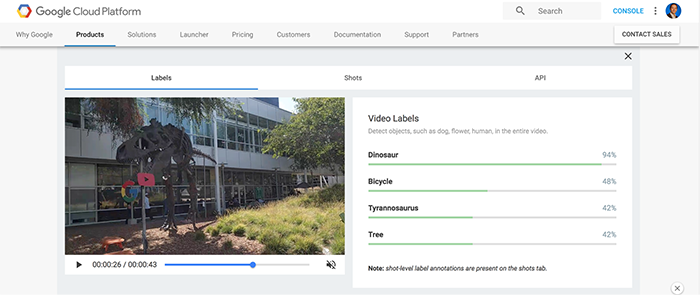

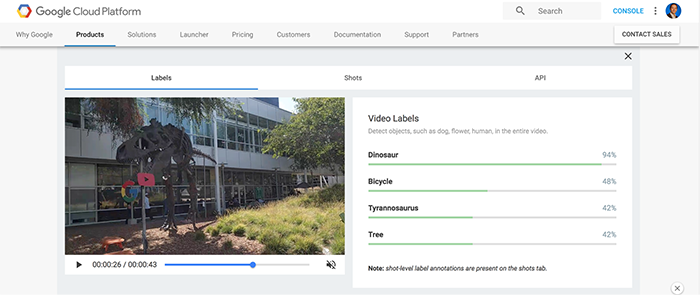

Cloud Video Intelligence beta is now open to all

Cloud Video Intelligence API allows businesses to build applications that automatically extract entities from a video. Now any Google Cloud Platform (GCP) user can use Cloud Video Intelligence API to understand their video content and make videos searchable and discoverable via an easy-to-use REST API. With Cloud Video Intelligence API, you can identify relevant entities across every moment of every video file in your catalog. With the beta release, we're also introducing pornographic content detection, which flags inappropriate content within a video. We've also updated the Label Detection model to improve its accuracy.
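To give a sense of what a call to the REST API looks like, here is a minimal Python sketch that builds an annotate request using only the standard library. It assumes the `v1` endpoint `videos:annotate`, the `inputUri` and `features` request fields, and the feature names `LABEL_DETECTION`, `SHOT_CHANGE_DETECTION` and `EXPLICIT_CONTENT_DETECTION` from the current REST reference; the beta described in this post may have used a different version path and feature names. The bucket path and access token are placeholders, and `build_annotate_request` is a hypothetical helper.

```python
import json
import urllib.request

# Assumed endpoint from the v1 REST reference; the beta used an earlier version path.
ANNOTATE_URL = "https://videointelligence.googleapis.com/v1/videos:annotate"

# Assumed v1 feature names for labels, shot changes and adult content.
KNOWN_FEATURES = {
    "LABEL_DETECTION",
    "SHOT_CHANGE_DETECTION",
    "EXPLICIT_CONTENT_DETECTION",
}


def build_annotate_request(input_uri, features, access_token):
    """Build (but do not send) a POST request asking for the given features.

    Hypothetical helper: `input_uri` is a Cloud Storage path such as
    gs://bucket/file.mp4 and `access_token` is an OAuth 2.0 bearer token.
    """
    unknown = sorted(set(features) - KNOWN_FEATURES)
    if unknown:
        raise ValueError(f"unsupported features: {unknown}")
    body = {"inputUri": input_uri, "features": list(features)}
    return urllib.request.Request(
        ANNOTATE_URL,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {access_token}",
            "Content-Type": "application/json",
        },
        method="POST",
    )
```

Passing the returned request to `urllib.request.urlopen` would send it. Video annotation is asynchronous, so the API answers with a long-running operation whose `name` you poll until `done` is true, at which point the results are attached to the operation.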

We’re also announcing pricing:

- Label and adult content detection is free for the first 1,000 minutes, and costs $0.10/minute from 1,001 to 10,000 minutes.

- Shot detection (detecting scene changes within a video) is free for the first 1,000 minutes, and costs $0.05/minute from 1,001 to 10,000 minutes.
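The tiered pricing above is simple arithmetic: subtract the 1,000 free minutes, then multiply the remainder by the per-minute rate. For example, 2,500 minutes of label detection leaves 1,500 billable minutes at $0.10, or $150. The sketch below assumes each feature is billed separately on its own minute count. Because the post does not list prices beyond 10,000 minutes or state a billing period, the hypothetical `feature_cost` helper rejects larger inputs rather than guessing.

```python
from decimal import Decimal

# Per-minute rates from the announcement, for minutes 1,001 through 10,000 (USD).
LABEL_OR_ADULT_RATE = Decimal("0.10")
SHOT_DETECTION_RATE = Decimal("0.05")

FREE_MINUTES = 1_000  # the first 1,000 minutes are free
TIER_CAP = 10_000     # the post only lists prices up to 10,000 minutes


def feature_cost(minutes: int, rate: Decimal) -> Decimal:
    """Estimate the charge for one feature over `minutes` of video.

    Assumes each feature is billed on its own minute count. Raises
    ValueError outside the 0..10,000 range the announcement covers.
    """
    if minutes < 0:
        raise ValueError("minutes must be non-negative")
    if minutes > TIER_CAP:
        raise ValueError("pricing above 10,000 minutes is not covered here")
    billable = max(0, minutes - FREE_MINUTES)
    # Decimal avoids floating-point rounding errors in currency amounts.
    return billable * rate
```

Using `Decimal` rather than `float` keeps amounts such as $0.05 exact, which matters once costs are summed across many videos.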

Early customers have had a great experience with Video Intelligence API. For example, SpringML recently helped a broadcasting station make its media content discoverable and engaging.

"We used Video Intelligence API to perform deep video analytics to classify media assets, detect context and generate real-time transcription. Using the API we've been able to organize video content and add labeling in a matter of days, a process which previously took months to complete."

Prabhu Palanisamy, President and Chief Strategy Officer, SpringML

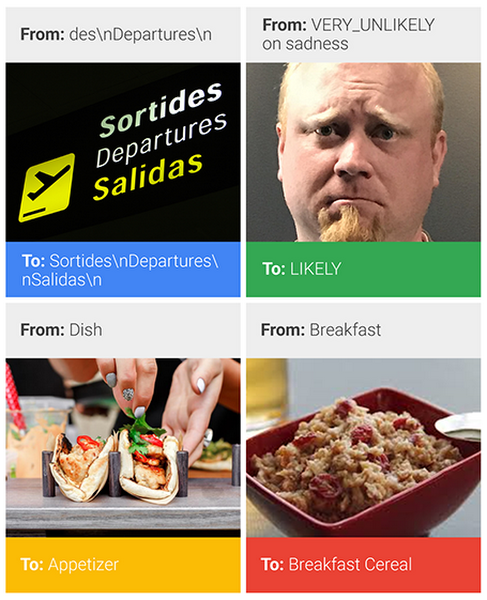

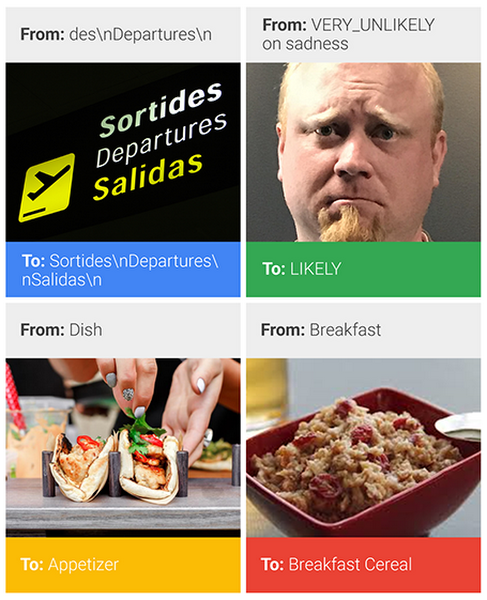

Updates to Cloud Vision API

Since its initial release in December 2015, Cloud Vision API has become more powerful and accurate thanks to functionality improvements and significant adoption by customers. Today we're updating many existing features and releasing new features, such as web and document detection, for general use. Specifically:

- Updated label detection model with more than a 2x increase in recall and a vocabulary expanded to over 10,000 entities

- Updated "Safe Search" model with improved detection of adult content and a 30% reduction in errors

- Updated text detection model that reduces mean latency by 25% and improves recall on Latin languages by over 5%

- Updated face detection model, with over 2x improvement in accuracy for detecting sadness, surprise and anger

- Web detection, powered by Google Image Search, which helps users find similar pictures on the web along with their associated metadata and has processed over 10 million images over the course of the beta
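As an illustration of how these features are requested, here is a minimal Python sketch that builds a JSON body for the `images:annotate` REST method and reads emotion likelihoods from a face annotation in the response. The feature type names, the `imageUri` source field, the likelihood values and the `*Likelihood` fields follow the v1 REST reference as we understand it; the helper names `build_vision_body` and `strong_emotions` and the default `LIKELY` threshold are hypothetical choices for this example.

```python
import json

# Assumed endpoint of the v1 images:annotate REST method.
VISION_URL = "https://vision.googleapis.com/v1/images:annotate"

# Feature types corresponding to the capabilities listed above.
SUPPORTED_FEATURES = {
    "LABEL_DETECTION",
    "SAFE_SEARCH_DETECTION",
    "TEXT_DETECTION",
    "DOCUMENT_TEXT_DETECTION",
    "FACE_DETECTION",
    "WEB_DETECTION",
}

# Likelihood values, ordered from least to most confident.
LIKELIHOODS = ["UNKNOWN", "VERY_UNLIKELY", "UNLIKELY", "POSSIBLE", "LIKELY", "VERY_LIKELY"]

# Emotion name -> field on a face annotation in the response.
EMOTION_FIELDS = {
    "joy": "joyLikelihood",
    "sorrow": "sorrowLikelihood",
    "anger": "angerLikelihood",
    "surprise": "surpriseLikelihood",
}


def build_vision_body(image_uri, feature_types, max_results=10):
    """Return a JSON string requesting `feature_types` for one image (hypothetical helper)."""
    unknown = sorted(set(feature_types) - SUPPORTED_FEATURES)
    if unknown:
        raise ValueError(f"unsupported feature types: {unknown}")
    request = {
        "image": {"source": {"imageUri": image_uri}},
        "features": [{"type": t, "maxResults": max_results} for t in feature_types],
    }
    return json.dumps({"requests": [request]})


def strong_emotions(face_annotation, threshold="LIKELY"):
    """List emotions whose likelihood is at or above `threshold` (hypothetical helper)."""
    cutoff = LIKELIHOODS.index(threshold)
    return sorted(
        name
        for name, field in EMOTION_FIELDS.items()
        if LIKELIHOODS.index(face_annotation.get(field, "UNKNOWN")) >= cutoff
    )
```

The JSON string would be POSTed to `VISION_URL` with an OAuth bearer token or API key. Each element of the response's `responses` array then carries fields such as `labelAnnotations`, `safeSearchAnnotation`, `faceAnnotations` and `webDetection`, and each entry in `faceAnnotations` can be passed to `strong_emotions`.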

These updates will be rolled out over the next three weeks and will be available globally.