Best practices for working with Google Cloud Audit Logs

Grace Mollison

Head Cloud Solutions Architects EMEA

Mary Koes

Product Manager, Google Cloud

As an auditor, you probably spend a lot of time reviewing logs. Google Cloud Audit Logs is an integral part of the Google Stackdriver suite of products, and understanding how it works and how to use it is a key skill you need to implement an auditing approach for systems deployed on Google Cloud Platform (GCP). In this post, we’ll discuss the key functionality of Cloud Audit Logs and call out some best practices.

The first thing to know about Cloud Audit Logs is that it maintains two log streams for each project: admin activity and data access. GCP services generate these logs to help you answer the question of "who did what, where, and when?" within your GCP projects. These logs are also distinct from your application logs.

Admin activity logs contain log entries for API calls or other administrative actions that modify the configuration or metadata of resources. Admin activity logs are always enabled. There's no charge for admin activity audit logs, and they're retained for 400 days (roughly 13 months).

Data access logs, on the other hand, record API calls that create, modify or read user-provided data. Data access audit logs are disabled by default because they can grow to be quite large.

For your reference, here’s the full list of GCP services that produce audit logs.

Configure and view audit logs

Getting started with Cloud Audit Logs is simple. Some services are on by default, and others are just a few clicks away from being operational. Here’s how to set up, configure and use various Cloud Audit Logs capabilities.

Configuring audit log collection

Admin activity logs are enabled by default; you don’t need to do anything to start collecting them. With the exception of BigQuery, however, data access audit logs are disabled by default. Follow the guidance detailed in Configuring Data Access Logs to enable them.

One best practice for data access logs is to use a test project to validate the configuration for your data access audit collection before you propagate it to developer and production projects. If you configure your IAM controls incorrectly, your projects may become inaccessible.
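Data access logging is controlled through the `auditConfigs` section of a resource's IAM policy. The following is a minimal sketch, in plain Python with no API calls, of how that section could be added to a policy read from a test project while leaving the existing role bindings untouched. The helper name `with_data_access_logging` and the sample policy are hypothetical and used only for illustration.

```python
import copy

# Log types that can be switched on in an audit config.
DATA_ACCESS_LOG_TYPES = ("ADMIN_READ", "DATA_READ", "DATA_WRITE")


def with_data_access_logging(policy, service="allServices", exempted_members=()):
    """Return a copy of an IAM policy dict with data access logging enabled.

    Hypothetical helper: it only edits the dict, it does not call any API.
    """
    updated = copy.deepcopy(policy)
    log_configs = []
    for log_type in DATA_ACCESS_LOG_TYPES:
        config = {"logType": log_type}
        if exempted_members:
            config["exemptedMembers"] = list(exempted_members)
        log_configs.append(config)
    # Replace any existing entry for the same service and keep the others.
    audit_configs = [
        c for c in updated.get("auditConfigs", []) if c.get("service") != service
    ]
    audit_configs.append({"service": service, "auditLogConfigs": log_configs})
    updated["auditConfigs"] = audit_configs
    return updated


# Hypothetical policy, as it might be read from a test project.
test_policy = {
    "bindings": [{"role": "roles/owner", "members": ["user:alice@example.com"]}]
}
new_policy = with_data_access_logging(test_policy)
print(new_policy["auditConfigs"])
```

Because the helper copies the policy and never touches `bindings`, you can compare the before and after versions in the test project and confirm that access is unchanged before applying the same change elsewhere.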

Viewing audit logs

You can view audit logs from two places in the GCP Console: via the activity feed, which provides summary entries, and via the Stackdriver Logs viewer page, which gives full entries.

Permissions

You should consider access to audit log data as sensitive and configure appropriate access controls. You can do this by using IAM roles to apply access controls to logs.

To view logs, you need to grant the IAM role logging.viewer (Logs Viewer) for the admin activity logs, and logging.privateLogViewer (Private Logs viewer) for the data access logs.
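As an illustration of the split between the two roles, here is a small sketch that builds the IAM bindings for two groups of readers. The function name and the member addresses are hypothetical; only the role identifiers `roles/logging.viewer` and `roles/logging.privateLogViewer` come from Cloud IAM.

```python
ADMIN_ACTIVITY_ROLE = "roles/logging.viewer"
DATA_ACCESS_ROLE = "roles/logging.privateLogViewer"


def audit_log_bindings(admin_activity_readers, data_access_readers):
    """Build IAM bindings (as plain dicts) for the two audit log audiences."""
    bindings = []
    if admin_activity_readers:
        bindings.append(
            {"role": ADMIN_ACTIVITY_ROLE, "members": sorted(admin_activity_readers)}
        )
    if data_access_readers:
        bindings.append(
            {"role": DATA_ACCESS_ROLE, "members": sorted(data_access_readers)}
        )
    return bindings


# Hypothetical members: a broad operations group and a small audit team.
bindings = audit_log_bindings(
    admin_activity_readers={"group:ops@example.com"},
    data_access_readers={"group:auditors@example.com"},
)
for binding in bindings:
    print(binding["role"], binding["members"])
```

Keeping the data access audience separate and small makes it easier to review who can read the more sensitive log stream.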

When configuring roles for Cloud Audit Logs, this how-to guide describes some typical scenarios and provides guidance on configuring IAM policies that address the need to control access to audit logs. One best practice is to ensure that you’ve applied the appropriate IAM controls to restrict who can access the audit logs.

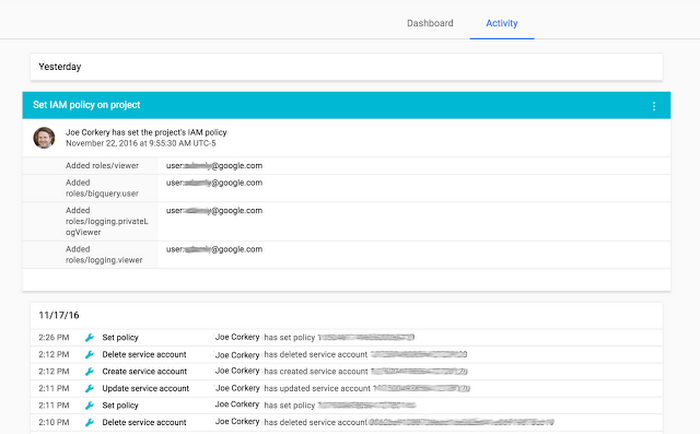

Viewing the activity feed

You can see a high-level overview of all your audit logs on the Cloud Console Activity page. Click on any entry to display a detailed view of that event, as shown below.

By default, this feed does not display data access logs. To enable them, go to the Filter configuration panel and select the “Data Access” field under Categories. (Please note, you also need to have the Private Logs Viewer IAM permission in order to see data access logs).

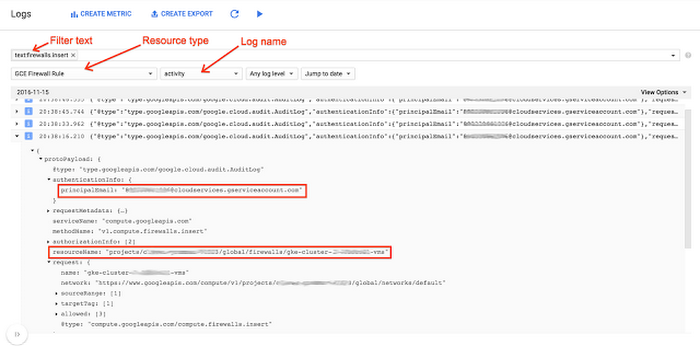

Viewing audit logs via the Stackdriver Logs viewer

You can view detailed log entries from the audit logs in the Stackdriver Logs Viewer. With Logs Viewer, you can filter or perform free text search on the logs, as well as select logs by resource type and log name (“activity” for the admin activity logs and “data_access” for the data access logs).

The example below displays some log entries in their JSON format, and highlights a few important fields.

Filtering Audit Logs

Stackdriver provides both basic and advanced logs filters. Basic logs filters let you filter the results displayed in the feed by user, resource type and date/time.

An advanced logs filter is a Boolean expression that specifies a subset of all the log entries in your project. You can use it to choose log entries:

- from specific logs or log services

- within a given time range

- that satisfy conditions on metadata or user-defined fields

- that represent a sampling percentage of all log entries
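The criteria above can be combined into a single filter expression. The sketch below assembles one as a string; the helper names and the project ID are hypothetical, while the `logName`, `timestamp`, `protoPayload.methodName` fields and the `sample(insertId, ...)` function follow the advanced filter syntax as I understand it. The `:` operator is a substring match, which is used here because the exact method name can vary by service.

```python
from urllib.parse import quote


def audit_log_name(project_id, stream):
    """Return the full log name for an audit log stream of a project."""
    if stream not in ("activity", "data_access"):
        raise ValueError(f"unknown audit log stream: {stream!r}")
    # The slash inside the log ID must be URL-encoded as %2F.
    log_id = quote(f"cloudaudit.googleapis.com/{stream}", safe="")
    return f"projects/{project_id}/logs/{log_id}"


def advanced_filter(project_id, stream, start=None, end=None,
                    method=None, sample_fraction=None):
    """Compose an advanced logs filter from optional criteria."""
    clauses = [f'logName="{audit_log_name(project_id, stream)}"']
    if start:
        clauses.append(f'timestamp>="{start}"')
    if end:
        clauses.append(f'timestamp<"{end}"')
    if method:
        clauses.append(f'protoPayload.methodName:"{method}"')
    if sample_fraction is not None:
        clauses.append(f"sample(insertId, {sample_fraction})")
    return " AND ".join(clauses)


# Hypothetical project ID and time range.
print(advanced_filter("my-project", "activity",
                      start="2018-06-01T00:00:00Z", method="SetIamPolicy"))
```

The resulting string can be pasted into the advanced filter box of the Logs Viewer to narrow the admin activity log to IAM policy changes.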

Below is a snippet of the log entry that shows that the SetIamPolicy call was made to grant the BigQuery dataviewer IAM role to Alice.

Exporting logs

Log entries are held in Stackdriver Logging for a limited time known as the retention period. After that, the entries are deleted. To keep log entries longer, you need to export them outside of Stackdriver Logging by configuring log sinks.

A sink includes a destination and a filter that selects the log entries to export, and consists of the following properties:

- Sink identifier: A name for the sink

- Parent resource: The resource in which you create the sink. This can be a project, folder, billing account, or an organization

- Logs filter: Selects which log entries to export through this sink, giving you the flexibility to export all logs or specific logs

- Destination: A single place to send the log entries matching your filter. Stackdriver Logging supports three destinations: Google Cloud Storage buckets, BigQuery datasets, and Cloud Pub/Sub topics.

- Writer identity: A service account that has been granted permissions to write to the destination.
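To make the relationship between these properties concrete, here is a small sketch that models a sink as a Python dataclass and checks the parent and destination formats. The class name `LogSink` and the example values are hypothetical; the destination prefixes reflect the three supported destination types as I understand their naming.

```python
from dataclasses import dataclass

# Prefixes for the three supported destination types.
DESTINATION_PREFIXES = (
    "storage.googleapis.com/",   # Cloud Storage bucket
    "bigquery.googleapis.com/",  # BigQuery dataset
    "pubsub.googleapis.com/",    # Cloud Pub/Sub topic
)
PARENT_KINDS = ("projects", "folders", "billingAccounts", "organizations")


@dataclass(frozen=True)
class LogSink:
    """Hypothetical in-memory model of a log sink's properties."""
    name: str
    parent: str            # e.g. "organizations/1234567890"
    log_filter: str        # an empty string would select all logs
    destination: str
    writer_identity: str = ""  # service account that writes to the destination

    def __post_init__(self):
        kind = self.parent.split("/", 1)[0]
        if kind not in PARENT_KINDS:
            raise ValueError(f"unsupported parent resource: {self.parent!r}")
        if not self.destination.startswith(DESTINATION_PREFIXES):
            raise ValueError(f"unsupported destination: {self.destination!r}")


# Hypothetical sink that exports admin activity logs to a BigQuery dataset.
sink = LogSink(
    name="admin-activity-to-bq",
    parent="projects/my-project",
    log_filter='logName:"cloudaudit.googleapis.com%2Factivity"',
    destination="bigquery.googleapis.com/projects/my-project/datasets/audit_logs",
)
print(sink)
```

Validating the destination early is a cheap way to catch a sink that points at more than one place or at an unsupported service.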

Another feature for working with logs is Aggregated Exports, which allows you to set up a sink at the Cloud IAM organization or folder level, and export logs from all the projects inside the organization or folder. For example, the following gcloud command sends all admin activity logs from your entire organization to a single BigQuery sink:
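A sketch of such a command, assembled in Python so that each piece is explicit. The sink name, project, dataset and organization ID are placeholders, and the flags shown (`--organization`, `--include-children`, `--log-filter`) are the ones I understand `gcloud logging sinks create` to accept for aggregated exports; check them against your installed gcloud version.

```python
import shlex


def aggregated_sink_command(sink_name, destination, organization_id, log_filter):
    """Build the argument list for creating an organization-level sink."""
    return [
        "gcloud", "logging", "sinks", "create", sink_name, destination,
        f"--organization={organization_id}",
        "--include-children",  # also export logs from all child projects
        f"--log-filter={log_filter}",
    ]


# All identifiers below are placeholders, not real resources.
argv = aggregated_sink_command(
    sink_name="my-bq-sink",
    destination="bigquery.googleapis.com/projects/my-project/datasets/my_dataset",
    organization_id="1234567890",
    log_filter='logName:"logs/cloudaudit.googleapis.com%2Factivity"',
)
# Print a shell-quoted version that can be reviewed before running it.
print(shlex.join(argv))
```

The filter uses a substring match on `logName` so that it selects the admin activity log of every project under the organization rather than a single project.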

Be aware that an aggregated export sink can export very large numbers of log entries. When designing your aggregated export sink to export the data you need to store, here are some best practices to keep in mind:

- Ensure that logs are exported for longer term retention

- Ensure that appropriate IAM controls are set against the export sink destination

- Design aggregated exports for your organization to filter and export the data that will be useful for future analysis

- Configure log sinks before you start receiving logs

- Follow the best practices for common logging export scenarios

Managing exclusions

Stackdriver Logging provides exclusion filters to let you completely exclude certain log messages for a specific product or messages that match a certain query. You can also choose to sample certain messages so that only a percentage of the messages appear in Stackdriver Logs Viewer. Excluded log entries do not count against the Stackdriver Logging logs allotment provided to projects.

Excluding noisy entries not only makes the logs easier to review but also helps you minimize any charges for logs over your monthly allotment. It’s also possible to export log entries before they're excluded. For more information, see Exporting Logs.

Best practices:

- Ensure you're using exclusion filters to exclude logging data that will not be useful. For example, you shouldn’t need to collect data access logs in development projects. Storing data access logs is a paid service (see our log allotment and overage charges), so recording superfluous data incurs unnecessary cost.
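To see the effect of such an exclusion before configuring it, you can model it locally. The sketch below is only a local approximation, not how Stackdriver evaluates exclusions: it drops data access audit entries from a set of development projects and keeps everything else. The project IDs and the `is_excluded` helper are hypothetical.

```python
def is_excluded(entry, dev_projects):
    """Local model of an exclusion: data access audit logs in dev projects."""
    # Log names look like "projects/PROJECT_ID/logs/LOG_ID".
    project_id = entry["logName"].split("/")[1]
    is_data_access = entry["logName"].endswith("%2Fdata_access")
    return project_id in dev_projects and is_data_access


# Hypothetical log entries, reduced to the one field this model needs.
entries = [
    {"logName": "projects/dev-sandbox/logs/cloudaudit.googleapis.com%2Fdata_access"},
    {"logName": "projects/dev-sandbox/logs/cloudaudit.googleapis.com%2Factivity"},
    {"logName": "projects/prod-web/logs/cloudaudit.googleapis.com%2Fdata_access"},
]

kept = [e for e in entries if not is_excluded(e, dev_projects={"dev-sandbox"})]
print(f"kept {len(kept)} of {len(entries)} entries")
```

Running a model like this against a sample of exported entries gives a rough idea of how much volume an exclusion would remove.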

Cloud Audit Logs best practices, recapped

Cloud Audit Logs is a powerful tool that can help you manage and troubleshoot your GCP environment, as well as demonstrate compliance. As you start to set up your logging environment, here are some best practices to keep in mind:

- Use a test project to validate the configuration of your data-access audit collection before propagating to developer and production projects

- Be sure you’ve applied appropriate IAM controls to restrict who can access the audit logs

- Determine whether you need to export logs for longer-term retention

- Set appropriate IAM controls against the export sink destination

- Design aggregated exports for your organization to filter and export the data that will be useful for future analysis

- Configure log sinks before you start receiving logs

- Follow the best practices for common logging export scenarios

- Make sure to use exclusion filters to exclude logging data that isn’t useful