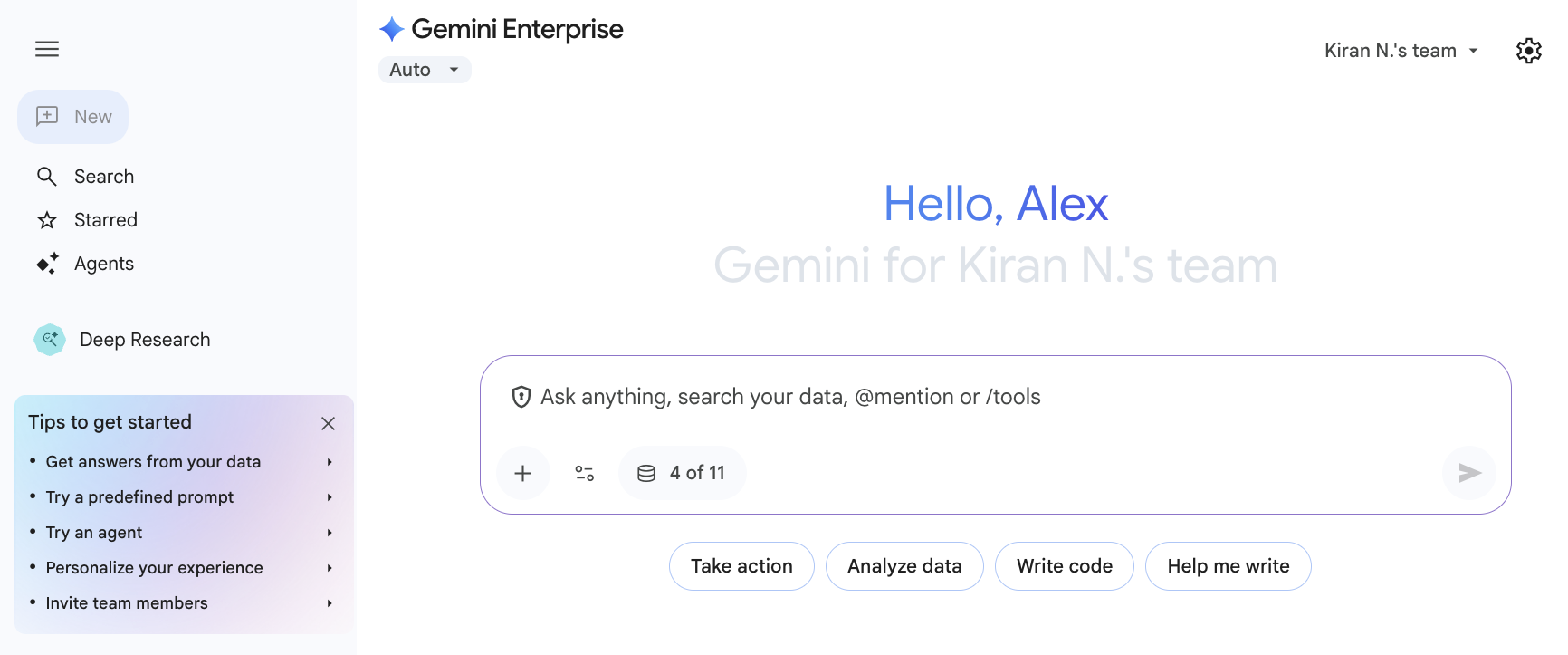

Gemini Enterprise is an intranet search, AI assistant, and agentic platform that brings generative AI and agentic workflows to knowledge workers, drawing on data sources from across your organization. Gemini Enterprise includes prebuilt connectors for the most commonly used third-party applications, such as Confluence, Jira, Microsoft SharePoint, and ServiceNow, giving employees a single multimodal search interface with permissions-aware access to enterprise information. Gemini Enterprise can provide conversational assistance, answer complex questions, and host custom AI agents that apply generative AI contextually.

Use cases for Gemini Enterprise

You can use Gemini Enterprise to answer questions, carry out tasks, and iterate on deliverables based on connected data sources from across your organization. You can use Gemini Enterprise in the following ways:

| Use case | Example prompts |
|---|---|
| Create images, videos, and reports | Create a series of three images for a social media campaign promoting our new line of artisanal coffee beans. |
| | I'm attaching the latest company 10-K report. Analyze it and give me a summary of the key financial highlights and risk factors. |
| | Turn the attached blog post about our company's history into a short documentary-style video, using archival photos and a professional voiceover. |
| Handle administrative tasks | I have a late fee in my expense report; what do I do to resolve it? |
| | Help me book a conference room for my team meeting next Tuesday from 10 to 11 AM. |
| Research, ideate, and plan | Summarize our best-performing campaigns from last year and give me three new ideas for our upcoming holiday promotion. |
| | My customer is Cymbal Insurance and I'm meeting with their Head of Procurement on Thursday. Find their latest earnings report and give me a list of talking points. |
| | We are considering consolidating our shipping with a single logistics provider. Can you research the pros and cons of a "single-provider" strategy, and then identify the top three logistics partners for a company of our size? |
| Learn about people and projects | Find all the documentation related to our Go-To-Market strategy for the new product line. |
| | Who is Hao S. and what projects are they currently working on? |

For more ways to use Gemini Enterprise in your work, see Example use cases.

Why use Gemini Enterprise?

Gemini Enterprise connects content across your organization to generate grounded and personalized answers using Google's premier knowledge discovery tools.

With Gemini Enterprise, you can:

- Process large volumes of enterprise data from multiple formats and platforms.

- Sync and search across data from SaaS systems, such as Salesforce, Jira, and Confluence.

- Enforce access-controlled search results and generative answers at scale.

- Streamline agentic workflows and eliminate the need to switch between multiple AI assistant and agent applications.

- Create and configure AI assistants offering generative answers from enterprise or public data.

- Use out-of-the-box agents, available to you in the Agents gallery.

- Assemble your own no-code agent with Agent Designer.

- Develop, deploy, and troubleshoot code using Gemini Code Assist Standard.

Gemini Enterprise offers an array of unique advantages for enterprises:

- Built-in trust through SSO and user-level access.

- Google intelligence that learns from user behavior and anticipates the next question.

- Broad connectivity across cloud platforms, CRMs, productivity tools, and legacy systems.

- Enterprise-wide customization with user-specific personalization.

- Real-time feedback and adaptation that continuously improves results.

- A customizable retrieval-augmented generation (RAG) agent grounded in enterprise data from a wide variety of supported formats.

- Scalability across geographies and languages.

NotebookLM Enterprise: AI-powered research and knowledge assistant

NotebookLM Enterprise is an AI-powered research and knowledge assistant that helps you summarize information, brainstorm ideas, and write with confidence. You can use NotebookLM Enterprise alongside Gemini Enterprise to create notebooks that contain your notes, sources, and ideas.

Next steps

For more information about Gemini Enterprise, see the following pages.

To learn about Gemini Enterprise and related products, see:

To learn about editions and specific features, see Compare editions of Gemini Enterprise.

To get started with using Gemini Enterprise, see Get started with Gemini Enterprise.