Objetivos

Usa Dataproc Hub para crear un entorno de cuaderno de JupyterLab de un solo usuario que se ejecute en un clúster de Dataproc.

Crea un cuaderno y ejecuta una tarea de Spark en el clúster de Dataproc.

Elimina el clúster y conserva el cuaderno en Cloud Storage.

Antes de empezar

- El administrador debe concederte el permiso

notebooks.instances.use(consulta Asignar roles de Gestión de Identidades y Accesos (IAM)).

Crear un clúster de JupyterLab de Dataproc desde Dataproc Hub

Selecciona la pestaña Notebooks gestionados por el usuario en la página Dataproc → Workbench de la consola de Google Cloud Google Cloud.

En la fila que muestra la instancia de Dataproc Hub creada por el administrador, haz clic en Abrir JupyterLab.

- Si no tienes acceso a la consola de Google Cloud , introduce en tu navegador web la URL de la instancia de Dataproc Hub que te haya compartido un administrador.

En la página JupyterHub → Opciones de Dataproc, selecciona una configuración de clúster y una zona. Si está habilitada, especifique las personalizaciones que quiera y haga clic en Crear.

Una vez creado el clúster de Dataproc, se te redirigirá a la interfaz de JupyterLab que se ejecuta en el clúster.

Crear un cuaderno y ejecutar una tarea de Spark

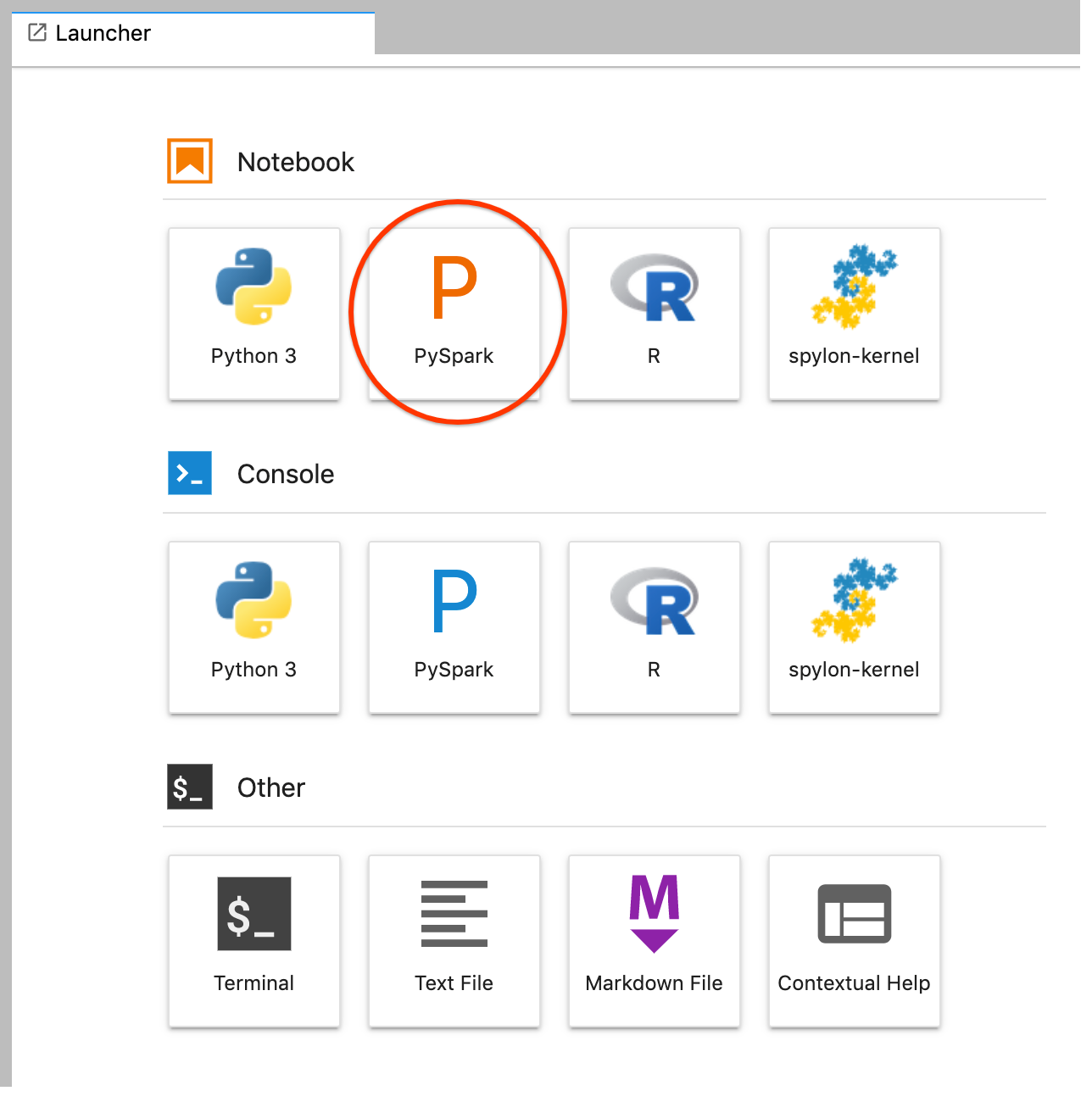

En el panel de la izquierda de la interfaz de JupyterLab, haz clic en

GCS(Cloud Storage).Crea un cuaderno de PySpark desde el menú de aplicaciones de JupyterLab.

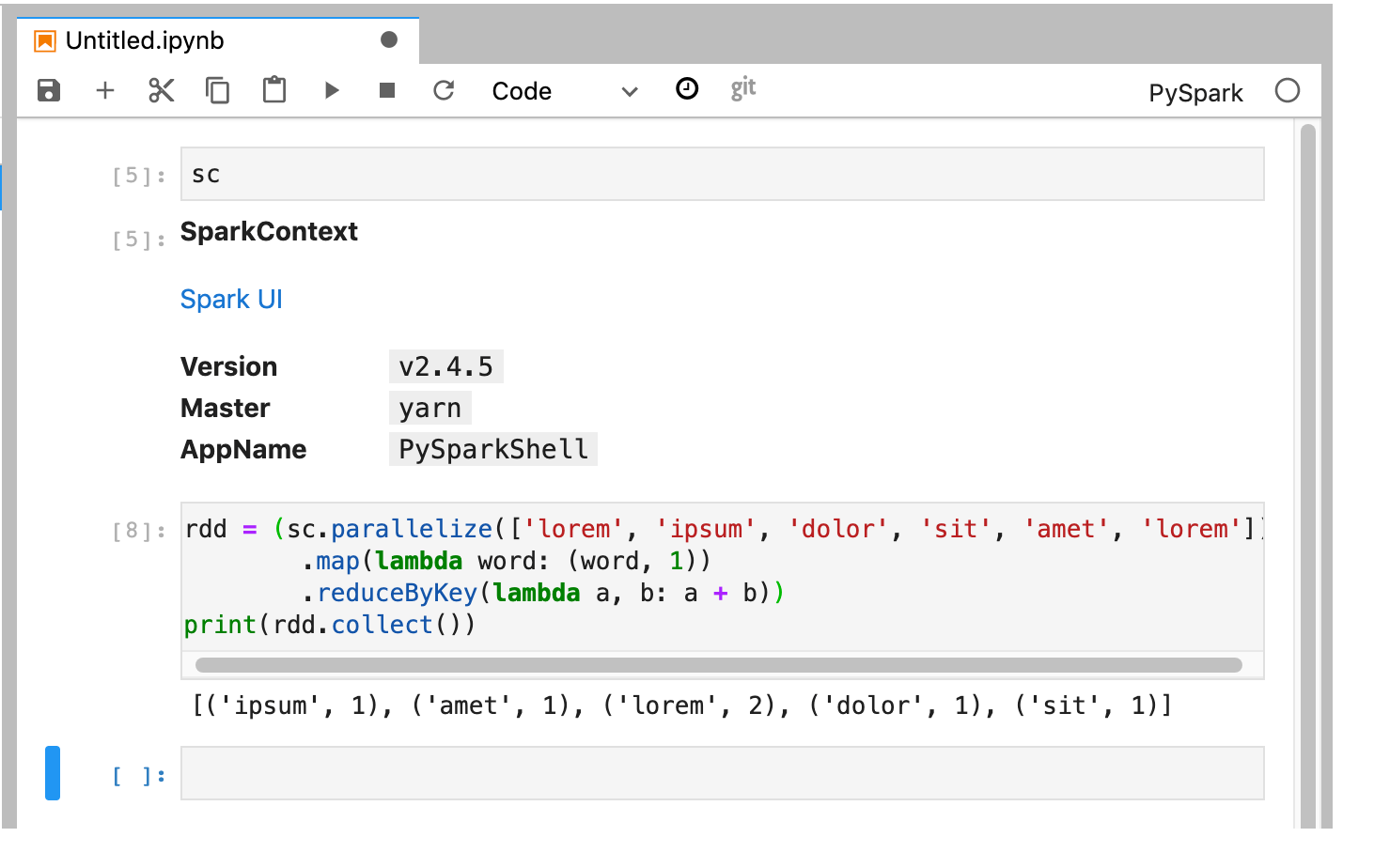

El kernel de PySpark inicializa un SparkContext (con la variable

sc). Puedes examinar el SparkContext y ejecutar una tarea de Spark desde el cuaderno.rdd = (sc.parallelize(['lorem', 'ipsum', 'dolor', 'sit', 'amet', 'lorem']) .map(lambda word: (word, 1)) .reduceByKey(lambda a, b: a + b)) print(rdd.collect())

Ponle un nombre al cuaderno y guárdalo. El cuaderno se guarda y permanece en Cloud Storage después de eliminar el clúster de Dataproc.

Apagar el clúster de Dataproc

En la interfaz de JupyterLab, selecciona File (Archivo) → Hub Control Panel (Panel de control de Hub) para abrir la página Jupyterhub.

Haz clic en Detener mi clúster para cerrar (eliminar) el servidor de JupyterLab, lo que elimina el clúster de Dataproc.

Siguientes pasos

- Consulta Spark y los cuadernos de Jupyter en Dataproc en GitHub.