Announcing the launch of event-driven transfer for Cloud Storage

Ajitesh Abhishek

Product Manager, Google Cloud

Anup Talwalkar

Software Engineer, Google Cloud

Storage Transfer Service now supports serverless, real-time replication.

Customers have told us they need to move data in real time with all the benefits Storage Transfer Service (STS) offers: scheduling, retries, checksumming, detailed logs, and more.

Why? To smoothly replicate data between Cloud Storage buckets. Customers need an asynchronous, scalable service to aggregate data in a single bucket for processing and analysis, keep buckets across projects, regions, and continents in sync, share data with partners and vendors, keep dev and prod copies in sync, maintain a secondary copy in a cheaper storage class, and so on.

Another use case for this capability is cross-cloud analytics. Customers need automated, real-time replication of data from AWS S3 to Cloud Storage to take advantage of Google Cloud’s analytics and machine learning capabilities.

Introducing event-driven transfer

STS now offers Preview support for event-driven transfer: a serverless, real-time replication capability that copies data from AWS S3 to Cloud Storage and between Cloud Storage buckets.

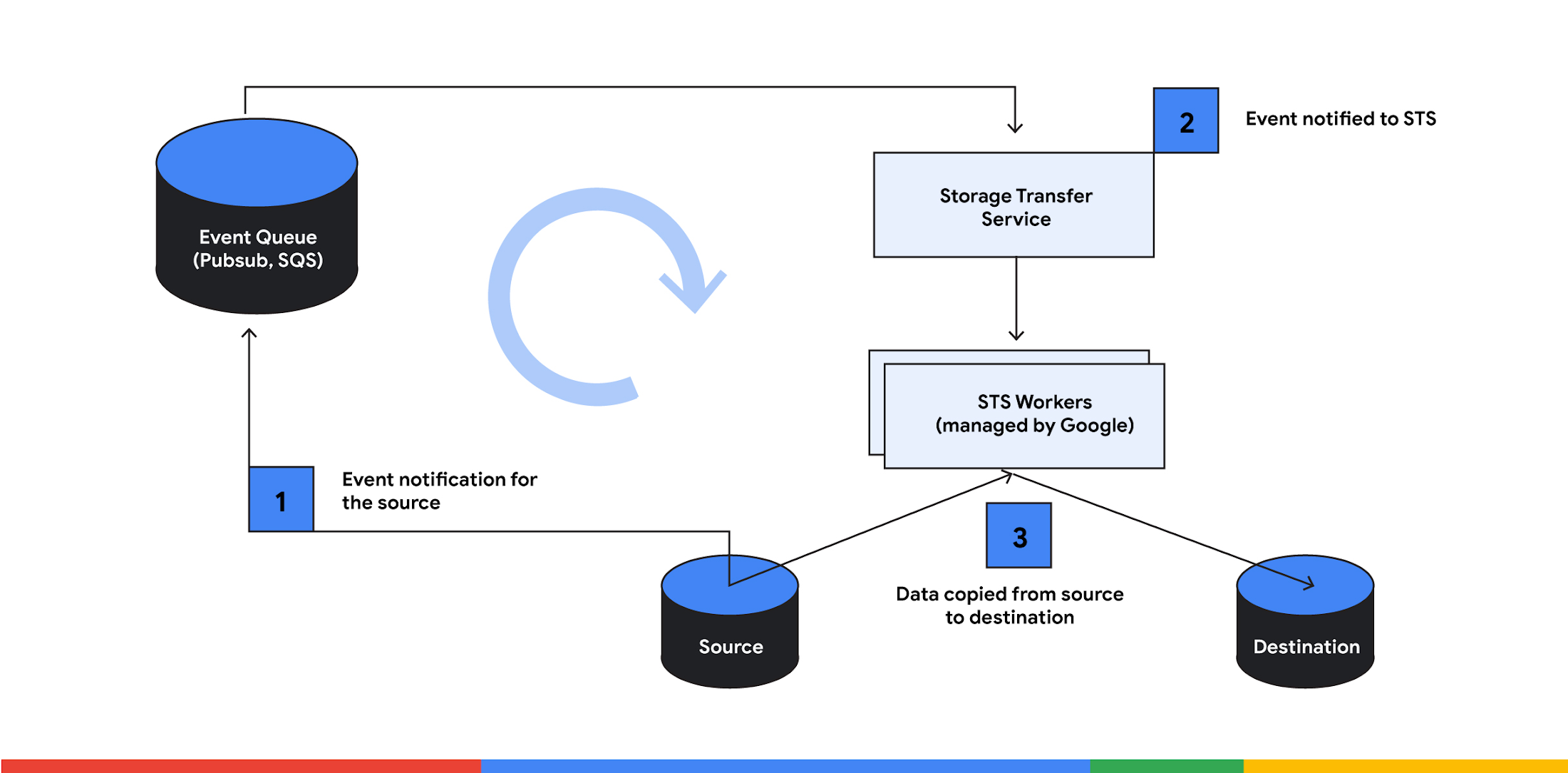

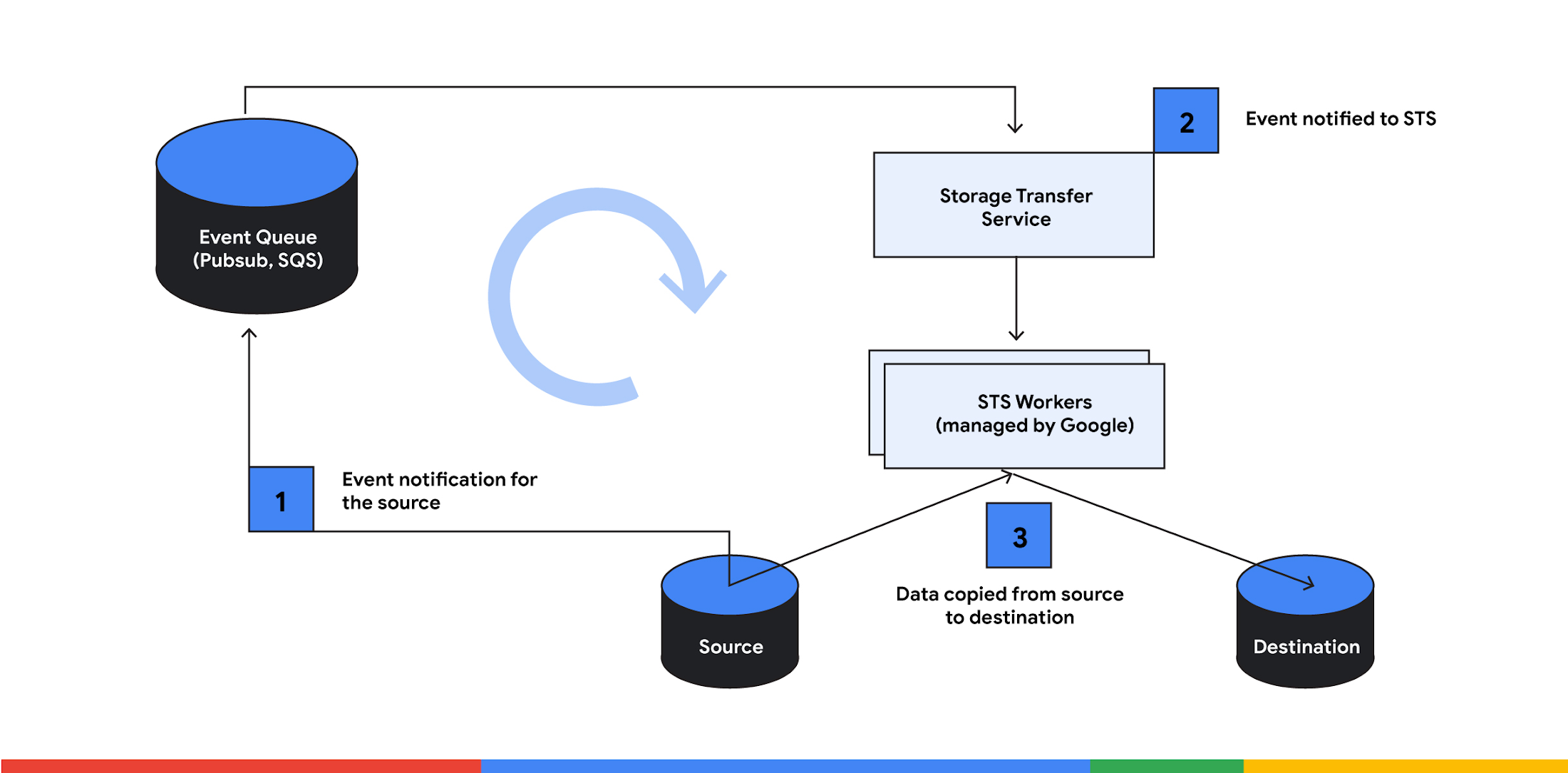

To perform event-driven transfers, STS relies on Pub/Sub (for Cloud Storage sources) and Amazon SQS (for AWS S3 sources). Customers set up the event notification and grant STS access to the resulting subscription or queue. Using a new field in the Transfer Job, “Event Stream”, customers can specify the event stream name and control when STS starts and stops listening for events from this stream.

Once the Transfer Job is created, STS starts consuming the object change notifications from the source. Any object change or upload will now trigger a change notification, which the service acts on in real-time to copy the object to the destination.

This is great news for customers who use STS batch transfer in scenarios where data freshness carries significant business value, such as low-RPO backup, event-driven analytics, and live migration. A batch transfer starts with work discovery: STS lists the source and destination, which can be time consuming for buckets with billions of objects, and batch runs can be scheduled no more often than once per hour. As a result, for a large bucket, new objects created at the source may not appear at the destination for hours. In contrast, event-driven transfer can copy data to the destination within minutes of an object being created or changed at the source.

With event-driven transfer, you can set up automatic, ongoing replication of data between buckets with just a few clicks. It comes with a range of supporting features including filtering based on prefixes, handling retries, checksumming, detailed transfer logs via Cloud Logging and progress tracking via Cloud Monitoring. In addition to being easy to set up and use, it’s highly scalable and flexible, allowing replication of data between buckets in the same project, across different projects, or even across different Cloud Storage regions and continents.

Configuring event-driven transfer

You can create an event-driven transfer between Cloud Storage buckets in three steps:

Create a Pub/Sub subscription that listens to changes on the Cloud Storage bucket

Assign permissions for STS to copy data between buckets and listen to this Pub/Sub subscription

Create a Transfer Job with Event Stream configuration

To simplify this further, here is a walkthrough of how to set up event-driven transfer with the gcloud command-line tool.

Create event notification

To start with, create a Pub/Sub notification for the source Cloud Storage bucket and a pull subscription for the topic:
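A minimal sketch of this step, assuming the gcloud CLI is installed and authenticated. The `run` helper and its dry-run default are illustrative additions, and the 300-second acknowledgement deadline is an assumed value rather than something this post specifies.

```shell
# Placeholders; replace with your own values.
SOURCE_BUCKET_NAME="SOURCE_BUCKET_NAME"
TOPIC_NAME="TOPIC_NAME"
SUBSCRIPTION_ID="SUBSCRIPTION_ID"

# Illustrative helper: prints the command unless DRY_RUN=0 is set.
run() {
  if [ "${DRY_RUN:-1}" = "1" ]; then
    echo "$*"
  else
    "$@"
  fi
}

create_event_notification() {
  # Send object change notifications from the source bucket to a Pub/Sub topic.
  run gcloud storage buckets notifications create "gs://${SOURCE_BUCKET_NAME}" \
    --topic="${TOPIC_NAME}" --payload-format=json
  # Create a pull subscription on that topic for STS to read from.
  # The ack deadline here is an assumption of this sketch.
  run gcloud pubsub subscriptions create "${SUBSCRIPTION_ID}" \
    --topic="${TOPIC_NAME}" --ack-deadline=300
}

create_event_notification
```

By default the script only prints the two commands so you can review them; run it with `DRY_RUN=0` to execute them.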

Replace SOURCE_BUCKET_NAME with the name of your source bucket, TOPIC_NAME with the name of the topic you want to create, and SUBSCRIPTION_ID with the name of the subscription you want to create.

STS will use this subscription to read messages about changes to objects in your source bucket.

Assign permissions

STS uses a Google-managed service account to perform transfers between Cloud Storage buckets. For a new project, this service account can be provisioned by making a googleServiceAccounts.get API call. Make sure you assign it the following roles or equivalent permissions:

Read from the source Cloud Storage bucket:

Roles: Storage Legacy Bucket Reader (roles/storage.legacyBucketReader) and Storage Object Viewer (roles/storage.objectViewer)

Permissions: storage.buckets.get and storage.objects.get

Write to the destination Cloud Storage bucket:

Role: Storage Legacy Bucket Writer (roles/storage.legacyBucketWriter)

Permissions: storage.objects.create

Subscribe to the subscription created in the previous step:

Role: roles/pubsub.subscriber

Permissions: pubsub.subscriptions.consume

For a test setup, you can assign broader roles from the command line to get started quickly. For production workloads, use the specific permissions listed above.
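A sketch of what a test setup might look like, assuming the gcloud CLI is installed and authenticated. The choice of `roles/storage.admin` as the broad test role, the `run` helper, and the `STS_SERVICE_ACCOUNT` placeholder are assumptions of this sketch; substitute the service account email returned by googleServiceAccounts.get for your project.

```shell
# Placeholders; replace with your own values.
SOURCE_BUCKET_NAME="SOURCE_BUCKET_NAME"
DESTINATION_BUCKET_NAME="DESTINATION_BUCKET_NAME"
SUBSCRIPTION_ID="SUBSCRIPTION_ID"
# Email of the Google-managed STS service account (placeholder).
STS_SERVICE_ACCOUNT="STS_SERVICE_ACCOUNT_EMAIL"

# Illustrative helper: prints the command unless DRY_RUN=0 is set.
run() {
  if [ "${DRY_RUN:-1}" = "1" ]; then
    echo "$*"
  else
    "$@"
  fi
}

assign_test_permissions() {
  member="serviceAccount:${STS_SERVICE_ACCOUNT}"
  # Broad role for a quick test only (an assumption of this sketch).
  # In production, grant the narrower roles listed above instead.
  run gcloud storage buckets add-iam-policy-binding "gs://${SOURCE_BUCKET_NAME}" \
    --member="${member}" --role=roles/storage.admin
  run gcloud storage buckets add-iam-policy-binding "gs://${DESTINATION_BUCKET_NAME}" \
    --member="${member}" --role=roles/storage.admin
  # Allow STS to consume messages from the subscription.
  run gcloud pubsub subscriptions add-iam-policy-binding "${SUBSCRIPTION_ID}" \
    --member="${member}" --role=roles/pubsub.subscriber
}

assign_test_permissions
```

As written, the script prints the three commands for review; set `DRY_RUN=0` to apply the bindings.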

Create Transfer Job

As a last step, create an event-driven Transfer Job with an EventStream configuration. The Transfer Job coordinates data movement between source and destination based on the event notifications generated at the source.
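A minimal sketch of the job creation, assuming the gcloud CLI is installed and that `--event-stream-name` is the flag that carries the Event Stream name. The `run` helper and its dry-run default are illustrative additions.

```shell
# Placeholders; replace with your own values.
SOURCE_BUCKET_NAME="SOURCE_BUCKET_NAME"
DESTINATION_BUCKET_NAME="DESTINATION_BUCKET_NAME"
# Full subscription resource name.
SUBSCRIPTION_ID="projects/PROJECT_ID/subscriptions/SUBSCRIPTION_NAME"

# Illustrative helper: prints the command unless DRY_RUN=0 is set.
run() {
  if [ "${DRY_RUN:-1}" = "1" ]; then
    echo "$*"
  else
    "$@"
  fi
}

create_event_driven_job() {
  # The event stream name tells STS which subscription to listen on.
  run gcloud transfer jobs create \
    "gs://${SOURCE_BUCKET_NAME}" "gs://${DESTINATION_BUCKET_NAME}" \
    --event-stream-name="${SUBSCRIPTION_ID}"
}

create_event_driven_job
```

The script prints the command by default; set `DRY_RUN=0` to create the job.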

Replace SOURCE_BUCKET_NAME with the name of your source bucket, DESTINATION_BUCKET_NAME with the name of your destination bucket, and SUBSCRIPTION_ID with the subscription ID, which is of the form projects/<project_id>/subscriptions/<subscription_name>.

This creates a Transfer Job that waits for notifications on the Pub/Sub subscription and replicates data within minutes of being notified of a change at the source Cloud Storage bucket.

You can follow similar steps to create an event-driven transfer from AWS S3 to a Cloud Storage bucket.
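As a rough sketch of the AWS S3 variant, the job might be created along these lines. It assumes the S3 bucket already publishes event notifications to an SQS queue, that the event stream name for SQS is the queue's ARN, and that AWS credentials are supplied through a local file via `--source-creds-file`. All names below are hypothetical placeholders, so check the flags against the current gcloud reference before relying on them.

```shell
# Hypothetical placeholders; replace with your own values.
S3_BUCKET_NAME="S3_BUCKET_NAME"
DESTINATION_BUCKET_NAME="DESTINATION_BUCKET_NAME"
SQS_QUEUE_ARN="arn:aws:sqs:REGION:ACCOUNT_ID:QUEUE_NAME"
AWS_CREDS_FILE="path/to/aws-creds.json"

# Illustrative helper: prints the command unless DRY_RUN=0 is set.
run() {
  if [ "${DRY_RUN:-1}" = "1" ]; then
    echo "$*"
  else
    "$@"
  fi
}

create_s3_event_driven_job() {
  # Assumption: the SQS queue ARN is passed as the event stream name.
  run gcloud transfer jobs create \
    "s3://${S3_BUCKET_NAME}" "gs://${DESTINATION_BUCKET_NAME}" \
    --source-creds-file="${AWS_CREDS_FILE}" \
    --event-stream-name="${SQS_QUEUE_ARN}"
}

create_s3_event_driven_job
```

The script only prints the command unless `DRY_RUN=0` is set.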

You can do it now

Event-driven transfer is an extremely powerful solution that can help you automate data transfer and processing tasks, saving time and resources. We look forward to seeing all of the creative and innovative ways that you will put it to use.

STS event-driven transfer is available in all Google Cloud regions. The region in which a transfer executes is based on the region of the source Cloud Storage bucket. To see where transfer jobs can be created, check the location list. There is no additional charge for using event-driven transfer in Storage Transfer Service, and you can get all the details on the Storage Transfer Service pricing page.