Designing Multi-regional Internal Load Balancing with Google Cloud iLB + Cloud DNS

Ammett Williams

Developer Relations Engineer

Gaurav Madan

Customer Engineer, Networking

Google Cloud has several load balancer types. For internal load balancing within Google Cloud, customers have three options:

Internal HTTP(S) load balancing

Internal TCP proxy load balancing

Internal TCP/UDP load balancing

Google Cloud internal HTTP(S) load balancer is a proxy-based, regional Layer 7 load balancer that enables you to run and scale your services behind an internal IP address.

Google Cloud internal regional TCP proxy load balancer is a proxy-based regional load balancer to host your TCP services.

Google Cloud internal TCP/UDP load balancer is a pass-through, regional Layer 4 load balancer for TCP or UDP traffic. It comes in handy when you need to forward the original packets unproxied.

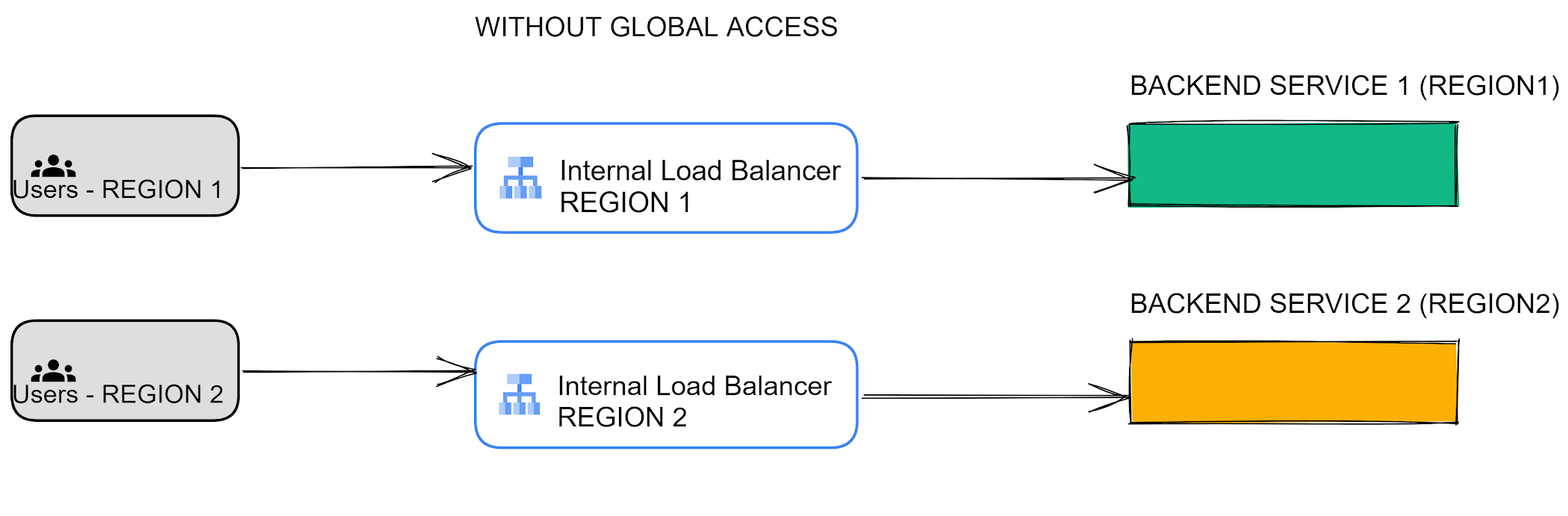

A common theme across all three kinds of internal load balancers is that they are ‘regional’ in nature. Regional load balancing is used when your backends are in one region or when you have jurisdictional compliance requirements for traffic to stay in a particular region. The following figures depict the ‘regional’ behavior of an internal load balancer.

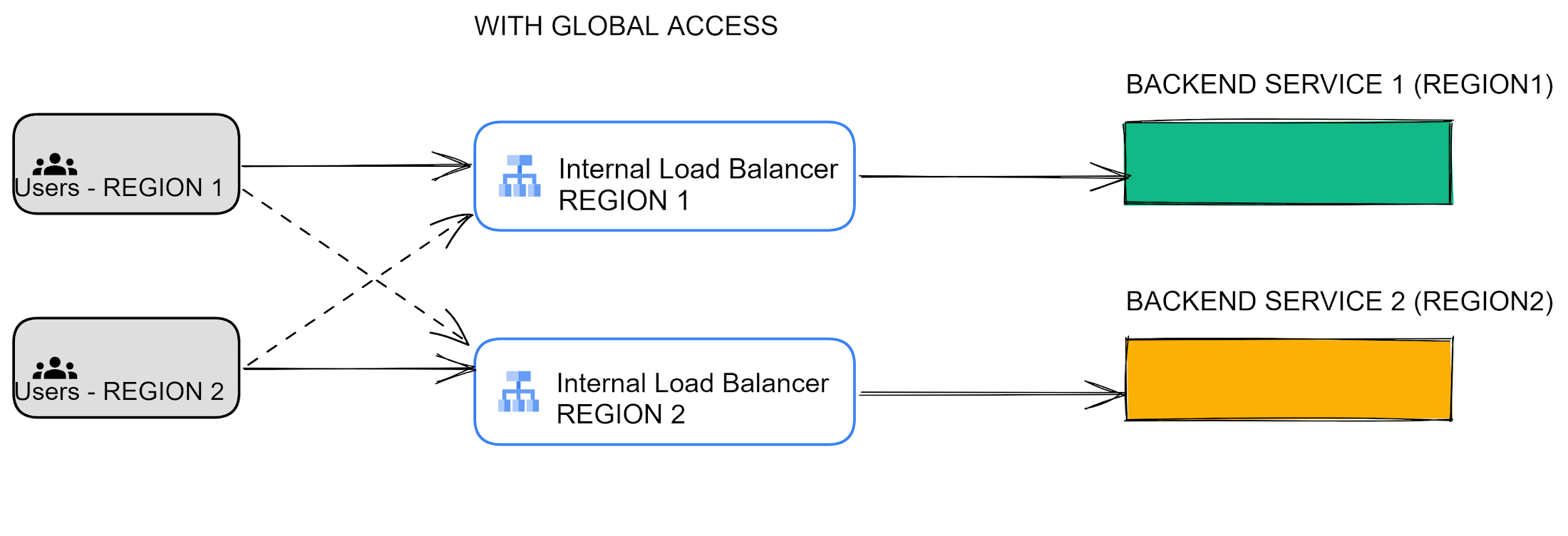

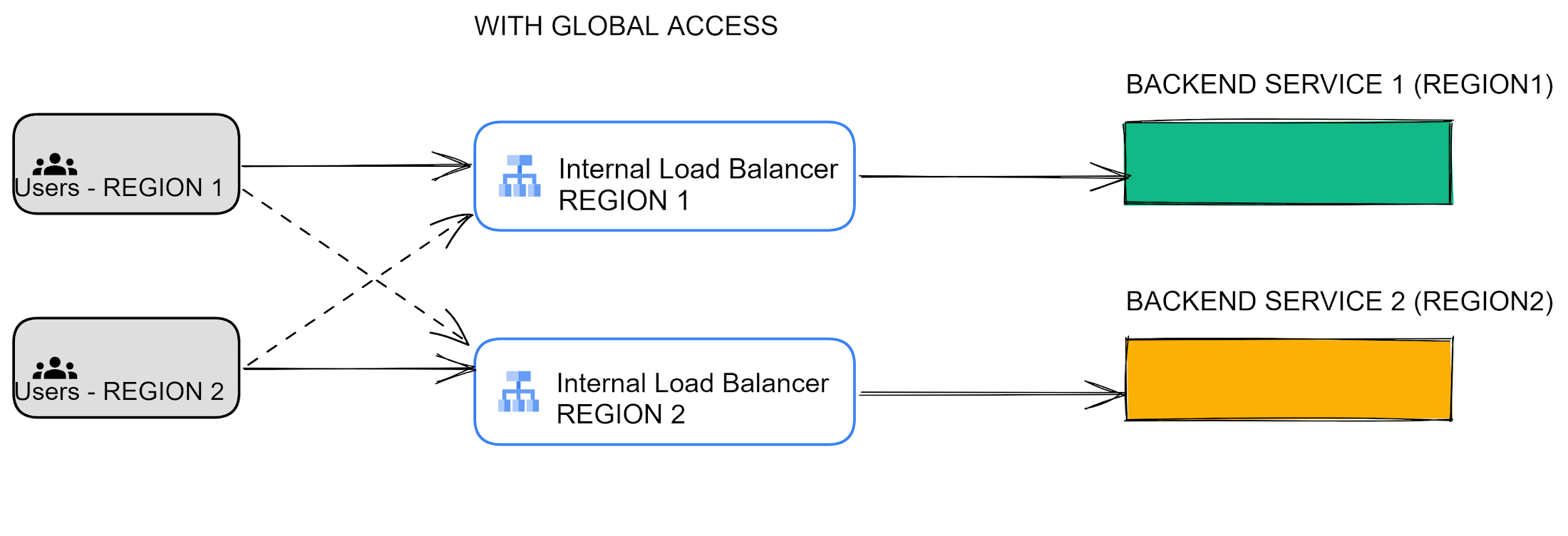

Another aspect of internal load balancing is determining how clients can access the internal load balancer. With the global access feature of internal load balancers, clients from any region can send traffic, via the load balancer, to services deployed in a specific region. Your services can be deployed on Compute Engine, Google Kubernetes Engine (GKE), Cloud Run, on-premises, or in other public clouds, and can communicate with clients across the globe using this feature with the internal HTTP(S) or TCP proxy load balancers.

The following pictures depict the difference between the ‘global access’ feature turned off and turned on.

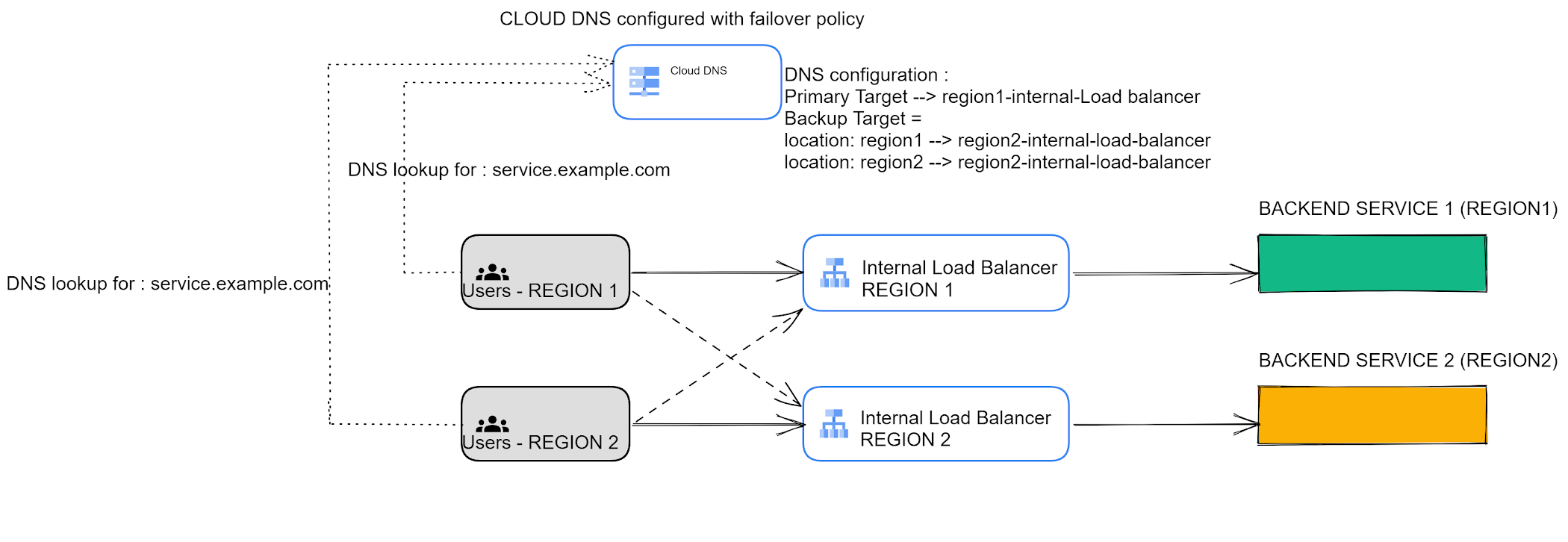

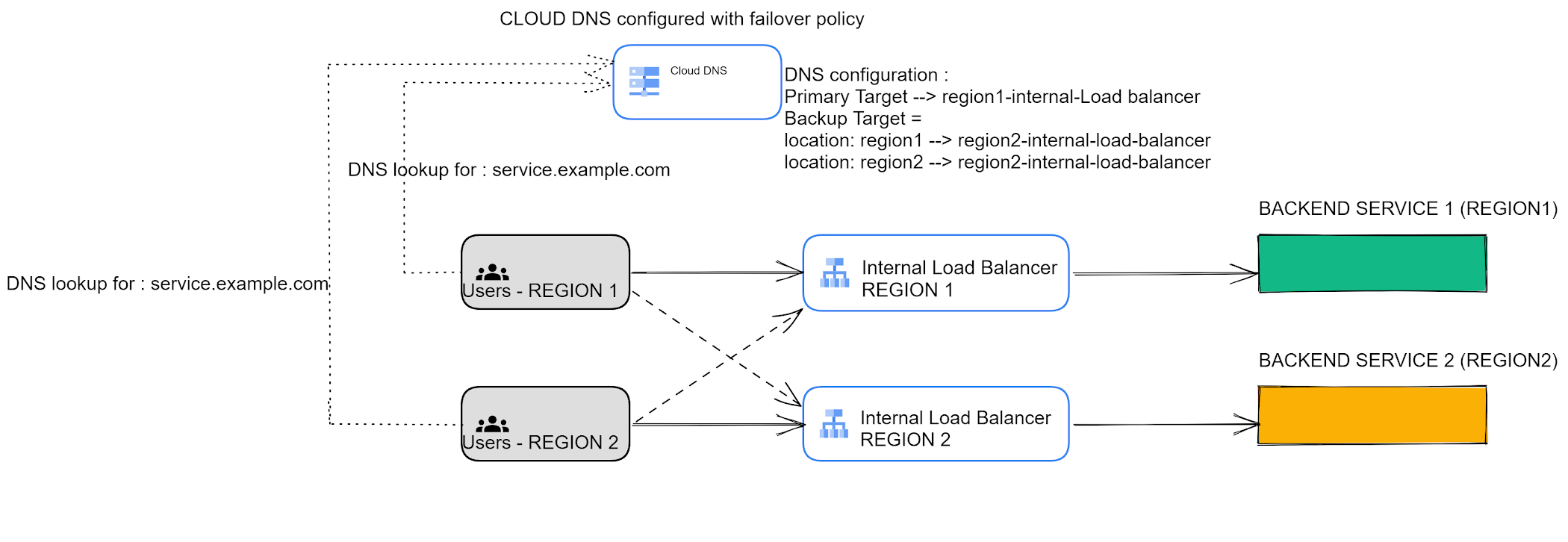
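The effect of the global access setting can be summarized as a simple reachability rule. The sketch below is only a mental model, not an API: the `InternalLoadBalancer` class, the `can_reach` function, and the region names are hypothetical, and it assumes the client already has network connectivity to the load balancer's VPC network.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class InternalLoadBalancer:
    """Hypothetical model of a regional internal load balancer frontend."""
    name: str
    region: str
    global_access: bool = False


def can_reach(client_region: str, lb: InternalLoadBalancer) -> bool:
    """Return True if a client in client_region can send traffic to lb.

    Assumption: the client is already connected to the load balancer's
    VPC network; only the regional restriction is modelled here.
    """
    # Without global access, only clients in the load balancer's own
    # region can reach it. With global access, any region can.
    return lb.global_access or client_region == lb.region


if __name__ == "__main__":
    regional_only = InternalLoadBalancer("ilb-1", "us-west1")
    globally_accessible = InternalLoadBalancer("ilb-1", "us-west1", global_access=True)

    for lb in (regional_only, globally_accessible):
        for client_region in ("us-west1", "europe-west1"):
            print(lb.global_access, client_region, can_reach(client_region, lb))
```

Note that global access changes only who may reach the frontend. The load balancer and its backends remain regional.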

With global access in use, you need a mechanism that directs client traffic to the appropriate internal load balancer based on the health of the regional backend services. For example, an application owner may want clients in region 1 to switch automatically to internal load balancer 2 if the backend service in region 1 is unavailable or unhealthy. This is where Cloud DNS comes into play.

Cloud DNS supports health checks for internal TCP/UDP load balancers that have global access enabled. For an internal TCP/UDP load balancer, Cloud DNS receives health signals directly from the individual backend instances and applies a thresholding algorithm to them to decide whether the load balancer as a whole is treated as healthy.
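To make the idea of thresholding concrete, the following sketch aggregates per-backend health signals into a single verdict for the load balancer. It is an illustration rather than Cloud DNS's actual implementation: the function name is hypothetical, the 20% default threshold is an assumption to check against the current Cloud DNS documentation, and treating a load balancer with no backends as unhealthy is a choice made for this example.

```python
from typing import Sequence


def load_balancer_is_healthy(
    backend_health: Sequence[bool],
    threshold: float = 0.2,  # assumed default; verify against Cloud DNS docs
) -> bool:
    """Aggregate per-backend health into one verdict for the load balancer.

    backend_health holds one boolean per backend instance (True = healthy).
    The load balancer counts as healthy when the healthy fraction is at
    least `threshold`.
    """
    if not backend_health:
        # Assumption for this sketch: no backends means nothing can serve.
        return False
    healthy_fraction = sum(backend_health) / len(backend_health)
    return healthy_fraction >= threshold


if __name__ == "__main__":
    # One healthy backend out of five is exactly 20%, so this passes.
    print(load_balancer_is_healthy([True, False, False, False, False]))
    # No healthy backends: the load balancer is reported unhealthy.
    print(load_balancer_is_healthy([False, False, False]))
```

A fractional threshold means a single failed instance does not trigger a DNS-level failover. Only a broad loss of backends in the region does.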

The failover routing policy in Cloud DNS lets customers set up active-backup configurations. With a failover routing policy, you can configure primary and backup IP addresses for a resource record. In normal operation, Cloud DNS responds to queries with the IP addresses provisioned in the primary set. When all IP addresses in the primary set fail (their health status changes to unhealthy), Cloud DNS starts serving the IP addresses in the backup set.
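The failover behavior described above can be sketched as a small selection function. This is a simplified model under stated assumptions, not Cloud DNS code: `Target` and `resolve` are hypothetical names, the IP addresses are placeholders, and returning only the healthy primary addresses while any of them is healthy is an assumption of this sketch.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Target:
    """Hypothetical record target: a load balancer IP and its health state."""
    ip: str
    healthy: bool


def resolve(primary: List[Target], backup: List[Target]) -> List[str]:
    """Return the IP addresses a failover policy would answer with."""
    # Normal operation: answer from the primary set while at least one
    # primary address is healthy (assumption: unhealthy ones are omitted).
    healthy_primary = [t.ip for t in primary if t.healthy]
    if healthy_primary:
        return healthy_primary
    # Every primary address is unhealthy: serve the backup set instead.
    return [t.ip for t in backup]


if __name__ == "__main__":
    primary = [Target("10.1.0.10", healthy=True)]
    backup = [Target("10.2.0.10", healthy=True)]
    print(resolve(primary, backup))  # primary is healthy

    primary[0].healthy = False
    print(resolve(primary, backup))  # primary failed, backup is served
```

Because the switch happens in DNS answers, clients move to the backup load balancer only after their cached records expire, so the record's TTL bounds how quickly failover takes effect.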

The following example shows the options presented to a customer who configures a record set with a routing policy in Cloud DNS. This is where the customer can configure the primary targets as well as backup targets based on the client's incoming region.

Selecting the Failover option in a Cloud DNS routing policy
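The same configuration can also be expressed as a record set resource instead of through the console. The sketch below only builds and prints a request body; it does not call any API. The field names (`routingPolicy`, `primaryBackup`, `primaryTargets`, `backupGeoTargets`, `internalLoadBalancers`, and the `regionalL4ilb` type) follow the Cloud DNS v1 `ResourceRecordSet` resource as understood here and should be verified against the API reference. The helper names, DNS name, project, network, regions, and IP addresses are all hypothetical.

```python
import json
from typing import Dict, List


def ilb_target(ip: str, region: str, project: str, network_url: str,
               port: str = "80", protocol: str = "tcp") -> Dict[str, str]:
    """Describe one internal TCP/UDP load balancer as a health-checked target."""
    return {
        "loadBalancerType": "regionalL4ilb",  # assumed enum value, verify
        "ipAddress": ip,
        "port": port,
        "ipProtocol": protocol,
        "networkUrl": network_url,
        "project": project,
        "region": region,
    }


def failover_record_set(name: str, primary: List[dict],
                        backups_by_region: Dict[str, List[dict]],
                        ttl: int = 30) -> dict:
    """Build an A record with a primary set and per-region backup sets."""
    return {
        "name": name,
        "type": "A",
        "ttl": ttl,
        "routingPolicy": {
            "primaryBackup": {
                "primaryTargets": {"internalLoadBalancers": primary},
                "backupGeoTargets": {
                    "items": [
                        {
                            "location": region,
                            "healthCheckedTargets": {
                                "internalLoadBalancers": targets
                            },
                        }
                        for region, targets in backups_by_region.items()
                    ]
                },
            }
        },
    }


if __name__ == "__main__":
    project = "example-project"  # hypothetical
    network = ("https://www.googleapis.com/compute/v1/projects/"
               "example-project/global/networks/example-vpc")  # hypothetical
    primary = [ilb_target("10.1.0.10", "us-west1", project, network)]
    backup = {"us-east1": [ilb_target("10.2.0.10", "us-east1", project, network)]}
    body = failover_record_set("app.example.internal.", primary, backup)
    print(json.dumps(body, indent=2))
```

A short TTL is used in the example so that clients pick up a failover quickly. The right value depends on how much extra DNS query volume is acceptable.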

More on load balancing

To learn more about load balancing in Google Cloud you can check out the following:

Hands-on codelab - Multi-region failover using Cloud DNS Routing Policies and Health Checks for Internal TCP/UDP Load Balancer

YouTube - What’s new for load balancing and service networking

Blog - Connect from anywhere: Internal HTTP(S) Load Balancers are now globally accessible

Documentation - DNS health checks

Documentation - DNS failover policies

Documentation - Choose a load balancer

Be sure to check out the economic advantages of Google Cloud Networking and learn more about networking by visiting https://cloud.google.com/products/networking.