How to analyze Fastly real-time streaming logs with BigQuery

Simon Wistow

VP of Product Strategy, Fastly

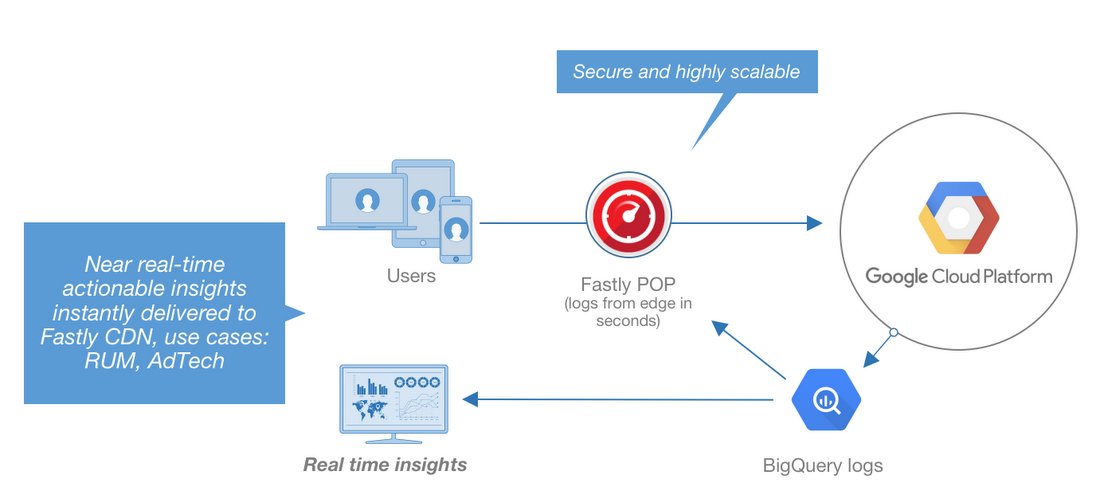

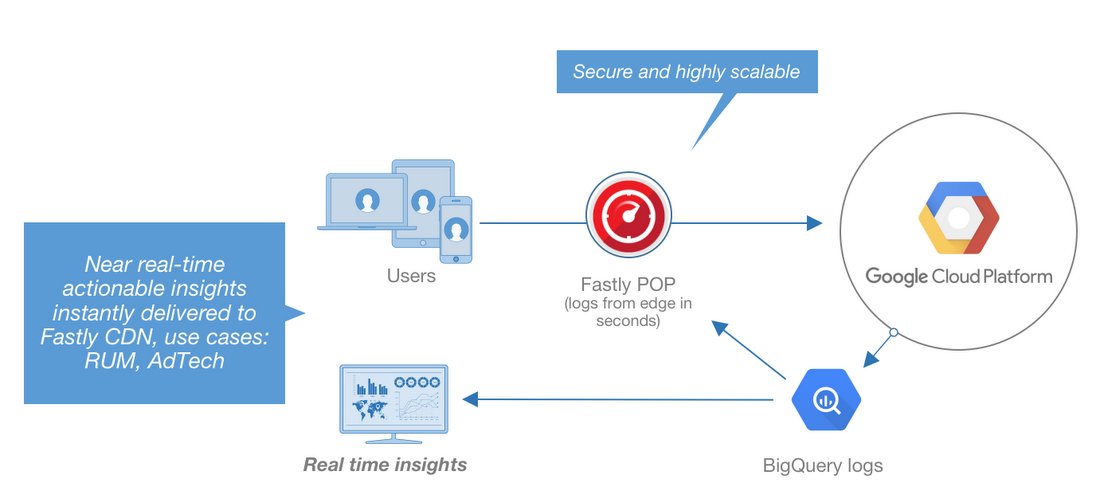

Editor’s note: Today we hear from Fastly, whose edge cloud platform allows web applications to better serve global users with services for content delivery, streaming, security and load-balancing. In addition to improving response times for applications built on Google Cloud Platform (GCP), Fastly now supports streaming its logs to Google Cloud Storage and BigQuery, for deeper analysis. Read on to learn more about the integration and how to set it up in your environment.

Fastly’s collaboration with Google Cloud combines the power of GCP with the speed and flexibility of the Fastly edge cloud platform. Private interconnects with Google at 14 strategic locations across the globe give GCP and Fastly customers dramatically improved response times to Google services and storage for traffic going over these interconnects.

Today, we’ve announced our BigQuery integration; we can now stream real-time logs to Google Cloud Storage and BigQuery, allowing companies to analyze unlimited amounts of edge data. If you’re a Fastly customer, you can get actionable insights into website page views per month and usage by demographic, geographic location and other dimensions. You can use this data to troubleshoot connectivity problems, pinpoint configuration areas that need performance tuning, identify the causes of service disruptions and improve your end users’ experience. You can even combine Fastly log data with other data sources such as Google Analytics, Google Ads data and/or security and firewall logs using a BigQuery table. You can save Fastly’s real-time logs to Cloud Storage for additional redundancy; in fact, many customers back up logs directly into Cloud Storage from Fastly.

Let’s look at how to set up and start using Cloud Storage and BigQuery to analyze Fastly logs.

Fastly / BigQuery quick setup

Before adding BigQuery as a logging endpoint for Fastly services, you need to register for a Cloud Storage account and create a Cloud Storage bucket. Once you've done that, follow these steps to integrate with Fastly.

- Create a Google Cloud service account

BigQuery uses service accounts for third-party application authentication. To create a new service account, see Google's guide on generating service account credentials. When you create the service account, set the key type to JSON.

- Obtain the private key and client email

Once you’ve created the service account, download the service account JSON file. This file contains the credentials for your BigQuery service account. Open the file and make a note of the private_key and client_email.

- Enable the BigQuery API (if not already enabled)

To send your Fastly logs to BigQuery, you'll need to enable the BigQuery API in the GCP API Manager: https://console.cloud.google.com/apis/library
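If you'd rather not copy the private_key and client_email values out of the service account file by hand, a short script can read them for you. The following is a minimal sketch in Python; the file name, the email address and the placeholder key contents are made up for illustration, and a real key file contains additional fields.

```python
import json
import os
import tempfile


def read_service_account(path):
    """Return (client_email, private_key) from a service account JSON key file."""
    with open(path, encoding="utf-8") as fh:
        key = json.load(fh)
    return key["client_email"], key["private_key"]


# Stand-in key file for demonstration only (hypothetical values).
fake_key = {
    "type": "service_account",
    "client_email": "fastly-logging@example-project.iam.gserviceaccount.com",
    "private_key": "-----BEGIN PRIVATE KEY-----\n(placeholder)\n-----END PRIVATE KEY-----\n",
}

with tempfile.TemporaryDirectory() as tmp:
    demo_path = os.path.join(tmp, "service-account.json")
    with open(demo_path, "w", encoding="utf-8") as fh:
        json.dump(fake_key, fh)
    email, secret = read_service_account(demo_path)

print(email)
```

In practice you would point read_service_account at the file you downloaded and keep the private key out of version control and shell history.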

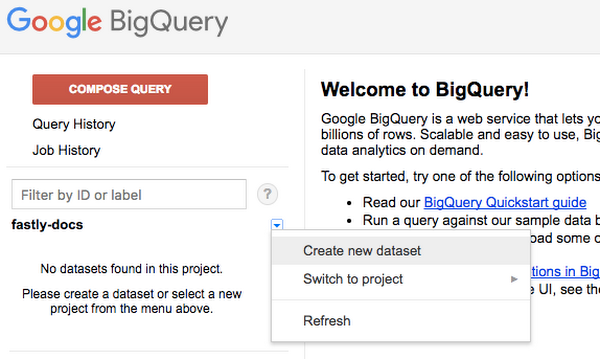

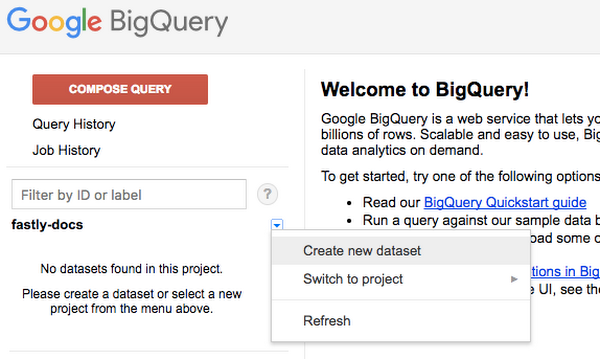

- Create the BigQuery dataset

After you've enabled the BigQuery API, follow these instructions to create a BigQuery dataset:

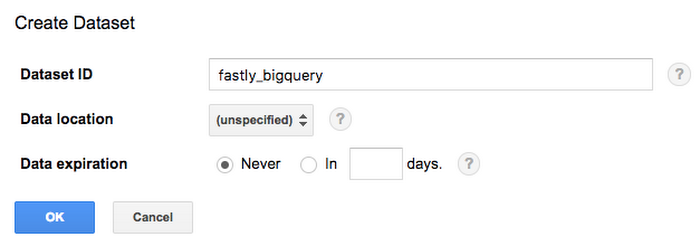

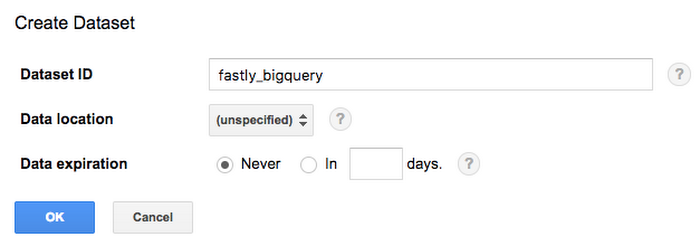

The Create Dataset window appears.

- In the Dataset ID field, type a name for the dataset (e.g., fastly_bigquery), and click the OK button.

After you've created the BigQuery dataset, you'll need to add a BigQuery table. There are three ways of creating the schema for the table:

- Edit the schema using the BigQuery web interface

- Edit the schema using the text field in the BigQuery web interface

- Use an existing table

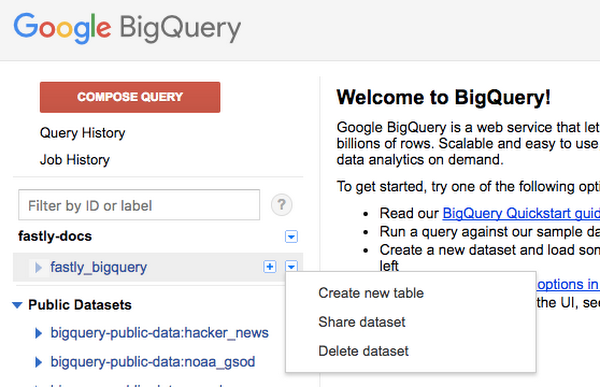

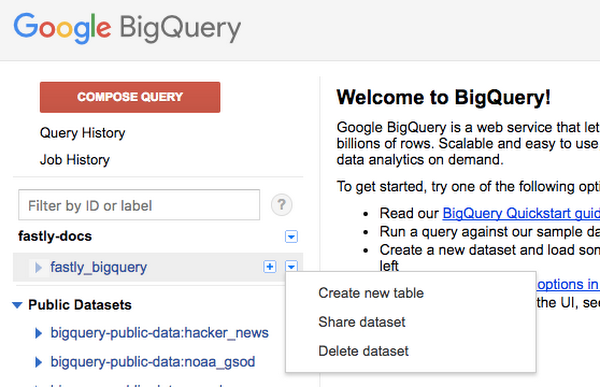

As per the BigQuery documentation, click the arrow next to the dataset name on the sidebar and select Create new table.

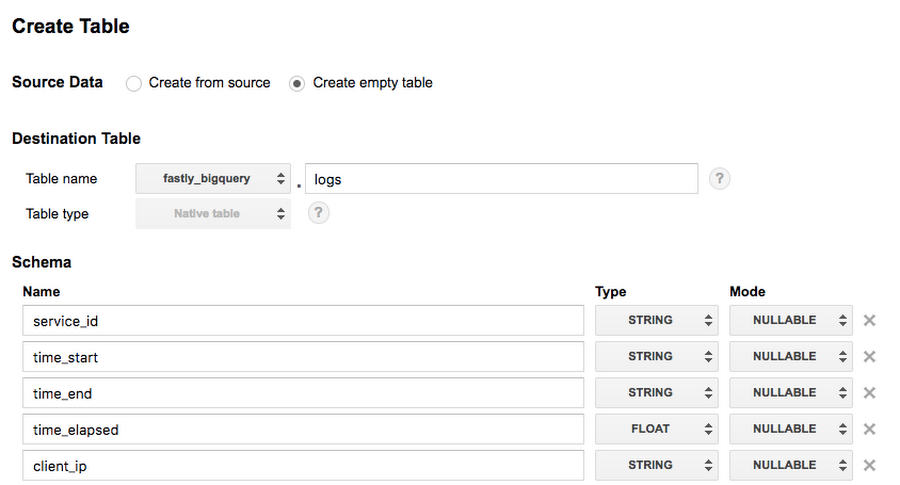

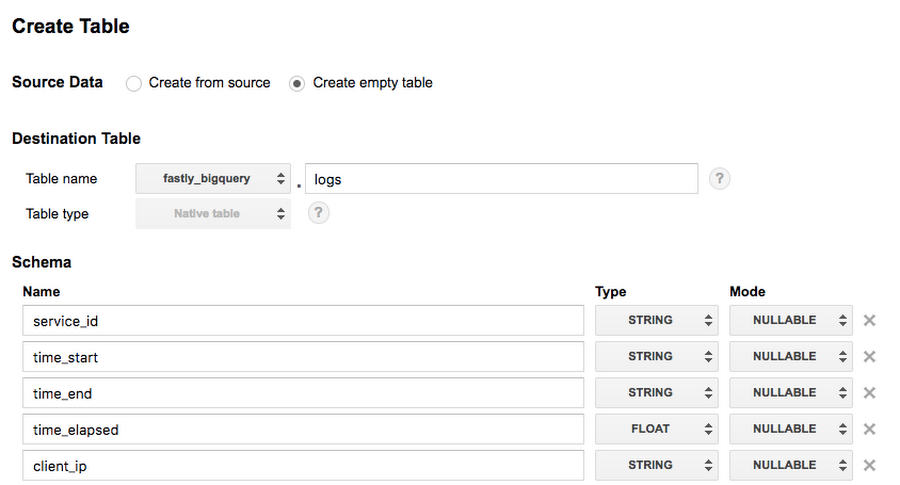

The Create Table page appears:

- In the Source Data section, select Create empty table.

- In the Table name field, type a name for the table (e.g., logs).

- In the Schema section of the BigQuery website, use the interface to add fields and complete the schema.

- Click the Create Table button.

Follow these instructions to add BigQuery as a logging endpoint:

- Review the information in our Setting Up Remote Log Streaming guide.

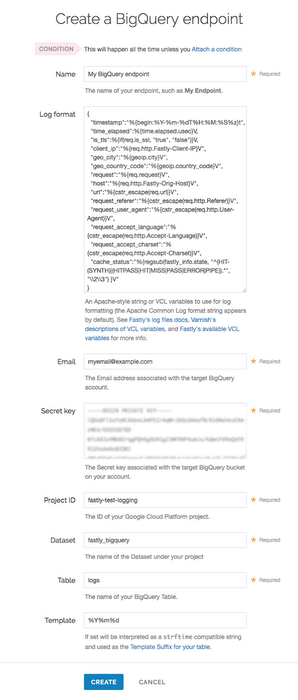

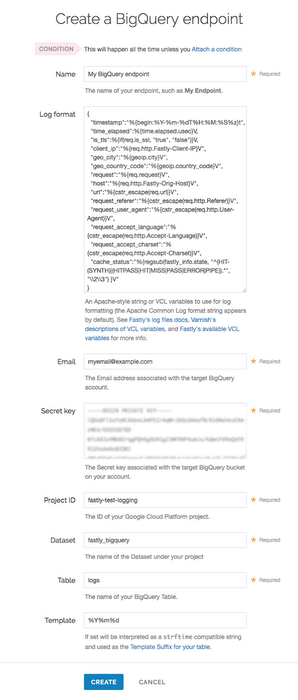

- Click the BigQuery logo. The Create a BigQuery endpoint page appears:

- In the Name field, supply a human-readable endpoint name.

- In the Log format field, enter the data to send to BigQuery. See the example format section for details.

- In the Email field, type the client_email address associated with the BigQuery account.

- In the Secret key field, type the secret key associated with the BigQuery account.

- In the Project ID field, type the ID of your GCP project.

- In the Dataset field, type the name of your BigQuery dataset.

- In the Table field, type the name of your BigQuery table.

- In the Template field, optionally type an strftime-compatible string to use as the template suffix for your table.

Click the Activate button to deploy your configuration changes.
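To get a feel for what a strftime-compatible template suffix produces, you can render one locally. The sketch below is in Python and assumes the rendered suffix is appended to the table name, which is how BigQuery template tables name their targets; the table name, the templates and the timestamp are hypothetical, and exactly when Fastly evaluates the template is not covered here.

```python
from datetime import datetime, timezone


def suffixed_table(table, template, when):
    """Append the strftime-rendered template to the base table name."""
    return table + when.strftime(template)


# Hypothetical log time used only for this illustration.
when = datetime(2017, 8, 15, 9, 30, tzinfo=timezone.utc)

daily = suffixed_table("logs", "_%Y%m%d", when)
monthly = suffixed_table("logs", "_%Y_%m", when)

print(daily)    # logs_20170815
print(monthly)  # logs_2017_08
```

A daily suffix keeps each table small and easy to expire, while a monthly suffix means fewer tables to manage; which granularity fits depends on your log volume and how you plan to query.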

Formatting JSON objects to send to BigQuery

The data you send to BigQuery must be serialized as a JSON object, and every field in the JSON object must map to a field in your table's schema. The JSON can have nested data in it (e.g., the value of a key in your object can be another object). Here's an example format string for sending data to BigQuery:

Example BigQuery schema

The textual BigQuery schema for the example format shown above would look something like this:

When creating your BigQuery table, click on the "Edit as Text" link and paste this example in.
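As a rough illustration of the rule that every field in the JSON object needs a counterpart in the table's schema, here's a minimal Python sketch that checks a log record against a schema before you rely on the pairing. The field names, the sample values and the helper name unmapped_fields are hypothetical and are not Fastly's published example; the schema uses BigQuery's JSON schema layout of name/type/mode entries, with a RECORD type for nested objects.

```python
import json

# Hypothetical schema in BigQuery's JSON schema layout (name/type/mode).
SCHEMA = [
    {"name": "timestamp", "type": "TIMESTAMP", "mode": "NULLABLE"},
    {"name": "client_ip", "type": "STRING", "mode": "NULLABLE"},
    {"name": "status", "type": "INTEGER", "mode": "NULLABLE"},
    {"name": "geo", "type": "RECORD", "mode": "NULLABLE", "fields": [
        {"name": "city", "type": "STRING", "mode": "NULLABLE"},
        {"name": "country_code", "type": "STRING", "mode": "NULLABLE"},
    ]},
]


def unmapped_fields(record, schema, prefix=""):
    """Return dotted paths of record keys with no matching schema field."""
    by_name = {field["name"]: field for field in schema}
    missing = []
    for key, value in record.items():
        path = prefix + key
        field = by_name.get(key)
        if field is None:
            missing.append(path)
        elif isinstance(value, dict):
            if field["type"] == "RECORD":
                # Nested object: check its keys against the nested fields.
                missing.extend(
                    unmapped_fields(value, field.get("fields", []), path + ".")
                )
            else:
                # Nested object sent where the schema expects a scalar.
                missing.append(path)
    return missing


# Hypothetical rendered log line, as one JSON object.
sample_line = (
    '{"timestamp": "2017-08-15T12:00:00", "client_ip": "192.0.2.1", '
    '"status": 200, "geo": {"city": "london", "country_code": "GB"}}'
)
record = json.loads(sample_line)

print(unmapped_fields(record, SCHEMA))
print(json.dumps(SCHEMA, indent=2))
```

The check only compares names and nesting; it does not verify that each value matches the declared type, so treat it as a quick sanity check rather than full validation.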