What’s happening in BigQuery: New persistent user-defined functions, increased concurrency limits, GIS and encryption functions, and more

Evan Jones

Technical Curriculum Developer, Google Cloud

We’ve been busy this summer releasing new features for BigQuery, Google Cloud’s petabyte-scale data warehouse. BigQuery lets you ingest and analyze data quickly and with high availability, so you can find new insights, trends, and predictions to efficiently run your business. Recently added BigQuery features include new user-defined functions, faster reporting capabilities, increased concurrency limits, and new functions for encryption and GIS, all with the goal of helping you get more out of your data faster.

Read on to learn more about these new capabilities and get quick demos and tutorial links so you can try these features yourself.

BigQuery persistent user-defined functions are now in beta

The new persistent user-defined functions (UDFs) in BigQuery let you create SQL and JavaScript functions that you can reuse across queries and share with others. Setting up these functions allows you to save time and automate for consistency and scalability. For example, if you have a custom function that handles date values a certain way, you can now create a shared UDF library, and anyone who has access to your dataset can invoke those date values in their queries. UDFs can be defined in SQL or JavaScript. Here’s an example:

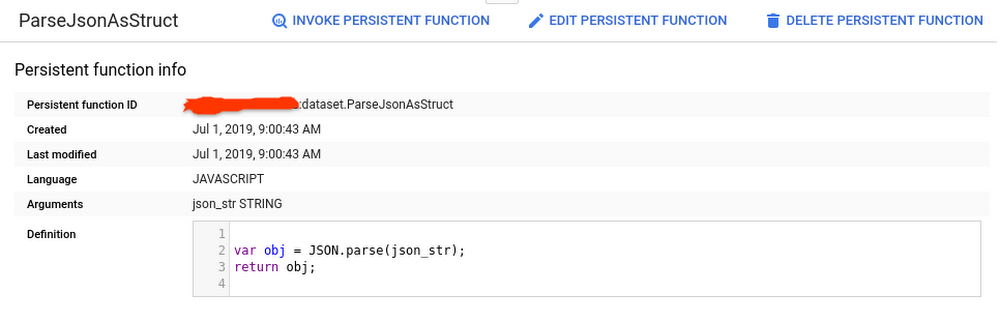

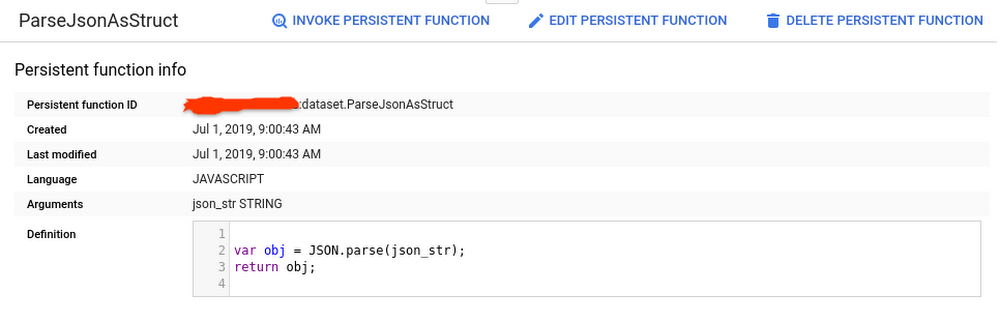

Creating a function to parse JSON into a SQL STRUCT

Ingesting and transforming semi-structured data from JSON objects into your SQL tables is a common engineering task. With BigQuery UDFs, you can now create a persistent Javascript UDF that does the parsing for you. Here, we’ll take a JSON string input and convert it into multiple fields in a SQL STRUCT.

First we’ll define the function in this query:

After executing the query, click the “Go to function” button in the BigQuery UI to see the function definition:

You can now execute a separate query to call the UDF:

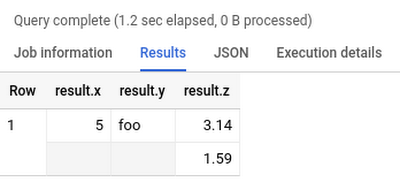

And voila! Our JSON string is now a SQL STRUCT:

Share your Persistent UDFs

The benefit of persistent UDFs is that other project team members can now invoke your new function in their scripts without having to re-create it or import it first. Keep in mind that you will need to share the dataset that contains your UDFs in order for them to access it.

Learn more:

Documentation: CREATE FUNCTION statement

More examples: New in BigQuery—Persistent UDFs by Felipe Hoffa

Default concurrency limit has doubled

To help enterprises get insights faster, we’ve raised the default concurrent rate limit for on-demand, interactive queries from 50 to 100 concurrent queries per project in BigQuery. This means you can run twice as many queries at the same time. As before, you can simply request to have your quota increased above this default limit. Please note that queries with results returned from the query cache, dry run queries, and queries run in batch mode do not count against this limit.

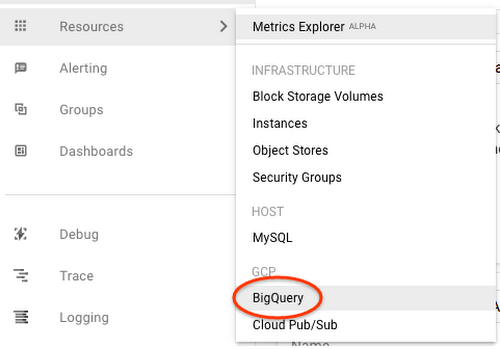

You can monitor your team’s concurrent queries in Stackdriver and visualize them in Data Studio.

Learn more:

Documentation: Quotas and limits

Tutorial: BigQuery monitoring with Stackdriver

Tutorial: Visualize billing data with Data Studio

BigQuery’s new user interface is now GA

We introduced the new BigQuery user interface (UI) last year to make it easier for you to uncover data insights and share them with teammates and colleagues in reports and charts. The BigQuery web UI is now generally available in the Google Cloud Platform (GCP) console.

You can check out key features of the new UI in the quick demo below:

Easily discover data by searching across tables, datasets, and projects

Quickly preview table metadata (size, last updated) and total rows

Start writing queries faster by clicking on columns to add.

If you haven’t seen the new UI yet, try it out by clicking the blue button in the top right of your Google Cloud console window.

Learn more:

Documentation: BigQuery Web UI

BigQuery’s GIS functions are now GA

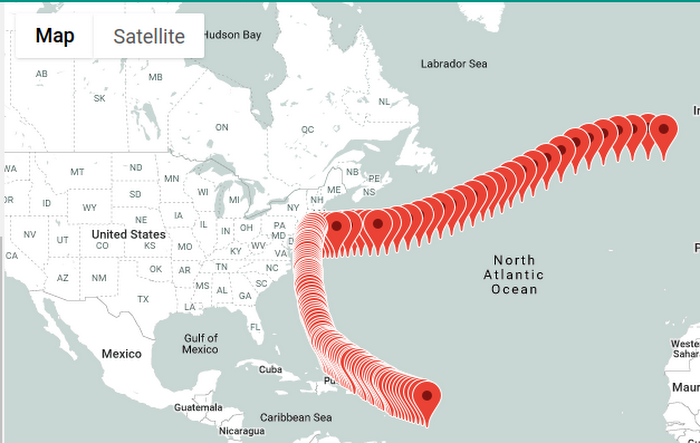

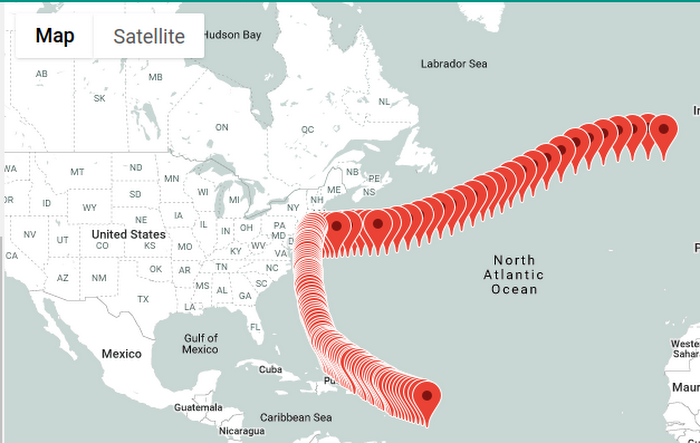

We’re continually working on adding new functionality to BigQuery so you can expand your data analysis to other data types. You might have noticed in the BigQuery Web UI demo that there’s now a field for hurricane latitude and longitude. These Geographic Information System (GIS) data types are now natively supported in BigQuery, as are the GIS functions to analyze, transform, and derive insights from GIS data.

Here’s a look at using BigQuery GIS functions and this tutorial to plot the path of a hurricane:

Applying GIS functions to geographic data (including lat/long, city, state, and zip code) lets analysts perform geographic operations within BigQuery. You can more easily answer common business questions like “Which store is closest for this customer?” “Will my package arrive on time?” or “Who should we mail a promotion coupon to?”

You can also now cluster your tables using geography data type columns. The order of the specified clustered columns determines the sort order of the data. For our hurricane example, we clustered on `iso_time` to increase performance for common reads that want to track the hurricane path sorted by time.

Learn more:

Documentation: BigQuery GIS

Demo: BigQuery Public Dataset and GIS demo plotting U.S. lightning strikes

Tutorial: Plot the path of a hurricane

AEAD encryption functions are now available in Standard SQL

BigQuery uses encryption at rest to help keep your data safe, and provides support for customer managed encryption keys (CMEKs), so you can encrypt tables with specific encryption keys you control. But in some cases, you may want to encrypt individual values within a table. AEAD (Authenticated Encryption with Associated Data) encryption functions, now available in BigQuery, allow you to create keysets that contain keys for encryption and decryption, use these keys to encrypt and decrypt individual values in a table, and rotate keys within a keyset.

This can be particularly useful for applications of crypto-deletion or crypto-shredding. For example, say you want to keep data for all your customers in a common table. By encrypting each of your customers’ data using a different key, you can easily render that data unreadable by simply deleting the encryption key. If you’re not familiar with the concept of crypto-shredding, you’ve probably already used it without realizing it—it’s a common practice for things like factory-resetting a device and securely wiping its data. Now you can do the same type of data wipe on your structured datasets in BigQuery.

Learn more:

Understand crypto-deletion, crypto-shredding, and more: AEAD Encryption Concepts

Documentation: AEAD Encryption Functions

Documentation: AEAD.ENCRYPT() example code

Check out a few more updates worth sharing

Our Google Cloud engineering team is continually making improvements to BigQuery to accelerate time-to-value for our customers. Here are a few other recent highlights:

You can now run scheduled queries at more frequent intervals. The minimum time interval for custom schedules has changed from three hours to 15 minutes. Faster schedules means fresher data for your reporting needs.

The BigQuery Data Transfer Service now supports transferring data into BigQuery from Amazon S3. These Amazon S3 transfers are now in beta.

Creating a new dataset? Want to make it easy for all to use? Add descriptive column labels within SQL using SQL DDL labels.

Clean up your old BigQuery ML models with new SQL DDL statement support for DROP MODEL.

In case you missed it

For more on all things BigQuery, check out these recent posts, videos and how-tos:

To keep up on what’s new with BigQuery, subscribe to our release notes and stay tuned to the blog for news and announcements And let us know how else we can help.