How Mr. Cooper is using AI to increase speed and accuracy for mortgage processing

Sudhir Sundararam

Executive Principal Architect at Mr. Cooper

Vivek Puri

Customer Engineer, Google Cloud

Editor’s note: Mr. Cooper Group is an industry-leading mortgage services provider serving customers through servicing, originations, and digital real estate solutions. Using Google Cloud AI and ML solutions, they built a highly reliable, cloud-native platform for analyzing and processing lending documents, unlocking new levels of accuracy and operational efficiency that help them scale while controlling costs. Read on to hear how they did it.

Mr. Cooper is one of the largest home loan servicers in the country, focused on delivering a variety of servicing and lending products, services, and technologies to homeowners. Our goal is to shorten loan-servicing times to increase efficiency and customer satisfaction, and we were looking for technologies that go beyond typical OCR to identify and classify documents and extract value from them. This would let us get the right document, and the right document data, to the right person at the right time, improving the overall digital experience for the end customer.

To realize these goals, we have to innovate and evolve along at least three key metrics: throughput (document pages processed per minute), accuracy (how accurately we identify documents and extract information), and cost savings (cost per document page). Additionally, to serve both internal customers and external partners, we have to provide API-based integration and a seamless search experience for documents and extracted data.
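The three metrics can be made concrete with a small helper. This is a minimal sketch, assuming a hypothetical `BatchStats` record per processing window and accuracy measured at the field level against reviewed ground truth; the post does not say exactly how Mr. Cooper defines or collects these numbers.

```python
from dataclasses import dataclass


@dataclass
class BatchStats:
    """Counts for one processing window (hypothetical schema)."""
    pages_processed: int
    elapsed_seconds: float
    fields_correct: int   # extracted fields that matched reviewed ground truth
    fields_total: int     # extracted fields that were checked
    total_cost: float     # spend attributed to this window


def throughput_pages_per_minute(stats: BatchStats) -> float:
    """Throughput: document pages processed per minute."""
    return stats.pages_processed / (stats.elapsed_seconds / 60.0)


def accuracy(stats: BatchStats) -> float:
    """Accuracy: fraction of checked fields that were extracted correctly."""
    return stats.fields_correct / stats.fields_total


def cost_per_page(stats: BatchStats) -> float:
    """Cost: total spend divided by pages processed."""
    return stats.total_cost / stats.pages_processed
```

Computing all three from the same record makes trade-offs visible; for example, adding another model to an ensemble may raise accuracy while lowering throughput and raising cost per page.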

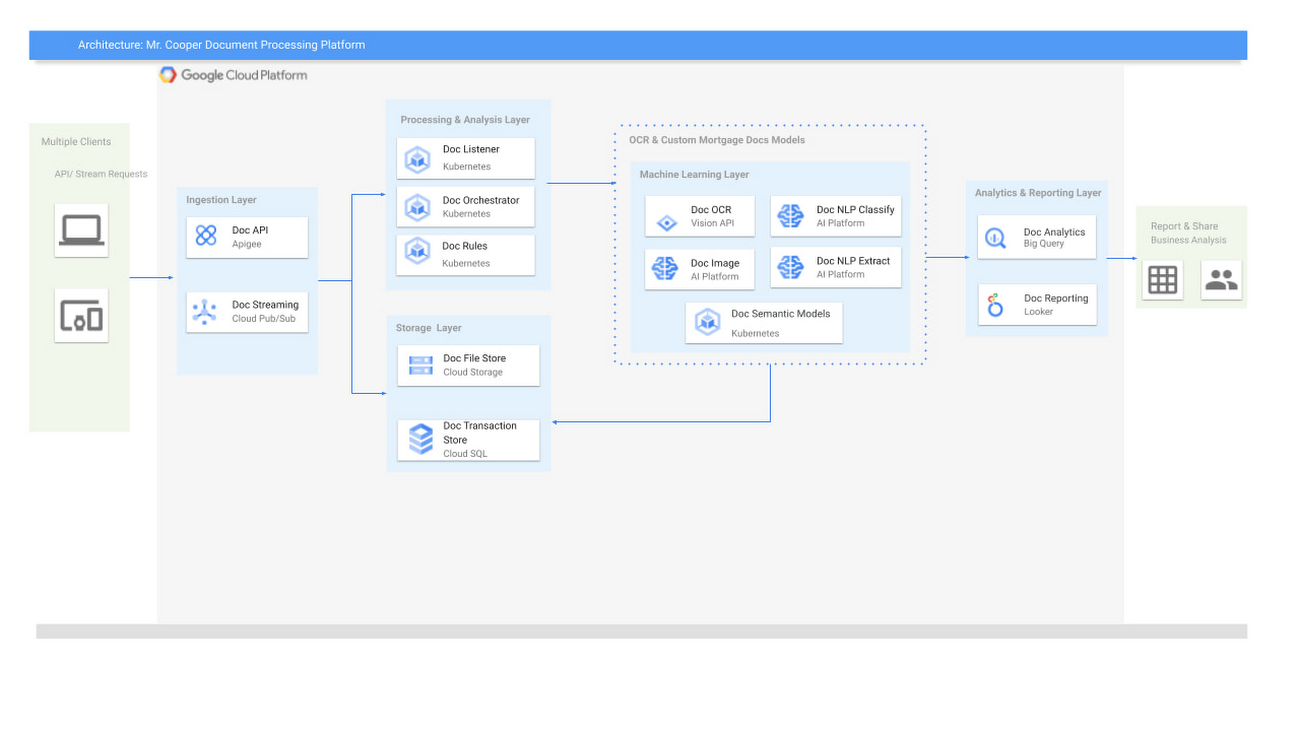

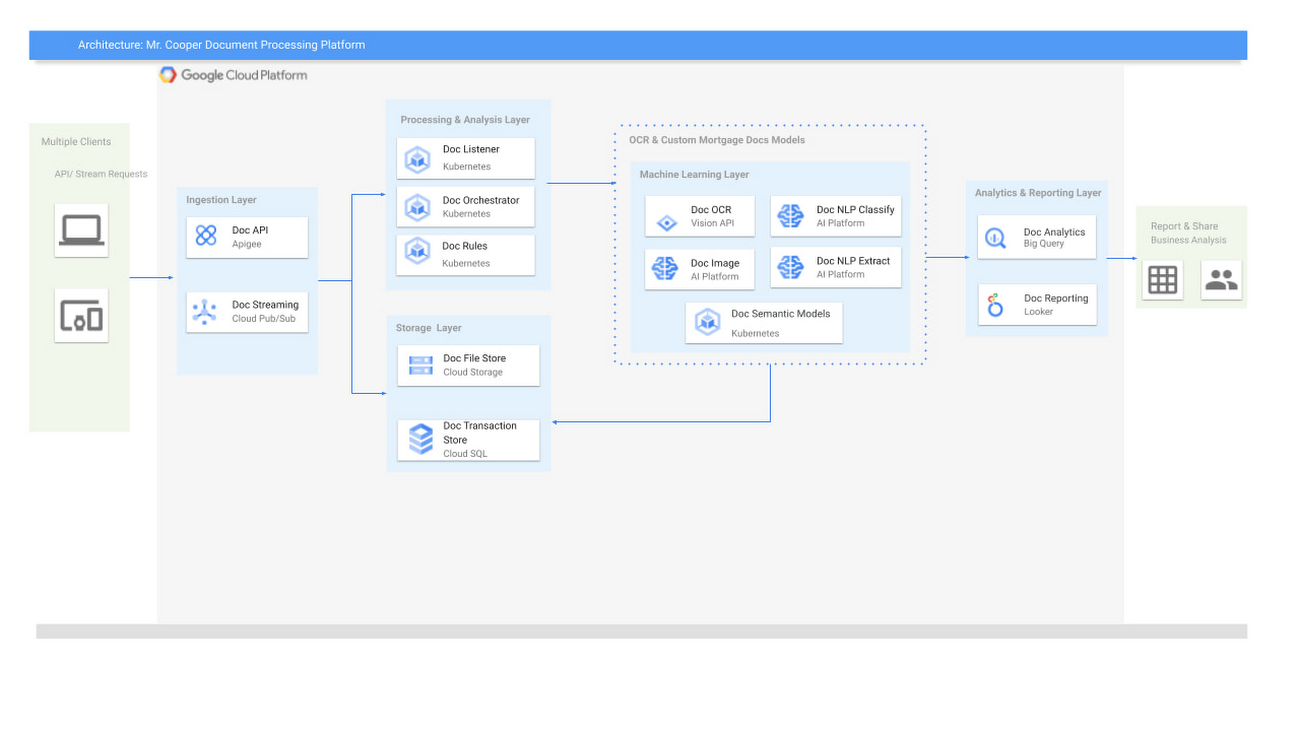

After several pilot verifications and technology spikes, we settled on the following technology stack on Google Cloud: Document AI (including Vision AI and Cloud AutoML), Cloud Storage, Vertex AI, Google Kubernetes Engine (GKE), Cloud SQL, BigQuery, Looker, and Apigee.

Here are the advantages we discovered with Google Cloud machine learning services that allowed us to improve performance, better manage our costs, and gain critical smart analytics capabilities:

Document AI: The Document AI technology stack, which includes Vision AI and AutoML Natural Language, provides high precision in data processing and helps us understand the documents early in the supply chain, thereby reducing cost and improving efficiency of a highly reliable pipeline.

Cloud Storage: Cloud Storage provides a landing zone for moving documents into and out of the platform safely and efficiently, while Interconnect and VPC Service Controls keep the pipeline secure.

Cost-optimized Kubernetes apps: We were very impressed by GKE’s cost optimizations. We were able to run nodes at more than 90% CPU utilization, and managed GKE also helped us meet PCI compliance requirements.

Fully managed relational database service for MySQL: With Cloud SQL, we were able to scale our databases effortlessly, without compromising performance or availability. We also saw a significant reduction in maintenance costs.

Serverless, highly scalable, and cost-effective data warehouse: By integrating BigQuery into our new architecture, we have the analytics capabilities we need, with zero operational overhead.

Vertex AI (formerly AI Platform): Vertex AI helped us build a variety of models specific to the mortgage documents we need to process.

Apigee: The API management platform helped us by providing a common layer to consume the data as APIs, and provided monitoring and monetization functionality.

Looker: Integration into Looker provided us with a unified surface to access data across the platform.

Building a container-based document pipeline

To start, we kept our architecture modular and designed it around lightweight containers and managed Google Cloud AI services, so that the care and feeding of the server infrastructure was handled for us. To avoid significant manual refactoring and to handle rapid changes in workloads, we built everything as code (Infrastructure as Code). We worked closely with the Google Cloud AI team to validate the architecture and to bring the best of Google to Mr. Cooper.

We chose containers because container-based artifacts use resources more efficiently, and because Infrastructure as Code (using Terraform) was already part of our technology stack, it was relatively easy to spin up an entire pipeline in a short period of time.

Google’s expertise in developing and running artificial intelligence at scale using managed services was a key differentiator in choosing Google as a partner. It was through this deep partnership and tight collaboration that we were able to build and execute the right strategy and achieve our desired outcomes.

Our team at Mr. Cooper was able to develop and train state-of-the-art machine learning models on mortgage-specific documents with very high accuracy, with the option to retrain the models using human-in-the-loop review where appropriate.

From there, we worked to improve the accuracy of our models by training them on additional documents, combining models in ensembles, and decoupling various parts of the application with an asynchronous processing approach. To achieve this, we mainly relied on these Google Cloud services:

Google Cloud Vertex AI

GKE with Cluster Autoscaling and cluster multi-tenancy to run the code

Cloud SQL to manage the databases
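The ensemble and human-in-the-loop ideas can be sketched as follows, assuming each model returns a class label with a confidence score. Majority voting with a confidence tie-break is one common way to combine classifiers; the post does not say which combination rule Mr. Cooper used, and the `Prediction` type, labels, and 0.90 review threshold are hypothetical.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class Prediction:
    label: str         # predicted document class (hypothetical labels)
    confidence: float  # model score in [0, 1]


def ensemble_vote(predictions: list[Prediction]) -> Prediction:
    """Majority vote across models; ties broken by summed confidence."""
    if not predictions:
        raise ValueError("need at least one prediction")
    votes = Counter(p.label for p in predictions)
    conf_sum: dict[str, float] = {}
    for p in predictions:
        conf_sum[p.label] = conf_sum.get(p.label, 0.0) + p.confidence
    # Rank labels by vote count first, then by total confidence.
    best = max(votes, key=lambda lbl: (votes[lbl], conf_sum[lbl]))
    # Report the mean confidence of the models that agreed with the winner.
    return Prediction(best, conf_sum[best] / votes[best])


def route(pred: Prediction, threshold: float = 0.90) -> str:
    """Send low-confidence results to a human reviewer (threshold is an assumption)."""
    return "auto_accept" if pred.confidence >= threshold else "human_review"
```

Documents corrected by reviewers can then be added to the training set, which is one way to close the human-in-the-loop retraining cycle described above.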

Although our new architecture has more components, choosing managed services meant this did not add overhead for our teams. Instead, we focused on achieving our goal of maximizing throughput, improving accuracy, and decreasing the cost of the platform.

The whole platform was based on an API-first approach. Through Apigee, we exposed these APIs for internal as well as external use to unlock cost savings and improve customer experiences for homeowners.

How do Vertex AI and Document AI fit into the picture?

Once documents entered the platform, GKE, driven by asynchronous events, managed the entire process, from document landing through the rest of the supply chain, including state management and any user inputs.
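The event-driven flow can be sketched with Python's `asyncio`: each document is tracked through explicit states, and each processing step re-enqueues the document as a new event. This is a single-process illustration under stated assumptions; the state names are hypothetical, and in the production platform the events, workers, and state store would be distributed services on GKE rather than an in-memory queue and dictionary.

```python
import asyncio
from enum import Enum


class State(Enum):
    LANDED = "landed"
    CLASSIFIED = "classified"
    EXTRACTED = "extracted"
    STORED = "stored"


# Each step moves a document from one state to the next.
TRANSITIONS = {
    State.LANDED: State.CLASSIFIED,
    State.CLASSIFIED: State.EXTRACTED,
    State.EXTRACTED: State.STORED,
}


async def worker(queue: asyncio.Queue, states: dict[str, State]) -> None:
    while True:
        doc_id = await queue.get()
        try:
            # Placeholder for the real work (classifier, extractor, or database call).
            await asyncio.sleep(0)
            nxt = TRANSITIONS[states[doc_id]]
            states[doc_id] = nxt
            if nxt is not State.STORED:
                # Re-enqueue as a new event so the steps stay decoupled.
                queue.put_nowait(doc_id)
        finally:
            queue.task_done()


async def run_pipeline(doc_ids: list[str], n_workers: int = 4) -> dict[str, State]:
    queue: asyncio.Queue = asyncio.Queue()
    states = {d: State.LANDED for d in doc_ids}
    for d in doc_ids:
        queue.put_nowait(d)
    workers = [asyncio.create_task(worker(queue, states)) for _ in range(n_workers)]
    await queue.join()  # returns once every enqueued event has been processed
    for w in workers:
        w.cancel()
    await asyncio.gather(*workers, return_exceptions=True)
    return states
```

Because a follow-up event is enqueued before `task_done()` marks the current one finished, `queue.join()` cannot return while any document is still mid-pipeline.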

Once in the pipeline, documents went through several classification and extraction cycles. Document AI and Vertex AI helped us manage multiple versions of custom mortgage models that classify documents, extract their data, and store the metadata at scale.
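A minimal sketch of versioned model routing for a classify-then-extract cycle. It assumes a hypothetical in-memory `ModelRegistry` that maps each document type to numbered extractor versions, with an optional pinned version; in the actual platform this role was played by Document AI and Vertex AI, whose APIs are not shown here.

```python
from dataclasses import dataclass, field
from typing import Callable

Extractor = Callable[[str], dict[str, str]]


@dataclass
class ModelRegistry:
    """Hypothetical registry: document type -> {version: extractor}."""
    models: dict[str, dict[int, Extractor]] = field(default_factory=dict)
    pinned: dict[str, int] = field(default_factory=dict)

    def register(self, doc_type: str, version: int, fn: Extractor) -> None:
        self.models.setdefault(doc_type, {})[version] = fn

    def pin(self, doc_type: str, version: int) -> None:
        # Pinning keeps a known-good version serving while a newer one is evaluated.
        if version not in self.models.get(doc_type, {}):
            raise KeyError(f"{doc_type} v{version} is not registered")
        self.pinned[doc_type] = version

    def get(self, doc_type: str) -> tuple[int, Extractor]:
        versions = self.models[doc_type]
        v = self.pinned.get(doc_type, max(versions))  # default: latest version
        return v, versions[v]


def process(text: str, classify: Callable[[str], str], registry: ModelRegistry) -> dict:
    """Classify a document, then extract fields with the selected model version."""
    doc_type = classify(text)
    version, extract = registry.get(doc_type)
    # Recording the version alongside the fields makes results traceable to a model.
    return {"doc_type": doc_type, "model_version": version, "fields": extract(text)}
```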

To continue improving accuracy, our team at Mr. Cooper keeps updating existing ML models and training new ones as document formats change or data drift occurs across heterogeneous sources.
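One common way to detect data drift is the Population Stability Index, PSI = Σ (aᵢ − eᵢ) · ln(aᵢ / eᵢ), which compares the binned distribution of a value in recent traffic (a) against a baseline (e). This is a sketch under assumptions: the post does not say how Mr. Cooper detects drift, the monitored value (for example, classifier confidence) is hypothetical, and the 0.25 retraining threshold is a widely used rule of thumb rather than a fixed standard.

```python
import math


def psi(expected: list[float], actual: list[float], bins: int = 10, eps: float = 1e-6) -> float:
    """Population Stability Index between a baseline sample and a recent sample."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # avoid zero width when all baseline values are equal

    def proportions(values: list[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            # Clamp values outside the baseline range into the edge bins.
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        # Floor empty bins at eps so the logarithm stays finite.
        return [max(c / len(values), eps) for c in counts]

    e = proportions(expected)
    a = proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ai, ei in zip(a, e))


def needs_retraining(baseline: list[float], recent: list[float], threshold: float = 0.25) -> bool:
    """Flag a model for retraining when drift exceeds the (assumed) threshold."""
    return psi(baseline, recent) > threshold
```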

Building a successful partnership

Looking back, this initiative was incredibly beneficial because it provided us with a wealth of information that, when cross-referenced, can open up new monetization opportunities, unlock cost savings, and improve customer experiences, especially during these unprecedented times.

In terms of results, we achieved over 95% accuracy on critical documents, a peak throughput of 4,000 pages/min, and an average throughput of 2,000 pages/min. This increased our document processing efficiency by 400%, which significantly reduced our costs.
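For a rough sense of scale, assuming (hypothetically, since the post does not say so) that the average rate were sustained around the clock, the reported throughput figures work out as follows:

```latex
2000~\tfrac{\text{pages}}{\text{min}} \times 60~\tfrac{\text{min}}{\text{h}} \times 24~\tfrac{\text{h}}{\text{day}}
  = 2{,}880{,}000~\tfrac{\text{pages}}{\text{day}},
\qquad
\frac{4000~\text{pages/min (peak)}}{2000~\text{pages/min (average)}} = 2
```

A 2:1 peak-to-average ratio is one reason autoscaling matters here: capacity sized for the average would fall behind at peak, while capacity sized for the peak would sit partly idle most of the time.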

It was not only the incredible technology that drove us to choose Google Cloud, but also their team’s unique knowledge of what it takes to scale. Google has nine products with over one billion users each and is uniquely positioned to offer expertise in achieving peak performance at scale.

This collaborative partnership with their teams helped guide us on our journey to accomplish this critical strategic initiative.