Google Cloud announces updates to Gemini, Imagen, Gemma and MLOps on Vertex AI

Amin Vahdat

VP/GM, AI & Infrastructure, Google Cloud

With access to the widest variety of foundation models from any hyperscale provider, robust infrastructure options, and a deep set of tools for model development and MLOps, Vertex AI is a one-stop platform for not only building generative AI apps and agents, but also deploying and maintaining them. Today, at Google Cloud Next, we’re introducing exciting model updates and platform capabilities that continue to enhance Vertex AI:

- Gemini 1.5 Pro is now available in public preview in Vertex AI, bringing the world’s largest context window to developers everywhere. Imagen 2.0, our family of image generation models, can now be used to create short, 4-second live images from text prompts. We’re also making image editing generally available in Imagen 2.0, including inpainting/outpainting and digital watermarking. Additionally, we’re adding CodeGemma to Vertex AI, a new model from our Gemma family of lightweight models.

- Because response accuracy is critical for gen AI services, we are expanding our grounding capabilities in Vertex AI, including the ability to directly ground responses with Google Search, now in public preview. Vertex AI users now have access to fresh, high-quality information that significantly improves the accuracy of model responses.

- To help our customers manage and deploy models in production, we’re expanding our MLOps capabilities for gen AI, including new prompt management and evaluation services for large models. These features make it easier for organizations to get the best performance from gen AI models at scale, and to iterate more quickly from experimentation to production.

Let’s dive deeper into these announcements.

Giving customers the best selection of enterprise-ready models

We’re doubling down on our mission to give customers the best selection of enterprise-ready models. In just the last two months, we've added access in Vertex AI to a variety of cutting-edge first-party, third-party, and open models, from Google’s Gemini 1.0 Pro to Gemma, the lightweight family of open models based on the research and technology we used to create Gemini, and Anthropic’s Claude 3 family of models.

Announced in February, Gemini 1.5 Pro is now in public preview, bringing the power of the world’s first 1 million-token context window to customers. This breakthrough allows natively multimodal reasoning over enormous amounts of data specific to a request.

We’re seeing customers create entirely new use cases, including building AI-powered customer service agents and academic tutors, analyzing large collections of complex financial documents, detecting gaps in documentation, and exploring entire codebases or data collections via natural language.

For example, United Wholesale Mortgage is using Gemini 1.5 Pro to enrich the underwriting process and to automate the mortgage application process.

SAP is exploring opportunities to include the model in the SAP generative AI hub, which facilitates relevant, reliable, and responsible business AI and provides instant access to a broad range of large language models.

TBS, one of the main commercial broadcasters in Japan, is using Gemini 1.5 Pro to automate metadata tagging on its large media archives, significantly improving efficiency in finding materials during the production process.

And Replit is testing Gemini 1.5 Pro to generate, explain, and transform code with higher speed, accuracy, and performance.

In addition, we are announcing that Gemini 1.5 Pro on Vertex AI now supports the ability to process audio streams including speech, and even the audio portion of videos. This enables seamless cross-modal analysis that provides insights across text, images, videos, and audio — such as using the model to transcribe, search, analyze, and answer questions across earnings calls or investor meetings.

Imagen delivers advanced generative media capabilities

While the Gemini models are great for advanced reasoning and general-purpose use cases, task-specific gen AI models can help enterprises deliver specialized capabilities. We’re seeing organizations like Shutterstock and Rakuten leverage Imagen 2.0 to generate high-quality, highly accurate images at enterprise scale.

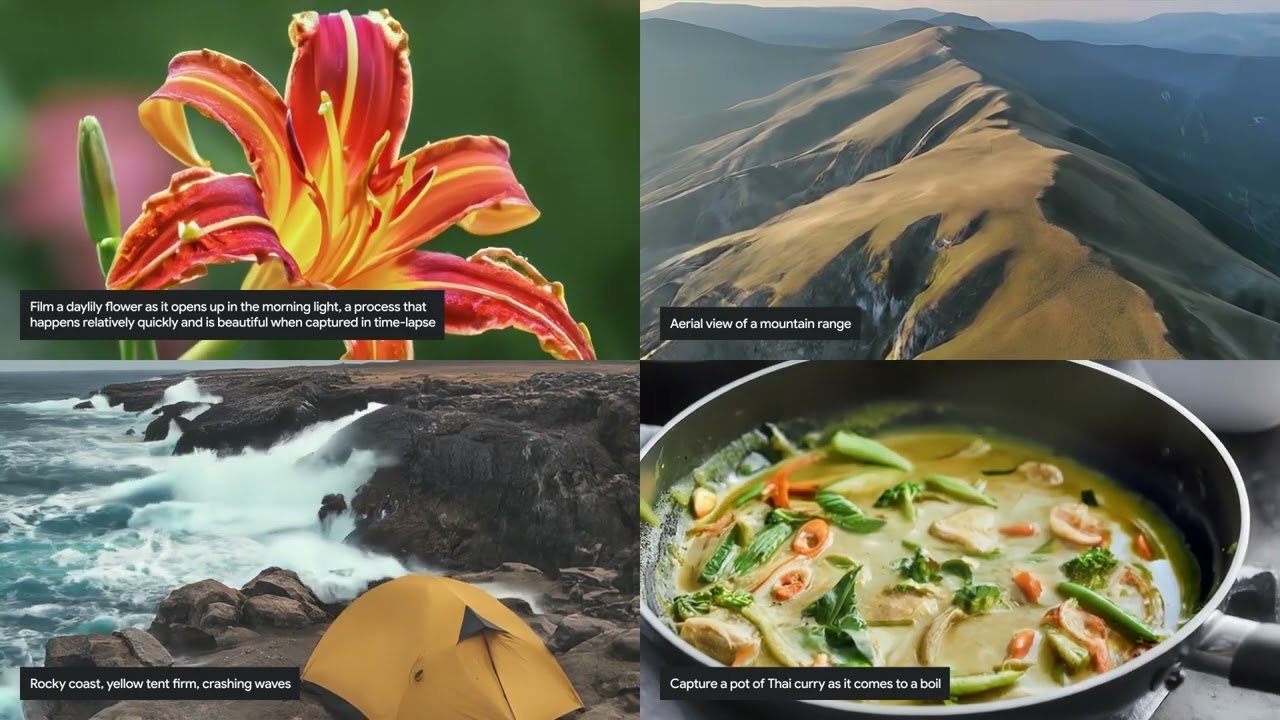

Today’s preview of text-to-live image capabilities makes Imagen even more powerful for enterprise workloads. It allows marketing and creative teams to generate animated images, such as GIFs, from a text prompt. Initially, live images will be delivered at 24 frames per second (fps) with a resolution of 360x640 pixels and a duration of 4 seconds, with plans for continuous enhancements.
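As a rough back-of-the-envelope illustration of those figures, the short Python sketch below works out how many frames and pixels a single live image contains. The helper name `live_image_stats` is hypothetical and is not part of any Imagen or Vertex AI API; only the fps, duration, and resolution values come from the announcement.

```python
# Figures quoted in the announcement: 24 fps, 4 seconds, 360x640 pixels.
FPS = 24
DURATION_SECONDS = 4
WIDTH, HEIGHT = 360, 640


def live_image_stats(fps, duration_seconds, width, height):
    """Return (frame_count, pixels_per_frame, total_pixels) for one clip.

    Hypothetical helper for illustration only.
    """
    frame_count = fps * duration_seconds
    pixels_per_frame = width * height
    return frame_count, pixels_per_frame, frame_count * pixels_per_frame


frames, per_frame, total = live_image_stats(FPS, DURATION_SECONDS, WIDTH, HEIGHT)
print(f"{frames} frames, {per_frame:,} pixels per frame, {total:,} pixels in total")
```

In other words, each 4-second live image corresponds to 96 generated frames that need to stay visually consistent with one another.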

Given the focused design of this model for enterprise applications, it’s adept at themes such as nature, food imagery, and animals. It can generate a range of camera angles and motions while supporting consistency over the entire sequence. Upholding our commitment to trust between creators and users, Imagen for live image generation is equipped with safety filters and digital watermarks.

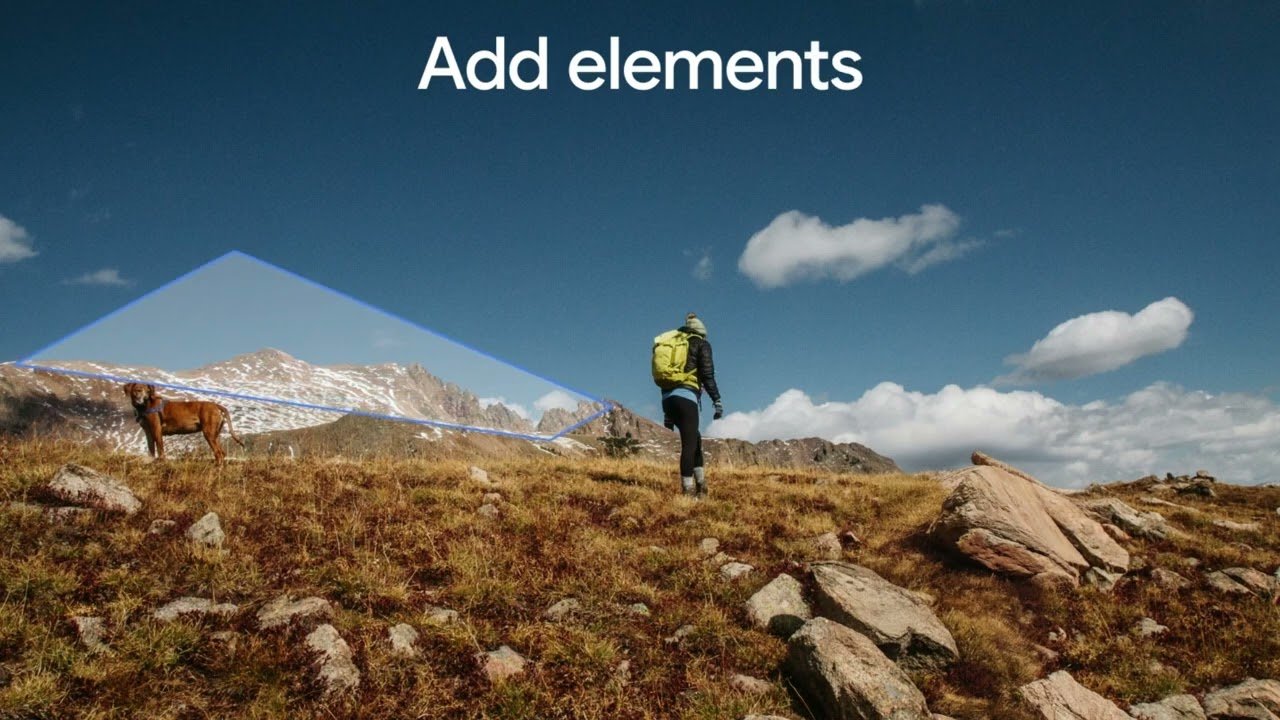

We’re also updating Imagen 2.0’s image generation capability with advanced photo editing features, including inpainting and outpainting. Now generally available for Imagen 2.0 on Vertex AI, these features make it easy to remove unwanted elements in an image, add new elements, and expand the borders of the image to create a wider field of view. Additionally, our digital watermarking feature, powered by Google DeepMind’s SynthID, is now generally available, enabling customers to generate invisible watermarks and verify images and live images generated by the Imagen family of models.

Connect foundation models to sources of enterprise truth

Foundation models are limited by their training data, which can quickly become outdated and may not include information that the models need for enterprise use cases. Today, we are announcing that organizations can ground models in Google Search, giving customers the combined power of Google’s latest foundation models and fresh, high-quality information. This means users get results that are rooted in one of the most trusted sources of information, built on decades of experience ranking and understanding information quality.

We also offer multiple ways for enterprises to leverage retrieval augmented generation, or RAG, which lets organizations ground model responses in enterprise data sources, using methods like semantic similarity to search documents and data stores.
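To make the idea of semantic-similarity retrieval concrete, here is a minimal, self-contained Python sketch. It is not the Vertex AI implementation: the three-dimensional vectors are hand-written stand-ins for real embeddings, and the names `cosine_similarity`, `retrieve`, `build_grounded_prompt`, and the toy corpus are all assumptions made for illustration. In a real system the vectors would come from an embedding model and the assembled prompt would be sent to a foundation model.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors (0.0 if either is zero)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def retrieve(query_vec, corpus, top_k=2):
    """Return the top_k passages whose vectors are most similar to query_vec."""
    scored = [(cosine_similarity(query_vec, vec), text) for text, vec in corpus]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [text for _, text in scored[:top_k]]


def build_grounded_prompt(question, passages):
    """Prepend the retrieved passages so the model answers from them."""
    context = "\n".join(f"- {p}" for p in passages)
    return f"Answer using only this context:\n{context}\n\nQuestion: {question}"


# Toy corpus: (passage, hand-written placeholder embedding).
corpus = [
    ("Refunds are issued within 14 days of a return.", [1.0, 0.0, 0.0]),
    ("Standard shipping takes 3 to 5 business days.", [0.0, 1.0, 0.0]),
    ("The office is closed on public holidays.", [0.0, 0.0, 1.0]),
]

# Placeholder embedding for the question; a real one would come from a model.
question = "How long do refunds take?"
query_vec = [0.9, 0.1, 0.0]

top_passages = retrieve(query_vec, corpus, top_k=2)
prompt = build_grounded_prompt(question, top_passages)
print(prompt)
```

The design choice worth noting is that the model never sees the whole data store: only the few passages closest to the query are placed in the prompt, which keeps responses tied to the enterprise's own data.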

At Google Cloud, we call this concept of grounding on search and enterprise data “Enterprise Truth,” and we see it as a foundation for building the next generation of AI agents — agents that go beyond chat to proactively search for information and accomplish tasks on behalf of the user.

Get the best performance from gen AI models at scale

We’ve expanded Vertex AI’s MLOps capabilities to meet the needs of building with large models, letting customers work on all AI projects with a common set of features, including model registry, feature store, pipelines to manage model iteration and deployment, and more. With this common set, customers can continue to benefit from their existing MLOps investments while meeting the needs of their gen AI workloads.

Today’s announcements make it easier for organizations to get the best performance from gen AI models at scale, and to iterate more quickly from experimentation to production:

- Vertex AI Prompt Management targets some of the biggest gen AI pain points we hear from customers: experimenting with prompts, migrating prompts, and tracking prompts and parameters. Vertex AI Prompt Management, now in preview, provides a library of prompts for use among teams, including versioning, the option to restore old prompts, and AI-generated suggestions to improve prompt performance. Customers can compare prompt iterations side by side to assess how small changes impact outputs, and the service offers features like notes and tagging to boost collaboration.

-

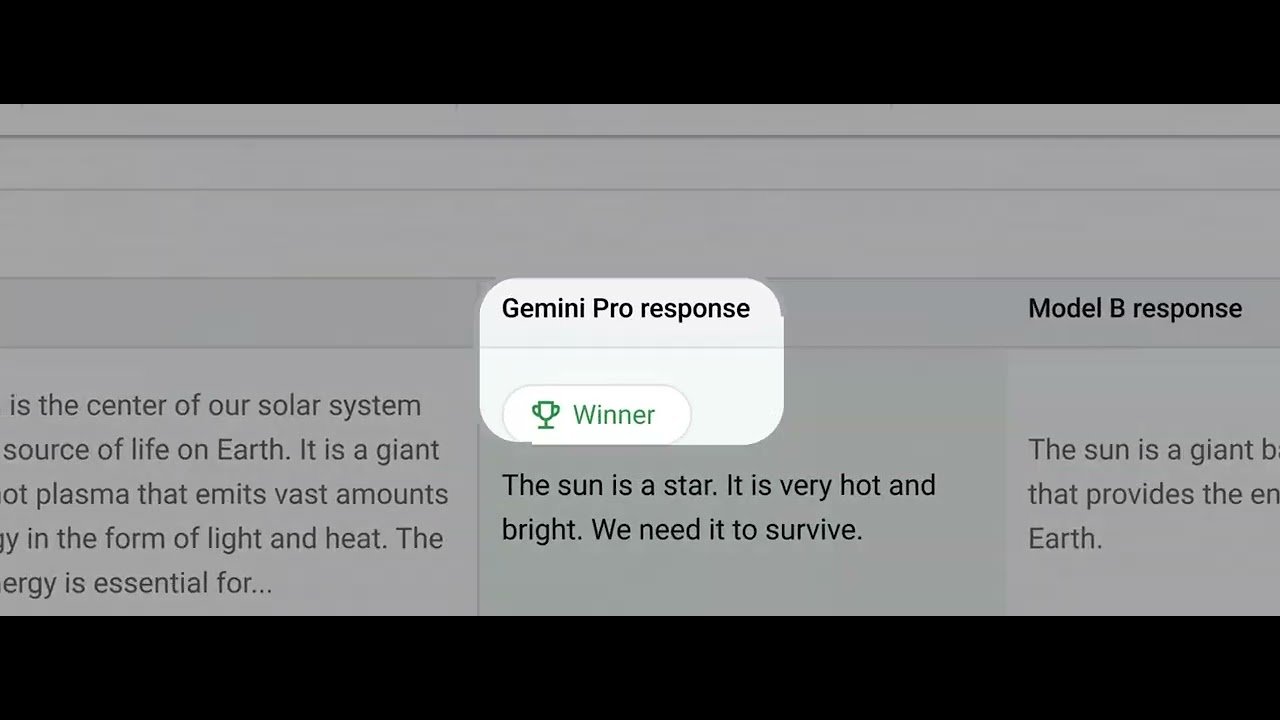

Evaluation tools in Vertex AI help customers compare models for a specific set of tasks. We now support Rapid Evaluation in preview to help users evaluate model performance when iterating on the best prompt design. Users can access metrics for various dimensions (e.g., similarity, instruction following, fluency) and bundles for specific tasks (e.g., text generation quality). For a more robust evaluation, AutoSxS is now generally available, and helps teams compare the performance of two models, including explanations for why one model outperforms another and certainty scores that help users understand the accuracy of an evaluation.

“AutoSxS represents a huge leap forward in our generative AI model evaluation capabilities. Automating evaluation was a key success factor for getting LLMs in production,” said Stefano Frigerio, Head of Technical Leads, Generali Italia.
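The general idea behind a side-by-side comparison can be illustrated with a small Python sketch that aggregates per-example preferences into win rates. This is only an assumption-laden illustration of pairwise evaluation, not how AutoSxS computes its results; the function `win_rates` and the sample judgments are hypothetical.

```python
from collections import Counter


def win_rates(judgments):
    """Turn per-example verdicts ("A", "B", or "tie") into fractions of the total."""
    if not judgments:
        raise ValueError("at least one judgment is required")
    counts = Counter(judgments)
    total = len(judgments)
    return {label: counts.get(label, 0) / total for label in ("A", "B", "tie")}


# Hypothetical verdicts from a judge comparing model A and model B
# on five prompts; real verdicts would come from an evaluation service.
sample_judgments = ["A", "A", "B", "tie", "A"]

rates = win_rates(sample_judgments)
print(rates)
```

A summary like this says which model is preferred more often, while the per-example explanations and certainty scores mentioned above are what help a team understand why.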

Last but not least, today we’re also expanding data residency guarantees, which cover data stored at rest for Gemini, Imagen, and Embeddings APIs on Vertex AI, to 11 new countries: Australia, Brazil, Finland, Hong Kong, India, Israel, Italy, Poland, Spain, Switzerland, and Taiwan. Customers can now also limit machine learning processing to the United States or European Union when using Gemini 1.0 Pro and Imagen. Joining 10 other countries we announced last year, these new regions give customers more control over where their data is stored and how it is accessed, making it easier for customers to satisfy regulatory and security requirements around the world.

Take the next step in the gen AI journey

To deliver on the promise of gen AI, organizations need to balance model and infrastructure capabilities against cost, ensure models anchor their reasoning in the appropriate data, and adapt MLOps for deploying, managing, and maintaining models at scale. With today’s announcements, Vertex AI customers can satisfy these requirements faster and more easily than ever, letting them focus on AI-powered innovation instead of navigating adoption complexity. We look forward to continuing the gen AI journey with organizations around the world. Try Vertex AI capabilities in the Google Cloud console today; new customers get $300 in free credits to explore. Click here if you want to learn more about the Vertex AI portfolio.