Learn why and how to migrate monolithic workloads to containers

Olivier Bourgeois

Developer Relations Engineer

Alongside the rise in popularity of cloud computing, there has been an ongoing movement towards lighter and more flexible workloads. Yet a significant share of legacy applications in enterprises both large and small still runs in expensive, harder-to-maintain virtual machine (VM) environments. These workloads are often crucial to the enterprise’s wellbeing, but they tend to come with heavy operational burdens and fees. What if there were an easy way to migrate these complex VMs to a more cloud-native environment, without any source code changes?

In this article, I go through some important definitions, discuss the advantages of modernization, walk through an example modernization journey, and close with a reference to a real-world scenario in which a multi-process monolithic application is migrated to a lightweight container using Migrate for Anthos and GKE.

What’s in a name?

First, let’s go through some important definitions.

Application: Complete piece of software, possibly containing many features. Applications are typically seen by the end user as a single unit or black box. Examples include mobile apps and websites.

Service: Standalone component of an application. Typically, applications are composed of many services, which are more or less indistinguishable to the end user. Examples include a database or a website frontend service.

Virtual machine: Emulation or virtualization of a computer system. Each virtual machine contains its own copy of an operating system, as well as all libraries and dependencies required to run the relevant applications and services.

Monolithic application: Architecture type where an application and its services are built and deployed as a single unit. These applications generally run on bare metal or in a virtual machine.

Container: Isolated user-space instance provided by an operating system kernel. Containers share the same underlying operating system kernel while only being able to see and interact with their own processes and applications, which makes them a much lighter alternative to multiple virtual machines.

Microservice: Deployment unit composed of a single service, rather than multiple services. These services generally run inside lightweight containers (one service per container). Because they run separately from one another, microservices communicate with each other via predefined APIs.

Advantages of microservices & cloud-based systems

With definitions out of the way (don’t worry, there isn’t any pop quiz later), let’s focus on a core question: why move from monolithic architectures running in virtual machines towards lightweight microservices running in containers?

Independence: Given that microservices are smaller, more distinct units of deployment, they can be independently tested and deployed without having to build and test a larger monolith every time a small change comes in.

Language-agnostic: Microservices can easily be implemented in different languages and frameworks depending on what suits each service best, rather than having to opt for a single framework for a larger monolith.

Ease of ownership: Because of the inherent containerization and boundaries between services, it is much easier to give ownership of specific microservices to different teams than it is with one monolithic application, where boundaries between components are fuzzier.

Ease of development: Since each team mostly has to take care of only its own service, API, and testing, it is much easier to develop features and iterate on a component of the application than with a monolith, where the entire application has to be rebuilt or redeployed even if only one component is modified. This can be augmented with CI/CD tools such as Cloud Build and Cloud Deploy.

Scalability: Monolithic applications are difficult to scale horizontally (that is, by adding replicas of a workload), because scaling one component inherently means scaling every component at once. Microservices, by contrast, can scale independently of each other.
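To make the difference concrete, here is a minimal sketch that compares replica counts under both approaches. The service names, per-replica capacities, and load figures are all hypothetical and chosen only for illustration.

```python
import math

# Hypothetical per-replica capacity of each service, in requests per second.
CAPACITY_RPS = {"frontend": 200, "ledger": 50, "accounts-db": 100}

# Hypothetical current load on each service, in requests per second.
LOAD_RPS = {"frontend": 300, "ledger": 400, "accounts-db": 80}


def replicas_needed(load_rps, capacity_rps):
    """Smallest number of replicas that can absorb the load (at least one)."""
    return max(1, math.ceil(load_rps / capacity_rps))


def microservice_replicas(load):
    """Each service scales on its own, based only on its own load."""
    return {name: replicas_needed(load[name], CAPACITY_RPS[name])
            for name in CAPACITY_RPS}


def monolith_replicas(load):
    """Every monolith replica bundles all components, so the busiest
    component dictates how many full copies have to run."""
    return max(microservice_replicas(load).values())


print(microservice_replicas(LOAD_RPS))  # {'frontend': 2, 'ledger': 8, 'accounts-db': 1}
print(monolith_replicas(LOAD_RPS))      # 8
```

In this made-up scenario, the microservices run 11 service instances in total, while the monolith needs 8 full copies (24 component instances) just to keep up with the ledger.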

Fault-tolerance: Because microservices run as separate units, often with redundancy (through horizontal scaling, for example), one service can fail while others continue running as expected. Conversely, if a single component fails in a monolithic application, the entire application often fails with it. Additionally, it is much easier to determine if and when specific services are failing.
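As a rough illustration of how redundancy helps, the sketch below estimates the chance that at least one replica of a service is up. It assumes replicas fail independently of each other, which real deployments only approximate, and the 99% availability figure is hypothetical.

```python
def availability_with_replicas(single_replica_availability, replicas):
    """Probability that at least one replica is up.

    Assumes failures are independent, so the service is down only
    when every replica is down at the same time.
    """
    all_down = (1 - single_replica_availability) ** replicas
    return 1 - all_down


# Hypothetical: each replica is available 99% of the time.
for count in (1, 2, 3):
    print(count, availability_with_replicas(0.99, count))
```

Under these assumptions, going from one replica to two moves the estimate from 99% to roughly 99.99%.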

Reduced costs: Each service living in its own compartment means that overall costs can be reduced by paying only for the resources used. For a monolithic application running in a virtual machine, you pay for the entire virtual machine’s worth of compute resources, regardless of usage. With microservices, you pay only for the compute resources used in a given period of time. This can be done with the help of automated scaling such as GKE Autopilot or serverless hosting like Cloud Run.
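A small worked example shows where the savings come from. The price and the hourly demand below are hypothetical numbers, not actual Google Cloud pricing, and the sketch ignores details such as minimum instance counts or differing unit prices between products.

```python
# Hypothetical price in cents per vCPU-hour; real pricing varies by product and region.
PRICE_CENTS_PER_VCPU_HOUR = 3

# Hypothetical vCPU demand for each hour of one day:
# a quiet night, a busy working day, and a calmer evening.
HOURLY_VCPU_DEMAND = [1] * 8 + [4] * 8 + [2] * 8


def fixed_vm_cost(hourly_demand, price):
    """A VM sized for peak demand is billed at that size for every hour."""
    return max(hourly_demand) * len(hourly_demand) * price


def usage_based_cost(hourly_demand, price):
    """With autoscaling or serverless hosting, each hour is billed
    (approximately) for what that hour actually needed."""
    return sum(hourly_demand) * price


print(fixed_vm_cost(HOURLY_VCPU_DEMAND, PRICE_CENTS_PER_VCPU_HOUR))     # 288
print(usage_based_cost(HOURLY_VCPU_DEMAND, PRICE_CENTS_PER_VCPU_HOUR))  # 168
```

With these made-up figures, the always-on VM costs 288 cents per day, against 168 cents when paying only for the capacity each hour requires.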

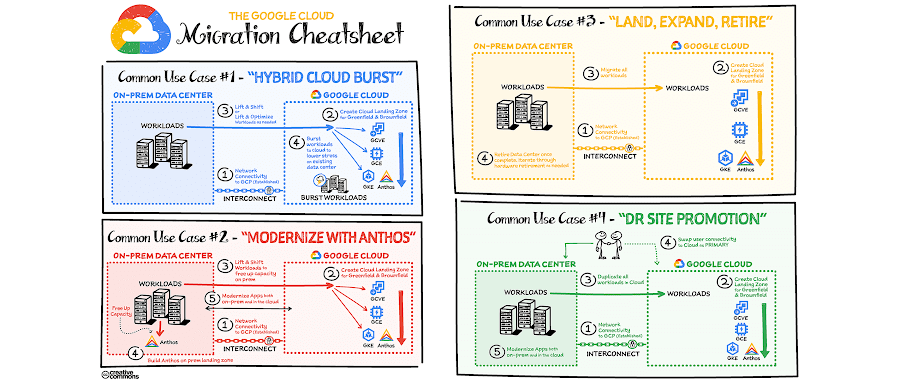

The migration journey

At a high level, there are five sequential phases in the migration journey with Migrate for Anthos and GKE.

- Discovery: In this first phase, you identify the workloads to be migrated and assess their dependencies, ease of migration, and migration type. This assessment also covers technical information such as the storage and databases to be moved, and your application’s network requirements such as open ports and service name resolution.

- Migration planning: Next, you break down your set of workloads into groups that are related and should migrate together. You then determine the order of migration for these subsets based on desired outcomes and inter-service dependencies.

- Landing zone setup: Before the migration can proceed, you configure the deployment environment for the migrated containers. This includes creating or identifying a suitable GKE or Anthos cluster to host your migrated workloads, creating VPC network rules and Kubernetes network policies, as well as configuring DNS.

- Migration and deployment: Once the deployment environment has been set up and is ready to receive migrated containers, you then run Migrate for Anthos and GKE to containerize your VM workloads. Once those processes are completed, you deploy and test the resulting containers.

- Operate and optimize: Lastly, you leverage tools provided by Anthos and the Kubernetes ecosystem to maintain your services. This includes but is not limited to setting up access policies, encryption and authentication, logging and monitoring, as well as continuous integration and continuous deployment pipelines.
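The ordering step of the migration planning phase can be sketched as a topological sort over workload dependencies. This is only one possible policy, assuming that a workload should migrate after everything it depends on. The workload names and dependency map are hypothetical, and the sketch uses Python’s standard `graphlib` module (Python 3.9 or later) rather than anything provided by Migrate for Anthos and GKE.

```python
from graphlib import TopologicalSorter

# Hypothetical workloads, each mapped to the workloads it depends on.
DEPENDENCIES = {
    "frontend": {"ledger", "accounts"},
    "ledger": {"ledger-db"},
    "accounts": {"accounts-db"},
    "ledger-db": set(),
    "accounts-db": set(),
}


def migration_waves(dependencies):
    """Group workloads into waves so that every workload's dependencies
    sit in an earlier wave. Workloads in one wave can migrate together."""
    sorter = TopologicalSorter(dependencies)
    sorter.prepare()  # raises graphlib.CycleError on circular dependencies
    waves = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # sorted for a stable, readable order
        waves.append(ready)
        sorter.done(*ready)
    return waves


for number, wave in enumerate(migration_waves(DEPENDENCIES), start=1):
    print(number, wave)
```

For this example, the databases land in the first wave, the services that use them in the second, and the frontend in the third. In practice, desired business outcomes may override a purely dependency-driven order.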

How do I get started?

To complement this article, I have written a multi-part tutorial (Migrating a monolith VM) that follows the migration steps of a real-world application. In that scenario, a fictional bank with the (very original) name Bank of Anthos sits in the middle ground between legacy monolith and containerization. Its production infrastructure contains many containerized microservices running in a Kubernetes cluster, as well as one large monolith containing multiple processes and an embedded database.

Over the course of the tutorial, you learn how to leverage Migrate for Anthos and GKE to easily lift and shift the monolith’s processes into their own lightweight container, as well as how to make use of GKE-native features and quick source code iteration.