Hosting successful live events with Google Cloud

Prakash Daga

Product Manager

Nazir Kabani

Customer Engineer

Hosting a live event requires significant planning and preparedness. At Google Cloud, we've supported customers that delivered live tentpole events at planet scale, most recently football matches for the FIFA World Cup 2022, using Media CDN for worldwide distribution. The characteristics of this workload, with a large number of people watching simultaneously and often unexpectedly, are unique. This article describes best practices and lessons learned from working with our media partners, touching on infrastructure planning, security, and the use of Google Cloud products: Live Stream API for transcoding, Google Cloud Storage for origin, Media CDN for distribution, and Cloud Logging and Cloud Monitoring for operations.

Considerations for hosting a live event:

Hosting a live event is a journey, which starts with the acquisition of rights for the content and ends on the last day of the live event. Let’s divide the journey into 6 steps, for ease of understanding and tracking:

Finalizing the video delivery architecture

Capacity planning

Implementing security

Freezing configurations

Notify, identify and resolve

Test fallback plans and start small

Finalizing the video delivery architecture

Google Cloud’s VPC and network are uniquely architected: the VPC is global, with subnets in each region, which makes it much simpler to build a multi-region architecture that improves your service availability.

Consider the following deployment design patterns while preparing to host a live event, which by nature will see a large number of concurrent connections and requests.

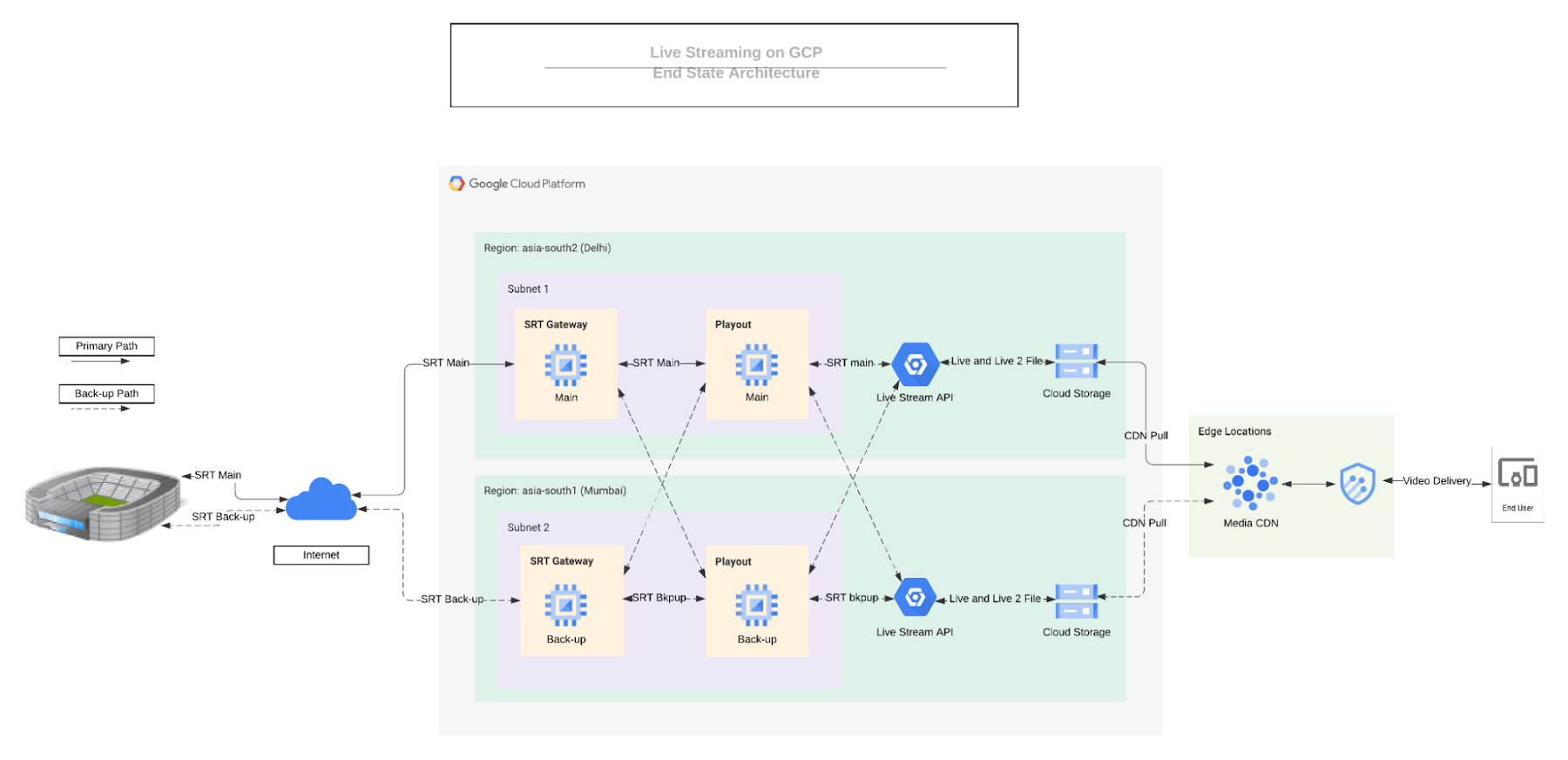

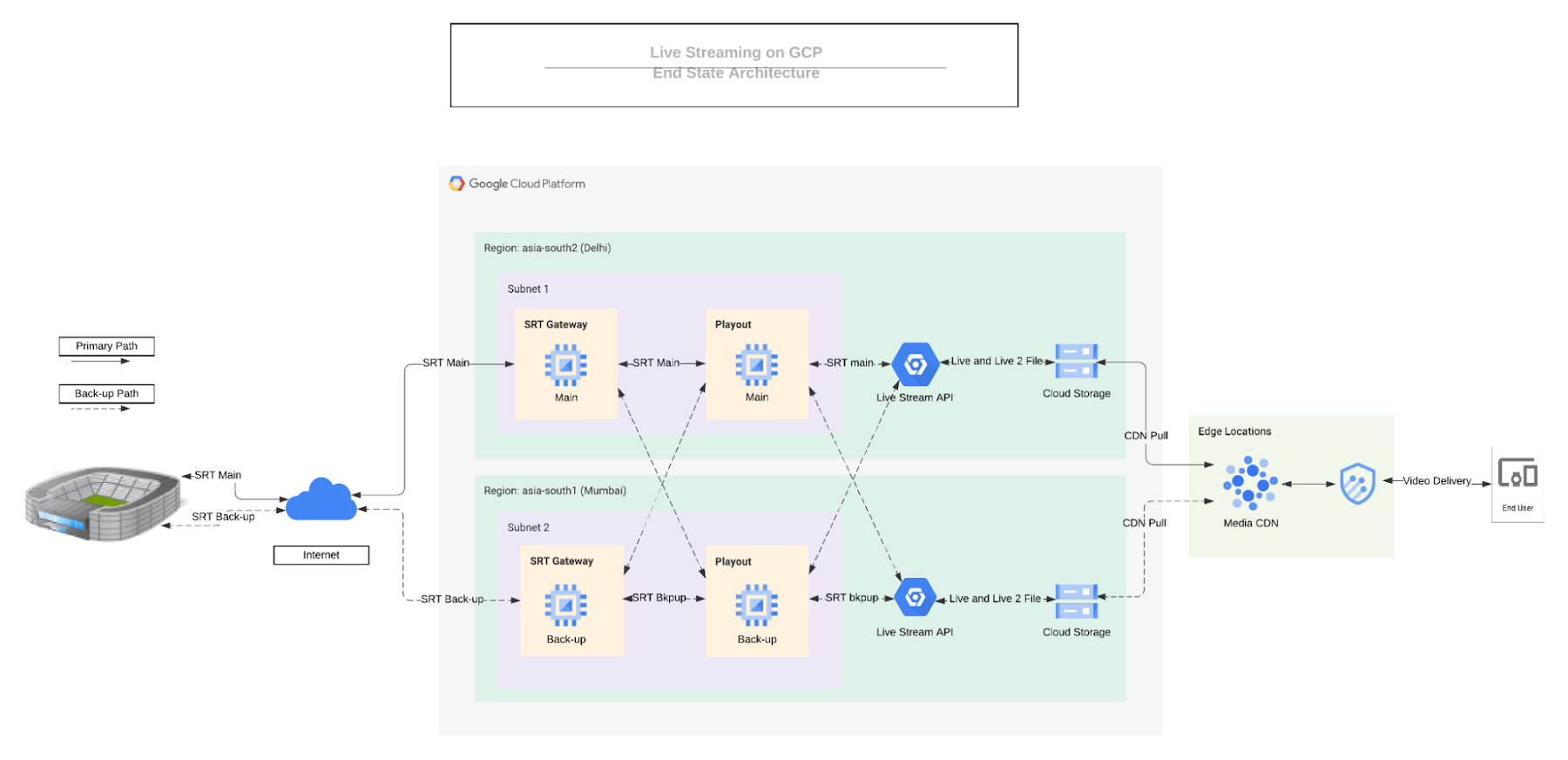

1. Redundant deployment for greater resiliency: while redundancy across two zones within the same region is always an option, an alternative is to deploy in two Google Cloud regions (similar to the deployment depicted in the diagram). The primary playout server (which switches between streams from the ground and the local studio, or inserts SCTE markers) and the Live Stream API are deployed in a Google Cloud region near your end users, with the backup in another nearby region (for example, primary in Mumbai and backup in Delhi).

2. Using SRT for ingest: SRT (Secure Reliable Transport) is more reliable than RTMP or RTP for a source stream coming from a teleport or directly from the stadium.

3. Using Google Cloud Storage as an origin: instead of just-in-time packaging (JITP) and a separate origin, use Google Cloud Storage (GCS) as the origin. Managing and scaling JITP is a challenge, especially in a high-concurrency environment. If infrastructure scaling does not keep pace with user growth, any sudden spike in requests to the origin may cause 5xx errors from the origin. GCS, on the other hand, scales well: the default bandwidth quota per project is 200 Gbps (egress to Media CDN and Cloud CDN is exempt from this quota), and there is a 300K soft limit for QPS. Both can be increased by raising a support ticket.

4. Media CDN for delivery: Media CDN is built on Google's existing infrastructure and network, developed over decades to deliver YouTube videos and large binaries, which supports millions of concurrent users daily on Google’s own applications. Along with the infrastructure (thousands of local metro deployments) and network advantage, Media CDN capabilities such as origin shielding, request coalescing, origin failover, retries and timeouts, and real-time logging bring unique advantages for managing high-concurrency events.

5. Cloud Armor: most of these sporting events have security as a legal requirement. Use Google Cloud Armor’s security capabilities to meet these requirements.

Capacity Planning

Planning capacity for Media CDN comes with two major requirements: peak egress rate and peak queries per second (QPS). Both of those requirements can be calculated based on configuration parameters and business data.

Peak egress rate: it can be calculated by multiplying a business assumption of the number of concurrent users (or historic data of concurrent users) by the average user playback bitrate.

For example, 5 Mn concurrent users x 1 Mbps average bitrate = 5,000,000 Mbps, which is 5 Tbps in decimal units (about 4.77 Tbps if converted with a factor of 1,024), so plan for roughly 5 Tbps peak egress rate.
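The egress arithmetic can be sketched in a few lines of Python. The function name and parameters are illustrative only and are not part of any Google Cloud API; the sketch assumes decimal units (1 Tbps = 1,000,000 Mbps).

```python
def peak_egress_tbps(concurrent_users: int, avg_bitrate_mbps: float) -> float:
    """Estimate peak egress in Tbps, assuming 1 Tbps = 1,000,000 Mbps."""
    total_mbps = concurrent_users * avg_bitrate_mbps
    return total_mbps / 1_000_000


# Example from the text: 5 million concurrent users at 1 Mbps average bitrate.
estimate = peak_egress_tbps(5_000_000, 1.0)
print(f"Estimated peak egress: {estimate:.2f} Tbps")
```

Swapping in historic concurrency data or a measured average bitrate gives a figure you can compare against the capacity approved for the event.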

Peak QPS: multiply the number of concurrent users by 2 and divide by the segment duration. At the end of each segment, the player requests a new manifest file and a new video segment, which is 2 requests. This multiplier only applies to HLS v3, where audio and video segments are muxed; for separate audio, video or subtitle tracks, adjust the multiplier accordingly.

For example, (5 Mn concurrent users x 2) / 6s segment duration ≈ 1.67 Mn QPS
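The QPS estimate can be sketched the same way. The function and its `requests_per_segment` parameter are illustrative names; the value of 4 used for demuxed audio and video is an assumption made for the example, not a figure from this article.

```python
def peak_qps(concurrent_users: int, segment_duration_s: float,
             requests_per_segment: int = 2) -> float:
    """Estimate peak requests per second hitting the CDN.

    requests_per_segment defaults to 2 (one manifest + one muxed segment).
    """
    if segment_duration_s <= 0:
        raise ValueError("segment_duration_s must be positive")
    return concurrent_users * requests_per_segment / segment_duration_s


# Example from the text: 5 million users, 6-second muxed segments.
muxed = peak_qps(5_000_000, 6)
# Assumed multiplier of 4 for separate audio and video playlists and segments.
demuxed = peak_qps(5_000_000, 6, requests_per_segment=4)
print(f"Muxed: {muxed:,.0f} QPS, demuxed (assumed x4): {demuxed:,.0f} QPS")
```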

Implementing Security

Most sports bodies have compliance requirements like token authentication to avoid pilferage, implementation of DRM, geo blocking, copyright issues, etc. Let’s discuss how to manage them without affecting your scaling needs.

Token authentication to avoid pilferage: Media CDN natively supports dual-token authentication, which validates requests with a short-duration token and a long-duration token. Customers who want to revoke specific signed URLs before the token expiration time, for example from bad actors who violate concurrency rules or attempt piracy, can do that using Network Actions (in private preview; reach out to your account team to get access).
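To illustrate the general idea behind expiring signed tokens, here is a minimal sketch using an HMAC over a URL prefix and an expiry time. This is not Media CDN's token format or signing scheme; the token layout, function names and secret are hypothetical and chosen only for the example.

```python
import base64
import hashlib
import hmac
import time
from typing import Optional


def sign_token(secret: bytes, url_prefix: str, expires_at: int) -> str:
    """Build an illustrative token: '<prefix-b64>~<expiry>~<hmac-hex>'."""
    prefix_b64 = base64.urlsafe_b64encode(url_prefix.encode()).decode()
    payload = f"{prefix_b64}~{expires_at}"
    mac = hmac.new(secret, payload.encode(), hashlib.sha256).hexdigest()
    return f"{payload}~{mac}"


def verify_token(secret: bytes, token: str, url: str,
                 now: Optional[int] = None) -> bool:
    """Accept only an untampered, unexpired token whose prefix covers the URL."""
    try:
        prefix_b64, expires_str, mac = token.split("~")
        expires_at = int(expires_str)
        url_prefix = base64.urlsafe_b64decode(prefix_b64.encode()).decode()
    except ValueError:
        return False  # malformed token
    payload = f"{prefix_b64}~{expires_str}"
    expected = hmac.new(secret, payload.encode(), hashlib.sha256).hexdigest()
    # Constant-time comparison avoids leaking how much of the MAC matched.
    if not hmac.compare_digest(mac.encode(), expected.encode()):
        return False
    current = int(time.time()) if now is None else now
    return current < expires_at and url.startswith(url_prefix)


# Hypothetical secret and URL prefix, valid for 60 seconds.
token = sign_token(b"demo-secret", "https://example.com/live/", int(time.time()) + 60)
print(verify_token(b"demo-secret", token, "https://example.com/live/seg_001.ts"))
```

A short expiry limits how long a leaked URL stays usable, which is why revocation ahead of expiry needs a separate mechanism such as the Network Actions feature mentioned above.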

Origin Protection: Media CDN can pull content from any internet-reachable HTTP(S) endpoint. In some cases, our customers might wish for authentication of requests going to origin, in order to only allow Media CDN to pull content, and prevent unauthorized access. Cloud Storage supports this through IAM permissions.

Implementation of DRM: as mentioned previously, managing and scaling JITP is challenging in a high-concurrency environment. If infrastructure scaling does not keep pace with user growth, any sudden spike in requests to the origin may cause 5xx errors from the origin. Some solutions offer DRM integration only with the packager, so JITP can become a bottleneck during ramp-up. However, Google Cloud’s Live Stream API and some of our ISV partner solutions support pre-packaging with DRM (this Live Stream API functionality is in private preview) and publishing to a GCS bucket, which makes operations far easier than managing the scaling issues of JITP. (You will have to consider scaling of DRM proxy servers; many of our partners have achieved this without any issues in the past.)

Geo Blocking and IP blocking: Cloud Armor edge security policies enable you to configure filtering and access control policies based on geo locations and IP address for content that is stored in cache.

Copyright claims: most public OTT platforms, like YouTube, provide a content identification system to easily identify and manage copyright-protected content. Please reach out to your content rights team and follow these platforms’ guidelines.

Freezing configurations

The following key elements play a major role in avoiding incidents. Configuring them properly is critical when planning for a live event.

Live Stream API configuration:

Segment duration: segment duration is a trade-off between the latency users perceive and their QoE. A shorter segment duration might increase rebuffering and reduce QoE because of congestion in end-user networks, which are beyond the control of the delivery architecture. A 4 to 6 second segment is usually a good balance between the two, and in some cases it also reduces delivery costs by reducing the number of HTTP requests served by the CDN.

Finalizing bitrate ladder: finalize your bitrate ladder to balance between quality and average bitrate knowing that a higher average bitrate will increase peak egress rate. You will have to balance between both to not exceed the approved capacity.

You can also remove the top bitrate during a live event if peak egress or peak concurrent users will exceed the approved number or your initial assumption. However, this is risky, and if you have not done it before, test it in the staging environment before doing it in live production. (To do this, your master manifest cache TTL should be less than your segment duration; otherwise there will be a quick spike in 4xx errors when you remove the top bitrate from the Live Stream API.)

Path and segment nomenclature: keep the encoder publishing path to the GCS bucket, the segment nomenclature, and the Vary, ETag and Last-Modified header behavior consistent between both origins (primary and backup) for origin failover.
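The effect of the bitrate ladder on peak egress, and the TTL precondition for removing the top bitrate, can be sketched as follows. The ladder, the share of viewers on each rendition, and the assumption that viewers of the removed rendition fall back to the next one are all hypothetical values chosen for illustration.

```python
from typing import Dict


def average_bitrate_mbps(ladder_mbps: Dict[str, float],
                         viewer_share: Dict[str, float]) -> float:
    """Weighted average bitrate; viewer_share values must sum to 1."""
    if abs(sum(viewer_share.values()) - 1.0) > 1e-6:
        raise ValueError("viewer shares must sum to 1")
    return sum(ladder_mbps[name] * share for name, share in viewer_share.items())


def manifest_ttl_allows_ladder_change(master_manifest_ttl_s: float,
                                      segment_duration_s: float) -> bool:
    """The master manifest cache TTL should be shorter than the segment duration."""
    return master_manifest_ttl_s < segment_duration_s


# Hypothetical ladder (Mbps) and viewer distribution.
LADDER = {"1080p": 5.0, "720p": 3.0, "480p": 1.5, "360p": 0.8}
SHARE_FULL = {"1080p": 0.2, "720p": 0.3, "480p": 0.3, "360p": 0.2}
# Assumption: viewers of the removed 1080p rendition drop to 720p.
SHARE_NO_TOP = {"720p": 0.5, "480p": 0.3, "360p": 0.2}

USERS = 5_000_000
full_tbps = USERS * average_bitrate_mbps(LADDER, SHARE_FULL) / 1_000_000
reduced_tbps = USERS * average_bitrate_mbps(LADDER, SHARE_NO_TOP) / 1_000_000
print(f"Full ladder: {full_tbps:.2f} Tbps, without top rendition: {reduced_tbps:.2f} Tbps")
print("TTL check (2s TTL, 6s segments):", manifest_ttl_allows_ladder_change(2, 6))
```

Running the numbers ahead of the event shows how much headroom removing the top rendition would actually buy.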

Media CDN Configuration:

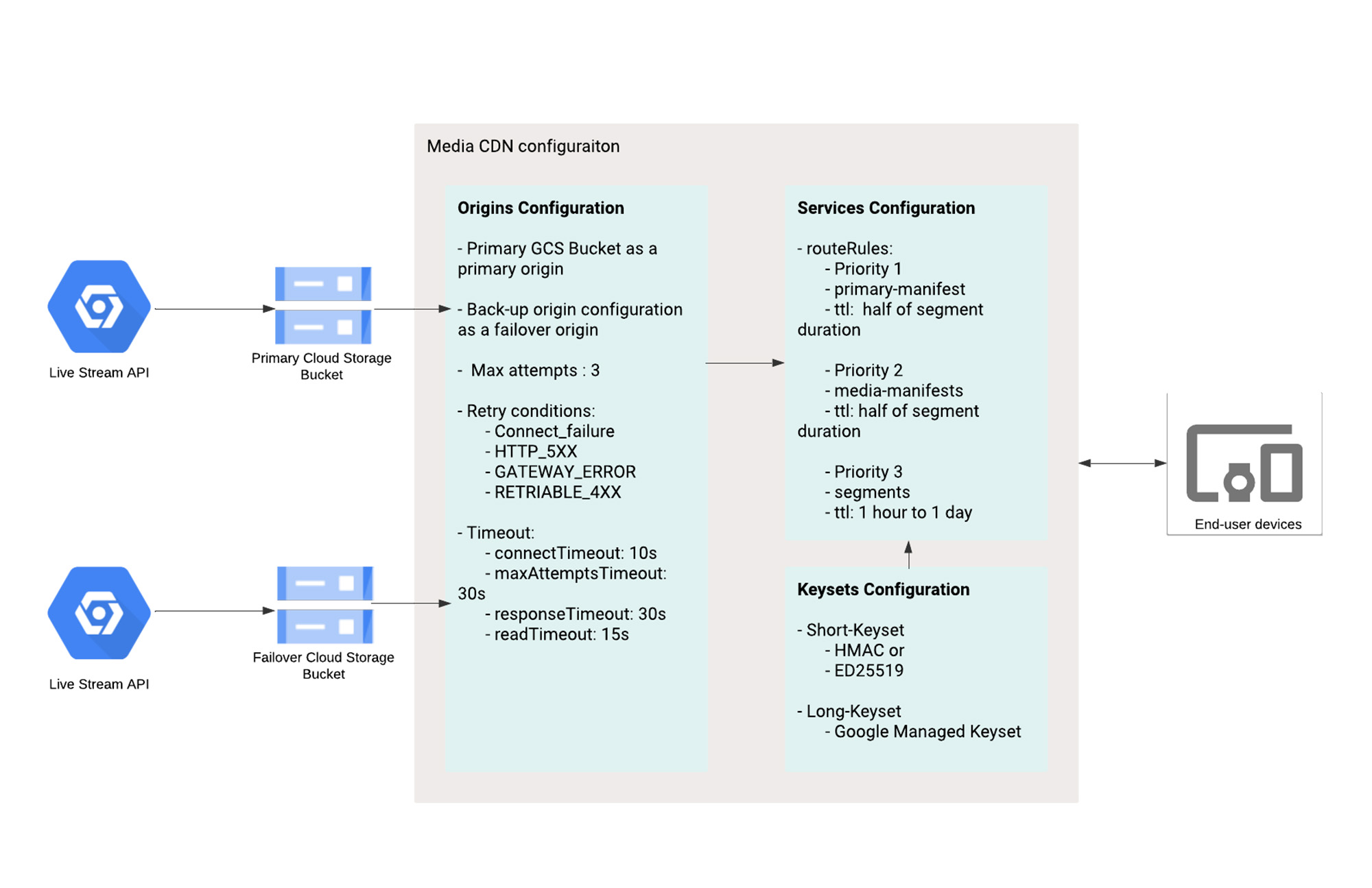

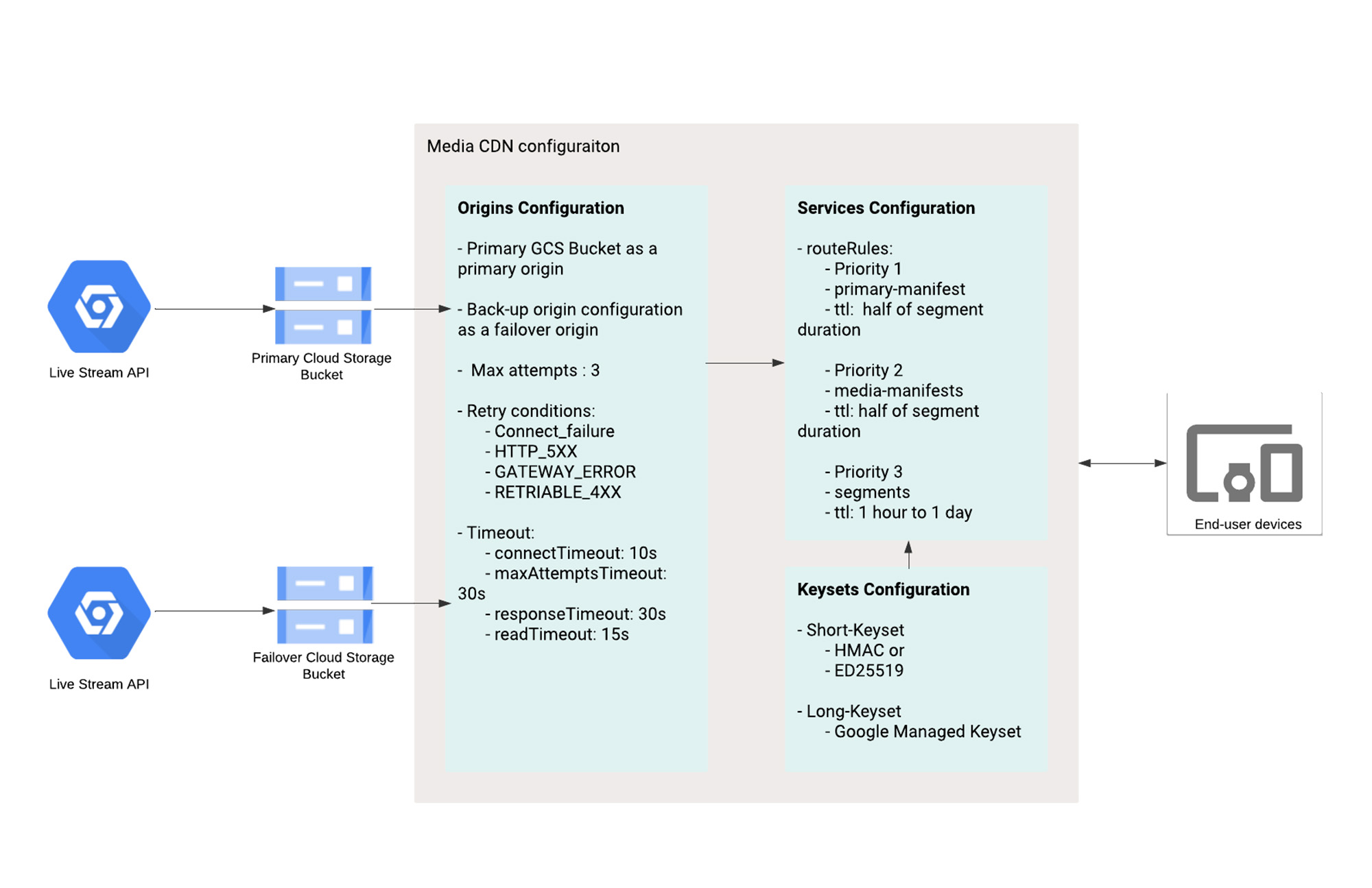

The next important part is to configure Media CDN to manage failovers and provide maximum cache performance.

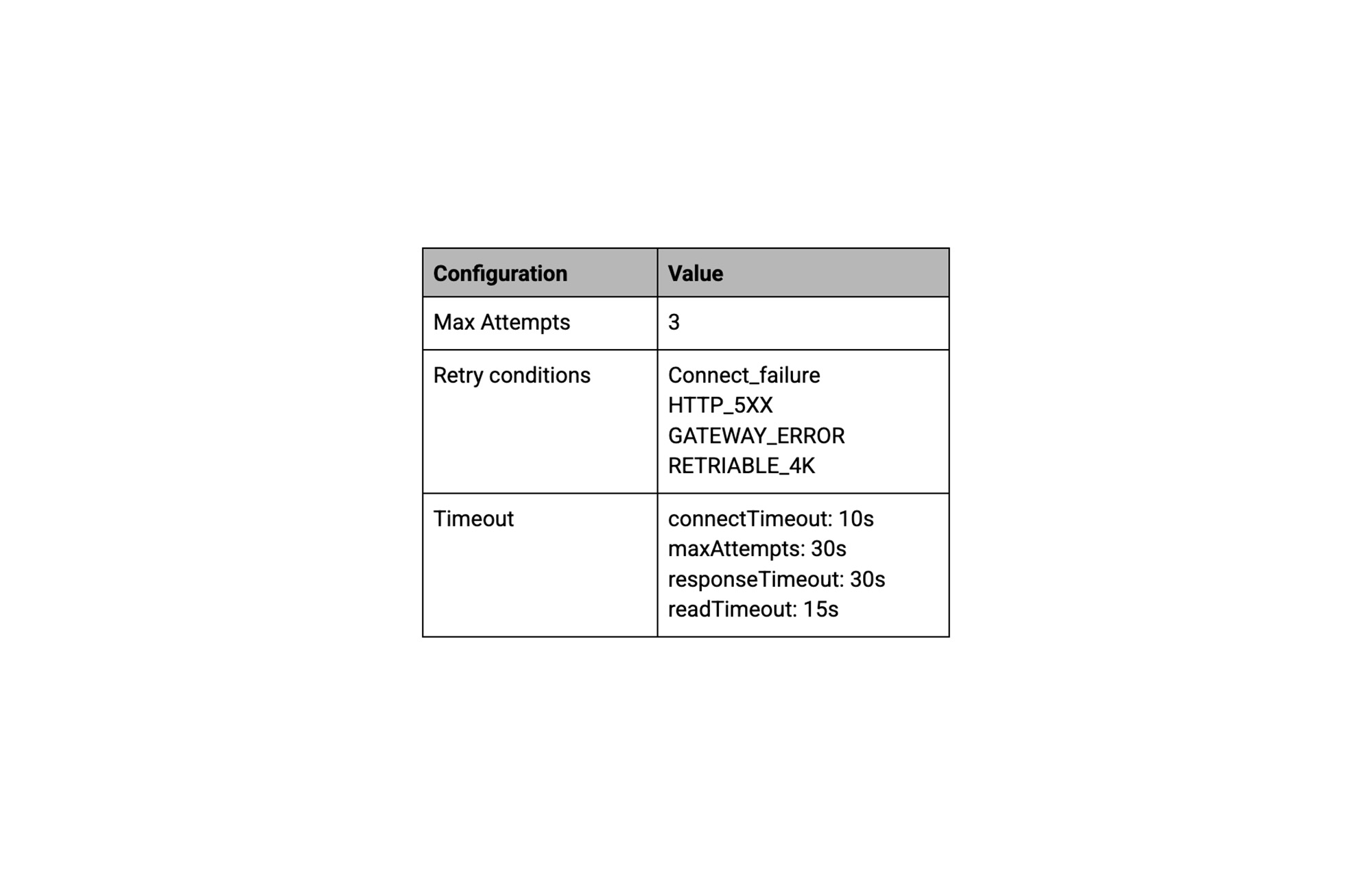

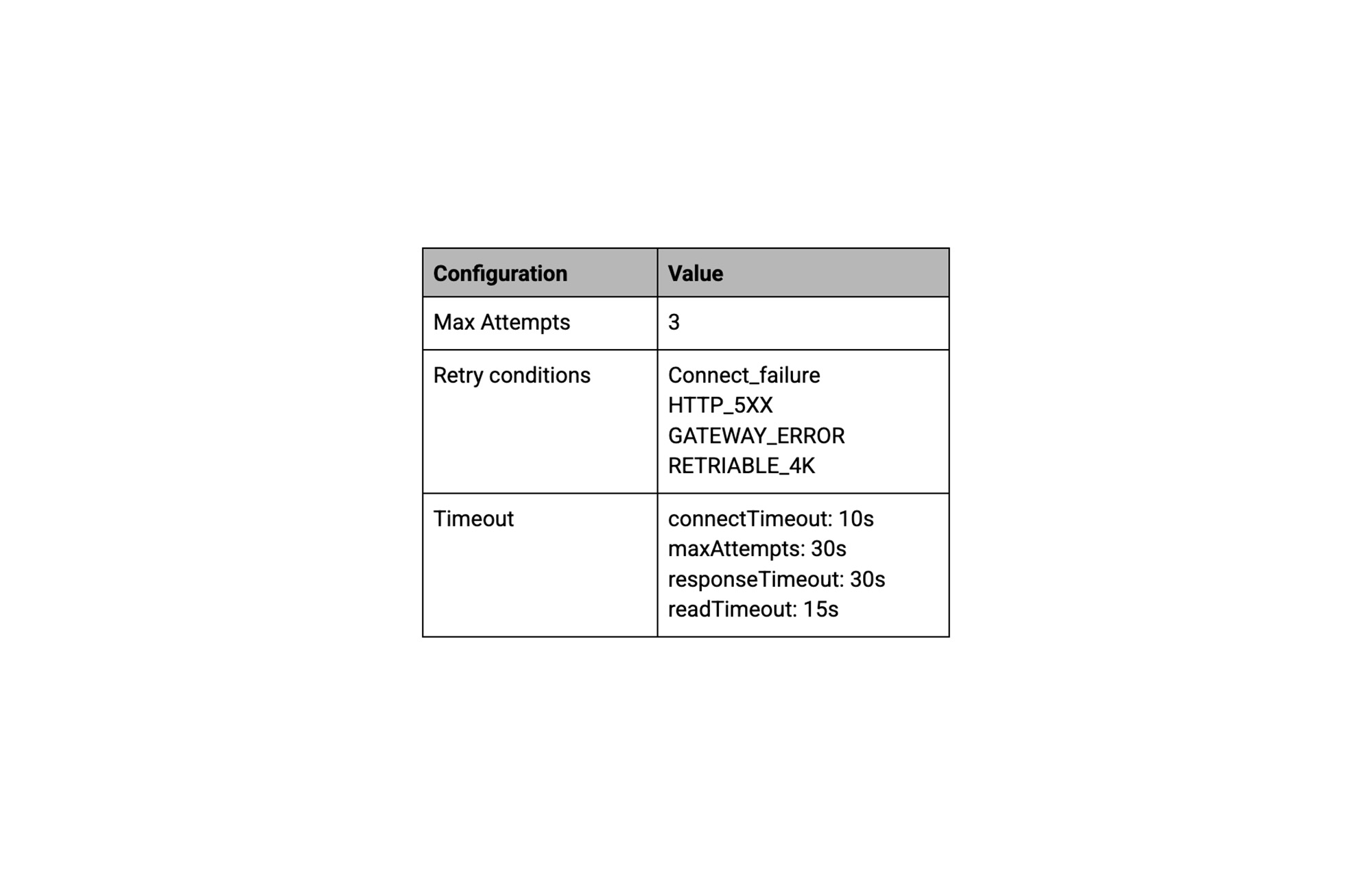

Origin failover: configure the primary origin and the failover origin in the Media CDN origins configuration, and define the following origin failover configuration:

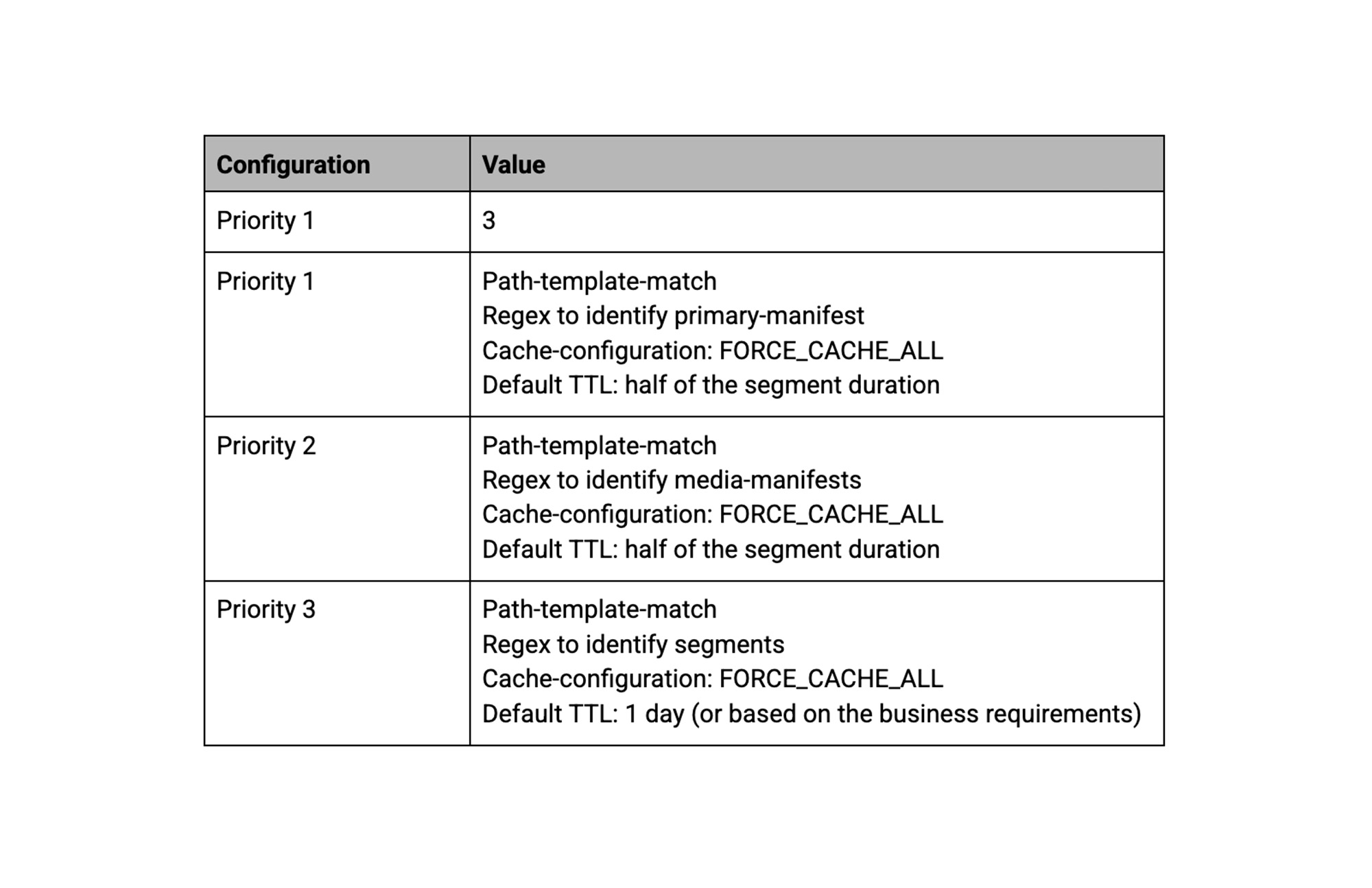

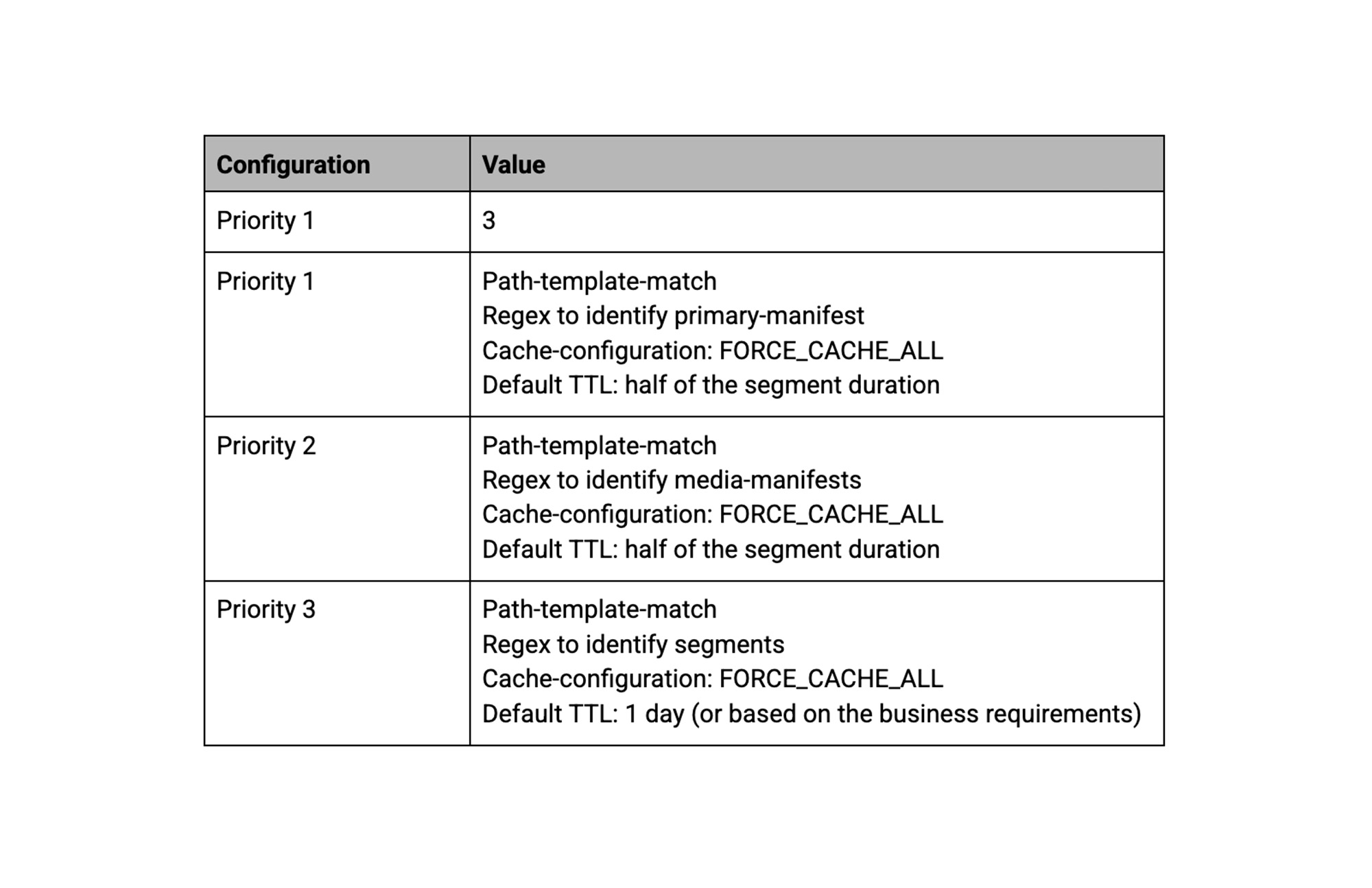

Service configuration: in the Media CDN service configuration, define the following cache modes and TTLs for better caching, and for the flexibility to modify the bitrate ladder on the fly if required.

Service Configuration routeRules:

Setting up observability and preparing for the event

This section has 3 parts: notify, identify and resolve.

Notify:

Google Cloud provides alerting to email, SMS, Slack, Pub/Sub, PagerDuty and more via notification channels. Configuring the correct alerting policies is important so that you are notified about any incident quickly.

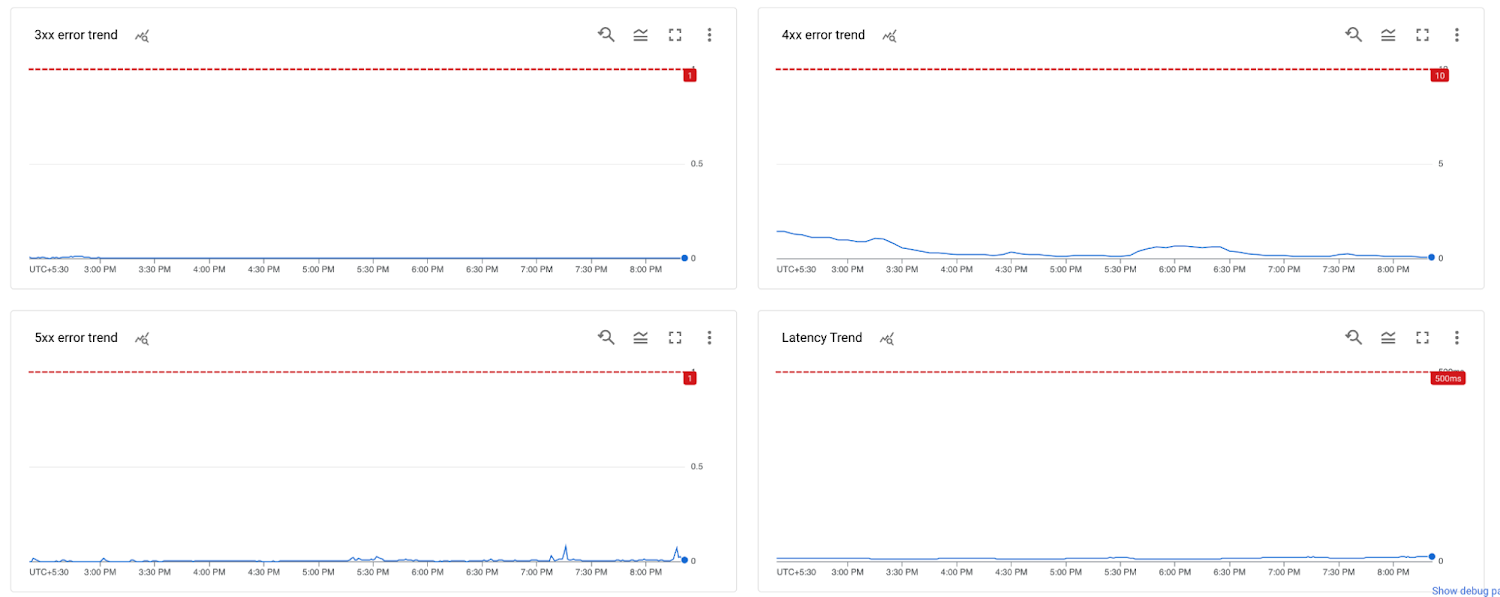

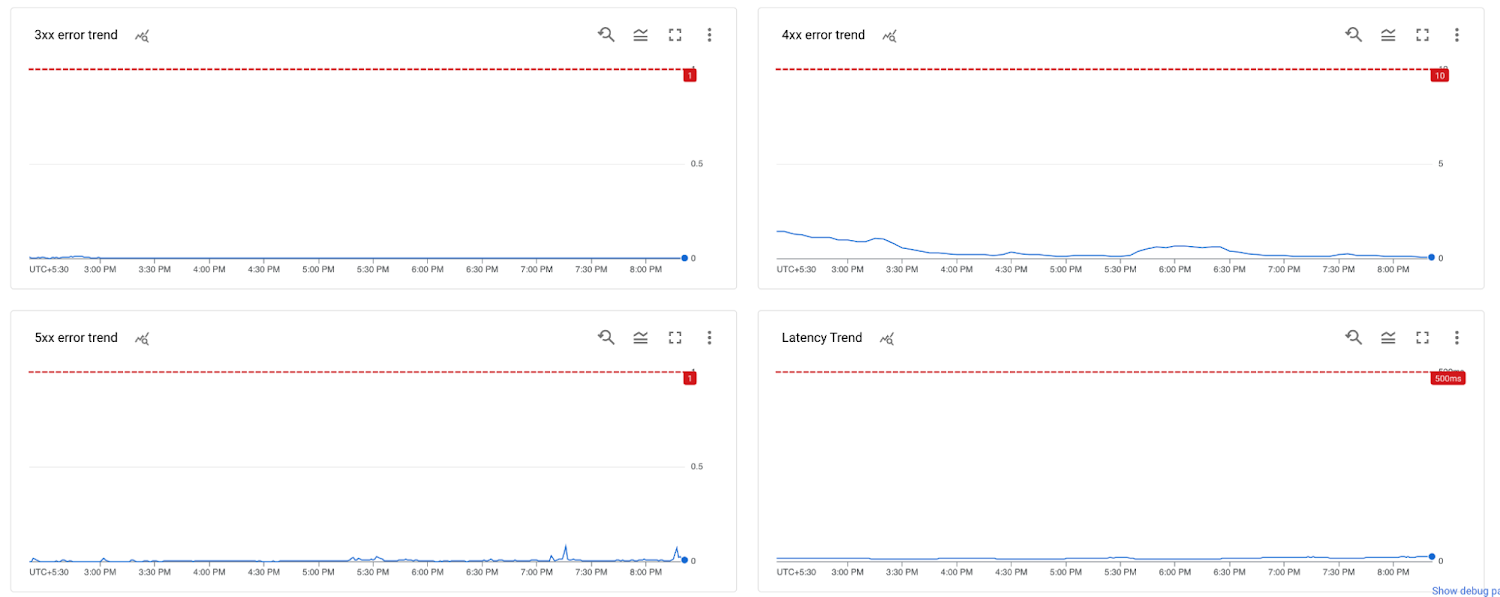

In most cases, trends in the following 4 metrics will help you identify incidents at an early stage.

5xx errors should be less than 1% of total requests

3xx responses should be less than 1% of total requests (unless you have redirects configured in your service)

4xx errors should be less than 10% of total requests (these can be users requesting URLs that are not available, or requests with expired signed tokens, such as hacking attempts)

P95 latency should be less than 1 second (depends on your segment duration and average playback bitrate)
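The four thresholds above can be expressed as a small check, for example when post-processing exported metrics. This is a sketch, not a Cloud Monitoring alerting policy; the `TrafficWindow` type and its field names are hypothetical.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class TrafficWindow:
    """Request counts and latency observed over one evaluation window."""
    total_requests: int
    responses_5xx: int
    responses_4xx: int
    responses_3xx: int
    p95_latency_s: float


def breached_thresholds(w: TrafficWindow,
                        redirects_configured: bool = False) -> List[str]:
    """Return the names of the thresholds from the text that this window breaches."""
    breaches = []
    if w.total_requests > 0:
        if w.responses_5xx / w.total_requests >= 0.01:
            breaches.append("5xx")
        # 3xx is only suspicious when the service has no redirects configured.
        if not redirects_configured and w.responses_3xx / w.total_requests >= 0.01:
            breaches.append("3xx")
        if w.responses_4xx / w.total_requests >= 0.10:
            breaches.append("4xx")
    if w.p95_latency_s >= 1.0:
        breaches.append("p95_latency")
    return breaches


window = TrafficWindow(total_requests=100_000, responses_5xx=1_500,
                       responses_4xx=2_000, responses_3xx=100, p95_latency_s=0.4)
print(breached_thresholds(window))
```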

Google Cloud alerting supports ratio-based alerts using Monitoring Query Language (MQL), which you can use to configure alerts for 5xx, 4xx and 3xx responses.

You can configure alerts for latency spikes using the console or with MQL.

You can also use these MQL queries to create a monitoring dashboard, which helps your monitoring team track trends proactively and report an issue or open a support case if they find one.

Identify

Media CDN logs each HTTP request between the client and edge to Cloud Logging. Media CDN brings a unique advantage by delivering logs in near real-time.

You can use Cloud Logging’s Logs Explorer along with the Logging query language to quickly dive into Media CDN logs and identify problem areas.

At large volumes of requests, you might prefer to sample logs rather than capture a log for every request, and rely on metrics for proactive monitoring and investigation.

Also, to filter logs before storing them (for example, capturing only pertinent fields to reduce the total log volume that you need to store and query), you can configure log exclusion rules, which allow you to define a query (filter) that includes or excludes fields prior to storage.

You can also set up multiple filters—for example, capturing all cache miss requests, or all requests for a specific hostname, and only taking a sample of all logs as mentioned above.

Resolve

From past experience, many issues are configuration related, and a good engineering and DevOps team will address them in house. But it’s also essential to have Planned Event Support to cover the critical phases of high-traffic events. Google Cloud’s Planned Event Support offers the following services and more:

Before your event, Google technical experts conduct an Architecture Essentials Review to identify and solve potential issues in advance, like quota or storage capacity.

During your event, you receive an accelerated response time for P1 cases, with First Meaningful Response in 15 minutes.

After your event concludes, you receive a Performance Summary Report, which contains a retrospective analysis of how your cloud environment performed during the event. The report also identifies opportunities to improve future events.

Please contact your account team for more information about planned event support.

Test fallback plans and start small

It’s time to test end to end and start with relatively small live events.

Before you start with small events, test the following and ensure nothing breaks.

Test failover of each component in the primary chain, one by one, and check that playback is not interrupted, thanks to the origin failover configuration of Media CDN.

Try removing the top bitrate from the primary encoder. This may result in a spike in 4xx errors, because users with a previously downloaded master manifest will keep requesting the top profile, get 404 errors and then try a lower bitrate profile. (The player needs to handle this gracefully.)
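The graceful fallback expected from the player can be sketched as a loop that steps down the ladder when a rendition returns 404. This is only an illustration of the behavior to test for; real players implement their own logic, and the `fetch_status` callable and rendition names here are hypothetical.

```python
from typing import Callable, List, Optional


def pick_available_rendition(renditions: List[str],
                             fetch_status: Callable[[str], int],
                             start_index: int = 0) -> Optional[str]:
    """Step down the ladder (highest bitrate first) until a rendition returns 200.

    Only 404 triggers a step down; any other error stops the search,
    since this sketch does not model retries or origin failover.
    """
    for name in renditions[start_index:]:
        status = fetch_status(name)
        if status == 200:
            return name
        if status != 404:
            break
    return None


# Simulate an origin where the top rendition was removed mid-event.
LADDER_ORDER = ["1080p", "720p", "480p"]


def fake_fetch(name: str) -> int:
    return 404 if name == "1080p" else 200


print(pick_available_rendition(LADDER_ORDER, fake_fetch))
```

A staging test can assert the same behavior against the real player: after the top profile disappears, playback should continue on the next rendition without user-visible errors.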

Google Cloud’s CDN portfolio

Google Cloud’s end-to-end content delivery platform supports a wide range of use cases such as web acceleration, gaming, social networks, education, video on demand and live streaming. The platform is further augmented with additional capabilities such as:

The HTTP/3 and QUIC protocols

Ecosystem integration like the Transcoder API, or the Live Stream API

Dynamic ad insertion via the Video Stitcher API, or using GAM integration with the Google CDN

Real time logging and monitoring via Google Cloud Operations Suite

WAF security with Cloud Armor

Read our customer case studies here:

Inshorts : Delivering essential real-time video content to India’s farthest reach with Media CDN

Sharechat : Building a scalable data-driven social network for non-English speakers globally

Tokopedia : Scaling to accommodate major shopping events with Google Kubernetes Engine

Wix : Empowering everyone to create the advance website they need from design to development

Nordeus: Driving new gaming experiences for millions of users with a scalable infrastructure