Google Cloud networking in depth: Cloud Load Balancing deconstructed

Babi Seal

Product Manager, Google Cloud

Google has eight services that serve over one billion users every day. To offer the best availability and user experience for these services, we at Google engineered load-balancing infrastructure that scales on demand, uses resources efficiently, is secure, and is optimized for latency. This same load-balancing infrastructure is what we provide to you for your applications, in the form of the Google Cloud Load Balancing family. Unlike traditional load-balancing solutions, each of our load-balancing solutions is designed as a large-scale, distributed, software-defined system that scales out and is highly resilient.

In this blog post, we will cover our portfolio of load-balancing offerings. We will start with our internet-facing load balancers that deliver Google’s massive edge-as-a-service to you via Network Load Balancing and Global Load Balancing. We’ll present the benefits of container-native load balancing and show you how to secure the edge and optimize for latency and cost. Since many of you have services that are internal to Google Cloud, we’ll then cover your Internal Load Balancing options. We will wrap up by showing you how we can help you grow your cloud footprint and manage multi-cloud and heterogeneous services with internal layer-7 load balancing and Traffic Director for global service mesh.

Maglev for fast and reliable Network Load Balancing

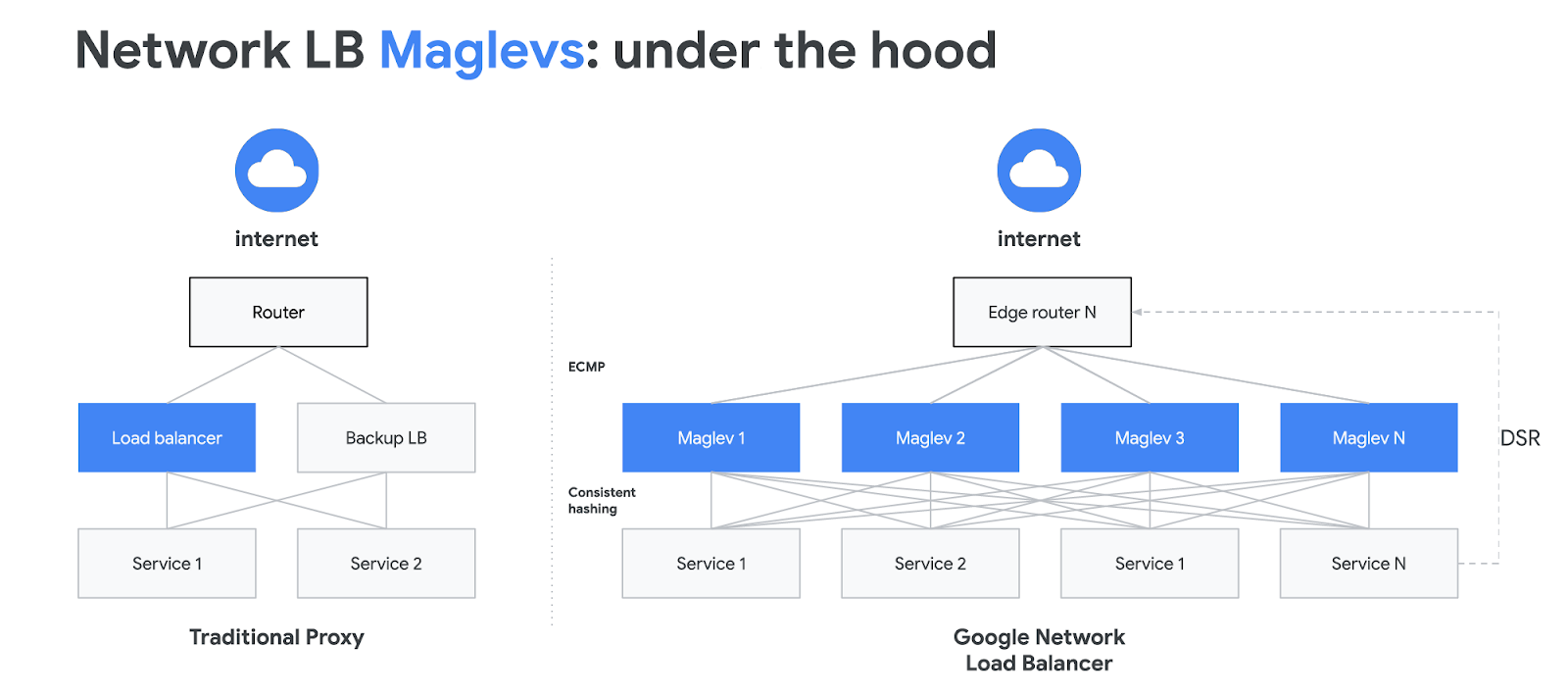

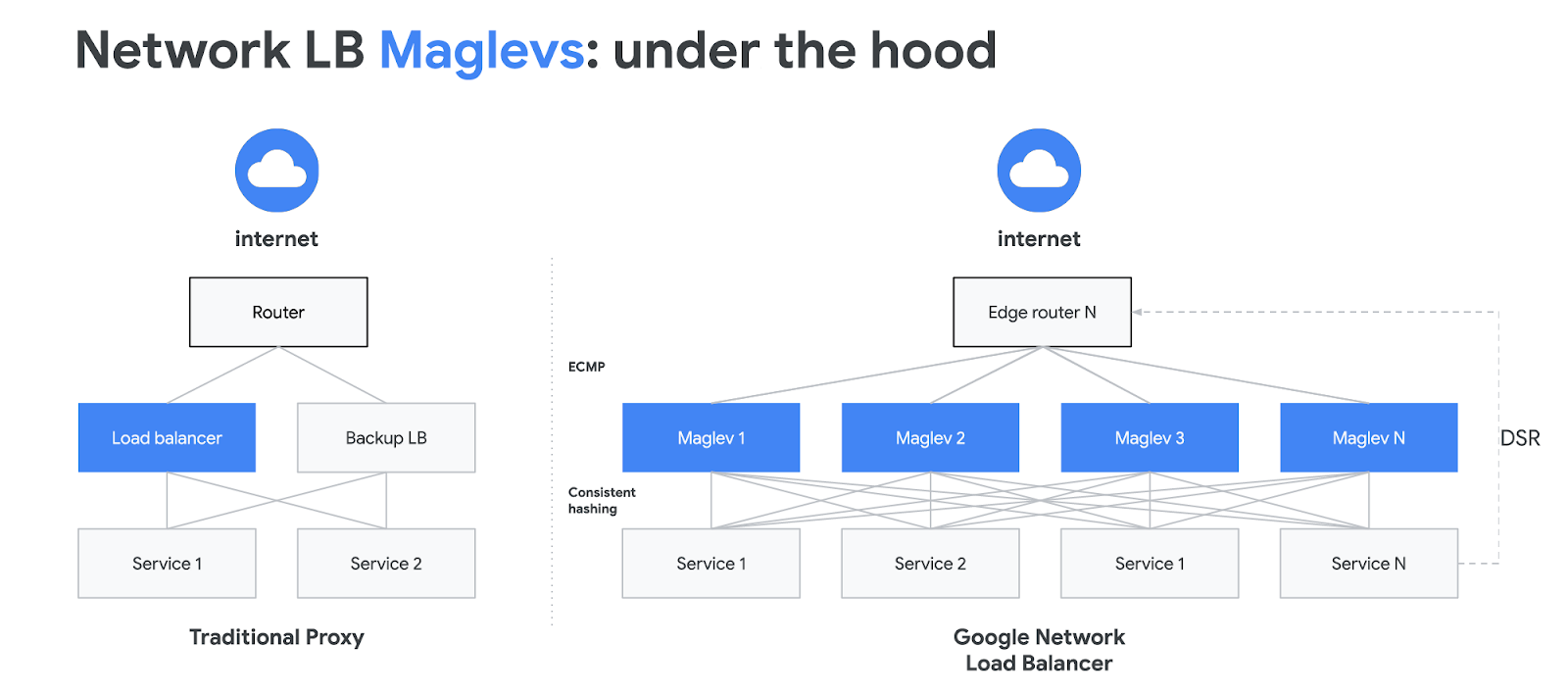

For load-balancing external layer-4 TCP/UDP traffic, we offer Network Load Balancing built using our Maglevs. In production since 2008, Maglevs load balance all traffic that comes into our data centers, and distribute traffic to front-end engines at our network edges. The layer-4 traffic is distributed to a set of regional backend instances using a 5-tuple hash consisting of the source and destination IP address, protocol and source and destination port.
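To make the 5-tuple idea concrete, here is a minimal sketch of hashing a flow to a backend. It is an illustration only: the `FiveTuple` type, the `pick_backend` function, the use of SHA-256 and the simple modulo step are all assumptions for this example, not a description of how Network Load Balancing is implemented.

```python
import hashlib
from typing import List, NamedTuple


class FiveTuple(NamedTuple):
    """The five fields that identify a layer-4 flow."""
    src_ip: str
    dst_ip: str
    protocol: str
    src_port: int
    dst_port: int


def pick_backend(flow: FiveTuple, backends: List[str]) -> str:
    """Map a flow to one backend by hashing its 5-tuple (hypothetical helper)."""
    if not backends:
        raise ValueError("at least one backend is required")
    # Serialize the tuple into a stable byte string, then hash it.
    key = "|".join(str(field) for field in flow).encode()
    digest = hashlib.sha256(key).digest()
    # Reduce the first 8 bytes of the digest to an index into the backend list.
    index = int.from_bytes(digest[:8], "big") % len(backends)
    return backends[index]


backends = ["instance-1", "instance-2", "instance-3"]
flow = FiveTuple("203.0.113.7", "198.51.100.10", "TCP", 51514, 443)
chosen = pick_backend(flow, backends)
```

Because the hash depends only on the 5-tuple, every packet of the same connection lands on the same backend, while different connections spread across the set.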

Maglev was a break from traditional load balancers in that it is software-based and operates in an active-active scale-out architecture. With Maglev Consistent Hashing, Maglev-based load balancers evenly distribute traffic over hundreds of backends as well as minimize the negative impact of unexpected faults on connection-oriented protocols. Network Load Balancing is a great solution for lightweight L4-based load balancing where you want to preserve the client IP address all the way to the backend instance and also perform TLS termination on these instances.
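The sketch below shows the general shape of Maglev-style consistent hashing, following the lookup-table population scheme from the published Maglev design: each backend gets its own permutation of table slots, and backends take turns claiming their next free slot. The hash function (SHA-256 with a salt), the table size of 251 and the function names are assumptions chosen for illustration, not production values.

```python
import hashlib
from typing import List, Optional


def _hash(name: str, salt: str) -> int:
    """Stand-in hash; salted SHA-256 is an assumption for this sketch."""
    return int.from_bytes(hashlib.sha256((salt + name).encode()).digest()[:8], "big")


def build_lookup_table(backends: List[str], table_size: int = 251) -> List[str]:
    """Fill a lookup table; table_size must be prime so every permutation covers all slots."""
    if not backends:
        raise ValueError("at least one backend is required")
    n = len(backends)
    offsets = [_hash(b, "offset") % table_size for b in backends]
    skips = [_hash(b, "skip") % (table_size - 1) + 1 for b in backends]
    next_pos = [0] * n  # how far each backend has walked its permutation
    table: List[Optional[str]] = [None] * table_size
    filled = 0
    while True:
        for i in range(n):
            # Walk backend i's permutation until an unclaimed slot is found.
            slot = (offsets[i] + next_pos[i] * skips[i]) % table_size
            while table[slot] is not None:
                next_pos[i] += 1
                slot = (offsets[i] + next_pos[i] * skips[i]) % table_size
            table[slot] = backends[i]
            next_pos[i] += 1
            filled += 1
            if filled == table_size:
                return table  # type: ignore[return-value]


def lookup(table: List[str], flow_key: str) -> str:
    """Pick the backend for a flow by hashing its key into the table."""
    return table[_hash(flow_key, "flow") % len(table)]


backends = ["backend-a", "backend-b", "backend-c"]
table = build_lookup_table(backends)
counts = {b: table.count(b) for b in backends}
```

Because backends claim slots in turn, the slot counts differ by at most one, which is what gives the even distribution. When a backend is removed and the table is rebuilt, most slots tend to keep their previous owner, so most existing connections keep hashing to the same backend.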

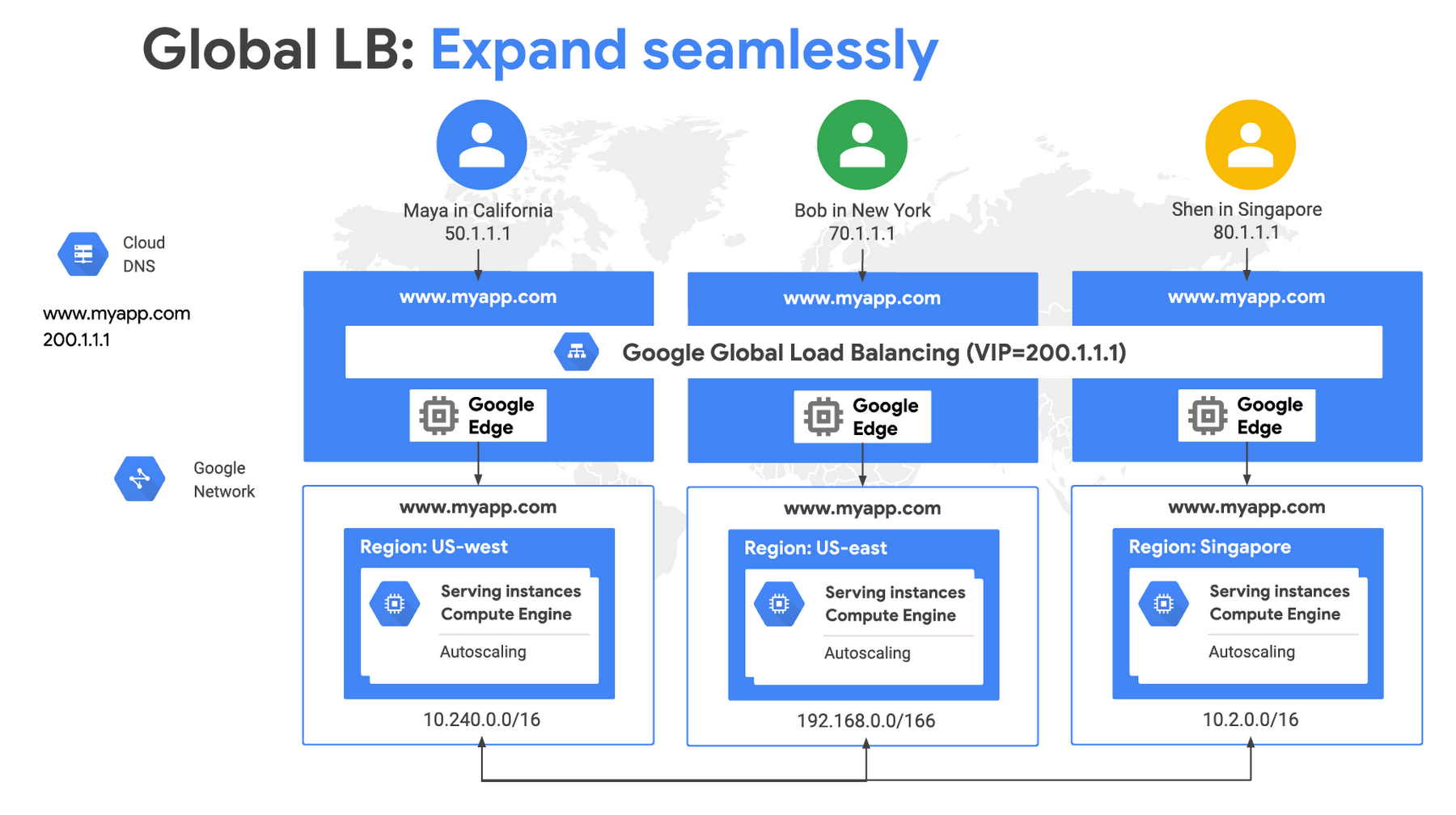

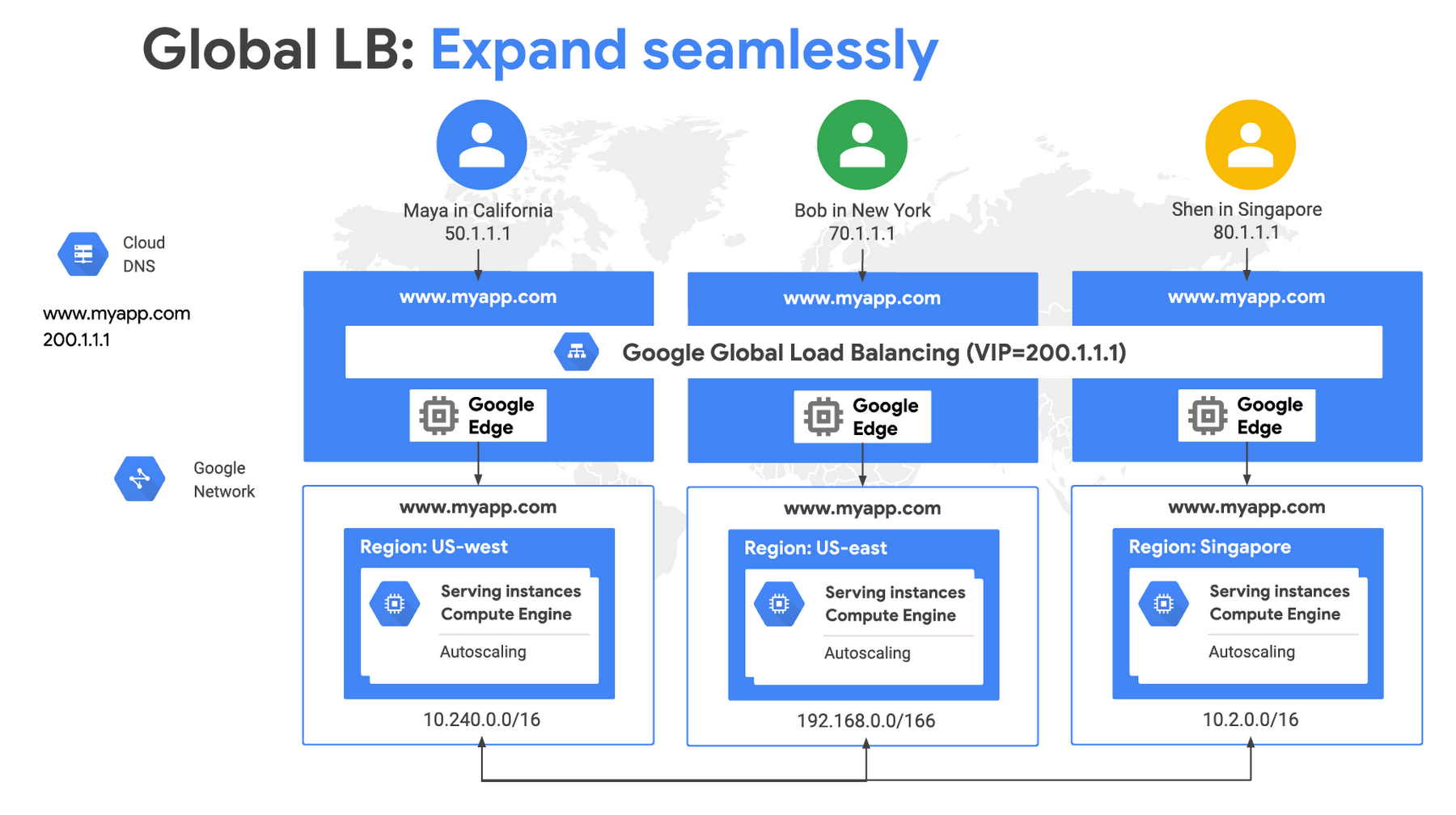

Global Load Balancing for a single VIP, global reach

For our global load-balancing solution, we pushed load balancing to the edge of Google’s global network to front the global load-balancing capacity behind a single anycast virtual IPv4 or IPv6 address. You can deploy capacity in multiple regions without having to modify DNS entries or add new load balancer front-end IP addresses (VIPs) for new regions. You don’t have to deal with the challenges of traditional DNS-based load balancers, such as clients caching IP addresses or regionally siloed resources that result in sub-optimal load balancing and utilization of backend instances.

With global load balancing, you get cross-region failover and overflow. If instances in the region closest to the end user fail or run out of capacity, Global LB’s traffic distribution algorithm automatically directs traffic to the next closest instance with available capacity.
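The failover and overflow behavior can be pictured with a small sketch. This is a simplified model, not the actual traffic distribution algorithm: the `Region` fields, the round-trip-time measure of "closest" and the `pick_region` function are hypothetical names introduced for this example.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Region:
    name: str
    rtt_ms: float   # assumed latency from the end user to this region
    healthy: bool   # False models a regional failure
    capacity: int   # assumed maximum requests per second
    load: int       # current requests per second


def pick_region(regions: List[Region]) -> Optional[str]:
    """Return the closest region that is healthy and still has spare capacity."""
    candidates = [r for r in regions if r.healthy and r.load < r.capacity]
    if not candidates:
        return None
    return min(candidates, key=lambda r: r.rtt_ms).name


regions = [
    Region("us-east1", rtt_ms=12.0, healthy=True, capacity=1000, load=1000),  # full
    Region("us-central1", rtt_ms=30.0, healthy=True, capacity=1000, load=400),
    Region("europe-west1", rtt_ms=95.0, healthy=True, capacity=1000, load=100),
]
overflow_target = pick_region(regions)
```

In this example the closest region is at capacity, so traffic overflows to the next closest one. Marking a region unhealthy has the same effect, which models failover.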

Global LB delivers first-class support for both VMs and containers. For containers, we built an abstraction called Network Endpoint Groups (NEGs); a NEG is essentially a group of IP address and port pairs. NEGs enable you to directly specify a container endpoint, as opposed to first directing traffic to the node on which it resides and then redirecting it to the container using kube-proxy. As a result, you can deliver lower latency, greater throughput and higher-fidelity health checks for your services using NEGs.
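As a rough mental model, a NEG can be thought of as a set of (IP address, port) pairs with health tracked per endpoint rather than per node. The class and method names below are hypothetical and exist only for this illustration; they are not the Google Cloud API.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Tuple

Endpoint = Tuple[str, int]  # (IP address, port) of a single container


@dataclass
class NetworkEndpointGroup:
    """Toy model of a NEG: endpoints mapped to their last known health."""
    name: str
    endpoints: Dict[Endpoint, bool] = field(default_factory=dict)

    def add(self, ip: str, port: int) -> None:
        # New endpoints are assumed healthy until a health check says otherwise.
        self.endpoints[(ip, port)] = True

    def set_health(self, ip: str, port: int, healthy: bool) -> None:
        self.endpoints[(ip, port)] = healthy

    def healthy_endpoints(self) -> List[Endpoint]:
        return sorted(ep for ep, ok in self.endpoints.items() if ok)


neg = NetworkEndpointGroup("web-neg")
neg.add("10.8.0.5", 8080)   # two containers that may share a node
neg.add("10.8.0.6", 8080)
neg.set_health("10.8.0.6", 8080, False)
targets = neg.healthy_endpoints()
```

Because each container is addressed and health-checked individually, the load balancer can skip one unhealthy container without taking the whole node out of rotation.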

Secure the edge

To secure your service, we recommend taking a defense-in-depth approach. We also recommend that you deploy TLS for data privacy and integrity purposes. We do not charge extra for encrypted vs. unencrypted traffic. We offer HTTPS and SSL proxy in our global load-balancing family. We also offer Managed Certificates to reduce the work of procuring certs and managing their lifecycle. With SSL policies, you can specify the minimum TLS version and SSL features that you wish to enable on your HTTP(S) and SSL proxy load balancers. We also offer multiple pre-configured profiles, including a custom one that lets you specify the ciphers and SSL features you want to use.
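To show what a minimum TLS version and a restricted cipher list mean at a TLS endpoint, here is an analogy using Python's standard `ssl` module. It is not the SSL policies feature or its API; the specific version floor and cipher string are assumptions picked for the example.

```python
import ssl

# Build a server-side TLS context, as a stand-in for a load balancer front end.
context = ssl.SSLContext(ssl.PROTOCOL_TLS_SERVER)

# Refuse handshakes below TLS 1.2 (the "minimum TLS version" idea).
context.minimum_version = ssl.TLSVersion.TLSv1_2

# Restrict TLS 1.2 cipher suites to ECDHE key exchange with AES-GCM
# (the "custom profile" idea; the exact cipher string is an assumption).
context.set_ciphers("ECDHE+AESGCM")

enabled = [c["name"] for c in context.get_ciphers()]
```

A client that only supports older protocol versions or ciphers outside the list would fail the handshake against such an endpoint, which is the trade-off a stricter policy makes.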

With Google’s global network and global load-balancing, Google is able to mitigate and dissipate layer-3 and layer-4 volumetric attacks. To protect against application layer attacks, we recommend using Cloud Armor attached to your Global HTTP(S) load balancer. Use this in concert with Identity Aware Proxy to authenticate users and authorize access to your backend services.

Optimize for latency and cost

Make the web faster

We spend a lot of time at Google working to make the web faster. QUIC is a UDP-based encrypted transport optimized for HTTPS, and HTTP/2 is foundational for gRPC support. Google Cloud Load Balancing supports QUIC traffic to the load balancer. It also supports multiplexed HTTP/2 streams to the load balancer, and then load balances those HTTP/2 streams to the backends.

Google Cloud CDN runs on our globally distributed edge points, so you can reduce network latency when serving website content, offload content origins and reduce serving costs. Just set up HTTP(S) Load Balancing and then enable CDN by clicking a single checkbox.

Optimize for performance or cost with Network Tiers

With Network Tiers, you can optimize your workload for performance with Premium Tier, which takes advantage of Google’s performant network, or optimize for cost with Standard Tier, where your return traffic travels over regular ISP networks, as it does with other public clouds, but incurs lower egress costs.

Internal Load Balancing for private services

Many Google Cloud customers have private workloads that need to be protected from the public internet. Those services need to scale and grow behind a private VIP that is accessible only by internal instances. For such users we offer regional layer-4 Internal Load Balancing based on our Andromeda network virtualization stack. Similar to our HTTP(S) Load Balancer and Network Load Balancer, Internal L4 Load Balancing is neither a hardware appliance nor an instance-based solution, and can support as many connections per second as you need since there’s no load balancer in the path between your client and backend instances.

What’s next?

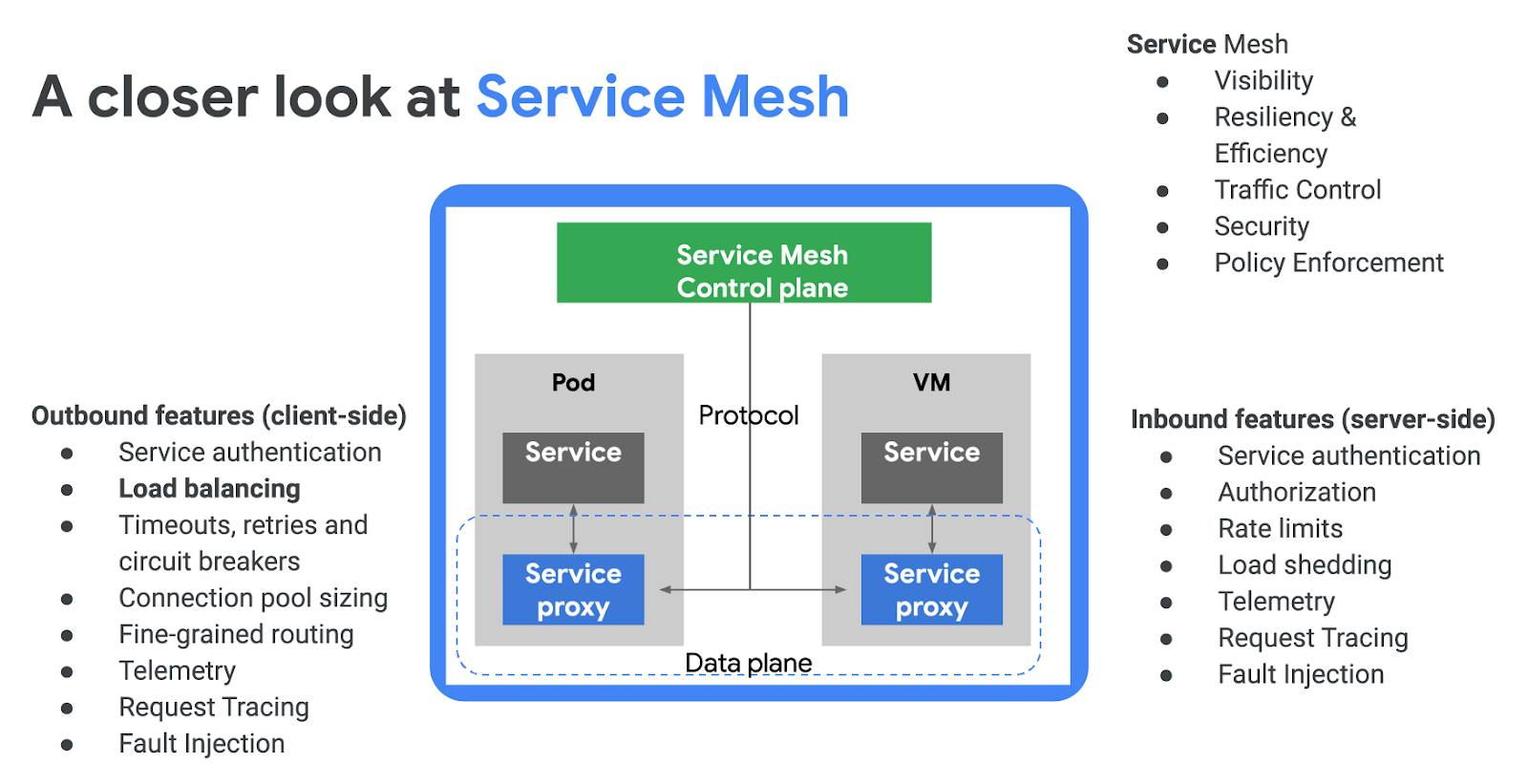

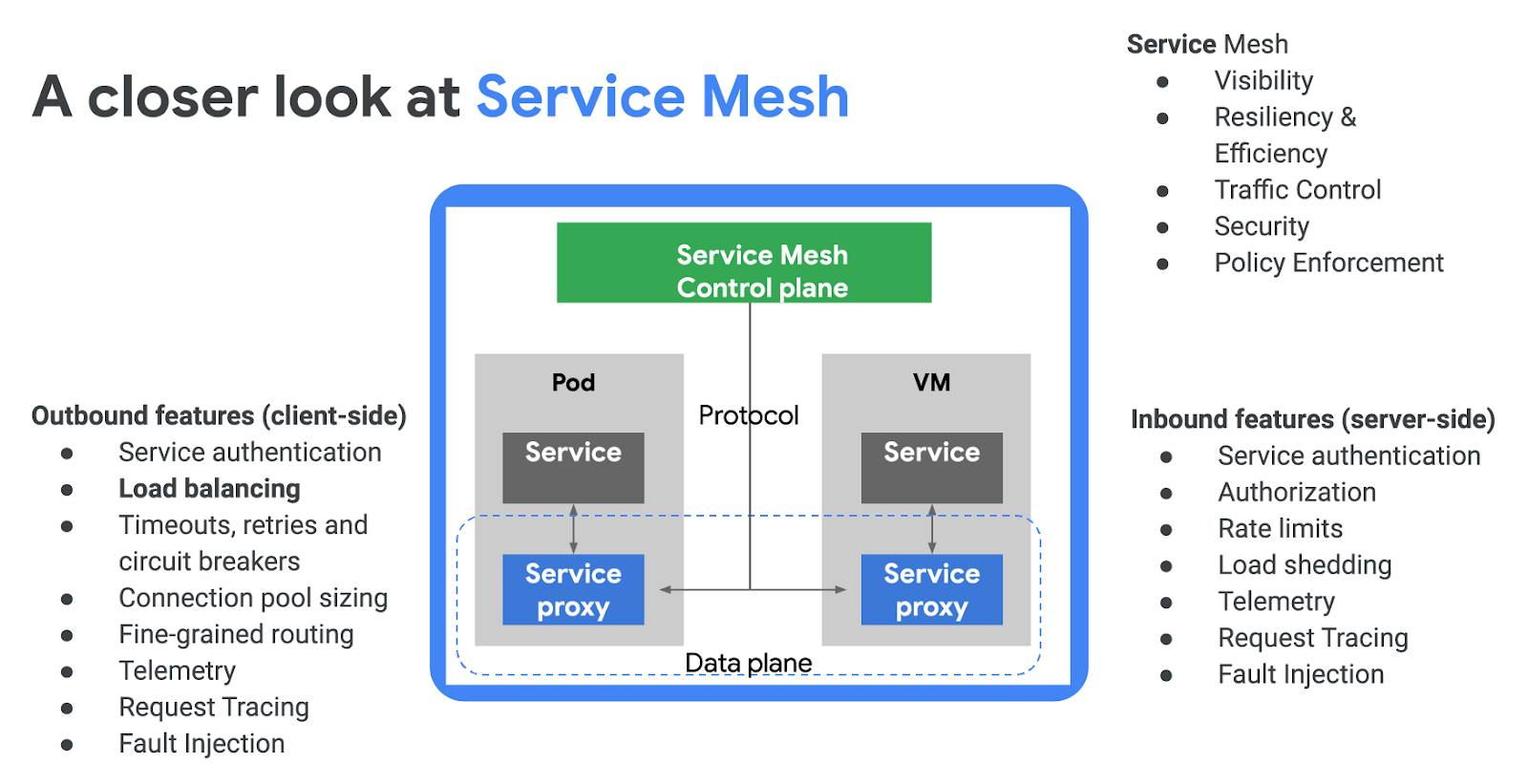

For business agility, many organizations are transitioning from monolithic applications to microservices, and are looking for a uniform way to create and manage heterogeneous and multi-cloud services with security, observability and resiliency. This is where service mesh comes in, providing software-defined networking (SDN) for services, including load balancing. With service mesh, networking complexity is abstracted away to the service mesh’s data plane, which is implemented as a service proxy such as Envoy, leaving you free to focus on building business logic. Envoy is a performant, feature-rich and open-source service mesh data plane that you can configure and manage via the service mesh’s control plane (such as Istio). Google is a key contributor to both the Envoy and Istio open-source initiatives.

We recently launched Traffic Director, a GCP-managed traffic management control plane for service mesh. Traffic Director communicates with the service proxies in the data plane using open-source xDS APIs to enable global load balancing, scalable health checking, autoscaling, resiliency and policy-driven traffic steering.

Learn more

To learn more about Cloud Load Balancing, start with the Next ’19 talks “Google Cloud Load Balancing Deep Dive and Best Practices” and “Traffic Director and Envoy-based ILB for Production Grade Service Mesh & Istio,” and read the documentation. We’d love your feedback on these features and what else you’d like to see from our load-balancing portfolio. You can reach us at gcp-networking@google.com.