Introducing Shared VPC for Google Kubernetes Engine

Manjot Pahwa

Product Manager, Google Cloud, Google

[Editor's note: This is one of many posts on enterprise features enabled by Kubernetes Engine 1.10. For the full coverage, follow along here.]

Containers have come a long way, from the darling of cloud-native startups to the de facto way to deploy production workloads in large enterprises. But as containers grow into this new role, networking continues to pose challenges in terms of management, deployment and scaling.

We are excited to introduce Shared Virtual Private Cloud (VPC) in beta to help Google Kubernetes Engine tackle the scale and reliability needs of container networking for enterprise workloads.

Shared VPC for better control of enterprise network resources

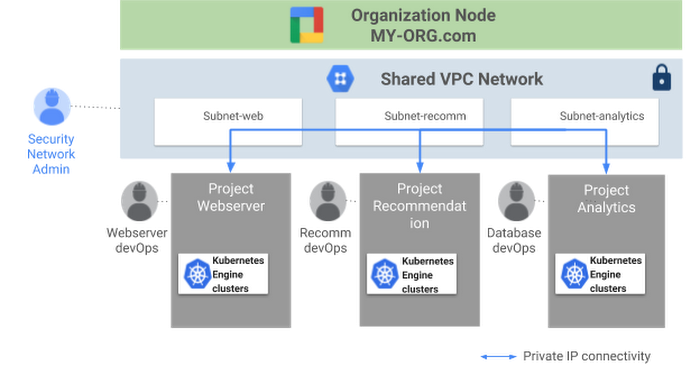

In large enterprises, you often need to place different departments into different projects, for purposes of budgeting, access control, accounting, etc. And while isolation and network segmentation are recommended best practices, network segmentation can pose a challenge for sharing resources. In Compute Engine environments, Shared VPC networks let enterprise administrators give multiple projects permission to communicate via a single, shared virtual network without handing over control of critical resources such as firewalls. Now, with the general availability of Kubernetes Engine 1.10, we are extending the Shared VPC model to Kubernetes Engine clusters running version 1.8 and above, so that clusters in multiple projects can connect to a common VPC network.

Before Shared VPC, it was possible to achieve this setup only in a crippled, insecure way, by bridging projects, each in its own VPC, with Cloud VPNs. The problem with this approach was that it required N*(N-1)/2 VPN connections to obtain full connectivity among N projects. An additional challenge was that the network for each cluster wasn’t fully configurable, making it difficult for one project to communicate with another without a NAT gateway in between. Security was another concern, since the organization administrator had no control over firewalls in the other projects.
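To get a feel for how quickly the N*(N-1)/2 figure grows, here is a minimal sketch in Python. The function name and the sample project counts are illustrative assumptions, not something taken from a Google tool.

```python
def full_mesh_links(n: int) -> int:
    """Return the number of pairwise connections needed to fully connect n projects."""
    if n < 0:
        raise ValueError("project count must be non-negative")
    # Each of the n projects pairs with the other n - 1; every pair is counted once.
    return n * (n - 1) // 2


if __name__ == "__main__":
    # Hypothetical project counts, chosen only for illustration.
    for projects in (2, 5, 10, 50):
        print(f"{projects} projects -> {full_mesh_links(projects)} VPN connections")
```

With 10 projects the mesh already needs 45 connections, and with 50 it needs 1,225, which is the kind of overhead a single shared network avoids.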

Now, with Shared VPC, you can overcome these challenges and compartmentalize Kubernetes Engine clusters into separate projects, for the following benefits to your enterprise:

- Sharing of common resources - Shared VPC makes it easy to use network resources that must be shared across various teams, such as a set of RFC 1918 IP addresses shared across multiple subnets and Kubernetes Engine clusters.

- Security - Organizations want to leverage more granular IAM roles to separate access to sensitive projects and data. By restricting what individual users can do with network resources, network and security administrators can better protect enterprise assets from inadvertent or deliberate acts that can compromise the network. While a network administrator can set firewalls for every team, a cluster administrator’s responsibilities might be to manage workloads within a project. Shared VPC provides you with centralized control of critical network resources under the organization administrator, while still giving flexibility to the various project admins to manage clusters in their own projects.

- Billing - Teams can use projects and separate Kubernetes Engine clusters to isolate their resource usage, which helps with accounting and budgeting needs by letting you view billing for each team separately.

- Isolation and support for multi-tenant workloads - You can break up your deployment into projects and assign them to the teams working on them, lowering the chances that one team’s actions will inadvertently affect another team’s projects.

Here is what Spotify, one of the many enterprises using Kubernetes Engine, has to say:

Kubernetes is our preferred orchestration solution for thousands of our backend services because of its capabilities for improved resiliency, features such as autoscaling, and the vibrant open-source community. Shared VPC in Kubernetes Engine is essential for us to be able to use Kubernetes Engine with our many GCP projects.

Matt Brown, Software Engineer, Spotify

How does Shared VPC work?

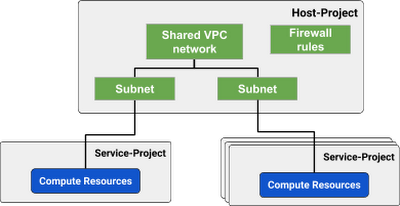

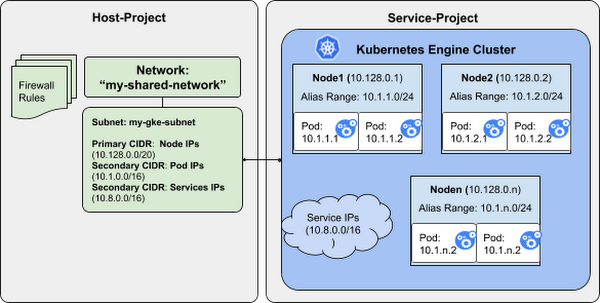

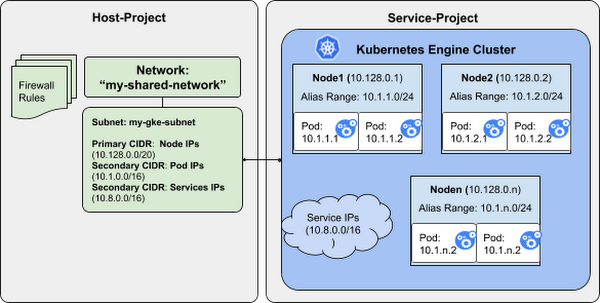

The host project contains one or more shared network resources, while the service projects map to the different teams or departments in your organization. After setting up the correct IAM permissions for service accounts in both the host and service projects, the cluster admin can instantiate a number of Compute Engine resources in any of the service projects. This way, critical resources like firewalls are centrally managed by the network or security admin, while cluster admins are able to create clusters in their respective service projects.

Shared VPC is built on top of Alias IP. Kubernetes Engine clusters in service projects must be configured with a primary CIDR range (from which to draw Node IP addresses) and two secondary CIDR ranges (from which to draw Kubernetes Pod and Service IP addresses). The accompanying diagram illustrates a subnet with the three CIDR ranges from which the clusters in the Shared VPC are carved out.
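As a rough illustration of the three-range layout, the sketch below uses Python's standard `ipaddress` module to describe one primary and two secondary ranges and to check that they do not overlap. The specific CIDR blocks and the helper name `ranges_are_disjoint` are hypothetical values picked for this example; your own address plan will differ.

```python
import ipaddress
from itertools import combinations

# Hypothetical RFC 1918 ranges for one shared subnet (assumed values, not from the post).
node_range = ipaddress.ip_network("10.0.4.0/22")      # primary range: Node IPs
pod_range = ipaddress.ip_network("10.4.0.0/14")       # secondary range: Pod IPs
service_range = ipaddress.ip_network("10.0.32.0/20")  # secondary range: Service IPs


def ranges_are_disjoint(*networks) -> bool:
    """Return True if no two of the given networks share any address."""
    return not any(a.overlaps(b) for a, b in combinations(networks, 2))


if __name__ == "__main__":
    for label, net in (("nodes", node_range), ("pods", pod_range), ("services", service_range)):
        print(f"{label}: {net} ({net.num_addresses} addresses, private={net.is_private})")
    print("disjoint:", ranges_are_disjoint(node_range, pod_range, service_range))
```

A check like this is only a planning aid: it confirms that the ranges you intend to use are private and non-overlapping before you create the subnet.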

The Shared VPC IAM permissions model

To get started with Shared VPC, the first step is to set up the right IAM permissions on service accounts. For the cluster admin to be able to create Kubernetes Engine clusters in the service projects, the host project administrator needs to grant the compute.networkUser and container.hostServiceAgentUser roles in the host project, allowing the service project's service accounts to use specific subnetworks and to perform networking administrative actions to manage Kubernetes Engine clusters. For more detailed information on the IAM permissions model, follow along here.
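The role grants described above can be pictured as IAM policy bindings on the host project. The sketch below only builds that data structure in Python; it does not call any Google Cloud API. The project number is made up, and the service account naming patterns are assumptions based on common GCP conventions rather than something stated in this post, so verify them against the IAM documentation before relying on them.

```python
# Hypothetical service project number, used only for illustration.
SERVICE_PROJECT_NUMBER = "123456789012"


def service_project_accounts(project_number: str) -> dict:
    """Build the service project's service account members (naming patterns are assumed)."""
    return {
        "gke": (
            f"serviceAccount:service-{project_number}"
            "@container-engine-robot.iam.gserviceaccount.com"
        ),
        "google_apis": f"serviceAccount:{project_number}@cloudservices.gserviceaccount.com",
    }


def host_project_bindings(project_number: str) -> list:
    """Return IAM bindings the host project admin would grant for one service project."""
    accounts = service_project_accounts(project_number)
    return [
        # Lets the service project's accounts use the shared subnetworks.
        {
            "role": "roles/compute.networkUser",
            "members": [accounts["google_apis"], accounts["gke"]],
        },
        # Lets the Kubernetes Engine service account manage network resources for clusters.
        {
            "role": "roles/container.hostServiceAgentUser",
            "members": [accounts["gke"]],
        },
    ]


if __name__ == "__main__":
    for binding in host_project_bindings(SERVICE_PROJECT_NUMBER):
        print(binding["role"])
        for member in binding["members"]:
            print("  ", member)
```

Keeping the two roles as separate bindings mirrors the split in responsibilities: one grants use of the network, the other grants the networking actions needed to manage clusters.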

Try it out today!

Create a Shared VPC cluster in Kubernetes Engine and get the ease of access and scale for your enterprise workloads. Don’t forget to sign up for the upcoming webinar, 3 reasons why you should run your enterprise workloads on Kubernetes Engine.