Google Patents Public Datasets: connecting public, paid, and private patent data

Ian Wetherbee

Tech Lead, Google Patents

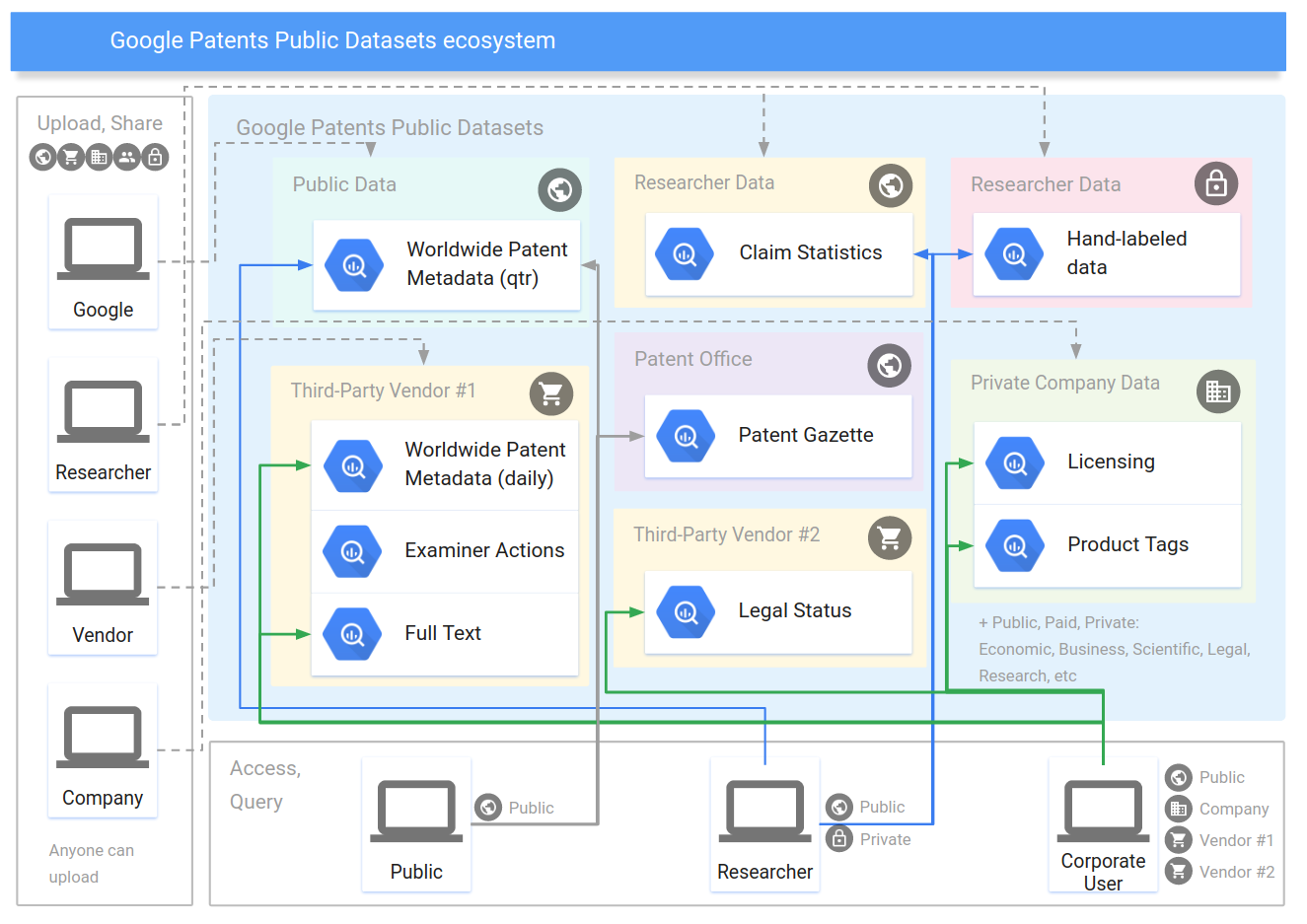

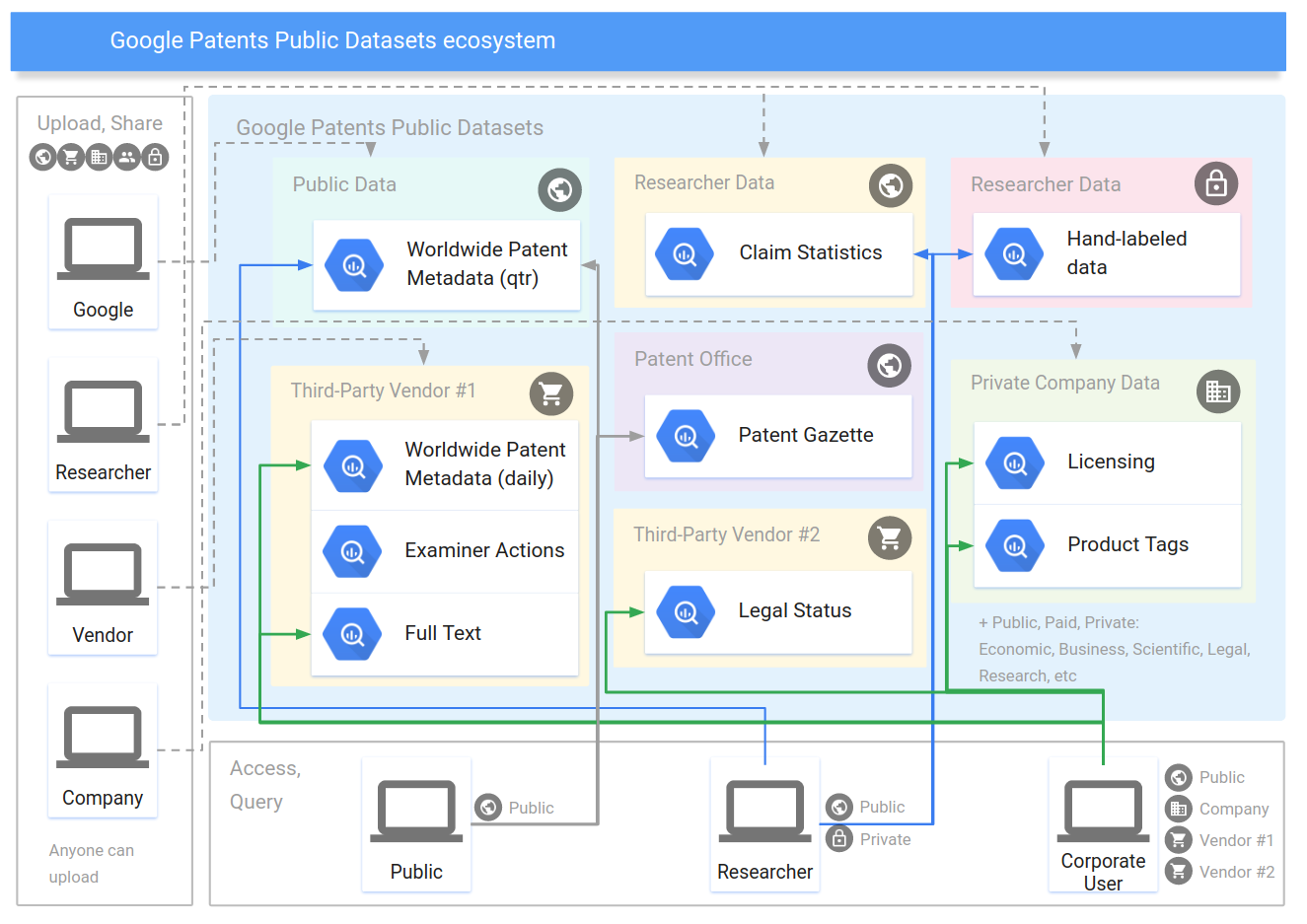

Google has long history of making patent data universally accessible and useful, starting more than 10 years ago by scanning old US patents into Google Patents. Today, Google is launching Google Patents Public Datasets on BigQuery, with a collection of publicly accessible, connected database tables for empirical analysis of the international patent system.

Google Patents is great for asking search-oriented questions, such as finding different types of engines or patents by Nikola Tesla, and today you can answer a much broader range of questions with SQL using these datasets like "what percentage of patents have more than one inventor?" or "what funding does the government provide to promote innovation in certain patent areas?" Academics, economists and policy researchers ask these kinds of questions to study how the patent system is working and where it can be improved.

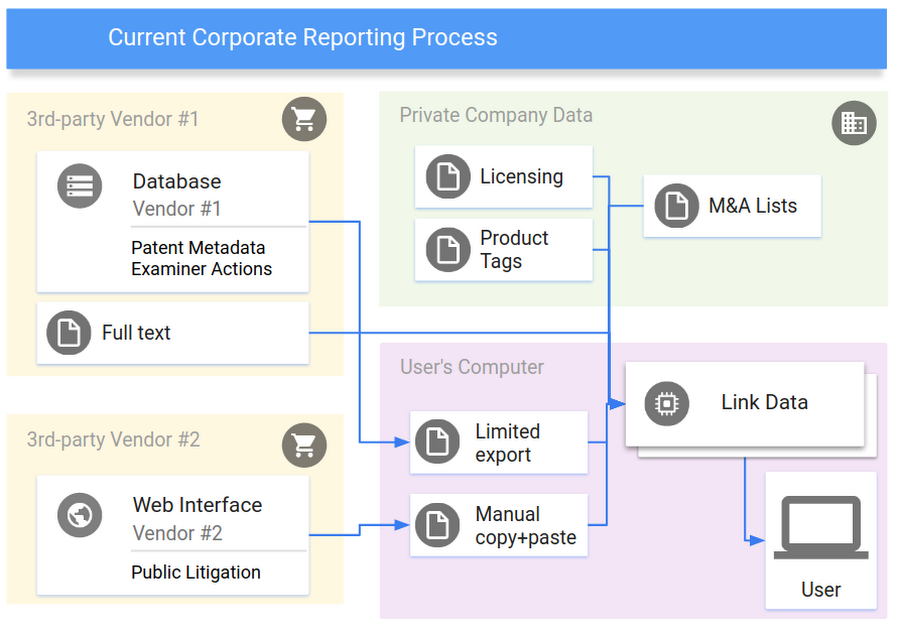

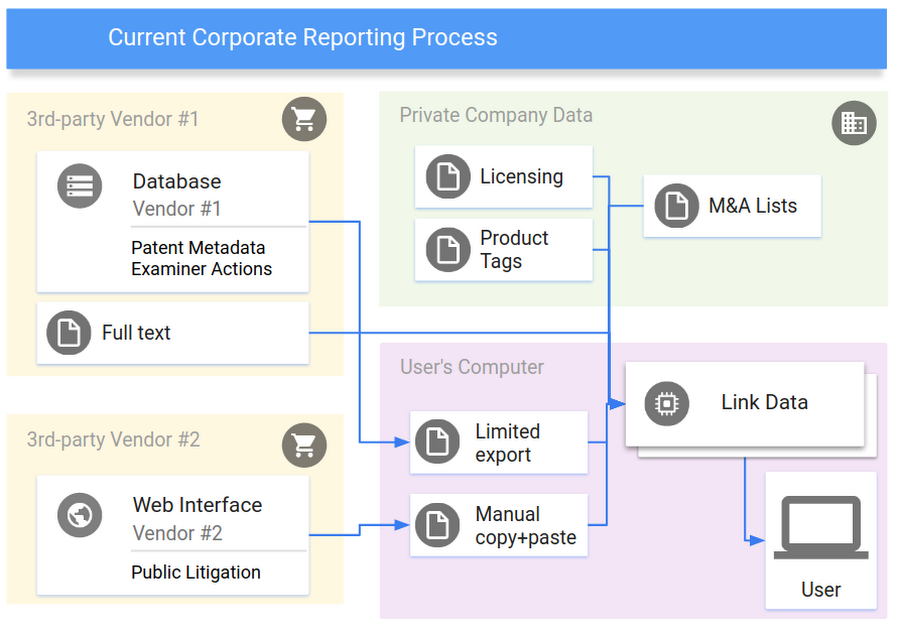

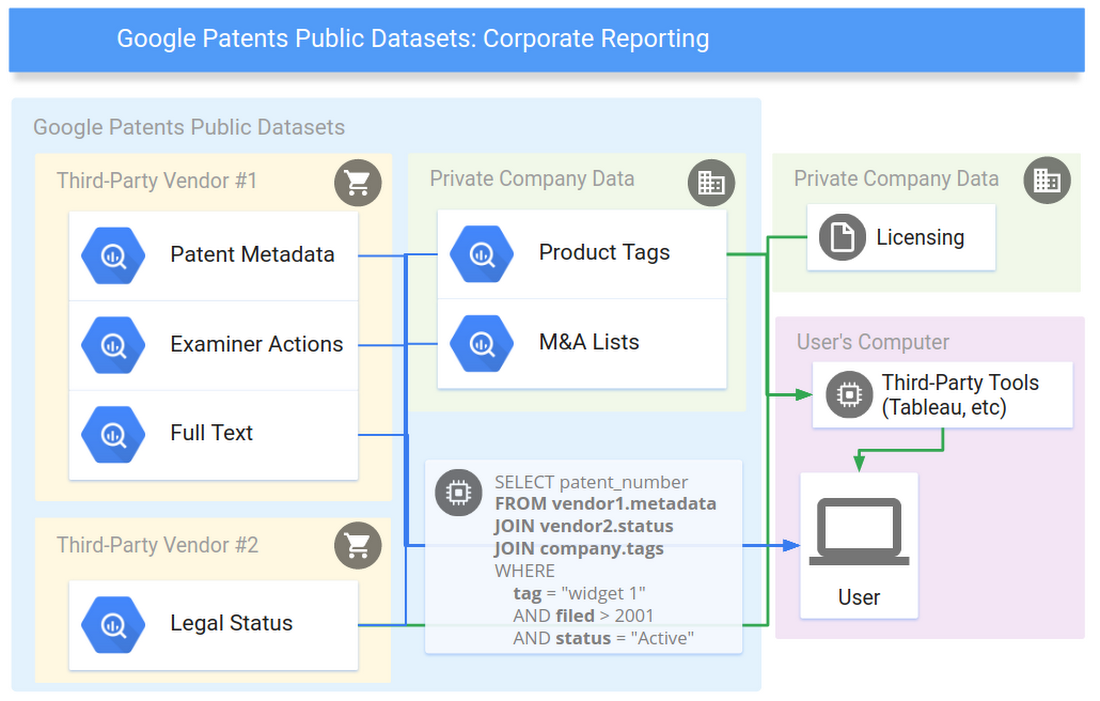

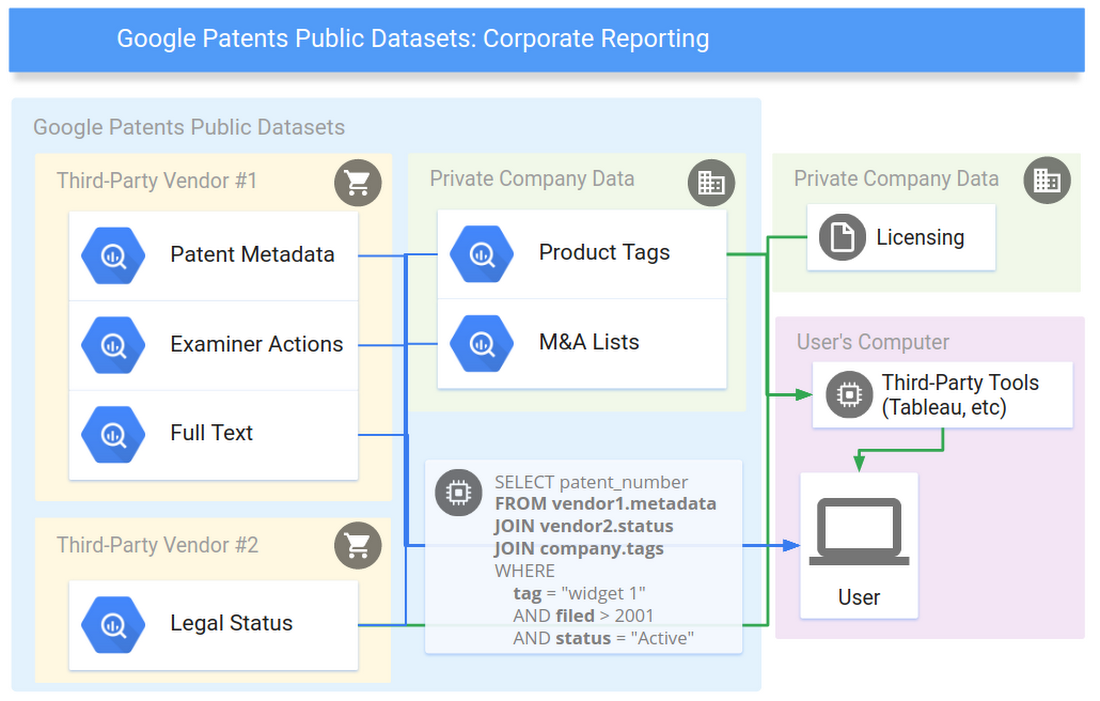

In addition, enterprise users often maintain collections of private data about patents, such as an internal tagging system that corresponds to specific product lines, and they want to connect that information with other patent datasets to generate reports and analyze investment areas. Now companies can combine their private data with public and paid datasets to ask "what are my active patents and pending patent applications?", "which of my patents in what technology areas are expiring soon?" or "what are the top companies that cite the patents I've tagged with [widget #57]?".

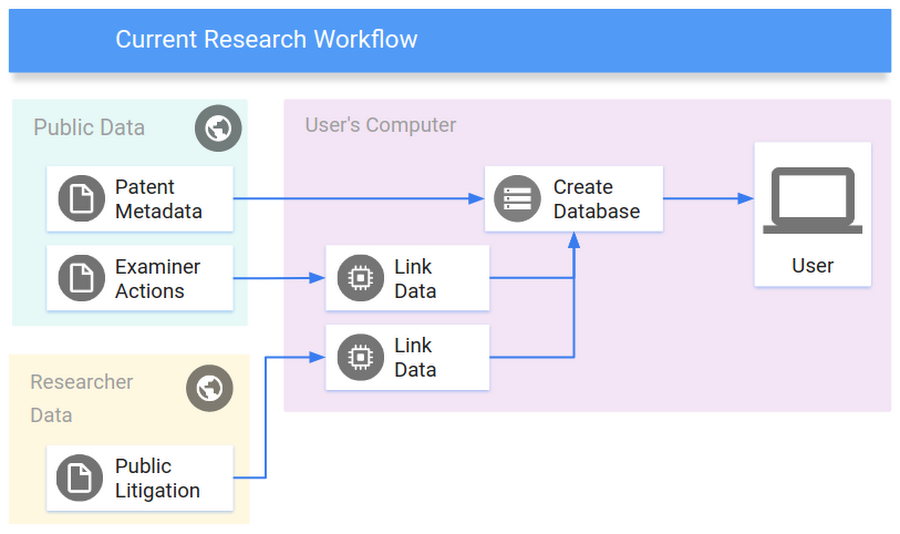

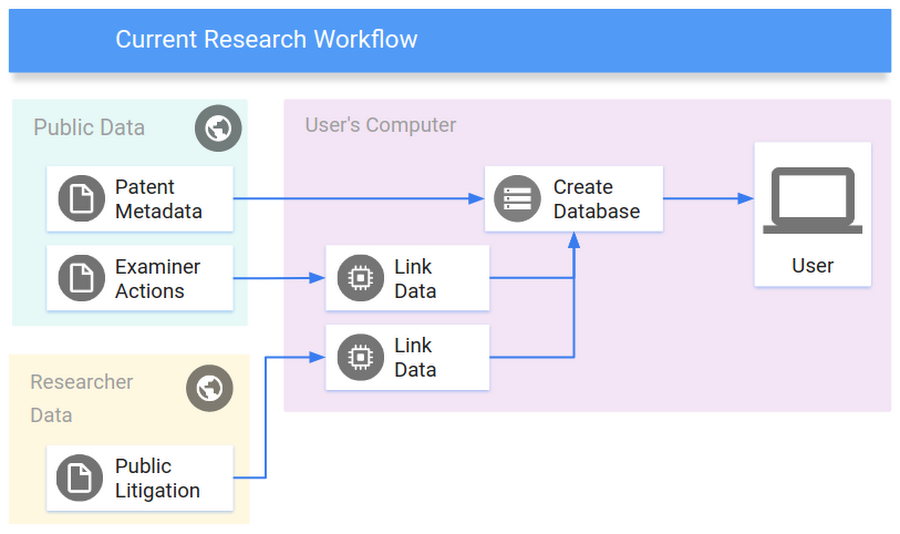

Patent information accessibility is critical for examining new patents, informing public policy decisions, managing corporate investment in intellectual property, and promoting future scientific innovation. The growing number of available patent data sources means researchers often spend more time downloading, parsing, loading, syncing and managing local databases than conducting analysis. With these new datasets, researchers and companies can access the data they need from multiple sources in one place, thus spending more time on analysis than data preparation.

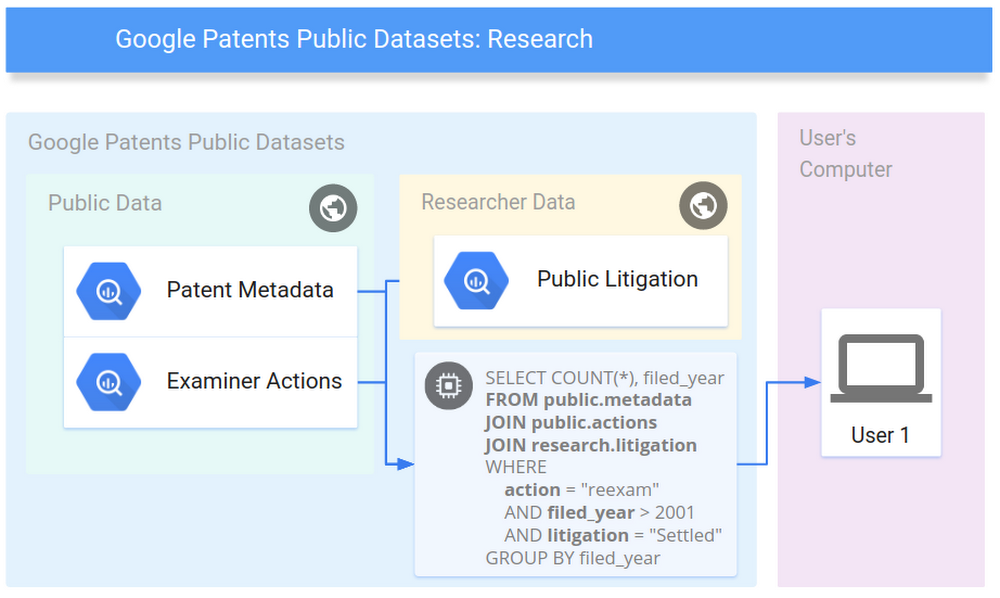

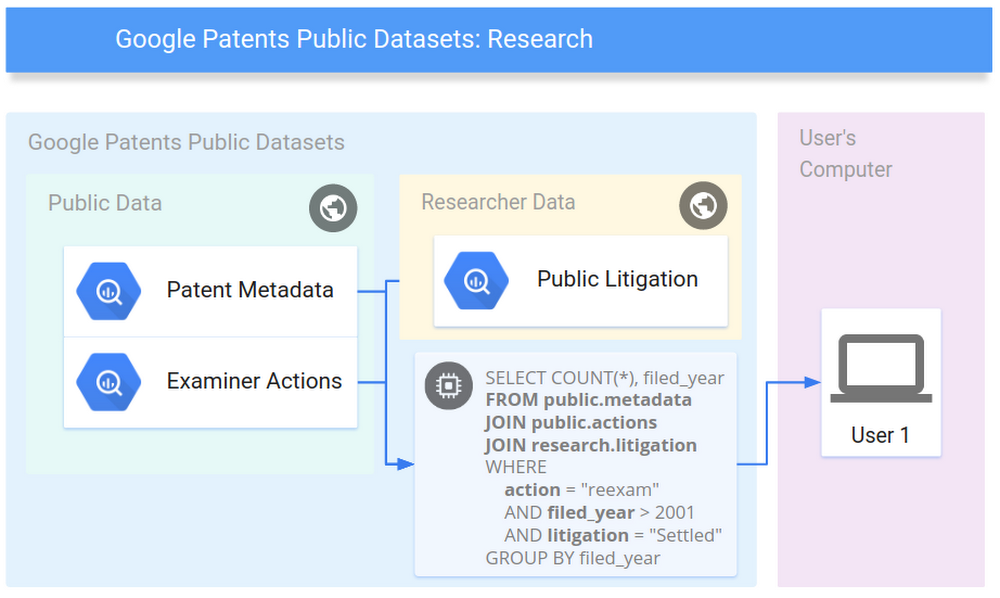

The core of these datasets is the public Google Patents Public Data table of worldwide bibliographic information on more than 90 million patent publications from 17 countries and US full text, provided by IFI CLAIMS Patent Services. We are also providing a Google Patents Research Data table containing English machine translations for all titles and abstracts from Google Translate, similarity vectors, extracted top terms, and more. Common research datasets from patents, chemistry, and litigation have also been uploaded.

Starting today, users can access information collected by other researchers and patent data providers in the same database, and mix them with private data to generate reports or investigate questions with the full freedom of SQL, without setting up a database of their own.

Commercial providers are also making their patent data available for purchase in BigQuery, starting with IFI CLAIMS Patent Data Enrichments including legal status information and standardized assignee names. CPA Global is also providing a set of their value-added standards data at no charge so researchers can study the impact of standards patents. Accessing these datasets through BigQuery gives users an up-to-date database managed by data providers, so users get the flexibility of a database without the engineering cost of maintaining one.

There are several third-party tools that can access BigQuery, such as Tableau and Looker, and provide an easier interface than SQL for querying data. For corporate users that have sensitive data that cannot leave their network, some of these tools can be used to fetch the data needed from BigQuery and process it on your computer together with the sensitive data.

BigQuery’s feature set makes this ecosystem possible: a single, huge database that can join together two or more tables uploaded by different people, access controls to easily share tables (like Google Docs), petabyte scale, and a serverless payment model split between query and storage.

Example queries for researchers

Patent data is invaluable for studying historical and present-day innovation. There are many areas to study using the 18 initial datasets. But these example queries show the flexibility of having a connected collection ready to analyze without setup. After the analysis is finished, researchers can upload and share their results as a new dataset to encourage reproducibility and allow others to promote and to build on top of their work.Peak year for patent filings per classification

Economists often study patent filings as one of many indicators of historical innovation trends. The Cooperative Patent Classification scheme is very detailed and can be used to aggregate sets of patents over time. For example, if we group by classification and year, we see that patent filings for "vehicles drawn by animals" peaked in 1909 with 212 applications, "methods of steam generation and steam boilers" in 1924 with 510 applications, "organic dyes" in 1973 with 2018 applications, and "amplifiers" in 2013 with 2368 applications. View other classifications in BigQuery.

Source: “Google Patents Public Data” by IFI CLAIMS Patent Services and Google, CC BY 4.0, “Cooperative Patent Classification” by the EPO and USPTO, for public use.

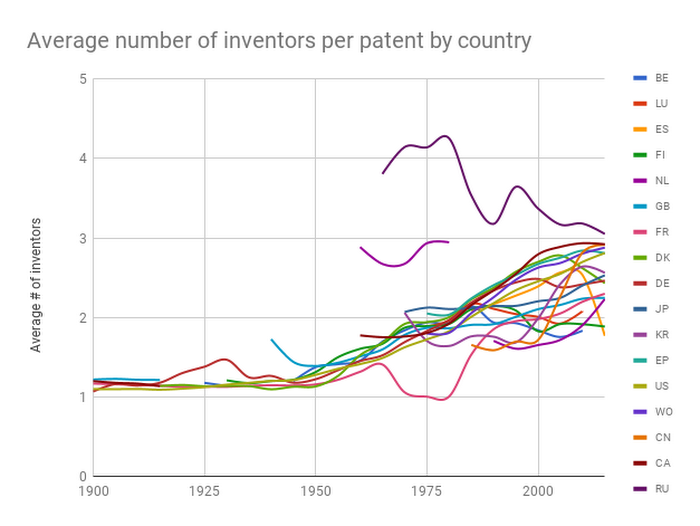

Average number of inventors per patent, by countryYou might also find it interesting to learn how inventors interact and work together. In this query you can track the average number of inventors on each patent per country over time. View in BigQuery.

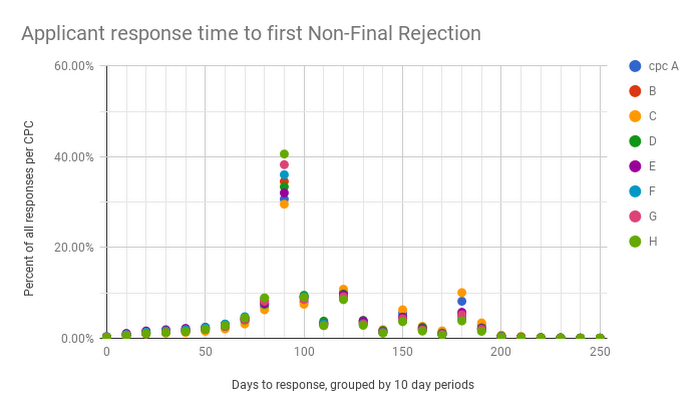

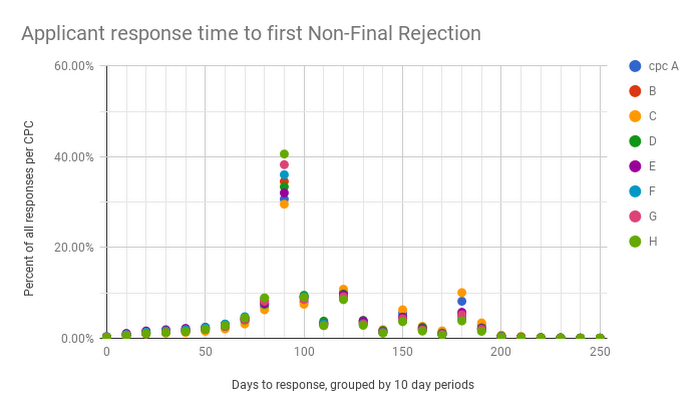

How long do applicants wait to respond to the first Non-Final Rejection?

Studying the interaction between applicants and examiners can also be a source of insight. Patents are examined through a series of back-and-forth "office actions" between the patent office and the applicant. The patent office records codes (rejection, grant, abandoned, etc) and in this dataset, you can query over 341 million USPTO events.

If you group by the 8 high-level patent classification codes, as in our example, you can see certain areas get quicker responses to the first Non-Final Rejection -- the examiner does a search and responds with one or more problems in the patent application). Applicants can then address the problems with a response. Applications in the H (Electricity) and G (Physics) classifications have faster responses on average, and C (Chemistry) applications often take longer to respond to rejections. Most responses fall right around the 90 day mark. View in BigQuery.

What's the longest patent examination path taken?

One such path is US 09/810,962, which had the longest series of reject-response-reject with 14 Request for Continued Examination (RCE) rounds between 2001 and 2016 before being abandoned. View other long examination paths in BigQuery.

Source: “Google Patents Public Data” by IFI CLAIMS Patent Services and Google, used under CC BY 4.0, "Patent Examination Data System" by the USPTO, for public use.

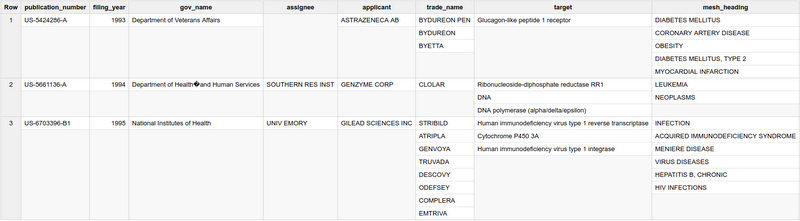

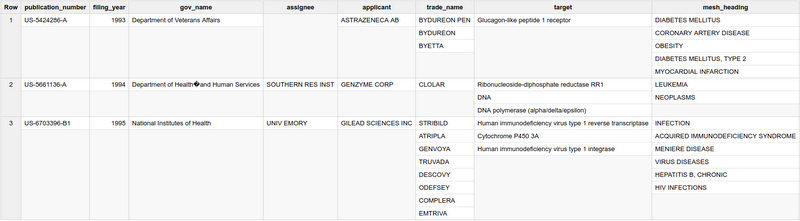

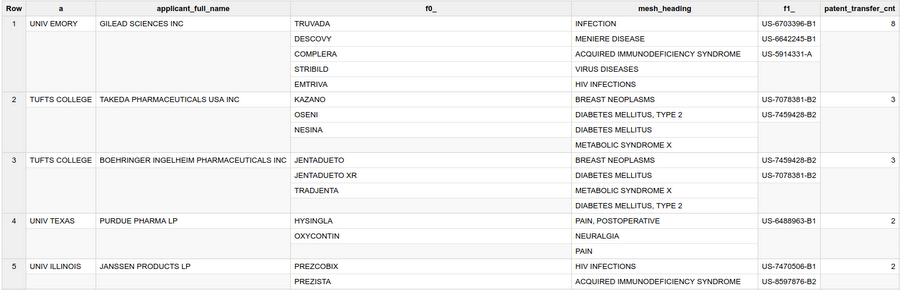

Patents with FDA approved drugs and government interest statements

The FDA Orange Book contains approved drugs and their associated patents. When a government funds a patent or the government has legal interest in a patent, the patent includes a statement disclosing the interest. We analyze these two datasets with one query to find drug patents that have government interest, and join in additional information about the drugs to see who applied for the drugs, their trade names, the targets they act on, and the drug indication's MESH heading. This query used to take a lot of work to perform, using a large chemistry-and-drug database (ChEMBL) and a large database of information extracted from patents (PatentsView). See the full results, or View in BigQuery.

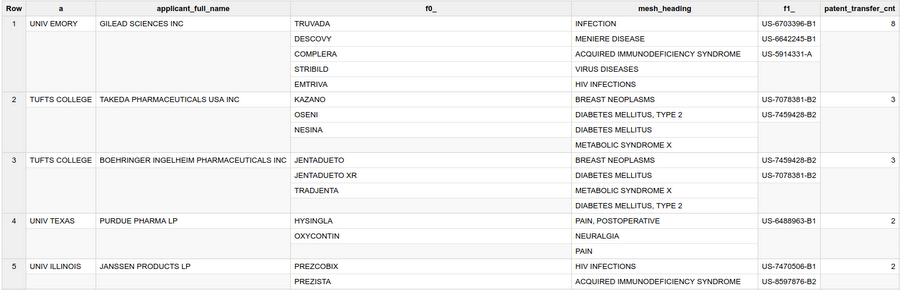

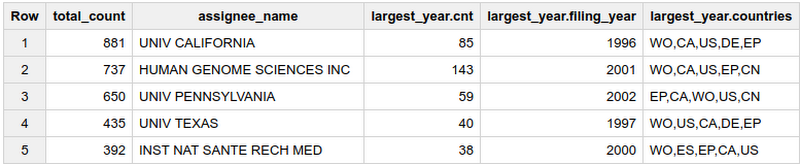

Technology transfer of FDA drug patents

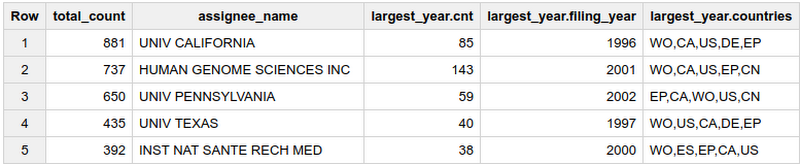

Patents of substances or applications that go on to become drugs are often developed at research institutions and licensed to companies for manufacturing through a technology transfer program. We can join ChEMBL data with Google Patents Public Data to find which patent assignees that look like research institutions (University, College, Institute) have the tightest connections with which manufacturers.? See the full results, or View in BigQuery.

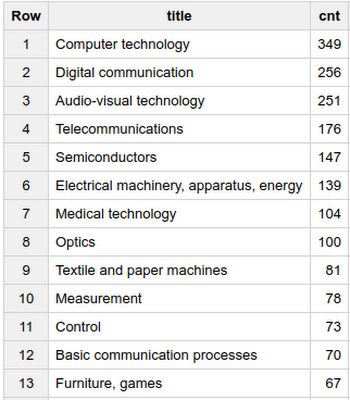

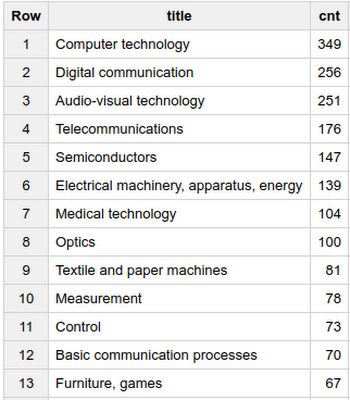

US International Trade Commission by WIPO industry classification

The USITC investigates complaints of unfair trade practices, including patent infringement. You can study what the high-level industry category of the patents involved in investigations are. View in BigQuery.

Sources: “PatentsView” by the USPTO, US Department of Agriculture (USDA), the Center for the Science of Science and Innovation Policy, New York University, the University of California at Berkeley, Twin Arch Technologies, and Periscopic, used under CC BY 4.0, "US International Trade Commission 337Info Unfair Import Investigations Information System" by the USITC, for public use.

Example queries for companies

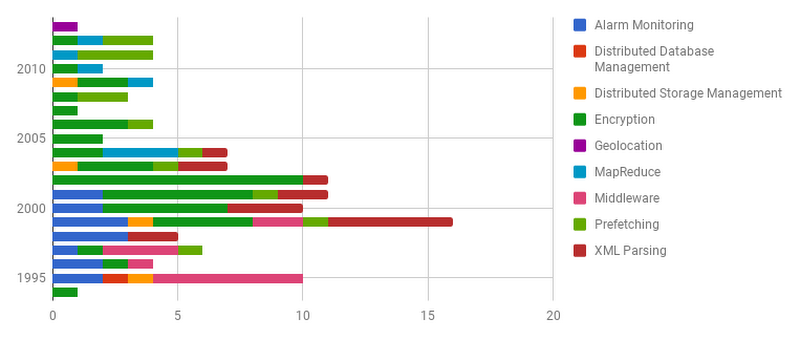

Enterprise users can use the public datasets or purchase access to BigQuery tables uploaded by data vendors and join them quickly with their internal data to produce reports.Portfolio filings per year

This simple query graphs the patents in your portfolio by their filing date and hand-labeled tags. As an example portfolio, we're using a partial list of Google's OPN patents we've uploaded into a BigQuery table. Querying your own portfolio is as easy as uploading a CSV file, and you control the access permissions. You can quickly export your result set to Google Sheets or download as CSV to create graphs or continue processing the data table for your reports. View in BigQuery.

You can easily switch the aggregation to use any level of the CPC hierarchy, or inventors, or any other category you can calculate. View in BigQuery.

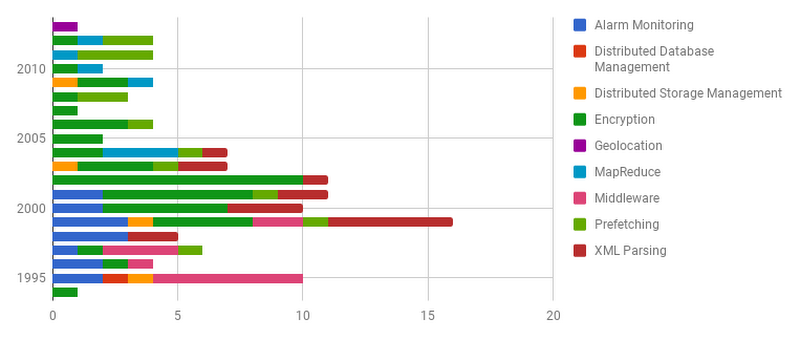

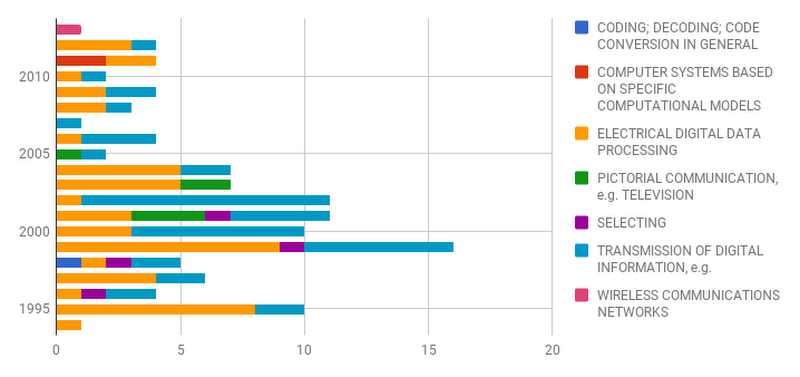

Patent landscaping

Enterprise users often want a high-level overview of a specific technology to see in aggregate who is in the space, when and where they are filing, etc. This example shows a simple landscape of patent filings by assignee in A61K48 (gene therapy). You might combine this result with several other queries on top inventors, filings over time, sub-classification activity, reassignments and more, which can also be answered in BigQuery. View in BigQuery.

Forward citations to a set of patents grouped by assignee and classification

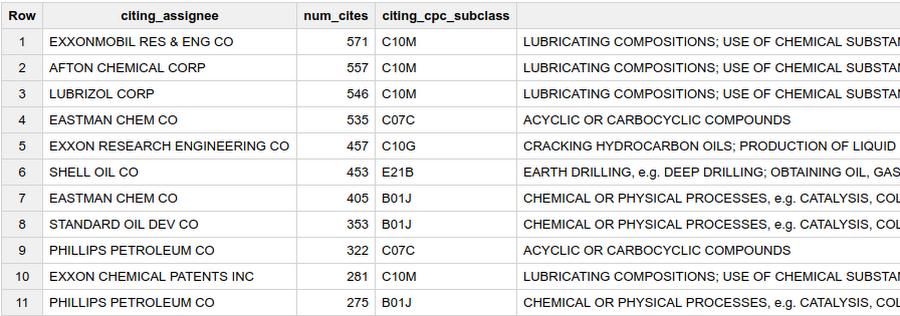

Another method of landscaping is to see what other assignees or inventors cite certain patents. This example aggregates the forward citations to "Standard Oil Co". View in BigQuery.

See other examples from data providers on the IFI CLAIMS blog, the CPA Global blog, and our GitHub repository.

Automated patent landscaping

Included in our GitHub repository is an example implementation of Automated Patent Landscaping (Abood, Feltenberger, 2016), a semi-supervised machine learning methodology that can be used to find patents related to any topic for which you can find a representative seed set of patents. Our implementation uses Long Short-Term Memory (LSTM) artificial neural networks and a pre-built word2vec word embedding model trained on ~6 million patent abstracts. Please take a look at this Jupyter notebook to guide you through the process of creating a sample patent landscape using hair dryer patents as a seed set.

Patent offices around the world support both researchers and industry with their data products. The European Patent Office (EPO) collects the trusted backbone of worldwide patent information, the United States Patent and Trademark Office (USPTO) Office of the Chief Economist provides many valuable datasets that we have included, and the USPTO, EPO and other offices continue to open up their data with new public APIs and downloads. These datasets are then improved, cleaned and extended: ultimately, they become commercial or research products, which go on to help the patent system. Without their work to make datasets more widely available, and the work of many others, the Google Patents Public Datasets project would not have been possible.

BigQuery for Data Providers

For data providers, BigQuery is a unique way to sell data in an instantly useful format to customers. The typical options for data distribution are either in bulk format through CSV/XML downloads, or through a web interface, but both have downsides. Bulk formats allow flexibility at the expense of the customer programming and maintaining their own databases, while web interfaces are easy to access, but cannot easily be extended with new paid or private sources of data, and have a fixed set of possible ways to query and display the data. Now customers can get the same flexibility of a database with the easy access of a web interface to connect private data and display it in dashboards and other visualization tools.The flexibility and improved usability of patent data in BigQuery helps users arrive at an answer faster, with less redundant data cleanup, normalization, and update work. Researchers can use it to share access to the data they've collected and make it reproducible, companies can join data against their internal records, tool providers can more easily integrate many different data sources, and data providers can focus on selling the best value-added data directly to a wider range of customers in a usable format.

Please contact contact us if you’d like to become a data partner for patent data or any other type of data from the Commercial Datasets program.