Built with BigQuery: Solving scale and complexity in retail pricing with BigQuery, Looker, Analytics Hub and more

Dr. Ali Arsanjani

Director, AI/ML Partner Engineering, Head of AI Center of Excellence, Google Cloud

Mike Ryan

SVP, Product & Science, Revionics

Context and Background

Maintaining optimal pricing in the retail industry can be challenging when relying on manual processes or platforms that are not equipped to handle the complexity and scale of the task. The ability to quickly adapt to factors affecting pricing has also become a critical success factor for retailers when pricing their products.

As a whole, the retail industry is working to absorb and respond to changes in the way customers buy and receive products, and to how pricing affects competitive advantage. For example, within some vertical domains, customers’ expectations for the price of products differ when buying online as compared to in-store, but are expected to align in others. Additionally, because of the ease with which shoppers can assess the prices across competitors, retailers are looking for ways to retain their most valuable customers; loyalty pricing, private label strategies, and bespoke promotional offers are seen as key aspects of possible solutions in this regard.

Whether in an everyday, promotional or clearance sense, the need to maintain optimal pricing requires a forward-looking mechanism that employs AI/ML capabilities based on multiple sources of input to provide prescriptive decision-making. Such an AI/ML platform can encourage specific buying behaviors aligned to a retailer’s strategy, for example, to rebalance inventory, expand basket sizes or increase private label brand sales. A pricing process can be thought of as having four stages:

Transfer and processing of information about a retailer’s operational structure, customer behavior related to its products, and other elements impacting supply and demand

Synthesis of information that represents the relationships between prices for products available across a retailer’s sales channels and business outcomes vis-a-vis financial metrics

Decision making about price-related activities, such as price increases, decreases or promotional offers, driven by human or software systems

Actions to actualize pricing decisions and to inform stakeholders affected by changes, driven by human or software systems

In the Solution Architecture section we will examine these stages in detail.

Use-cases: Challenges and Problems Resolved

The complexity of pricing in the retail industry, particularly for Fast Moving Consumer Goods (FMCG) retailers, can be significant. These retailers often have over 100,000 items in their assortments being sold at thousands of stores, and must also consider the impact of online shopping and customer segmentation on pricing decisions. Different buying behaviors across these dimensions can affect the recommendations of a pricing system, and it is important to take them into account in order to make accurate and effective pricing recommendations. An AI/ML-driven platform can provide greater agility and manage complexity to make more informed pricing decisions.

Speed is a critical factor in the retail industry, particularly for retailers selling Specialty Goods who face intense competitive pricing pressure in certain key products. In this environment, the ability to respond quickly to changes in the market and customer demand can be the difference between staying relevant and losing business to competitors. Automation using AI/ML, enabling real-time, on-demand price changes and promotions, is a key factor in the evolving retail industry, particularly in the context of ecommerce and digital in-store systems like Electronic Shelf Labels (ESLs). These systems provide on-demand price changes and promotions that can positively alter customer behavior by increasing the basket size during a session.

To make this possible, the decision-making and delivery mechanisms behind these systems need to be driven by flexible, programmatically accessible AI/ML engines that learn and adapt over time.

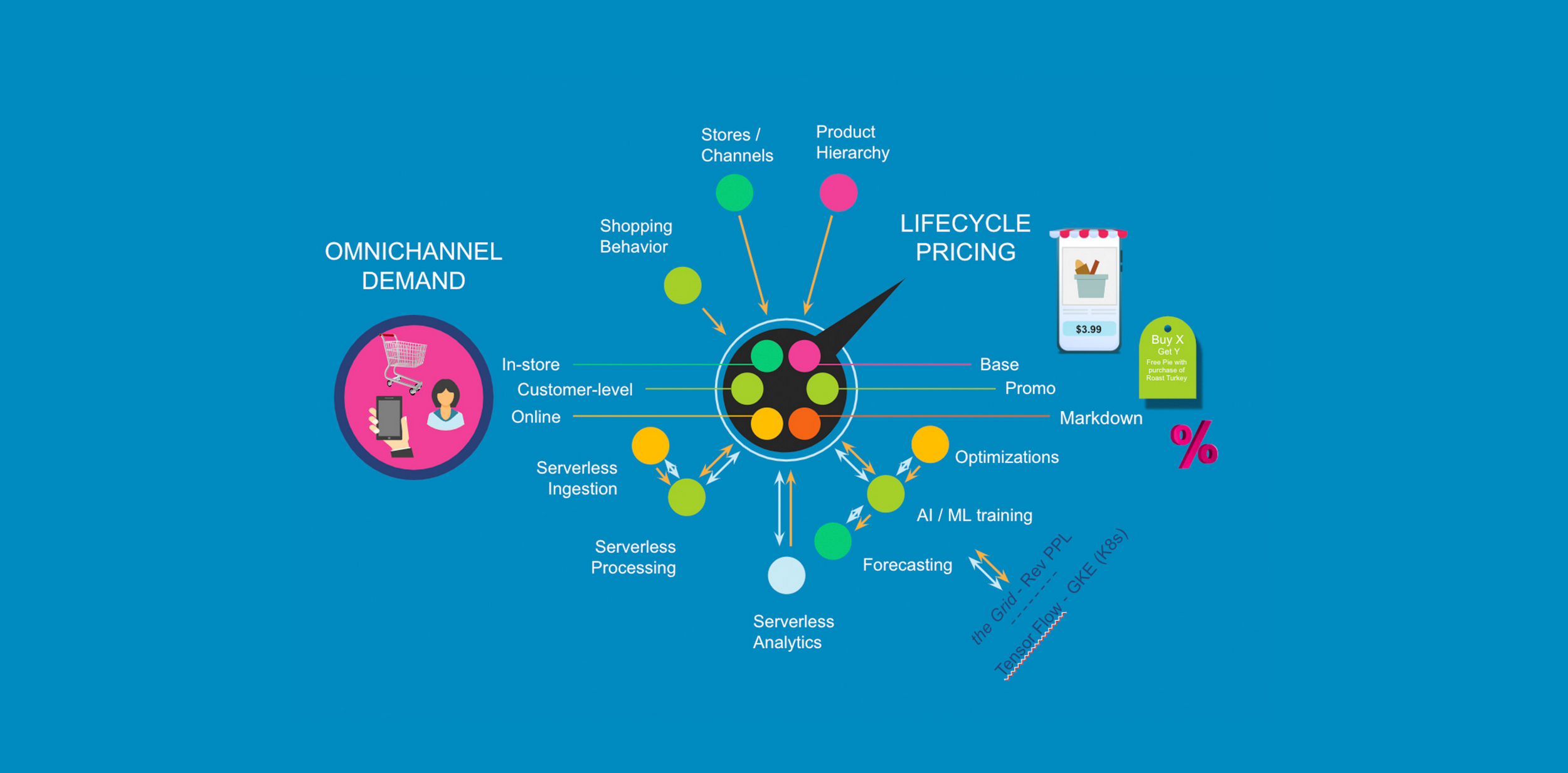

High level Architecture of Revionics Using GCP

Revionics’ product, Platform Built for Change, is a new platform that aims to address the significant changes occurring in the retail market by providing a flexible, scalable, intelligent and extensible solution for managing pricing processes. A foundational design principle for the platform is that it can be easily adapted, through configuration rather than code changes, to support a wide range of approaches and states of maturity in pricing practices. By externalizing dependencies of changes from the underlying code, the platform allows retailers to make changes more easily and quickly adapt to new requirements.

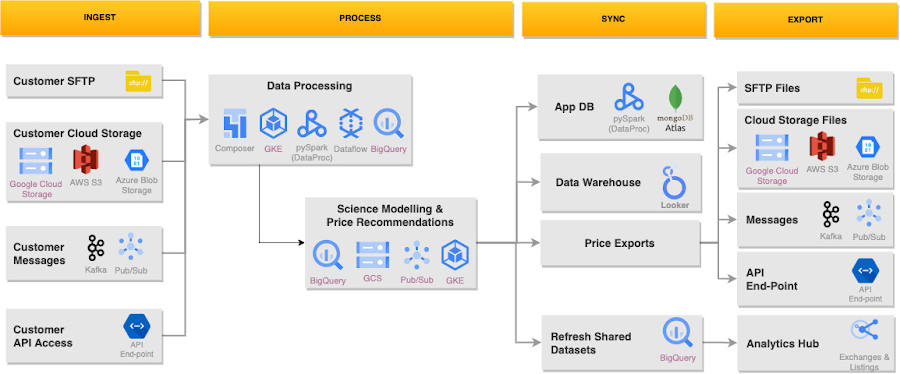

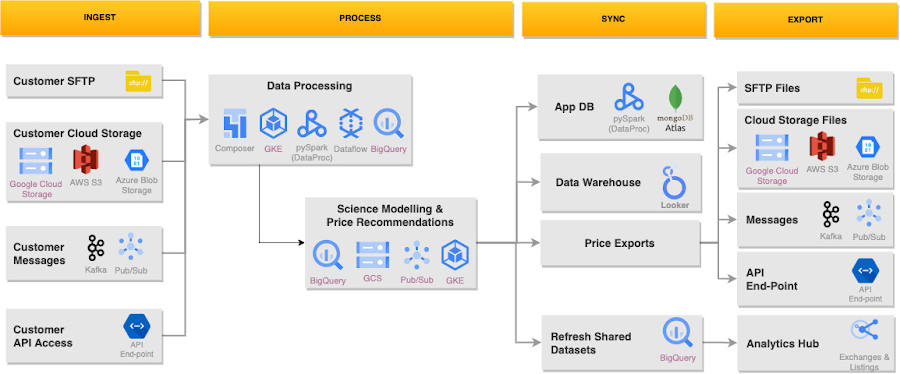

The above diagram shows the Revionics solution, in which GCP serverless technologies are used across all layers, from ingestion to export. The key services used are:

Data Storage: Google BigQuery, GCS (blob storage), MongoDB Atlas

Data Processing: Google BigQuery, Google DataProc, Google Dataflow

Data Streaming: Kafka, Google PubSub

Orchestration: Cloud Composer (Airflow), Google Cloud Functions

Containerization & Infra Automation: GKE (Kubernetes)

Analytics: Google Looker

Data Sharing: Google Analytics Hub

Observability: Google Cloud Logger, Prometheus, Grafana

Solution Architecture

We will discuss the key problems and challenges to solve, and how the various stages of the solution (Ingest, Process, Sync and Export) have enabled Revionics to address the need for speed, scale, and automation, all while handling the increasing complexity and evolving challenges of retail pricing.

1. Transfer and processing of information

As Revionics is a SaaS provider, supporting retailers at the first stage of the pricing process - the transfer and processing of information - essentially boils down to overcoming one major challenge: wide variability. In our domain, variability comes from several data sources shown in Figure 2 below:

Each retailer’s pricing practice and technical environment exist in various states of maturity and sophistication

Entire sources of data may be included in some cases, but excluded in others

API usage and streaming may be plausible with some customers, whereas SFTP transfers are the only available means for others

Data quality, completeness and correctness vary by retailer according to their upstream processes

Both large batch and near real-time use cases need to be supported

The Data transformation logic that feeds into science modeling will differ according to a combination of grouping and configuration choices based on the retailer’s operations and objectives. Essentially, there is no single “golden data pipeline”.

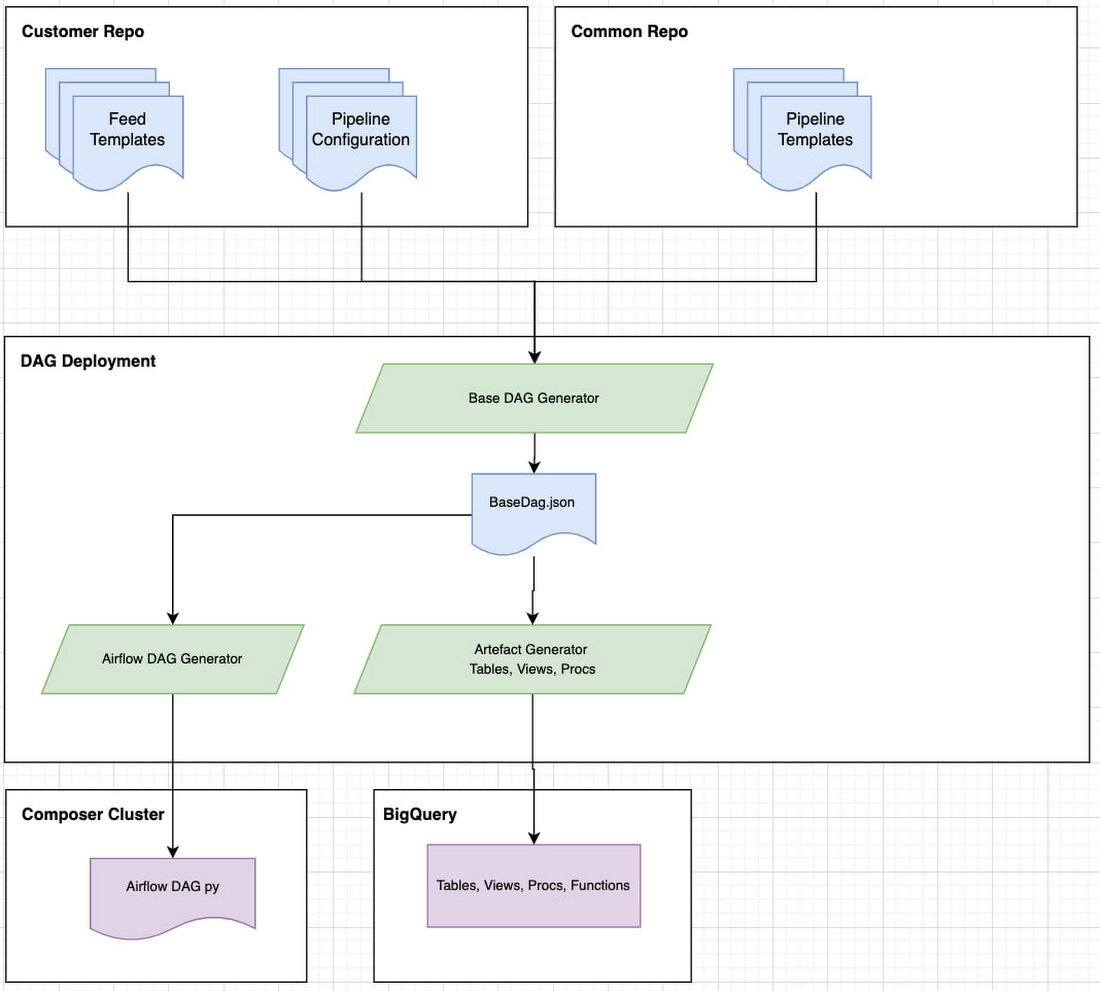

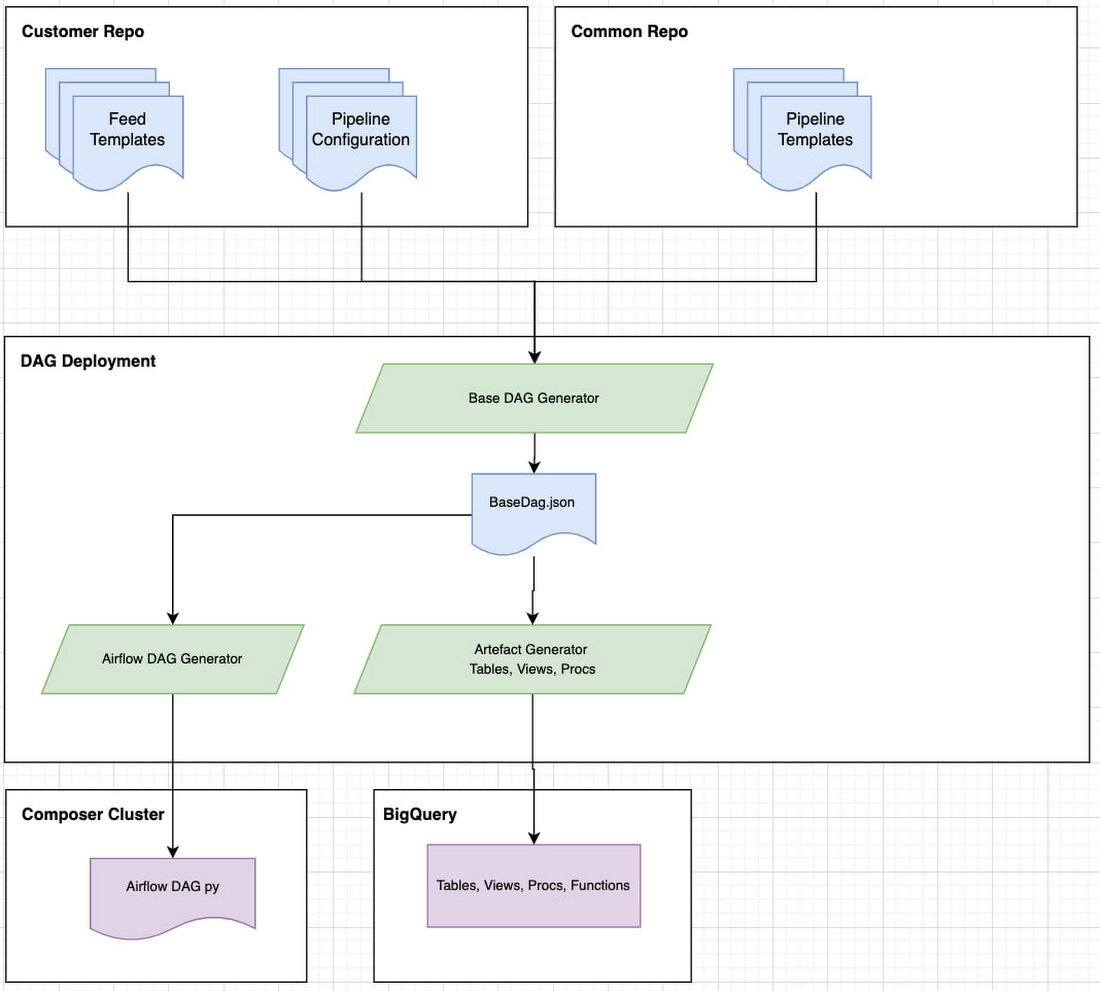

In order to describe how the variability challenges are addressed, let’s drill into the Ingest and Processing portions of the architecture in the above diagram of a Customer Workflow example. There are three primary concepts:

a feed is a representation of a streamed or scheduled batch data source which has a number of methods for handling file formats and for plugging into various data technologies

a pipeline represents a combination of transformations taking in feeds and other pipelines

a DAG (directed acyclic graph) is generated by the configuration or wiring together of feeds and pipelines as well as supporting methods that execute validation and observability tasks. The generated DAG represents the full workflow for ingesting and processing information in preparation for Revionics’ Science platform
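To make the three concepts concrete, the following is a minimal sketch of how a human-readable configuration could be wired into a DAG description. It is an illustration only: the configuration fields, the feed and pipeline names, and the `generate_dag` function are all hypothetical and are not taken from the Revionics platform. The ordering step uses Python's standard `graphlib` module.

```python
import json
from graphlib import TopologicalSorter

# Hypothetical configuration. Field names and values are assumptions made
# for illustration, not Revionics' actual schema.
CONFIG = {
    "feeds": {
        "sales": {"source": "sftp", "format": "csv", "schedule": "daily"},
        "prices": {"source": "pubsub", "format": "json", "schedule": "stream"},
    },
    "pipelines": {
        "clean_sales": {"inputs": ["sales"], "method": "deduplicate"},
        "price_history": {"inputs": ["prices"], "method": "to_history"},
        "model_input": {
            "inputs": ["clean_sales", "price_history"],
            "method": "join",
        },
    },
}


def generate_dag(config):
    """Wire feeds and pipelines into a DAG description (a plain dict)."""
    known = set(config["feeds"]) | set(config["pipelines"])
    nodes = {}
    for name, feed in config["feeds"].items():
        nodes[name] = {"type": "feed", "depends_on": [], **feed}
    for name, pipeline in config["pipelines"].items():
        unknown = [i for i in pipeline["inputs"] if i not in known]
        if unknown:
            raise ValueError(f"{name} references unknown inputs: {unknown}")
        nodes[name] = {
            "type": "pipeline",
            "depends_on": list(pipeline["inputs"]),
            "method": pipeline["method"],
        }
    # static_order() raises graphlib.CycleError if the wiring has a cycle,
    # which enforces the "acyclic" part of the DAG.
    graph = {name: spec["depends_on"] for name, spec in nodes.items()}
    order = list(TopologicalSorter(graph).static_order())
    return {"nodes": nodes, "order": order}


dag = generate_dag(CONFIG)
dag_json = json.dumps(dag, indent=2)  # the serialized workflow description
```

In this sketch a pipeline can take feeds or other pipelines as inputs, and every node appears in `order` only after the nodes it depends on.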

Let’s explore the benefits of the solution that leverages GCP, as depicted in the DAG Generation flow diagram in Figure 3, below:

The logical flow is a combination of Templates, Configuration and a Library of modular processing methods to generate workflows for ingesting, combining, transforming and composing data in a variety of ways. These are abstracted in a set of human readable configurations, making setup and support accessible to non-engineers. The DAG Generator outputs a JSON file for the entire workflow that can easily be understood.

The Data Platform natively delivers support capabilities such as validations and observability. Configurable validation checks are insertable at various levels for inspecting schema, looking for anomalies, and running statistical checks. Similarly, event logs, metrics and traces are collected by Cloud Logger and consolidated within BigQuery, where they can easily be explored, visualized in dashboards, or used to build ML models.
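As a rough illustration of what configurable validation checks might look like, the sketch below runs a schema check and a simple z-score outlier check driven by a small configuration dict. The function names, the configuration keys and the z-score rule are assumptions chosen for the example; the checks actually used by the platform are not described in this post.

```python
from statistics import mean, pstdev


def check_schema(rows, required_columns):
    """List rows that miss a required column or hold the wrong type."""
    problems = []
    for i, row in enumerate(rows):
        for column, expected_type in required_columns.items():
            if column not in row:
                problems.append(f"row {i}: missing {column}")
            elif not isinstance(row[column], expected_type):
                problems.append(
                    f"row {i}: {column} is not {expected_type.__name__}"
                )
    return problems


def check_outliers(values, z_threshold=3.0):
    """Return indices of values whose z-score exceeds the threshold."""
    mu = mean(values)
    sigma = pstdev(values)
    if sigma == 0:
        return []
    return [
        i for i, v in enumerate(values) if abs(v - mu) / sigma > z_threshold
    ]


def run_validations(rows, config):
    """Run the checks named in a (hypothetical) validation config."""
    report = {"schema": check_schema(rows, config["required_columns"])}
    column = config.get("outlier_column")
    # Only run the statistical check once the schema is known to be sound.
    if column and not report["schema"]:
        values = [row[column] for row in rows]
        report["outliers"] = check_outliers(
            values, config.get("z_threshold", 3.0)
        )
    return report


VALIDATION_CONFIG = {
    "required_columns": {"sku": str, "units": int},
    "outlier_column": "units",
    "z_threshold": 3.0,
}
```

A caller would pass the ingested rows and the retailer-specific configuration to `run_validations` and decide, based on the report, whether the workflow should continue.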

From an architectural standpoint, very little intervention is needed to scale, operate and manage infrastructure. The workflow logic is represented in an execution-agnostic fashion by a DAG JSON file that orchestrates method calls and artifact creation such as Tables, Views, Stored Procedures etc. The DAGs become instruction sets for Airflow, ultimately executed on a Composer Cluster (serverless). DataProc reads from GCS or SQL procedures on BigQuery to do all the heavy lifting in terms of combining data, aggregating, ML feature prep, etc.

The bulk of event-driven processing is achieved using Cloud Functions or Dataflow, with PubSub or Kafka carrying the events. The architecture provides a high level of reliability and zero degradation in performance as requirements for scale and speed vary. The platform thus lets the team focus on building novel approaches to challenging problems while remaining cost conscious on infrastructure spend.
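The idea of an execution-agnostic workflow description can be sketched with a tiny interpreter: the JSON names methods and dependencies, and a separate runner looks each method up in a library and calls it. Everything here is hypothetical (the JSON layout, the method names, and the in-memory runner, which merely stands in for a real orchestrator such as Airflow); it is meant to show the separation between description and execution, not the actual implementation.

```python
import json

# Hypothetical DAG description. The layout is an assumption for illustration.
DAG_JSON = """
{
  "order": ["load_sales", "aggregate", "publish"],
  "nodes": {
    "load_sales": {
      "method": "load",
      "args": {"rows": [["A1", 3], ["A1", 2], ["B7", 5]]},
      "depends_on": []
    },
    "aggregate": {
      "method": "sum_by_key",
      "args": {},
      "depends_on": ["load_sales"]
    },
    "publish": {
      "method": "as_table",
      "args": {"name": "units_by_sku"},
      "depends_on": ["aggregate"]
    }
  }
}
"""


# A small "library" of modular methods. Each takes the outputs of its
# upstream nodes as `inputs`, plus any configured arguments.
def load(inputs, rows):
    return [tuple(row) for row in rows]


def sum_by_key(inputs):
    totals = {}
    for key, value in inputs[0]:
        totals[key] = totals.get(key, 0) + value
    return totals


def as_table(inputs, name):
    return {"table": name, "rows": sorted(inputs[0].items())}


LIBRARY = {"load": load, "sum_by_key": sum_by_key, "as_table": as_table}


def run_dag(dag, library):
    """Execute nodes in the given order, feeding each its dependencies."""
    results = {}
    for node_name in dag["order"]:
        node = dag["nodes"][node_name]
        inputs = [results[dep] for dep in node["depends_on"]]
        results[node_name] = library[node["method"]](inputs, **node["args"])
    return results


results = run_dag(json.loads(DAG_JSON), LIBRARY)
```

Because the JSON only names methods and dependencies, the same description could in principle be handed to a different executor without changing the workflow itself.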

2. Synthesis of information (balancing scale and skill)

At the heart of Revionics’ pricing process is an AI/ML engine used to synthesize the combined data signals that express the sources and contexts for demand of retail goods over zones, stores and customer segments.

Trained models learn to explain the influence of a multitude of features, such as seasonal effects, inflation trends, cannibalization across products, and competitive pricing on the quantity of products sold at a particular location and/or to a particular customer segment. These models are then used to forecast the impact of changes in price or in the structure of promotional offers given all known contexts prevalent during the time interval of interest.

The forecasts drive optimization processes that balance one or more business objectives (e.g. profit, margin, revenue or total units) while adhering to constraints based on the retailer’s operations and desired outcomes.

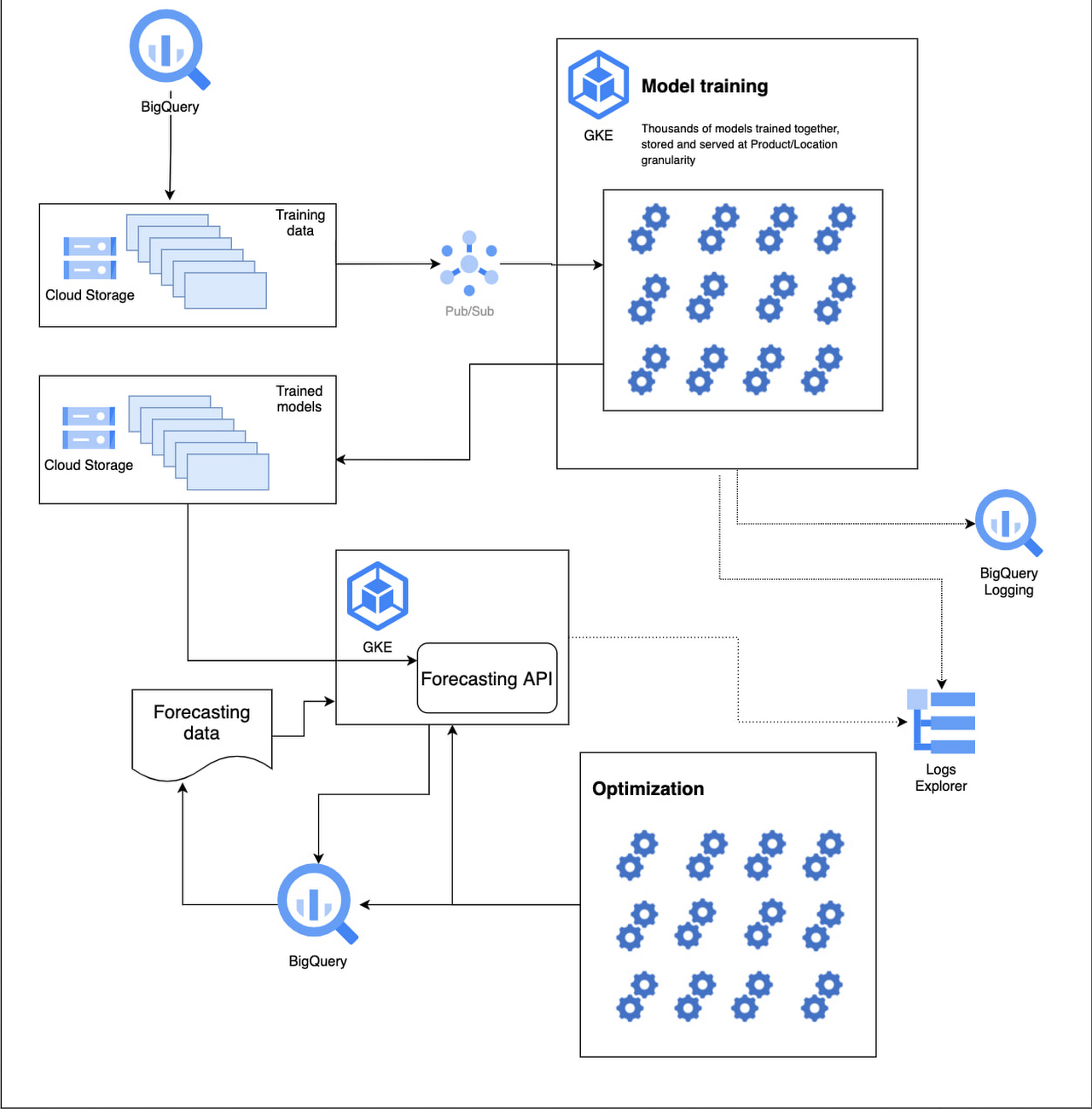

Figure 4 shows the data science modeling aspects employed by Revionics, leveraging the benefits of the GCP platform. For example:

A retailer may want to optimize profit on its private label brands, while maintaining a minimum and maximum relative price differential to certain premium brands in a product category.

A retailer may look to set a markdown or clearance schedule while capturing as much revenue as possible using only discount multiples of 10%.

A retailer may want to optimize the discount level of a brand of flat screen TVs to maximize profitability over the whole category along with its related products.
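The markdown example above can be sketched as a small search over allowed discount levels. This is a toy illustration, not Revionics' optimizer: it assumes a constant-elasticity demand curve, a single product, an inventory cap as the only constraint, and a hypothetical `revenue_weight` parameter that blends revenue and profit into one score.

```python
def forecast_units(price, base_price, base_units, elasticity):
    """Constant-elasticity demand forecast (an assumed, simplified model)."""
    return base_units * (price / base_price) ** elasticity


def best_discount(base_price, base_units, elasticity, unit_cost, inventory,
                  revenue_weight=1.0):
    """Pick the discount (a multiple of 10%) with the highest blended score.

    revenue_weight=1.0 optimizes revenue only; 0.0 optimizes profit only.
    """
    best = None
    for discount_pct in range(0, 100, 10):  # 0%, 10%, ..., 90%
        price = base_price * (100 - discount_pct) / 100
        demand = forecast_units(price, base_price, base_units, elasticity)
        units = min(demand, inventory)  # cannot sell more than is in stock
        revenue = price * units
        profit = (price - unit_cost) * units
        score = revenue_weight * revenue + (1 - revenue_weight) * profit
        if best is None or score > best["score"]:
            best = {
                "discount_pct": discount_pct,
                "price": price,
                "units": units,
                "revenue": revenue,
                "profit": profit,
                "score": score,
            }
    return best


# Illustrative inputs only; none of these numbers come from real data.
revenue_choice = best_discount(
    base_price=100.0, base_units=50.0, elasticity=-2.0,
    unit_cost=40.0, inventory=120.0, revenue_weight=1.0,
)
profit_choice = best_discount(
    base_price=100.0, base_units=50.0, elasticity=-2.0,
    unit_cost=40.0, inventory=120.0, revenue_weight=0.0,
)
```

With these made-up inputs, the revenue-only objective settles on a deeper discount than the profit-only objective, which shows why the weighting of objectives matters to the resulting recommendation.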

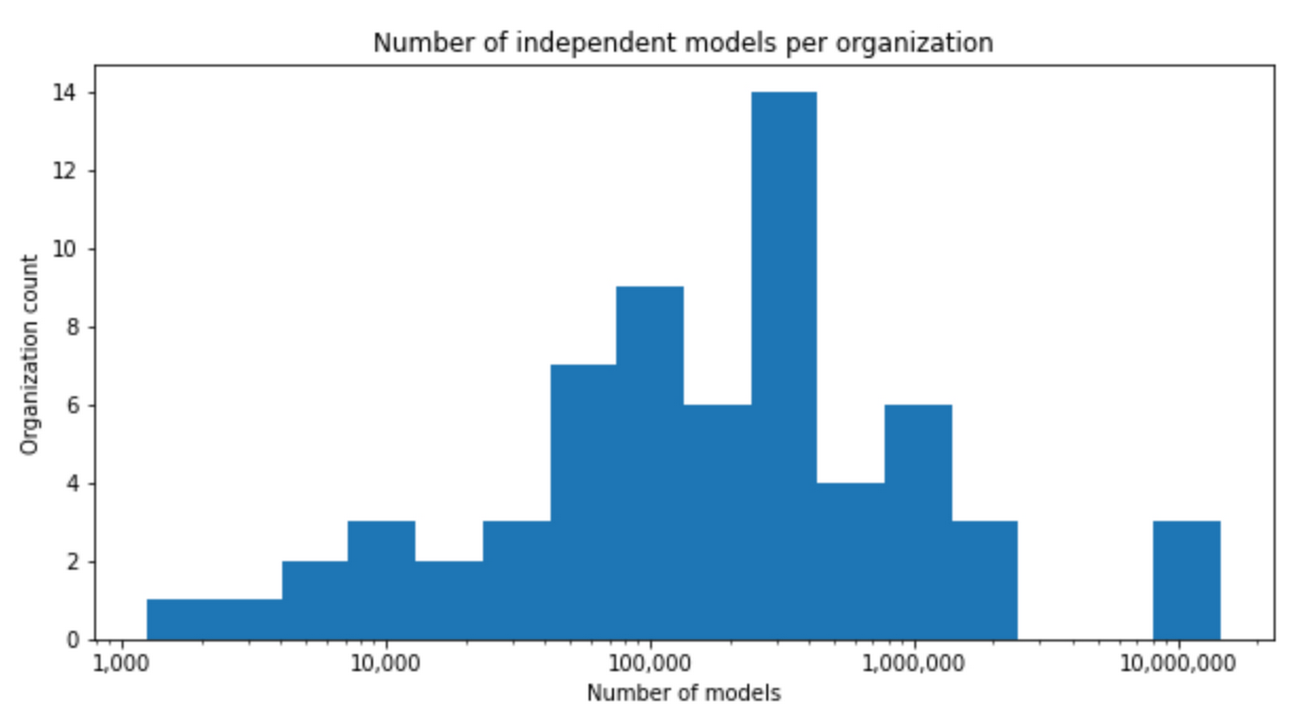

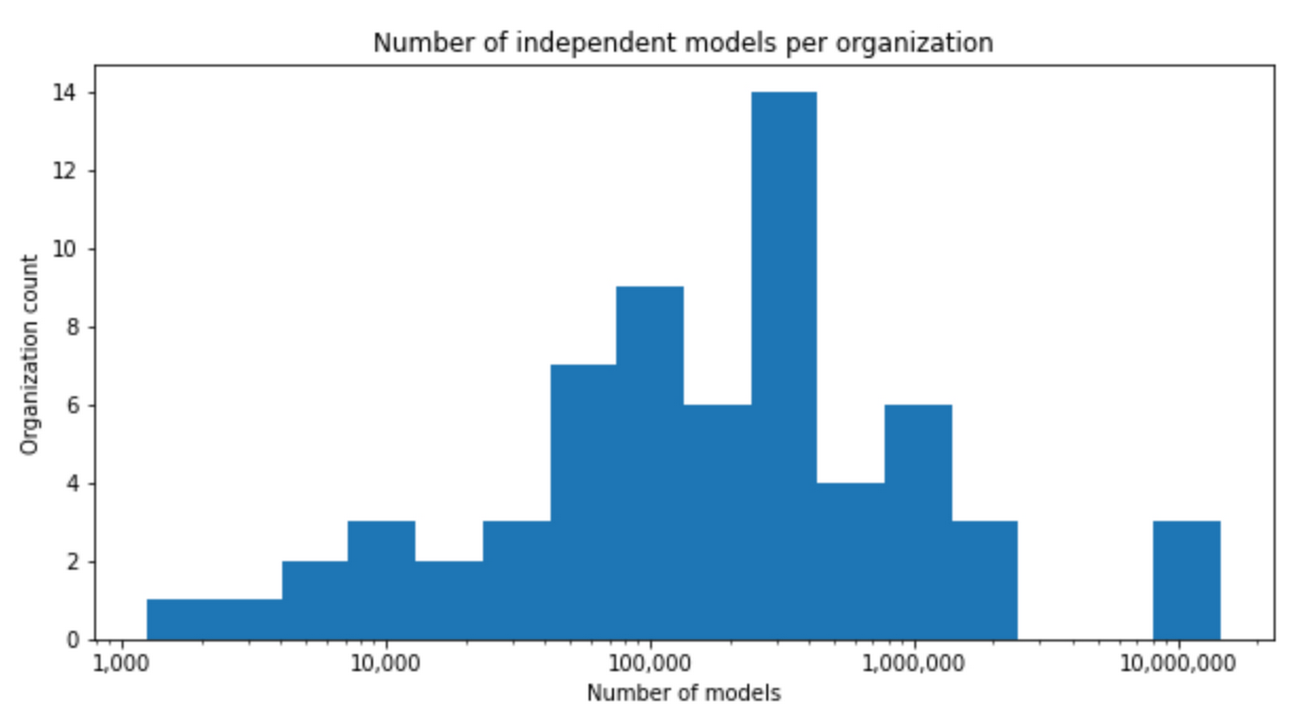

The primary technical challenge within the AI/ML domain for pricing is in balancing scalability with predictive skill during modeling, forecasting and optimization. Keep in mind that our median customer has 500,000 independent models that need to be trained (see the histogram in Figure 5); each training run is inherently iterative and computationally intensive. While the details of the science are beyond the scope of this blog, from a system perspective, Revionics’ architecture combines:

Two proprietary AI/ML frameworks: Probabilistic Programming Language (PPL), for expressing AI/ML models of pricing domain behavior, and the Grid, for dynamically provisioning infrastructure and orchestrating millions of parallel, separable modeling jobs based on statistical dependencies.

Google-led open source platforms: TensorFlow for its rich machine learning library and framework and TensorFlow Probability for probabilistic modeling methods

Several of Google Cloud serverless services for data storage, compute, messaging, containerization & logging - GCS, BigQuery, PubSub, GKE & Logs Explorer.
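One way to picture "separable modeling jobs based on statistical dependencies" is to treat products as nodes, dependencies (for example, cannibalization between two products) as edges, and each connected group as a job that can be trained independently of the others. The sketch below does this with a union-find structure. It is an assumption about the general idea only; how the Grid actually partitions work is proprietary and not described in this post, and the product names are invented.

```python
def separable_groups(items, dependencies):
    """Group items so that no dependency crosses a group boundary."""
    parent = {item: item for item in items}

    def find(x):
        # Walk to the root, shortening the path along the way.
        while parent[x] != x:
            parent[x] = parent[parent[x]]
            x = parent[x]
        return x

    for a, b in dependencies:
        root_a, root_b = find(a), find(b)
        if root_a != root_b:
            parent[root_b] = root_a

    groups = {}
    for item in items:
        groups.setdefault(find(item), []).append(item)
    # Sort for a stable, readable result.
    return sorted(sorted(group) for group in groups.values())


# Hypothetical products and dependencies, for illustration only.
products = ["cola", "diet_cola", "chips", "salsa", "batteries"]
links = [("cola", "diet_cola"), ("chips", "salsa")]
jobs = separable_groups(products, links)
```

Each resulting group could then be submitted as its own job, since by construction nothing in one group depends statistically on anything in another.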

Again, what is noteworthy about this solution is the breadth of challenges and capabilities that don’t have to be solved by Revionics because the services sit on infrastructure where sizing, configuration, monitoring, deployment and scaling are managed as a platform service.

Revionics’ modeling framework leverages a Hierarchical Bayesian methodology that can optimize each individual retailer’s product, store and customer relationships. This is a key aspect of differentiation from a predictive skill perspective, as complex relationships between entities can be preserved with a learned reduction of an otherwise intractable problem space.
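The intuition behind a hierarchical approach can be shown with the simplest possible case: a normal-normal model in which each store's estimate is pulled toward a group-level mean, more strongly when the store has little data. This is a textbook illustration under strong assumptions (known variances, a group mean taken as given, a single level of hierarchy) and is not Revionics' model; all names and numbers are hypothetical.

```python
def partial_pooling(store_means, store_counts, noise_var, group_mean,
                    group_var):
    """Posterior mean per store under a normal-normal model.

    Each store's observed mean is blended with the group mean, weighted by
    how much data the store has relative to the prior's precision.
    """
    pooled = []
    for observed_mean, n in zip(store_means, store_counts):
        data_precision = n / noise_var
        prior_precision = 1.0 / group_var
        weight = data_precision / (data_precision + prior_precision)
        pooled.append(weight * observed_mean + (1 - weight) * group_mean)
    return pooled


# Illustrative price elasticities: one store with lots of history and one
# with a single observation.
estimates = partial_pooling(
    store_means=[-1.0, -3.0],
    store_counts=[100, 1],
    noise_var=1.0,
    group_mean=-2.0,
    group_var=1.0,
)
```

The data-rich store keeps an estimate close to its own observed value, while the sparse store is shrunk halfway toward the group mean, which is how relationships across stores or products can inform entities with little history.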

3. Decision making and Action

The DAG generation-based architectural pattern used in the last two steps of the pricing process handles the need for Automated Intelligence described earlier. Note that the purpose of the final steps in the pricing process is:

To expose outputs from the data science modeling and price recommendations to teams or systems for decision making about price-related activities such as increases / decreases or promotional offers

To take actions, or to actualize pricing decisions, as well as to inform stakeholders affected by the changes being made

The Automated Intelligence that Google Cloud Platform makes possible lets us scale these steps by providing well-designed APIs that allow users to integrate with and build on the platform’s output. However, AI/ML-based applications have specific challenges that need to be overcome in pricing.

One of these challenges is to build trust through explainability and greater transparency of the decisions behind the models. Because AI/ML software is non-deterministic, poorly understood by non-practitioners, and often replaces human processes, confidence isn’t easy to engender.

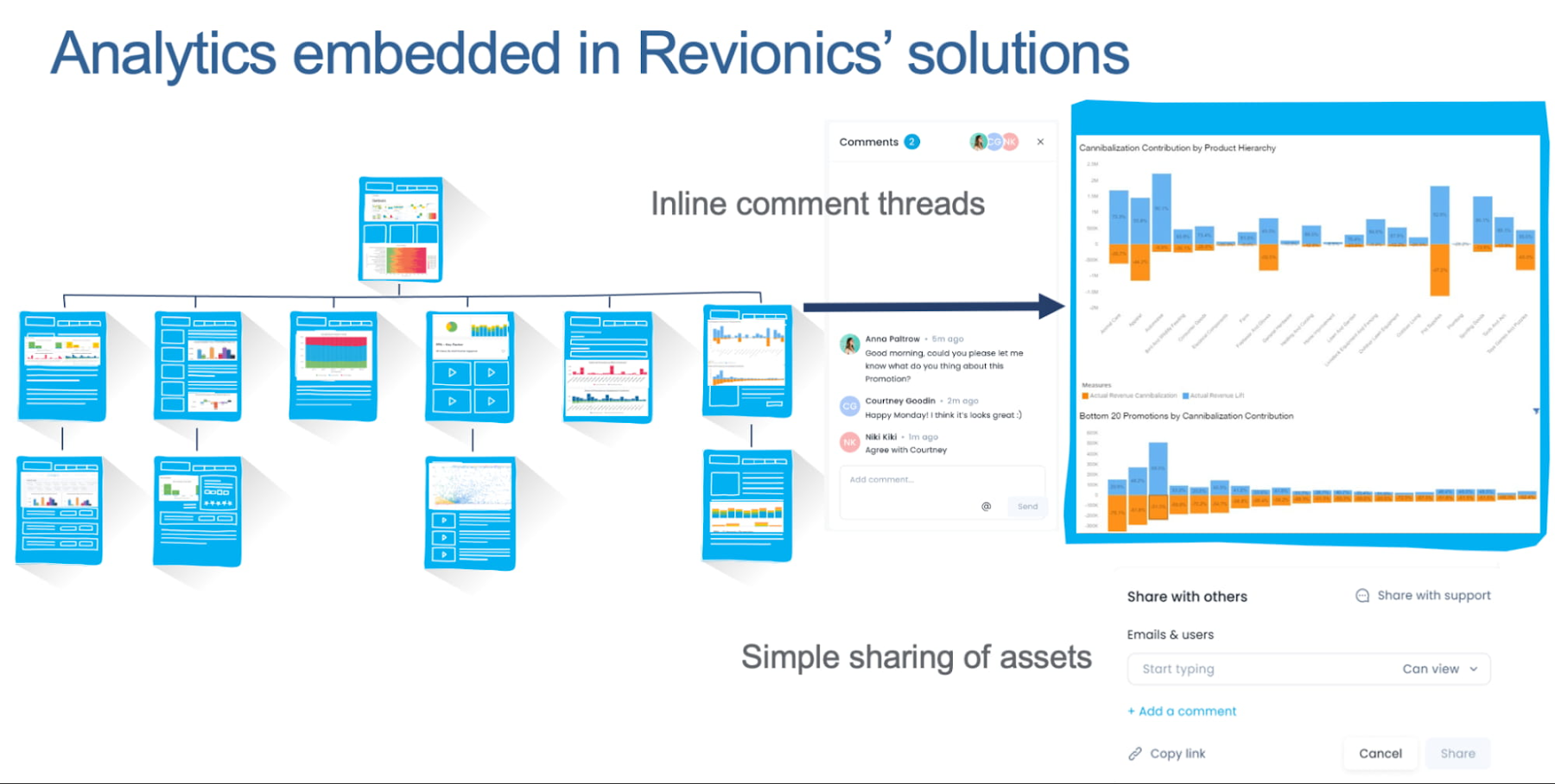

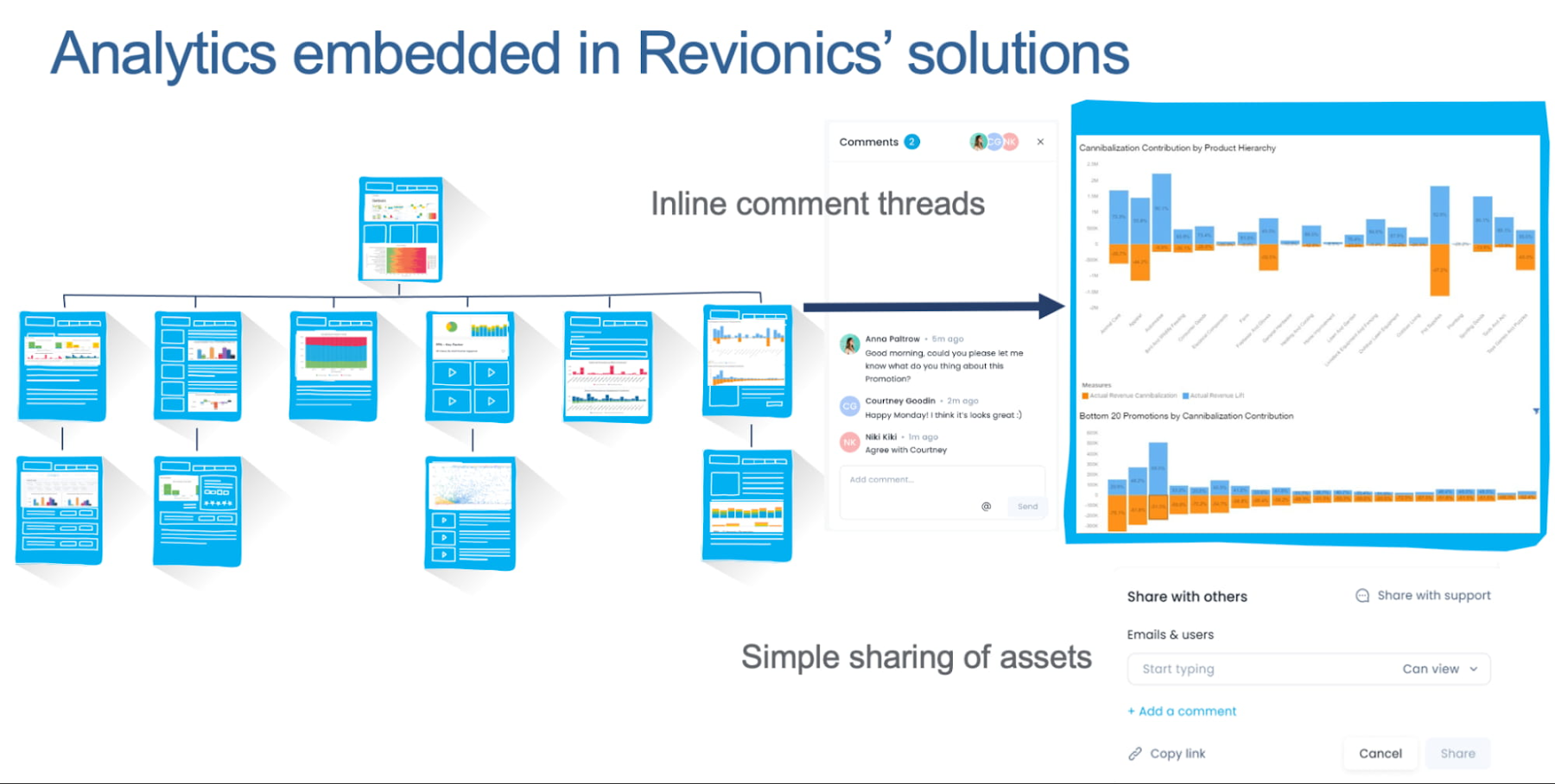

Visibility is the best asset for creating trust, which is why Revionics places BigQuery and Looker at the center of our approach. With these technologies, reports and visualizations are interwoven into all aspects of the Revionics solution, creating a clear line of sight from data to decisions. This gives users visibility into:

Forecasted business results from recommendations - for example, by making it easy for a user to understand the weighting of profit and revenue in a multi-objective optimization resulting in a particular price recommendation

Statistical confidence around decision variables - by showing visualizations and metrics such as 95% credibility intervals around price elasticity, for example

AI/ML model output analytics over time - including histograms, statistical metrics, outlier detection and the like that express the health of the models
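As an example of the statistical confidence mentioned above, a 95% credible interval can be read directly off posterior samples of a quantity such as price elasticity. The sketch below uses a simple equal-tailed percentile interval with linear interpolation. The samples are synthetic, drawn from a normal distribution purely for illustration; in practice they would come from the fitted model.

```python
import random


def percentile(ordered, q):
    """Linearly interpolated percentile of an already sorted list."""
    position = q * (len(ordered) - 1)
    lower = int(position)
    upper = min(lower + 1, len(ordered) - 1)
    fraction = position - lower
    return ordered[lower] + fraction * (ordered[upper] - ordered[lower])


def credible_interval(samples, mass=0.95):
    """Equal-tailed credible interval containing `mass` of the samples."""
    ordered = sorted(samples)
    tail = (1.0 - mass) / 2.0
    return percentile(ordered, tail), percentile(ordered, 1.0 - tail)


# Synthetic stand-in for posterior samples of a price elasticity.
rng = random.Random(0)
elasticity_samples = [rng.gauss(-1.8, 0.2) for _ in range(4000)]
low, high = credible_interval(elasticity_samples)
```

A narrow interval signals that the model is confident about how demand responds to price, while a wide one is a cue for the user to treat the recommendation with more caution.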

Figure 6: Analytics Embedded in Solution

Automated Intelligence. The Looker + BigQuery combination is particularly effective because:

Performance in high-dimensional analytics. Its ability to maintain performance at scale in the context of high-dimensional analytics without the need for manual intervention.

Personalization. Its capacity for personalized, business-relevant views and reports that reflect the specific conditions and metrics representative of the business.

Collaboration. In order to gain the confidence of people whose jobs are not pricing (e.g. category managers, merchandisers, executives), users have to be able to engage with and share analytical content in a completely frictionless way. In the Revionics solution, people within the organization who have no user login nor experience with the tool can view any analytical asset as well as engage with in-app comment threads.

Triggers. Additionally, analytical assets, such as reports and datasets, can also be scheduled or triggered for export.

Enhancing Performance and Managing Cost. Due to the scale of the data that is needed to drive downstream processes, APIs or even distributed streaming-based egress approaches are not always ideal. To resolve this, Revionics is exploring the use of Google’s Analytics Hub. The service provides the ability to create and securely share BigQuery datasets, tables and views that are simply available within the customer’s environment, which is an incredibly powerful tool for increasing the impact of Automated Intelligence. The benefit of exchanging data via Analytics Hub is that we can preserve flexibility for users at scale in the system they are already likely to be using to drive their analytics, stream, and execute large transformations. In addition, Analytics Hub provides the levers to create exchanges and listings in Revionics’ SaaS solution without having to move data, which makes it highly cost-efficient.

In particular, use cases that require very granular data from Revionics’ pricing system to be combined with a retailer’s other source data are very well served here. In a more tangible sense, we foresee users automating eCommerce-related capabilities, merchandising processes and digital marketing systems, in addition to any number of operational use cases that we have yet to conceive.

Outcomes

By building on the Google Cloud Platform and data cloud, Revionics has built and hosted solutions that have yielded numerous benefits. Some of the notable outcomes are:

Enhanced speed and agility: By replacing customer-specific stored procedures and scripts with human readable configurations, the solution has lowered the barrier for variable data transformation logic and better validation logic. All of these have made the solution easier to configure and more agile.

Improved stability: Because the solution relies heavily on data quality, several of its constructs improve data hygiene, which has helped in meeting SLAs and reducing downtime. Collectively, these have reduced the number of customer support issues.

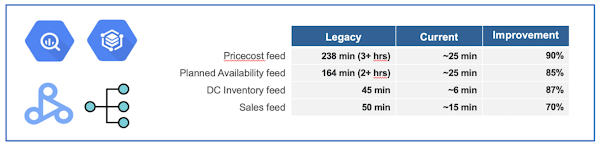

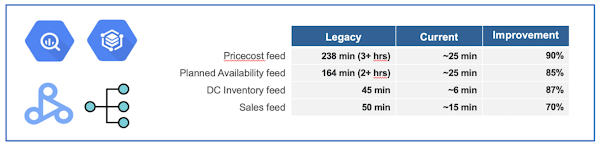

Rapid Data processing: The accompanying graphic shows the multi-fold improvement in various parts of the data processing pipeline with progressively increasing volumes.

Faster Technical implementations: Time to value has shortened, enabled by design and performance. For instance, test cycles and customer feedback have sped up, so quality standards are reached sooner, and historical loads run significantly faster.

Increased accuracy: The forecast accuracy grew by a greater percent while maintaining training and optimization over the first phase of customers migrating to the new platform.

Decision making: Rising statistical and decision confidence led to higher-impact results and better System Usability Scale (SUS) scores.

Click here to learn more about Revionics.

The Built with BigQuery advantage for ISVs

Google is helping tech companies like Revionics build innovative applications on Google’s data cloud with simplified access to technology, helpful and dedicated engineering support, and joint go-to-market programs through the Built with BigQuery initiative, launched in April as part of the Google Data Cloud Summit. Participating companies can:

Get started fast with a Google-funded, pre-configured sandbox.

Accelerate product design and architecture through access to designated experts from the ISV Center of Excellence who can provide insight into key use cases, architectural patterns, and best practices.

Amplify success with joint marketing programs to drive awareness, generate demand, and increase adoption.

BigQuery gives ISVs the advantage of a powerful, highly scalable data warehouse that’s integrated with Google Cloud’s open, secure, sustainable platform. And with a huge partner ecosystem and support for multi-cloud, open source tools and APIs, Google provides technology companies the portability and extensibility they need to avoid data lock-in.

Click here to learn more about Built with BigQuery.

We thank the Google Cloud and Revionics team members who co-authored the blog: Revionics: Aakriti Bhargava, Director Platform Engineering. Google: Sujit Khasnis, Cloud Partner Engineering