Comparing GKE Autopilot cost efficiency to traditional managed Kubernetes

Victor Szalvay

Product Manager

Stan Drapkin

Chief Technologist, Cloud, EPAM Systems, Inc.

GKE Autopilot is the default and recommended mode of cluster operation in Google Kubernetes Engine (GKE), and we’ve told you how it can save you up to 85% on your Kubernetes total cost of ownership (TCO) [1] compared with traditional managed Kubernetes. That’s a lot of potential savings, to say nothing of the operational savings that GKE Autopilot users enjoy.

But how? Where do these cost efficiencies come from? More to the point, how can you use Autopilot mode to save yourself the most money? In this blog post, we dig into how GKE Autopilot pricing works, and explore the types of considerations you should be factoring into your Kubernetes cost-efficiency analysis.

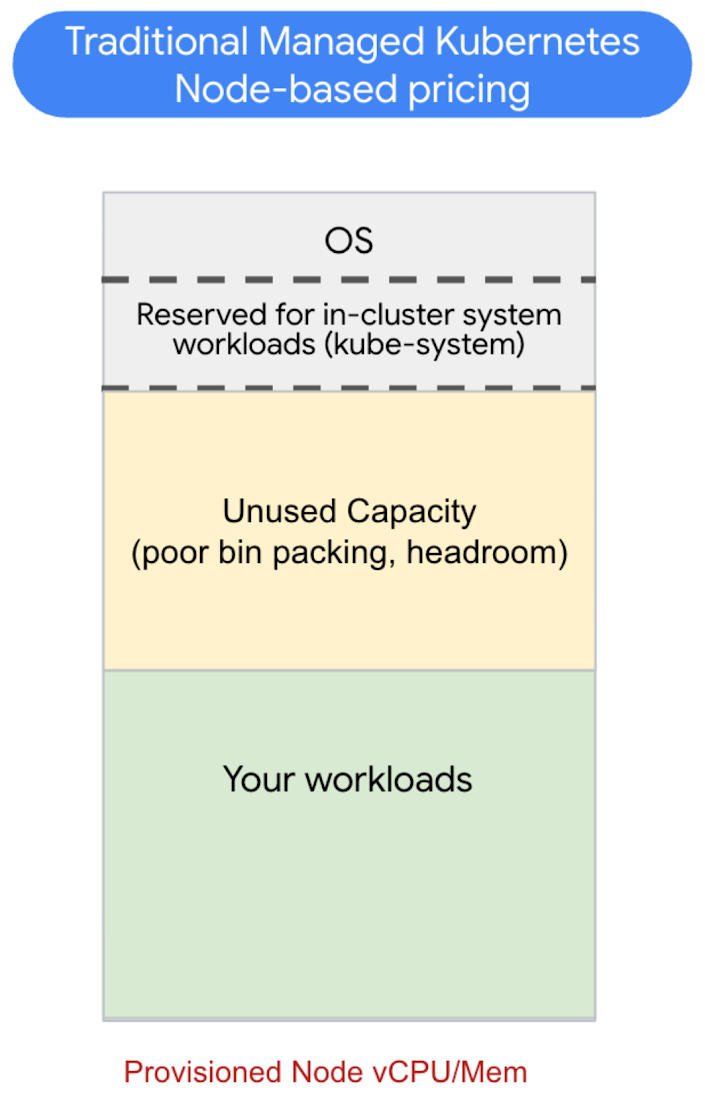

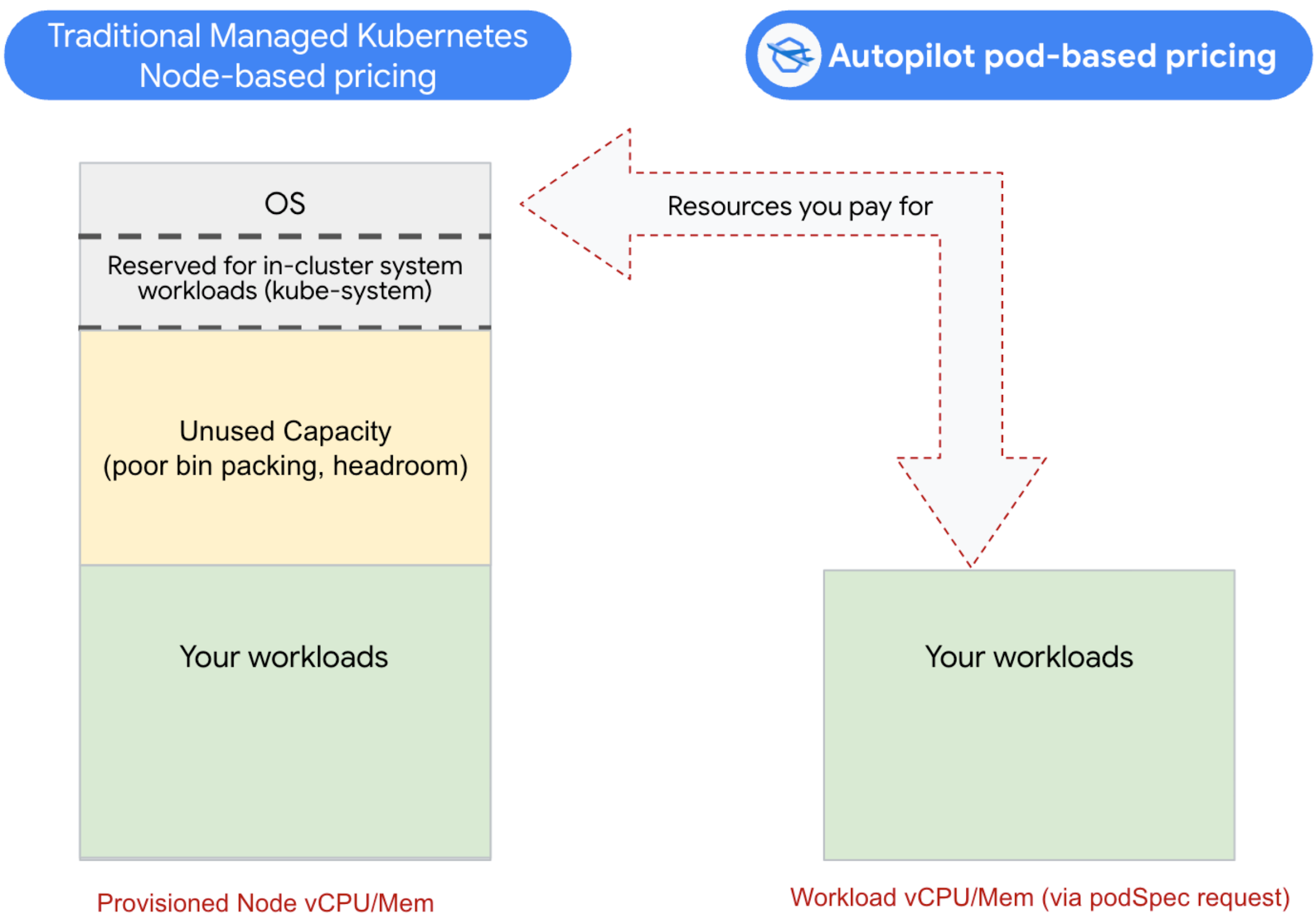

Traditional managed Kubernetes pricing model

But first, let’s review how pricing works in a traditional managed Kubernetes environment. Kubernetes clusters run their workloads on nodes — underlying compute and infrastructure resources (usually virtual machines, or VMs). With traditional managed Kubernetes, you are indirectly paying for those provisioned nodes including their CPU/Memory ratios and other attributes, e.g., which Compute Engine machine family they are running on.

Kubernetes uses those available node resources to run and scale its workloads. This usually happens automatically through Kubernetes scheduling. And how are you billed for this? You pay for the entirety of the underlying nodes, regardless of how efficiently those nodes are utilized by workloads. In other words, it’s up to you to make efficient use of the provisioned resources.

Further, only a portion of each node is actually available for your workloads. In addition to your containers, nodes also run an operating system (e.g., Container-Optimized OS) and several high-priority Kubernetes system components. Cloud providers also often run additional services on your Kubernetes nodes. All of those consume resources. So depending on your node capacity, a sizable chunk of your node isn’t even available to use, yet you are still paying for the whole thing.
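To get a feel for how much of a node is set aside, here is a rough sketch of the tiered reservation scheme that the GKE Standard documentation describes for node allocatable resources. The tier widths and rates below are assumptions recalled from that documentation and should be checked against the current version; the sketch also ignores the eviction threshold, the special case for very small nodes, and any provider add-ons.

```python
# Tiered reservation rates (assumed from GKE Standard documentation; verify before relying on them).
# Each tuple is (tier width, fraction of that tier reserved for the system).
MEMORY_TIERS_GIB = [(4, 0.25), (4, 0.20), (8, 0.10), (112, 0.06), (float("inf"), 0.02)]
CPU_TIERS_CORES = [(1, 0.06), (1, 0.01), (2, 0.005), (float("inf"), 0.0025)]


def reserved(capacity, tiers):
    """Sum the reserved amount tier by tier until the node capacity is used up."""
    total = 0.0
    remaining = capacity
    for width, rate in tiers:
        portion = min(remaining, width)
        total += portion * rate
        remaining -= portion
        if remaining <= 0:
            break
    return total


# An e2-standard-8 node has 8 vCPU and 32 GB of memory.
cpu_reserved = reserved(8, CPU_TIERS_CORES)
mem_reserved = reserved(32, MEMORY_TIERS_GIB)

print(f"CPU reserved:    {cpu_reserved:.2f} of 8 cores")
print(f"Memory reserved: {mem_reserved:.2f} of 32 GiB")
```

Under these assumed tiers, roughly a tenth of the node's memory is unavailable to your pods before any workload is scheduled, and you pay for it either way.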

Even if you weren’t paying for all these system-level resources, it would still be challenging to cost-optimize a traditional managed Kubernetes environment. If you think of nodes as “bins” of resources, then “bin packing” is the art of efficiently running your workloads in the available node capacity. Since a node has finite resources, you have to carefully consider the shape and size of your provisioned nodes to match your workload resource requirements. In practice, this is difficult to do well consistently: you have to leave enough headroom for scale events while keeping on top of workload changes such as scaling and pod lifecycle.
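The bin-packing problem can be illustrated with a small sketch. The code below uses first-fit decreasing, a textbook heuristic, over CPU only; it is not how the Kubernetes scheduler works, and the pod sizes are hypothetical. It shows how a set of requests that looks modest can still leave paid-for capacity stranded.

```python
def first_fit_decreasing(pod_cpu_requests, node_cpu):
    """Place each pod on the first node with room, largest pods first.

    Returns the free CPU left on each node that had to be opened.
    """
    free = []  # remaining CPU on each node opened so far
    for request in sorted(pod_cpu_requests, reverse=True):
        if request > node_cpu:
            raise ValueError(f"a pod requesting {request} vCPU fits on no node")
        for i, capacity in enumerate(free):
            if request <= capacity:
                free[i] = capacity - request
                break
        else:
            # No existing node has room, so open (and pay for) a new one.
            free.append(node_cpu - request)
    return free


NODE_CPU = 8                      # e.g. an 8 vCPU node
pods = [3, 3, 3, 3, 3, 2, 2]      # hypothetical per-pod vCPU requests

free = first_fit_decreasing(pods, NODE_CPU)
nodes = len(free)
utilization = sum(pods) / (nodes * NODE_CPU)

print(f"nodes needed: {nodes}, free vCPU per node: {free}")
print(f"utilization of paid-for vCPU: {utilization:.0%}")
```

Here 19 vCPU of requests need three 8 vCPU nodes, so about a fifth of the provisioned CPU sits idle even before headroom for spikes is added.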

GKE Autopilot pricing model

So, having established that you’re likely not bin packing your Kubernetes clusters anywhere near 100% efficiency, what’s the alternative? GKE Autopilot mode provides a novel approach: pay only for the resources your workload requests, and leave the bin-packing to GKE. In this model, each workload specifies the resources it needs — the machine family type, CPU, GPU, memory, storage, spot, etc. — and you only pay for those requested resources while the workload is running.
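Because billing follows requests, a useful first step is to total what your workloads ask for. The sketch below sums `resources.requests` across replicas, using hypothetical workload names and sizes, and parsing only a subset of Kubernetes quantity suffixes. It does not model any adjustments Autopilot may apply to requests (such as minimums or CPU-to-memory ratios).

```python
from decimal import Decimal

# Subset of the suffixes Kubernetes accepts for memory quantities.
# Two-letter binary suffixes are listed first so "Mi" is matched before "M".
_SUFFIXES = {"Ki": 2**10, "Mi": 2**20, "Gi": 2**30, "k": 10**3, "M": 10**6, "G": 10**9}


def parse_cpu(quantity):
    """Return CPU in cores; '500m' means 500 millicores."""
    if quantity.endswith("m"):
        return Decimal(quantity[:-1]) / 1000
    return Decimal(quantity)


def parse_memory(quantity):
    """Return memory in bytes for quantities such as '2Gi' or '512Mi'."""
    for suffix, factor in _SUFFIXES.items():
        if quantity.endswith(suffix):
            return Decimal(quantity[: -len(suffix)]) * factor
    return Decimal(quantity)


# Hypothetical workloads; the "requests" dicts mirror resources.requests in a Pod spec.
workloads = [
    {"name": "frontend", "replicas": 6, "requests": {"cpu": "500m", "memory": "2Gi"}},
    {"name": "ledger", "replicas": 3, "requests": {"cpu": "2", "memory": "8Gi"}},
]

total_cpu = sum(w["replicas"] * parse_cpu(w["requests"]["cpu"]) for w in workloads)
total_mem_gib = sum(
    w["replicas"] * parse_memory(w["requests"]["memory"]) for w in workloads
) / 2**30

print(f"total requested: {total_cpu} vCPU, {total_mem_gib} GiB")
```

These totals, rather than the size of any node pool, are the quantities to plug into an Autopilot cost estimate.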

With Autopilot, you don’t pay for the OS, system workloads, or any unused capacity on the node. In fact, node efficiency is left entirely for GKE to manage. This has the added benefit of letting each workload define what it needs with regard to machine families and node characteristics, without needing to provision finite node pools.

So what’s the catch? Compared to node/VM pricing, Autopilot resource unit pricing is more expensive. But that does not mean GKE Autopilot is pricier than traditional managed Kubernetes overall. In fact, there’s a break-even utilization level below which Autopilot is actually cheaper than traditional managed Kubernetes.
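The break-even point can be sketched for a single resource. If a provisioned unit costs `node_unit_price` and a requested unit on Autopilot costs `autopilot_unit_price`, the two bills are equal when the fraction of provisioned capacity that is actually requested equals the ratio of the two prices. The function names and the prices below are placeholders for illustration, not list prices, and the sketch ignores memory, discounts, and cluster fees.

```python
def break_even_utilization(node_unit_price, autopilot_unit_price):
    """Utilization at which node-based and request-based billing cost the same.

    Node-based cost      = provisioned * node_unit_price
    Request-based cost   = requested   * autopilot_unit_price
    They are equal when requested / provisioned = node_unit_price / autopilot_unit_price.
    """
    if autopilot_unit_price <= 0:
        raise ValueError("autopilot_unit_price must be positive")
    return node_unit_price / autopilot_unit_price


def cheaper_mode(utilization, node_unit_price, autopilot_unit_price):
    """Return which billing model is cheaper at a given utilization (0.0 to 1.0)."""
    threshold = break_even_utilization(node_unit_price, autopilot_unit_price)
    return "autopilot" if utilization < threshold else "standard"


# Placeholder prices: a requested unit costs 1.5x a provisioned unit.
NODE_PRICE = 2.0
AUTOPILOT_PRICE = 3.0

threshold = break_even_utilization(NODE_PRICE, AUTOPILOT_PRICE)
print(f"break-even utilization: {threshold:.0%}")
for u in (0.5, 0.8):
    print(f"at {u:.0%} utilization, cheaper mode: {cheaper_mode(u, NODE_PRICE, AUTOPILOT_PRICE)}")
```

With your own unit prices substituted in, the threshold tells you how well packed a node-based cluster must be before it beats paying per request.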

A sample cost comparison

To illustrate how to do a cost analysis for your own workloads, let’s look at running the same workloads in a high-availability configuration on both traditional managed Kubernetes and GKE Autopilot mode, and compare the costs. To keep it simple, we’ll stick with vCPU and memory, although you could easily add storage to this framework.

On traditional managed Kubernetes, we provision a cluster with 12 e2-standard-8 type nodes, spread across three zones for HA. This adds up to 96 vCPU / 384 GB memory, for a total list-price cost of about $2,545 per month.

For Autopilot, we just specify the workloads' required resources in the pod spec, without having to set up node pools. After deploying, we use GKE’s built-in cost optimization tool to analyze our actual usage, and find that our workloads have a mostly static usage pattern, with periodic spikes. We therefore set our resource requests to about 15% above that static baseline and configure Horizontal Pod Autoscaling (HPA) to deal with the spikes.

We know that our target environment requires a certain number of replicas, each of which requests CPU and RAM via the resources.requests Pod specification, totaling 48 vCPU and 192 GB of RAM for about 96% of the time. HPA increases the replica count threefold during scale events for the remaining 4% of the time (about an hour per day). With this setup, the total list-price cost on Autopilot is approximately $2,250 per month — about 12% less than the equivalent setup on managed Kubernetes.
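The figures in this example can be cross-checked with a little arithmetic. The sketch below computes the time-weighted average of requested resources and the relative saving from the two monthly totals quoted above. It assumes that "threefold" means requests are three times the baseline during spikes; it does not reproduce the dollar amounts themselves, since unit prices are not given here.

```python
def time_weighted_average(baseline, spike_multiplier, spike_share):
    """Average requested amount when spikes occupy spike_share of the time."""
    return baseline * (1 - spike_share) + baseline * spike_multiplier * spike_share


def relative_saving(reference_cost, alternative_cost):
    """Fraction saved by the alternative compared with the reference."""
    return (reference_cost - alternative_cost) / reference_cost


PROVISIONED_VCPU = 12 * 8        # 12 e2-standard-8 nodes, 8 vCPU each
PROVISIONED_GB = 12 * 32         # 32 GB of memory each

avg_vcpu = time_weighted_average(48, spike_multiplier=3, spike_share=0.04)
avg_gb = time_weighted_average(192, spike_multiplier=3, spike_share=0.04)
requested_share = avg_vcpu / PROVISIONED_VCPU
saving = relative_saving(2545, 2250)   # monthly list-price totals from the text

print(f"average requested: {avg_vcpu:.2f} vCPU, {avg_gb:.2f} GB")
print(f"share of provisioned vCPU actually requested: {requested_share:.0%}")
print(f"Autopilot saving vs. node-based cluster: {saving:.1%}")
```

On average the workloads request a little over half of what the 12-node cluster provisions, which is why the higher Autopilot unit price still yields a lower bill in this scenario.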

You may have some questions about this example. For instance, why didn’t we just provision fewer nodes on GKE Standard to begin with? There are two reasons:

Because bin packing is difficult in practice. We will not be able to achieve the same level of bin packing on GKE Standard that we get on Autopilot, so we provision extra capacity to ensure we don’t hit any unintended limits.

There are some spikes in our usage, and we need spare capacity available to handle them.

All told, GKE Autopilot makes more efficient use of resources, so there is less guesswork and fewer chances of creating an inefficient setup.

Guest perspective: How EPAM customers save with Autopilot

by Stan Drapkin – North American Google Cloud Chief Technologist, EPAM Systems, Inc.

We recommend Autopilot for cost-conscious customers

There are several reasons why GKE Autopilot is widely adopted by our customers. First, it simplifies the management of Kubernetes clusters by automating many tasks, such as node provisioning, scaling and upgrades, which can be time-consuming and error-prone. This frees up developers and operators to focus on building and deploying applications rather than managing infrastructure.

Second, GKE Autopilot provides a highly secure and reliable Kubernetes environment by default. It implements industry best practices for security and includes features like node auto-repair, which automatically replaces unhealthy nodes, and horizontal pod autoscaling, which automatically adjusts resources based on application demand.

Comparing costs on Autopilot

There’s no one generic benchmark that adequately quantifies GKE Autopilot vs. GKE Standard, because every application is different. The best way to compare is to deploy an application on both GKE Standard and GKE Autopilot and compare the costs incurred.

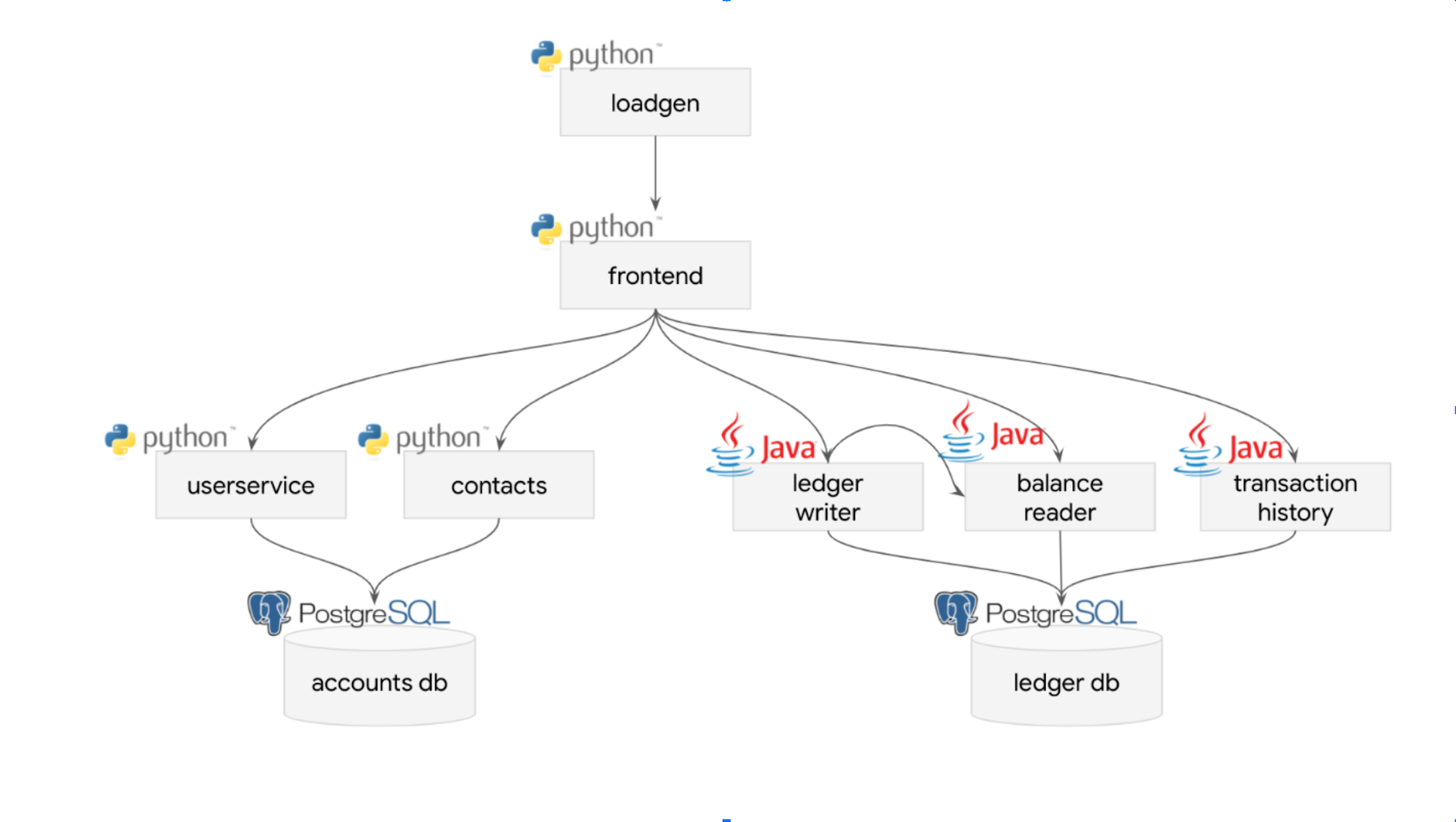

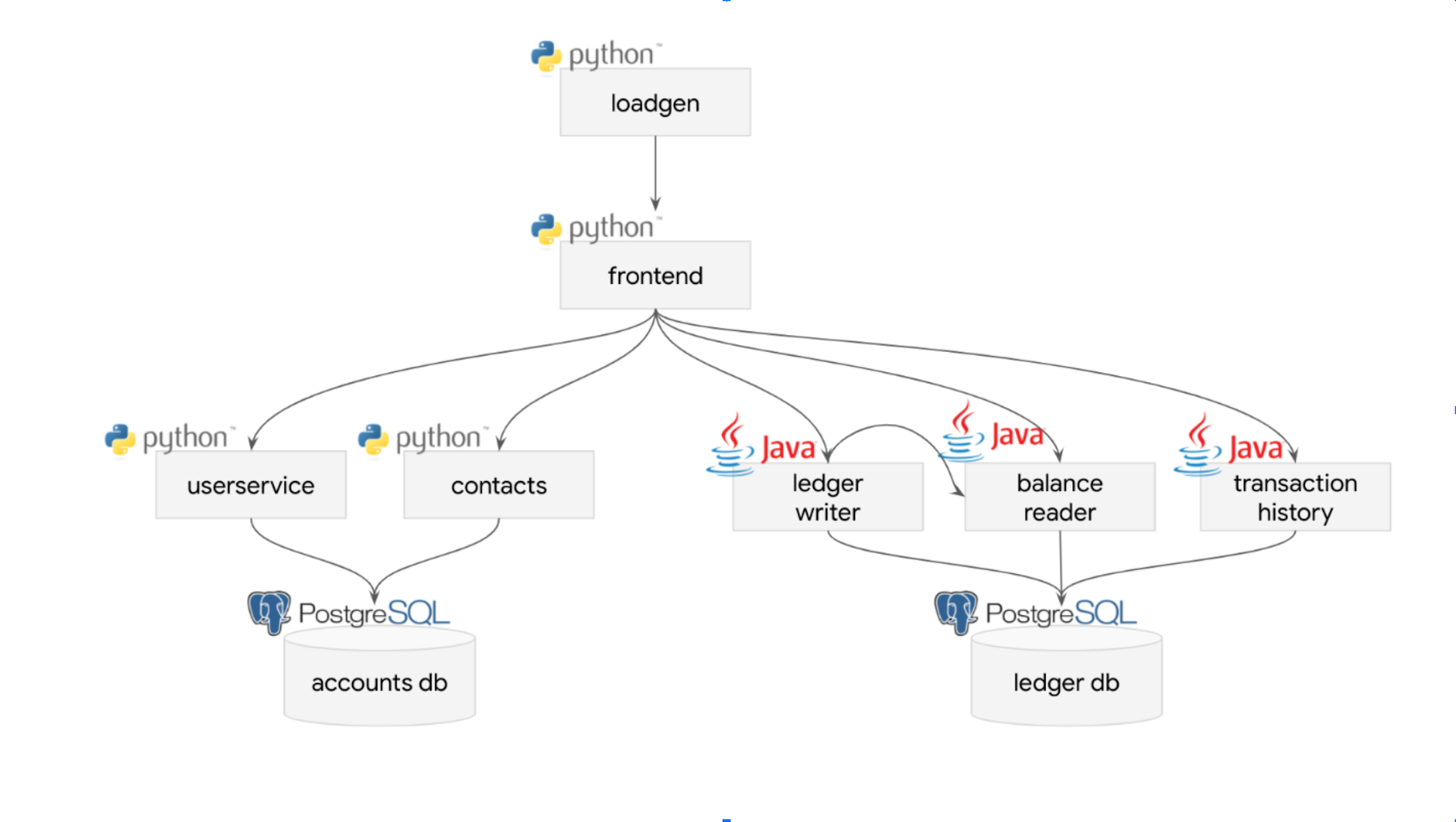

One sample GKE Autopilot deployment scenario we’ve comparison-tested with synthetic data is the open-source Bank of Anthos (BoA), which simulates a payment processing network. You too can deploy this sample app by following this tutorial.

A BoA deployment consists of two data stores and seven services (four Python and three Java) and is a reasonable “real-world” test, as far as sample apps go.

In our tests, we saw up to 40% cost reduction on GKE Autopilot as compared to Standard.

We also extrapolated the costs for both workloads on an annual basis. For the GKE Standard workload, we would incur $3,317 USD in cloud costs for the year. However, for the GKE Autopilot workload it would only be $1,906 USD — a difference of $1,411, or 42.5%. This was a simple workload, so you can’t make any generalizations (especially not for already optimized workloads), but it does show Autopilot's potential for specific cases.

Customers have discovered that GKE Autopilot offers a hassle-free, secure and economical solution for running their Kubernetes workloads. This is why we recommend that our customers consider adopting it as their primary choice for running containerized applications on Google Cloud.

Soft savings with GKE Autopilot

GKE Autopilot has benefits beyond raw dollar savings. Again and again, GKE Autopilot customers tell us they don’t need to provision as much headroom for their applications, and they have to perform much less day-to-day management. Let’s take a deeper look.

Autopilot minimizes headroom requirements

Autopilot can help you cut down on how much spare headroom you need to provision, including in high availability (HA) setups. On traditional managed Kubernetes, if you anticipate an increase in load, you provision extra headroom so that mechanisms like HPA have room to scale to meet the increased demand. Similarly, for redundancy in an HA setup, you may need to have multiple nodes in several geographically separated zones.

Autopilot spins up new hardware on demand in response to scaling, so you don’t need to pre-allocate infrastructure headroom that sits idle. And in an Autopilot HA setup, you only need to deploy pods per zone rather than duplicates of the entire node, which again, is likely much less expensive. Autopilot also makes it easy to duplicate only specific workloads with higher availability requirements, and you can rely on Autopilot to shift less critical workloads to a new healthy zone on-demand.

Day 2 operations

With traditional managed Kubernetes, you manage the Kubernetes nodes including provisioning/shaping, upgrading, patching, and dealing with security incidents.

With Autopilot, Google SRE does all this, while providing a Pod SLA that’s additive to the GKE Standard SLA. At the same time, you still retain control and flexibility via maintenance policies.

The bottom line

If your team is amazing at bin packing and you need the level of control offered by directly managing nodes, a traditional managed Kubernetes platform like GKE Standard is likely your best bet. However, even if you’re doing a phenomenal job of bin packing, chances are you spend a lot of time and energy on it. In most cases, this effort rises linearly with the scale of the Kubernetes fleet, and smaller clusters wind up paying less attention to running efficiently. If your platform team needs to reclaim the effort they spend on bin packing, Autopilot can help reduce Day 2 operations and the financial risks of inefficient bin packing, while freeing you to build more powerful developer experiences.

To learn how to get up and running with Autopilot quickly, check out the following resources:

Using Compute Classes to specify machine types

Deploy a full stack workload on Autopilot

Deploying workloads like Redis, high-availability PostgreSQL, and high-availability Kafka on Autopilot

1. Forrester TEI report: GKE Autopilot - January 2023 - https://services.google.com/fh/files/misc/forrester_tei_of_gke_autopilot.pdf