Struggling to fix Kubernetes over-provisioning? GKE has you covered

Fernando Rubbo

Global Solutions Manager, AI Infrastructure

Roman Arcea

Product Manager, GKE

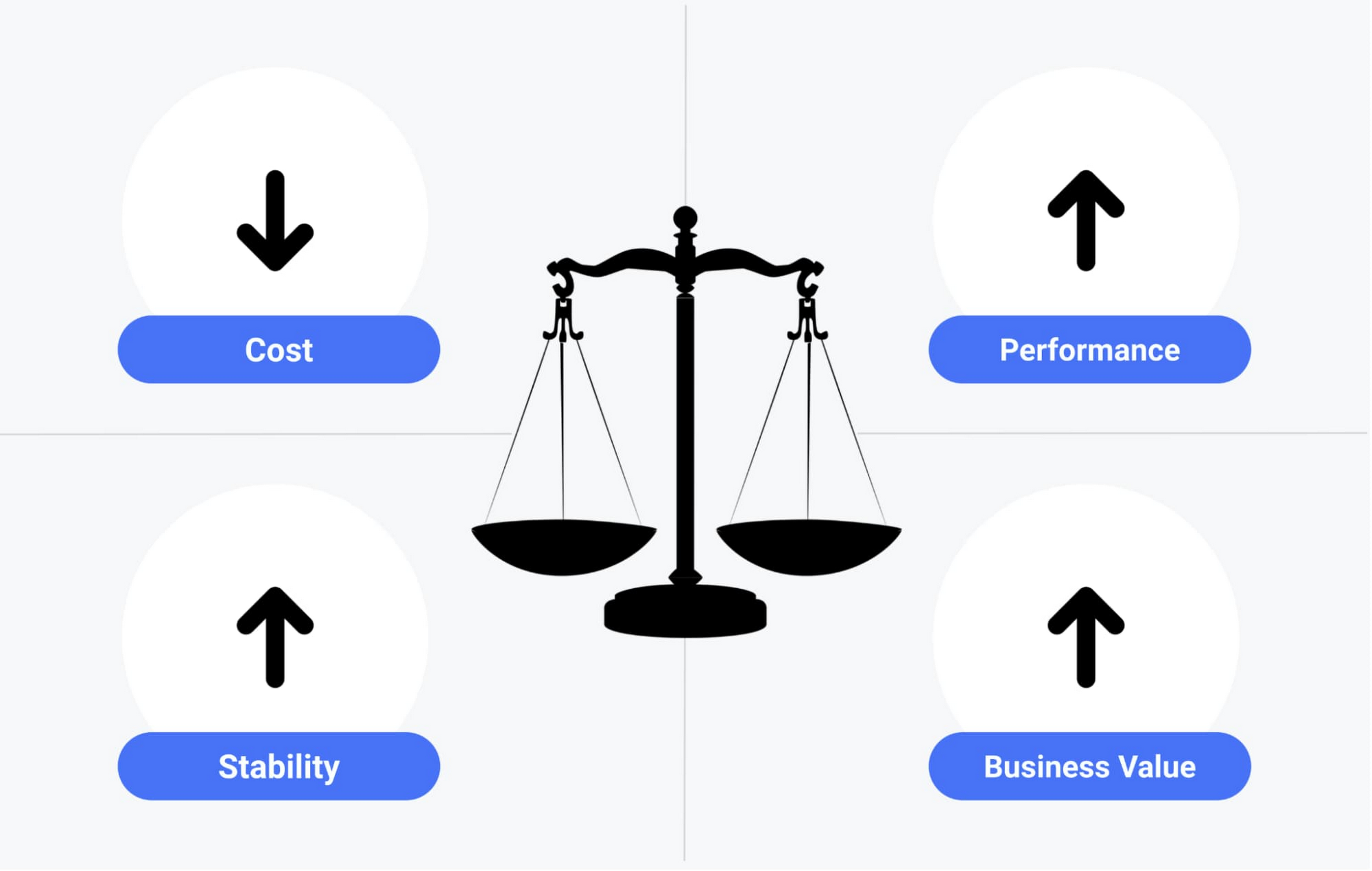

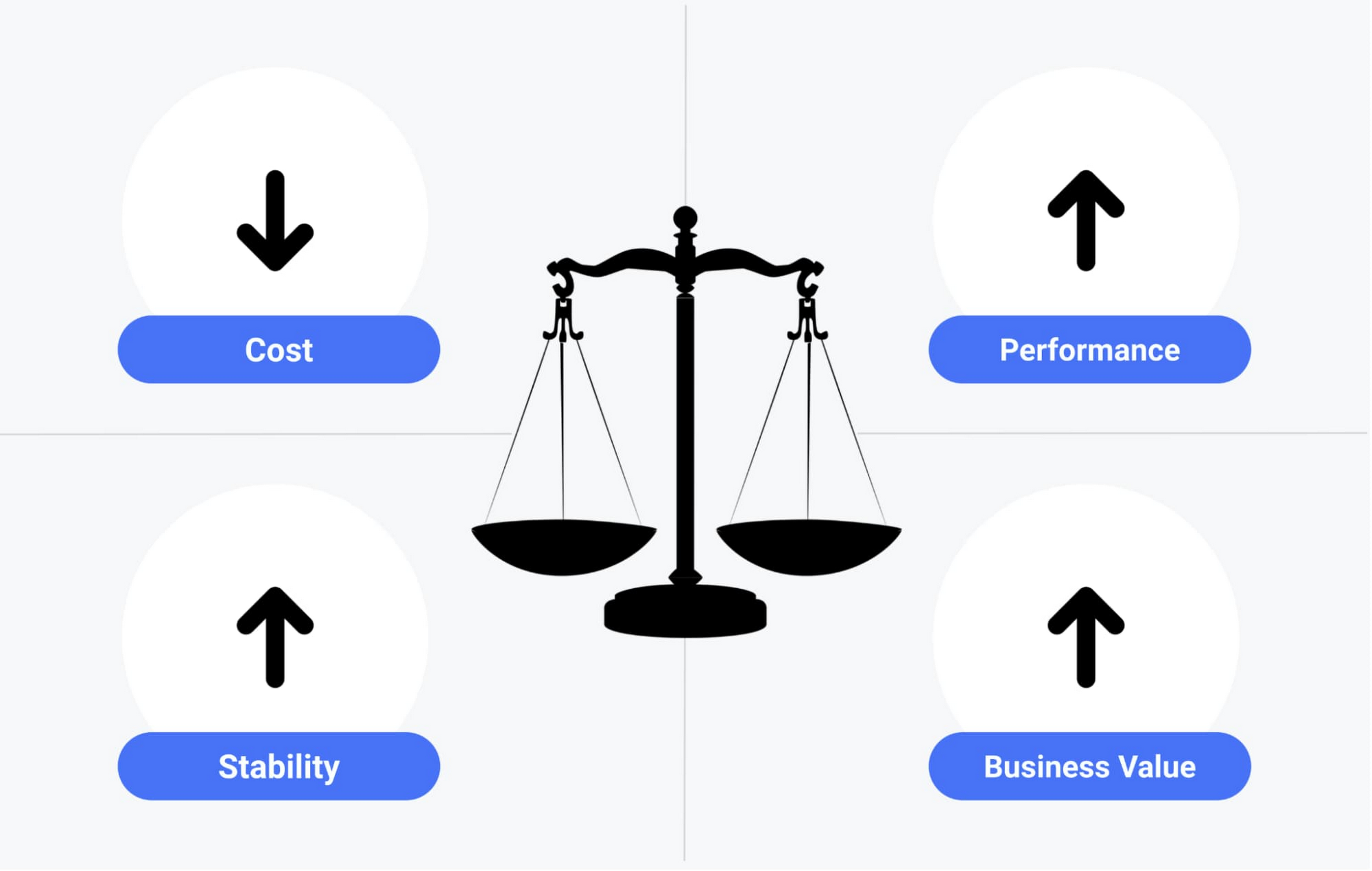

Cost optimization is one of the leading initiatives, challenges, and sources of effort for teams adopting public cloud [1], especially for those just starting their journey. When it comes to Kubernetes, cost optimization is especially challenging because you don’t want any efforts you undertake to negatively affect your applications' performance, stability, or ability to service your business.

In other words, reducing costs cannot come at the expense of your users’ experience or risk to your business.

If you’re looking for a Kubernetes platform that will help you maximize your business value and at the same time reduce costs, we've got you covered with Google Kubernetes Engine (GKE), which provides several advanced cost-optimization features and capabilities built-in. This is great news for teams that are new to Kubernetes, who may not have the expertise to easily balance their applications' performance and stability, and as a result, tend to over-provision their environments to mitigate potential impact on the business. After all, an over-provisioned environment tends not to run out of headroom or capacity, ensuring that applications meet users’ expectations for performance and reliability.

Cost-optimization = reduce cost + achieve performance goals + achieve stability goals + maximize business value

While over-provisioning can provide short-term relief (at a financial cost), it’s one of the first things you should look at as part of a continuous cost-optimization initiative. But if you’ve tried to cut back on over-provisioning before, especially on other Kubernetes platforms, you’ve probably found yourself experimenting with random configurations and trying different cluster setups. As a result, it’s not uncommon for teams to give up on cost optimization because the effort they put in outweighs the results. Let’s take a look at how GKE differs from other managed Kubernetes services, and how it can reduce your need to over-provision and simplify your cost-optimization efforts.

The most common Kubernetes over-provisioning problems

Before jumping into GKE features and solutions that can help you optimize your costs, let's first define the three main challenges that lead to over-provisioning of Kubernetes clusters.

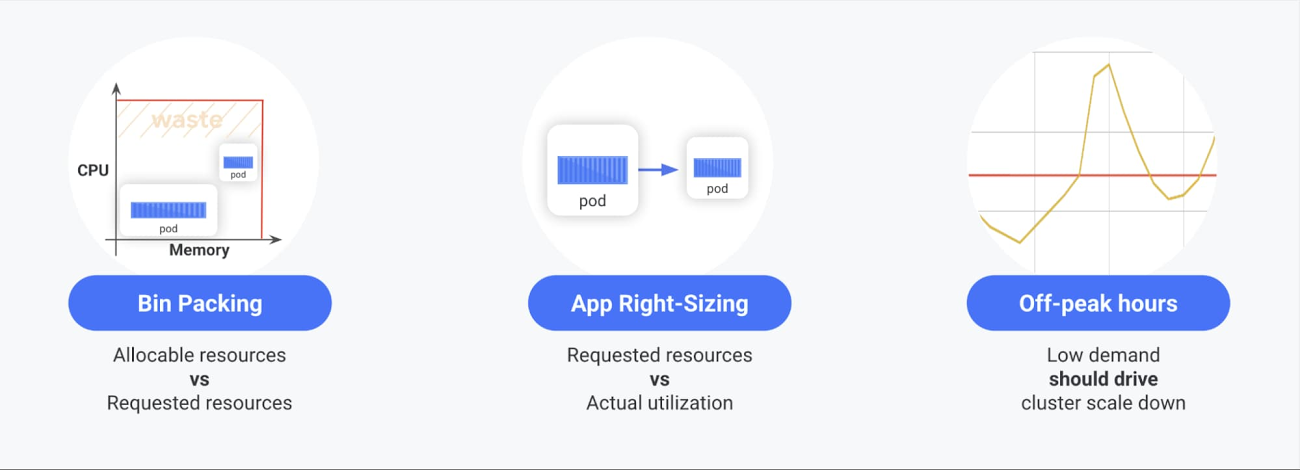

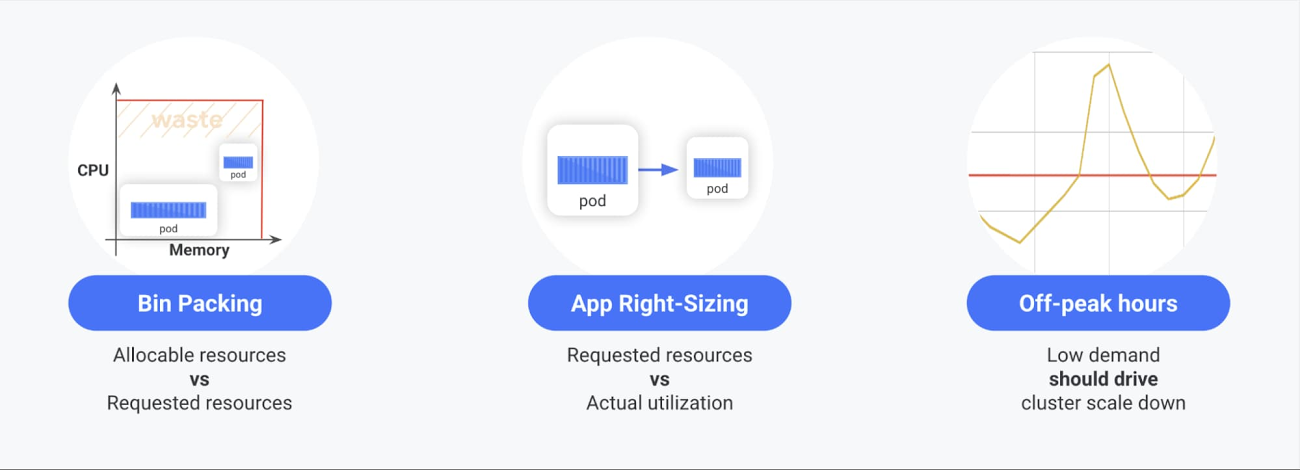

- Bin packing - how well you pack applications onto your Kubernetes nodes. The better you pack apps onto nodes, the more you save.

- App right-sizing - the ability to set appropriate resource requests and workload autoscaling configurations for the applications deployed in the cluster. The more accurately you set resource requests for your Pods, the more reliably your applications will run and, in the vast majority of cases, the more space you’ll save in the cluster.

- Scaling down during off-peak hours - Ideally, to save money during periods of low demand, for example at night, you should scale down your cluster along with actual traffic. However, there are cases when this doesn’t happen as expected, especially for workloads or cluster configurations that block Cluster Autoscaler.

In our experience, the most over-provisioned environments tend to have at least two of the above challenges. In order to effectively cost-optimize your environment, you should embrace a culture that encourages a continuous focus on the issues that lead to over-provisioning.

Tackling over-provisioning with GKE

Implementing a custom monitoring system is a common approach for reducing your reliance on over-provisioned resources. For bin packing, you can compare allocatable vs. requested resources; for app rightsizing, requested vs. used resources; and for cluster utilization, you can monitor for Cluster Autoscaler being unable to scale down.
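The first two comparisons above reduce to simple ratios. The following is a minimal sketch, assuming you have already exported cluster-wide CPU totals (in cores) from your monitoring system. The `ClusterSnapshot` type, its field names, and the sample numbers are hypothetical and not part of any GKE API.

```python
from dataclasses import dataclass


@dataclass
class ClusterSnapshot:
    """Hypothetical point-in-time CPU totals for a cluster, in cores."""
    allocatable: float  # capacity that nodes offer to Pods
    requested: float    # sum of Pod resource requests
    used: float         # actual measured usage


def bin_packing_ratio(s: ClusterSnapshot) -> float:
    """Requested / allocatable: how fully the nodes are packed."""
    return s.requested / s.allocatable


def rightsizing_ratio(s: ClusterSnapshot) -> float:
    """Used / requested: how closely requests match real usage."""
    return s.used / s.requested


# Made-up numbers for illustration only.
snapshot = ClusterSnapshot(allocatable=100.0, requested=60.0, used=30.0)
print(f"bin packing: {bin_packing_ratio(snapshot):.0%}")
print(f"rightsizing: {rightsizing_ratio(snapshot):.0%}")
```

A low bin packing ratio suggests that nodes are larger or more numerous than the requests need, while a low rightsizing ratio suggests that the requests themselves are inflated.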

However, implementing such a monitoring system is quite complex and requires the platform to provide specific metrics, resource recommendations, template dashboards and alerting policies. To learn how to build such a system yourself, check out our Monitoring your GKE clusters for cost-optimization tutorial, where we show you how to set up this kind of continuous cost-optimization monitoring environment, so that you can tune your GKE clusters according to recommendations without compromising your applications' performance and stability.

Another element of a GKE environment that plays a fundamental role in cost optimization is Cluster Autoscaler, which provides nodes for Pods that don't have a place to run and removes underutilized nodes. In GKE, Cluster Autoscaler is optimized for the cost of the infrastructure, meaning, if there are two or more node types in the cluster, it chooses the least expensive one that fits the current demand.
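To illustrate the idea of choosing the least expensive node type that fits the demand, here is a deliberately simplified sketch. It is not the Cluster Autoscaler's actual algorithm, which considers many more factors. The `NodeType` class, the node names, and the prices are all hypothetical.

```python
from dataclasses import dataclass
from typing import Iterable, Optional


@dataclass(frozen=True)
class NodeType:
    """Hypothetical node type with made-up capacity and price."""
    name: str
    cpu: float           # allocatable cores
    memory_gib: float    # allocatable memory
    hourly_price: float  # illustrative price, not a real quote


def cheapest_fitting(node_types: Iterable[NodeType],
                     cpu_needed: float,
                     memory_needed: float) -> Optional[NodeType]:
    """Return the cheapest node type that can hold the pending demand."""
    candidates = [n for n in node_types
                  if n.cpu >= cpu_needed and n.memory_gib >= memory_needed]
    # min() with default=None handles the case where nothing fits.
    return min(candidates, key=lambda n: n.hourly_price, default=None)


catalog = [
    NodeType("small", cpu=2, memory_gib=8, hourly_price=0.10),
    NodeType("medium", cpu=4, memory_gib=16, hourly_price=0.20),
    NodeType("large", cpu=8, memory_gib=32, hourly_price=0.40),
]

choice = cheapest_fitting(catalog, cpu_needed=3, memory_needed=8)
print(choice.name if choice else "no node type fits")
```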

If your cluster is not scaling down as expected, take a look at your cluster autoscaler events to understand the root cause. You may have set a higher than needed minimum node-pool size or your Cluster Autoscaler may not be able to delete some nodes because certain Pods may cause temporary disruption if restarted. Some examples are: system Pods (such as metrics-server and kube-dns), and Pods that use local storage. To learn how to handle such scenarios, please take a look at our best practices. And if you determine that you really do need to over-provision your workload to properly handle spikes during the day, you can reduce the cost by setting up scheduled autoscalers.

GKE’s cost-optimization superpowers

GKE also provides many other unique modes and features that may make you forget about having to do things like bin packing and app rightsizing. For example:

GKE Autopilot

GKE Autopilot is our best ever GKE mode that also delivers the ultimate cost-optimization superpower. In GKE Autopilot, you only pay for the resources you request, which gets rid of one of the biggest sources of waste: poor bin packing. On top of that, Autopilot automatically applies industry best practices, eliminates all node management operations, maximizes cluster efficiency and provides a stronger security posture. And, with less infrastructure to manage, Autopilot can help you cut down even further on the hours spent on deployment and day-two operations.

If you decide not to use GKE Autopilot but still want to use the most cost-optimized defaults, check out GKE’s built-in “Cost-optimized cluster” set-up guide, which will get you started with the key infrastructure features and settings you need to know about.

Node auto-provisioning

Bin packing is a complex problem, and even with a decent monitoring system like the one presented above, it requires constant manual tweaks. GKE removes the friction and operational costs associated with precise node-pool tweaking with node auto-provisioning, which automatically creates—and deletes—the most appropriate node pools for a scheduled workload. Node auto-provisioning is the evolution of Cluster Autoscaler, but with better cost savings and less knowledge and effort on your part.

Then, if you want to pack your node pools even more, you can select the optimize-utilization profile, which prefers scheduling Pods on the most utilized nodes and makes Cluster Autoscaler even more aggressive at scaling down. Beyond making cluster autoscaling fully automatic, this setup also maintains the least expensive configuration.
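The preference for the most utilized nodes can be pictured with a small sketch. This is only an illustration of the idea under simplifying assumptions (CPU only, one Pod at a time), not the scheduler's real scoring logic. The `Node` class and the node names are hypothetical.

```python
from dataclasses import dataclass
from typing import Iterable, Optional


@dataclass
class Node:
    """Hypothetical node with CPU figures in cores."""
    name: str
    allocatable_cpu: float
    requested_cpu: float

    @property
    def free_cpu(self) -> float:
        return self.allocatable_cpu - self.requested_cpu

    @property
    def utilization(self) -> float:
        return self.requested_cpu / self.allocatable_cpu


def pick_most_utilized(nodes: Iterable[Node], pod_cpu: float) -> Optional[Node]:
    """Among nodes with room for the Pod, prefer the fullest one."""
    fitting = [n for n in nodes if n.free_cpu >= pod_cpu]
    return max(fitting, key=lambda n: n.utilization, default=None)


nodes = [
    Node("node-a", allocatable_cpu=4.0, requested_cpu=1.0),
    Node("node-b", allocatable_cpu=4.0, requested_cpu=3.0),
    Node("node-c", allocatable_cpu=4.0, requested_cpu=3.8),
]

target = pick_most_utilized(nodes, pod_cpu=0.5)
print(target.name if target else "no node has room")
```

Filling busy nodes first leaves lightly used nodes empty sooner, which gives the autoscaler more opportunities to remove them.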

Pod Autoscalers

App rightsizing requires you to fully understand the capacity of all your applications or, again, pass that responsibility over to us. In addition to the classic Horizontal Pod Autoscaler, GKE also provides a Vertical Pod Autoscaler and a Multidimensional Pod Autoscaler. Horizontal Pod Autoscaler is best for responding to spiky traffic by quickly adding more Pods to your cluster. Vertical Pod Autoscaler lets you rightsize your application by figuring out, over time, your Pod's capacity in terms of CPU and memory. Last but not least, Multidimensional Pod Autoscaler lets you define both of these autoscaling behaviors using a single Kubernetes resource. These workload autoscalers give you the ability to automatically rightsize your application and, at the same time, quickly respond to traffic volatility in a cost-optimized way.
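As a rough illustration of how horizontal scaling reacts to load, the Kubernetes documentation describes the Horizontal Pod Autoscaler's core calculation as the current replica count scaled by the ratio of the observed metric to the target metric, rounded up. The sketch below applies that formula. The function name, the default bounds, and the sample values are assumptions, and real behavior also involves a tolerance band and stabilization windows that are omitted here.

```python
import math


def desired_replicas(current_replicas: int,
                     current_metric: float,
                     target_metric: float,
                     min_replicas: int = 1,
                     max_replicas: int = 10) -> int:
    """ceil(current_replicas * current_metric / target_metric), clamped."""
    raw = math.ceil(current_replicas * current_metric / target_metric)
    # Keep the result inside the configured replica bounds.
    return max(min_replicas, min(max_replicas, raw))


# Four Pods averaging 90% CPU against a 60% target.
print(desired_replicas(4, current_metric=90, target_metric=60))
```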

Optimized machine types

Beyond the above solutions to the most common over-provisioning problems, GKE also helps you reduce costs by using E2 machine types by default. E2 machines are cost-optimized VMs that offer 31% savings compared to N1 machines. Alternatively, you can choose our new Tau machines, available in Q3 2021, which offer a whopping 42% better price-performance over comparable general-purpose offerings. Moreover, GKE also gives you the option to choose Preemptible VMs, which are up to 80% cheaper than standard Compute Engine VMs. (However, we recommend reading our best practices to make sure your workload will run smoothly on top of Preemptible VMs.)
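To make the percentages above concrete, here is a small arithmetic sketch. The monthly baseline of 1,000 (in any currency) is a made-up figure, and the Preemptible case uses the best-case "up to 80%" value against a standard VM baseline, so treat the results as illustrative rather than as a price quote.

```python
def discounted_cost(baseline: float, savings: float) -> float:
    """Apply a fractional saving (0.31 means 31%) to a baseline cost."""
    if not 0.0 <= savings <= 1.0:
        raise ValueError("savings must be between 0 and 1")
    return baseline * (1.0 - savings)


baseline = 1000.0  # hypothetical monthly spend

e2_cost = discounted_cost(baseline, 0.31)           # E2 vs. N1
preemptible_cost = discounted_cost(baseline, 0.80)  # best case vs. standard VMs

print(f"E2:          {e2_cost:.2f}")
print(f"Preemptible: {preemptible_cost:.2f}")
```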

Ensuring operational efficiencies

Optimizing costs isn’t just about looking at your underlying compute capacity—another important consideration is the operational cost of building, maintaining, and securing your platform. While that often gets overlooked when calculating total cost of ownership (TCO), it’s nevertheless important to keep in mind.

To help save engineering hours, GKE provides the easiest fully managed Kubernetes environment on the market. With the GKE Console, the gcloud command line, Terraform or the Kubernetes Resource Model, you can quickly and easily configure regional clusters with a high-availability control plane, auto-repair, auto-upgrade, native security features, automated operation, SLO-based monitoring, and more.

Last but not least, GKE is unmatched in its ability to scale a single cluster to 15,000 nodes. For the vast majority of users, this removes scalability as a constraint in your cluster design and pushes the boundaries of cost, performance and efficiency for hyper-scaled workloads when you need it. In fact, we see up to 50% greater infrastructure utilization in large clusters, where key GKE capabilities have been considered and applied.

What our customers are saying about their experience with GKE

Market Logic makes a marketing insights platform and says GKE four-way auto scaling and multi-cluster support helped it minimize its maintenance time and costs.

“Since migrating to GKE, we’ve halved the costs of running our nodes, reduced our maintenance work, and gained the ability to scale up and down effortlessly and automatically according to demand. All our customer production loads and development environment run on GKE, and we’ve never faced a critical incident since.” - Helge Rennicke, Director of Software Development, Market Logic Software

See more details in Market Logic: Helping leading brands run an insights-driven business with a scalable platform

By switching to a containerized solution on Google Kubernetes Engine, Konga, Nigeria’s online marketplace, cut cloud infrastructure costs by two-thirds.

“With Google Kubernetes Engine, we deliver the same or better functionality as previously in terms of being able to scale up to match traffic, but in its lowest state, the overall running cost of the production cluster is much less than the minimum costs we’d pay with the previous architecture.” - Andrew Mori, Director of Technology, Konga

Read more in Konga: Cutting cloud infrastructure costs by two-thirds

What's next

Building a cost optimization culture and routines into your organization can help you balance performance, reliability and cost. This in turn will give your team and business a competitive edge, helping you focus on innovation.

GKE includes many features that can greatly simplify your cost-optimization initiatives. To get the most from the platform, make sure developers and operators are aligned on the importance of cost optimization as a continuous discipline. To help, we’ve prepared a set of materials: our GKE cost-optimization best practices, a 5-minute video series (if you want to learn on the go), cost-optimization tutorials, and a self-service hands-on workshop to help you practice your skills. Moreover, we strongly encourage you to create internal discussion groups and run internal workshops to ensure all your teams get the most out of GKE. Last but not least, watch this space! We look forward to publishing more blog posts about cost optimization on GKE in the coming months!

1. https://info.flexera.com/CM-REPORT-State-of-the-Cloud