Efficiently scale ML and other compute workloads on NVIDIA’s T4 GPU, now generally available

Chris Kleban

Group Product Manager, Google Cloud

NVIDIA’s T4 GPU, now available in regions around the world, accelerates a variety of cloud workloads, including high-performance computing (HPC), machine learning training and inference, data analytics, and graphics. In January of this year, we announced the availability of the NVIDIA T4 GPU in beta, to help customers run inference workloads faster and at lower cost. Earlier this month at Google Cloud Next ’19, we announced the general availability of the NVIDIA T4 in eight regions, making Google Cloud the first major provider to offer it globally.

A focus on speed and cost-efficiency

Each T4 GPU has 16 GB of onboard GPU memory, supports a range of precisions, or data types (FP32, FP16, INT8, and INT4), and includes NVIDIA Tensor Cores for faster training and RTX hardware acceleration for faster ray tracing. Customers can create custom VM configurations that best meet their needs, with up to four T4 GPUs, 96 vCPUs, 624 GB of host memory, and optionally up to 3 TB of in-server local SSD.

At time of publication, prices for T4 instances are as low as $0.29 per hour per GPU on preemptible VM instances. On-demand instances start at $0.95 per hour per GPU, and sustained use discounts can reduce that price by up to 30%.
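To make these numbers concrete, here is a rough Python sketch that estimates GPU-only cost from the per-GPU hourly rates quoted above. The function name `gpu_cost` and the example workload (four GPUs for 100 hours) are illustrative assumptions, the 30% figure is the maximum sustained use discount rather than a guaranteed one, and charges for vCPUs, memory, and disks are not included.

```python
# Per-GPU hourly prices quoted in this post (at time of publication).
PREEMPTIBLE_PER_GPU_HOUR = 0.29
ON_DEMAND_PER_GPU_HOUR = 0.95
# "Up to" 30%: the discount actually applied depends on usage.
MAX_SUSTAINED_USE_DISCOUNT = 0.30


def gpu_cost(gpus: int, hours: float, rate: float, discount: float = 0.0) -> float:
    """Return the GPU-only cost in USD, rounded to cents.

    Hypothetical helper for illustration; it ignores vCPU, memory,
    and disk charges, which are billed separately.
    """
    if gpus < 0 or hours < 0:
        raise ValueError("gpus and hours must be non-negative")
    if not 0.0 <= discount <= 1.0:
        raise ValueError("discount must be between 0 and 1")
    return round(gpus * hours * rate * (1.0 - discount), 2)


if __name__ == "__main__":
    gpus, hours = 4, 100  # assumed example workload
    on_demand = gpu_cost(gpus, hours, ON_DEMAND_PER_GPU_HOUR)
    best_case = gpu_cost(gpus, hours, ON_DEMAND_PER_GPU_HOUR,
                         MAX_SUSTAINED_USE_DISCOUNT)
    preemptible = gpu_cost(gpus, hours, PREEMPTIBLE_PER_GPU_HOUR)
    print(f"On-demand:                ${on_demand:.2f}")
    print(f"On-demand, full discount: ${best_case:.2f}")
    print(f"Preemptible:              ${preemptible:.2f}")
```

For this assumed workload, the GPU portion works out to $380 on demand, $266 if the full 30% discount applied, and $116 on preemptible instances.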

Tensor Cores for both training and inference

NVIDIA’s Turing architecture brings the second generation of Tensor Cores to the T4 GPU. First introduced in the NVIDIA V100 (also available on Google Cloud Platform), Tensor Cores support mixed precision to accelerate the matrix multiplication operations that are so prevalent in ML workloads. If your training workload doesn’t fully utilize the more powerful V100, the T4 offers the acceleration benefits of Tensor Cores, but at a lower price. This is great for large training workloads, especially as you scale out to more resources to train faster, or to train larger models.
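The numeric idea behind mixed precision can be illustrated on a CPU with nothing but the Python standard library. The sketch below is not Tensor Core code and says nothing about GPU speed; it only emulates, by rounding after each step, the common pattern of multiplying FP16 inputs while accumulating in FP32, and compares it with accumulating in FP16. The helper names (`to_fp16`, `to_fp32`, `dot_fp16_accumulate`, `dot_mixed`) and the test vectors are assumptions made for illustration.

```python
import struct


def to_fp16(x: float) -> float:
    """Round a Python float to the nearest IEEE 754 half-precision value."""
    return struct.unpack("<e", struct.pack("<e", x))[0]


def to_fp32(x: float) -> float:
    """Round a Python float to the nearest IEEE 754 single-precision value."""
    return struct.unpack("<f", struct.pack("<f", x))[0]


def dot_fp16_accumulate(a, b):
    """Dot product with FP16 inputs and an FP16 running sum."""
    acc = 0.0
    for x, y in zip(a, b):
        product = to_fp16(to_fp16(x) * to_fp16(y))
        acc = to_fp16(acc + product)  # the sum is rounded to FP16 every step
    return acc


def dot_mixed(a, b):
    """Dot product with FP16 inputs and an FP32 running sum (mixed precision)."""
    acc = 0.0
    for x, y in zip(a, b):
        product = to_fp16(x) * to_fp16(y)
        acc = to_fp32(acc + product)  # the sum is kept in FP32
    return acc


if __name__ == "__main__":
    # Assumed example: 10,000 small terms whose exact sum is about 10.
    a = [0.001] * 10_000
    b = [1.0] * 10_000
    print("FP16 accumulation:", dot_fp16_accumulate(a, b))
    print("FP32 accumulation:", dot_mixed(a, b))
```

With these inputs, the FP16 running sum stops growing once it is large enough that adding another small term no longer changes the rounded value, so it ends well short of 10, while the FP32 accumulator stays close to it. That is why mixed-precision schemes keep the compact FP16 format for inputs but use a wider accumulator.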

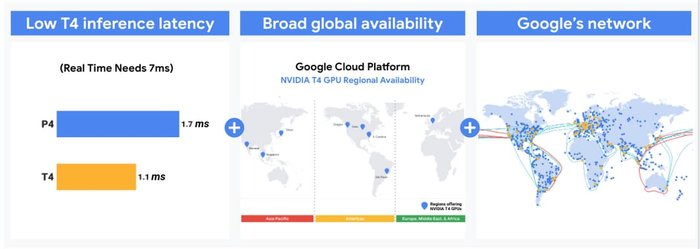

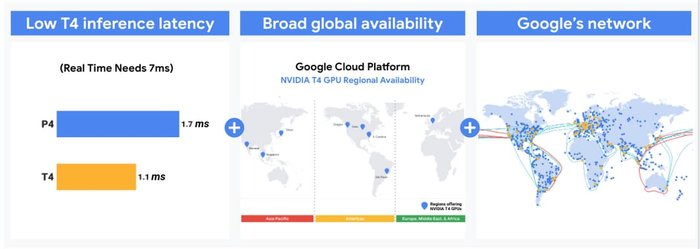

Tensor Cores also accelerate inference, or predictions generated by ML models, for low latency or high throughput. When Tensor Cores are enabled with mixed precision, T4 GPUs on GCP can run ResNet-50 inference with TensorRT more than 10X faster than when running in FP32 only. Considering its global availability and Google’s high-speed network, the NVIDIA T4 on GCP can effectively serve global services that require fast execution at an efficient price point. For example, Snap Inc. uses the NVIDIA T4 to create more effective algorithms for its global user base, while keeping costs low.

“Snap’s monetization algorithms have the single biggest impact to our advertisers and shareholders. NVIDIA T4-powered GPUs for inference on GCP will enable us to increase advertising efficacy while at the same time lower costs when compared to a CPU-only implementation.”

—Nima Khajehnouri, Sr. Director, Monetization, Snap Inc.

You can get up and running quickly, training ML models and serving inference workloads on NVIDIA T4 GPUs by using our Deep Learning VM images. These include all the software you’ll need: drivers, CUDA-X AI libraries, and popular AI frameworks like TensorFlow and PyTorch. We handle software updates, compatibility, and performance optimizations, so you don’t have to. Just create a new Compute Engine instance, select your image, click Start, and a few minutes later, you can access your T4-enabled instance. You can also start with our AI Platform, an end-to-end development environment that helps ML developers and data scientists to build, share, and run machine learning applications anywhere. Once you’re ready, you can use Automatic Mixed Precision to speed up your workload via Tensor Cores with only a few lines of code.

Performance at scale

NVIDIA T4 GPUs also offer strong value for batch compute, HPC, and rendering workloads, delivering performance and efficiency that maximize the utility of at-scale deployments. A Princeton University neuroscience researcher had this to say about the T4’s unique price and performance:

“We are excited to partner with Google Cloud on a landmark achievement for neuroscience: reconstructing the connectome of a cubic millimeter of neocortex. It’s thrilling to wield thousands of T4 GPUs powered by Kubernetes Engine. These computational resources are allowing us to trace 5 km of neuronal wiring, and identify a billion synapses inside the tiny volume.”

—Sebastian Seung, Princeton University

Quadro Virtual Workstations on GCP

T4 GPUs are also a great option for running virtual workstations for engineers and creative professionals. With NVIDIA Quadro Virtual Workstations from the GCP Marketplace, users can run applications built on the NVIDIA RTX platform to experience the next generation of computer graphics, including real-time ray tracing and AI-enhanced graphics, video and image processing, from anywhere.

“Access to NVIDIA Quadro Virtual Workstation on the Google Cloud Platform will empower many of our customers to deploy and start using Autodesk software quickly, from anywhere. For certain workflows, customers leveraging NVIDIA T4 and RTX technology will see a big difference when it comes to rendering scenes and creating realistic 3D models and simulations. We’re excited to continue to collaborate with NVIDIA and Google to bring increased efficiency and speed to artist workflows.”

—Eric Bourque, Senior Software Development Manager, Autodesk

Get started today

Check out our GPU page to learn more about how the wide selection of GPUs available on GCP can meet your needs. You can learn about customer use cases and the latest updates to GPUs on GCP in our Google Cloud Next ’19 talk, GPU Infrastructure on GCP for ML and HPC Workloads. Once you’re ready to dive in, try running a few TensorFlow inference workloads by following our blog, documentation, and tutorials.