Google Cloud and Equinix: Building Excellence in ML Operations (MLOps)

Lukasz Murawski

Lead ML Engineer, Equinix

Sharmila Devi

AI Consultant, Google

In recent years, machine learning (ML) has gained tremendous popularity as a powerful tool for solving complex problems across various domains. However, building and deploying ML models at scale can be challenging, as it involves a range of tasks such as data preparation, feature engineering, model training, deployment, monitoring, and maintenance.

According to "The Art of AI Maturity" report published by Accenture, “87% of data science projects never make it into production.” This is where MLOps comes in. It helps address the core challenges by providing a framework for managing the entire ML lifecycle, from data collection and preparation to model development, testing, and deployment. It also reduces the time from ML model development to production and increases the success rate of ML projects.

At Google Cloud, we understand how important MLOps is to successfully productionizing ML models. So we collaborated with Equinix, the world’s digital infrastructure company™ and a leader in global colocation data center market share, with 248 data centers in 27 countries on five continents. We helped them by providing advisory services on MLOps best practices and architecture.

Let’s take a sneak peek at the MLOps requirements at Equinix and the final architecture that was proposed.

What does MLOps mean for Equinix?

After multiple discovery sessions with the Equinix Team, we identified the core requirements and pain points to address in their new MLOps architecture:

Reusability: Components such as features and pipeline components should be reused across projects to reduce costs and improve efficiency.

Foundations: The foundations of the infrastructure, such as environments, folder structure, and project hierarchy, should be well-designed to support scalability and reliability.

Early identification of problems: Problems should be identified early by including data validation, notifications, and retry mechanisms.

Cost optimization: Costs should be optimized by paying only for what is used.

Enterprise CI/CD requirements: Enterprise CI/CD requirements should be met by integrating with GitHub and GitHub Actions.

Scaling: The infrastructure must scale to support future growth.

Security: Enterprise security requirements should be met, including IAM roles and network controls.
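The "early identification of problems" requirement above can be illustrated with a minimal Python sketch that combines data validation, notifications, and retries. This is not Equinix's implementation: the column names in `REQUIRED_COLUMNS`, the `validate_rows` and `run_with_retry` helpers, and the use of `print` as the notification channel are all hypothetical and chosen only for illustration.

```python
import time
from typing import Callable, Iterable

# Hypothetical schema for an incoming training batch (assumption, not from the source).
REQUIRED_COLUMNS = {"site_id", "timestamp", "power_kw"}


def validate_rows(rows: Iterable[dict]) -> list:
    """Return human-readable problems; an empty list means the batch is valid."""
    problems = []
    for i, row in enumerate(rows):
        missing = REQUIRED_COLUMNS - set(row)
        if missing:
            problems.append(f"row {i}: missing columns {sorted(missing)}")
        elif row["power_kw"] is None or row["power_kw"] < 0:
            problems.append(f"row {i}: invalid power_kw={row['power_kw']!r}")
    return problems


def run_with_retry(
    step: Callable[[], object],
    attempts: int = 3,
    base_delay_s: float = 1.0,
    notify: Callable[[str], None] = print,
    sleep: Callable[[float], None] = time.sleep,
):
    """Run a step, notifying on each failure and backing off exponentially."""
    for attempt in range(1, attempts + 1):
        try:
            return step()
        except Exception as exc:
            notify(f"attempt {attempt}/{attempts} failed: {exc}")
            if attempt == attempts:
                raise  # out of retries: surface the error to the caller
            sleep(base_delay_s * 2 ** (attempt - 1))
```

In a real pipeline, `notify` would likely post to an alerting channel rather than print, and validation would run as the first pipeline step so that bad data stops the run before any training cost is incurred.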

MLOps Architecture

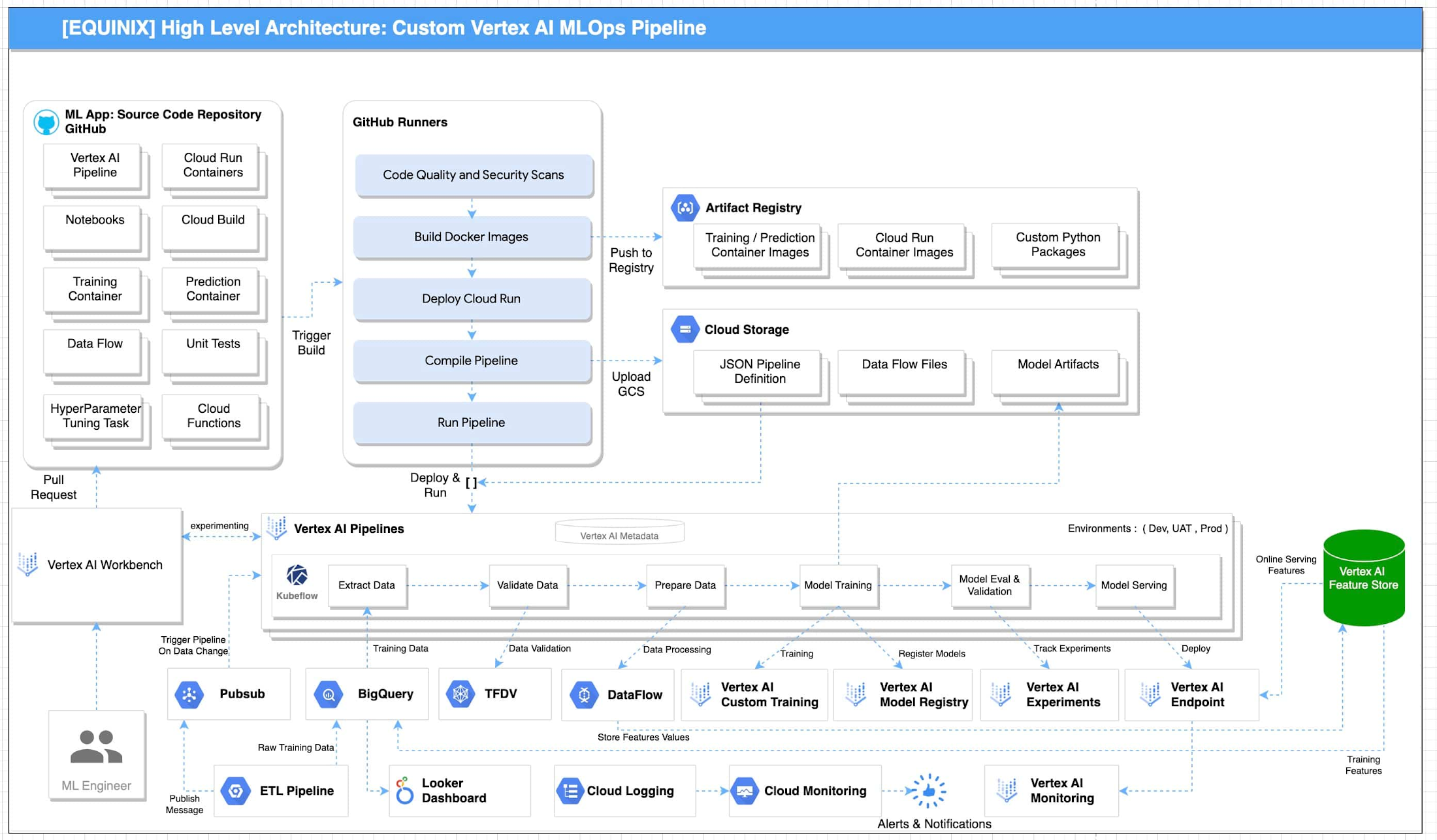

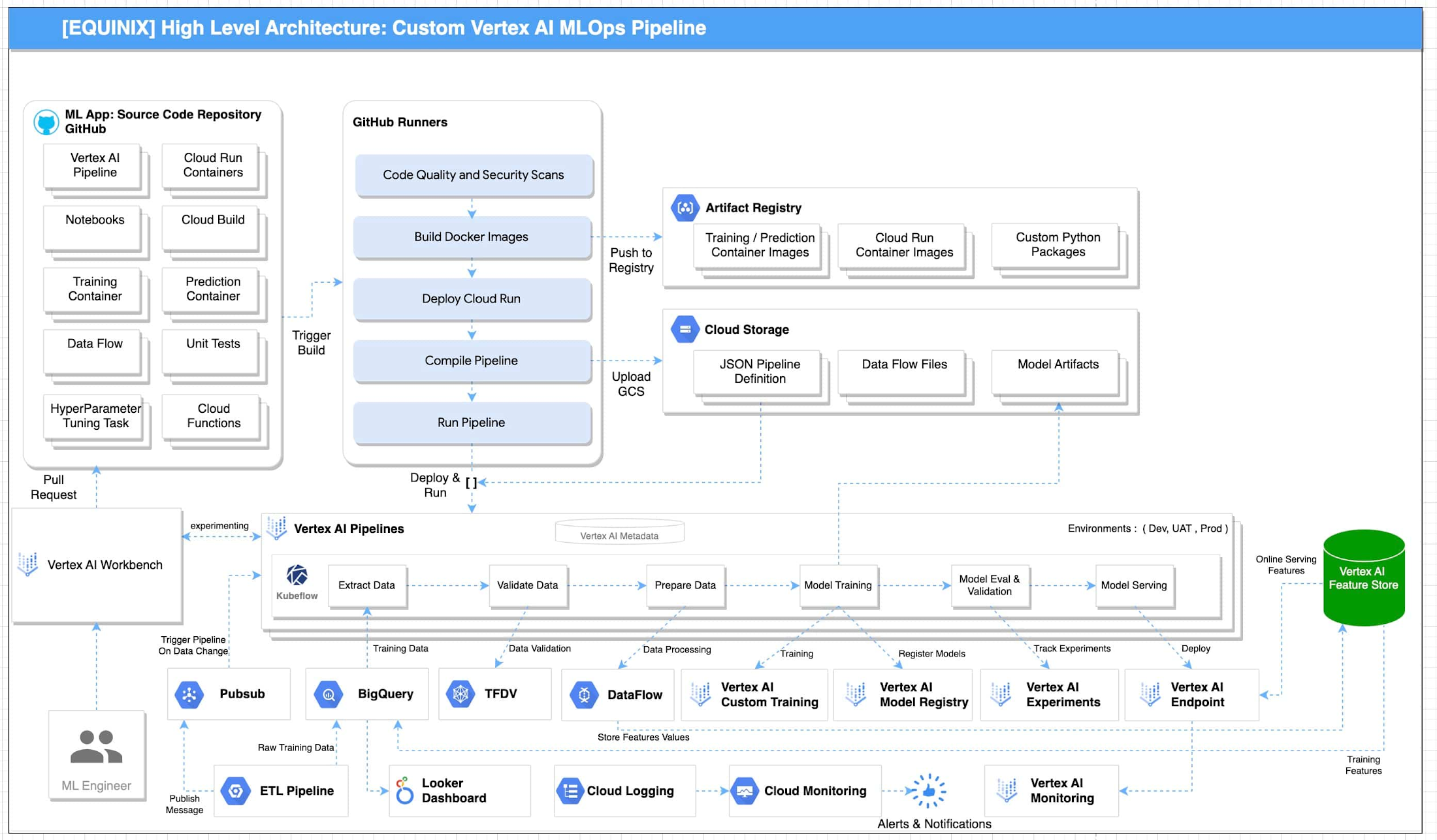

Based on these requirements from Equinix, we weighed several key design choices, for example using Vertex AI Feature Store instead of BigQuery for online feature serving, and using Dataflow for pre-processing versus the existing Python-based pre-processing. After carefully assessing all the alternatives, we proposed a reference architecture for MLOps on GCP.

The reference architecture for MLOps on GCP is illustrated in Figure 1. This architecture includes the following pipeline stages:

Vertex AI Workbench enables data scientists to quickly explore new ideas and to develop and experiment with new models. The source code is saved in a GitHub repository.

GitHub Actions with self-hosted runners is integrated with GitHub as the source code repository. This enables continuous integration of code, along with quality and security scans. The artifacts generated during the process are saved in Artifact Registry and Google Cloud Storage. These artifacts are deployed to implement the pipeline.

Unit tests and integration tests can be performed during continuous integration in GitHub Actions with self-hosted runners. End-to-end tests are performed on demand in the continuous integration pipeline.

Metadata about the artifacts is generated and saved in Vertex ML Metadata.

Automated triggers can be enabled to run pipelines. For example, one trigger is the availability of new training data. These triggers can run the model training pipeline, and the newly trained model can be pushed to Vertex AI Model Registry.

To train a new ML model with new data, the deployed Vertex AI Pipeline is executed.

To train a new ML model with a new implementation, a new pipeline is deployed through the CI/CD pipeline.
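The "new training data" trigger described in the stages above could look roughly like the following Python sketch. It is a hedged illustration, not the deployed Equinix code: the `training-data/` prefix, the `.csv` filter, the `training_data_uri` parameter, the `gs://example-bucket/...` paths, and the function names are all hypothetical. The event is assumed to be a Cloud Storage "object finalized" payload carrying `bucket` and `name` fields, and `submit_to_vertex` assumes the `google-cloud-aiplatform` package is installed with a default project and region configured.

```python
from typing import Callable, Optional

TRAINING_PREFIX = "training-data/"  # hypothetical object prefix (assumption)


def pipeline_request_for(event: dict) -> Optional[dict]:
    """Map a 'new object' storage event to pipeline parameters, or None to ignore it."""
    name = event.get("name", "")
    if not name.startswith(TRAINING_PREFIX) or not name.endswith(".csv"):
        return None
    return {
        "display_name": "train-on-new-data",
        "parameter_values": {
            "training_data_uri": f"gs://{event['bucket']}/{name}",
        },
    }


def submit_to_vertex(request: dict) -> None:
    """Submit the already-deployed (compiled) pipeline to Vertex AI Pipelines."""
    # Imported lazily so the rest of the module works without the SDK installed.
    from google.cloud import aiplatform

    job = aiplatform.PipelineJob(
        display_name=request["display_name"],
        template_path="gs://example-bucket/pipelines/training.json",  # hypothetical
        pipeline_root="gs://example-bucket/pipeline-root",  # hypothetical
        parameter_values=request["parameter_values"],
    )
    job.submit()  # returns immediately; the run continues on Vertex AI


def handle_event(event: dict, submit: Callable[[dict], None] = submit_to_vertex) -> bool:
    """Entry point for the trigger; returns True if a pipeline run was requested."""
    request = pipeline_request_for(event)
    if request is None:
        return False
    submit(request)
    return True
```

Keeping the event filtering separate from the submission call means the trigger logic can be unit tested in CI with a fake `submit` function, without touching Google Cloud.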

“The proposed architecture design covers the requirements and scenarios that we were looking for. As our AI and ML portfolio is growing in scale and complexity, it's important to follow a clear and up-to-date architecture if we want to keep increasing the value delivered by our solutions. As part of the process, the team also acquired the skills required to fully implement it,” according to Bernardo Fernandes, Data Science Senior Manager at Equinix.

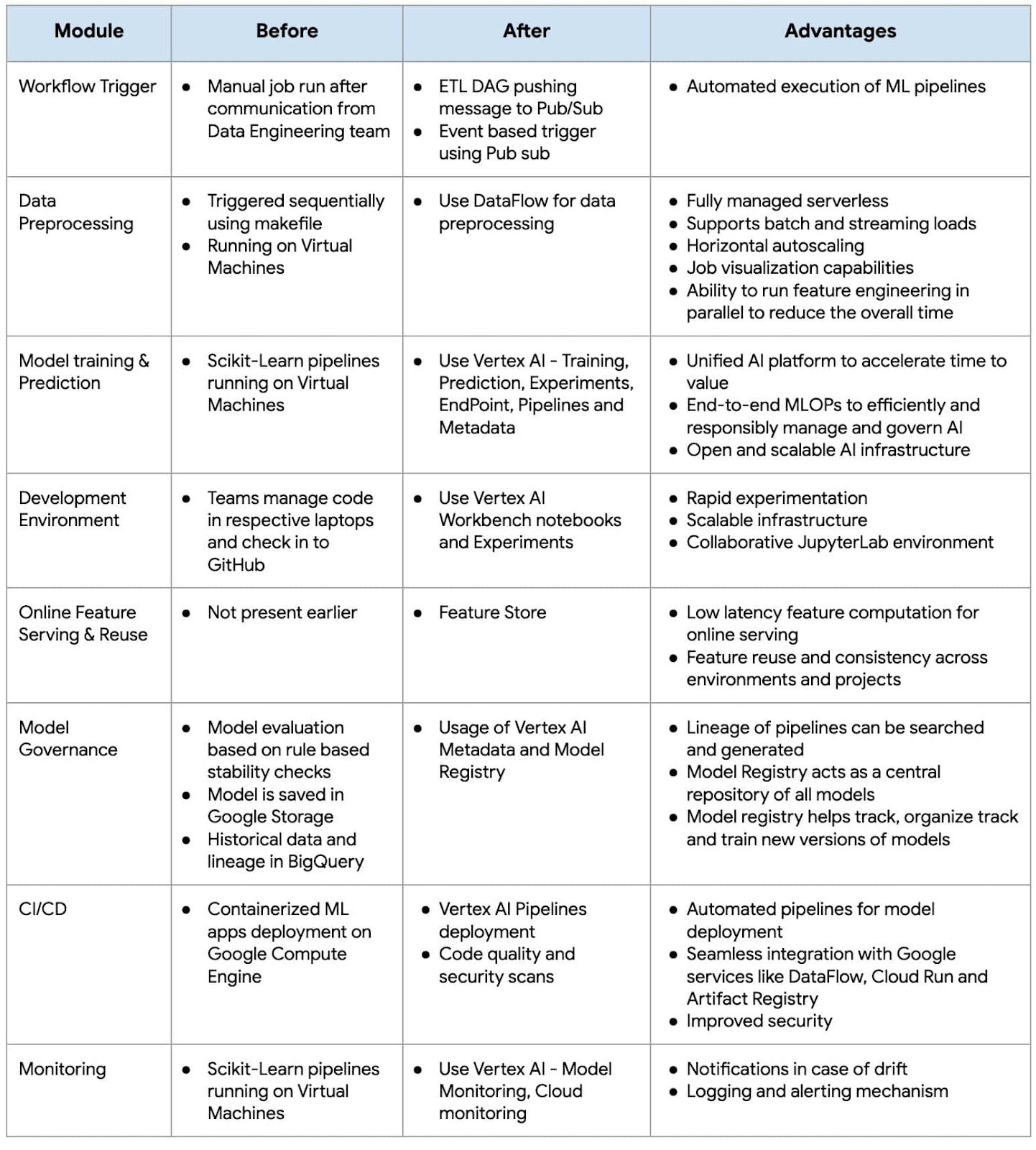

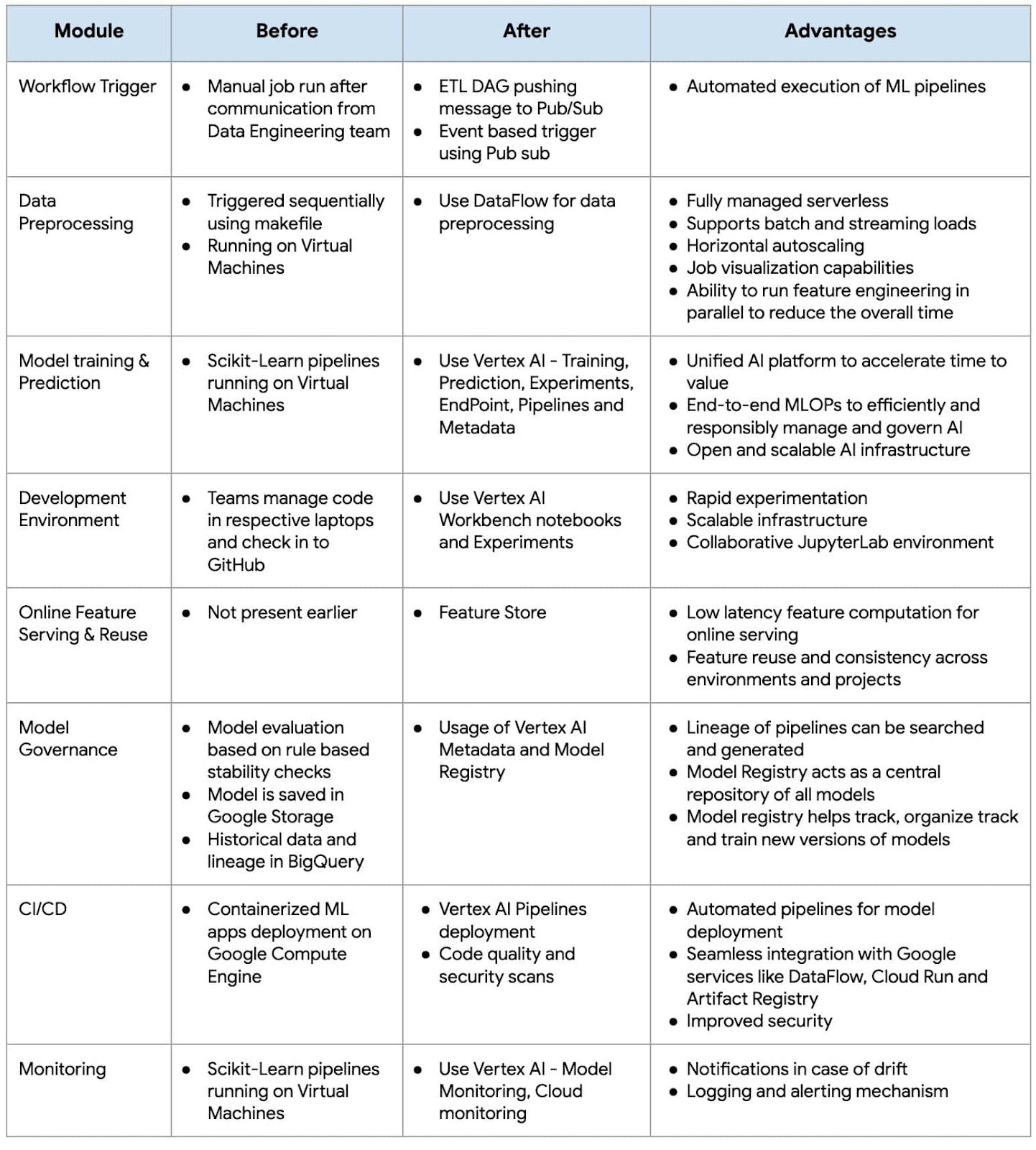

The accompanying figure gives a snapshot of the features before and after the MLOps implementation at Equinix.

As businesses increasingly rely on machine learning to gain a competitive edge, MLOps has become a critical component of their strategy. By adopting MLOps practices, organizations can achieve faster time-to-market, better performance, and higher ROI for their machine learning initiatives.

Fast track end-to-end deployment with Google Cloud AI Services (AIS)

The partnership between Google Cloud and Equinix is just one of the latest examples of how we’re providing AI-powered solutions that solve complex problems and help organizations achieve their desired outcomes. To learn more about Google Cloud’s AI services, visit our AI & ML Products page.

We’d like to give special thanks to Nitin Aggarwal, Vijay Surampudi, Parag Mhatre and Anantha Narayanan Krishnamurthy for their support and guidance throughout the project. We are also grateful for the super awesome collaboration with the Equinix Team (Ravi Pasula, Brendan Coffey, Bernardo Fernandes, Łukasz Murawski, Jakub Michałowski, Sonia Przygocka-Groszyk, Marek Opechowski, Daria Bondara, Nila Velu, Vijay Narayanan, Dharmendra Kumar, Shailesh Sukare, Arunraj Kumar Raje, Seng Cheong Lee).