Accelerate Cloud EDA workflows with NetApp and Google Cloud

Alec Shnapir

Principal Architect, Google Cloud

Chad Morgenstern

Principal Technologist, Office of the CTO, NetApp

The semiconductor industry has long sought to do more in fixed release windows; however, new factors, including the move to seven-, five-, and three-nanometer (nm) process nodes, have driven the scale of compute and data required to a new extreme. Rather than building and maintaining ever-larger data centers to meet that demand, major semiconductor design teams are leveraging Google Cloud. These organizations have discovered that Google Cloud’s elasticity lets them work efficiently in an environment where compute resources scale to match their needs throughout the design cycle.

The most common way to migrate EDA workflows to the cloud initially is to “lift and shift,” maintaining parity with on-premises storage systems, and plan to modernize the workflow later. Additionally, “bursting” to the cloud enables companies to spin cloud compute resources up and down when additional work needs to be done. This allows semiconductor customers to move applications more quickly, without requiring immediate modifications, and to keep leveraging on-premises resources during the migration. NetApp’s partnership with Google Cloud provides a unique storage platform for semiconductor customers to lift and shift easily or burst to the cloud, and to modernize and extract insights from that data in the future. First, customers can easily migrate their data with enterprise-class cloud storage solutions that integrate tightly with on-premises NetApp systems and are optimized and validated for Google Cloud. Then, they can modernize their data management with application-driven NetApp storage and intelligent data services, all deployed and managed for them in the cloud.

NetApp Cloud Volumes for Google Cloud

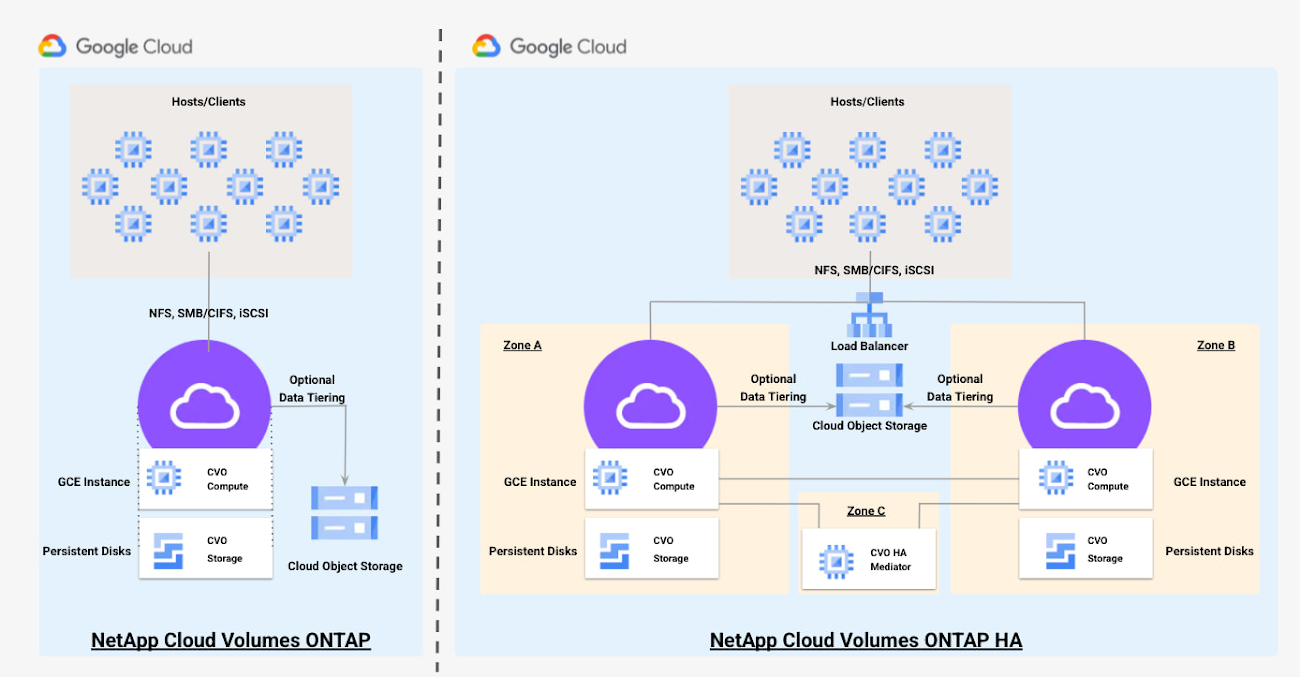

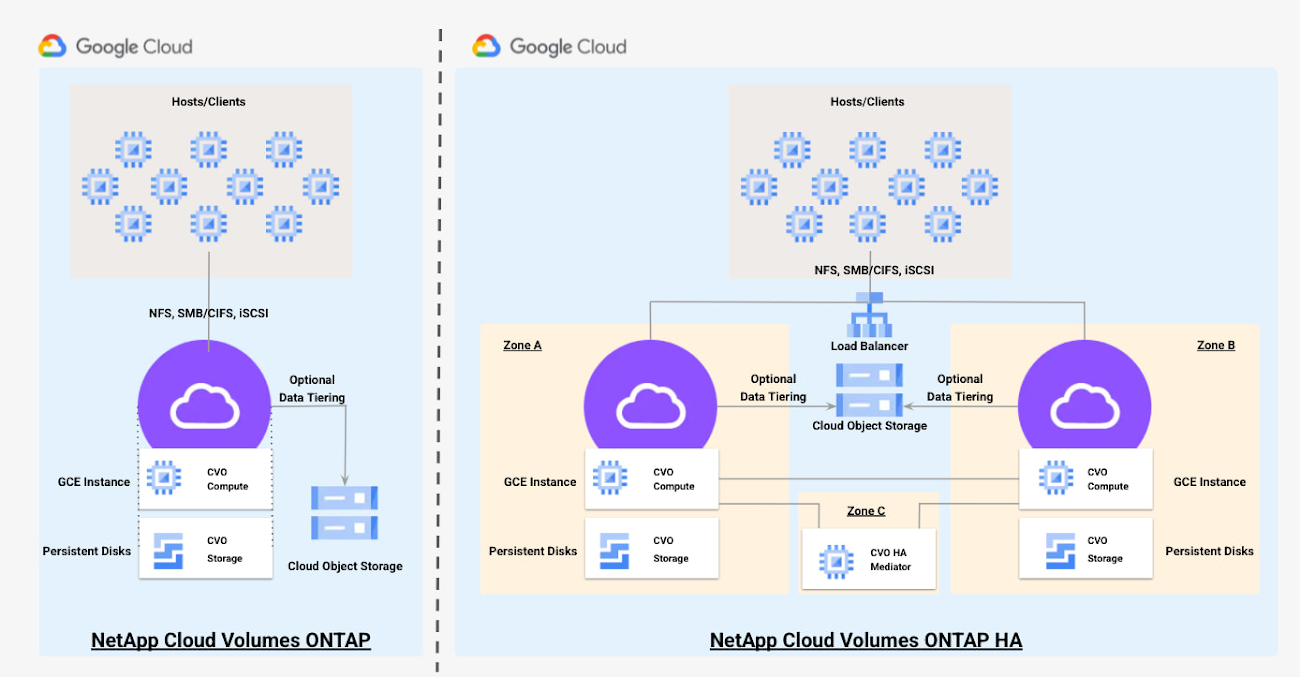

At a high level, there are two NetApp Cloud Volumes offerings in Google Cloud: NetApp Cloud Volumes Service (CVS), a fully managed storage service, and NetApp Cloud Volumes ONTAP (CVO), a self-managed storage service. Unlike CVS, CVO enables customers to leverage on-premises NetApp deployments and use SnapMirror and/or FlexCache to seamlessly move data from on-premises systems to CVO in Google Cloud. With CVO for Google Cloud, you can unlock more savings and performance from Google Cloud while boosting data protection, security, and compliance. In addition, existing NetApp customers continue to have all the features they know and love, and their on-premises investments in training and automation continue to apply.

The key benefits of using CVO:

Fast Deployment and Easy Configuration

The entire CVO solution can be deployed and deleted in minutes using either NetApp Cloud Manager or Terraform scripts

Configuration Flexibility

NetApp CVO deployments are supported in all 29 Google Cloud regions

Deployments allow flexibility in selecting compute and storage resources to match each customer’s specific needs throughout the design cycle

In addition to single-node CVO deployment options, customers can deploy highly available storage with a CVO HA configuration. This can be done within a single zone or across multiple zones. The CVO HA configuration provides nondisruptive operations and fault tolerance while data is synchronously mirrored between the two CVO compute nodes.

Monitoring and Logging

In addition to NetApp’s tools, Google Cloud Monitoring & Logging integration allows quick diagnosis and resolution of performance bottlenecks and configuration issues

Advanced data caching and synchronization technologies

Using NetApp data management applications, customers can mirror data between on-premises systems and Google Cloud, cache data in either location, or migrate data to the cloud

Faster Migrations

NetApp CVO solutions maintain parity with on-premises storage systems and solution designs, which allows customers to migrate their applications more quickly and without requiring modifications to their workflows

Bursting Workloads to the Cloud

Although on-premises computation is still the norm for the majority of EDA customers, existing on-premises infrastructure is increasingly unable to meet the growing and elastic demands of today’s design requirements. Companies are finding that the elasticity provided by Google Cloud enables designers to get more done within a fixed release window and accommodates ever-growing data capacities. Using the cloud allows design teams to dramatically improve time to market and eliminates the uncertainty of whether a fixed amount of potentially aging on-premises storage and compute infrastructure will be able to satisfy EDA workload demands.

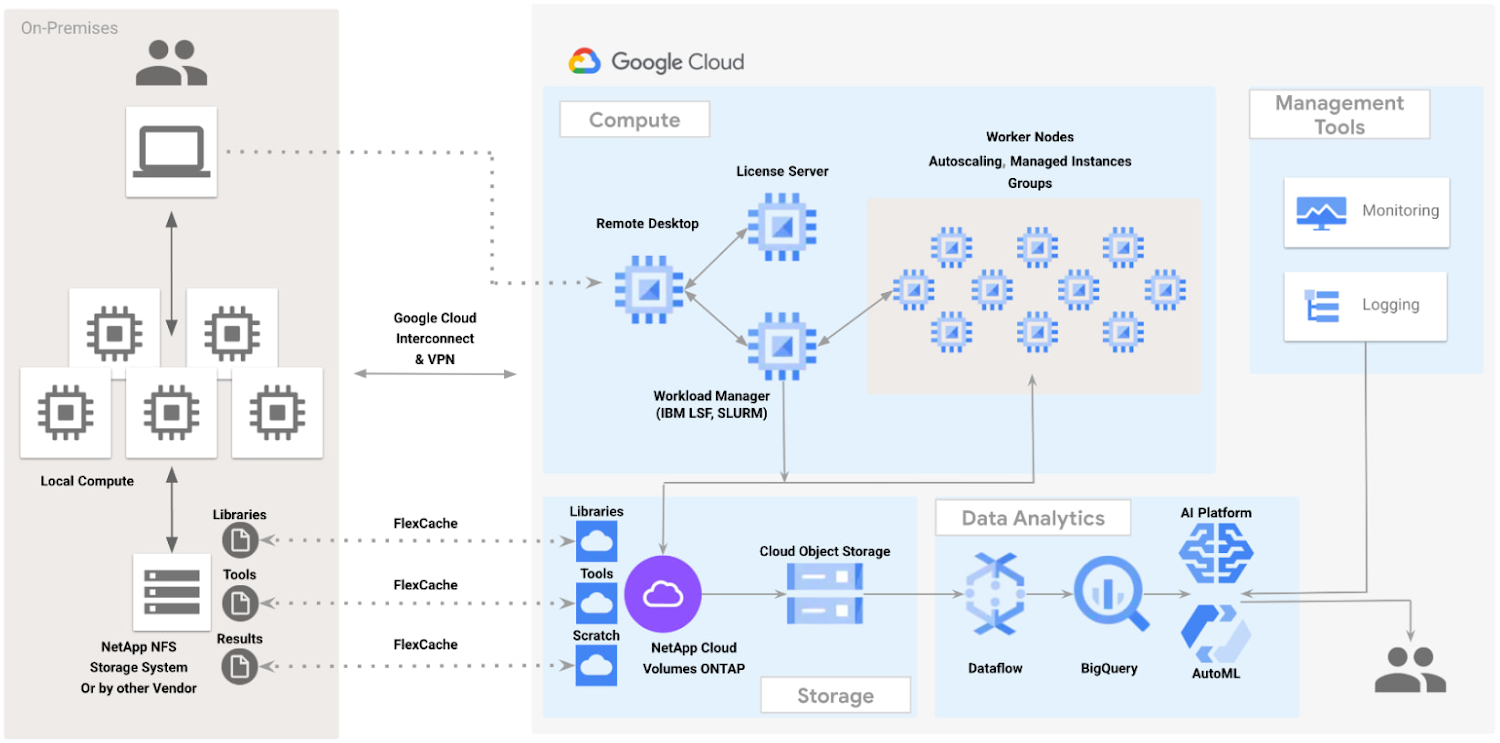

Whether building a hybrid cloud or migrating completely to Google Cloud, the scale and gravity of data require special handling. NetApp’s advanced data caching (FlexCache) and synchronization technologies give customers the ability to mirror data between on-premises systems and Google Cloud, to cache data in either location, or to migrate data to the cloud while preserving existing workflows.

With NetApp FlexCache, customers can seamlessly connect their on-premises data to Google Cloud. NetApp CVO can be configured to act as both high-performance scalable storage for EDA workloads in the cloud and a cache of on-premises tools and libraries. To an EDA workload in the cloud, these tools and libraries appear to be local. Not only does this eliminate the need to mirror all of the tools and libraries to the cloud, it also removes the need to actively manage a separate collection of versioned tools and libraries in the cloud. Developers can get just the data they need, where and when they need it. In addition, some customers may choose to provision a FlexCache on-premises so that the results of jobs run in the cloud appear local to debugging tools running on-premises.
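The caching idea described above can be illustrated with a toy read-through cache. This is only a conceptual sketch, not how FlexCache is implemented: the `ReadThroughCache` class, the dictionary standing in for the on-premises volume, and the file paths are all hypothetical, and the sketch ignores cache consistency and invalidation entirely.

```python
class ReadThroughCache:
    """Toy read-through cache: fetch from the origin only on a miss."""

    def __init__(self, origin):
        self.origin = origin     # hypothetical stand-in for the on-premises volume
        self.cached = {}         # data already pulled to the cloud side
        self.origin_reads = 0    # number of round trips to the origin

    def read(self, path):
        if path not in self.cached:
            # Cache miss: pull the file across once, then serve it locally.
            self.cached[path] = self.origin[path]
            self.origin_reads += 1
        return self.cached[path]


# Hypothetical on-premises tools and libraries.
on_prem = {
    "/tools/sim/bin": b"simulator",
    "/libs/7nm/cells.lib": b"cells",
    "/libs/5nm/cells.lib": b"never requested",
}

cache = ReadThroughCache(on_prem)
for _ in range(3):
    cache.read("/tools/sim/bin")      # only the first read reaches the origin
cache.read("/libs/7nm/cells.lib")
```

Only the two files that jobs actually touched were copied; the third library was never transferred, which is the "just the data they need" behavior in miniature.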

Measuring the Performance of Synthetic EDA Workloads

EDA workloads present a unique set of challenges to storage systems, where I/O profiles can vary with the software tools in use and the workflow design stage.

When trying to generalize and simulate EDA workloads, we make the following assumptions:

During logical design phases, when a large number of jobs run in parallel, I/O patterns tend to be mostly random and very metadata-intensive, and they access a large number of small files

During physical design phases, the I/O patterns become more sequential, with fewer read/write jobs running simultaneously and accessing much larger files
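The two assumed I/O profiles can be sketched in a few lines of Python. This is not the benchmark suite used for the evaluation; the function names, file names, file counts, and sizes are illustrative assumptions chosen only to make the contrast visible on a laptop.

```python
import os
import random
import tempfile


def logical_phase(root, n_files=200, file_size=4096, seed=0):
    """Many small files, random access order, metadata-heavy."""
    rng = random.Random(seed)
    paths = [os.path.join(root, f"job_{i:05d}.out") for i in range(n_files)]
    for p in paths:
        with open(p, "wb") as f:
            f.write(os.urandom(file_size))
    ops = {"metadata": n_files, "data_bytes": 0}  # one create per file
    rng.shuffle(paths)                            # random access order
    for p in paths:
        os.stat(p)                                # explicit metadata lookup
        ops["metadata"] += 1
        with open(p, "rb") as f:
            ops["data_bytes"] += len(f.read())
    return ops


def physical_phase(root, n_files=2, file_size=4 * 1024 * 1024, chunk=1024 * 1024):
    """Few large files, streamed sequentially in large chunks."""
    ops = {"metadata": 0, "data_bytes": 0}
    for i in range(n_files):
        p = os.path.join(root, f"layout_{i}.db")
        with open(p, "wb") as f:
            for _ in range(file_size // chunk):
                f.write(b"\0" * chunk)
        ops["metadata"] += 1                      # one create per file
        with open(p, "rb") as f:
            while block := f.read(chunk):         # sequential read
                ops["data_bytes"] += len(block)
    return ops


with tempfile.TemporaryDirectory() as tmp:
    logical = logical_phase(tmp)
    physical = physical_phase(tmp)
```

Comparing `data_bytes / metadata` for the two results shows why the phases stress storage differently: the logical profile moves a few kilobytes per metadata operation, while the physical profile moves megabytes.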

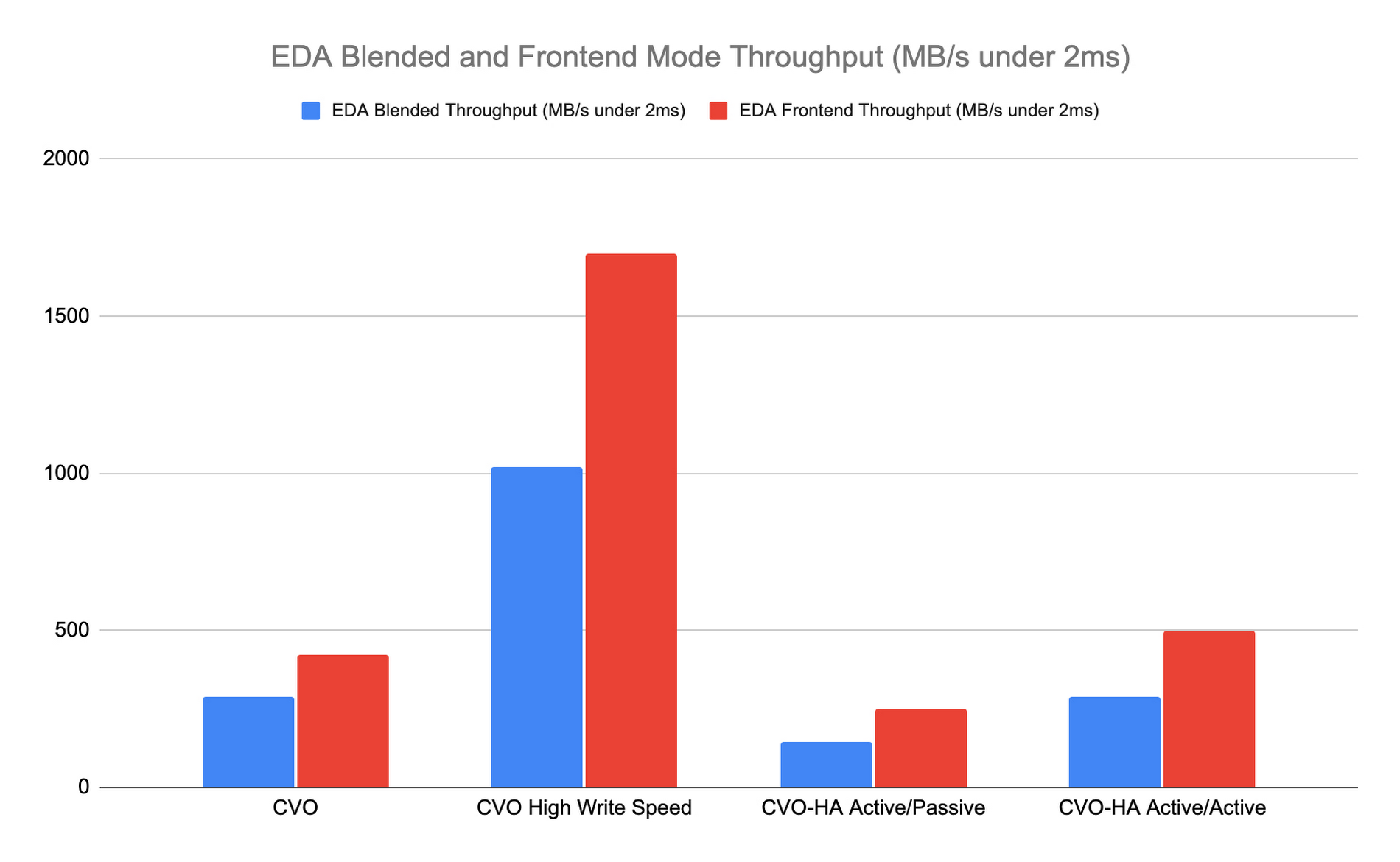

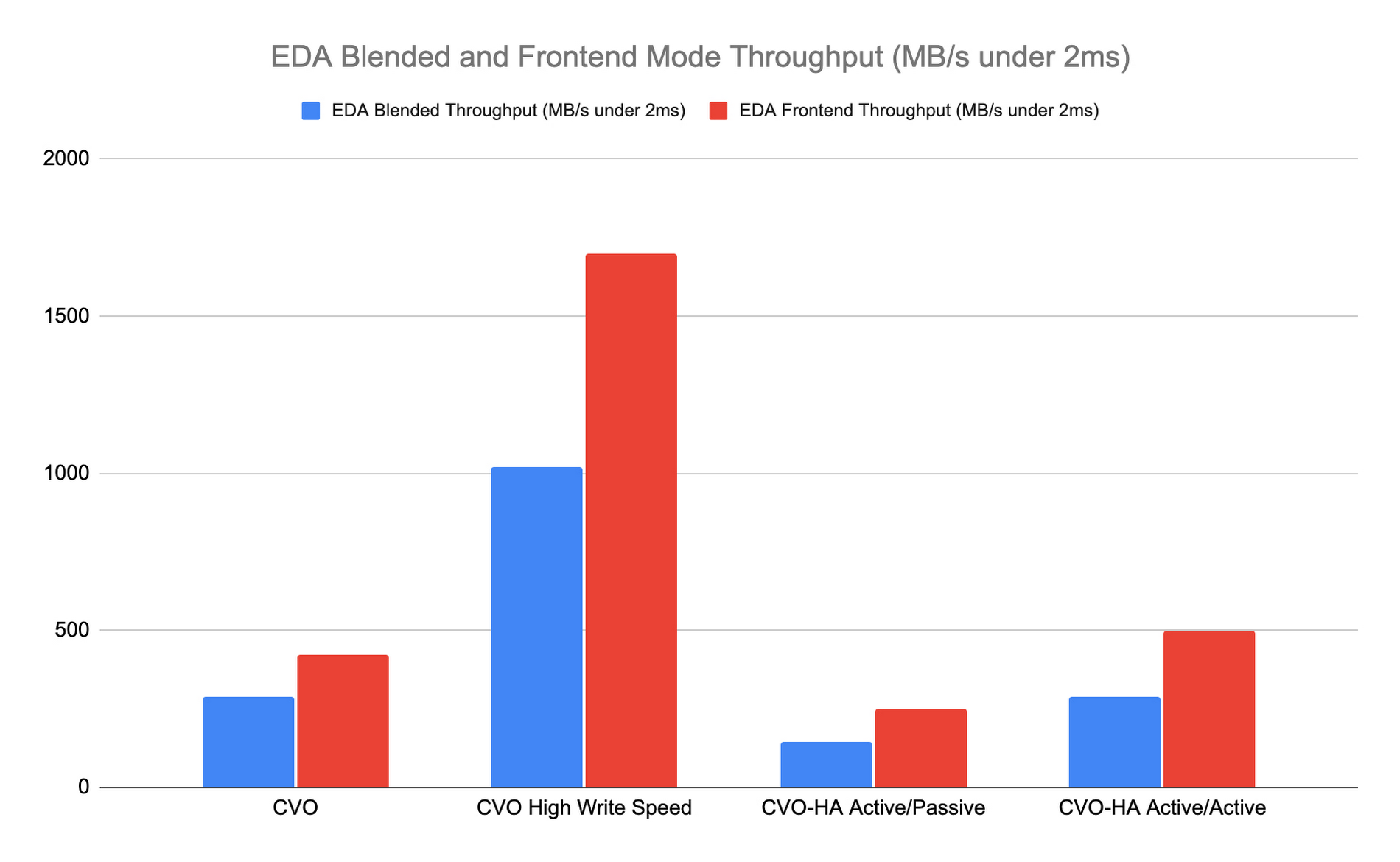

We evaluated the performance of NetApp CVO for Google Cloud using a synthetic EDA workload benchmark suite. The benchmark was developed to simulate real application behavior in real data-processing file system environments, and each workload has a set of pass/fail criteria that must be met in order to pass the benchmark.

For best performance, CVO should be run in high write speed mode. In this mode, data is buffered in memory before it is written to disk. If CVO High Availability is desired, then the active/active configuration is recommended. In both configurations, CVO delivers the rich feature set of ONTAP and the scale of Google Cloud while preserving the customer’s workflow.
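The general idea of buffering writes in memory before they reach disk can be sketched as follows. This is a generic write-back buffer, not CVO's implementation; the `WriteBackBuffer` class, the flush threshold, and the list standing in for disk are hypothetical.

```python
class WriteBackBuffer:
    """Toy write-back buffer: accept writes in memory, flush in batches."""

    def __init__(self, flush_threshold):
        self.flush_threshold = flush_threshold
        self.pending = []        # writes accepted but not yet persisted
        self.pending_bytes = 0
        self.disk = []           # hypothetical stand-in for persistent storage
        self.flushes = 0

    def write(self, data):
        # The caller returns as soon as the data is held in memory.
        self.pending.append(data)
        self.pending_bytes += len(data)
        if self.pending_bytes >= self.flush_threshold:
            self.flush()

    def flush(self):
        if self.pending:
            # One large write to disk replaces many small ones.
            self.disk.append(b"".join(self.pending))
            self.pending.clear()
            self.pending_bytes = 0
            self.flushes += 1


buf = WriteBackBuffer(flush_threshold=4096)
for _ in range(10):
    buf.write(b"x" * 1024)
```

Ten small writes become two disk writes, which is where the speedup comes from. The remaining bytes in `pending` exist only in memory until the next flush, which is the usual trade-off of any write-back design.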

What’s next

Try the Google Cloud and NetApp CVO Solution. You can also learn more about NetApp Cloud Volumes ONTAP for EDA and learn how to Get started with Cloud Volumes ONTAP for Google Cloud.

In addition, if you are interested in learning more about silicon design on Google Cloud, you can read the publications “Never miss a tapeout: Faster chip design with Google Cloud” and “Using Google Cloud to accelerate your chip design process”.

A special thanks to Guy Rinkevich and Sean Derrington from Google Cloud and Jim Holl and Michael Johnson from NetApp for their contributions.