Traffic Director explained!

Priyanka Vergadia

Staff Developer Advocate, Google Cloud

If your application is deployed in a microservices architecture, you are likely familiar with the networking challenges that come with it. Traffic Director helps you run microservices in a global service mesh. The mesh handles networking for your microservices, so you can focus on your business logic and application code, which doesn't need to know about the underlying networking complexities. This separation of application logic from networking logic helps you improve your development velocity, increase service availability, and introduce modern DevOps practices in your organization.

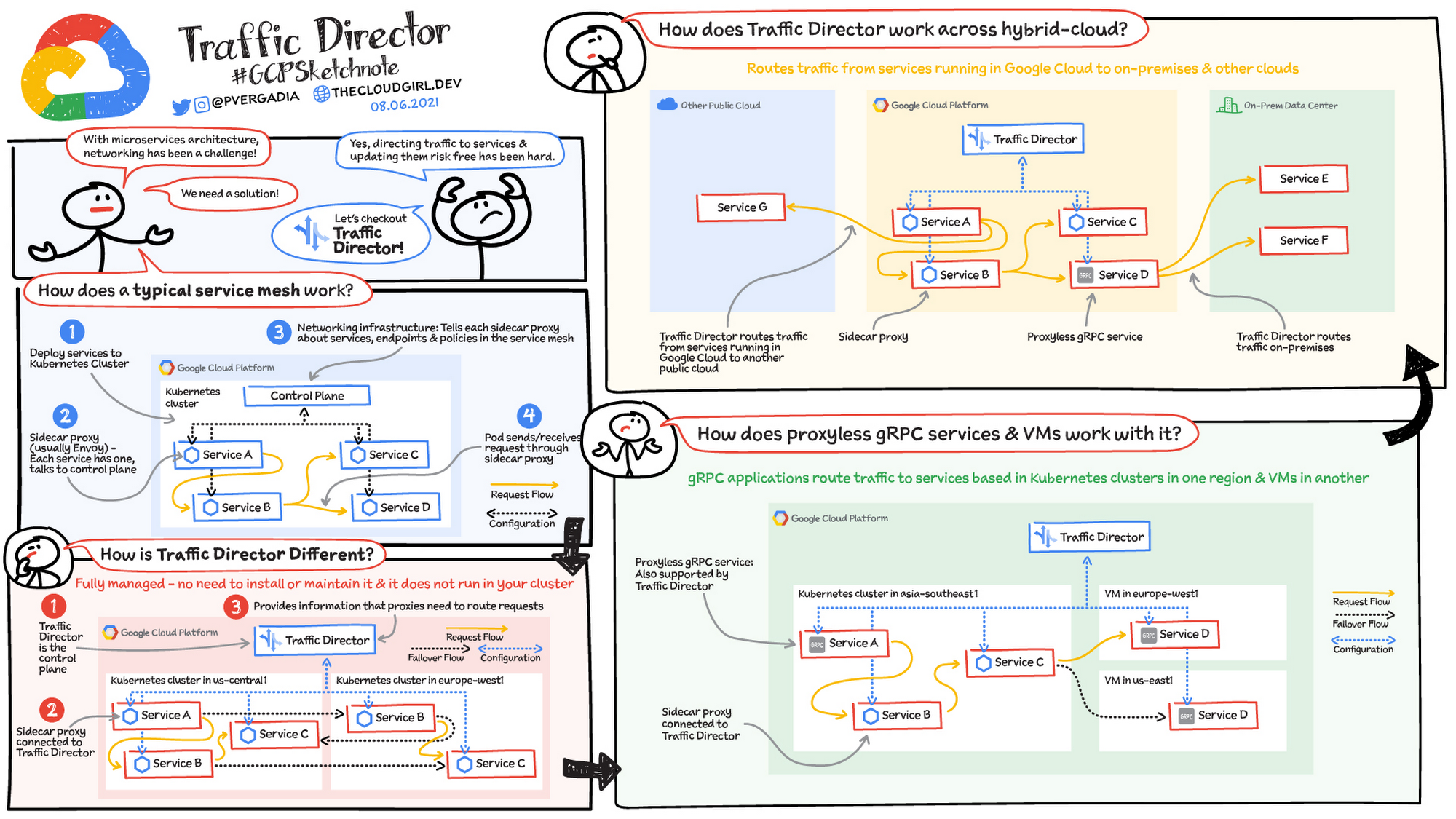

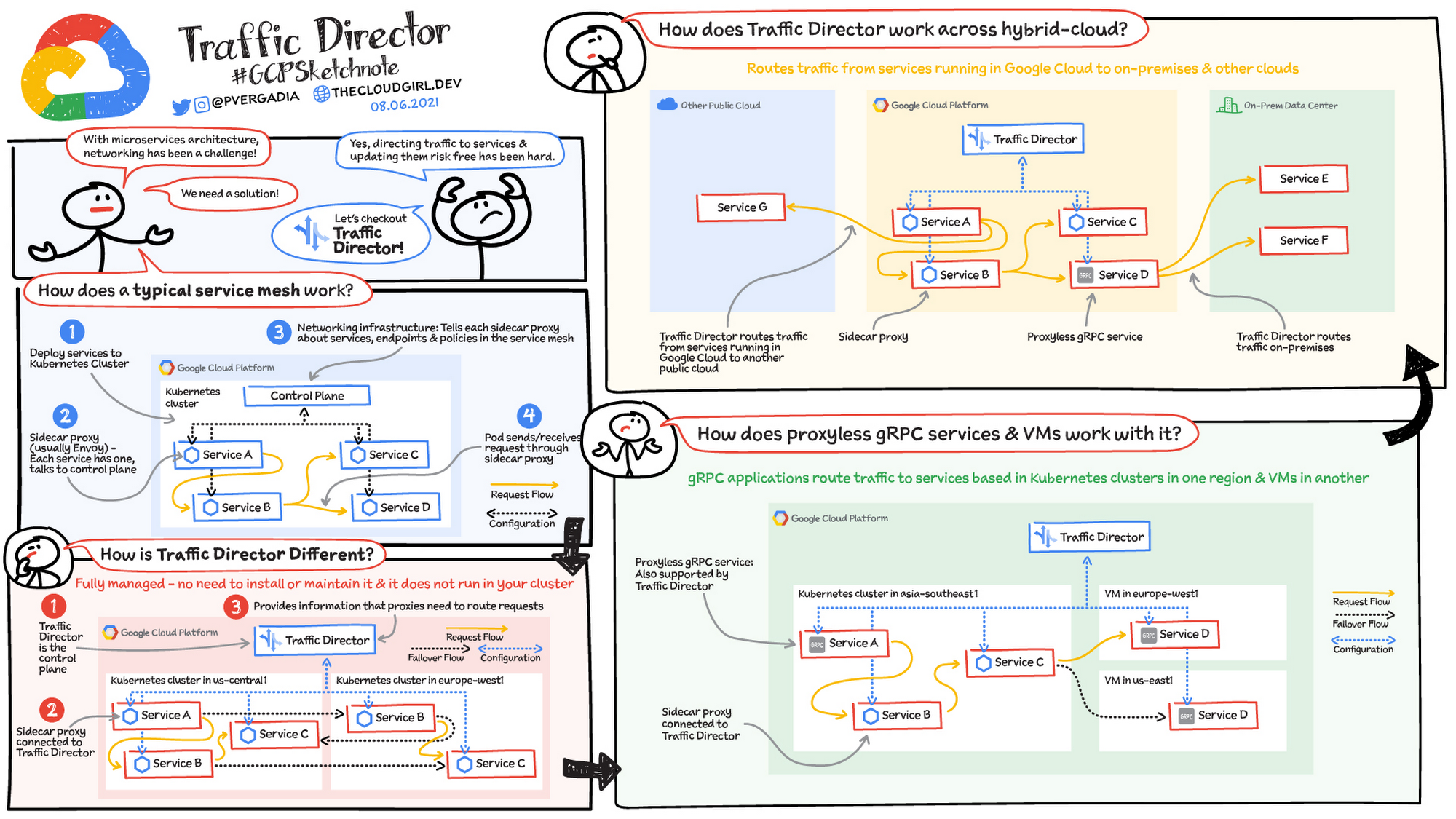

How does a typical service mesh work in Kubernetes?

In a typical service mesh you deploy your services to a Kubernetes cluster.

- Each of the services' Pods has a dedicated proxy (usually Envoy) running as a sidecar container alongside the application container(s).

- Each sidecar proxy talks to the networking infrastructure (a control plane) that is installed in your cluster. The control plane tells the sidecar proxies about services, endpoints, and policies in your service mesh.

- When a Pod sends or receives a request, the request is intercepted by the Pod's sidecar proxy. The sidecar proxy handles the request, for example, by sending it to its intended destination.
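The request path above can be sketched as a toy forwarding proxy built only from the Python standard library. This is a hypothetical illustration, not how Envoy or Traffic Director is implemented. Real sidecars intercept traffic transparently (typically through iptables rules set up in the Pod), whereas here Service A's code calls the sidecar's local address explicitly. Only the client-side sidecar is modeled. The names `make_sidecar`, `ServiceB`, `demo`, and the `X-Toy-Sidecar` header are invented for the example.

```python
import http.server
import threading
import urllib.request

# Opener that ignores any HTTP_PROXY settings so localhost calls stay local.
_OPENER = urllib.request.build_opener(urllib.request.ProxyHandler({}))


class _QuietHandler(http.server.BaseHTTPRequestHandler):
    def log_message(self, *args):
        pass  # silence per-request logging for the demo

    def _reply(self, status, body, extra_headers=()):
        self.send_response(status)
        self.send_header("Content-Length", str(len(body)))
        for name, value in extra_headers:
            self.send_header(name, value)
        self.end_headers()
        self.wfile.write(body)


class ServiceB(_QuietHandler):
    """Hypothetical stand-in for the destination service's app container."""

    def do_GET(self):
        self._reply(200, f"service-b handled {self.path}".encode())


def make_sidecar(upstream):
    """Hypothetical toy sidecar: forwards every GET it receives to `upstream`."""

    class Sidecar(_QuietHandler):
        def do_GET(self):
            # Forward the path unchanged. A real proxy would also choose an
            # endpoint, apply policy, retry, and collect telemetry.
            with _OPENER.open(upstream + self.path) as resp:
                status, body = resp.status, resp.read()
            self._reply(status, body, [("X-Toy-Sidecar", "forwarded")])

    return http.server.ThreadingHTTPServer(("127.0.0.1", 0), Sidecar)


def start(server):
    """Run `server` on a daemon thread and return its base URL."""
    threading.Thread(target=server.serve_forever, daemon=True).start()
    host, port = server.server_address[:2]
    return f"http://{host}:{port}"


def demo():
    backend = http.server.ThreadingHTTPServer(("127.0.0.1", 0), ServiceB)
    sidecar = make_sidecar(start(backend))
    sidecar_url = start(sidecar)
    try:
        # Service A's code only talks to its local sidecar.
        with _OPENER.open(sidecar_url + "/orders") as resp:
            return resp.read().decode(), resp.headers["X-Toy-Sidecar"]
    finally:
        for server in (sidecar, backend):
            server.shutdown()
            server.server_close()
```

Calling `demo()` returns Service B's response body together with the marker header that the toy sidecar added on the way back.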

The control plane is connected to each proxy and provides the information that the proxies need to handle requests. For example, when application code in Service A sends a request, its sidecar proxy handles the request and forwards it to Service B. This model lets you move networking logic out of your application code. You can focus on delivering business value while the service mesh infrastructure takes care of application networking.
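Envoy receives this information from a control plane over its xDS discovery APIs. The sketch below is not xDS; it is a hypothetical, in-memory model of the same idea, assuming the control plane pushes versioned snapshots of "service name to endpoint list" to every connected proxy. `ToyControlPlane`, `ToyProxy`, and the IP addresses are invented for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class ToyProxy:
    """Hypothetical sidecar-side cache of what the control plane has pushed."""
    name: str
    endpoints: dict = field(default_factory=dict)
    version: int = -1  # -1 means "no configuration received yet"

    def on_update(self, version, snapshot):
        # Ignore stale or duplicate pushes; keep only newer versions.
        if version > self.version:
            self.version = version
            self.endpoints = snapshot

    def resolve(self, service):
        """Return the endpoints this proxy currently knows for `service`."""
        return self.endpoints.get(service, [])


class ToyControlPlane:
    """Hypothetical control plane that pushes full snapshots to every proxy."""

    def __init__(self):
        self._services = {}
        self._proxies = []
        self._version = 0

    def connect(self, proxy):
        # A newly connected proxy immediately receives the current state.
        self._proxies.append(proxy)
        proxy.on_update(self._version, self._snapshot())

    def set_endpoints(self, service, endpoints):
        # Any change bumps the version and is pushed to all connected proxies.
        self._services[service] = list(endpoints)
        self._version += 1
        for proxy in self._proxies:
            proxy.on_update(self._version, self._snapshot())

    def _snapshot(self):
        # Give each proxy its own copy so it cannot mutate shared state.
        return {svc: list(eps) for svc, eps in self._services.items()}


if __name__ == "__main__":
    cp = ToyControlPlane()
    pod_a = ToyProxy("service-a-pod-1")
    cp.connect(pod_a)
    cp.set_endpoints("service-b", ["10.0.1.5:8080", "10.0.2.7:8080"])
    print(pod_a.resolve("service-b"))  # ['10.0.1.5:8080', '10.0.2.7:8080']
    cp.set_endpoints("service-b", ["10.0.2.7:8080"])  # one Pod removed
    print(pod_a.resolve("service-b"))  # ['10.0.2.7:8080']
```

The application code never touches this table; only the proxy consults it when forwarding a request.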

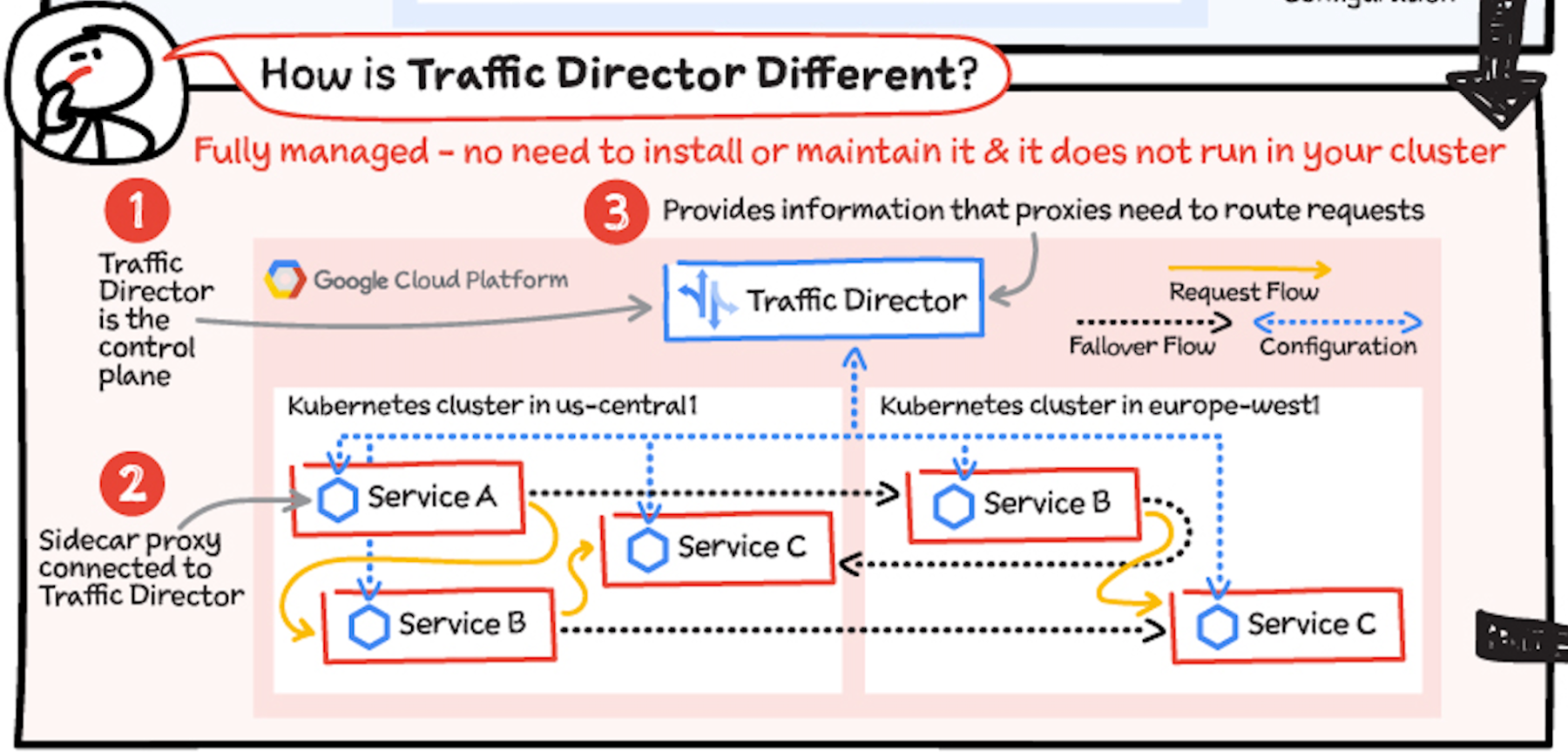

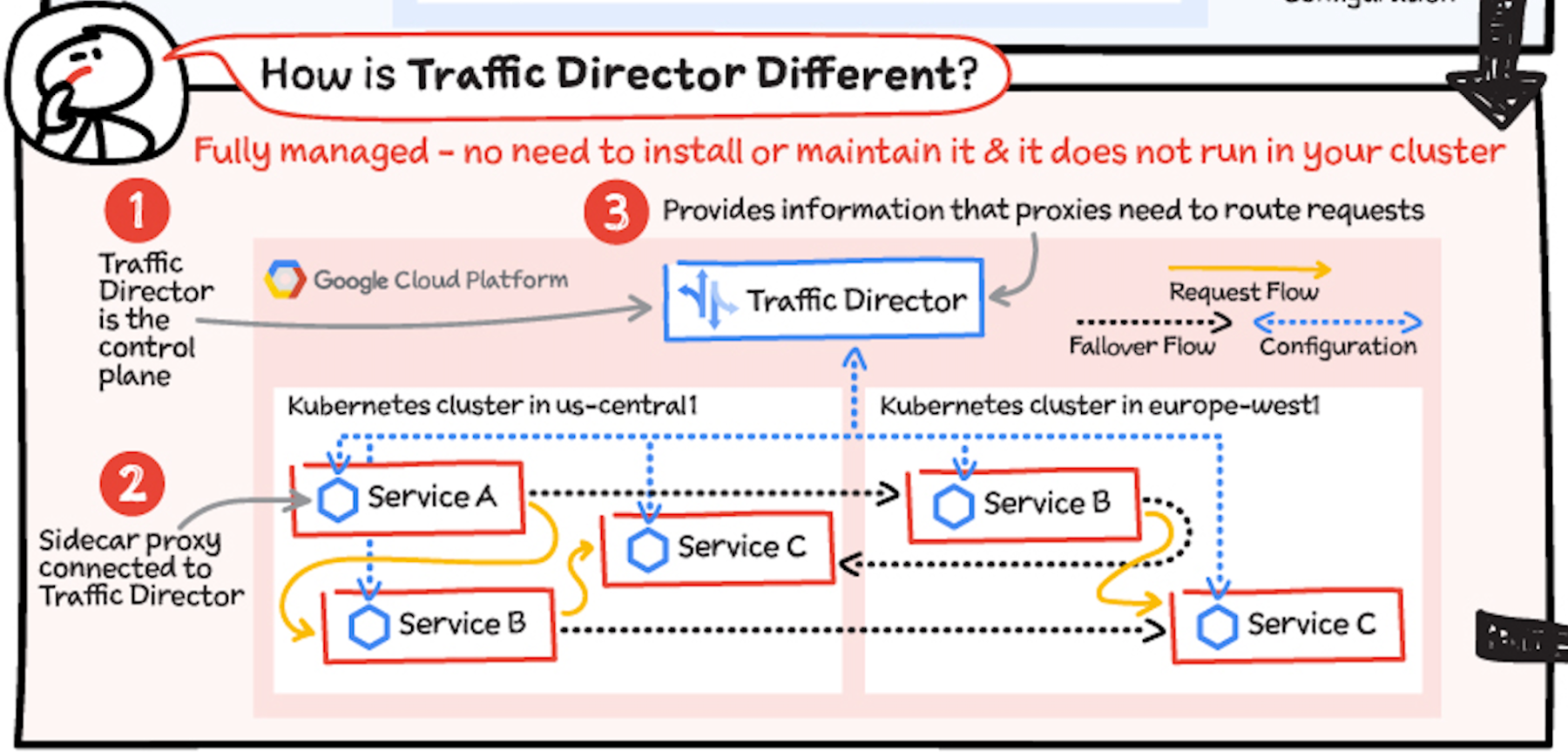

How is Traffic Director different?

Traffic Director works similarly to the typical service mesh model, but it differs in a few crucial ways. Traffic Director provides:

- A fully managed and highly available control plane. You don't install it, it doesn't run in your cluster, and you don't need to maintain it. Google Cloud manages all of this for you with production-level SLOs.

- Global load balancing that is capacity- and health-aware, with automatic failover.

- Integrated security features to enable a zero-trust security posture.

- Rich control plane and data plane observability features.

- Support for multi-environment service meshes spanning multi-cluster Kubernetes, hybrid cloud, VMs, gRPC services, and more.

Traffic Director provides the information that the proxies need to route requests. For example, when application code on a Pod that belongs to Service A sends a request, the sidecar proxy running alongside that Pod handles the request and routes it to a Pod that belongs to Service B.

Multi-cluster Kubernetes: Traffic Director supports application networking across Kubernetes clusters. In this example, it provides a managed and global control plane for Kubernetes clusters in the US and Europe. Services in one cluster can talk to services in another cluster. You can even have services that consist of Pods in multiple clusters. With Traffic Director's proximity-based global load balancing, requests destined for Service B go to the geographically nearest Pod that can serve the request. You also get seamless failover; if a Pod is down, the request automatically fails over to another Pod that can serve the request, even if this Pod is in a different Kubernetes cluster.
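A simplified model of proximity-based, capacity- and health-aware selection with cross-cluster failover might look like the sketch below. It is not Traffic Director's actual algorithm. It assumes a made-up round-trip-time table between regions and treats a Pod as usable only if it is healthy and below a hypothetical per-Pod `capacity`. The region names are real Google Cloud regions used only as labels; the clusters, addresses, and numbers are invented.

```python
from dataclasses import dataclass

# Hypothetical round-trip times in milliseconds between client and backend
# regions. The numbers are made up for illustration.
RTT_MS = {
    ("us-central1", "us-central1"): 1,
    ("us-central1", "europe-west1"): 100,
    ("europe-west1", "europe-west1"): 1,
    ("europe-west1", "us-central1"): 100,
}


@dataclass
class Endpoint:
    address: str
    cluster: str
    region: str
    healthy: bool = True
    capacity: int = 100  # max concurrent requests this Pod should take
    in_flight: int = 0   # requests currently being served


def pick_endpoint(client_region, endpoints):
    """Return the nearest endpoint that is healthy and has spare capacity,
    searching every cluster; return None if nothing can serve the request."""
    usable = [e for e in endpoints if e.healthy and e.in_flight < e.capacity]
    if not usable:
        return None
    return min(usable, key=lambda e: RTT_MS[(client_region, e.region)])


if __name__ == "__main__":
    service_b = [
        Endpoint("10.0.1.5:8080", cluster="us-cluster", region="us-central1"),
        Endpoint("10.8.3.9:8080", cluster="eu-cluster", region="europe-west1"),
    ]
    print(pick_endpoint("europe-west1", service_b).cluster)  # eu-cluster
    service_b[1].healthy = False  # the EU Pod goes down
    print(pick_endpoint("europe-west1", service_b).cluster)  # us-cluster
```

Because unhealthy or full Pods are simply filtered out before choosing the closest one, failover to another cluster falls out of the same rule rather than needing a separate code path.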

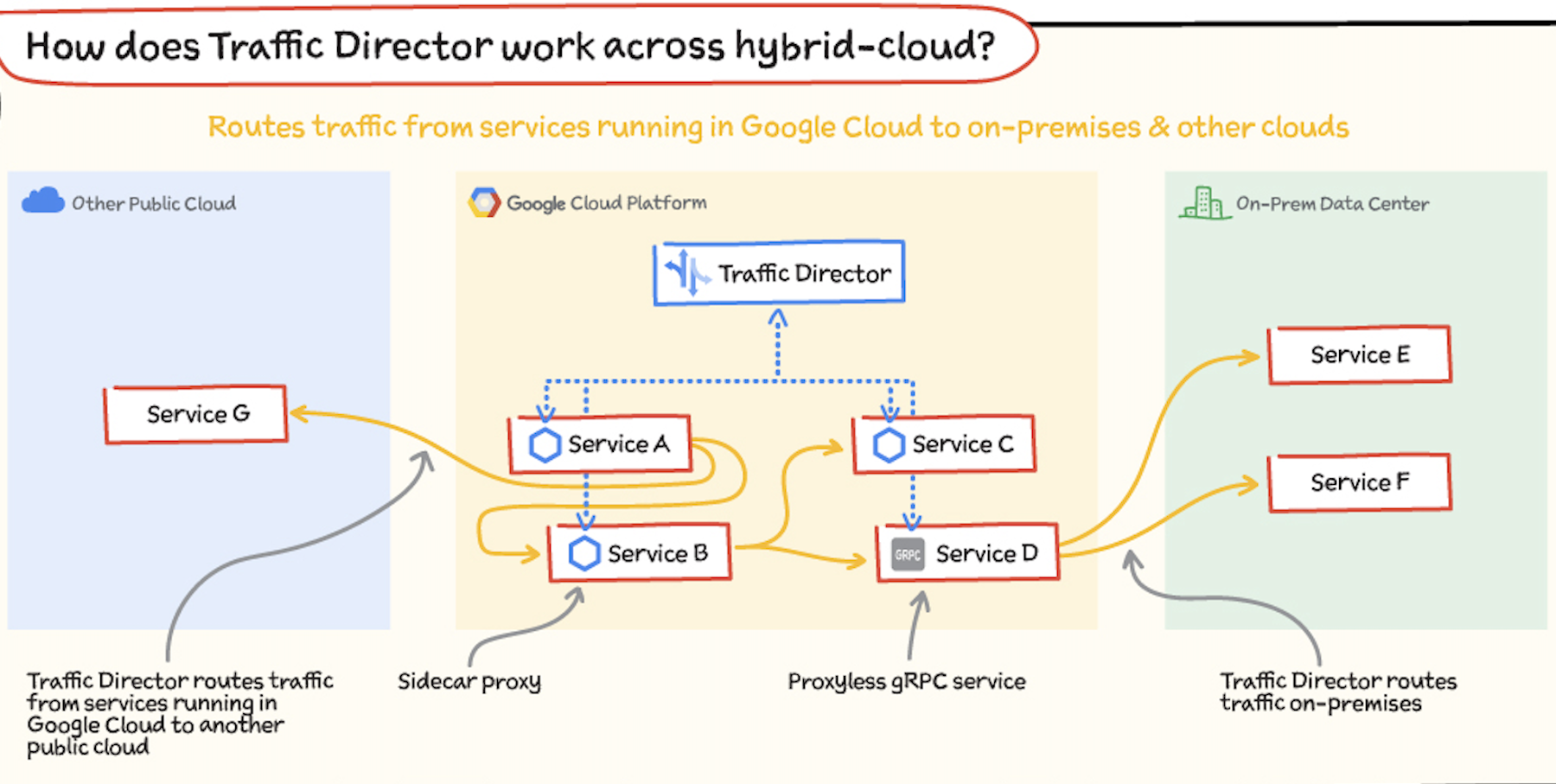

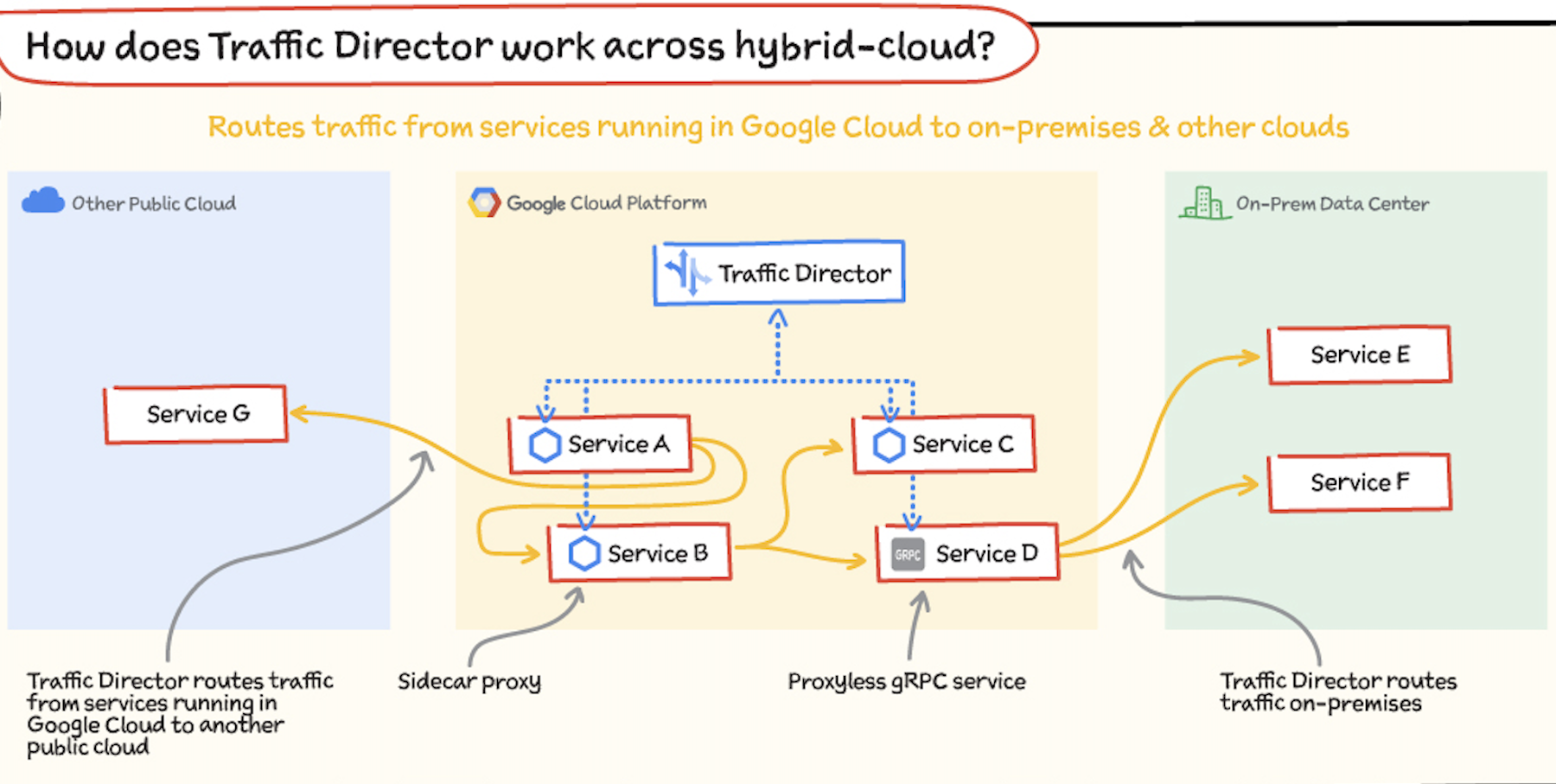

How does Traffic Director work across hybrid and multi-cloud environments?

Whether you have services in Google Cloud, on-premises, in other clouds, or all of these, your fundamental application networking challenges remain the same. How do you get traffic to these services? How do these services communicate with each other?

Traffic Director can route traffic from services running in Google Cloud to services running in another public cloud and to services running in an on-premises data center. Services can use Envoy as a sidecar proxy or run as proxyless gRPC services. Because Traffic Director lets you send requests to destinations outside of Google Cloud, you can use Cloud Interconnect or Cloud VPN to privately route traffic from services inside Google Cloud to services or gateways in other environments. You can also route requests to external services reachable over the public internet.

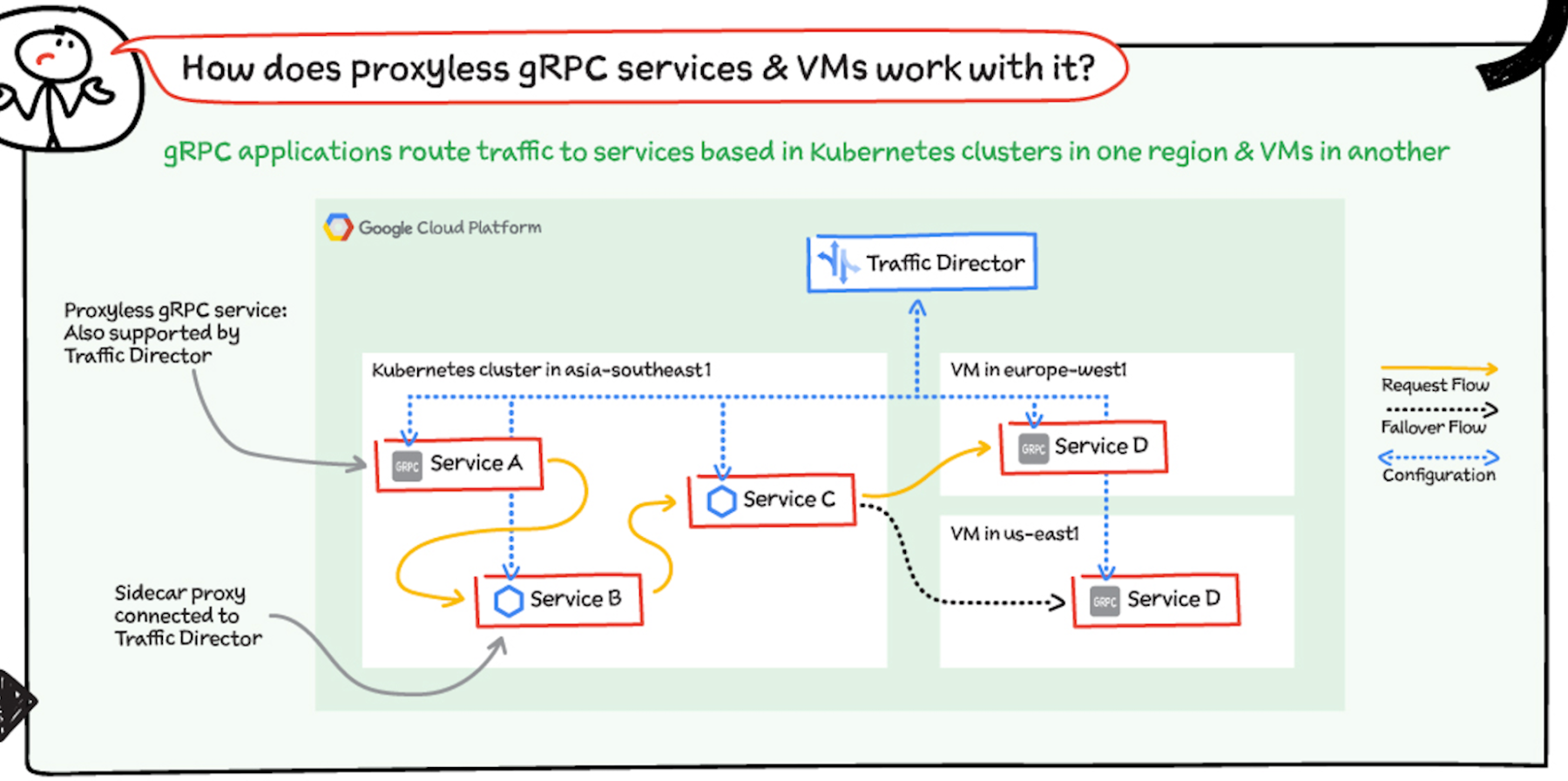

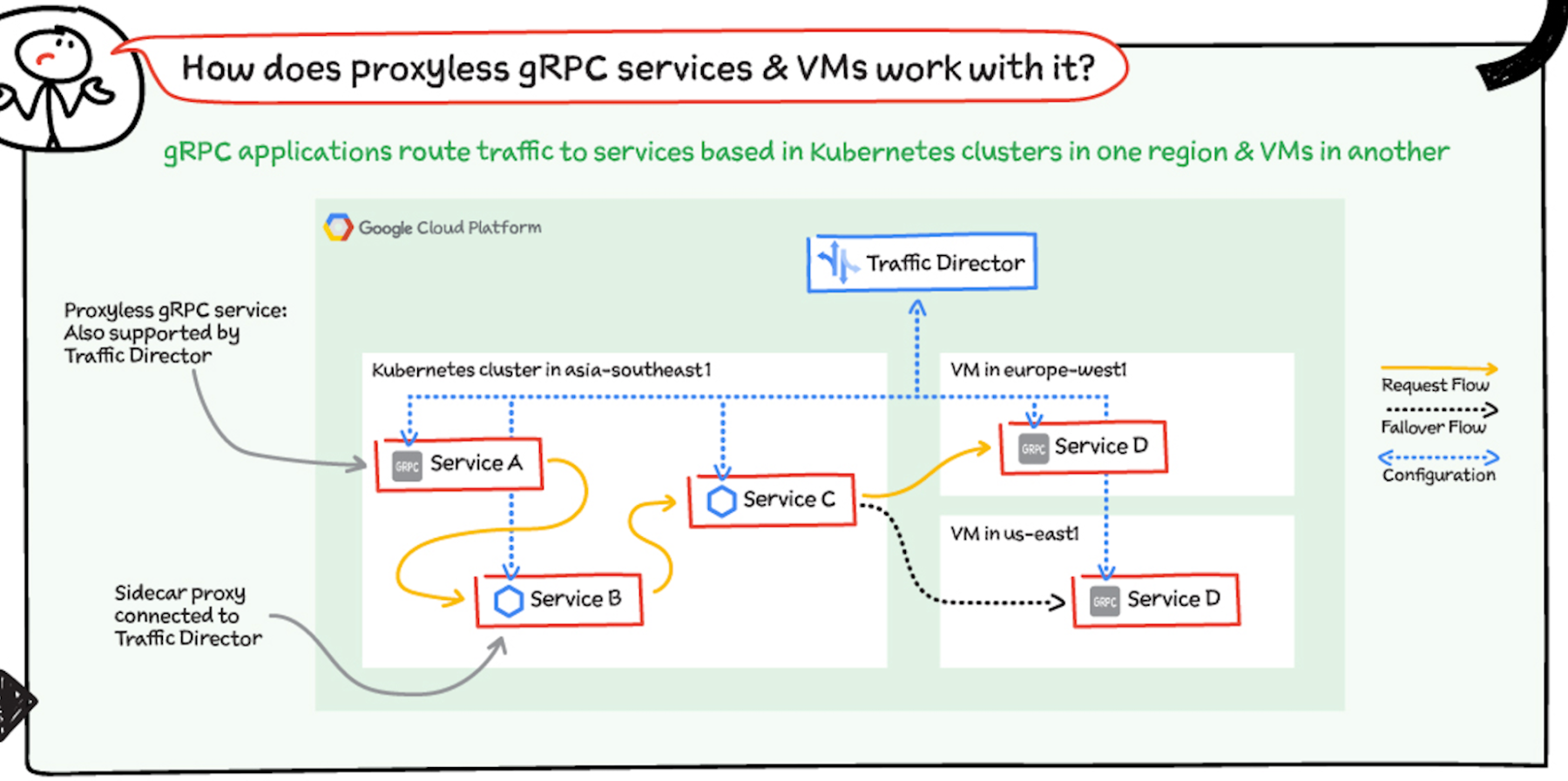

How does Traffic Director support proxyless gRPC and VMs?

Virtual machines: Traffic Director solves application networking for VM-based workloads alongside Kubernetes-based workloads. You simply add a flag to your Compute Engine VM instance template, and Google seamlessly handles the infrastructure setup, which includes installing and configuring the proxies that deliver application networking capabilities.

As an example, traffic can enter your deployment through External HTTP(S) Load Balancing, reach a service in a Kubernetes cluster in one region, and then be routed to another service running on a VM in a completely different region.

gRPC: With Traffic Director, you can easily bring application networking capabilities such as service discovery, load balancing, and traffic management directly to your gRPC applications. This functionality is built into the gRPC library itself, so no service proxy is required; that's why these are called proxyless gRPC applications. For more information, see Traffic Director and gRPC—proxyless services for your service mesh.
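In real gRPC libraries this relies on gRPC's xDS support: a client creates a channel with an `xds:///` target URI and reads a bootstrap file, located through the `GRPC_XDS_BOOTSTRAP` environment variable, that tells it where the control plane is. The stdlib-only sketch below does not use gRPC at all. It only shows where the load-balancing decision moves in the proxyless model: into the client process. `ToyXdsChannel` is a hypothetical name, and round-robin is an assumed policy chosen for simplicity.

```python
import itertools
import threading


class ToyXdsChannel:
    """Hypothetical stand-in for a gRPC client channel whose endpoint list
    comes from the control plane instead of a sidecar proxy."""

    def __init__(self, target):
        self.target = target  # e.g. "xds:///service-b"
        self._lock = threading.Lock()
        self._endpoints = itertools.cycle(())

    def update_endpoints(self, endpoints):
        # Called when the control plane pushes a new endpoint list.
        with self._lock:
            self._endpoints = itertools.cycle(list(endpoints))

    def pick(self):
        """Round-robin choice made inside the client process; None if empty."""
        with self._lock:
            return next(self._endpoints, None)


if __name__ == "__main__":
    ch = ToyXdsChannel("xds:///service-b")
    ch.update_endpoints(["10.0.1.5:50051", "10.0.2.7:50051"])
    print([ch.pick() for _ in range(3)])
    # ['10.0.1.5:50051', '10.0.2.7:50051', '10.0.1.5:50051']
```

Each call goes straight from the client to the chosen backend, so there is no extra proxy hop on the request path.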

For a more in-depth look at Traffic Director, check out this post and the documentation.

For more #GCPSketchnote, follow the GitHub repo. For similar cloud content, follow me on Twitter @pvergadia and keep an eye on thecloudgirl.dev.