Migrating your Data Warehouse to BigQuery? Make sure to unit test your BigQuery UDFs with Dataform

Michaella Schaszberger

Strategic Cloud Engineer

Daniel De Leo

Strategic Cloud Engineer

Try Google Cloud

Start building on Google Cloud with $300 in free credits and 20+ always free products.

Free trialWhen you’re migrating to BigQuery, you have a rich library of BigQuery native functions available to empower your analytics workloads. You can also extend this existing set of functions with your own user-defined functions (UDFs). Because we’re human and we all make mistakes, it’s a good idea to write unit tests to validate that your UDFs are behaving correctly. Dataform’s command line tool solves this need, enabling you to programmatically execute unit tests for all your UDFs.

Acquired by Google Cloud in 2020, Dataform provides a useful CLI tool to orchestrate the execution of SQL queries in BigQuery. Google Cloud’s Professional Services Organization open-sourced an example of how to use the Dataform CLI together with some template code to run unit tests on BigQuery UDFs. How much will it cost to run these tests? Nothing! Running your UDF unit tests with the Dataform CLI tool and BigQuery is free thanks to the following:

The open-sourced Dataform CLI tool is a free standalone tool that communicates only with BigQuery API.

BigQuery doesn’t charge for creating, replacing, or invoking UDFs.

Test data is provided as static values in the SQL queries that the Dataform CLI executes; no table data is scanned and no bytes are processed per query.

In the following sections, we’ll explain how you can run our example UDF unit tests and then how to start writing your own.

Run the UDF Unit Test Examples

The best way to see this testing framework in action is to go ahead and try it out yourself! The open-sourced example shows how to run several unit tests on the community-contributed UDFs in the bigquery-utils repo. Just follow these 4 simple steps:

1. Clone the bigquery-utils repo using either of the following methods:- Automatically clone the repo to your Google Cloud Shell by clicking here. (Recommended). A step-by-step tutorial will auto-launch to the right of your Cloud Shell. You can also manually launch the tutorial via the following command:

cloudshell launch-tutorial tutorial.md - Manually clone the repo and change into the correct directory by running the following:

git clone https://github.com/GoogleCloudPlatform/bigquery-utils.gitcd bigquery-utils/dataform/examples/dataform_udf_unit_test

2. Install the Dataform CLI tool: npm i -g @dataform/cli && dataform install

3. Generate the Dataform credentials file .df-credentials.json by running the following: dataform init-creds bigquery

You will be prompted to select the following:

Your dataset location (select ‘US’)

Your authentication method (select Application Default Credentials).

If you’re not running in the Cloud Shell, make sure to authenticate your environment using gcloud as shown below:gcloud auth application-default login- Your billing project ID (select the project in which you’ll run your queries).

4. Execute the unit tests by running the following: dataform test

Diving into the Details

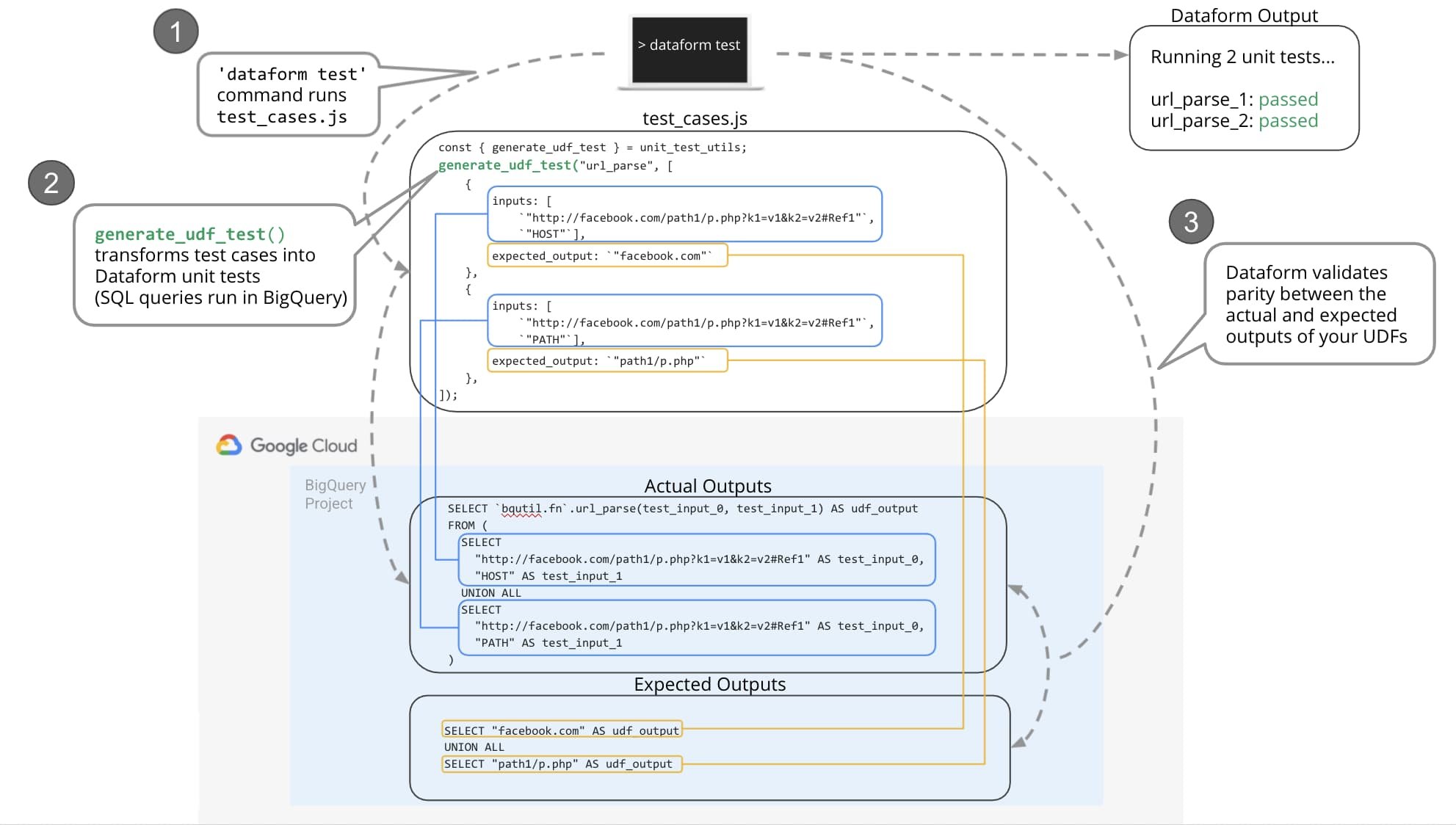

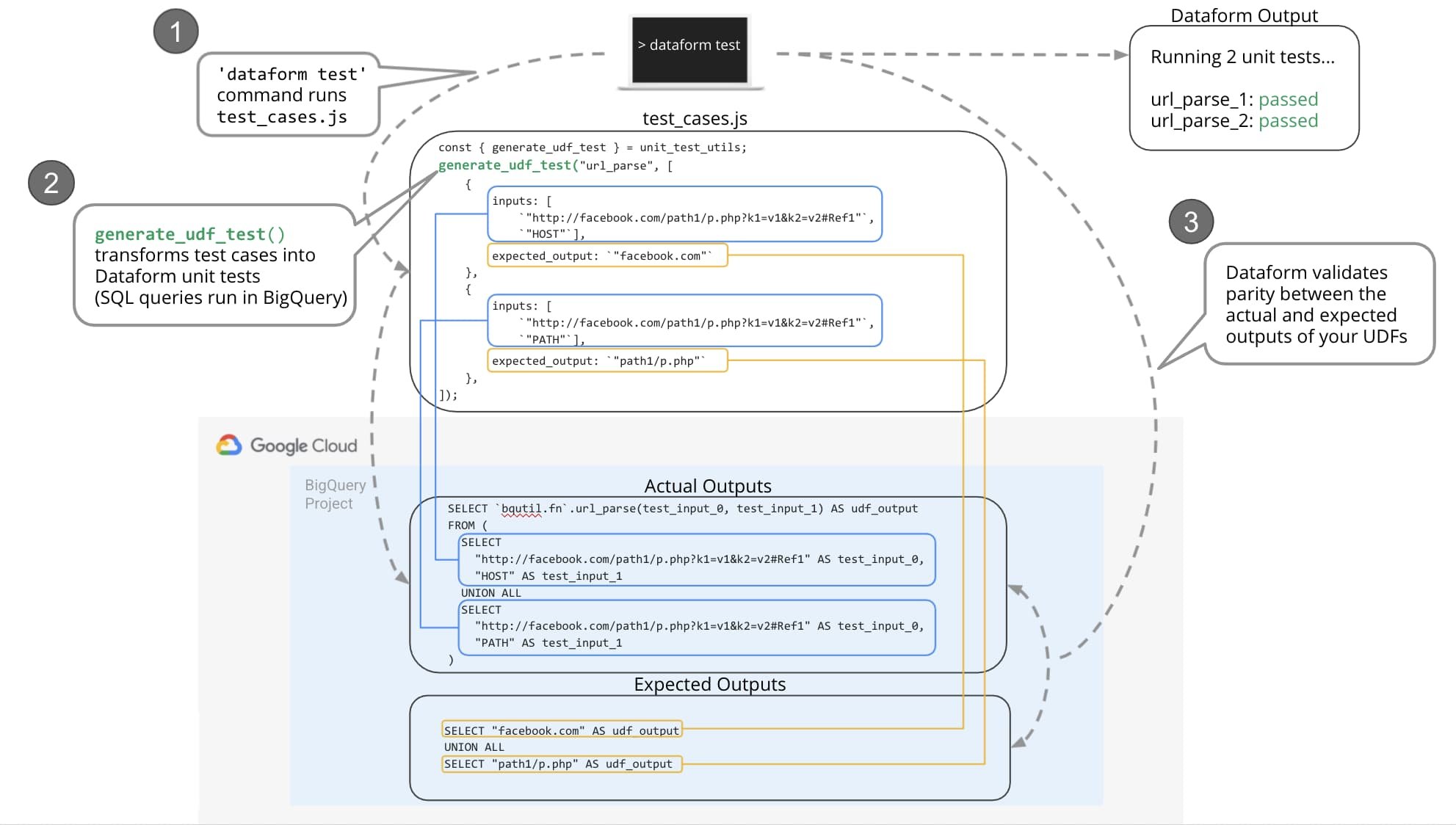

In the example provided, there is a file called test_cases.js that contains unit test inputs and expected outputs for the UDFs tested. Below is an excerpt from test_cases.js for the url_parse UDF which receives as inputs a URL and the part of the URL you want to extract, like the host or the path, and returns that specified part from the URL path.

At the top of the code snippet provided, you can see that unit_test_utils.js file exposes the generate_udf_test function. This function transforms the input(s) and expected output into the appropriate SELECT SQL statements to be run by the unit test. When you run the dataform test command, these SELECT SQL statements will be run in BigQuery. Then, Dataform will validate the output with your expectations by checking for parity between the results of the SELECT SQL statements.

If you want to look at what’s happening under the hood, navigate to your BigQuery console, then click the ‘Query History’ tab. Here, you can see the SQL queries created by the generate_udf_test function that Dataform executes in BigQuery. The following excerpt demonstrates these generated SELECT queries and how the input(s) provided in test_cases.js are passed as arguments to the UDF being tested.

The expected output you provide is then compiled into the following SELECT SQL statement which is used by Dataform to compare with the udf_output from the previous SQL statement:

When you run the dataform test command, dataform calls BigQuery to execute these SELECT SQL statements and checks for equality between the actual and expected output of these SQL queries.

The diagram above illustrates how the Dataform CLI uses the inputs and expected outputs in test_cases.js to construct and execute BigQuery SQL queries. Dataform then validates for parity between the actual and expected output of those queries.

Your Own UDF Unit Tests

You can easily write your own UDF unit tests by creating your own Dataform project directory structure and adding a test_cases.js file with your own test cases.

Follow these 5 steps to try it out:

1. While you’re still in the dataform_udf_unit_test directory, set the two environment variables below with your own values then create your Dataform project directory structure with the following commands:

2. Copy the includes/unit_test_utils.js file into your own includes/ directory, change into your new directory, and then create your credentials file (.df-credentials.json):

3. Create a new test_cases.js file:

4. Add an invocation of the generate_udf_test() function for the UDF you want to test. The generate_udf_test() function takes the following two positional arguments:

- The first argument is a string representing the name of the UDF you will test. You can either use the fully qualified UDF name (ex: bqutil.fn.url_parse) or just the UDF name (ex: url_parse). If you provide just the UDF name, the function will use the defaultDatabase and defaultSchema values from your dataform.json file.

- The second argument is an array of Javascript objects where each object holds the UDF positional inputs and expected output for a test case.

Note: If your UDF accepts inputs of different data types, you will need to group your test cases by input data types and create a separate invocation of generate_udf_test case for each group of test cases. Refer to the json_typeof UDF in the test_cases.js for an example of this implementation.

5. Run your unit tests to see if your UDF behaves as expected: dataform test

Now that you know how to run the open-sourced example, as well as how to create and configure your own unit tests using the CLI tool, you are ready to incorporate this testing strategy into your CI/CD pipelines to deploy and test UDFs in BigQuery.

Acknowledgements

Special thanks to Dan Lee and Ben Birt for the continual feedback and guidance which made this blog post and testing framework possible.