Cloud Run is now one year old: a look back

Steren Giannini

Director, Product Management

Ahmet Alp Balkan

Senior Developer Advocate

Cloud Run is built on a simple premise: combine the flexibility of containers with the simplicity, scalability, and productivity of serverless. In a few clicks, you can use Cloud Run to continuously deploy your Git repository to a fully managed environment that autoscales your containers behind a secure HTTPS endpoint. And because Cloud Run is fully managed, there’s no infrastructure management, so you can focus on delivering your applications quickly.

Cloud Run has been generally available (GA) for a full year now! Here's a recap of how Cloud Run has evolved in that time.

Enterprise ready

We've been hard at work expanding Cloud Run to 21 regions and are on track to be available in all the remaining Google Cloud regions by the end of the year.

Cloud Run services can now connect to resources with private IPs or use Cloud Memorystore Redis and Memcached by using Serverless VPC connectors, which support shared VPCs so you can connect to resources on-premises or in different projects. By routing all egress through the VPC, you benefit from a static outbound IP address for traffic originating from Cloud Run, which can be useful for making calls to external services that only allow certain IP ranges.

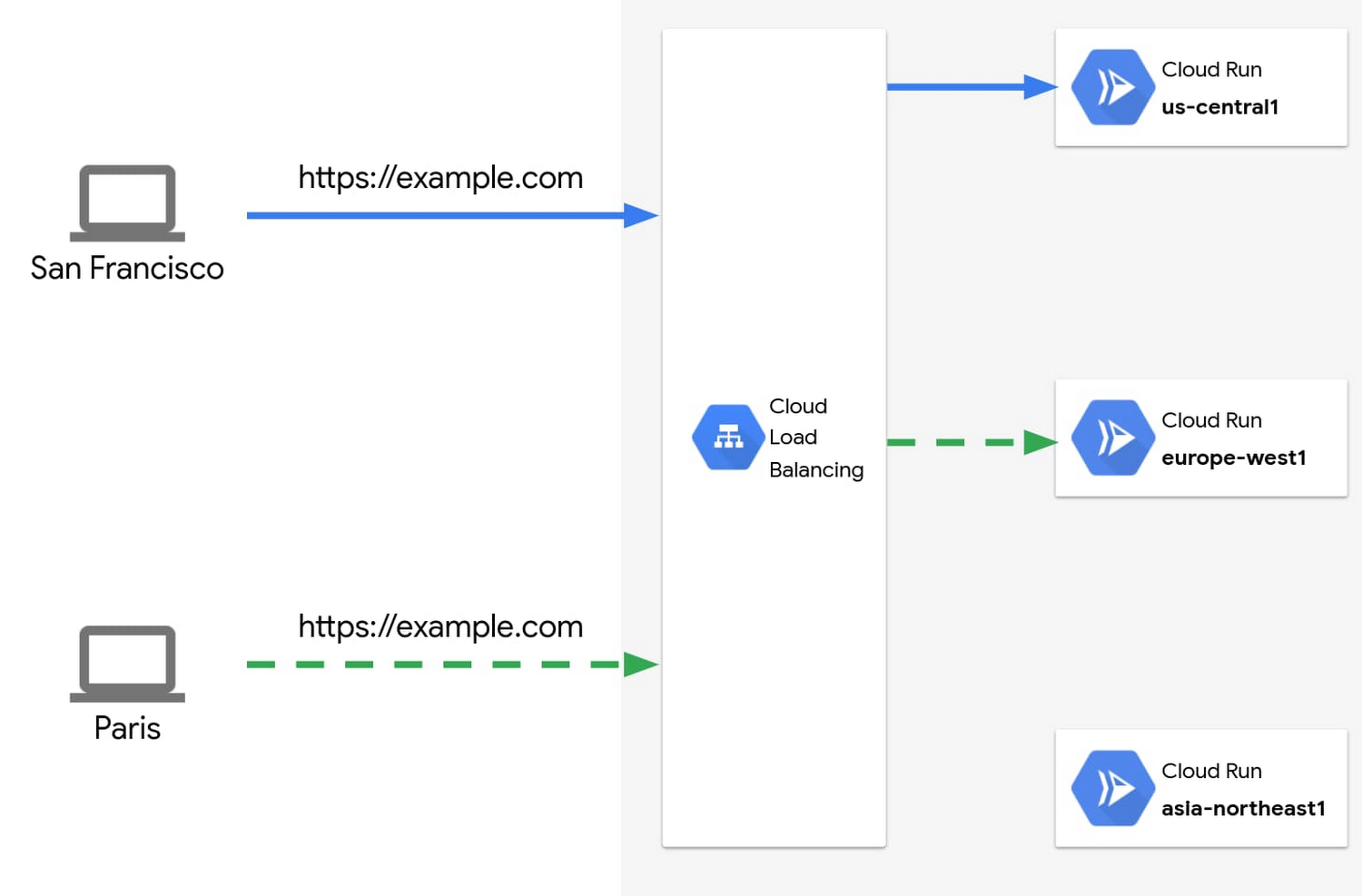

You can now also harness the power of Cloud Load Balancing with Cloud Run: bring your own TLS certificates, specify which SSL/TLS versions you accept, or configure custom URL-based routing on your load balancer to serve content from different backends.

Global Load Balancing also enables you to run globally-distributed applications by serving traffic from multiple regions, serve static content cached on the edge via Cloud CDN, or protect your endpoints with the Cloud Armor web application firewall.

With API Gateway support, you can build APIs for your customers and run them on Cloud Run without having to implement authentication and other common concerns around hosting APIs.

With gradual rollouts and rollbacks, you can now safely release new revisions of your Cloud Run services by controlling the percentage of traffic sent to each revision, and testing specific revisions behind dedicated URLs.

Developer friendly

As developers ourselves, we are proud to work on a product that is so well received by the developer community.

Novice users are able to build and deploy an app on their first try in less than 5 minutes. It's so fast and easy that anyone can deploy multiple times a day. We love hearing your stories about how Cloud Run makes you more productive, so you can ship code faster.

In the past year, we added a number of features to improve developer productivity:

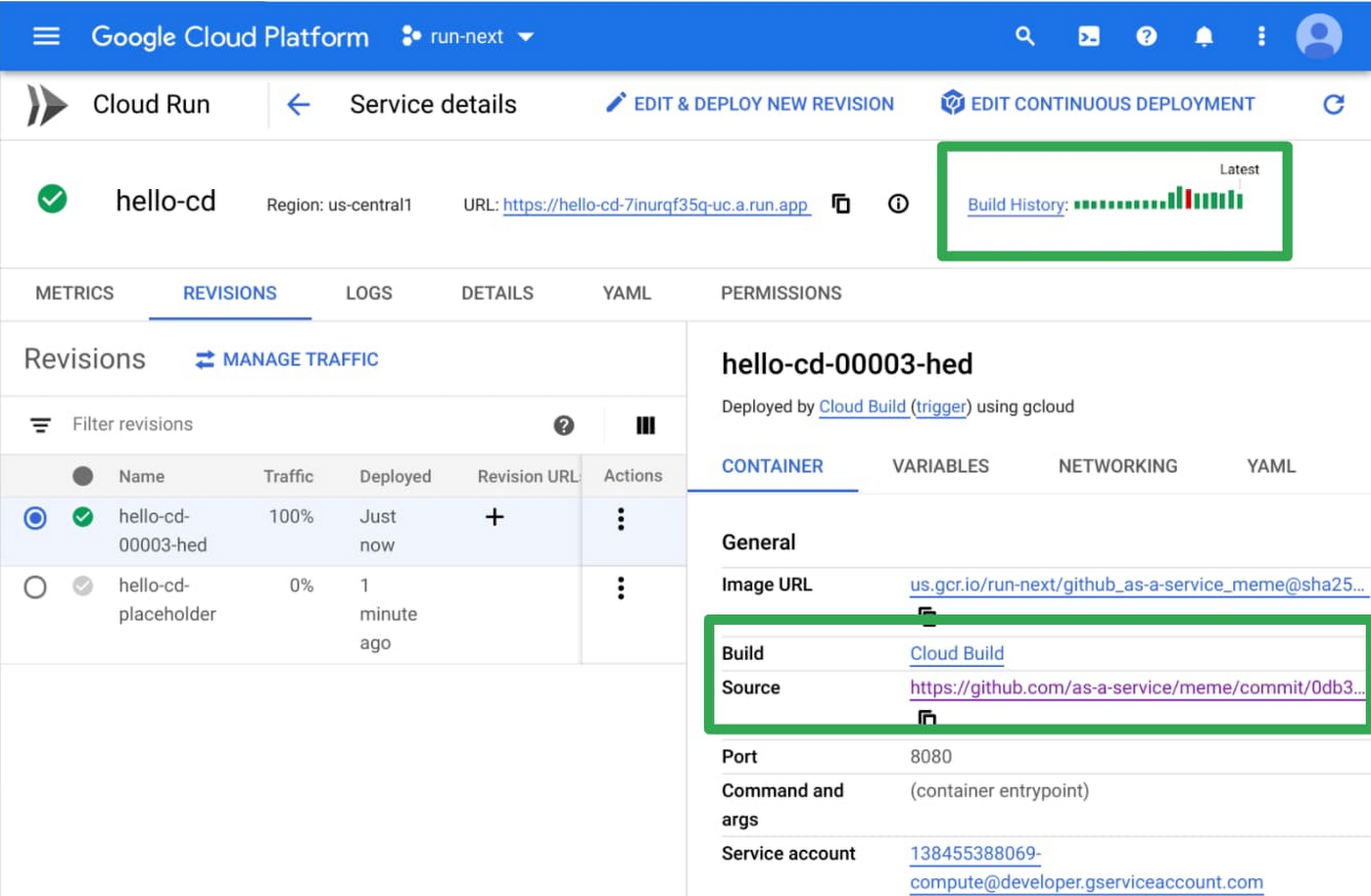

We added an easy user interface to set up Continuous Deployment from Git repositories. Every time you push a commit to a particular branch or tag a new release, it’s automatically built and deployed to Cloud Run using Cloud Build.

We also made it easier to develop applications. You can now deploy and run Cloud Run applications locally using Cloud Code. If you’re just getting started, Cloud Code can create a new application template for you, and you can run or debug your code locally in the emulator.

Hate writing Dockerfiles? With Google Cloud Buildpacks, you can now turn your application code directly into a container image for supported languages. This is great if you’re bringing an existing application to Cloud Run. Similarly, buildpacks let you convert your Cloud Functions to container images, thanks to the Functions Framework.

Then, to help you monitor the performance of your services and easily identify latency issues, requests sent to Cloud Run services are now captured out-of-the-box in Cloud Trace. If you want to do distributed tracing between your services, all you need to do is forward the trace header you receive on the incoming request to your outgoing requests, and the trace spans will automatically correlate.
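To make the header forwarding concrete, here is a minimal Python sketch. It assumes the trace context arrives in the `X-Cloud-Trace-Context` header (or the W3C `traceparent` header); neither name is spelled out in this post, and the helper `outgoing_trace_headers` is a hypothetical name used only for illustration.

```python
# Header names are an assumption: this post only says "the trace header".
TRACE_HEADERS = ("X-Cloud-Trace-Context", "traceparent")


def outgoing_trace_headers(incoming_headers):
    """Return the trace headers to attach to an outgoing request.

    `incoming_headers` is any mapping of header name to value taken from
    the request your service is currently handling.
    """
    # HTTP header names are case-insensitive, so compare in lowercase.
    lowered = {name.lower(): value for name, value in incoming_headers.items()}
    forwarded = {}
    for name in TRACE_HEADERS:
        value = lowered.get(name.lower())
        if value:
            forwarded[name] = value
    return forwarded
```

You would merge the returned dictionary into the headers of whatever HTTP client call you make to the downstream service.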

Cloud Run can already respond to requests and privately and securely process messages pushed by Pub/Sub. We also added the ability to trigger Cloud Run services from 60+ Google Cloud sources. And for more powerful orchestration, you can leverage Workflows to automate and orchestrate processing using Cloud Run.
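As a rough illustration of processing a pushed Pub/Sub message, the sketch below decodes a push request body. It assumes the usual Pub/Sub push envelope: a JSON object with a `message` field whose `data` is base64-encoded. The function name `decode_pubsub_push` and the shape of the returned dictionary are hypothetical choices for this example.

```python
import base64
import json


def decode_pubsub_push(body: bytes) -> dict:
    """Decode the JSON envelope of a Pub/Sub push request body."""
    envelope = json.loads(body)
    message = envelope["message"]
    # The payload is base64-encoded inside the envelope; it may be absent
    # when a message carries attributes only.
    payload = base64.b64decode(message.get("data", "")).decode("utf-8")
    return {
        "id": message.get("messageId"),
        "data": payload,
        "attributes": message.get("attributes", {}),
    }
```

Your request handler would call this with the raw request body and then return a success status code to acknowledge the message.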

Flexibility

We’re constantly pushing the limits of Cloud Run, so you can run more workloads in a fully managed environment. You can now allocate up to 4 GB of memory and 4 CPUs to your container!

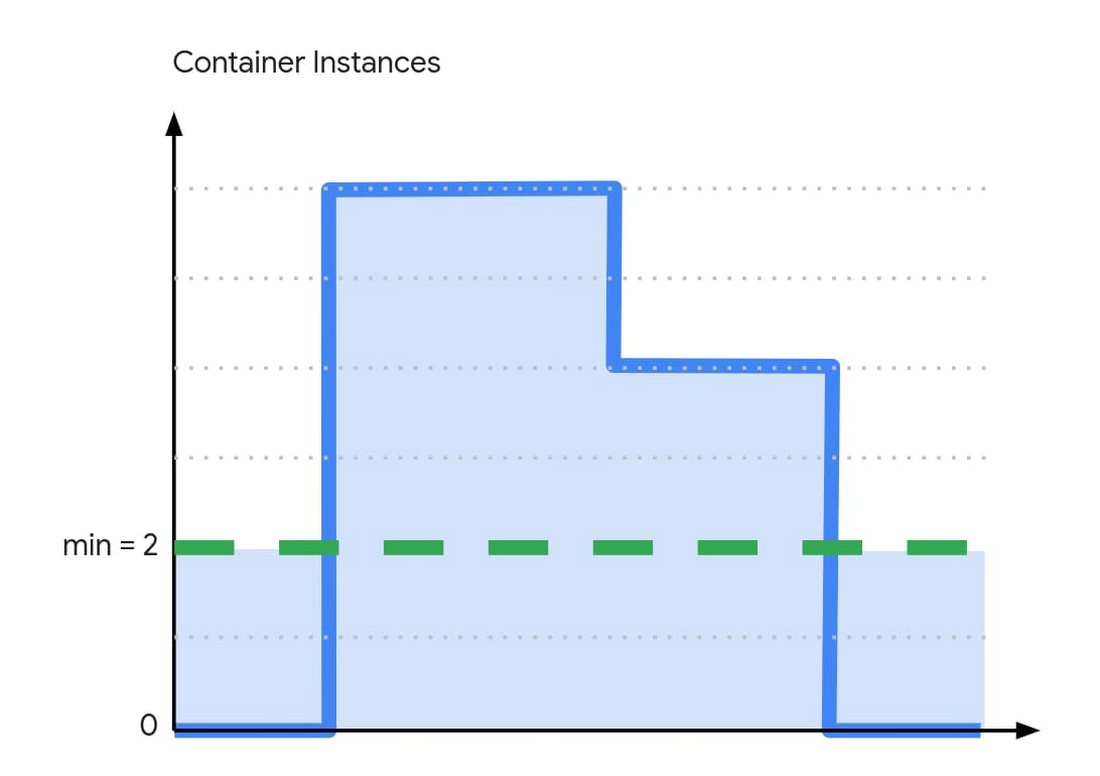

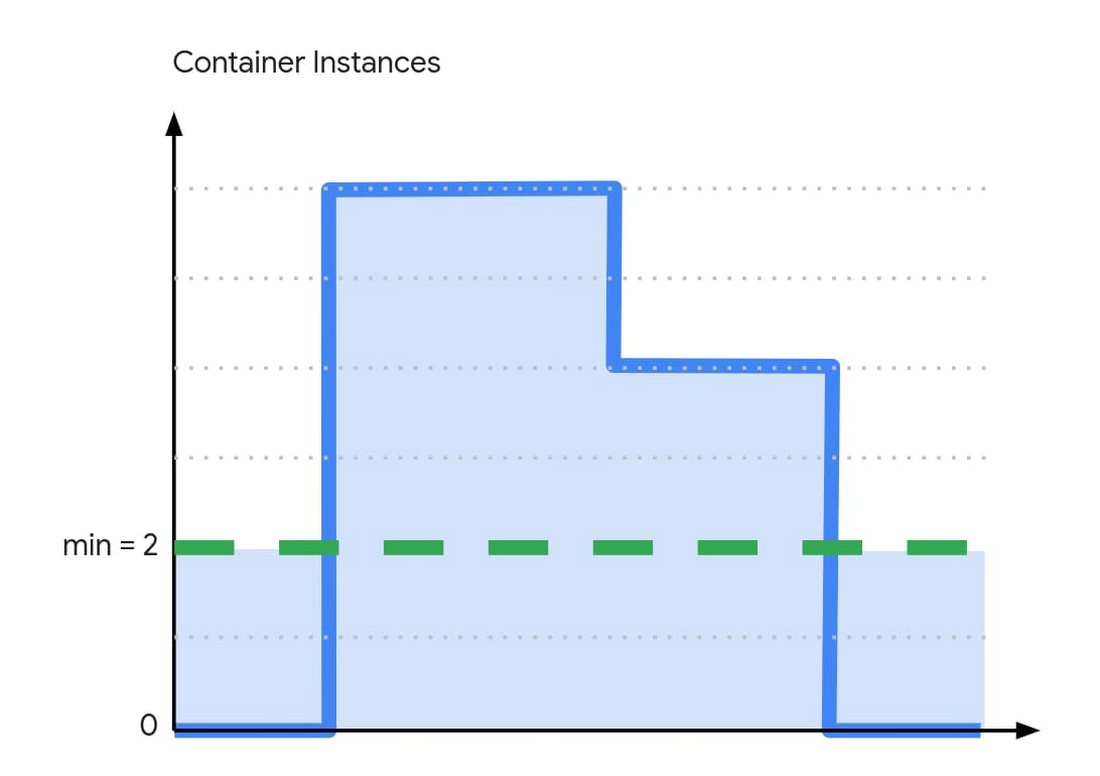

We also heard you want to minimize "cold starts," notably when scaling from zero. To help, we added the ability to keep a minimum number of warm container instances ready to handle requests without incurring a cold start. These instances are billed at a lower rate while idle than when they’re handling requests. If cold starts are bothering you, give this a try.

gRPC and server-side streaming let you reduce the time-to-first-byte of services by letting you send partial responses as they are computed, and write applications that stream data from the server side. With this capability, you are no longer limited to 32MB responses and you can implement server-sent events (SSE).
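For a sense of what server-sent events look like on the wire, here is a small Python sketch of a generator that formats items as SSE frames. The function name `sse_events` is hypothetical, and the sketch assumes your web framework can stream a generator as the response body.

```python
import json
from typing import Iterable, Iterator


def sse_events(items: Iterable[dict]) -> Iterator[bytes]:
    """Yield each item as one server-sent event frame.

    An SSE frame is a `data:` line followed by a blank line.
    """
    for item in items:
        yield f"data: {json.dumps(item)}\n\n".encode("utf-8")
```

You would return this generator as a streaming response with the `Content-Type: text/event-stream` header, so each partial result reaches the client as soon as it is computed.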

With one-hour request timeouts, a single request can now run up to an hour. Combined with server-side streaming, you can now stream large responses (such as documents or videos) or handle long-running tasks from Cloud Run.

Finally, graceful instance termination sends your process a termination signal (SIGTERM) before Cloud Run scales down your container instance. This gives you the opportunity to flush any locally buffered telemetry data, close open connections, release locks, and so on.
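Handling the signal could look something like the Python sketch below. `flush_telemetry` is a hypothetical placeholder for your own cleanup work, and `cleanup_log` exists only so the example has something observable to record.

```python
import signal
import threading

# Set once SIGTERM arrives, so request loops can stop taking new work.
shutting_down = threading.Event()
cleanup_log = []


def flush_telemetry():
    """Hypothetical placeholder: send buffered telemetry before exit."""
    cleanup_log.append("telemetry flushed")


def handle_sigterm(signum, frame):
    """Run cleanup when the platform asks the process to terminate."""
    shutting_down.set()
    flush_telemetry()


def install_handler():
    # signal.signal must be called from the main thread.
    signal.signal(signal.SIGTERM, handle_sigterm)


if __name__ == "__main__":
    install_handler()
```

Keep the handler short: it should finish its cleanup quickly so the process can exit before the instance is shut down.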

What's next for Cloud Run?

It was a great first year for Cloud Run, but this is just the beginning. We’re working hard to improve Cloud Run so you can use it for more and more diverse workloads. At the same time, we’re still laser-focused on providing you with a delightful developer experience and addressing key enterprise requirements. Stay tuned for more exciting releases, like mounting secrets from Cloud Secret Manager, integration with Identity-Aware Proxy, bidirectional streaming and WebSockets.

To follow past and future Cloud Run features, take a spin through the release notes. And to learn more about these new features, check out this video. To help shape the future of Cloud Run, participate in our research studies.