Introducing HTTP/gRPC server streaming for Cloud Run

Ahmet Alp Balkan

Senior Developer Advocate

Daniel Conde

Product Manager

We are excited to announce the availability of server-side HTTP streaming for your serverless applications running on Cloud Run (fully managed). With this enhanced networking capability, your Cloud Run services can serve larger responses or stream partial responses to clients during the span of a single request, enabling quicker server response times for your applications.

With this addition, Cloud Run can now:

Send responses larger than the previous 32 MB limit.

Run gRPC services with server-streaming RPCs and send partial responses in a single request—in addition to existing support for unary (non-streaming) RPCs.

Respond with server-sent events (SSE), which you can consume from your frontend using the HTML5 EventSource API.
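As a rough illustration of the server-sent events case, here is a minimal sketch that uses only the Python standard library. The `format_sse` helper and the `EventsHandler` class are hypothetical names chosen for this example, and the sketch assumes the container listens on the port given in the `PORT` environment variable. It is not taken from an official sample.

```python
import os
import time
from http.server import BaseHTTPRequestHandler, ThreadingHTTPServer
from typing import Optional


def format_sse(data: str, event: Optional[str] = None) -> str:
    """Encode one message in the text/event-stream wire format."""
    lines = []
    if event is not None:
        lines.append(f"event: {event}")
    # Each line of the payload needs its own "data:" field.
    for part in data.split("\n"):
        lines.append(f"data: {part}")
    # A blank line terminates the event.
    return "\n".join(lines) + "\n\n"


class EventsHandler(BaseHTTPRequestHandler):
    """Hypothetical handler that streams three events, one per second."""

    def do_GET(self):
        self.send_response(200)
        self.send_header("Content-Type", "text/event-stream")
        self.send_header("Cache-Control", "no-cache")
        self.end_headers()
        for i in range(3):
            # Write and flush each event as soon as it is ready,
            # instead of buffering the whole response.
            self.wfile.write(format_sse(f"tick {i}").encode("utf-8"))
            self.wfile.flush()
            time.sleep(1)


if __name__ == "__main__":
    # Assumption: the listening port is supplied through PORT.
    port = int(os.environ.get("PORT", "8080"))
    ThreadingHTTPServer(("", port), EventsHandler).serve_forever()
```

Because the events in this sketch carry no `event:` field, a browser client would receive them through the `onmessage` handler of an `EventSource` object.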

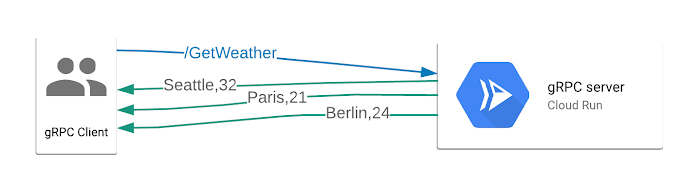

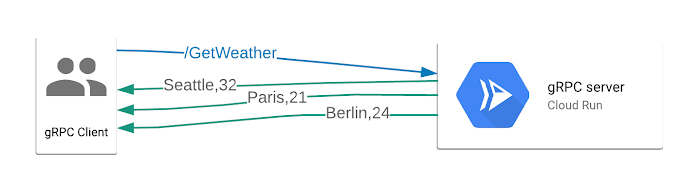

Streaming responses let your application send partial responses to clients as they become available, which makes your applications and websites more responsive. Without streaming support, the entire response must be computed before any of it can be sent to the client, which increases the time to first byte (TTFB) of your applications. In the accompanying diagram, three partial responses are sent incrementally to the gRPC client.
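The difference in ordering can be sketched with a small, self-contained simulation. Everything here is hypothetical and for illustration only: `compute_parts` stands in for an application that produces three partial results, and the `write` callback stands in for sending bytes to the client. No network is involved.

```python
from typing import Callable, Iterator, List


def compute_parts(n: int, log: List[str]) -> Iterator[bytes]:
    """Hypothetical workload: produce n partial results one at a time."""
    for i in range(n):
        log.append(f"compute {i}")
        yield f"part {i}\n".encode("utf-8")


def send_buffered(parts: Iterator[bytes], write: Callable[[bytes], None]) -> None:
    """Without streaming: compute the full body, then write it once."""
    write(b"".join(parts))


def send_streamed(parts: Iterator[bytes], write: Callable[[bytes], None]) -> None:
    """With streaming: write each part as soon as it is available."""
    for part in parts:
        write(part)


def trace(send: Callable[[Iterator[bytes], Callable[[bytes], None]], None]) -> List[str]:
    """Record the order of compute and write steps for one strategy."""
    log: List[str] = []
    send(compute_parts(3, log), lambda chunk: log.append(f"write {len(chunk)}B"))
    return log


if __name__ == "__main__":
    print(trace(send_buffered))
    # ['compute 0', 'compute 1', 'compute 2', 'write 21B']
    print(trace(send_streamed))
    # ['compute 0', 'write 7B', 'compute 1', 'write 7B', 'compute 2', 'write 7B']
```

In the buffered trace, the first write happens only after all three parts are computed. In the streamed trace, the first write follows the first computation, which is what lowers the time to first byte.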

Here are some example use cases for server-side HTTP streaming:

Streaming large files (such as videos) from your serverless applications

Long-running calculations that report their progress to the client as they run

Batch jobs that can return intermediate or batched responses

Let's try it: Serverless gRPC streaming

We've developed a sample gRPC server application that you can deploy to your Google Cloud account in a single click. You can then run a client to stream responses from the Cloud Run-based serverless application.

Streaming support in Cloud Run brings significant performance improvements to a certain class of applications, and this is just the beginning. Stay tuned as we work hard to enable more features in Cloud Run.

Try out streaming support in Cloud Run and let us know what you think on Stack Overflow or on our issue tracker.