Global HTTP(S) Load Balancing and CDN now support serverless compute

Babi Seal

Product Manager, Google Cloud

Daniel Conde

Product Manager

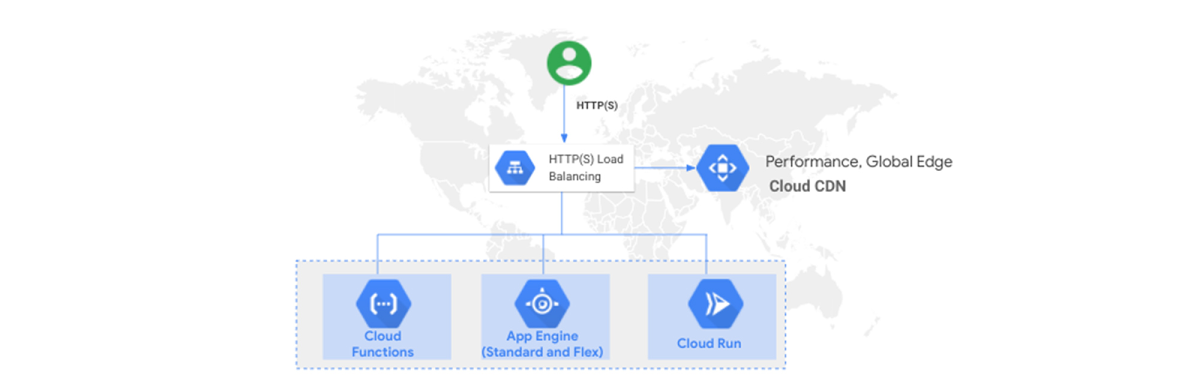

Many of you choose to develop serverless applications on Google Cloud so that you don’t have to worry about provisioning and managing the underlying infrastructure. In addition, serverless applications scale on demand, so you pay only for what you use. But historically, serverless products like App Engine used a different HTTP load balancing system than VM-based products like Compute Engine. Today, with the new External HTTP(S) Load Balancing integration, serverless offerings like App Engine (standard and flex), Cloud Functions and Cloud Run can use the same fully featured, enterprise-grade HTTP(S) load balancing capabilities as the rest of Google Cloud.

With this integration, you can now assign a single global anycast IP address to your service, manage its certificates and TLS configuration, integrate with Cloud CDN, and, for Cloud Run and Cloud Functions, load balance across regions. Over the coming months, we will continue to add features like support for Cloud Identity-Aware Proxy (IAP) and Cloud Armor.

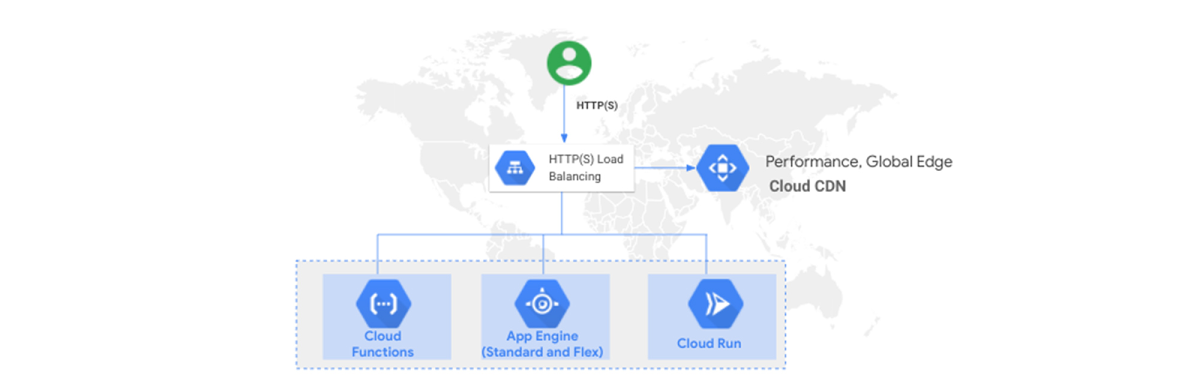

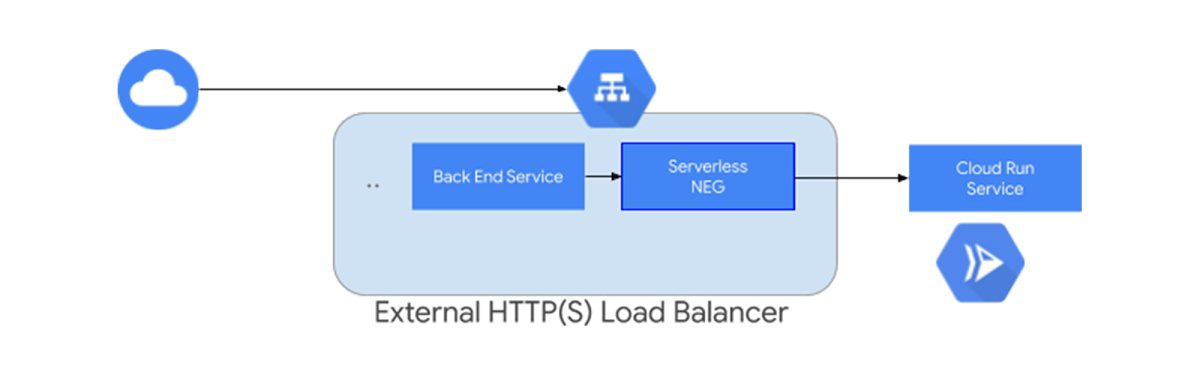

Introducing serverless Network Endpoint Groups

These new capabilities are courtesy of a foundational feature of Google Cloud networking and load balancing: network endpoint groups (NEGs). These collections of network endpoints are used as backends for some load balancers to define how a set of endpoints should be reached, whether they can be reached, and where they’re located. Google Cloud HTTP(S) Load Balancing already supports a number of different types of NEGs, like internet NEGs and Compute Engine zonal NEGs, and today, we’re expanding this list to include serverless NEGs, which allow External HTTP(S) Load Balancing to use App Engine, Cloud Functions, and Cloud Run services as backends.

Adding serverless NEGs to External HTTP(S) Load Balancer brings a whole host of benefits to your serverless workloads. Let’s take a deeper look.

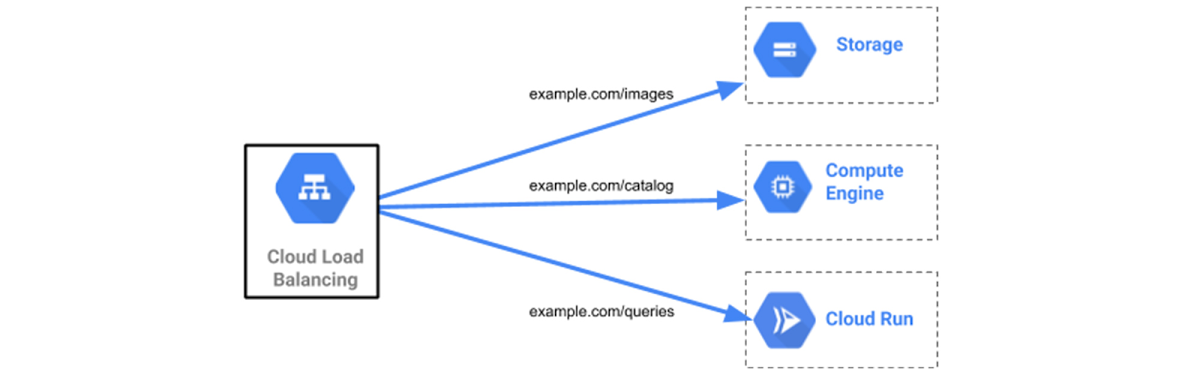

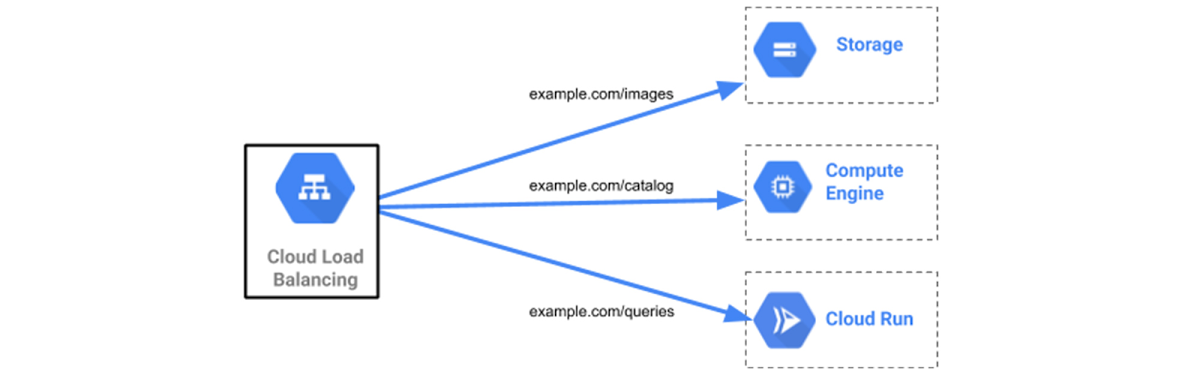

Seamless integration with other services

One of the key features of Google Cloud HTTP(S) Load Balancing is load balancing across multiple, different backend types, and the integration of serverless NEGs improves on that: the load balancer’s URL map can now mix serverless NEGs with other backend types. For example, your external IPv4 and IPv6 clients can request video, API, and image content by using the same base URL, with the paths /catalog, /queries, /video, and /images. The load balancer’s URL map routes requests for each path to a different backend:

/queries -> a backend service with a serverless NEG backend

/catalog -> a backend service with a VM instance group or a zonal NEG backend

/images -> a backend bucket with a Cloud Storage backend

/video -> a backend service that points to an internet NEG containing an external endpoint that is located on-premises outside of Google Cloud
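To make the routing idea concrete, here is a minimal sketch of path-based routing in Python. It is not how the load balancer is implemented, and real URL maps also involve host rules and path matchers. The backend names and the default backend are hypothetical; only the four path prefixes come from the example above.

```python
# Path prefixes from the example above; backend names are hypothetical labels.
URL_MAP = {
    "/queries": "serverless-neg-backend-service",
    "/catalog": "vm-or-zonal-neg-backend-service",
    "/images": "cloud-storage-backend-bucket",
    "/video": "internet-neg-backend-service",
}

# Hypothetical fallback for paths that match no prefix.
DEFAULT_BACKEND = "default-backend-service"


def route(path: str) -> str:
    """Return the backend for a request path using longest-prefix matching."""
    best = None
    for prefix in URL_MAP:
        # Match the prefix itself or anything below it, but not "/imagesX".
        if path == prefix or path.startswith(prefix + "/"):
            if best is None or len(prefix) > len(best):
                best = prefix
    return URL_MAP[best] if best is not None else DEFAULT_BACKEND
```

With this sketch, a request for /queries/search would go to the serverless NEG backend, while /images/logo.png would be served from the Cloud Storage backend bucket.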

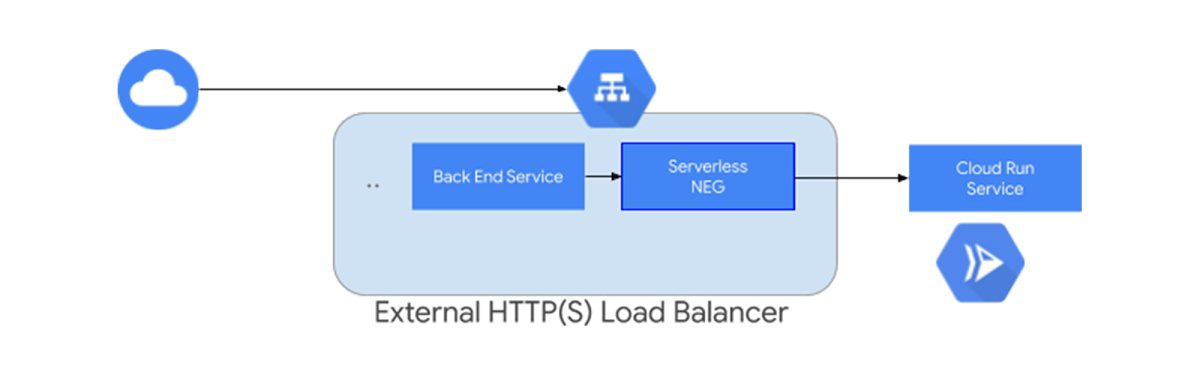

Multi-region availability for Cloud Run and Cloud Functions

By integrating our serverless offerings with HTTP(S) Load Balancer, you can now deploy Cloud Run and Cloud Functions services in multiple regions. Using Cloud Run as an example, a load balancer configured with the Premium Network Service Tier and a single forwarding rule will deliver each request to the backend Cloud Run service closest to your clients. Serving requests from multiple regions helps improve both your service’s availability and its response latency. Requests land in the region closest to your users. However, if that region is unavailable or short on capacity, the request will be routed to a different region.

Enabling this capability is as simple as deploying Cloud Run services to different regions, setting up multiple serverless NEGs and connecting the load balancer to global backend services.
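The routing behavior described above (closest region first, another region if the closest is unavailable or short on capacity) can be sketched as a small Python model. This is an illustration under stated assumptions, not the load balancer’s actual algorithm: the `RegionalBackend` type, its fields, and the use of a single latency number to stand in for "closest" are all hypothetical.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class RegionalBackend:
    """Hypothetical model of one regional serverless backend."""
    region: str
    latency_ms: float          # assumed client-to-region latency
    healthy: bool = True       # False models an unavailable region
    has_capacity: bool = True  # False models a region short on capacity


def pick_backend(backends: List[RegionalBackend]) -> Optional[RegionalBackend]:
    """Pick the lowest-latency backend that is healthy and has capacity.

    Returns None when no backend can take the request.
    """
    candidates = [b for b in backends if b.healthy and b.has_capacity]
    if not candidates:
        return None
    return min(candidates, key=lambda b: b.latency_ms)
```

For a client near us-central1, `pick_backend` returns that region while it is healthy and has capacity, and falls back to the next-closest region otherwise.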

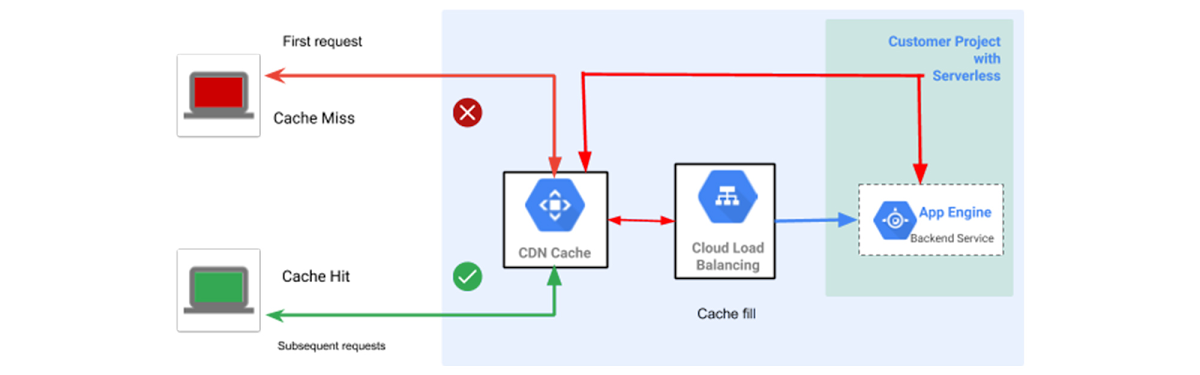

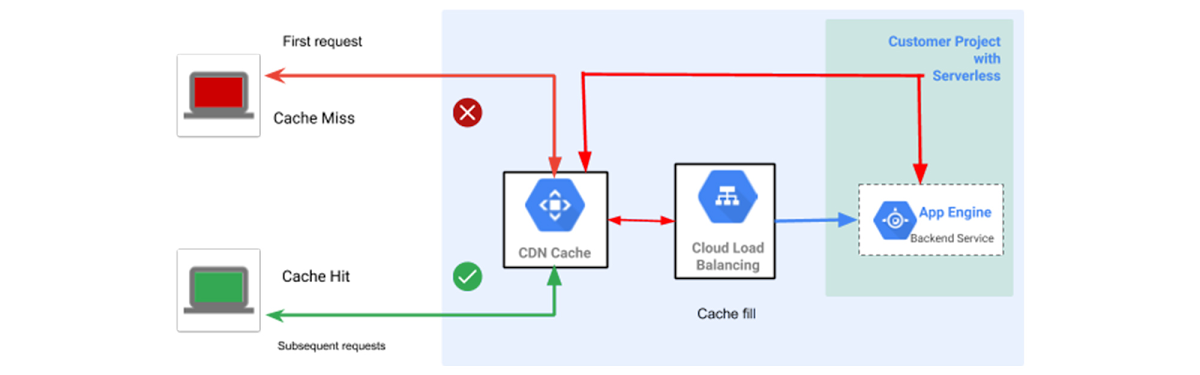

Better performance with Cloud CDN

Cloud Run, Cloud Functions and App Engine can have Cloud CDN enabled just like a Cloud Storage bucket or non-GCP backend (custom origin).

You can use the HTTP(S) Load Balancer to route requests for static content to your Cloud Storage bucket, and requests from your web users or API clients to your serverless backend. This lets you make the most of our CDN, which serves frequently accessed content quickly and close to users from Google’s globally distributed edge.

Alternatively, you can route all traffic to your serverless service (e.g. Cloud Run), and determine what content to cache on a per-response basis by returning the appropriate "Cache-Control" header. This can be great if you’re serving a public REST API from Cloud Run, and want to offload the most commonly accessed endpoints to Cloud CDN.
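As a rough illustration of the per-response approach, the sketch below picks a "Cache-Control" value for each response of a public REST API. The endpoint paths and max-age values are hypothetical, and the function is framework-agnostic: you would attach its return value to the response in whatever web framework your service uses.

```python
# Hypothetical read-heavy endpoints and how long (in seconds) to cache them.
CACHEABLE_PATHS = {
    "/v1/products": 3600,
    "/v1/categories": 86400,
}


def cache_control_for(path: str, method: str = "GET") -> str:
    """Return a Cache-Control header value for a response.

    Only GET responses for known public endpoints are marked cacheable;
    everything else is marked as not cacheable by shared caches.
    """
    if method == "GET" and path in CACHEABLE_PATHS:
        return f"public, max-age={CACHEABLE_PATHS[path]}"
    return "private, no-store"
```

Marking only selected endpoints as `public` keeps user-specific responses out of the cache while the commonly requested ones are offloaded to the CDN.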

To use Cloud CDN with your serverless origin, simply enable Cloud CDN on the backend service that contains your serverless NEG.

SSL certificates and SSL policy management

Both SSL certificate management and SSL policies are now available to you for use with serverless applications. It is now easier than ever to specify a minimum TLS version and ciphers for authenticating and encrypting traffic to your serverless applications.
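To show what a minimum TLS version means in practice, here is a toy Python model of version negotiation. It is a simplification, not the load balancer’s handshake logic: the `TlsVersion` enum and `negotiate` function are hypothetical, cipher selection is ignored, and the server is assumed to support every version at or above the policy minimum.

```python
from enum import IntEnum
from typing import Iterable, Optional


class TlsVersion(IntEnum):
    """Hypothetical ordering of TLS versions for comparison."""
    TLS_1_0 = 10
    TLS_1_1 = 11
    TLS_1_2 = 12
    TLS_1_3 = 13


def negotiate(min_version: TlsVersion,
              client_versions: Iterable[TlsVersion]) -> Optional[TlsVersion]:
    """Return the highest client-supported version that meets the minimum.

    Returns None when the client supports nothing at or above the minimum,
    which models a rejected handshake.
    """
    allowed = [v for v in client_versions if v >= min_version]
    return max(allowed) if allowed else None
```

Under a policy with a minimum of TLS 1.2, a client that offers only TLS 1.0 and 1.1 is rejected, while a client that offers TLS 1.1 and 1.2 connects with TLS 1.2.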

The integration of HTTP(S) Load Balancing with our serverless platform also allows you to reuse the same SSL certificates and private keys that you use for your domain traffic for Compute Engine, Cloud Storage and Google Kubernetes Engine (GKE). This eliminates the need to manage separate certificates.

What’s next?

The addition and integration of serverless NEGs with HTTP(S) Load Balancing helps enterprise customers leverage our serverless compute platforms and integrate them with their existing workloads across Google Cloud. It provides higher availability for Cloud Run, better performance with Cloud CDN and seamless integration with Cloud Storage and other services.

But we’re not done yet. With Cloud Armor integration, for example, serverless applications will get DDoS mitigation and a Web Application Firewall. And with Cloud IAP integration, serverless applications will get the benefits of BeyondCorp and its zero-trust architecture, including authentication and authorization based on identity and context, enabling a rich set of policies. We’re also improving Cloud Run and App Engine to allow you to restrict direct access, so that clients can only access your serverless backends via the HTTP(S) Load Balancer.

To learn more and get started, please check out the following documentation. We’d love your feedback on these new capabilities.