Extended retention for custom and Prometheus metrics in Cloud Monitoring

Rory Petty

Cloud Monitoring Product Manager

Shishi Chen

Cloud Monitoring Software Engineer

Metrics help you understand how your business and applications are performing. Longer metric retention enables quarter-over-quarter or year-over-year analysis and reporting, forecasting seasonal trends, retention for compliance, and much more. We recently announced the general availability (GA) of extended metric retention for custom and Prometheus metrics in Cloud Monitoring, increasing retention from 6 weeks to 24 months. Extended retention for custom and Prometheus metrics is enabled by default.

Longer retention is particularly useful for organizations in financial services, retail, healthcare, and media. For example, a finance team could use the extended data to forecast seasonal trends and decide how many Compute Engine instances to reserve ahead of Black Friday. Similarly, a DevOps team could use year-over-year data to inform a scaling plan for Cyber Monday.

To keep charting fast, Cloud Monitoring stores metric data at its original sampling frequency for 6 weeks, then downsamples it to 10-minute intervals for extended storage. This lets you view extended retention metrics while still querying with high performance. Extended retention comes at no additional cost: Cloud Monitoring charges are based on the volume ingested for specific metric types (see Cloud Monitoring chargeable services).
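To illustrate why downsampling helps, the sketch below groups raw (timestamp, value) points into 10-minute buckets and counts how many points a single series holds under each retention tier. It is an illustration of the concept, not Cloud Monitoring's internal implementation: summarizing each bucket with the mean, the 60-second raw sampling period, and approximating 24 months as 730 days are all assumptions made here.

```python
from collections import defaultdict
from datetime import datetime, timedelta, timezone
from statistics import mean

# 10-minute resolution used for data older than 6 weeks (per the text).
BUCKET = timedelta(minutes=10)
EPOCH = datetime(1970, 1, 1, tzinfo=timezone.utc)


def downsample(points, bucket=BUCKET):
    """Average (timestamp, value) points into fixed-width buckets.

    Assumption: the mean is used as the per-bucket summary for illustration.
    """
    buckets = defaultdict(list)
    for ts, value in points:
        # Floor each timestamp to the start of its bucket.
        start = EPOCH + ((ts - EPOCH) // bucket) * bucket
        buckets[start].append(value)
    return sorted((start, mean(values)) for start, values in buckets.items())


def points_per_series(duration, interval):
    """Number of points one series needs to cover `duration` at `interval`."""
    return duration // interval


# Hypothetical 60-second raw sampling kept for 6 weeks.
raw = points_per_series(timedelta(weeks=6), timedelta(seconds=60))
# 24 months (approximated as 730 days) at 10-minute resolution.
downsampled = points_per_series(timedelta(days=730), BUCKET)
```

With these assumptions, 6 weeks of raw data is 60,480 points per series and 24 months of downsampled data is 105,120 points, whereas keeping 24 months at 60-second resolution would need ten times as many.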

Extended retention for Google Cloud (system) metrics, agent metrics, and other metric types is coming soon.

How to query extended retention metrics

Let’s take an example scenario where you have a Compute Engine VM running a web application. In that web app, you write a metric that tracks a critical user journey for which you want to perform a month-over-month analysis.
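The post does not show how the web app writes its metric. As a hedged sketch, the Python below builds the JSON body for the Monitoring API's timeSeries.create REST method (a POST to the project's timeSeries collection) carrying one gauge point on a gce_instance resource. The project ID, metric type, instance ID, and zone are hypothetical placeholders; authentication and actually sending the request are left out, and in practice an instrumentation library or the Cloud Monitoring client library usually does this for you.

```python
from datetime import datetime, timezone

# Hypothetical identifiers for illustration; replace with your own.
PROJECT_ID = "my-project"
METRIC_TYPE = "custom.googleapis.com/checkout/completed_count"

# REST collection that timeSeries.create posts to.
url = f"https://monitoring.googleapis.com/v3/projects/{PROJECT_ID}/timeSeries"


def build_time_series_body(value, instance_id, zone, when=None):
    """Return a request body holding one gauge point for METRIC_TYPE."""
    when = when or datetime.now(timezone.utc)
    return {
        "timeSeries": [{
            "metric": {"type": METRIC_TYPE},
            "resource": {
                "type": "gce_instance",
                "labels": {
                    "project_id": PROJECT_ID,
                    "instance_id": instance_id,
                    "zone": zone,
                },
            },
            "points": [{
                # A gauge point only needs an end time; `when` is assumed UTC.
                "interval": {"endTime": when.strftime("%Y-%m-%dT%H:%M:%SZ")},
                # int64 values are string-encoded in the API's JSON form.
                "value": {"int64Value": str(value)},
            }],
        }]
    }
```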

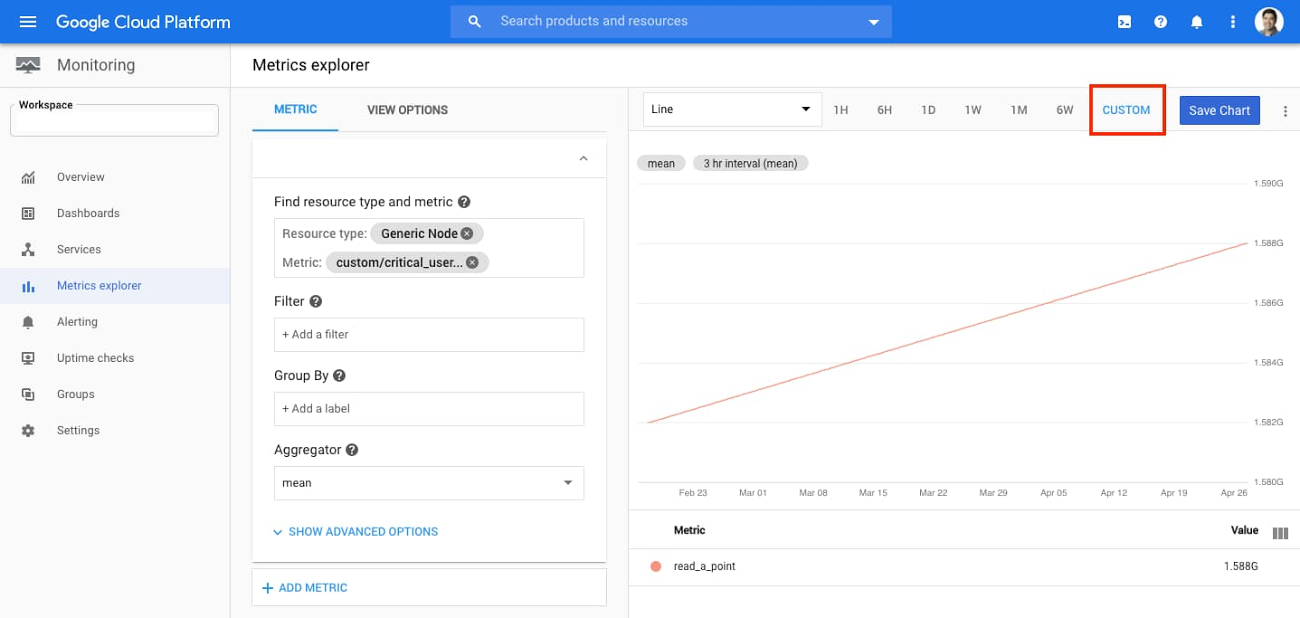

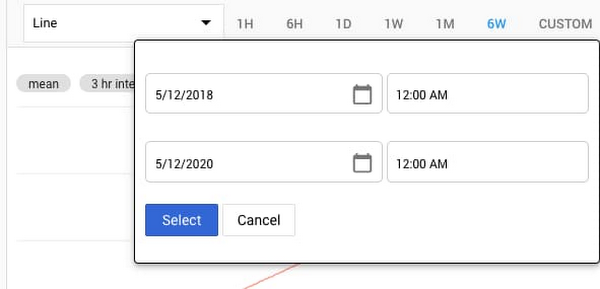

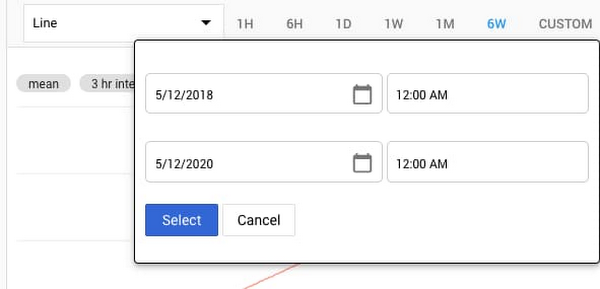

To query metric data for a month-over-month comparison, go to Cloud Monitoring and select Metrics Explorer. Select your custom or Prometheus metric and the resource type, then click “Custom” in the time range selector above the chart. Previously, the time range selector only allowed you to select up to 6 weeks of metric data; now you can select up to 24 months.

In addition to using the UI, you can run the same query programmatically with the ListTimeSeries method of the Monitoring API.
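A minimal sketch of the programmatic route, assuming the REST form of ListTimeSeries (projects.timeSeries.list). It only builds the request URL from the filter, interval.startTime, interval.endTime, and aggregation query parameters; getting an OAuth access token and issuing the GET request are left out. The project ID and metric type are hypothetical, and the 10-minute alignment period is a choice made here to match the resolution of data older than 6 weeks, not a requirement of the API.

```python
from datetime import datetime, timedelta, timezone
from urllib.parse import quote, urlencode


def rfc3339(ts):
    """Format a UTC datetime as an RFC 3339 timestamp (assumes UTC input)."""
    return ts.strftime("%Y-%m-%dT%H:%M:%SZ")


def list_time_series_url(project_id, metric_type, resource_type, start, end,
                         alignment_period=timedelta(minutes=10)):
    """Build a GET URL for the Monitoring API v3 timeSeries.list method."""
    params = {
        "filter": f'metric.type = "{metric_type}" AND resource.type = "{resource_type}"',
        "interval.startTime": rfc3339(start),
        "interval.endTime": rfc3339(end),
        # Align to 10 minutes, the resolution of data older than 6 weeks.
        "aggregation.alignmentPeriod": f"{int(alignment_period.total_seconds())}s",
        # ALIGN_MEAN suits a GAUGE metric; counters would use ALIGN_DELTA or ALIGN_RATE.
        "aggregation.perSeriesAligner": "ALIGN_MEAN",
    }
    base = f"https://monitoring.googleapis.com/v3/projects/{project_id}/timeSeries"
    # quote_via=quote encodes spaces as %20 rather than "+".
    return base + "?" + urlencode(params, quote_via=quote)
```

For a month-over-month view you would pass a start time a few months back, for example `end - timedelta(days=90)`; anything older than 6 weeks comes back at 10-minute resolution regardless of how finely the data was originally written.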

The above query lets you view metric data values for a given time range. But how do you compare results month over month?

To perform time-shift analysis, you can use Monitoring Query Language (MQL), which recently became generally available.
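To make the idea of a time shift concrete, the sketch below performs the comparison client-side on (timestamp, value) pairs, for example points already read through ListTimeSeries. It moves last month's points forward by a fixed offset so they line up with the current window, which is conceptually what MQL's time_shift table operation does server-side before union overlays the two series. Treating a month as 30 days is an assumption of this sketch.

```python
from datetime import datetime, timedelta, timezone
from statistics import mean


def time_shift(points, shift):
    """Move (timestamp, value) points forward in time by `shift`."""
    return [(ts + shift, value) for ts, value in points]


def window_mean(points, start, end):
    """Mean of the values whose timestamps fall in [start, end), or None."""
    values = [value for ts, value in points if start <= ts < end]
    return mean(values) if values else None


def compare_periods(points, start, end, shift=timedelta(days=30)):
    """Return (current mean, mean of the same window `shift` earlier).

    Assumption: "a month ago" is approximated as a 30-day shift.
    """
    current = window_mean(points, start, end)
    # Shift older data forward so it lands inside the current window.
    previous = window_mean(time_shift(points, shift), start, end)
    return current, previous
```

Shifting the older series forward, rather than the newer one back, keeps both series on the current time axis, which is what lets them share one chart.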

Let’s take the example of a custom metric that tracks request counts for a shopping cart service in an e-commerce application. The following query returns the overall mean request count now and from a month ago. With the union operation, you can display both results on the same chart.

Note: The resource and metric specified below are examples; to use the query in your environment, replace them with your own custom or Prometheus metric.

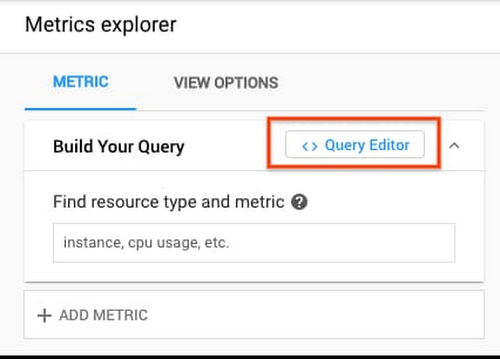

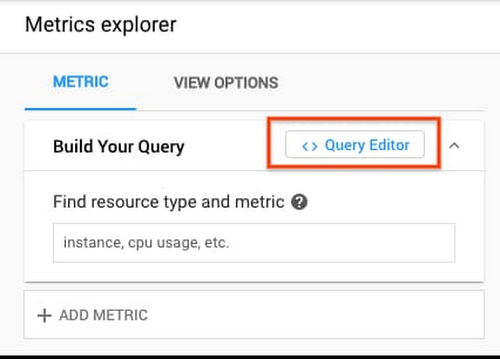

To enter the query, go to Metrics Explorer and click the “Query Editor” button:

Enter the above query, click “Run Query”, and you’ll see a result like the following:

Extending the usefulness of metrics

With Cloud Monitoring, we give you visibility into your data and help you understand the health and performance of your services and applications. Extended metric retention helps your DevOps, engineering, and business teams with troubleshooting and debugging, compliance, reporting, and many other use cases. It lets you do real-time operations and long-term data analysis in a single tool, without exporting to a separate data analytics tool.

If you have any questions or feedback, please click Help > Send Feedback in the Cloud Monitoring UI or contact Cloud Support. We also invite you to join the discussion on our mailing list. As always, we welcome your feedback.