News, tips, and inspiration to accelerate your digital transformation

Infrastructure

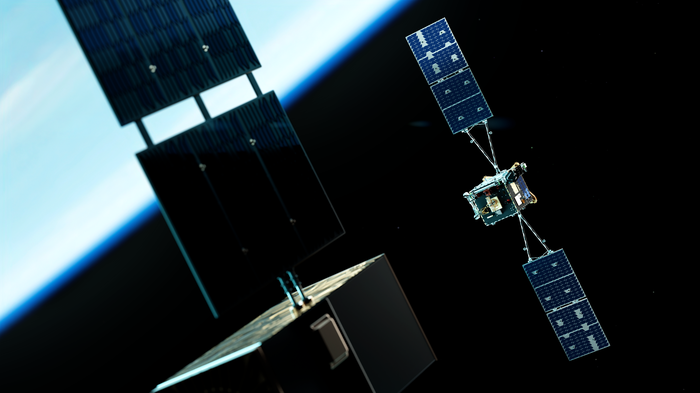

Announcing America-India Connect and new investments to advance global AI access

The America-India Connect system increases the reach, reliability, and resilience of digital connectivity across four continents, expanding AI access.

By Brian Quigley • 4-minute read

How Google Cloud is helping U.S. Olympians go bigger with AI

Building an experimental training tool so athletes from the U.S. Ski & Snowboard freestyle teams can gain a whole new perspective on their sport, and themselves.

Data Cloud

AI & ML

Security

Application Modernization

Infrastructure

Developers & Operators

News in short

A quick take on updates, announcements, resources, events, and learning opportunities from Google Cloud in one handy location. Updated weekly.
