What we learned doing serverless — the Smart Parking story

Brian Granatir

SmartCloud Engineering Team Lead, Smart Parking

Part 3

You made it through all the fluff and huff! Welcome to the main event. Time for us to explore some key concepts in depth. Of course, we won't have time to cover everything. If you have any further questions, or recommendations for a follow-up (or a prequel . . . who does a prequel to a tech blog?), please don't hesitate to email me.

"Indexing" in Google Cloud Bigtable

In parts one and two, we mentioned Cloud Bigtable a lot. It's an incredible, serverless database with immense power. However, like all great software systems, it's designed to deal with a very specific set of problems. Therefore, it has constraints on how it can be used. There are no traditional indexes in Bigtable. You can't say "index the email column" and then query it later. Wait. No indexes? Sounds useless, right? Yet, this is the storage mechanism used by Google to run the sites our lives depend on: Gmail, YouTube, Google Maps, etc. But I can search in those. How do they do it without traditional indexes? I'm glad you asked!!

The answer has two parts: (1) using rowkeys and (2) data mitosis. Let's take a look at both. But, before we do that, let's make one very important point: Never assume you have expertise in anything just because you read a blog about it!!!

I know, it feels like reading this blog [with its overflowing abundance of awesome] might be the exception. Unfortunately, it's not. To master anything, you need to study the deepest parts of its implementation and practice. In other words, to master Bigtable you need to understand "what" it is and "why" it is. Fortunately, Bigtable implements the HBase API. This means you can learn heaps about Bigtable and this amazing data storage and access model by reading the plentiful documentation on HBase, and its sister project Hadoop. In fact, if you want to understand how to build any system for scale, you need to have at least a basic understanding of MapReduce and Hadoooooooooop (little known fact, "Hadoop" can be spelt with as many o's as desired; reduce it later).

If you just followed the concepts covered in this blog, you'd walk away with an incomplete and potentially dangerous view of Bigtable and what it can do. Bigtable will change your development life, so at least take it out to dinner a few times before you get down on one knee!

Ok, got the disclaimer out of the way, now onto rowkeys!

Rowkeys are the only form of indexing provided in Bigtable. A rowkey is the ID used to distinguish individual rows. For example, if I was storing a single row per user, I might have the rowkeys be the unique usernames. For example:

We can then scan and select rows by using these keys. Sounds simple enough. However, we can make these rowkeys be compound indexes. That means that we carry multiple pieces of information within a single rowkey. For example, what if we had three kinds of users: admin, customer and employee. We can put this information in the rowkey. For example:

(Note: We're using # to delineate parts of our rowkey, but you can use any special character you want.)

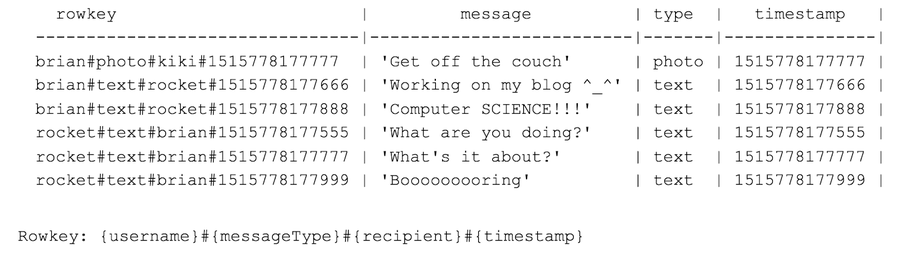

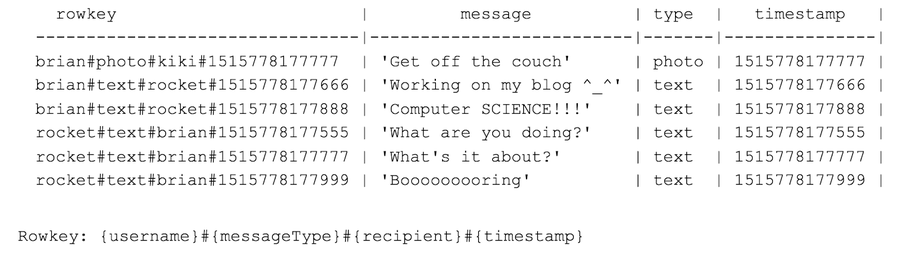

Now we can query for user type easily. In other words, I can easily fetch all "admin" user rows by doing a prefix search (i.e., find all rows that start with "admin#"). We can get really fancy with our rowkeys too. For example, we can store user messages using something like:

However, we cannot search for the latest 10 messages by Brian using rowkeys. Also, there's no easy way to get a series of related messages in order. Maybe I need a unique conversation ID that I put at the start of each rowkey? Maybe.
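To make the prefix search idea concrete, here's a minimal sketch that doesn't touch Bigtable at all. It assumes only what's described above: rows are identified by a compound rowkey, and because Bigtable keeps rows sorted by key, everything sharing a prefix sits together. The helper names (`makeRowKey`, `prefixScan`) and the sample users are hypothetical, and a plain object stands in for the table.

```javascript
// In-memory stand-in for a table; NOT the Bigtable client API.
const DELIMITER = '#';

// Build a compound rowkey such as "admin#brian".
function makeRowKey(...parts) {
  return parts.join(DELIMITER);
}

// Return every row whose key starts with the given prefix, in key order.
function prefixScan(rows, prefix) {
  return Object.keys(rows)
    .sort()
    .filter((key) => key.startsWith(prefix))
    .map((key) => ({ key, data: rows[key] }));
}

// Hypothetical sample data.
const users = {};
users[makeRowKey('admin', 'brian')] = { email: 'brian@example.com' };
users[makeRowKey('customer', 'alice')] = { email: 'alice@example.com' };
users[makeRowKey('admin', 'zoe')] = { email: 'zoe@example.com' };
users[makeRowKey('employee', 'carlos')] = { email: 'carlos@example.com' };

// Fetch all admins by scanning for the "admin#" prefix.
const admins = prefixScan(users, makeRowKey('admin', ''));
console.log(admins.map((row) => row.key)); // [ 'admin#brian', 'admin#zoe' ]
```

The real service does the equivalent as a range read over sorted keys, which is why the order of the parts inside the rowkey decides which queries are cheap.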

Determining the right rowkeys is paramount to using Bigtable effectively. However, you'll need to watch out for hotspotting (a topic not covered in this blog post). Also, any Bigtablians out there will be very upset with me because my examples don't show column families. Yeah, Bigtable must have the best holidays, because everything is about families.

So, we can efficiently search our rows using rowkeys, but this may seem very limited. Who could design a single rowkey that covers every possible query? You can't. This is where the second major concept comes in: data mitosis.

What is data mitosis? It's replication of data into multiple tables that are optimized for specific queries. What? I'm replicating data just to overcome indexing limits? This is madness. NO! THIS. IS. SERVERLESS!

While it might sound insane, storage is cheap. In fact, storage is so cheap, we'd be naive to not abuse it. This means that we shouldn't be afraid to store our data as many times as we want to simply improve overall access. Bigtable works efficiently with billions of rows. So go ahead and have billions of rows. Don't worry about capacity or maintaining a monstrous data cluster, Google does that for you. This is the power of serverless. I can do things that weren't possible before. I can take a single record and store it ten (or even a hundred) times just to make data sets optimized for specific usages (i.e., for specific queries).

To be honest, this is the true power of serverless. To quote myself, storage is magic!

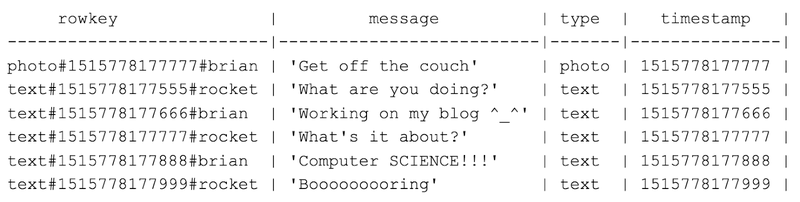

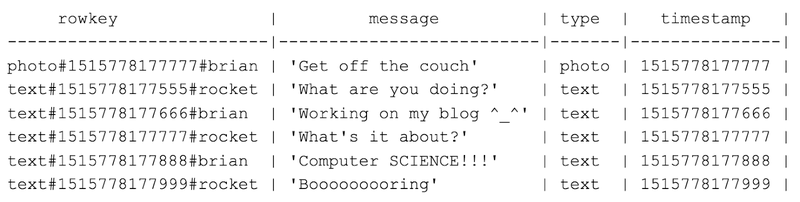

So, if I needed to access all messages in my system for analytics, why not make another view of the same data:

Of course, data mitosis means you have insanely fast access to data but it isn't without cost. You need to be careful in how you update data. Imagine the bookkeeping nightmare of trying to manage synchronized updates across dozens of data replicants. In most cases, the solution is never updating rows (only adding them). This is why event-driven architectures are ideal for Bigtable. That said, no database is perfect for all problems. That's why it's great that I can have SQL, NoSQL, and HBase databases all running for minimal costs (with no maintenance) using serverless! Why use only one database? Use them all!
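Here's a rough sketch of data mitosis with append-only writes, using plain objects in place of real tables. Everything named here is an assumption for illustration: the two table names, the message fields, and the rowkey layouts are hypothetical and not our production schema.

```javascript
// Each "table" is a plain object keyed by rowkey (hypothetical names).
const tables = {
  messagesByUser: {},
  messagesByDay: {},
};

// One rowkey builder per table, i.e. per query the table is optimized for.
const rowKeyBuilders = {
  messagesByUser: (m) => `${m.user}#${m.id}`,
  messagesByDay: (m) => `${m.sentAt.slice(0, 10)}#${m.id}`,
};

// Append-only write: the same record is copied into every table.
function storeMessage(message) {
  for (const [tableName, buildKey] of Object.entries(rowKeyBuilders)) {
    const key = buildKey(message);
    if (key in tables[tableName]) continue; // never update, only add
    tables[tableName][key] = { ...message };
  }
}

storeMessage({ id: 'm1', user: 'brian', sentAt: '2018-03-01T09:15:00Z', text: 'hi' });
storeMessage({ id: 'm2', user: 'alice', sentAt: '2018-03-01T10:00:00Z', text: 'hello' });

console.log(Object.keys(tables.messagesByUser).sort()); // [ 'alice#m2', 'brian#m1' ]
console.log(Object.keys(tables.messagesByDay).sort());  // [ '2018-03-01#m1', '2018-03-01#m2' ]
```

Because rows are only ever added, there's no synchronized update to coordinate across the copies. Adding a new query pattern means adding one more builder and one more table.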

Exporting to BigQuery

In the previous section we learned about the modern data storage model: store everything in the right database and store it multiple times. It sounds wonderful, but how do we run queries that transcend this eclectic set of data sources? The answer is. . . we cheat. BigQuery is cheating. I cannot think of any other way of describing the service. It's simply unfair. You know that room in your house (or maybe in your garage)? That place where you store EVERYTHING—all the stuff you never touch but don't want to toss out? Imagine if you had a service that could search through all the crap and instantly find what you're looking for. Wouldn't that be nice? That's BigQuery. If it existed IRL . . . it would save marriages. It's that good.

By using BigQuery, we can scale our searches across massive data sets and get results in seconds. Seriously. All we need to do is make our data accessible. Fortunately, BigQuery already has a bunch of onramps available (including pulling your existing data from Google Cloud Storage, CSVs, JSON, or Bigtable), but let's assume we need something custom. How do you do this? By streaming the data directly into BigQuery! Again, we're going to replicate our data into another place just for convenience. I would've never considered this until serverless made it cheap and easy.

In our architecture, this is almost too easy. We simply add a Cloud Function that listens to all our events and streams them into BigQuery. Just subscribe to the Pub/Sub topics and push. It’s so simple. Here's the code:

That's it! Did you think it was going to be a lot of code? These are Cloud Functions. They should be under 100 lines of code. In fact, they should be under 40. With a bit of boilerplate, we can make this one line of code:

Ok, but what is the boilerplate code? More on that in the next section. This section is short, as it should be. Honestly, getting data into BigQuery is easy. Google has provided a lot of input hooks and keeps adding more. Once you have the data in there (regardless of size), you can just run the standard SQL queries you all know and ~~loathe~~ love. Up, up, down, down, left, right, left, right, B, A!
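For readers who want something concrete, a Pub/Sub-to-BigQuery function could look roughly like the sketch below. Treat it as an illustration under assumptions rather than our production code: the factory name `makeStreamToBigQuery`, the sample event, and the fake table are all hypothetical. The only thing it expects from BigQuery is a table object with an `insert(rows)` method, which is the shape of a `Table` in the `@google-cloud/bigquery` Node client. The exact trigger signature also depends on the Cloud Functions runtime you deploy to, so the `message.data` access here is an assumption too.

```javascript
// Build a handler that streams each Pub/Sub message into a BigQuery table.
// `table` only needs an insert(rows) method that returns a promise.
function makeStreamToBigQuery(table) {
  return function streamToBigQuery(message) {
    // Pub/Sub delivers the payload as a base64-encoded string.
    const json = Buffer.from(message.data, 'base64').toString('utf8');
    const row = JSON.parse(json);
    return table.insert([row]);
  };
}

// Stand-in table so the sketch runs without GCP credentials.
const fakeTable = {
  rows: [],
  insert(rows) {
    this.rows.push(...rows);
    return Promise.resolve();
  },
};

// Hypothetical event, encoded the way Pub/Sub would deliver it.
const handler = makeStreamToBigQuery(fakeTable);
const event = { id: 'abc-123', type: 'event', subtype: 'bay-occupied' };
handler({ data: Buffer.from(JSON.stringify(event)).toString('base64') });

console.log(fakeTable.rows);
```

In a deployed function, `table` would come from the real client, something like `new BigQuery().dataset('my_dataset').table('my_table')` with your own (here placeholder) dataset and table names. Passing the table in also makes the function trivial to test with a fake.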

Cloud Function boilerplate

Cloud Functions use Node? Cloud Functions are JavaScript? Gag! Yes, that was my initial reaction. Now (9 months later), I never want to write anything else in my career. Why? Because Cloud Functions are simple. They are tiny. You don't need a big robust programming language when all you're doing is one thing. In fact, this is a case where less is more. Keep it simple! If your Cloud Function is too complex, break it apart.

Of course, there is a sequence of steps that we do in every Cloud Function:

The only thing we should be writing is step 2 (and sometimes step 5). This is where boilerplate code comes in. I like my code like I like my wine: DRY! [DRY = Don't Repeat Yourself, btw].

So write the code to parse your triggers and send your outputs once. There are more steps! The actual sequence of steps for a Cloud Function is:

Ugh. So our simple Cloud Functions just became a giant list of steps. It sounds painful, but it can all be overcome with some boilerplate code and an understanding of how Cloud Functions work at a larger level.

How do we do this? By adding a common configuration for each Cloud Function that can be used to drive testing, deployment and common behaviour. All our Cloud Functions start with a block like this:

It may seem basic, but this understanding of Cloud Functions allows us to create a harness that can perform all of the above steps. We can deploy a Cloud Function if we know its trigger and its type. Since everything is inside GCP, we can easily create resources if we know our output targets and their types. We can perform efficient logging and track data through our system by knowing the start and end point (triggers and targets) for each function. The filter allows us to limit which events arriving in a Pub/Sub topic are handled.
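As a rough illustration of how a configuration can drive a harness, consider the sketch below. The config fields (`name`, `trigger`, `targets`, `filter`), the `makeFunction` helper, and the topic and subtype names are all hypothetical; they mirror the ideas above (trigger, targets, types, filter) but aren't our actual boilerplate. Publishing is passed in as a plain callback so the sketch runs without any GCP client.

```javascript
// Hypothetical per-function configuration; every field name is illustrative.
const config = {
  name: 'storeOccupancy',
  trigger: { type: 'pubsub', topic: 'facts' },
  targets: [{ type: 'pubsub', topic: 'occupancy-stored' }],
  filter: (fact) => fact.subtype === 'bay-occupied',
};

// Generic harness: parse the trigger, filter, run the unique logic, emit.
function makeFunction(cfg, logic, publish) {
  return function run(message) {
    const fact = JSON.parse(Buffer.from(message.data, 'base64').toString('utf8'));
    if (!cfg.filter(fact)) return []; // event is not meant for this function
    const outputs = [].concat(logic(fact)); // accept one output or an array
    for (const target of cfg.targets) {
      for (const output of outputs) publish(target, output);
    }
    return outputs;
  };
}

// The only code unique to this function.
const logic = (fact) => ({ ...fact, type: 'event', subtype: 'occupancy-stored' });

// Record what would be published instead of calling Pub/Sub.
const published = [];
const fn = makeFunction(config, logic, (target, output) => {
  published.push({ topic: target.topic, output });
});

const encode = (obj) => ({ data: Buffer.from(JSON.stringify(obj)).toString('base64') });
fn(encode({ id: 'abc-123', type: 'command', subtype: 'bay-occupied' }));
fn(encode({ id: 'def-456', type: 'command', subtype: 'bay-vacated' })); // filtered out

console.log(published);
```

The same configuration object could also feed deployment scripts, emulator setup, and logging, since it names both ends of the function.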

So, what's the takeaway for this section? Make sure you understand Cloud Functions fully. See them as tiny connectors between a given input and target output (preferably only one). Use this to make boilerplate code. Each Cloud Function should contain a configuration and only the lines of code that make it unique. It may seem like a lot of work, but making a generic methodology for handling Cloud Functions will liberate you and your code. You'll get addicted and find yourself sheepishly saying, "Yeah, I kinda like JavaScript, and you know . . . Node" (imagine that!).

Testing

We can't end this blog without a quick talk on testing. Now let me be completely honest. I HATED testing for most of my career. I'm flawless, so why would I want to write tests? I know, I know . . . even a diamond needs to be polished every once in a while.

That said, now I love testing. Why? Because testing Cloud Functions is super easy. Seriously. Just use Ava and Sinon and "BAM". . . sorted. It really couldn't be simpler. In fact, I wouldn't mind writing another series of posts on just testing Cloud Functions (a blog on testing, who'd read that?).

Of course, you don't need to follow my example. Those amazing engineers at Google already have examples for almost every possible subsystem. Just take a look at their Node examples on GitHub for Cloud Functions: https://github.com/GoogleCloudPlatform/nodejs-docs-samples/tree/master/functions [hint: look in the test folders].

For many of you, this will be very familiar. What might be new is integration testing across microservices. Again, this could be an entire series of articles, but I can provide a few quick tips here.

First, use Google's emulators. They have them for just about everything (Pub/Sub, Datastore, Bigtable, Cloud Functions). Getting them set up is easy. Getting them to all work together isn't super simple, but it isn't too hard either. Again, we can leverage our Cloud Function configuration (seen in the previous section) to help drive emulation.

Second, use monitoring to help design integration testing. What is good monitoring if not a constant integration test? Think about how you would monitor your distributed microservices and how you'd look at various data points to look for slowness or errors. For example, I'd probably like to monitor the average time it takes for a single input to propagate across my architecture and send alerts if we slip more than one standard deviation beyond that average. How do I do this? By having a common ID carried from the start to end of a process.
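The arithmetic behind that kind of alert is small enough to sketch. This assumes you've already collected propagation times (end timestamp minus start timestamp for the same ID) in milliseconds. The function names, the sample numbers, and the choice of one standard deviation as the default threshold are all illustrative assumptions.

```javascript
// Mean and (population) standard deviation of propagation times, in ms.
function stats(values) {
  const mean = values.reduce((sum, v) => sum + v, 0) / values.length;
  const variance =
    values.reduce((sum, v) => sum + (v - mean) ** 2, 0) / values.length;
  return { mean, stdDev: Math.sqrt(variance) };
}

// Flag a measurement that is slower than mean + k standard deviations.
function isTooSlow(baseline, latencyMs, k = 1) {
  const { mean, stdDev } = stats(baseline);
  return latencyMs > mean + k * stdDev;
}

// Hypothetical baseline of recent propagation times.
const baseline = [100, 110, 90, 105, 95];

console.log(stats(baseline).mean);     // 100
console.log(isTooSlow(baseline, 104)); // false
console.log(isTooSlow(baseline, 250)); // true
```

In practice you'd tune `k` and the size of the baseline window to how noisy your pipeline is.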

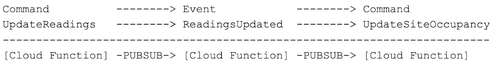

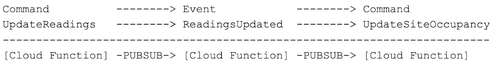

Take our architecture as an example. Everything is a chain of commands and events. Something like this:

If we have a single ID that flows through this chain, it'll be easy for us to monitor (and perform integration testing). This is why it's great to have a common parent for both commands and events. This is typically referred to as a "fact." So everything in our system is a "fact." The JSON might look something like this:

As we move through our chain of commands and events, we change the fact type and subtype, but never the ID. This means that we can log and monitor the flow of each of our initial inputs as it migrates through the system.
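A minimal sketch of that rule, assuming a fact carries an `id`, a `type`, and a `subtype` as described above. The `data` and `timestamp` fields, the helper names, and the sample subtypes are hypothetical additions for illustration.

```javascript
// Hypothetical shape of a "fact"; field names beyond id/type/subtype are illustrative.
function createFact(id, type, subtype, data) {
  return Object.freeze({
    id,
    type,
    subtype,
    data,
    timestamp: new Date().toISOString(),
  });
}

// Derive the next link in the chain: new type and subtype, same id.
function deriveFact(parent, type, subtype, data = parent.data) {
  return createFact(parent.id, type, subtype, data);
}

const command = createFact('abc-123', 'command', 'record-occupancy', { bay: 42 });
const event = deriveFact(command, 'event', 'occupancy-recorded');

console.log(command.id === event.id);   // true
console.log(event.type, event.subtype); // event occupancy-recorded
```

Because every derived fact goes through `deriveFact`, there's no code path that can accidentally mint a new ID halfway down the chain.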

Of course, as with all things monitoring (and integration testing), life isn't so simple. This stuff is hard. You simply cannot perfect your monitoring or integration testing. If you did, you would've solved the Halting Problem. Seriously, if I could give any one piece of advice to aspiring computer scientists, it would be to fully understand the Halting Problem and the CAP theorem.

Pitfalls of serverless

Serverless has no flaws! You think I'm joking. I'm not. The pitfalls in serverless have nothing to do with the services themselves. They all have to do with you. Yep. True serverless systems are extremely powerful and cost-efficient. The only problem: developers have a tendency to use these services wrong. They don't take the time to truly understand the design and rationale of the underlying technologies. Google uses these exact same services to run the world's largest and most performant web applications. Yet, I hear a lot of developers complaining that these services are just "too slow" or "missing a lot."

Frankly, you're wrong. Serverless is not generic. It isn't compute instances that let you install whatever you want. That’s not what we want! Serverless is a compute service that does a specific task. Now, those tasks may seem very generic (like databases or functions), but they're not. Each offering has a specific compute model in mind. Understanding that model is key to getting the maximum value.

So what is the biggest pitfall? Abuse. Serverless lets you do a LOT for very cheap. That means the mistakes are going to come from your design and implementation. With serverless, you have to really embrace the design process (more than ever). Boiling your problem down to its most fundamental elements will let you build a system that doesn't need to be replaced every three to four years. To get where we needed to be, my team rebuilt the entire kernel of our service three times in one year. This may seem like madness and it was. We were our own worst enemy. We took old (and outdated) notions of software and web services and kept baking them into the new world. We didn't believe in serverless. We didn't embrace data mitosis. We resisted streaming. We didn't put data first. All mistakes. All because we didn’t fully understand the intent of our tools.

Now, we have an amazing platform (with a code base reduced by 80%) that will last for a very long time. It's optimized for monitoring and analytics, but we didn't even need to try. By embracing data and design, we got so much for free. It's actually really easy, if you get out of your own way.

Conclusion

As development teams begin to transition to a world of IoT and serverless, they will encounter a unique set of challenges. The goal of this series was to provide an overview of recommended techniques and technologies used by one team to ship an IoT/serverless product. A quick summary of each part is as follows:

Part 1 - Getting the most out of serverless computing requires a cutting-edge approach to software design. With the ability to rapidly prototype and release software, it’s important to form a flexible architecture that can expand at the speed of inspiration. Sounds cheesy, but who doesn’t love cheese? Our team utilized domain-driven design (DDD) and event-driven architecture (EDA) to efficiently define a smart city platform. To implement this platform, we built microservices deployed on serverless compute services.

Biggest takeaway: serverless now makes event-driven architecture and microservices not only a reality, but almost a necessity. Viewing your system as a series of events will allow for resilient design and efficient expansion.

Part 2 - Implementation of an IoT architecture on serverless services is now easier than ever. On Google Cloud Platform (GCP), powerful serverless tools are available for:

- IoT fleet management and security -> IoT Core

- Data streaming and windowing -> Dataflow

- High-throughput data storage -> Bigtable

- Easy transaction data storage -> Datastore

- Message distribution -> Pub/Sub

- Custom compute logic -> Cloud Functions

- On-demand analytics of disparate data sources -> BigQuery

Combining these services allows any development team to produce a robust, cost-efficient and extremely performant product. Our team uses all of these and was able to adopt each new service within a single one-week sprint.

Biggest takeaway: DevOps is dead. Serverless systems (with proper non-destructive, deterministic data management and testing) mean that we’re just developers again! No calls at 2am because some server got stuck? Sign me up for serverless!

Part 3 - To be truly serverless, a service must offer a limited set of computational actions. In other words, to be truly auto-scaling and self-maintaining, the service can’t do everything. Understanding the intent and design of the serverless services you use will greatly improve the quality of your code. Take the time to understand the use-cases designed for the service so that you extract the most. Using a serverless offering incorrectly can lead to greatly reduced performance.

For example, Pub/Sub is designed to guarantee rapid, at-least-once delivery. This means messages may arrive multiple times or out-of-order. That may sound scary, but it’s not. Pub/Sub is used by Google to manage distribution of data for their services across the globe. They make it work. So can you. Hint: consider deterministic code. Hint, hint: If order is essential at time of data inception, use windowing (see Dataflow).
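One way to read the "deterministic code" hint is to make handlers idempotent, so a redelivered message changes nothing. The sketch below is an assumption about what that could look like, not a description of our system: a `Map` stands in for the data store, and the key layout (`id#subtype`), the handler name, and the sample facts are hypothetical.

```javascript
// Store keyed by fact id and subtype: writing the same fact twice leaves one row.
const store = new Map();

// Idempotent handler: the final state depends only on which facts arrived,
// not on how many times or in what order they were delivered.
function handle(fact) {
  const key = `${fact.id}#${fact.subtype}`;
  if (store.has(key)) return false; // duplicate delivery, nothing to do
  store.set(key, fact);
  return true;
}

// Hypothetical delivery sequence with one redelivered message.
const delivered = [
  { id: 'abc-123', subtype: 'occupancy-recorded', bay: 42 },
  { id: 'def-456', subtype: 'occupancy-recorded', bay: 7 },
  { id: 'abc-123', subtype: 'occupancy-recorded', bay: 42 }, // redelivered
];

const results = delivered.map(handle);

console.log(results);                  // [ true, true, false ]
console.log([...store.keys()].sort()); // [ 'abc-123#occupancy-recorded', 'def-456#occupancy-recorded' ]
```

This pairs naturally with append-only storage: if the rowkey is derived from the fact itself, writing a duplicate simply lands on the row that's already there.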

Biggest takeaway: Don’t try to use a hammer to clean your windows. Research serverless services and pick the ones that suit your problem best. In other words, not all serverless offerings are created equal. They may offer the same essential API, but the implementation and goals can be vastly different.

Finally, before we part, let me say, “Thank you.” Thanks for following through all my ramblings to reach this point. There's a lot of information, and I hope that it gives you a preview of the journey that lies ahead. We're entering a new era of web development. It's a landscape full of treasure, opportunity, dungeons and dragons. Serverless computing lets us discard the burden of DevOps and return to the adventure of pure coding. Remember when all you did was code (not maintenance calls at 2am)? It's time to get back there. I haven't felt this happy in my coding life in a long time. I want to share that feeling with all of you!

Please, send feedback, requests, and dogs (although, I already have 7). Software development is a never-ending story. Each chapter depends on the last. Serverless is just one more step on our shared quest for holodecks. Yeah, once we have holodecks, this party is over! But until then, code as one.