How to deploy geographically distributed services on Kubernetes Engine with kubemci

Matthew DeLio

Product Manager, Anthos, Google Cloud Platform

Nikhil Jindal

Software Engineer, Google Cloud Platform

Increasingly, enterprise Google Cloud Platform (GCP) customers use multiple Google Kubernetes Engine clusters to host their applications, for better resilience, scalability, isolation and compliance. In addition, their users expect low-latency access to applications from anywhere around the world. Today we are introducing a new command-line interface (CLI) tool called kubemci to automatically configure ingress using Google Cloud Load Balancer (GCLB) for multi-cluster Kubernetes Engine environments. This allows you to use a Kubernetes Ingress definition to leverage GCLB along with multiple Kubernetes Engine clusters running in regions around the world, to serve traffic from the closest cluster using a single anycast IP address, taking advantage of GCP’s 100+ Points of Presence and global network. For more information on how the GCLB handles cross-region traffic, see this link.

Further, kubemci will be the initial interface to an upcoming controller-based multi-cluster ingress (MCI) solution that can adapt to different use cases and can be manipulated using the standard kubectl CLI tool or via Kubernetes API calls.

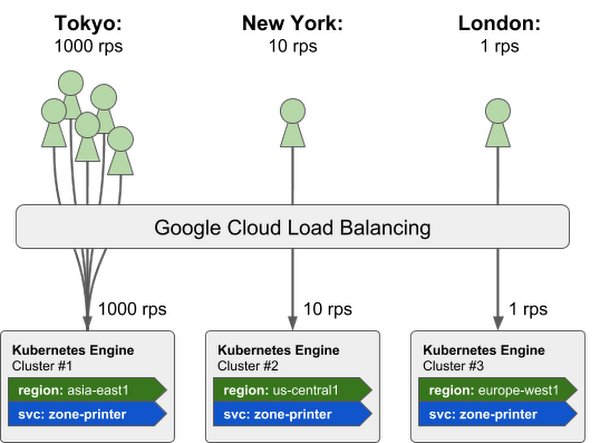

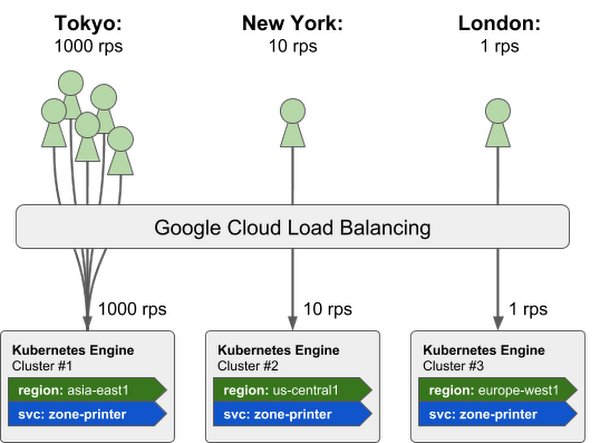

For example, in the accompanying picture, we have created three independent Kubernetes Engine clusters and spread them across three continents (Asia, North America, and Europe). We then deployed the same service, “zone-printer”, to each of these clusters and used kubemci to create a single GCLB instance to stitch the services together. In this case, the 1000 requests-per-second (rps) from Tokyo are routed to the cluster in Asia, the New York requests are routed to the North American cluster, and the remaining 1 rps from London is routed to the European cluster. Because each of these requests arrives at the cluster closest to the end user, it benefits from low round-trip latency. Additionally, if a region, cluster, or service were ever to become unavailable, GCLB automatically detects that and routes users to one of the other healthy service instances.

The feedback on kubemci has been great so far. Marfeel is a Spanish ad tech platform and has been using kubemci in production to improve their service offering:

At Marfeel, we appreciate the value that this tool provides for us and our customers. Kubemci is simple to use and easily integrates with our current processes, helping to speed up our Multi-Cluster deployment process. In summary, kubemci offers us granularity, simplicity, and speed.

Borja García, SRE Marfeel

Getting started

To get started with kubemci, please check out the how-to guide, which contains information on the prerequisites along with step-by-step instructions on how to download the tool and set up your clusters, services and ingress objects. As a quick preview, once your applications and services are running, you can set up a multi-cluster ingress by running the following command:
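A minimal sketch of such an invocation is shown here. All names in it are placeholders rather than values from this post: the ingress name `my-mci`, the project ID `my-project`, the reserved static IP name `zone-printer-ip`, and the files `ingress.yaml` and `clusters.yaml`. The Ingress manifest reflects our understanding of what the how-to guide asks for (the `gce-multi-cluster` ingress class and a global static IP annotation), so treat it as an assumption and check it against the guide. The script only calls kubemci if the tool and the kubeconfig file are present; otherwise it prints the command it would run.

```shell
#!/usr/bin/env bash
# Sketch only: every name below is a hypothetical placeholder.
set -euo pipefail

MCI_NAME="my-mci"                # name for the multi-cluster ingress (assumed)
PROJECT="my-project"             # GCP project ID (assumed)
KUBECONFIG_FILE="clusters.yaml"  # kubeconfig listing the target clusters (assumed)

# One Ingress definition, shared by every cluster behind the load balancer.
# Assumes a service named zone-printer is already running in each cluster
# and that a global static IP named zone-printer-ip has been reserved.
cat > ingress.yaml <<'EOF'
apiVersion: extensions/v1beta1
kind: Ingress
metadata:
  name: zone-printer
  annotations:
    kubernetes.io/ingress.class: gce-multi-cluster
    kubernetes.io/ingress.global-static-ip-name: zone-printer-ip
spec:
  backend:
    serviceName: zone-printer
    servicePort: 80
EOF

# Build the kubemci command as an array so arguments stay correctly quoted.
CMD=(kubemci create "$MCI_NAME"
     --ingress=ingress.yaml
     --gcp-project="$PROJECT"
     --kubeconfig="$KUBECONFIG_FILE")

# Run it only when kubemci and the kubeconfig are available; otherwise dry-run.
if command -v kubemci >/dev/null 2>&1 && [ -f "$KUBECONFIG_FILE" ]; then
  "${CMD[@]}"
else
  echo "would run: ${CMD[*]}"
fi
```

The kubeconfig file passed to `--kubeconfig` is expected to contain credentials for each cluster that should sit behind the load balancer; the how-to guide describes how to generate it.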

To learn more, check out this talk on Multicluster Ingress by Google software engineers Greg Harmon and Nikhil Jindal, at KubeCon Europe in Copenhagen, demonstrating some initial work in this space.