Enhanced persistent disks for Google Compute Engine = better Kubernetes and Docker support

Martin Buhr

Product Manager

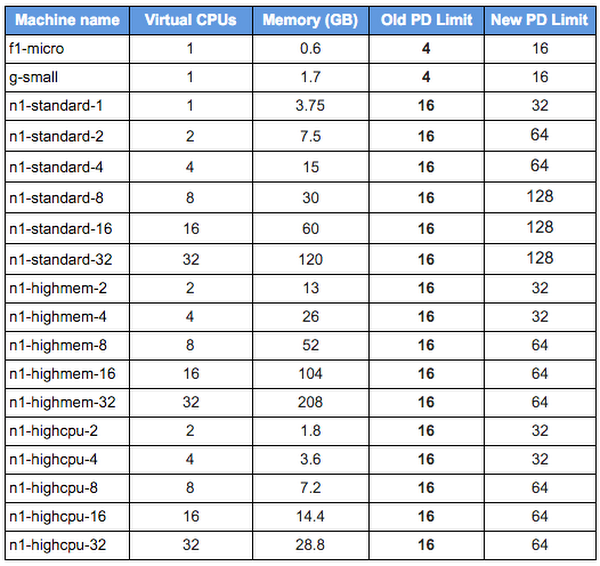

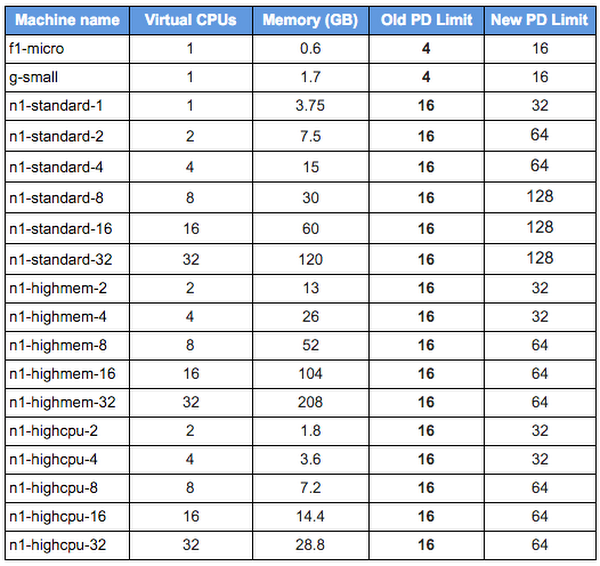

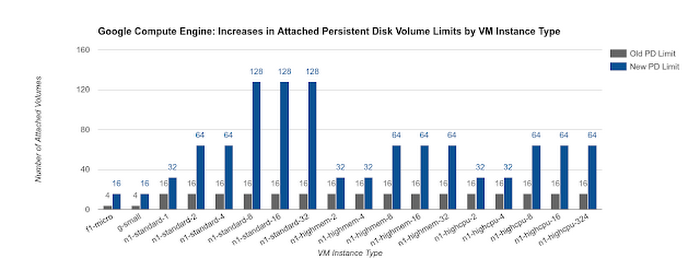

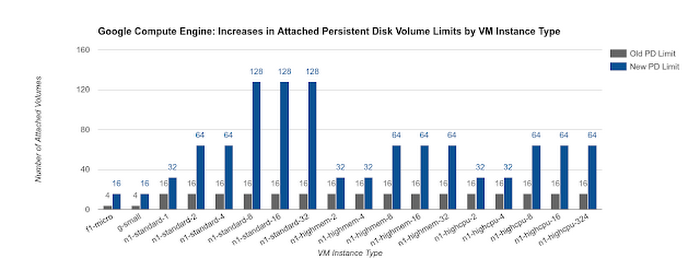

The infrastructure underpinning Google Cloud Platform has always been good, and it keeps getting better. We recently increased the limit on the number of attached persistent disk storage volumes per Google Compute Engine instance from 16 to as many as 128 volumes on our largest standard instance sizes (giving us three times the capacity of the competition). We also recently increased the total quantity of attached persistent disk storage per instance from 10TB to as much as 64TB on our largest instance sizes, and introduced the ability to resize persistent disk volumes with no downtime.

These changes were enabled by Google’s continued innovations in data center infrastructure, in this case networking. Combined with Colossus, Google’s high-performance global storage fabric, these innovations let us greatly increase the size, quantity, and flexibility of network-attached storage per instance without sacrificing persistent disk’s legendary rock-solid performance and reliability.

This ties back to one of Google Cloud Platform’s core strengths: hosting Linux containers. We are committed to making GCP the best place on the web to run Docker-based workloads, building on over a decade of experience running all of Google on Linux containers. To help you realize the same benefits that we did, we created and open-sourced Kubernetes, a tool for creating and managing clusters of Docker containers, and launched Google Container Engine to provide a managed, hosted, Kubernetes-based service for Docker applications.

Red Hat is a large Kubernetes user and has adopted it across its product portfolio, including making it a core component of OpenShift, its Platform as a Service offering. While working with Red Hat late last year in preparation for offering OpenShift Dedicated on Google Cloud Platform, we realized that we needed to increase the number of attached persistent disk volumes per instance to help Red Hat efficiently bin-pack Kubernetes Pods (each of which may have one or more attached volumes) across their Google Cloud Platform infrastructure.
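To make the bin-packing point concrete, here is a minimal sketch of how the per-instance volume limit affects the number of instances needed. It is an illustration only, not how the Kubernetes scheduler works: it packs Pods with a simple first-fit-decreasing heuristic and considers only attached-volume counts, ignoring CPU and memory. The function name and the workload (64 Pods with 2 volumes each) are hypothetical.

```python
def nodes_needed(pod_volume_counts, max_volumes_per_node):
    """Count the nodes needed to place every Pod, using first-fit-decreasing.

    Only the attached-volume limit is modeled; CPU, memory, and every
    other scheduling constraint are deliberately ignored in this sketch.
    """
    nodes = []  # each entry is the number of volumes already attached to that node
    for volumes in sorted(pod_volume_counts, reverse=True):
        if volumes > max_volumes_per_node:
            raise ValueError("a Pod needs more volumes than one node can attach")
        for i, used in enumerate(nodes):
            if used + volumes <= max_volumes_per_node:
                nodes[i] = used + volumes  # Pod fits on an existing node
                break
        else:
            nodes.append(volumes)  # no existing node had room; start a new one
    return len(nodes)


# Hypothetical workload: 64 Pods, each with 2 attached volumes.
pods = [2] * 64
print(nodes_needed(pods, 16))   # 8 nodes under the old 16-volume limit
print(nodes_needed(pods, 128))  # 1 node under the new 128-volume limit
```

In this toy workload the volume limit, not compute capacity, determines how many instances are required, which is the situation a higher limit relieves.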

Being able to attach more persistent disk volumes per instance isn’t just useful to Red Hat. In addition to better support for Kubernetes and Docker, this feature can also be used in various scenarios that require a large number of disks. For example, when an instance is running multiple web servers, you can keep the data from each web server on a separate volume to ensure data isolation.

To take advantage of this feature for your own Kubernetes clusters running on Google Compute Engine, simply set the "KUBE_MAX_PD_VOLS" environment variable to whatever you want the limit to be, based on the maximum number of volumes the nodes in your cluster support.
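As a rough sketch of choosing that value, the snippet below picks a single limit for a cluster whose nodes support different numbers of volumes, then places it in an environment mapping under `KUBE_MAX_PD_VOLS`. Taking the smallest per-node limit is a conservative assumption on our part, not a documented rule, and the node names and limits are hypothetical. How the environment reaches your cluster's components depends on how you deploy Kubernetes and is not shown here.

```python
import os


def max_pd_vols_for_cluster(node_volume_limits):
    """Return one cluster-wide limit: the smallest limit any node supports.

    Assumption: a single value applies to every node, so the smallest
    per-node limit is the safe choice for a mixed cluster.
    """
    if not node_volume_limits:
        raise ValueError("at least one node limit is required")
    return min(node_volume_limits.values())


# Hypothetical per-node attached-volume limits for a mixed cluster.
node_limits = {"node-a": 128, "node-b": 64, "node-c": 128}

# Build an environment that includes the variable; environment values must be strings.
env = dict(os.environ, KUBE_MAX_PD_VOLS=str(max_pd_vols_for_cluster(node_limits)))
print(env["KUBE_MAX_PD_VOLS"])  # 64
```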